[Statistical Learning Methods] Naive Bayes

1. Introduction

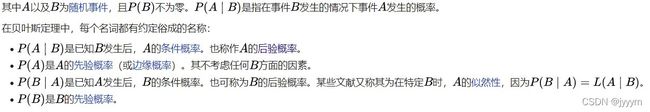

We start with the formula at the core of naive Bayes:

$$P(A \mid B) = \frac{P(A)\,P(B \mid A)}{P(B)}$$

See Wikipedia: Bayes' theorem
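
As a quick numerical illustration of the formula, the sketch below plugs in made-up numbers and uses exact fractions so the arithmetic is easy to check. The prior, the likelihood, and the rate for "not A" are assumptions chosen for the example, not values from the text. P(B) is expanded with the law of total probability.

```python
from fractions import Fraction

def posterior(prior_a, p_b_given_a, p_b_given_not_a):
    """Return P(A|B) via Bayes' theorem, expanding P(B) by total probability."""
    p_b = prior_a * p_b_given_a + (1 - prior_a) * p_b_given_not_a
    return prior_a * p_b_given_a / p_b

# Hypothetical inputs: P(A) = 1/100, P(B|A) = 9/10, P(B|not A) = 5/100
p = posterior(Fraction(1, 100), Fraction(9, 10), Fraction(5, 100))
print(p, float(p))
```

Even with a likelihood of 9/10, the small prior keeps the posterior low, which is the kind of reweighting the classifier below performs for every class.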

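Naive Bayes is a classification method based on Bayes' theorem and the assumption that the features are conditionally independent given the class. The "naive" in the name comes from this conditional independence assumption. Given a training set, the method first learns the joint probability distribution of input and output under that assumption; then, for a given input x, it uses Bayes' theorem to find the output y with the largest posterior probability. Specifically, the conditional independence assumption is: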

$$P(X = x \mid Y = c_k) = P(X^{(1)} = x^{(1)}, \ldots, X^{(n)} = x^{(n)} \mid Y = c_k) = \prod_{j=1}^{n} P(X^{(j)} = x^{(j)} \mid Y = c_k)$$

2. The naive Bayes algorithm

Given the training data

$$T = \{(x_1, y_1), (x_2, y_2), \ldots, (x_N, y_N)\}, \quad x_i = (x_i^{(1)}, x_i^{(2)}, \ldots, x_i^{(n)})^T, \quad x_i^{(j)} \in \{a_{j1}, a_{j2}, \ldots, a_{jS_j}\},$$

where $a_{jl}$ is the $l$-th value that the $j$-th feature can take and $y_i \in \{c_1, c_2, \ldots, c_K\}$, the algorithm proceeds as follows.

1. Compute the prior and conditional probabilities.

   Prior probability:

   $$P(Y = c_k) = \frac{\sum_{i=1}^{N} I(y_i = c_k)}{N}, \quad k = 1, 2, \ldots, K$$

   where $K$ is the number of classes of $Y$ and $I(\cdot)$ is the indicator function.

   Conditional probability:

   $$P(X^{(j)} = a_{jl} \mid Y = c_k) = \frac{\sum_{i=1}^{N} I(x_i^{(j)} = a_{jl},\, y_i = c_k)}{\sum_{i=1}^{N} I(y_i = c_k)}, \quad j = 1, 2, \ldots, n;\ l = 1, 2, \ldots, S_j;\ k = 1, 2, \ldots, K$$

2. For a given instance $x = (x^{(1)}, \ldots, x^{(n)})^T$, compute:

   $$P(Y = c_k) \prod_{j=1}^{n} P(X^{(j)} = x^{(j)} \mid Y = c_k), \quad k = 1, 2, \ldots, K$$

3. Determine the class of the instance $x$:

   $$y = \arg\max_{c_k} P(Y = c_k) \prod_{j=1}^{n} P(X^{(j)} = x^{(j)} \mid Y = c_k)$$
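
The three steps can be sketched with nothing more than counting. The snippet below is a minimal illustration under stated assumptions, not a reference implementation: the helper names `fit` and `predict` and the tiny weather-style dataset are hypothetical. Exact fractions are used so each estimate can be checked by hand.

```python
from collections import Counter
from fractions import Fraction

def fit(samples, labels):
    """Step 1: estimate priors and per-feature conditional probabilities by counting."""
    n = len(labels)
    class_counts = Counter(labels)
    priors = {c: Fraction(cnt, n) for c, cnt in class_counts.items()}
    # joint[(j, value, c)] counts samples whose j-th feature equals value and whose label is c
    joint = Counter()
    for x, c in zip(samples, labels):
        for j, value in enumerate(x):
            joint[(j, value, c)] += 1

    def cond(j, value, c):
        return Fraction(joint[(j, value, c)], class_counts[c])

    return priors, cond

def predict(x, priors, cond):
    """Steps 2 and 3: score every class, then take the arg max."""
    scores = {}
    for c, prior in priors.items():
        score = prior
        for j, value in enumerate(x):
            score *= cond(j, value, c)
        scores[c] = score
    return max(scores, key=scores.get), scores

# Hypothetical toy data: (outlook, windy) -> play
samples = [("sunny", "no"), ("sunny", "yes"), ("rainy", "yes"), ("rainy", "no"), ("sunny", "no")]
labels = ["yes", "no", "no", "yes", "yes"]
priors, cond = fit(samples, labels)
label, scores = predict(("sunny", "no"), priors, cond)
print(label, scores)
```

In this toy data the class "no" never occurs with windy = "no", so its score collapses to zero regardless of the other feature.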

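Note: if some attribute value never occurs together with some class in the training set, estimating the probabilities directly with the algorithm above causes a problem: the product in step 2 can evaluate to zero. To keep the information carried by the other attributes from being wiped out by an attribute value that did not appear in the training set, the probability estimates are usually smoothed; Laplace correction is the common choice.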
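
A minimal sketch of Laplace correction, assuming the usual additive form: a constant λ is added to every count, so the prior becomes (count + λ) / (N + Kλ) and each conditional becomes (joint count + λ) / (class count + S_j λ). The function name `smoothed_scores` and the parameter `lam` are hypothetical; the data are those of Example 4.1 (listed in section 3.1), and `lam` is assumed to be an integer so that exact fractions can be used.

```python
from collections import Counter
from fractions import Fraction

# Example 4.1 training data: rows are (X1, X2), labels are +1 / -1
X = [(1, 'S'), (1, 'M'), (1, 'M'), (1, 'S'), (1, 'S'),
     (2, 'S'), (2, 'M'), (2, 'M'), (2, 'L'), (2, 'L'),
     (3, 'L'), (3, 'M'), (3, 'M'), (3, 'L'), (3, 'L')]
y = [-1, -1, 1, 1, -1, -1, -1, 1, 1, 1, 1, 1, 1, 1, -1]

def smoothed_scores(x_new, X, y, lam=1):
    """Score each class with Laplace-smoothed estimates (lam = 0 gives plain counting)."""
    n, classes = len(y), sorted(set(y))
    class_counts = Counter(y)
    # S_j: number of distinct values observed for feature j
    n_values = [len({row[j] for row in X}) for j in range(len(X[0]))]
    scores = {}
    for c in classes:
        score = Fraction(class_counts[c] + lam, n + len(classes) * lam)
        for j, value in enumerate(x_new):
            joint = sum(1 for row, label in zip(X, y) if row[j] == value and label == c)
            score *= Fraction(joint + lam, class_counts[c] + n_values[j] * lam)
        scores[c] = score
    return scores

scores = smoothed_scores((2, 'S'), X, y, lam=1)
print(scores)
print(max(scores, key=scores.get))
```

With λ > 0 no estimate can be exactly zero, so a single unseen attribute value no longer cancels the evidence from the other attributes.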

3. Code implementation

In the case of categorical variables, such as counts or labels, a multinomial distribution can be used. If the variables are binary, such as yes/no or true/false, a binomial distribution can be used. If a variable is numerical, such as a measurement, often a Gaussian distribution is used.

- Binary: Binomial distribution.

- Categorical: Multinomial distribution.

- Numeric: Gaussian distribution.

These three distributions are so common that the Naive Bayes implementation is often named after the distribution. For example:

- Binomial Naive Bayes: Naive Bayes that uses a binomial distribution.

- Multinomial Naive Bayes: Naive Bayes that uses a multinomial distribution.

- Gaussian Naive Bayes: Naive Bayes that uses a Gaussian distribution.

Using one of the three common distributions is not mandatory; for example, if a real-valued variable is known to have a different specific distribution, such as exponential, then that specific distribution may be used instead. If a real-valued variable does not have a well-defined distribution, such as bimodal or multimodal, then a kernel density estimator can be used to estimate the probability distribution instead.
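
To make the kernel density option concrete, here is a minimal sketch of a Gaussian kernel density estimator in plain Python. The function name `gaussian_kde`, the bandwidth value, and the bimodal sample are all assumptions made for illustration; a library estimator would normally be used in practice.

```python
import math

def gaussian_kde(samples, bandwidth):
    """Return a density function: the average of Gaussian bumps centred on each sample."""
    n = len(samples)
    norm = 1.0 / (n * bandwidth * math.sqrt(2 * math.pi))

    def density(x):
        return norm * sum(math.exp(-0.5 * ((x - s) / bandwidth) ** 2) for s in samples)

    return density

# Hypothetical bimodal feature values for the samples of one class
values = [-2.1, -1.9, -2.0, -1.8, -2.2, 2.0, 1.9, 2.1, 2.2, 1.8]
pdf = gaussian_kde(values, bandwidth=0.5)
for x in (-2.0, 0.0, 2.0):
    print(x, pdf(x))
```

The estimated density is high near both clusters and low in the gap between them, which a single Gaussian fitted to the same values could not represent. Such a density could stand in for the per-feature conditional probability in the classifier.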

3.1 Discrete data

A Python implementation of Example 4.1 (p. 63 of the book) follows. First, store the data in a pandas.DataFrame:

3.1.1 Data preparation

    import pandas as pd

    # Training data from Example 4.1: each row is [X1, X2]
    x = [[1, 'S'], [1, 'M'], [1, 'M'], [1, 'S'], [1, 'S'],
         [2, 'S'], [2, 'M'], [2, 'M'], [2, 'L'], [2, 'L'],
         [3, 'L'], [3, 'M'], [3, 'M'], [3, 'L'], [3, 'L']]
    y = [-1, -1, 1, 1, -1, -1, -1, 1, 1, 1, 1, 1, 1, 1, -1]

    # Test sample
    t = [2, 'S']

    # Columns 0 and 1 hold the features; 'class' holds the label
    x = pd.DataFrame(x)
    y = pd.DataFrame(y, columns=['class'])
    data = pd.concat([x, y], axis=1)

3.1.2 Python implementation

    def prior_prob(y_class):
        # Prior probability P(Y = y_class)
        return sum(data['class'] == y_class) / len(data)

    def cond_prob(y_class, x_j):
        # Conditional probability of every value of feature x_j given Y = y_class
        prob = {}
        for i in data[x_j].unique():
            prob[i] = sum((data[x_j] == i) & (data['class'] == y_class)) / sum(data['class'] == y_class)
        return prob

    def predict(k):
        # k is the sample to classify
        temp = {}
        for j in data['class'].unique():
            # Start from the prior, then multiply in one conditional per feature
            score = prior_prob(j)
            for i in range(len(k)):
                score = score * cond_prob(j, i)[k[i]]
            temp[j] = score
        # Return the class with the largest score
        return max(temp, key=temp.get)

    print(predict(t))  # prints -1
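
As a hand check for the test sample t = (2, S), the arithmetic below uses counts read directly off the training data in section 3.1.1 (9 samples with class 1, 6 with class -1). It is a worked instance of steps 2 and 3 of the algorithm, not additional output from the code.

$$P(Y = 1)\,P(X^{(1)} = 2 \mid Y = 1)\,P(X^{(2)} = S \mid Y = 1) = \frac{9}{15} \cdot \frac{3}{9} \cdot \frac{1}{9} = \frac{1}{45}$$

$$P(Y = -1)\,P(X^{(1)} = 2 \mid Y = -1)\,P(X^{(2)} = S \mid Y = -1) = \frac{6}{15} \cdot \frac{2}{6} \cdot \frac{3}{6} = \frac{1}{15}$$

Since $\frac{1}{15} > \frac{1}{45}$, the arg max is $y = -1$.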

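3.2 Continuous data

This section implements Gaussian naive Bayes in Python. It is suited to continuous variables and assumes that each feature $x_i$ follows a normal distribution within each class: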

$$P(x_i \mid y) = \frac{1}{\sqrt{2\pi\sigma_y^2}} \exp\left(-\frac{(x_i - \mu_y)^2}{2\sigma_y^2}\right)$$

- $\mu_y$ is the sample mean of feature $x_i$ over the samples in class $y$

- $\sigma_y$ is the standard deviation of feature $x_i$ over the samples in class $y$
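
The density and its two parameters can be sketched in a few lines of plain Python. The helper names `gaussian_pdf` and `class_stats` and the sample values are hypothetical. The standard deviation here divides by n - 1, which is an assumption chosen to match the default of pandas' `Series.std()`.

```python
import math

def gaussian_pdf(x, mu, sigma):
    """Normal density with mean mu and standard deviation sigma, evaluated at x."""
    return math.exp(-((x - mu) ** 2) / (2 * sigma ** 2)) / math.sqrt(2 * math.pi * sigma ** 2)

def class_stats(values):
    """Sample mean and sample standard deviation (n - 1 in the denominator)."""
    n = len(values)
    mu = sum(values) / n
    sigma = math.sqrt(sum((v - mu) ** 2 for v in values) / (n - 1))
    return mu, sigma

# Hypothetical values of one feature for the samples of one class
mu, sigma = class_stats([4.8, 5.1, 5.0, 4.9, 5.2])
print(mu, sigma, gaussian_pdf(5.0, mu, sigma))
```

Note that the result is a density, not a probability, so it can exceed 1 when sigma is small; only the comparison between classes matters for prediction.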

3.2.1 Data preparation

    import pandas as pd
    from sklearn.datasets import make_blobs
    from sklearn.model_selection import train_test_split

    # Two well-separated 2-D clusters, split 80/20 into training and test sets
    features, labels = make_blobs(100, n_features=2, centers=2, cluster_std=0.5, random_state=42)
    x_train, x_test, y_train, y_test = train_test_split(features, labels, train_size=0.8, random_state=42)

    traindf = pd.DataFrame(x_train)
    testdf = pd.DataFrame(x_test)
    trainlabel = pd.DataFrame(y_train, columns=['class'])

    # Feature columns 0 and 1 plus the 'class' label column
    data = pd.concat([traindf, trainlabel], axis=1)

3.2.2 Python implementation

Laplace smoothing is not applied here:

    import numpy as np

    class gaussian_NB:
        def __init__(self, traindata):
            self.data = traindata
            self.label_list = traindata['class'].unique()

        def prior_prob(self, y_class):
            # Prior probability P(Y = y_class)
            return sum(self.data['class'] == y_class) / len(self.data)

        def sigmu(self, x_j):
            # Per-class sample standard deviation and mean of feature x_j,
            # returned as a dict of the form {label: [sigma, mu], ...}
            prob = {}
            for i in self.label_list:
                sigma_y = self.data[x_j][self.data['class'] == i].std()
                mu_y = self.data[x_j][self.data['class'] == i].mean()
                prob[i] = [sigma_y, mu_y]
            return prob

        def cond_prob(self, feat):
            # Class-conditional densities, stored as {label: [density per feature]};
            # each list has len(feat) entries, one factor per feature as in step 2
            probs = {label: [] for label in self.label_list}
            for k in range(len(feat)):
                # Statistics of the k-th feature
                sigma_mu = self.sigmu(k)
                for j in sigma_mu.keys():
                    sigma, mu = sigma_mu[j]
                    temp = (1 / np.sqrt(2 * np.pi * np.square(sigma))) * np.exp(-np.square(feat[k] - mu) / (2 * np.square(sigma)))
                    probs[j].append(temp)
            return probs

        def predict(self, feat):
            # Predict the class label of a new sample by comparing log posteriors
            temp_cond_prob = self.cond_prob(feat)
            pred_prob = {}
            for i in self.label_list:
                temp = np.log(self.prior_prob(i))
                for j in temp_cond_prob[i]:
                    # Taking logs turns the product of step 2 into a sum
                    temp = temp + np.log(j)
                pred_prob[i] = temp
            return max(pred_prob, key=pred_prob.get)

        def test(self, testdata):
            # Batch prediction over the rows of a DataFrame
            pred_list = []
            for i in testdata.values:
                pred_list.append(self.predict(i))
            return pred_list

    def acc(pred_label, true_label):
        # Accuracy: fraction of predictions that match the true labels
        return np.mean(np.asarray(pred_label) == np.asarray(true_label))

    model = gaussian_NB(data)
    print(acc(model.test(testdf), y_test))
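
The `predict` method takes logarithms of the prior and the per-feature densities. One reason to work in log space is numerical: a product of many small factors can underflow to zero in double precision, while the sum of their logs stays finite. The sketch below illustrates this with made-up numbers; the 100 densities of 1e-5 and the prior of 0.5 are assumptions for the example.

```python
import math

# Hypothetical per-feature densities: 100 features, each contributing 1e-5
densities = [1e-5] * 100
prior = 0.5

# Raw product as in step 2: far below the smallest representable double
product = prior * math.prod(densities)

# Same quantity in log space: the product becomes a sum of logs
log_score = math.log(prior) + sum(math.log(d) for d in densities)

print(product)
print(log_score)
```

Because the logarithm is monotonic, the class with the largest log score is also the class with the largest product, so the arg max in step 3 is unchanged.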

4. Further reading

- How to Develop a Naive Bayes Classifier from Scratch in Python

- A Gentle Introduction to Bayes Theorem for Machine Learning

- Better Naive Bayes: 12 Tips To Get The Most From The Naive Bayes Algorithm

- Statistical Learning Methods exercise solutions (DataWhale)