Object Detection Algorithms: Improving YOLOv5/YOLOv7 with NAMAttention

Follow the "PandaCVer" WeChat official account

Deep learning tricks, delivered first

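Contents

NAMAttention: a new way to compute attention that reuses BatchNorm scale factors instead of adding extra weights!

(I) Background

1. NAM structure

2. Experimental results

(II) Improving YOLOv5/YOLOv7 with NAMAttention

1. Configure common.py

2. Configure yolo.py

3. Configure yolov5_NAM.yaml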

NAMAttention: a new way to compute attention that reuses BatchNorm scale factors instead of adding extra weights!

(I) Background

Paper: NAM: Normalization-based Attention Module

Paper link: https://arxiv.org/abs/2111.12419

Code: https://github.com/Christian-lyc/NAM

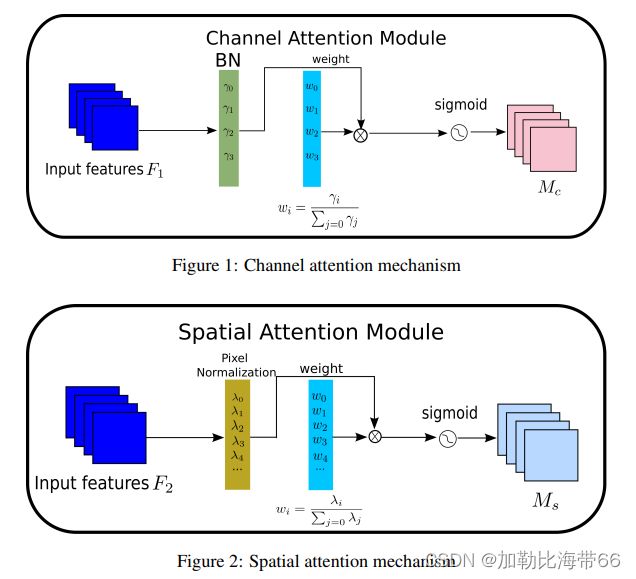

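The authors propose a normalization-based attention module (NAM) that suppresses less salient features by lowering their weights. NAM applies a sparsity penalty to the attention weights, which makes them more computationally efficient while keeping the same performance. In comparisons with other attention mechanisms on ResNet and MobileNet, NAM reaches higher accuracy.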
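To make the down-weighting concrete, the pure-Python toy below traces NAM's channel branch for a single pixel. It assumes, as the common.py code in part (II) does, that a channel's importance is its BatchNorm scale factor γ divided by the sum of all |γ|. The helper name `nam_channel_gate` is hypothetical, and for simplicity BatchNorm is evaluated with fixed running statistics rather than batch statistics.

```python
import math


def nam_channel_gate(x, mean, var, gamma, beta, eps=1e-5):
    """Toy trace of NAM's channel branch for one pixel (hypothetical helper).

    All arguments are per-channel lists of equal length C. BatchNorm is applied
    in eval mode with the given running mean/var instead of batch statistics.
    """
    # 1) BatchNorm: gamma * (x - mean) / sqrt(var + eps) + beta
    x_bn = [g * (xi - m) / math.sqrt(v + eps) + b
            for xi, m, v, g, b in zip(x, mean, var, gamma, beta)]
    # 2) Channel importance: |gamma_c| normalized so the weights sum to 1
    total = sum(abs(g) for g in gamma)
    w = [abs(g) / total for g in gamma]
    # 3) Weight the normalized response, squash it with a sigmoid,
    #    and use the result to gate the ORIGINAL input x
    return [xi / (1.0 + math.exp(-wi * bi)) for xi, wi, bi in zip(x, w, x_bn)]


if __name__ == "__main__":
    # Two channels with identical input; channel 0 has gamma = 0
    out = nam_channel_gate(x=[2.0, 2.0], mean=[0.0, 0.0], var=[1.0, 1.0],
                           gamma=[0.0, 1.0], beta=[0.0, 0.0])
    print(out)  # channel 0 is gated by exactly 0.5, channel 1 by about 0.88
```

A channel whose γ has been pushed to zero by the sparsity penalty ends up with a constant gate of 0.5, so its response can no longer be emphasized relative to the other channels.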

1. NAM structure
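The module's structure can be written compactly. The equations below follow our reading of the paper (arXiv:2111.12419), so check them against the original: γ and λ are the BatchNorm scale factors of the channel and spatial branches, F_1 and F_2 are their input features, p is the penalty weight, and g(·) is the L1 norm that produces the sparsity penalty. The absolute values in W_γ and W_λ follow the common.py code.

$$
B_{out} = \mathrm{BN}(B_{in}) = \gamma \frac{B_{in} - \mu_{\mathcal{B}}}{\sqrt{\sigma_{\mathcal{B}}^{2} + \epsilon}} + \beta
$$

$$
M_c = \mathrm{sigmoid}\big(W_\gamma(\mathrm{BN}(F_1))\big), \qquad W_\gamma = \frac{|\gamma_i|}{\sum_j |\gamma_j|}
$$

$$
M_s = \mathrm{sigmoid}\big(W_\lambda(\mathrm{BN}_s(F_2))\big), \qquad W_\lambda = \frac{|\lambda_i|}{\sum_j |\lambda_j|}
$$

$$
\mathrm{Loss} = \sum_{(x,y)} l\big(f(x, W), y\big) + p \sum g(\gamma) + p \sum g(\lambda), \qquad g(\cdot) = \lVert \cdot \rVert_1
$$

In the common.py code, only the channel branch M_c is built, and it is multiplied element-wise with the input feature map F_1.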

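2. Experimental results

The authors compared NAM with SE, BAM, CBAM and TAM on ResNet and MobileNet, using the CIFAR-100 and ImageNet datasets, with the same preprocessing and training settings for every attention mechanism. On CIFAR-100, NAM's channel attention or spatial attention alone already outperforms the other methods. On ImageNet, combining NAM's channel and spatial attention outperforms the other methods.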

(II) Improving YOLOv5/YOLOv7 with NAMAttention

As with other attention mechanisms, the modification takes three steps:

1. Configure common.py

Add the NAM code.

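```python
# NAM (add to models/common.py; torch and nn are already imported there)
import torch
import torch.nn as nn


class Channel_Att(nn.Module):
    """Channel branch of NAM: BatchNorm scale factors act as channel importance weights."""

    def __init__(self, channels, t=16):  # t is unused
        super(Channel_Att, self).__init__()
        self.channels = channels
        self.bn2 = nn.BatchNorm2d(self.channels, affine=True)

    def forward(self, x):
        residual = x
        x = self.bn2(x)
        # |gamma| normalized to sum to 1; .data detaches these weights from the graph
        weight_bn = self.bn2.weight.data.abs() / torch.sum(self.bn2.weight.data.abs())
        x = x.permute(0, 2, 3, 1).contiguous()  # NCHW -> NHWC so weight_bn broadcasts over C
        x = torch.mul(weight_bn, x)
        x = x.permute(0, 3, 1, 2).contiguous()  # back to NCHW
        x = torch.sigmoid(x) * residual  # gate the original input
        return x


class NAM(nn.Module):
    # out_channels and no_spatial are accepted but unused: only the channel branch is built
    def __init__(self, channels, out_channels=None, no_spatial=True):
        super(NAM, self).__init__()
        self.Channel_Att = Channel_Att(channels)

    def forward(self, x):
        x_out1 = self.Channel_Att(x)
        return x_out1
```

2. Configure yolo.py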

In `parse_model()` in yolo.py, add a branch that handles NAM.

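```python
# NAM (inside the layer loop of parse_model(), next to the other `elif m is ...` branches;
# NAM is visible here through `from models.common import *`)
elif m is NAM:
    c1, c2 = ch[f], args[0]  # input channels of the previous layer, requested output channels
    if c2 != no:
        c2 = make_divisible(c2 * gw, 8)  # apply width_multiple, as for Conv/C3
    args = [c1, *args[1:]]  # the module is built as NAM(c1, *remaining yaml args)
```

3. Configure yolov5_NAM.yaml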

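NAM can be inserted flexibly, in either the backbone or the neck. In the example below, a NAM layer follows each of the three C3 outputs that feed Detect: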

```yaml
# parameters: nc, depth_multiple and width_multiple go here (copy them from the base yolov5*.yaml)

# anchors
anchors:
  - [10,13, 16,30, 33,23]  # P3/8
  - [30,61, 62,45, 59,119]  # P4/16
  - [116,90, 156,198, 373,326]  # P5/32

# YOLOv5 backbone
backbone:
  # [from, number, module, args]
  [[-1, 1, Focus, [64, 3]],  # 0-P1/2
   [-1, 1, Conv, [128, 3, 2]],  # 1-P2/4
   [-1, 3, C3, [128]],
   [-1, 1, Conv, [256, 3, 2]],  # 3-P3/8
   [-1, 9, C3, [256]],
   [-1, 1, Conv, [512, 3, 2]],  # 5-P4/16
   [-1, 9, C3, [512]],
   [-1, 1, Conv, [1024, 3, 2]],  # 7-P5/32
   [-1, 1, SPP, [1024, [5, 9, 13]]],
   [-1, 3, C3, [1024, False]],  # 9
  ]

# YOLOv5 head
head:
  [[-1, 1, Conv, [512, 1, 1]],
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],
   [[-1, 6], 1, Concat, [1]],  # cat backbone P4
   [-1, 3, C3, [512, False]],  # 13

   [-1, 1, Conv, [256, 1, 1]],
   [-1, 1, nn.Upsample, [None, 2, 'nearest']],
   [[-1, 4], 1, Concat, [1]],  # cat backbone P3
   [-1, 3, C3, [256, False]],  # 17 (P3/8-small)
   [-1, 3, NAM, [256,256]],  # 18

   [-1, 1, Conv, [256, 3, 2]],
   [[-1, 14], 1, Concat, [1]],  # cat head P4
   [-1, 3, C3, [512, False]],  # 21 (P4/16-medium)
   [-1, 3, NAM, [512,512]],  # 22

   [-1, 1, Conv, [512, 3, 2]],
   [[-1, 10], 1, Concat, [1]],  # cat head P5
   [-1, 3, C3, [1024, False]],  # 25 (P5/32-large)
   [-1, 3, NAM, [1024,1024]],  # 26

   [[18, 22, 26], 1, Detect, [nc, anchors]],  # Detect(P3, P4, P5)
  ]
```
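Because each inserted NAM layer shifts the index of every later layer by one, it is easy to get the `from` fields or the Detect inputs wrong. The sketch below replays, in simplified form, the channel bookkeeping that `parse_model()` performs for this yaml, assuming width_multiple = 0.50 (yolov5s). The names `LAYERS` and `trace_channels` are hypothetical; only `make_divisible` mirrors YOLOv5's helper, and details such as C3 repeat counts are ignored because they do not change channel counts.

```python
import math


def make_divisible(x, divisor):
    # Same rounding as YOLOv5's make_divisible: round up to a multiple of divisor
    return math.ceil(x / divisor) * divisor


# (from, module, first yaml arg) for every layer of the yaml above, Detect omitted
LAYERS = [
    (-1, "Focus", 64), (-1, "Conv", 128), (-1, "C3", 128), (-1, "Conv", 256),
    (-1, "C3", 256), (-1, "Conv", 512), (-1, "C3", 512), (-1, "Conv", 1024),
    (-1, "SPP", 1024), (-1, "C3", 1024),                             # backbone 0-9
    (-1, "Conv", 512), (-1, "Upsample", None), ([-1, 6], "Concat", None),
    (-1, "C3", 512), (-1, "Conv", 256), (-1, "Upsample", None),
    ([-1, 4], "Concat", None), (-1, "C3", 256), (-1, "NAM", 256),     # 10-18
    (-1, "Conv", 256), ([-1, 14], "Concat", None), (-1, "C3", 512),
    (-1, "NAM", 512),                                                 # 19-22
    (-1, "Conv", 512), ([-1, 10], "Concat", None), (-1, "C3", 1024),
    (-1, "NAM", 1024),                                                # 23-26
]


def trace_channels(layers, gw):
    """Return {layer index: (module, input channels, output channels)} (simplified)."""
    ch, out = [], {}
    for i, (f, m, c) in enumerate(layers):
        if m == "Concat":
            c1 = c2 = sum(ch[x] for x in f)  # channels of all inputs are stacked
        elif m == "Upsample":
            c1 = c2 = ch[f]  # upsampling keeps the channel count
        else:
            c1 = ch[f] if ch else 3  # the first layer sees the 3-channel image
            c2 = make_divisible(c * gw, 8)  # Conv/C3/SPP/Focus/NAM branch
        ch.append(c2)
        out[i] = (m, c1, c2)
    return out


if __name__ == "__main__":
    info = trace_channels(LAYERS, gw=0.50)
    print([(i, c1) for i, (m, c1, _) in info.items() if m == "NAM"])
    # -> [(18, 128), (22, 256), (26, 512)]
```

The trace shows the NAM layers at indices 18, 22 and 26, matching the Detect inputs, and each NAM keeps its input channel count. The second value in `[256,256]` and the others is passed to NAM as `out_channels`, which the module ignores.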