pytorch下使用tensorboardX可视化

环境:py36+pytorch1.10.2+tensorboardx2.5

1.配置tensorboardX环境

1.进入py36环境,pip install tensorboardX

2.conda 创建一个新的环境 假设名为tf ,在tf中pip install tensorboard

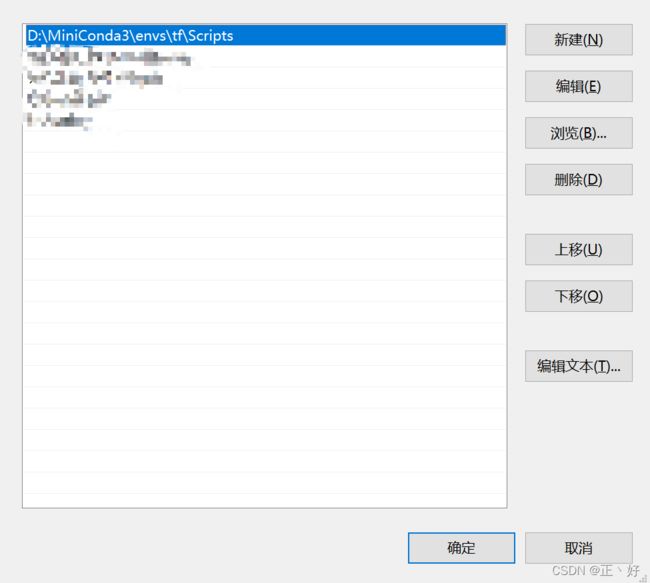

3.找到tf环境所在路径,如下列代码所示

D:\MiniConda3\envs\tf\Scripts4.将此路径放到环境变量的Path中

到此为止就可以使用tf环境中的tensorboard.exe去实现其他pytorch环境中的项目了

2.tensorboardX使用简介

# -*- coding:utf-8 -*-

# @Time:2022/3/10 - 9:25

# @Author: Yongzheng

import torch

from tensorboardX import SummaryWriter

from torch import nn

from torch.utils.data import DataLoader

from torchvision import datasets

from torchvision.transforms import ToTensor

# ==================================================1.数据集==================================================#

# Download training data from open datasets.

training_data = datasets.FashionMNIST(

root="dataset", # 数据集保存路径

train=True,

download=True,

transform=ToTensor(),

)

# Download test data from open datasets.

test_data = datasets.FashionMNIST(

root="dataset", # 数据集保存路径

train=False,

download=True,

transform=ToTensor(),

)

# ==================================================2.超参数==================================================#

batch_size = 64

epochs = 9

# Get cpu or gpu device for training.

device = "cuda" if torch.cuda.is_available() else "cpu"

print(f"Using {device} device")

# ==================================================3.模型定义==================================================#

# Define model

class NeuralNetwork(nn.Module):

def __init__(self):

super(NeuralNetwork, self).__init__()

self.flatten = nn.Flatten()

self.linear_relu_stack = nn.Sequential(

nn.Linear(28 * 28, 512),

nn.ReLU(),

nn.Linear(512, 512),

nn.ReLU(),

nn.Linear(512, 10)

)

# self.linear1 = nn.Linear(28*28,512)

# self.relu1 = nn.ReLU()

# self.linear2 = nn.Linear(512,512)

# self.relu2 = nn.ReLU()

# self.out = nn.Linear(512,10)

def forward(self, x):

x = self.flatten(x)

logits = self.linear_relu_stack(x)

return logits

class LeNet(nn.Module):

def __init__(self):

super(LeNet, self).__init__()

self.conv1 = nn.Sequential(

nn.Conv2d(in_channels=1, out_channels=32, kernel_size=3, stride=1, padding=2),

nn.ReLU(),

nn.MaxPool2d(2, 2)

)

self.conv2 = nn.Sequential(

nn.Conv2d(32, 64, 5),

nn.ReLU(),

nn.MaxPool2d(2, 2)

)

self.fc1 = nn.Sequential(

nn.Linear(64 * 5 * 5, 128),

nn.BatchNorm1d(128),

nn.ReLU()

)

self.fc2 = nn.Sequential(

nn.Linear(128, 64),

nn.BatchNorm1d(64), # 加快收敛速度的方法(注:批标准化一般放在全连接层后面,激活函数层的前面)

nn.ReLU()

)

self.fc3 = nn.Linear(64, 10)

def forward(self, x):

x = self.conv1(x)

x = self.conv2(x)

x = x.view(x.size()[0], -1)

x = self.fc1(x)

x = self.fc2(x)

x = self.fc3(x)

return x

# ==================================================4.训练过程定义==================================================#

def train(dataloader, model, loss_fn, optimizer,epoch):

size = len(dataloader.dataset)

model.train()

loss_sigma = 0.0 # 记录一个epoch的loss之和

correct_sigma = 0.0 # 准确率

total=0 # 训练过的样本总数

for batch, (X, y) in enumerate(dataloader):

X, y = X.to(device), y.to(device)

# Compute prediction error

pred = model(X)

loss = loss_fn(pred, y)

# 反向传播

optimizer.zero_grad()

loss.backward()

optimizer.step()

# 计算总损失和平均值

loss_sigma+=loss.item()

_,pred2class = torch.max(pred,1)

correct_sigma += (pred2class == y).cpu().squeeze().sum().numpy()

total += y.size(0)

if batch % 100 == 0:

loss, current = loss.item(), batch * len(X)

print(f"loss: {loss:>7f} [{current:>5d}/{size:>5d}]")

loss_avg = loss_sigma / len(dataloader)

loss_sigma = 0.0

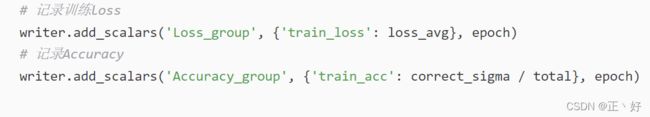

# 记录训练loss

writer.add_scalars('Loss_group', {'train_loss': loss_avg}, epoch)

# 记录Accuracy

writer.add_scalars('Accuracy_group', {'train_acc': correct_sigma / total}, epoch)

def test(dataloader, model, loss_fn):

size = len(dataloader.dataset)

num_batches = len(dataloader)

model.eval()

test_loss, correct = 0, 0

with torch.no_grad():

for X, y in dataloader:

X, y = X.to(device), y.to(device)

pred = model(X)

test_loss += loss_fn(pred, y).item()

correct += (pred.argmax(1) == y).type(torch.float).sum().item()

test_loss /= num_batches

correct /= size

print(f"Test Error: \n Accuracy: {(100 * correct):>0.1f}%, Avg loss: {test_loss:>8f} \n")

# ==================================================5.优化器和损失函数==================================================#

model = LeNet().to(device)

print(model)

loss_fn = nn.CrossEntropyLoss() # 损失函数

# optimizer = torch.optim.SGD(model.parameters(), lr=1e-3) # 反向传播

optimizer = torch.optim.SGD(model.parameters(), lr=1e-3, momentum=0.9)

# Create data loaders.

train_dataloader = DataLoader(training_data, batch_size=batch_size)

test_dataloader = DataLoader(test_data, batch_size=batch_size)

# ==================================================6.训练==================================================#

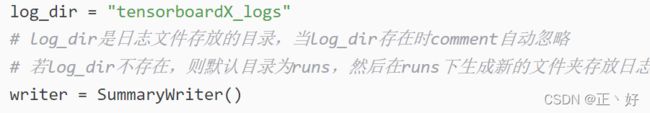

log_dir = "tensorboardX_logs"

# log_dir是日志文件存放的目录,当log_dir存在时comment自动忽略

# 若log_dir不存在,则默认目录为runs,然后在runs下生成新的文件夹存放日志,如Mar17_14-47-47_LAPTOP-KI10RP71-test,后缀‘-test’就是comment指定的内容

writer = SummaryWriter()

for t in range(epochs):

print(f"Epoch {t + 1}\n-------------------------------")

train(train_dataloader, model, loss_fn, optimizer,t+1)

test(test_dataloader, model, loss_fn)

print("Done!")

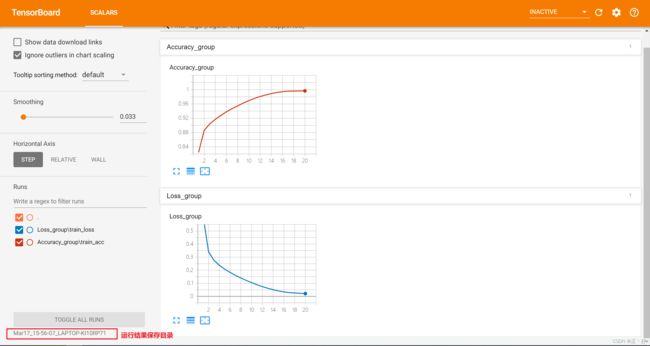

代码中的如下部分就是在代码中使用tensorboardX

writer.add_scalars(table_name,y轴数据,x轴数据)

以epoch为x轴变量,每个epoch的平均损失为y轴数据,生成一个图像

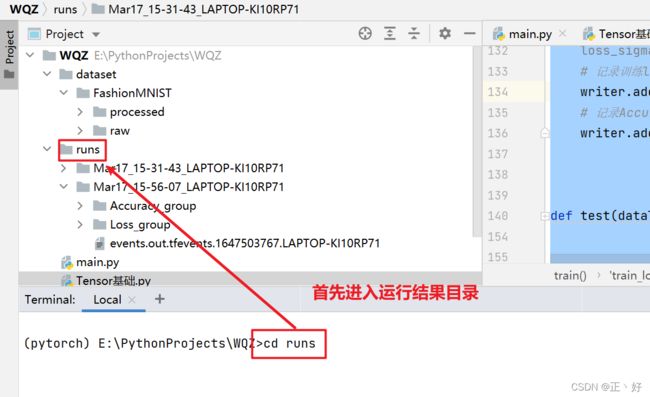

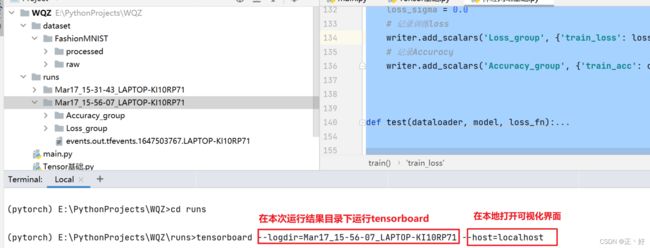

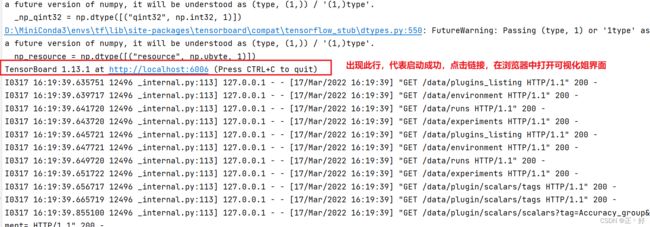

3.tensorboardX可视化

1.切换目录

2.启动tensorboard

3.查看可视化结果