chineseocr test: detailed deployment steps (without the web interface)

Original project:

https://github.com/chineseocr/chineseocr

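chineseocr displays its detection results on a web page, so it also requires web-related dependencies. My hardware is an NVIDIA AGX Orin and I only need to view the detection results locally, so I did the following: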

Find test.ipynb in the source project and rewrite it as test.py.
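You can also do the conversion mechanically. If Jupyter is installed, `jupyter nbconvert --to script test.ipynb` handles it. On a headless board without Jupyter, the minimal sketch below pulls the code cells out of the notebook's JSON. It relies on the fact that an .ipynb file has a top-level `cells` list whose entries carry `cell_type` and `source`. The function name `notebook_to_script` is hypothetical. IPython magics and shell escapes (lines starting with `%` or `!`) are only commented out, not translated.

```python
import json


def notebook_to_script(ipynb_path, py_path):
    """Concatenate the code cells of a .ipynb file into a plain .py script.

    Returns the number of code cells written.
    """
    with open(ipynb_path, encoding="utf-8") as f:
        nb = json.load(f)

    chunks = []
    for cell in nb.get("cells", []):
        if cell.get("cell_type") != "code":
            continue  # skip markdown and raw cells
        src = cell.get("source", "")
        if isinstance(src, list):  # notebooks usually store source as a list of lines
            src = "".join(src)
        lines = []
        for line in src.splitlines():
            # IPython magics / shell escapes are not valid Python, so comment them out
            if line.lstrip().startswith(("%", "!")):
                line = "# " + line
            lines.append(line)
        chunks.append("\n".join(lines))

    with open(py_path, "w", encoding="utf-8") as f:
        f.write("\n\n".join(chunks) + "\n")
    return len(chunks)
```

Usage: `notebook_to_script("test.ipynb", "test.py")`. The result still needs the manual edits described in this post, such as removing `import web` and adding the TF 2.x fixes.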

First, download the models linked from the original project page and put them into the project's models folder.
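Before running, it can help to confirm that the weights are where config.py expects them. The script only prints a warning when the CRNN weights (`ocrModel`) are missing, and an interrupted download can leave an empty file behind. The minimal sketch below uses a hypothetical helper, `check_model_files`. You pass it the model paths yourself. No filenames are hard-coded, because they depend on the project version and your config.py.

```python
import os


def check_model_files(paths):
    """Split model paths into (missing, empty).

    An empty file usually means a failed or interrupted download.
    """
    missing, empty = [], []
    for p in paths:
        if not os.path.isfile(p):
            missing.append(p)
        elif os.path.getsize(p) == 0:
            empty.append(p)
    return missing, empty
```

For example, calling `check_model_files([ocrModel])` after the engine selection in test.py reports the CRNN weights before `load_weights` is attempted.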

import cv2

import time

from PIL import Image

import numpy as np

import os

import json

import time

#import web

import numpy as np

from PIL import Image

from config import *

from apphelper.image import union_rbox,adjust_box_to_origin,base64_to_PIL

from application import trainTicket,idcard

from text.opencv_dnn_detect import angle_detect

from main import TextOcrModel

print("aaaaaa: ",yoloTextFlag)#打印yoloTextFlag可以看到当前使用的什么文字检测引擎,在config.py更改文字检测引擎

if yoloTextFlag =='keras' or AngleModelFlag=='tf' or ocrFlag=='keras':

if GPU:

os.environ["CUDA_VISIBLE_DEVICES"] = str(GPUID)

import tensorflow as tf

#from keras import backend as K

from tensorflow.compat.v1.keras import backend as K

tf.compat.v1.disable_eager_execution()

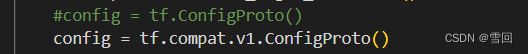

#config = tf.ConfigProto()

config = tf.compat.v1.ConfigProto()

config.gpu_options.allocator_type = 'BFC'

config.gpu_options.per_process_gpu_memory_fraction = 0.3## GPU最大占用量

config.gpu_options.allow_growth = True##GPU是否可动态增加

K.set_session(tf.compat.v1.Session(config=config))

#K.get_session().run(tf.global_variables_initializer())

K.get_session().run(tf.compat.v1.global_variables_initializer())

else:

##CPU启动

os.environ["CUDA_VISIBLE_DEVICES"] = ''

if yoloTextFlag=='opencv':

scale,maxScale = IMGSIZE

from text.opencv_dnn_detect import text_detect

elif yoloTextFlag=='darknet':

scale,maxScale = IMGSIZE

from text.darknet_detect import text_detect

elif yoloTextFlag=='keras':

scale,maxScale = IMGSIZE[0],2048

from text.keras_detect import text_detect

else:

print( "err,text engine in keras\opencv\darknet")

if ocr_redis:

##多任务并发识别

from helper.redisbase import redisDataBase

ocr = redisDataBase().put_values

else:

from crnn.keys import alphabetChinese,alphabetEnglish

if ocrFlag=='keras':

from crnn.network_keras import CRNN

if chineseModel:

alphabet = alphabetChinese

if LSTMFLAG:

ocrModel = ocrModelKerasLstm

else:

ocrModel = ocrModelKerasDense

else:

ocrModel = ocrModelKerasEng

alphabet = alphabetEnglish

LSTMFLAG = True

elif ocrFlag=='torch':

from crnn.network_torch import CRNN

if chineseModel:

alphabet = alphabetChinese

if LSTMFLAG:

ocrModel = ocrModelTorchLstm

else:

ocrModel = ocrModelTorchDense

else:

ocrModel = ocrModelTorchEng

alphabet = alphabetEnglish

LSTMFLAG = True

elif ocrFlag=='opencv':

from crnn.network_dnn import CRNN

ocrModel = ocrModelOpencv

alphabet = alphabetChinese

else:

print( "err,ocr engine in keras\opencv\darknet")

nclass = len(alphabet)+1

if ocrFlag=='opencv':

crnn = CRNN(alphabet=alphabet)

else:

crnn = CRNN( 32, 1, nclass, 256, leakyRelu=False,lstmFlag=LSTMFLAG,GPU=GPU,alphabet=alphabet)

if os.path.exists(ocrModel):

crnn.load_weights(ocrModel)

else:

print("download model or tranform model with tools!")

ocr = crnn.predict_job

model = TextOcrModel(ocr,text_detect,angle_detect)

from apphelper.image import xy_rotate_box,box_rotate,solve

def plot_box(img,boxes):

blue = (0, 0, 0) #18

tmp = np.copy(img)

for box in boxes:

cv2.rectangle(tmp, (int(box[0]),int(box[1])), (int(box[2]), int(box[3])), blue, 1) #19

return Image.fromarray(tmp)

def plot_boxes(img,angle, result,color=(0,0,0)):

tmp = np.array(img)

c = color

h,w = img.shape[:2]

thick = int((h + w) / 300)

i = 0

if angle in [90,270]:

imgW,imgH = img.shape[:2]

else:

imgH,imgW= img.shape[:2]

for line in result:

cx =line['cx']

cy = line['cy']

degree =line['degree']

w = line['w']

h = line['h']

x1,y1,x2,y2,x3,y3,x4,y4 = xy_rotate_box(cx, cy, w, h, degree/180*np.pi)

x1,y1,x2,y2,x3,y3,x4,y4 = box_rotate([x1,y1,x2,y2,x3,y3,x4,y4],angle=(360-angle)%360,imgH=imgH,imgW=imgW)

cx =np.mean([x1,x2,x3,x4])

cy = np.mean([y1,y2,y3,y4])

cv2.line(tmp,(int(x1),int(y1)),(int(x2),int(y2)),c,1)

cv2.line(tmp,(int(x2),int(y2)),(int(x3),int(y3)),c,1)

cv2.line(tmp,(int(x3),int(y3)),(int(x4),int(y4)),c,1)

cv2.line(tmp,(int(x4),int(y4)),(int(x1),int(y1)),c,1)

mess=str(i)

cv2.putText(tmp, mess, (int(cx), int(cy)),0, 1e-3 * h, c, thick // 2)

i+=1

cv2.imwrite("/home/nvidia/chineseocr-app/output/1.jpg",tmp)

return Image.fromarray(tmp).convert('RGB')

def plot_rboxes(img,boxes,color=(0,0,0)):

tmp = np.array(img)

c = color

h,w = img.shape[:2]

thick = int((h + w) / 300)

i = 0

for box in boxes:

x1,y1,x2,y2,x3,y3,x4,y4 = box

cx =np.mean([x1,x2,x3,x4])

cy = np.mean([y1,y2,y3,y4])

cv2.line(tmp,(int(x1),int(y1)),(int(x2),int(y2)),c,1)

cv2.line(tmp,(int(x2),int(y2)),(int(x3),int(y3)),c,1)

cv2.line(tmp,(int(x3),int(y3)),(int(x4),int(y4)),c,1)

cv2.line(tmp,(int(x4),int(y4)),(int(x1),int(y1)),c,1)

mess=str(i)

cv2.putText(tmp, mess, (int(cx), int(cy)),0, 1e-3 * h, c, thick // 2)

i+=1

cv2.imwrite("/home/nvidia/chineseocr-app/output/2.jpg",tmp)

return Image.fromarray(tmp).convert('RGB')

p = '/home/nvidia/chineseocr-app/test/zw2_0.jpg'

img = cv2.imread(p)

h,w = img.shape[:2]

timeTake = time.time()

scale=608

maxScale=2048

result,angle= model.model(img,

detectAngle=True,##是否进行文字方向检测

scale=scale,

maxScale=maxScale,

MAX_HORIZONTAL_GAP=80,##字符之间的最大间隔,用于文本行的合并

MIN_V_OVERLAPS=0.6,

MIN_SIZE_SIM=0.6,

TEXT_PROPOSALS_MIN_SCORE=0.1,

TEXT_PROPOSALS_NMS_THRESH=0.7,

TEXT_LINE_NMS_THRESH = 0.9,##文本行之间测iou值

LINE_MIN_SCORE=0.1,

leftAdjustAlph=0,##对检测的文本行进行向左延伸

rightAdjustAlph=0.1,##对检测的文本行进行向右延伸

)

timeTake = time.time()-timeTake

print("angle: ",angle)

print('It take:{}s'.format(timeTake))

for line in result:

print(line['text'])

plot_boxes(img,angle, result,color=(0,0,0))

#cv2.imwrite("/home/nvidia/chineseocr-app/output/1.jpg",img1)

boxes,scores = model.detect_box(img,608,2048)

plot_box(img,boxes)

#cv2.imwrite("/home/nvidia/chineseocr-app/output/2.jpg",img2)
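plot_boxes relies on `xy_rotate_box` from apphelper.image to turn each result line's centre (`cx`, `cy`), size (`w`, `h`) and `degree` into four corners. The repo's implementation is not reproduced here. The sketch below is an independent version of the same geometry: it rotates the four half-extent offsets about the centre. Its corner order and angle sign convention may therefore differ from the repo's, and `rotated_box_corners` is a hypothetical name.

```python
import math


def rotated_box_corners(cx, cy, w, h, angle_rad):
    """Corners of a w x h box centred at (cx, cy), rotated by angle_rad.

    Returns (x1, y1, x2, y2, x3, y3, x4, y4), starting at the unrotated
    top-left corner and going clockwise in image coordinates (y points down).
    """
    cos_a, sin_a = math.cos(angle_rad), math.sin(angle_rad)
    offsets = [(-w / 2, -h / 2), (w / 2, -h / 2), (w / 2, h / 2), (-w / 2, h / 2)]
    coords = []
    for dx, dy in offsets:
        # standard 2-D rotation of the offset, then shift back to the centre
        coords.append(cx + dx * cos_a - dy * sin_a)
        coords.append(cy + dx * sin_a + dy * cos_a)
    return tuple(coords)
```

As in plot_boxes, a `degree` value from the OCR result would be converted with `degree / 180 * math.pi` before calling it. Rotating the offsets rather than the absolute corners keeps the centre fixed, so the mean of the four corners is still (`cx`, `cy`), which is what plot_boxes uses to place the index label.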

config.py

config.py offers a choice of three text detection engines (keras, opencv, darknet). I started with the keras engine.
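The engine flags in config.py decide which branches of test.py run. The sketch below mirrors those conditions so you can check a combination before loading anything, including whether test.py's own branches will import TensorFlow. The flag names, accepted values and module names come from the script. `engine_plan` itself is a hypothetical helper.

```python
TEXT_ENGINES = ("keras", "opencv", "darknet")  # values accepted for yoloTextFlag
OCR_ENGINES = ("keras", "torch", "opencv")     # values accepted for ocrFlag

DETECTOR_MODULES = {"keras": "text.keras_detect",
                    "opencv": "text.opencv_dnn_detect",
                    "darknet": "text.darknet_detect"}
CRNN_MODULES = {"keras": "crnn.network_keras",
                "torch": "crnn.network_torch",
                "opencv": "crnn.network_dnn"}


def engine_plan(yoloTextFlag, ocrFlag, AngleModelFlag):
    """Mirror test.py's dispatch: validate the flags and report what gets loaded."""
    if yoloTextFlag not in TEXT_ENGINES:
        raise ValueError("yoloTextFlag must be one of %s" % (TEXT_ENGINES,))
    if ocrFlag not in OCR_ENGINES:
        raise ValueError("ocrFlag must be one of %s" % (OCR_ENGINES,))
    return {
        # same condition that guards the TensorFlow/Keras session set-up in test.py
        "needs_tensorflow": (yoloTextFlag == "keras" or AngleModelFlag == "tf"
                             or ocrFlag == "keras"),
        "detector_module": DETECTOR_MODULES[yoloTextFlag],
        "crnn_module": CRNN_MODULES[ocrFlag],
    }
```

For example, `engine_plan("opencv", "torch", "opencv")` reports that test.py's TensorFlow branch is skipped. That matters for the TF 2.x compatibility issues described below.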

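My TensorFlow version is 2.10.0. The source code appears to target a much older release (TensorFlow 1.x), and many functions are now called differently. For example, this error came up again and again while debugging:

AttributeError: module 'tensorflow' has no attribute 'ConfigProto'

These functions moved when TensorFlow was upgraded. The fix is to insert compat.v1 between tf and the function name, so tf.ConfigProto becomes tf.compat.v1.ConfigProto.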
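TensorFlow ships an official converter for this kind of rename, `tf_upgrade_v2` (for example `tf_upgrade_v2 --infile test.py --outfile test_v2.py`). For a handful of calls, the regex-based sketch below performs the same mechanical `tf.X` to `tf.compat.v1.X` rewrite. The symbol list is an assumption: it covers only the TF 1.x calls this script hits and is not exhaustive. Import changes such as the Keras backend are not handled.

```python
import re

# TF 1.x names used in this script that live under tf.compat.v1 in TF 2.x (not exhaustive)
TF1_SYMBOLS = ("ConfigProto", "Session", "global_variables_initializer")


def to_compat_v1(source):
    """Rewrite tf.<SYMBOL> to tf.compat.v1.<SYMBOL> for the symbols listed above."""
    # \b on both sides avoids touching longer names such as tf.SessionRunHook,
    # and already-converted tf.compat.v1.X is left alone because "tf." must be
    # followed directly by the symbol name.
    pattern = r"\btf\.(" + "|".join(TF1_SYMBOLS) + r")\b"
    return re.sub(pattern, r"tf.compat.v1.\1", source)
```

Running it twice is harmless, so it can be applied to a partly fixed file. Graph-mode code like this script also needs `tf.compat.v1.disable_eager_execution()` before creating a session.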

There was also an error when using numpy's newaxis to add a dimension:

TypeError: list indices must be integers or slices, not tuple

I don't know the cause, but after switching to expand_dims it worked.
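A likely cause, assumed here because the failing line is not shown: that message comes from Python's built-in list, not from NumPy. `x[:, np.newaxis]` passes a tuple as the index, which an ndarray accepts but a plain list does not. If `x` is a list at that point, the indexing fails. `np.expand_dims` converts its input to an array first, which would explain why the switch works. A minimal reproduction:

```python
import numpy as np

scores = [0.9, 0.8, 0.7]  # a plain Python list, not an ndarray

try:
    scores[:, np.newaxis]  # tuple index on a list
except TypeError as err:
    print(err)  # list indices must be integers or slices, not tuple

col_a = np.expand_dims(scores, axis=1)     # converts the list to an array first
col_b = np.asarray(scores)[:, np.newaxis]  # equivalent explicit conversion
print(col_a.shape, np.array_equal(col_a, col_b))
```

Both fixes produce the same (3, 1) column. `np.asarray(x)[:, np.newaxis]` keeps the original newaxis style if you prefer it.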


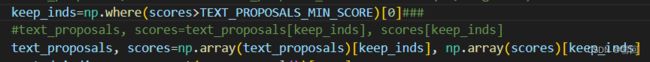

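Error: TypeError: only integer scalar arrays can be converted to a scalar index

It seems that a numpy version change means this can no longer be indexed directly. Rewriting it as in the third line of the screenshot fixed it.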
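It is hard to say exactly what changed without the screenshot's code. One common way to hit this error is indexing a Python list with a NumPy integer array, such as the array of kept indices from a filtering step. A list accepts only a single integer index, so NumPy is asked to turn the whole array into one scalar and refuses. Converting the list to an array first, or indexing element by element, avoids the error. A minimal sketch under that assumption (the box values are made up for illustration):

```python
import numpy as np

boxes = [[0, 0, 10, 10], [5, 5, 20, 20], [1, 1, 2, 2]]  # Python list of boxes
keep = np.array([0, 2])                                 # integer index array

try:
    boxes[keep]  # a list cannot be fancy-indexed
except TypeError as err:
    print(err)  # only integer scalar arrays can be converted to a scalar index

kept_arr = np.asarray(boxes)[keep]    # fancy indexing works on an ndarray
kept_list = [boxes[i] for i in keep]  # or stay with a list, one index at a time
print(kept_arr.tolist(), kept_list)
```

Converting to an array is usually the better choice when the rest of the code does NumPy arithmetic on the boxes. The list comprehension leaves the surrounding code's types unchanged.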

With these fixes, the opencv and darknet engines both work. I have not got the keras engine working yet, so I have set it aside for now.

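In testing, OCR with angle (text orientation) detection enabled performed poorly. Turning angle detection off actually improved accuracy.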
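To put a number on "accuracy improved", one option is to run the same images with `detectAngle=True` and `detectAngle=False` and compare each output against a hand-typed ground truth. The sketch below computes a character error rate: Levenshtein edit distance divided by the reference length. This is a generic metric, not something chineseocr provides, and `edit_distance` and `char_error_rate` are hypothetical names. The prediction string could be built as `''.join(line['text'] for line in result)`.

```python
def edit_distance(a, b):
    """Levenshtein distance between two strings (insert/delete/substitute cost 1)."""
    prev = list(range(len(b) + 1))  # distances from "" to each prefix of b
    for i, ca in enumerate(a, start=1):
        cur = [i]
        for j, cb in enumerate(b, start=1):
            cur.append(min(prev[j] + 1,                # delete ca
                           cur[j - 1] + 1,             # insert cb
                           prev[j - 1] + (ca != cb)))  # substitute, or match for free
        prev = cur
    return prev[-1]


def char_error_rate(prediction, reference):
    """Edit distance normalised by reference length; 0.0 means a perfect match."""
    if not reference:
        # no reference text: any output counts as fully wrong
        return 0.0 if not prediction else 1.0
    return edit_distance(prediction, reference) / len(reference)
```

The metric works per character, so it suits Chinese text, where there are no spaces to split words on. A lower average over a test set with `detectAngle=False` would confirm the observation above.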