DeepStream AI Deployment: A First Look

DeepStream

Introduction

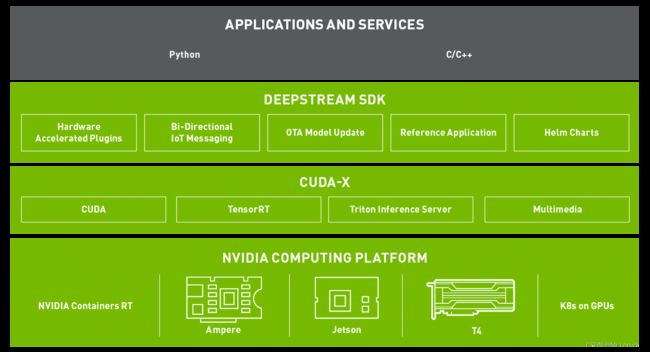

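NVIDIA's DeepStream SDK provides a complete streaming analytics toolkit that achieves situational awareness through intelligent video analytics (IVA) and multi-sensor data processing.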

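The DeepStream application framework provides hardware-accelerated building blocks that bring deep neural networks and other complex processing tasks into a stream-processing pipeline. Developers can focus on building their core deep learning networks and IP instead of designing an end-to-end solution from scratch.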

For more details, see the official introduction: NVIDIA DeepStream.

Problems It Solves

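- Rapid development of AI capabilities

- Rapid deployment of AI services

- On-premises deployment

- Deployment on edge devices

- Remote (cloud) deployment

- High throughput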

Key Features

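- A unified, consistent SDK

- A complete streaming analytics toolkit for multi-sensor, audio, video, and image data

- Low-code, drag-and-drop programming with Graph Composer

- Support for cloud-native Kubernetes (k8s) orchestration

- Well suited to vision AI scenarios

- High throughput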

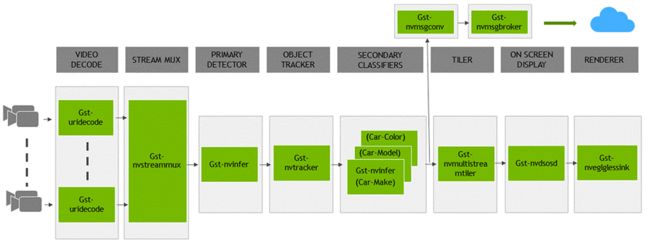

Overall Stream Analytics Process

Key Modules

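- Hardware-accelerated decoder: Gst-nvvideo4linux2
  - Streams can arrive over the network via RTSP, from the local file system, or directly from a camera. The stream is captured on the CPU; once frames are in memory, they are sent to the NVDEC accelerator for decoding.

- Stream muxer (batching): Gst-nvstreammux
  - Batching frames before inference makes better use of the hardware.

- Inference engine: Gst-nvinfer
  - Runs TensorRT inference locally. When TensorRT accelerates inference, it optimizes across network layers and builds an inference engine that can be called directly. The engine can be serialized, so the next time it is needed it only has to be deserialized instead of rebuilt.

- Format conversion: Gst-nvvideoconvert
  - Converts frames in batches and outputs them in batches (for example, from the decoder's NV12 output to the RGBA format that nvdsosd requires).

- Visualization: Gst-nvdsosd
  - gst-nvdsosd draws the parts you care about for your scenario (bounding boxes, text, and so on) onto the frames.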
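To make the batching idea behind Gst-nvstreammux concrete, here is a toy pure-Python model: frames from several sources are collected into a batch, which is pushed when it reaches `batch_size` or when `timeout_us` has elapsed since the batch's first frame (loosely mirroring the `batch-size` and `batched-push-timeout` properties set in the sample code later on). It is a simplified sketch for intuition only, not the plugin's real algorithm: here the timeout is only checked when the next frame arrives, whereas a real muxer uses a timer. The `Frame` type and `form_batches` function are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Frame:
    source_id: int     # which input stream the frame came from
    timestamp_us: int  # arrival time in microseconds


def form_batches(frames: List[Frame], batch_size: int, timeout_us: int) -> List[List[Frame]]:
    """Toy batching model (hypothetical, not nvstreammux's implementation).

    A batch is pushed when it is full, or when the next frame arrives after
    the timeout (measured from the batch's first frame) has expired.
    """
    batches: List[List[Frame]] = []
    current: List[Frame] = []
    for frame in sorted(frames, key=lambda f: f.timestamp_us):
        # The open batch waited too long: push it as a partial batch
        if current and frame.timestamp_us - current[0].timestamp_us >= timeout_us:
            batches.append(current)
            current = []
        current.append(frame)
        if len(current) == batch_size:
            batches.append(current)
            current = []
    if current:  # flush whatever is left at end of stream
        batches.append(current)
    return batches


if __name__ == "__main__":
    # Three ~30 fps cameras, slightly out of sync; source 2 drops its second frame
    frames = [Frame(s, t * 33_000 + s * 1_000)
              for t in range(3) for s in range(3) if not (s == 2 and t == 1)]
    for batch in form_batches(frames, batch_size=3, timeout_us=20_000):
        print([f.source_id for f in batch])
    # prints [0, 1, 2], then [0, 1] (pushed by the timeout), then [0, 1, 2]
```

The timeout is what keeps one slow or stalled source from holding back inference for all the others, at the cost of occasionally running a partially filled batch.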

Building Blocks

- Using the overall structure and key modules above, we can combine them with the DeepStream SDK to build a pipeline for our own business.
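As a sketch of this building-block approach, the function below chains the key modules into a `gst-launch-1.0` style description string for the same single-stream pipeline that deepstream-test1 builds later in this post (filesrc → h264parse → nvv4l2decoder → nvstreammux → nvinfer → nvvideoconvert → nvdsosd → sink). The helper name, the default sink, and the example paths are assumptions for illustration; the string itself is only printed here, not executed.

```python
def build_pipeline_description(h264_file: str, pgie_config: str,
                               width: int = 1920, height: int = 1080,
                               sink: str = "nveglglessink") -> str:
    """Chain the key DeepStream modules into one gst-launch-1.0 description.

    Hypothetical helper; assumes paths contain no spaces (they are not quoted).
    "m.sink_0 nvstreammux name=m" links the decoder output to request pad
    sink_0 of the muxer named "m", like get_request_pad("sink_0") in Python.
    """
    stages = [
        f"filesrc location={h264_file}",      # read the raw H.264 file
        "h264parse",                           # parse the elementary stream
        "nvv4l2decoder",                       # NVDEC hardware decode
        f"m.sink_0 nvstreammux name=m batch-size=1 width={width} height={height}",
        f"nvinfer config-file-path={pgie_config}",  # TensorRT inference
        "nvvideoconvert",                      # convert for the OSD
        "nvdsosd",                             # draw boxes and labels
        sink,                                  # e.g. "fakesink" when headless
    ]
    return " ! ".join(stages)


if __name__ == "__main__":
    desc = build_pipeline_description(
        "/opt/nvidia/deepstream/deepstream-6.0/samples/streams/sample_720p.h264",
        "dstest1_pgie_config.txt")
    print("gst-launch-1.0 " + desc)
```

On a machine with DeepStream installed, the same string could also be handed to `Gst.parse_launch()`, which is a quick way to prototype a pipeline before writing it element by element in Python.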

安装

Install the required dependencies

```shell
[~]# apt install \
    libssl1.0.0 \
    libgstreamer1.0-0 \
    gstreamer1.0-tools \
    gstreamer1.0-plugins-good \
    gstreamer1.0-plugins-bad \
    gstreamer1.0-plugins-ugly \
    gstreamer1.0-libav \
    libgstrtspserver-1.0-0 \
    libjansson4 \
    gcc \
    make \
    git \
    python3
```

NVIDIA driver installation

- Download: https://www.nvidia.com/Download/driverResults.aspx/179599/en-us

CUDA Toolkit installation

- Download: https://developer.nvidia.com/cuda-11-4-1-download-archive

DeepStream installation

- Download the dGPU (x86_64) package (an NVIDIA developer account is required): https://developer.nvidia.com/deepstream-getting-started

```shell
$ sudo tar -xvf deepstream_sdk_v6.0.0_x86_64.tbz2 -C /
$ cd /opt/nvidia/deepstream/deepstream-6.0/
$ sudo ./install.sh
$ sudo ldconfig
```

- The `./samples` directory contains the reference examples.

Running with Docker

- GPU-based (dGPU) images

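| Description | Pull command |
|---|---|
| Base docker (contains only the runtime libraries and GStreamer plugins; can be used as the base for building custom dockers for DeepStream applications) | `docker pull nvcr.io/nvidia/deepstream:6.0-base` |
| devel docker (contains the entire SDK along with a development environment for building DeepStream applications and Graph Composer) | `docker pull nvcr.io/nvidia/deepstream:6.0-devel` |
| Triton Inference Server docker with Triton and its dependencies installed, plus a development environment for building DeepStream applications | `docker pull nvcr.io/nvidia/deepstream:6.0-triton` |
| DeepStream IoT docker with deepstream-test5-app installed and all other reference applications removed | `docker pull nvcr.io/nvidia/deepstream:6.0-iot` |
| DeepStream samples docker (contains the runtime libraries, GStreamer plugins, reference applications, and sample streams, models, and configs) | `docker pull nvcr.io/nvidia/deepstream:6.0-samples` |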
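The table only lists pull commands. A container from one of these images also has to be started with GPU access; the sketch below assembles such a `docker run` command in Python. It assumes the NVIDIA Container Toolkit is installed (needed for `--gpus`); the helper name, the working directory, and the optional X11 forwarding (so an EGL sink can open a window on the host) are illustrative assumptions to adapt to your own setup.

```python
import os
import shlex
from typing import List, Optional


def docker_run_cmd(tag: str = "6.0-samples", display: Optional[str] = None,
                   workdir: str = "/opt/nvidia/deepstream/deepstream-6.0") -> List[str]:
    """Build a `docker run` argv for a DeepStream image (hypothetical helper).

    Assumes the NVIDIA Container Toolkit is installed so `--gpus all` works.
    """
    cmd = ["docker", "run", "--gpus", "all", "-it", "--rm"]
    if display:  # optional: forward X11 so on-screen sinks can render on the host
        cmd += ["-v", "/tmp/.X11-unix:/tmp/.X11-unix", "-e", f"DISPLAY={display}"]
    cmd += ["-w", workdir, f"nvcr.io/nvidia/deepstream:{tag}"]
    return cmd


if __name__ == "__main__":
    # Print the command; to start the container instead:
    # subprocess.run(docker_run_cmd(...), check=True)
    print(shlex.join(docker_run_cmd(display=os.environ.get("DISPLAY"))))
```

Returning an argument list rather than a single string avoids shell-quoting problems if the command is later passed to `subprocess.run`.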

- Below is a reference Dockerfile for building the image yourself, which lets you customize it:

```dockerfile
# Set CUDA_VERSION, example: 11.4.1
ARG CUDA_VERSION

# Use CUDAGL base devel docker
FROM nvcr.io/nvidia/cudagl:${CUDA_VERSION}-devel-ubuntu18.04

# Set TENSORRT_VERSION, example: 8.0.1-1+cuda11.4
ARG TENSORRT_VERSION

# Set CUDNN_VERSION, example: 8.2.1.32-1+cuda11.4
ARG CUDNN_VERSION

# Install dependencies
RUN apt-get update && \
    DEBIAN_FRONTEND=noninteractive apt-get install -y --no-install-recommends \
    linux-libc-dev \
    libglew2.0 libssl1.0.0 libjpeg8 libjson-glib-1.0-0 \
    gstreamer1.0-plugins-good gstreamer1.0-plugins-bad gstreamer1.0-plugins-ugly gstreamer1.0-tools gstreamer1.0-libav \
    gstreamer1.0-alsa \
    libcurl3 \
    libcurl3-gnutls \
    libuuid1 \
    libjansson4 \
    libjansson-dev \
    librabbitmq4 \
    libgles2-mesa \
    libgstrtspserver-1.0-0 \
    libv4l-dev \
    gdb bash-completion libboost-dev \
    uuid-dev libgstrtspserver-1.0-0 libgstrtspserver-1.0-0-dbg libgstrtspserver-1.0-dev \
    libgstreamer1.0-dev \
    libgstreamer-plugins-base1.0-dev \
    libglew-dev \
    libssl-dev \
    libopencv-dev \
    freeglut3-dev \
    libjpeg-dev \
    libcurl4-gnutls-dev \
    libjson-glib-dev \
    libboost-dev \
    librabbitmq-dev \
    libgles2-mesa-dev libgtk-3-dev libgdk3.0-cil-dev \
    pkg-config \
    libxau-dev \
    libxdmcp-dev \
    libxcb1-dev \
    libxext-dev \
    libx11-dev \
    git \
    rsyslog \
    vim \
    gstreamer1.0-rtsp \
    libcudnn8=${CUDNN_VERSION} \
    libcudnn8-dev=${CUDNN_VERSION} \
    libnvinfer8=${TENSORRT_VERSION} \
    libnvinfer-dev=${TENSORRT_VERSION} \
    libnvparsers8=${TENSORRT_VERSION} \
    libnvparsers-dev=${TENSORRT_VERSION} \
    libnvonnxparsers8=${TENSORRT_VERSION} \
    libnvonnxparsers-dev=${TENSORRT_VERSION} \
    libnvinfer-plugin8=${TENSORRT_VERSION} \
    libnvinfer-plugin-dev=${TENSORRT_VERSION} \
    python-libnvinfer=${TENSORRT_VERSION} \
    python3-libnvinfer=${TENSORRT_VERSION} \
    python-libnvinfer-dev=${TENSORRT_VERSION} \
    python3-libnvinfer-dev=${TENSORRT_VERSION} && \
    rm -rf /var/lib/apt/lists/* && \
    # -y: a non-interactive build would otherwise abort at the confirmation prompt
    apt-get autoremove -y

# Install DeepStreamSDK using debian package. DeepStream tar package can also be installed in a similar manner
ADD deepstream-6.0_6.0.0-1_amd64.deb /root
RUN apt-get update && \
    DEBIAN_FRONTEND=noninteractive apt-get install -y --no-install-recommends \
    /root/deepstream-6.0_6.0.0-1_amd64.deb

WORKDIR /opt/nvidia/deepstream/deepstream

# Unversioned symlinks for the NVIDIA video decode/encode libraries
RUN ln -s /usr/lib/x86_64-linux-gnu/libnvcuvid.so.1 /usr/lib/x86_64-linux-gnu/libnvcuvid.so
RUN ln -s /usr/lib/x86_64-linux-gnu/libnvidia-encode.so.1 /usr/lib/x86_64-linux-gnu/libnvidia-encode.so
```
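The Dockerfile declares three build arguments (`CUDA_VERSION`, `TENSORRT_VERSION`, `CUDNN_VERSION`) and expects the DeepStream `.deb` in the build context. The sketch below assembles a matching `docker build` command from the example versions given in the Dockerfile's own comments. The image tag `my-deepstream:6.0` and the helper name are hypothetical, and the versions must match the packages actually available for your base image.

```python
import shlex
from typing import Dict, List, Optional

# Example versions copied from the comments in the Dockerfile above
DEFAULT_BUILD_ARGS: Dict[str, str] = {
    "CUDA_VERSION": "11.4.1",
    "TENSORRT_VERSION": "8.0.1-1+cuda11.4",
    "CUDNN_VERSION": "8.2.1.32-1+cuda11.4",
}


def docker_build_cmd(image_tag: str, context_dir: str = ".",
                     build_args: Optional[Dict[str, str]] = None) -> List[str]:
    """Build a `docker build` argv passing every ARG the Dockerfile declares.

    Hypothetical helper; context_dir must contain the Dockerfile and
    deepstream-6.0_6.0.0-1_amd64.deb (used by the ADD instruction).
    """
    cmd = ["docker", "build", "-t", image_tag]
    for key, value in (build_args or DEFAULT_BUILD_ARGS).items():
        cmd += ["--build-arg", f"{key}={value}"]
    cmd.append(context_dir)
    return cmd


if __name__ == "__main__":
    print(shlex.join(docker_build_cmd("my-deepstream:6.0")))
```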

deepstream-python-app

- Test with the Docker image

```shell
$ docker pull nvcr.io/nvidia/deepstream:6.0-samples
```

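- Set up the environment

```shell
apt-get install -y python-gi-dev
apt install -y python3-gst-1.0
apt install -y python3-pip
pip3 install pyds -i https://pypi.tuna.tsinghua.edu.cn/simple
```

Note: NVIDIA's `pyds` bindings are distributed through the deepstream_python_apps repository (built from its `bindings` directory, see that repository's instructions), so a package of the same name on a PyPI mirror may not be the DeepStream bindings.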

- Clone the code

```shell
git clone https://github.com/NVIDIA-AI-IOT/deepstream_python_apps.git
```

- Sample code (`main()` of `apps/deepstream-test1/deepstream_test_1.py`)

```python
import sys

import gi
gi.require_version("Gst", "1.0")
from gi.repository import GLib, Gst

# Helper modules shipped in deepstream_python_apps/apps/common/
sys.path.append("../")
from common.is_aarch_64 import is_aarch64
from common.bus_call import bus_call

# osd_sink_pad_buffer_probe() is defined earlier in deepstream_test_1.py (omitted here)


def make_element(factory_name, element_name):
    """Create a GStreamer element; exit right away if its plugin cannot be found."""
    element = Gst.ElementFactory.make(factory_name, element_name)
    if not element:
        sys.stderr.write(" Unable to create %s (%s)\n" % (element_name, factory_name))
        sys.exit(1)
    return element


def main(args):
    # Check input arguments
    if len(args) != 2:
        sys.stderr.write("usage: %s <H.264 elementary stream>\n" % args[0])
        sys.exit(1)

    # Standard GStreamer initialization
    Gst.init(None)

    # Create the Pipeline element that connects all other elements
    print("Creating Pipeline \n ")
    pipeline = Gst.Pipeline()
    if not pipeline:
        sys.stderr.write(" Unable to create Pipeline \n")
        sys.exit(1)

    # Source element for reading from the file
    source = make_element("filesrc", "file-source")
    # The input file is an elementary H.264 stream, so we need an H.264 parser
    h264parser = make_element("h264parse", "h264-parser")
    # Use nvv4l2decoder for hardware-accelerated decoding on the GPU (NVDEC)
    decoder = make_element("nvv4l2decoder", "nvv4l2-decoder")
    # nvstreammux forms batches from one or more sources
    streammux = make_element("nvstreammux", "Stream-muxer")
    # nvinfer runs inference on the decoder's output; behaviour is set in the config file
    pgie = make_element("nvinfer", "primary-inference")
    # Convert from NV12 to RGBA as required by nvdsosd
    nvvidconv = make_element("nvvideoconvert", "convertor")
    # OSD draws on the converted RGBA buffer
    nvosd = make_element("nvdsosd", "onscreendisplay")
    # Finally render the OSD output (Jetson needs nvegltransform before the EGL sink)
    transform = make_element("nvegltransform", "nvegl-transform") if is_aarch64() else None
    sink = make_element("nveglglessink", "nvvideo-renderer")

    print("Playing file %s " % args[1])
    source.set_property("location", args[1])
    streammux.set_property("width", 1920)
    streammux.set_property("height", 1080)
    streammux.set_property("batch-size", 1)
    streammux.set_property("batched-push-timeout", 4000000)  # in microseconds (4 s)
    pgie.set_property("config-file-path", "dstest1_pgie_config.txt")

    print("Adding elements to Pipeline \n")
    for element in (source, h264parser, decoder, streammux, pgie, nvvidconv, nvosd, sink):
        pipeline.add(element)
    if transform is not None:
        pipeline.add(transform)

    # Link the elements together:
    # file-source -> h264-parser -> nvv4l2-decoder -> nvstreammux ->
    # nvinfer -> nvvideoconvert -> nvdsosd -> (nvegltransform) -> video-renderer
    print("Linking elements in the Pipeline \n")
    source.link(h264parser)
    h264parser.link(decoder)

    # nvstreammux uses request pads (sink_%u): link decoder src -> streammux sink_0
    sinkpad = streammux.get_request_pad("sink_0")
    if not sinkpad:
        sys.stderr.write(" Unable to get the sink pad of streammux \n")
        sys.exit(1)
    srcpad = decoder.get_static_pad("src")
    if not srcpad:
        sys.stderr.write(" Unable to get source pad of decoder \n")
        sys.exit(1)
    srcpad.link(sinkpad)

    streammux.link(pgie)
    pgie.link(nvvidconv)
    nvvidconv.link(nvosd)
    if transform is not None:
        nvosd.link(transform)
        transform.link(sink)
    else:
        nvosd.link(sink)

    # Create an event loop and feed GStreamer bus messages to it
    loop = GLib.MainLoop()
    bus = pipeline.get_bus()
    bus.add_signal_watch()
    bus.connect("message", bus_call, loop)

    # Add a probe to get informed of the generated metadata. We attach it to the
    # sink pad of the OSD element, since by then the buffer carries all the metadata.
    osdsinkpad = nvosd.get_static_pad("sink")
    if not osdsinkpad:
        sys.stderr.write(" Unable to get sink pad of nvosd \n")
        sys.exit(1)
    osdsinkpad.add_probe(Gst.PadProbeType.BUFFER, osd_sink_pad_buffer_probe, 0)

    # Start playback and listen to events
    print("Starting pipeline \n")
    pipeline.set_state(Gst.State.PLAYING)
    try:
        loop.run()
    except KeyboardInterrupt:
        pass
    # Cleanup
    pipeline.set_state(Gst.State.NULL)


if __name__ == "__main__":
    sys.exit(main(sys.argv))
```

- Run the code

```shell
$ cd /opt/nvidia/deepstream/deepstream-6.0/deepstream_python_apps/apps/deepstream-test1
$ python3 deepstream_test_1.py /opt/nvidia/deepstream/deepstream-6.0/samples/streams/sample_720p.jpg
Creating Pipeline
Creating Source
Creating H264Parser
Creating Decoder
Unable to create NvStreamMux
Unable to create pgie
Unable to create nvvidconv
Creating EGLSink
Playing file /opt/nvidia/deepstream/deepstream-6.0/samples/streams/sample_720p.jpg
Traceback (most recent call last):
  File "deepstream_test_1.py", line 261, in <module>
    sys.exit(main(sys.argv))
  File "deepstream_test_1.py", line 194, in main
    streammux.set_property('width', 1920)
AttributeError: 'NoneType' object has no attribute 'set_property'
```

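This bug has not been resolved yet. Note also that deepstream_test_1.py feeds its input through h264parse, so it expects an H.264 elementary stream (such as `samples/streams/sample_720p.h264`) rather than the `sample_720p.jpg` image used above; even once the elements can be created, a JPEG input will not play.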
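Because `Gst.ElementFactory.make()` returned `None` for nvstreammux, nvinfer, and nvvideoconvert in the log above, one way to narrow the problem down is to ask GStreamer which element factories it can see before building the pipeline (the command-line equivalent is `gst-inspect-1.0 nvstreammux`). The sketch below does that; the helper name and its `find` parameter are hypothetical. If the DeepStream factories are reported missing, things worth checking (assumptions, not a confirmed fix for this bug) are whether the script is running inside the DeepStream environment or container, and whether a stale GStreamer registry cache (`~/.cache/gstreamer-1.0/registry.x86_64.bin`) needs to be deleted.

```python
import sys

# Element factories that deepstream_test_1.py creates (x86 path, no nvegltransform)
REQUIRED = ["filesrc", "h264parse", "nvv4l2decoder", "nvstreammux",
            "nvinfer", "nvvideoconvert", "nvdsosd", "nveglglessink"]


def missing_factories(names, find=None):
    """Return the element factory names GStreamer cannot find (hypothetical helper).

    `find` defaults to Gst.ElementFactory.find (needs PyGObject and GStreamer);
    any callable that returns None for a missing factory can be passed instead.
    """
    if find is None:
        import gi
        gi.require_version("Gst", "1.0")
        from gi.repository import Gst
        Gst.init(None)
        find = Gst.ElementFactory.find
    return [name for name in names if find(name) is None]


if __name__ == "__main__":
    missing = missing_factories(REQUIRED)
    if missing:
        sys.stderr.write("Missing GStreamer elements: %s\n" % ", ".join(missing))
        sys.exit(1)
    print("All required elements found")
```

Running such a check first turns a later `AttributeError` on `None` into an explicit list of the plugins GStreamer could not load.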

- Sample overview

| Name | Description |
|---|---|
| deepstream-imagedata-multistream | |
| deepstream-imagedata-multistream-redaction | |
| deepstream-nvdsanalytics | |
| deepstream-opticalflow | |
| deepstream-rtsp-in-rtsp-out | |
| deepstream-segmentation | |
| deepstream-ssd-parser | |
| deepstream-test1 | A simple example of using DeepStream elements on a single H.264 stream: filesrc → decode → nvstreammux → nvinfer (primary detection) → nvdsosd → renderer |
| deepstream-test1-rtsp-out | |
| deepstream-test1-usbcam | |
| deepstream-test2 | A simple example of using DeepStream elements on a single H.264 stream: filesrc → decode → nvstreammux → nvinfer (primary detector) → nvtracker → nvinfer (secondary classifier) → nvdsosd → renderer |
| deepstream-test3 | |
| deepstream-test4 | |
| runtime_source_add_delete | |

DeepStream Pipeline Architecture

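DeepStream is built on GStreamer: its processing flow is a pipeline assembled from GStreamer plugins, including the DeepStream-specific ones below.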

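- Gst-nvstreammux: forms a batch of buffers from multiple input sources

- Gst-nvdspreprocess: preprocesses predefined ROIs for primary inference

- Gst-nvinfer: TensorRT-based inference engine

- Gst-nvtracker: object tracking (de-duplicating the same object across frames)

- Gst-nvmultistreamtiler: composes the batch into a single 2D (tiled) frame

- Gst-nvdsosd: draws shaded boxes, rectangles, and text on the composited frame using the generated metadata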
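Gst-nvmultistreamtiler lays out the frames of a batch as a grid inside one 2D output frame. To the best of my knowledge, the deepstream-test3 Python sample chooses the grid roughly as below (rows = ⌊√N⌋, columns = ⌈N / rows⌉) before setting the tiler's `rows`, `columns`, `width`, and `height` properties. The function name, the 1280x720 default output size, and the per-tile size calculation are illustrative assumptions.

```python
import math
from typing import Tuple


def tiler_layout(num_sources: int, out_width: int = 1280,
                 out_height: int = 720) -> Tuple[int, int, int, int]:
    """Return (rows, columns, tile_width, tile_height) for a near-square grid.

    Hypothetical helper mirroring the rows/columns heuristic described above.
    """
    if num_sources < 1:
        raise ValueError("need at least one source")
    rows = int(math.sqrt(num_sources))
    columns = int(math.ceil(num_sources / rows))
    # Each stream is scaled into one cell of the composited output frame
    return rows, columns, out_width // columns, out_height // rows


if __name__ == "__main__":
    for n in (1, 4, 5, 8):
        print(n, tiler_layout(n))
```

With 5 sources, for example, the grid is 2 rows by 3 columns, so one cell stays empty; the heuristic favours a roughly square layout over an exact fit.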

Using Graph Composer

Problems that come up during installation may be covered here.

Ideas Worth Borrowing

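- Pipeline-style (streaming) processing

- Component-based development

- Drag-and-drop programming with block visualization (the code behind each block in the flow chart can be modified)

- Configuration-driven pipelines

- Buffer design

- …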
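To show how the pipeline, component, and configuration ideas fit together, here is a minimal pure-Python sketch: components register themselves under a name, and an ordered config list (which could just as well be loaded from a file) decides which ones are chained and with which parameters. Everything here (`component`, `build_pipeline`, the `resize` and `detect` stages) is hypothetical and unrelated to the DeepStream API; the `detect` stage is a stand-in, not real inference.

```python
from typing import Any, Callable, Dict, List

Stage = Callable[[dict], dict]

# Registry of reusable components ("blocks"); each factory returns a stage function
REGISTRY: Dict[str, Callable[..., Stage]] = {}


def component(name: str):
    """Decorator registering a component factory under a name usable in config."""
    def register(factory: Callable[..., Stage]) -> Callable[..., Stage]:
        REGISTRY[name] = factory
        return factory
    return register


@component("resize")
def make_resize(width: int, height: int) -> Stage:
    def stage(frame: dict) -> dict:
        return {**frame, "width": width, "height": height}
    return stage


@component("detect")
def make_detect(label: str) -> Stage:
    def stage(frame: dict) -> dict:
        # Stand-in for inference: just appends a fixed label
        return {**frame, "objects": frame.get("objects", []) + [label]}
    return stage


def build_pipeline(config: List[Dict[str, Any]]) -> Stage:
    """Turn a declarative config (list of {type, params}) into one callable chain."""
    stages = [REGISTRY[step["type"]](**step.get("params", {})) for step in config]

    def run(frame: dict) -> dict:
        for stage in stages:
            frame = stage(frame)
        return frame
    return run


if __name__ == "__main__":
    pipeline = build_pipeline([
        {"type": "resize", "params": {"width": 1920, "height": 1080}},
        {"type": "detect", "params": {"label": "car"}},
    ])
    print(pipeline({"source_id": 0}))
```

Changing the pipeline then means editing the config rather than the code, which is the same trade-off DeepStream makes with its config files and Graph Composer graphs.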