Hadoop installation (very detailed)

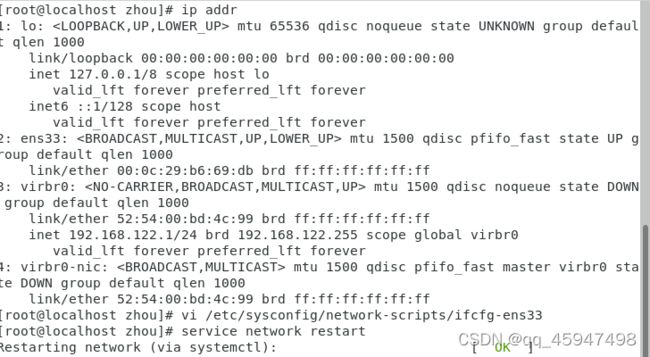

Set a static IP

(1)

(2) Edit the configuration file "/etc/sysconfig/network-scripts/ifcfg-ens33"

\#Modify:

ONBOOT=yes

NM_CONTROLLED=yes

BOOTPROTO=static

\#Add the following:

IPADDR=192.168.128.130 (adjust to your own network)

NETMASK=255.255.255.0

GATEWAY=192.168.128.2

DNS1=192.168.128.2
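The same edits can be scripted so that each cloned node only needs its own address. This is a minimal bash sketch, not part of the original steps: the helper names set_ifcfg_key and apply_static_ip are hypothetical, GNU sed is assumed (the CentOS default), and lines already in the file such as DEVICE or UUID are left alone.

```bash
#!/usr/bin/env bash
# set_ifcfg_key FILE KEY VALUE (hypothetical helper)
# Sets KEY=VALUE in an ifcfg-style file: rewrites the line if KEY already
# exists, otherwise appends it. All other lines are left untouched.
set_ifcfg_key() {
  local file="$1" key="$2" value="$3"
  if grep -q "^${key}=" "$file"; then
    sed -i "s|^${key}=.*|${key}=${value}|" "$file"
  else
    printf '%s=%s\n' "$key" "$value" >> "$file"
  fi
}

# apply_static_ip FILE IP (hypothetical helper)
# Applies the values used in this guide; only IPADDR differs per node.
apply_static_ip() {
  local file="$1" ip="$2"
  set_ifcfg_key "$file" ONBOOT yes
  set_ifcfg_key "$file" NM_CONTROLLED yes
  set_ifcfg_key "$file" BOOTPROTO static
  set_ifcfg_key "$file" IPADDR "$ip"
  set_ifcfg_key "$file" NETMASK 255.255.255.0
  set_ifcfg_key "$file" GATEWAY 192.168.128.2
  set_ifcfg_key "$file" DNS1 192.168.128.2
}
```

For example, run apply_static_ip /etc/sysconfig/network-scripts/ifcfg-ens33 192.168.128.130 on master, then restart the network service.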

Restart the network service with "service network restart".

Clone the nodes

Change the hostname

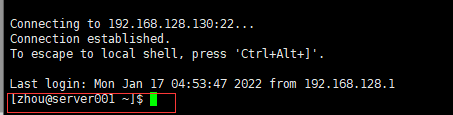

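This is best done in Xshell.

Change the hostname with "hostnamectl set-hostname" followed by the new name, e.g. "hostnamectl set-hostname master".

After changing it, reboot the virtual machine (reboot).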

Configure IP mappings

Add the mappings on every node (in /etc/hosts); afterwards the nodes should be able to ping each other by hostname.

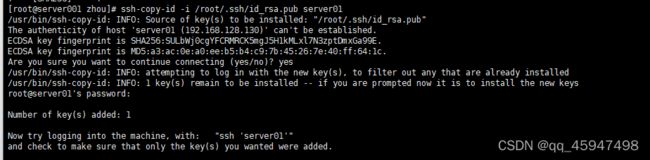
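A sketch of adding the mappings on a Linux node, assuming they go into /etc/hosts and using the four addresses this guide lists for the Windows hosts file (192.168.128.130 to .133 for master and slave1 to slave3). The function add_host_entries and the CLUSTER_HOSTS array are hypothetical; an entry is skipped when its hostname is already present, so running it twice does not duplicate lines.

```bash
#!/usr/bin/env bash
# Hostname-to-IP mapping taken from this guide; adjust to your own network.
CLUSTER_HOSTS=(
  "192.168.128.130 master"
  "192.168.128.131 slave1"
  "192.168.128.132 slave2"
  "192.168.128.133 slave3"
)

# add_host_entries FILE (hypothetical helper)
# Appends each "IP name" pair unless that name already appears as a
# hostname field (column 2 onward) somewhere in FILE.
add_host_entries() {
  local file="$1" entry name
  for entry in "${CLUSTER_HOSTS[@]}"; do
    name="${entry#* }"   # text after the first space = hostname
    if ! awk -v n="$name" '{for (i = 2; i <= NF; i++) if ($i == n) found = 1}
                           END {exit !found}' "$file"; then
      echo "$entry" >> "$file"
    fi
  done
}
```

Run add_host_entries /etc/hosts as root on every node, then check reachability with for h in master slave1 slave2 slave3; do ping -c 1 "$h"; done.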

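Set up passwordless SSH login

Doing this on the master node alone should be enough, since the Hadoop start scripts log in from master to the other nodes.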

ssh-keygen -t rsa

This generates two files: the private key id_rsa and the public key id_rsa.pub. ssh-keygen creates and manages SSH keys; the "-t" option sets the type of key to create, here RSA.

(2) Copy the public key to the remote machines with ssh-copy-id

ssh-copy-id -i /root/.ssh/id_rsa.pub server001  # enter yes, then the root user's password (123456 here)

ssh-copy-id -i /root/.ssh/id_rsa.pub server002

ssh-copy-id -i /root/.ssh/id_rsa.pub server003
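Key generation and the per-host ssh-copy-id calls can be folded into one loop. In this sketch the function names setup_passwordless_ssh and _maybe_run and the DRY_RUN switch are hypothetical; -N '' (empty passphrase) and -f (key file path) are standard ssh-keygen options that skip the interactive prompts, and ~/.ssh is created up front in case it does not exist yet.

```bash
#!/usr/bin/env bash
# _maybe_run CMD...: run CMD, or only print it when DRY_RUN=1.
_maybe_run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "$@"
  else
    "$@"
  fi
}

# setup_passwordless_ssh HOST... (hypothetical helper)
# Creates an RSA key pair if none exists, then copies the public key to
# every HOST. Run it as the user that will start Hadoop (root in this guide).
setup_passwordless_ssh() {
  local key="${HOME}/.ssh/id_rsa" host
  _maybe_run mkdir -p -m 700 "${HOME}/.ssh"
  if [ ! -f "$key" ]; then
    # -N '' = empty passphrase, -f = key file path: no prompts
    _maybe_run ssh-keygen -t rsa -N '' -f "$key"
  fi
  for host in "$@"; do
    # Asks for "yes" and the remote password once per host
    _maybe_run ssh-copy-id -i "${key}.pub" "$host"
  done
}
```

For example, setup_passwordless_ssh master slave1 slave2 slave3 uses the hostnames from the IP mappings; the commands above use server001 to server003, so pass whichever names your nodes actually have. Afterwards ssh slave1 should log in without a password prompt.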

Verify that passwordless login works

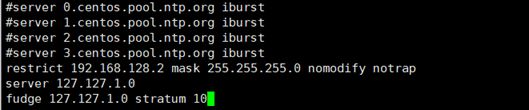

Configure time synchronization

(1) Install the NTP service on every node:

yum -y install ntp

(2) Assuming the master node acts as the NTP server, configure it as follows.

Open /etc/ntp.conf with "vim /etc/ntp.conf", comment out the lines that begin with server, and add:

restrict 192.168.128.2 mask 255.255.255.0 nomodify notrap

server 127.127.1.0

fudge 127.127.1.0 stratum 10

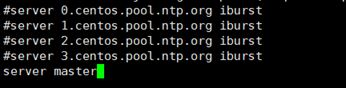

(3) Configure NTP on the slaves: likewise edit /etc/ntp.conf, comment out the lines that begin with server, and add:

server master

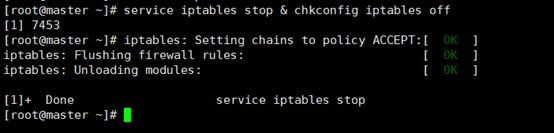
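Both ntp.conf edits can be applied with one helper. A minimal sketch, assuming GNU sed: configure_ntp is a hypothetical name, it writes exactly the lines listed above for each role, keeps a FILE.bak copy, and is meant to be run once per node (a second run would leave an extra commented-out copy of the added server line).

```bash
#!/usr/bin/env bash
# configure_ntp ROLE FILE (hypothetical helper)
#   ROLE=master: serve the local clock to the cluster
#   ROLE=slave : synchronize from the host named "master"
configure_ntp() {
  local role="$1" file="$2"
  case "$role" in
    master|slave) ;;
    *) echo "usage: configure_ntp master|slave FILE" >&2; return 1 ;;
  esac
  # Comment out every active "server ..." line (FILE.bak keeps the original)
  sed -i.bak 's/^server/#server/' "$file"
  if [ "$role" = master ]; then
    cat >> "$file" <<'EOF'
restrict 192.168.128.2 mask 255.255.255.0 nomodify notrap
server 127.127.1.0
fudge 127.127.1.0 stratum 10
EOF
  else
    echo "server master" >> "$file"
  fi
}
```

Usage: configure_ntp master /etc/ntp.conf on master, configure_ntp slave /etc/ntp.conf on each slave.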

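(4) Run "sudo systemctl stop firewalld.service" to stop the firewall and "sudo systemctl disable firewalld.service" so it stays off after a reboot ("stop" alone only lasts until the next boot). Do this on both the master and the slave nodes.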

(5) Start the NTP service

① On the master node, run "service ntpd start && chkconfig ntpd on"

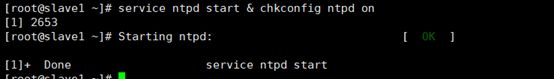

② On each slave, run "ntpdate master" to synchronize its clock with master.


③ Then, on each slave, run "service ntpd start && chkconfig ntpd on" to start NTP now and enable it at boot.

Install the JDK (on every node, master and slaves alike; master is shown as the example)

(2) Go to the /opt directory and install the JDK with "rpm -ivh jdk-8u151-linux-x64.rpm"

(3) Add the following to /etc/profile:

export JAVA_HOME=/usr/java/jdk1.8.0_151

export PATH=$PATH:$JAVA_HOME/bin

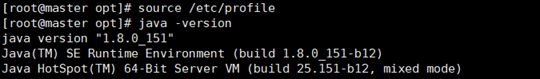
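Appending to /etc/profile by hand is easy to repeat by accident, especially since every node needs these lines. A minimal sketch of an idempotent append; append_once is a hypothetical name, and the same helper would also work for the Hadoop exports in step 5.

```bash
#!/usr/bin/env bash
# append_once FILE LINE (hypothetical helper)
# Appends LINE to FILE unless FILE already contains that exact line,
# so re-running the setup never duplicates an export.
# grep -x matches whole lines, -F treats LINE as plain text, not a regex.
append_once() {
  local file="$1" line="$2"
  grep -qxF -- "$line" "$file" 2>/dev/null || printf '%s\n' "$line" >> "$file"
}

# Example (as root). Single quotes keep $PATH and $JAVA_HOME unexpanded, so
# they are written literally and resolved when /etc/profile is sourced:
#   append_once /etc/profile 'export JAVA_HOME=/usr/java/jdk1.8.0_151'
#   append_once /etc/profile 'export PATH=$PATH:$JAVA_HOME/bin'
```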

Run "source /etc/profile" to apply the changes.

(4) Verify that the JDK is set up correctly with "java -version".

Hadoop cluster configuration

1. The configuration files below can simply be copied and pasted.

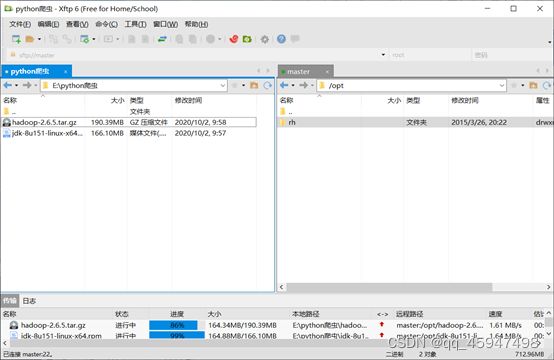

2. Upload hadoop-2.6.5.tar.gz to the /opt directory with Xftp (part of Xmanager).

3. Extract hadoop-2.6.5.tar.gz (run this in /opt):

tar -zxf hadoop-2.6.5.tar.gz -C /usr/local

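After extraction you should see the /usr/local/hadoop-2.6.5 directory.

4. Configure Hadoop

Go to the directory:

cd /usr/local/hadoop-2.6.5/etc/hadoop/

Edit the following files in turn. For the .xml files, each property is given as a name followed by its value; each pair goes into its own property element inside the file's configuration element.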

4.1 core-site.xml

- fs.defaultFS: hdfs://master:8020
- hadoop.tmp.dir: /var/log/hadoop/tmp
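Written out as a file, the two properties look like this. The sketch assumes the configuration element is the only content you need (the stock core-site.xml in 2.6.5 holds just a license header and an empty configuration element); write_core_site is a hypothetical helper, and the quoted 'EOF' stops the shell from expanding anything inside the XML.

```bash
#!/usr/bin/env bash
# write_core_site DIR (hypothetical helper)
# Overwrites DIR/core-site.xml: fs.defaultFS points clients at the NameNode
# on master:8020; hadoop.tmp.dir is the base for Hadoop's temporary paths.
write_core_site() {
  local dir="$1"
  cat > "${dir}/core-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://master:8020</value>
  </property>
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/var/log/hadoop/tmp</value>
  </property>
</configuration>
EOF
}
```

Usage: write_core_site /usr/local/hadoop-2.6.5/etc/hadoop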

4.2 hadoop-env.sh

export JAVA_HOME=/usr/java/jdk1.8.0_151

4.3 hdfs-site.xml

- dfs.namenode.name.dir: file:///data/hadoop/hdfs/name
- dfs.datanode.data.dir: file:///data/hadoop/hdfs/data
- dfs.namenode.secondary.http-address: master:50090
- dfs.replication: 3
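A sketch that writes hdfs-site.xml with the four properties above; write_hdfs_site is a hypothetical helper that replaces the whole file. The file:// URIs are local directories on each node, and dfs.replication 3 matches the three DataNodes (slave1 to slave3).

```bash
#!/usr/bin/env bash
# write_hdfs_site DIR (hypothetical helper)
# Overwrites DIR/hdfs-site.xml with this guide's HDFS settings.
write_hdfs_site() {
  local dir="$1"
  cat > "${dir}/hdfs-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>file:///data/hadoop/hdfs/name</value>
  </property>
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>file:///data/hadoop/hdfs/data</value>
  </property>
  <property>
    <name>dfs.namenode.secondary.http-address</name>
    <value>master:50090</value>
  </property>
  <property>
    <name>dfs.replication</name>
    <value>3</value>
  </property>
</configuration>
EOF
}
```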

4.4 mapred-site.xml

Create it from the template first:

cp mapred-site.xml.template mapred-site.xml

- mapreduce.framework.name: yarn
- mapreduce.jobhistory.address: master:10020
- mapreduce.jobhistory.webapp.address: master:19888
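Instead of copying the template and editing it, the file can also be written in full, which makes the cp step optional. In this sketch (write_mapred_site is a hypothetical helper) the two jobhistory addresses are where the JobHistoryServer started later with mr-jobhistory-daemon.sh listens.

```bash
#!/usr/bin/env bash
# write_mapred_site DIR (hypothetical helper)
# Overwrites DIR/mapred-site.xml: run MapReduce on YARN and place the
# JobHistoryServer RPC (10020) and web UI (19888) on master.
write_mapred_site() {
  local dir="$1"
  cat > "${dir}/mapred-site.xml" <<'EOF'
<?xml version="1.0"?>
<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
<configuration>
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.address</name>
    <value>master:10020</value>
  </property>
  <property>
    <name>mapreduce.jobhistory.webapp.address</name>
    <value>master:19888</value>
  </property>
</configuration>
EOF
}
```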

4.5 yarn-site.xml

- yarn.resourcemanager.hostname: master
- yarn.resourcemanager.address: ${yarn.resourcemanager.hostname}:8032
- yarn.resourcemanager.scheduler.address: ${yarn.resourcemanager.hostname}:8030
- yarn.resourcemanager.webapp.address: ${yarn.resourcemanager.hostname}:8088
- yarn.resourcemanager.webapp.https.address: ${yarn.resourcemanager.hostname}:8090
- yarn.resourcemanager.resource-tracker.address: ${yarn.resourcemanager.hostname}:8031
- yarn.resourcemanager.admin.address: ${yarn.resourcemanager.hostname}:8033
- yarn.nodemanager.local-dirs: /data/hadoop/yarn/local
- yarn.log-aggregation-enable: true
- yarn.nodemanager.remote-app-log-dir: /data/tmp/logs
- yarn.log.server.url: http://master:19888/jobhistory/logs/ (description: URL for job history server)
- yarn.nodemanager.vmem-check-enabled: false
- yarn.nodemanager.aux-services: mapreduce_shuffle
- yarn.nodemanager.aux-services.mapreduce.shuffle.class: org.apache.hadoop.mapred.ShuffleHandler
- yarn.nodemanager.resource.memory-mb: 2048
- yarn.scheduler.minimum-allocation-mb: 512
- yarn.scheduler.maximum-allocation-mb: 4096
- mapreduce.map.memory.mb: 2048
- mapreduce.reduce.memory.mb: 2048
- yarn.nodemanager.resource.cpu-vcores: 1
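A sketch that writes all twenty properties, one per line to keep it short. The quoted 'EOF' matters here: it keeps ${yarn.resourcemanager.hostname} literal so that Hadoop's own configuration loader, not the shell, substitutes the hostname (master). write_yarn_site is a hypothetical helper. Two observations on the values as given: mapreduce.map.memory.mb and mapreduce.reduce.memory.mb are MapReduce settings that are more commonly placed in mapred-site.xml, and the 4096 MB maximum allocation exceeds the 2048 MB each NodeManager offers, so no single node could actually host a container larger than 2048 MB.

```bash
#!/usr/bin/env bash
# write_yarn_site DIR (hypothetical helper)
# Overwrites DIR/yarn-site.xml with this guide's properties, one per line.
write_yarn_site() {
  local dir="$1"
  cat > "${dir}/yarn-site.xml" <<'EOF'
<?xml version="1.0"?>
<configuration>
  <property><name>yarn.resourcemanager.hostname</name><value>master</value></property>
  <property><name>yarn.resourcemanager.address</name><value>${yarn.resourcemanager.hostname}:8032</value></property>
  <property><name>yarn.resourcemanager.scheduler.address</name><value>${yarn.resourcemanager.hostname}:8030</value></property>
  <property><name>yarn.resourcemanager.webapp.address</name><value>${yarn.resourcemanager.hostname}:8088</value></property>
  <property><name>yarn.resourcemanager.webapp.https.address</name><value>${yarn.resourcemanager.hostname}:8090</value></property>
  <property><name>yarn.resourcemanager.resource-tracker.address</name><value>${yarn.resourcemanager.hostname}:8031</value></property>
  <property><name>yarn.resourcemanager.admin.address</name><value>${yarn.resourcemanager.hostname}:8033</value></property>
  <property><name>yarn.nodemanager.local-dirs</name><value>/data/hadoop/yarn/local</value></property>
  <property><name>yarn.log-aggregation-enable</name><value>true</value></property>
  <property><name>yarn.nodemanager.remote-app-log-dir</name><value>/data/tmp/logs</value></property>
  <property><name>yarn.log.server.url</name><value>http://master:19888/jobhistory/logs/</value><description>URL for job history server</description></property>
  <property><name>yarn.nodemanager.vmem-check-enabled</name><value>false</value></property>
  <property><name>yarn.nodemanager.aux-services</name><value>mapreduce_shuffle</value></property>
  <property><name>yarn.nodemanager.aux-services.mapreduce.shuffle.class</name><value>org.apache.hadoop.mapred.ShuffleHandler</value></property>
  <property><name>yarn.nodemanager.resource.memory-mb</name><value>2048</value></property>
  <property><name>yarn.scheduler.minimum-allocation-mb</name><value>512</value></property>
  <property><name>yarn.scheduler.maximum-allocation-mb</name><value>4096</value></property>
  <property><name>mapreduce.map.memory.mb</name><value>2048</value></property>
  <property><name>mapreduce.reduce.memory.mb</name><value>2048</value></property>
  <property><name>yarn.nodemanager.resource.cpu-vcores</name><value>1</value></property>
</configuration>
EOF
}
```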

4.6 yarn-env.sh

export JAVA_HOME=/usr/java/jdk1.8.0_151

4.7 slaves

Delete localhost and add one line per slave node (as many as you have):

slave1

slave2

slave3

Copy the Hadoop installation to the slave nodes:

scp -r /usr/local/hadoop-2.6.5 slave1:/usr/local

scp -r /usr/local/hadoop-2.6.5 slave2:/usr/local

scp -r /usr/local/hadoop-2.6.5 slave3:/usr/local
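Rather than one scp line per slave, the host list can be read from the slaves file just edited, so adding a node only means adding a line there. A sketch with hypothetical helpers distribute_hadoop and _maybe_run (DRY_RUN=1 prints the commands instead of running them). The "< /dev/null" guards against a common pitfall: ssh-based commands inside a while-read loop can consume the loop's input and silently skip the remaining hosts.

```bash
#!/usr/bin/env bash
# _maybe_run CMD...: run CMD, or only print it when DRY_RUN=1.
_maybe_run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "$@"
  else
    "$@"
  fi
}

# distribute_hadoop SLAVES_FILE [SRC] (hypothetical helper)
# Copies SRC (default /usr/local/hadoop-2.6.5) to /usr/local on every host
# in SLAVES_FILE, skipping blank lines and '#' comments.
distribute_hadoop() {
  local slaves_file="$1" src="${2:-/usr/local/hadoop-2.6.5}" host
  while IFS= read -r host || [ -n "$host" ]; do
    host="${host%%#*}"             # strip comments
    host="${host//[[:space:]]/}"   # strip whitespace, including a stray \r
    [ -z "$host" ] && continue
    _maybe_run scp -r "$src" "${host}:/usr/local" < /dev/null
  done < "$slaves_file"
}
```

Usage, on master: distribute_hadoop /usr/local/hadoop-2.6.5/etc/hadoop/slaves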

5. Add the Hadoop path to /etc/profile:

export HADOOP_HOME=/usr/local/hadoop-2.6.5

export PATH=$HADOOP_HOME/bin:$PATH

Run "source /etc/profile" to apply the change.

6. Format the NameNode

Go to the directory:

cd /usr/local/hadoop-2.6.5/bin

Run the format command (on master, and only once):

./hdfs namenode -format
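Formatting should happen only once. Running hdfs namenode -format again creates a new cluster ID, and DataNodes that still hold the old ID then typically refuse to register (their log reports "Incompatible clusterIDs"). A small guard, assuming the dfs.namenode.name.dir path from hdfs-site.xml (/data/hadoop/hdfs/name) and relying on the current/VERSION file that a formatted name directory contains; namenode_formatted is a hypothetical helper.

```bash
#!/usr/bin/env bash
# namenode_formatted DIR (hypothetical helper)
# Succeeds if DIR (the dfs.namenode.name.dir path) already holds formatted
# NameNode metadata, i.e. DIR/current/VERSION exists.
namenode_formatted() {
  [ -f "$1/current/VERSION" ]
}

# Example, on master from /usr/local/hadoop-2.6.5/bin:
#   if namenode_formatted /data/hadoop/hdfs/name; then
#     echo "NameNode already formatted, skipping" >&2
#   else
#     ./hdfs namenode -format
#   fi
```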

7. Start the cluster

Go to the directory:

cd /usr/local/hadoop-2.6.5/sbin

Start the services:

./start-dfs.sh

./start-yarn.sh

./mr-jobhistory-daemon.sh start historyserver

Check the running processes with jps:

[root@master sbin]# jps

1765 NameNode

1929 SecondaryNameNode

2378 JobHistoryServer

2412 Jps

2077 ResourceManager

[root@slave1 ~]# jps

1844 Jps

1612 DataNode

1711 NodeManager
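Comparing jps output by eye works, but the expected daemon names can also be checked mechanically. A sketch with a hypothetical check_daemons function; the expected names are those in the listings above (NameNode, SecondaryNameNode, ResourceManager and JobHistoryServer on master; DataNode and NodeManager on each slave).

```bash
#!/usr/bin/env bash
# check_daemons NAME... (hypothetical helper)
# Reads `jps` output ("PID Name" per line) on stdin, reports each expected
# NAME that is not running, and returns non-zero if any is missing.
check_daemons() {
  local running missing=0 name
  running="$(awk '{print $2}')"   # keep only the process names
  for name in "$@"; do
    if ! grep -qx -- "$name" <<< "$running"; then
      echo "missing: $name" >&2
      missing=1
    fi
  done
  return "$missing"
}

# Example:
#   jps | check_daemons NameNode SecondaryNameNode ResourceManager JobHistoryServer   # master
#   jps | check_daemons DataNode NodeManager                                          # each slave
```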

On Windows, add the IP mappings to C:\Windows\System32\drivers\etc\hosts so the hostnames resolve in the Windows browser:

192.168.128.130 master master.centos.com

192.168.128.131 slave1 slave1.centos.com

192.168.128.132 slave2 slave2.centos.com

192.168.128.133 slave3 slave3.centos.com

8. Check the web UIs in a browser (NameNode on port 50070, ResourceManager on port 8088):

http://master:50070

http://master:8088

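Summary: this is just a set of notes. I built the cluster myself from files a teacher gave us at school, and I don't understand many of the later configuration files either.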