pytorch深度学习实战lesson31

第三十一课 多GPU并行

这个图是2014年的时候,农历新年那一天,沐神和老板在 CMU 装机器,但是这台机器没装好,散热有问题,因为 GPU 之间靠太近了,用了一个月之后烧掉了一块GPU。这是沐神第一次装 GPU 犯了个错误。我们引以为戒。

目录

理论部分

实践部分

理论部分

一台机器一般能装16个 GPU ,那么如果有16个 GPU 的话,在训练和预测的时候,我们都可以将一个小批量切割多次到多个 GPU ,每个 GPU 做一些运算,或者把整个模型切割到多个 GPU 来完成加速。就是说同样一个小批量可以用多个 GPU 同时运行来一起完成这个计算梯度的过程。

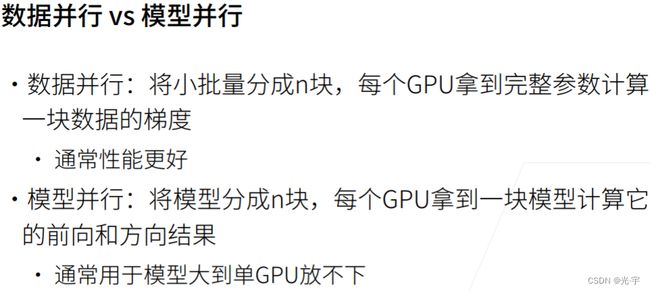

数据并行的原理是说假设有一个批量里面有128个样本,然后有两个 GPU 的话,那么每个 GPU 会拿到64个样本,就说有 N 个 GPU 的话,我会把小批量切成 N 块,然后每个 GPU 拿到完整的参数来计算这一块数据的梯度。通常来说它的性能会比较好一点,因为比较均。

模型并行是说把模型分成 N 块,比如说有100层的 restnet ,有两个 GPU 的话,一个 GPU 拿50层,另外一个 GPU 拿另外50层。那么第0号 GPU 拿到完整的数据,把自己的50层算完之后把结果给到 GPU 1 ,接着再往下算,然后算梯度的时候就倒过来。它的 bug 是什么?它 bug 是说 GPU 0算的时候, GPU 1可能在空的, GPU 1在算的时候 GPU 0可能在空着。

绿的表示梯度。

实践部分

从零开始(多GPU使用此代码)

代码:

#多GPU训练

import matplotlib.pyplot as plt

import torch

from torch import nn

from torch.nn import functional as F

from d2l import torch as d2l

#简单网络

scale = 0.01

W1 = torch.randn(size=(20, 1, 3, 3)) * scale

b1 = torch.zeros(20)

W2 = torch.randn(size=(50, 20, 5, 5)) * scale

b2 = torch.zeros(50)

W3 = torch.randn(size=(800, 128)) * scale

b3 = torch.zeros(128)

W4 = torch.randn(size=(128, 10)) * scale

b4 = torch.zeros(10)

params = [W1, b1, W2, b2, W3, b3, W4, b4]

def lenet(X, params):

h1_conv = F.conv2d(input=X, weight=params[0], bias=params[1])

h1_activation = F.relu(h1_conv)

h1 = F.avg_pool2d(input=h1_activation, kernel_size=(2, 2), stride=(2, 2))

h2_conv = F.conv2d(input=h1, weight=params[2], bias=params[3])

h2_activation = F.relu(h2_conv)

h2 = F.avg_pool2d(input=h2_activation, kernel_size=(2, 2), stride=(2, 2))

h2 = h2.reshape(h2.shape[0], -1)

h3_linear = torch.mm(h2, params[4]) + params[5]

h3 = F.relu(h3_linear)

y_hat = torch.mm(h3, params[6]) + params[7]

return y_hat

loss = nn.CrossEntropyLoss(reduction='none')

#向多个设备分发参数

def get_params(params, device):

new_params = [p.clone().to(device) for p in params]

for p in new_params:

p.requires_grad_()

return new_params

new_params = get_params(params, d2l.try_gpu(0))

print('b1 weight:', new_params[1])

print('b1 grad:', new_params[1].grad)

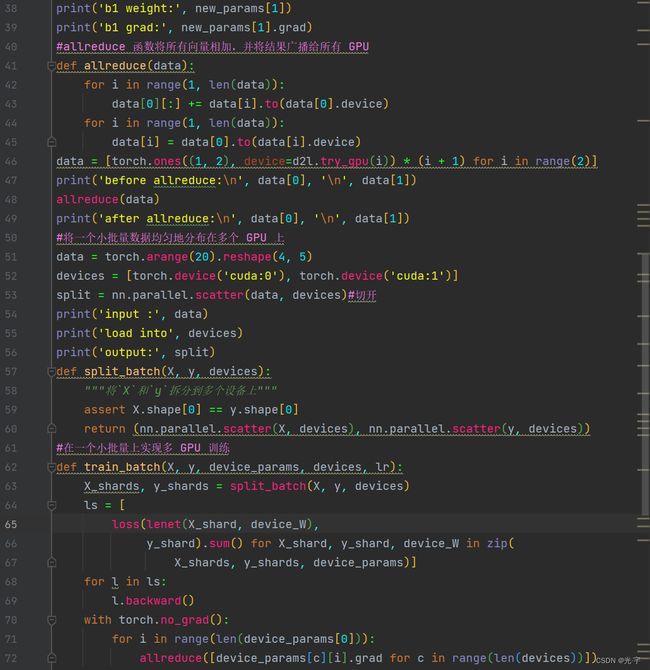

#allreduce 函数将所有向量相加,并将结果广播给所有 GPU

def allreduce(data):

for i in range(1, len(data)):

data[0][:] += data[i].to(data[0].device)

for i in range(1, len(data)):

data[i] = data[0].to(data[i].device)

data = [torch.ones((1, 2), device=d2l.try_gpu(i)) * (i + 1) for i in range(2)]

print('before allreduce:\n', data[0], '\n', data[1])

allreduce(data)

print('after allreduce:\n', data[0], '\n', data[1])

#将一个小批量数据均匀地分布在多个 GPU 上

data = torch.arange(20).reshape(4, 5)

devices = [torch.device('cuda:0'), torch.device('cuda:1')]

split = nn.parallel.scatter(data, devices)#切开

print('input :', data)

print('load into', devices)

print('output:', split)

def split_batch(X, y, devices):

"""将`X`和`y`拆分到多个设备上"""

assert X.shape[0] == y.shape[0]

return (nn.parallel.scatter(X, devices), nn.parallel.scatter(y, devices))

#在一个小批量上实现多 GPU 训练

def train_batch(X, y, device_params, devices, lr):

X_shards, y_shards = split_batch(X, y, devices)

ls = [

loss(lenet(X_shard, device_W),

y_shard).sum() for X_shard, y_shard, device_W in zip(

X_shards, y_shards, device_params)]

for l in ls:

l.backward()

with torch.no_grad():

for i in range(len(device_params[0])):

allreduce([device_params[c][i].grad for c in range(len(devices))])

for param in device_params:

d2l.sgd(param, lr, X.shape[0])

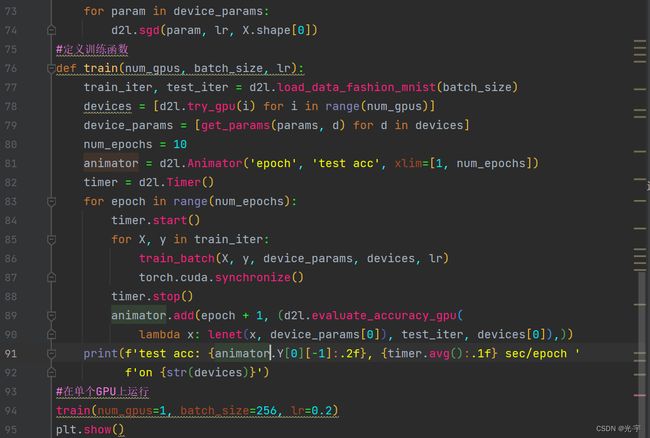

#定义训练函数

def train(num_gpus, batch_size, lr):

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

devices = [d2l.try_gpu(i) for i in range(num_gpus)]

device_params = [get_params(params, d) for d in devices]

num_epochs = 10

animator = d2l.Animator('epoch', 'test acc', xlim=[1, num_epochs])

timer = d2l.Timer()

for epoch in range(num_epochs):

timer.start()

for X, y in train_iter:

train_batch(X, y, device_params, devices, lr)

torch.cuda.synchronize()

timer.stop()

animator.add(epoch + 1, (d2l.evaluate_accuracy_gpu(

lambda x: lenet(x, device_params[0]), test_iter, devices[0]),))

print(f'test acc: {animator.Y[0][-1]:.2f}, {timer.avg():.1f} sec/epoch '

f'on {str(devices)}')

#在单个GPU上运行

train(num_gpus=1, batch_size=256, lr=0.2)

plt.show()简洁实现:

代码:

#多GPU的简洁实现

import torch

from torch import nn

from d2l import torch as d2l

import matplotlib.pyplot as plt

#简单网络

def resnet18(num_classes, in_channels=1):

"""稍加修改的 ResNet-18 模型。"""

def resnet_block(in_channels, out_channels, num_residuals,first_block=False):

blk = []

for i in range(num_residuals):

if i == 0 and not first_block:

blk.append(d2l.Residual(in_channels, out_channels, use_1x1conv=True,strides=2))

else:

blk.append(d2l.Residual(out_channels, out_channels))

return nn.Sequential(*blk)

net = nn.Sequential(nn.Conv2d(in_channels, 64, kernel_size=3, stride=1, padding=1),nn.BatchNorm2d(64), nn.ReLU())

net.add_module("resnet_block1", resnet_block(64, 64, 2, first_block=True))

net.add_module("resnet_block2", resnet_block(64, 128, 2))

net.add_module("resnet_block3", resnet_block(128, 256, 2))

net.add_module("resnet_block4", resnet_block(256, 512, 2))

net.add_module("global_avg_pool", nn.AdaptiveAvgPool2d((1, 1)))

net.add_module("fc",nn.Sequential(nn.Flatten(), nn.Linear(512, num_classes)))

return net

net = resnet18(10)

devices = d2l.try_all_gpus()

#训练

def train(net, num_gpus, batch_size, lr):

train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size)

devices = [d2l.try_gpu(i) for i in range(num_gpus)]

def init_weights(m):

if type(m) in [nn.Linear, nn.Conv2d]:

nn.init.normal_(m.weight, std=0.01)

net.apply(init_weights)

net = nn.DataParallel(net, device_ids=devices)#把net弄到每个GPU上

trainer = torch.optim.SGD(net.parameters(), lr)

loss = nn.CrossEntropyLoss()

timer, num_epochs = d2l.Timer(), 10

animator = d2l.Animator('epoch', 'test acc', xlim=[1, num_epochs])

for epoch in range(num_epochs):

net.train()

timer.start()

for X, y in train_iter:

trainer.zero_grad()

X, y = X.to(devices[0]), y.to(devices[0])

l = loss(net(X), y)

l.backward()

trainer.step()

timer.stop()

animator.add(epoch + 1, (d2l.evaluate_accuracy_gpu(net, test_iter),))

print(f'test acc: {animator.Y[0][-1]:.2f}, {timer.avg():.1f} sec/epoch 'f'on {str(devices)}')

#在单个GPU上训练网络

train(net, num_gpus=1, batch_size=256, lr=0.1)

plt.show()