MediaPipe Study Notes: Hand Gesture Recognition on Android (3)

Preface

This chapter covers the Android version.

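1: Copy out the demo project

Copy the entire android directory out of the repository.

2: Open it in Android Studio

1> On Windows, click solutions\create_win_symlinks.bat to generate the replacement resource files.

Then open ****\solutions in Android Studio.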

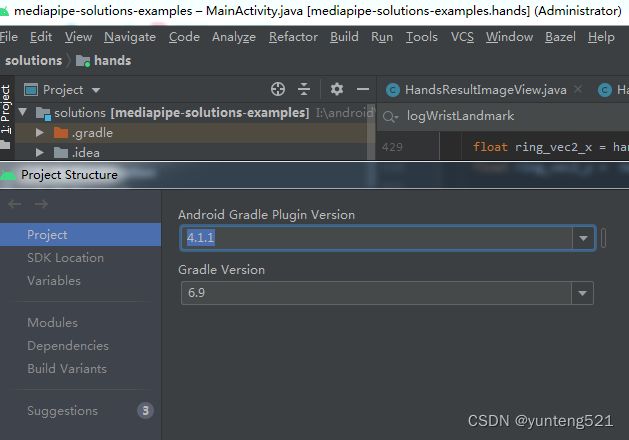

I opened the project with Android Studio 4.1.1 and made a few small changes.

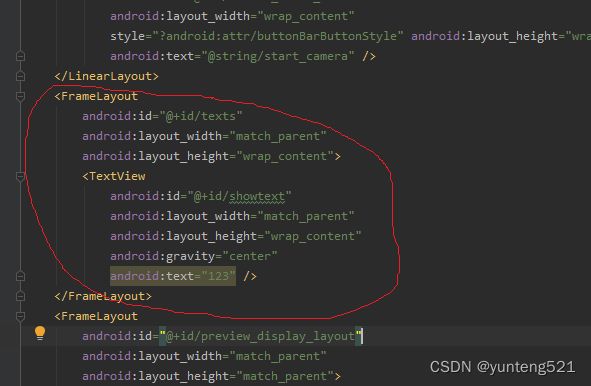

A TextView was added to activity_main.xml.
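The layout change itself is not shown in this post, so here is a minimal sketch of what the added element might look like. Only the id `showtext` is taken from the code (MainActivity calls `findViewById(R.id.showtext)`); the other attributes are assumptions and can be adjusted freely.

```xml
<!-- Hypothetical TextView for the recognized gesture; only the id comes from MainActivity. -->
<TextView
    android:id="@+id/showtext"
    android:layout_width="match_parent"
    android:layout_height="wrap_content"
    android:textSize="18sp" />
```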

MainActivity.java was modified as follows:

// Copyright 2021 The MediaPipe Authors.

//

// Licensed under the Apache License, Version 2.0 (the "License");

// you may not use this file except in compliance with the License.

// You may obtain a copy of the License at

//

// http://www.apache.org/licenses/LICENSE-2.0

//

// Unless required by applicable law or agreed to in writing, software

// distributed under the License is distributed on an "AS IS" BASIS,

// WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

// See the License for the specific language governing permissions and

// limitations under the License.

package com.google.mediapipe.examples.hands;

import android.content.Intent;

import android.graphics.Bitmap;

import android.graphics.Matrix;

import android.os.Bundle;

import android.provider.MediaStore;

import androidx.appcompat.app.AppCompatActivity;

import android.util.Log;

import android.view.View;

import android.widget.Button;

import android.widget.FrameLayout;

import android.widget.TextView;

import androidx.activity.result.ActivityResultLauncher;

import androidx.activity.result.contract.ActivityResultContracts;

import androidx.exifinterface.media.ExifInterface;

// ContentResolver dependency

import com.google.mediapipe.formats.proto.LandmarkProto.Landmark;

import com.google.mediapipe.formats.proto.LandmarkProto.NormalizedLandmark;

import com.google.mediapipe.solutioncore.CameraInput;

import com.google.mediapipe.solutioncore.SolutionGlSurfaceView;

import com.google.mediapipe.solutioncore.VideoInput;

import com.google.mediapipe.solutions.hands.HandLandmark;

import com.google.mediapipe.solutions.hands.Hands;

import com.google.mediapipe.solutions.hands.HandsOptions;

import com.google.mediapipe.solutions.hands.HandsResult;

import java.io.IOException;

import java.io.InputStream;

import java.util.ArrayList;

import java.util.List;

/** Main activity of MediaPipe Hands app. */

public class MainActivity extends AppCompatActivity {

private static final String TAG = "MainActivity";

private Hands hands;

// Run the pipeline and the model inference on GPU or CPU.

private static final boolean RUN_ON_GPU = true;

private enum InputSource {

UNKNOWN,

IMAGE,

VIDEO,

CAMERA,

}

private InputSource inputSource = InputSource.UNKNOWN;

// Image demo UI and image loader components.

private ActivityResultLauncher<Intent> imageGetter;

private HandsResultImageView imageView;

// Video demo UI and video loader components.

private VideoInput videoInput;

private ActivityResultLauncher<Intent> videoGetter;

// Live camera demo UI and camera components.

private CameraInput cameraInput;

private SolutionGlSurfaceView<HandsResult> glSurfaceView;

private TextView showtext;

@Override

protected void onCreate(Bundle savedInstanceState) {

super.onCreate(savedInstanceState);

setContentView(R.layout.activity_main);

showtext = findViewById(R.id.showtext);

setupStaticImageDemoUiComponents();

setupVideoDemoUiComponents();

setupLiveDemoUiComponents();

}

@Override

protected void onResume() {

super.onResume();

if (inputSource == InputSource.CAMERA) {

// Restarts the camera and the opengl surface rendering.

cameraInput = new CameraInput(this);

cameraInput.setNewFrameListener(textureFrame -> hands.send(textureFrame));

glSurfaceView.post(this::startCamera);

glSurfaceView.setVisibility(View.VISIBLE);

} else if (inputSource == InputSource.VIDEO) {

videoInput.resume();

}

}

@Override

protected void onPause() {

super.onPause();

if (inputSource == InputSource.CAMERA) {

glSurfaceView.setVisibility(View.GONE);

cameraInput.close();

} else if (inputSource == InputSource.VIDEO) {

videoInput.pause();

}

}

private Bitmap downscaleBitmap(Bitmap originalBitmap) {

double aspectRatio = (double) originalBitmap.getWidth() / originalBitmap.getHeight();

int width = imageView.getWidth();

int height = imageView.getHeight();

if (((double) imageView.getWidth() / imageView.getHeight()) > aspectRatio) {

width = (int) (height * aspectRatio);

} else {

height = (int) (width / aspectRatio);

}

return Bitmap.createScaledBitmap(originalBitmap, width, height, false);

}

private Bitmap rotateBitmap(Bitmap inputBitmap, InputStream imageData) throws IOException {

int orientation =

new ExifInterface(imageData)

.getAttributeInt(ExifInterface.TAG_ORIENTATION, ExifInterface.ORIENTATION_NORMAL);

if (orientation == ExifInterface.ORIENTATION_NORMAL) {

return inputBitmap;

}

Matrix matrix = new Matrix();

switch (orientation) {

case ExifInterface.ORIENTATION_ROTATE_90:

matrix.postRotate(90);

break;

case ExifInterface.ORIENTATION_ROTATE_180:

matrix.postRotate(180);

break;

case ExifInterface.ORIENTATION_ROTATE_270:

matrix.postRotate(270);

break;

default:

matrix.postRotate(0);

}

return Bitmap.createBitmap(

inputBitmap, 0, 0, inputBitmap.getWidth(), inputBitmap.getHeight(), matrix, true);

}

/** Sets up the UI components for the static image demo. */

private void setupStaticImageDemoUiComponents() {

// The Intent to access gallery and read images as bitmap.

imageGetter =

registerForActivityResult(

new ActivityResultContracts.StartActivityForResult(),

result -> {

Intent resultIntent = result.getData();

if (resultIntent != null) {

if (result.getResultCode() == RESULT_OK) {

Bitmap bitmap = null;

try {

bitmap =

downscaleBitmap(

MediaStore.Images.Media.getBitmap(

this.getContentResolver(), resultIntent.getData()));

} catch (IOException e) {

Log.e(TAG, "Bitmap reading error:" + e);

}

try {

InputStream imageData =

this.getContentResolver().openInputStream(resultIntent.getData());

bitmap = rotateBitmap(bitmap, imageData);

} catch (IOException e) {

Log.e(TAG, "Bitmap rotation error:" + e);

}

if (bitmap != null) {

hands.send(bitmap);

}

}

}

});

Button loadImageButton = findViewById(R.id.button_load_picture);

loadImageButton.setOnClickListener(

v -> {

if (inputSource != InputSource.IMAGE) {

stopCurrentPipeline();

setupStaticImageModePipeline();

}

// Reads images from gallery.

Intent pickImageIntent = new Intent(Intent.ACTION_PICK);

pickImageIntent.setDataAndType(MediaStore.Images.Media.INTERNAL_CONTENT_URI, "image/*");

imageGetter.launch(pickImageIntent);

});

imageView = new HandsResultImageView(this);

}

/** Sets up core workflow for static image mode. */

private void setupStaticImageModePipeline() {

this.inputSource = InputSource.IMAGE;

// Initializes a new MediaPipe Hands solution instance in the static image mode.

hands =

new Hands(

this,

HandsOptions.builder()

.setStaticImageMode(true)

.setMaxNumHands(2)

.setRunOnGpu(RUN_ON_GPU)

.build());

// Connects MediaPipe Hands solution to the user-defined HandsResultImageView.

hands.setResultListener(

handsResult -> {

logWristLandmark(handsResult, /*showPixelValues=*/ true);

imageView.setHandsResult(handsResult);

runOnUiThread(() -> imageView.update());

});

hands.setErrorListener((message, e) -> Log.e(TAG, "MediaPipe Hands error:" + message));

// Updates the preview layout.

FrameLayout frameLayout = findViewById(R.id.preview_display_layout);

frameLayout.removeAllViewsInLayout();

imageView.setImageDrawable(null);

frameLayout.addView(imageView);

imageView.setVisibility(View.VISIBLE);

}

/** Sets up the UI components for the video demo. */

private void setupVideoDemoUiComponents() {

// The Intent to access gallery and read a video file.

videoGetter =

registerForActivityResult(

new ActivityResultContracts.StartActivityForResult(),

result -> {

Intent resultIntent = result.getData();

if (resultIntent != null) {

if (result.getResultCode() == RESULT_OK) {

glSurfaceView.post(

() ->

videoInput.start(

this,

resultIntent.getData(),

hands.getGlContext(),

glSurfaceView.getWidth(),

glSurfaceView.getHeight()));

}

}

});

Button loadVideoButton = findViewById(R.id.button_load_video);

loadVideoButton.setOnClickListener(

v -> {

stopCurrentPipeline();

setupStreamingModePipeline(InputSource.VIDEO);

// Reads video from gallery.

Intent pickVideoIntent = new Intent(Intent.ACTION_PICK);

pickVideoIntent.setDataAndType(MediaStore.Video.Media.INTERNAL_CONTENT_URI, "video/*");

videoGetter.launch(pickVideoIntent);

});

}

/** Sets up the UI components for the live demo with camera input. */

private void setupLiveDemoUiComponents() {

Button startCameraButton = findViewById(R.id.button_start_camera);

startCameraButton.setOnClickListener(

v -> {

if (inputSource == InputSource.CAMERA) {

return;

}

stopCurrentPipeline();

setupStreamingModePipeline(InputSource.CAMERA);

});

}

/** Sets up core workflow for streaming mode. */

private void setupStreamingModePipeline(InputSource inputSource) {

this.inputSource = inputSource;

// Initializes a new MediaPipe Hands solution instance in the streaming mode.

hands =

new Hands(

this,

HandsOptions.builder()

.setStaticImageMode(false)

.setMaxNumHands(2)

.setRunOnGpu(RUN_ON_GPU)

.build());

hands.setErrorListener((message, e) -> Log.e(TAG, "MediaPipe Hands error:" + message));

if (inputSource == InputSource.CAMERA) {

cameraInput = new CameraInput(this);

cameraInput.setNewFrameListener(textureFrame -> hands.send(textureFrame));

} else if (inputSource == InputSource.VIDEO) {

videoInput = new VideoInput(this);

videoInput.setNewFrameListener(textureFrame -> hands.send(textureFrame));

}

// Initializes a new Gl surface view with a user-defined HandsResultGlRenderer.

glSurfaceView =

new SolutionGlSurfaceView<>(this, hands.getGlContext(), hands.getGlMajorVersion());

glSurfaceView.setSolutionResultRenderer(new HandsResultGlRenderer());

glSurfaceView.setRenderInputImage(true);

hands.setResultListener(

handsResult -> {

logWristLandmark(handsResult, /*showPixelValues=*/ false);

glSurfaceView.setRenderData(handsResult);

glSurfaceView.requestRender();

});

// The runnable to start camera after the gl surface view is attached.

// For video input source, videoInput.start() will be called when the video uri is available.

if (inputSource == InputSource.CAMERA) {

glSurfaceView.post(this::startCamera);

}

// Updates the preview layout.

FrameLayout frameLayout = findViewById(R.id.preview_display_layout);

imageView.setVisibility(View.GONE);

frameLayout.removeAllViewsInLayout();

frameLayout.addView(glSurfaceView);

glSurfaceView.setVisibility(View.VISIBLE);

frameLayout.requestLayout();

}

private void startCamera() {

cameraInput.start(

this,

hands.getGlContext(),

CameraInput.CameraFacing.FRONT,

glSurfaceView.getWidth(),

glSurfaceView.getHeight());

}

private void stopCurrentPipeline() {

if (cameraInput != null) {

cameraInput.setNewFrameListener(null);

cameraInput.close();

}

if (videoInput != null) {

videoInput.setNewFrameListener(null);

videoInput.close();

}

if (glSurfaceView != null) {

glSurfaceView.setVisibility(View.GONE);

}

if (hands != null) {

hands.close();

}

}

private void logWristLandmark(HandsResult result, boolean showPixelValues) {

if (result.multiHandLandmarks().isEmpty()) {

return;

}

NormalizedLandmark wristLandmark =

result.multiHandLandmarks().get(0).getLandmarkList().get(HandLandmark.WRIST);

// For Bitmaps, show the pixel values. For texture inputs, show the normalized coordinates.

if (showPixelValues) {

int width = result.inputBitmap().getWidth();

int height = result.inputBitmap().getHeight();

Log.i(

TAG,

String.format(

"MediaPipe Hand wrist coordinates (pixel values): x=%f, y=%f",

wristLandmark.getX() * width, wristLandmark.getY() * height));

} else {

Log.i(

TAG,

String.format(

"MediaPipe Hand wrist normalized coordinates (value range: [0, 1]): x=%f, y=%f",

wristLandmark.getX(), wristLandmark.getY()));

}

if (result.multiHandWorldLandmarks().isEmpty()) {

return;

}

Landmark wristWorldLandmark =

result.multiHandWorldLandmarks().get(0).getLandmarkList().get(HandLandmark.WRIST);

Log.i(

TAG,

String.format(

"MediaPipe Hand wrist world coordinates (in meters with the origin at the hand's"

+ " approximate geometric center): x=%f m, y=%f m, z=%f m",

wristWorldLandmark.getX(), wristWorldLandmark.getY(), wristWorldLandmark.getZ()));

int numHands = result.multiHandLandmarks().size();

ArrayList<String> sites = new ArrayList<>(); //eh add

for (int i = 0; i < numHands; ++i) {

String str = GetGestureResult(GestureRecognition(result.multiHandLandmarks().get(i).getLandmarkList()));

sites.add("[" + i + "] " + str);

// Log.d(TAG, "[" + numHands + "] " + str);

}

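// The result listener is not called on the UI thread, so post the update to it.
runOnUiThread(() -> showtext.setText(sites.toString()));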

}

//eh add

// Gesture recognition

private int GestureRecognition( List<NormalizedLandmark> handLandmarkList){

if (handLandmarkList.size() != 21)

return -1;

// Thumb angle

// NormalizedLandmark thumb_vec1;

float thumb_vec1_x = handLandmarkList.get(0).getX() - handLandmarkList.get(2).getX() ;

float thumb_vec1_y = handLandmarkList.get(0).getY() - handLandmarkList.get(2).getY() ;

float thumb_vec2_x = handLandmarkList.get(3).getX() - handLandmarkList.get(4).getX() ;

float thumb_vec2_y = handLandmarkList.get(3).getY() - handLandmarkList.get(4).getY() ;

float thumb_angle = Vector2DAngle(thumb_vec1_x, thumb_vec1_y,thumb_vec2_x, thumb_vec2_y);

// Index finger angle

float index_vec1_x = handLandmarkList.get(0).getX() - handLandmarkList.get(6).getX() ;

float index_vec1_y = handLandmarkList.get(0).getY() - handLandmarkList.get(6).getY() ;

float index_vec2_x = handLandmarkList.get(7).getX() - handLandmarkList.get(8).getX() ;

float index_vec2_y = handLandmarkList.get(7).getY() - handLandmarkList.get(8).getY() ;

float index_angle = Vector2DAngle(index_vec1_x, index_vec1_y,index_vec2_x,index_vec2_y);

// Middle finger angle

float middle_vec1_x = handLandmarkList.get(0).getX() - handLandmarkList.get(10).getX() ;

float middle_vec1_y = handLandmarkList.get(0).getY() - handLandmarkList.get(10).getY() ;

float middle_vec2_x = handLandmarkList.get(11).getX() - handLandmarkList.get(12).getX() ;

float middle_vec2_y = handLandmarkList.get(11).getY() - handLandmarkList.get(12).getY() ;

float middle_angle = Vector2DAngle(middle_vec1_x, middle_vec1_y,middle_vec2_x,middle_vec2_y);

// Ring finger angle

float ring_vec1_x = handLandmarkList.get(0).getX() - handLandmarkList.get(14).getX() ;

float ring_vec1_y = handLandmarkList.get(0).getY() - handLandmarkList.get(14).getY() ;

float ring_vec2_x = handLandmarkList.get(15).getX() - handLandmarkList.get(16).getX() ;

float ring_vec2_y = handLandmarkList.get(15).getY() - handLandmarkList.get(16).getY() ;

float ring_angle = Vector2DAngle(ring_vec1_x, ring_vec1_y,ring_vec2_x,ring_vec2_y);

// Little finger angle

float pink_vec1_x = handLandmarkList.get(0).getX() - handLandmarkList.get(18).getX() ;

float pink_vec1_y = handLandmarkList.get(0).getY() - handLandmarkList.get(18).getY() ;

float pink_vec2_x = handLandmarkList.get(19).getX() - handLandmarkList.get(20).getX() ;

float pink_vec2_y = handLandmarkList.get(19).getY() - handLandmarkList.get(20).getY() ;

float pink_angle = Vector2DAngle(pink_vec1_x, pink_vec1_y,pink_vec2_x,pink_vec2_y);

// Classify the gesture from the finger angles

float angle_threshold = 65;

float thumb_angle_threshold = 40;

int result = -1;

if ((thumb_angle > 5) && (index_angle < angle_threshold) && (middle_angle > angle_threshold) && (ring_angle > angle_threshold) && (pink_angle > angle_threshold))

result = 1;

else if ((thumb_angle > thumb_angle_threshold) && (index_angle < angle_threshold) && (middle_angle < angle_threshold) && (ring_angle > angle_threshold) && (pink_angle > angle_threshold))

result = 2;

else if ((thumb_angle > thumb_angle_threshold) && (index_angle < angle_threshold) && (middle_angle < angle_threshold) && (ring_angle < angle_threshold) && (pink_angle > angle_threshold))

result = 3;

else if ((thumb_angle > thumb_angle_threshold) && (index_angle < angle_threshold) && (middle_angle < angle_threshold) && (ring_angle < angle_threshold) && (pink_angle < angle_threshold))

result = 4;

else if ((thumb_angle < thumb_angle_threshold) && (index_angle < angle_threshold) && (middle_angle < angle_threshold) && (ring_angle < angle_threshold) && (pink_angle < angle_threshold))

result = 5;

else if ((thumb_angle < thumb_angle_threshold) && (index_angle > angle_threshold) && (middle_angle > angle_threshold) && (ring_angle > angle_threshold) && (pink_angle < angle_threshold))

result = 6;

else if ((thumb_angle < thumb_angle_threshold) && (index_angle > angle_threshold) && (middle_angle > angle_threshold) && (ring_angle > angle_threshold) && (pink_angle > angle_threshold))

result = 7;

else if ((thumb_angle > 5) && (index_angle > angle_threshold) && (middle_angle < angle_threshold) && (ring_angle < angle_threshold) && (pink_angle < angle_threshold))

result = 8;

else

result = -1;

return result;

}

private float Vector2DAngle(float x1,float y1,float x2,float y2)

{

// Product of the two vector lengths.
double t2 = Math.hypot(x1, y1) * Math.hypot(x2, y2);
// Cosine of the angle, clamped to [-1, 1] so rounding error cannot make Math.acos return NaN.
double t = Math.max(-1.0, Math.min(1.0, (x1 * x2 + y1 * y2) / t2));
// Convert radians to degrees.
return (float) Math.toDegrees(Math.acos(t));

}

private String GetGestureResult(int result)

{

String result_str = "无"; // "无" means "none": no gesture was recognized

switch (result)

{

case 1:

result_str = "One";

break;

case 2:

result_str = "Two";

break;

case 3:

result_str = "Three";

break;

case 4:

result_str = "Four";

break;

case 5:

result_str = "Five";

break;

case 6:

result_str = "Six";

break;

case 7:

result_str = "ThumbUp";

break;

case 8:

result_str = "Ok";

break;

default:

break;

}

return result_str;

}

}
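The gesture logic above rests on one idea: for each finger, measure the angle between the vector (wrist minus lower joint) and the vector (upper joint minus tip), then compare it with a threshold. The following is a minimal standalone sketch of that idea in plain Java, without any Android or MediaPipe dependency. The class name, the `float[]` point representation, and the choice to return NaN for a zero-length vector are assumptions made for illustration, not part of the original code.

```java
final class FingerAngleSketch {
    /** Angle in degrees between vectors (x1, y1) and (x2, y2); NaN if either has zero length. */
    static float vector2DAngle(float x1, float y1, float x2, float y2) {
        double norm = Math.hypot(x1, y1) * Math.hypot(x2, y2);
        if (norm == 0.0) {
            return Float.NaN; // assumed convention: the angle of a zero-length vector is undefined
        }
        // Do the dot product in double, then clamp: rounding can push the cosine
        // slightly outside [-1, 1], where Math.acos returns NaN.
        double cos = ((double) x1 * x2 + (double) y1 * y2) / norm;
        cos = Math.max(-1.0, Math.min(1.0, cos));
        return (float) Math.toDegrees(Math.acos(cos));
    }

    /**
     * Bend angle of one finger, following the post's choice of vectors:
     * (wrist - lower joint) against (upper joint - tip). Each point is {x, y}.
     */
    static float fingerAngle(float[] wrist, float[] lowerJoint, float[] upperJoint, float[] tip) {
        return vector2DAngle(
                wrist[0] - lowerJoint[0], wrist[1] - lowerJoint[1],
                upperJoint[0] - tip[0], upperJoint[1] - tip[1]);
    }

    public static void main(String[] args) {
        float[] wrist = {0.5f, 0.9f};
        // Straight finger pointing up (image y grows downward): small angle.
        float straight = fingerAngle(wrist, new float[] {0.5f, 0.5f},
                new float[] {0.5f, 0.4f}, new float[] {0.5f, 0.3f});
        // Curled finger whose tip folds back toward the wrist: large angle.
        float curled = fingerAngle(wrist, new float[] {0.5f, 0.5f},
                new float[] {0.5f, 0.4f}, new float[] {0.5f, 0.5f});
        System.out.println("straight=" + straight + " curled=" + curled);
    }
}
```

With the post's threshold of 65 degrees, the first finger would count as extended and the second as bent. All comparisons against NaN are false, so a degenerate landmark set falls through to the "no gesture" result in a rule chain like the one above.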

3: Build

The Android app accepts an image, a video, or the camera as input (the code uses the front camera).
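One thing to watch with these three input sources is that their frames can have different aspect ratios, while the landmark x and y values are normalized to [0, 1] by frame width and height separately. An angle computed directly on normalized coordinates is therefore skewed on non-square frames. The sketch below shows one possible adjustment: scale the vectors back to pixels before measuring the angle. The class name and the 640x480 frame size are made up for illustration, and the angle is computed with `atan2` rather than the `acos` form used in the post.

```java
final class PixelSpaceAngleSketch {
    /** Unsigned angle in degrees between two 2D vectors, via atan2 of |cross| and dot. */
    static double angleDegrees(double x1, double y1, double x2, double y2) {
        double cross = x1 * y2 - y1 * x2;
        double dot = x1 * x2 + y1 * y2;
        return Math.toDegrees(Math.atan2(Math.abs(cross), dot));
    }

    /**
     * Angle between (a - b) and (c - d), where each point is a normalized {x, y}
     * pair and the frame size is given in pixels.
     */
    static double angleInPixels(float[] a, float[] b, float[] c, float[] d, int width, int height) {
        return angleDegrees(
                (a[0] - b[0]) * (double) width, (a[1] - b[1]) * (double) height,
                (c[0] - d[0]) * (double) width, (c[1] - d[1]) * (double) height);
    }

    public static void main(String[] args) {
        float[] origin = {0f, 0f};
        float[] right = {0.1f, 0f};
        float[] diagonal = {0.1f, 0.1f};
        // Same normalized points, two frame shapes: a square frame and a 640x480 frame.
        System.out.println(angleInPixels(right, origin, diagonal, origin, 1, 1));
        System.out.println(angleInPixels(right, origin, diagonal, origin, 640, 480));
    }
}
```

On the square frame the two vectors are 45 degrees apart, while on the 640x480 frame the same normalized points are closer to 37 degrees apart, so fixed thresholds such as 65 and 40 may behave differently depending on the input source.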

4: More examples will be added later

So far it feels much more reliable than SeetaFace.