PyTorch Basics (1): Building a Multi-Layer Fully Connected Neural Network in PyTorch to Classify MNIST Handwritten Digits

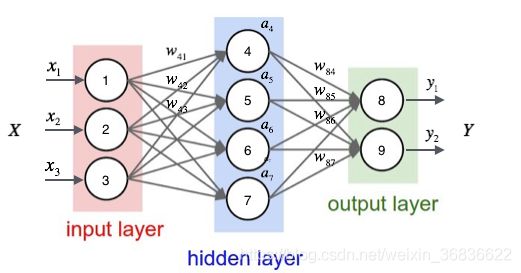

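① Fully connected neural networks

In a fully connected neural network, every node outside the input layer is connected to all nodes in the previous layer.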
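As a rough illustration of that definition, the sketch below computes one fully connected layer in plain Python. The function name `dense_forward` and the numbers are made up for this example; PyTorch's `nn.Linear` performs the same weighted sum with tensors.

```python
def dense_forward(x, weights, biases):
    """Forward pass of one fully connected layer.

    x: list of input values (length n_in)
    weights: one row of n_in weights per output node
    biases: one bias per output node
    """
    outputs = []
    for row, b in zip(weights, biases):
        # Every output node sums over ALL inputs: that is the "full" connection.
        outputs.append(sum(w * xi for w, xi in zip(row, x)) + b)
    return outputs


x = [1.0, 2.0, 3.0]            # 3 input nodes
weights = [[1.0, 0.0, -1.0],   # weights into output node 0
           [0.5, 0.5, 0.5]]    # weights into output node 1
biases = [0.0, 1.0]

y = dense_forward(x, weights, biases)
print(y)  # [-2.0, 4.0]
```

A layer with `n_in` inputs and `n_out` outputs therefore holds `n_in * n_out` weights plus `n_out` biases.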

② Start with the simplest three-layer fully connected network in PyTorch

A. A simple three-layer fully connected network

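    from torch import nn  # the classes in this section live in net.py, which the training script imports

    class simpleNet(nn.Module):  # subclass nn.Module (boilerplate)
        def __init__(self, in_dim, n_hidden_1, n_hidden_2, out_dim):
            # input dimension, units in hidden layer 1, units in hidden layer 2, output dimension
            super(simpleNet, self).__init__()  # boilerplate
            self.layer1 = nn.Linear(in_dim, n_hidden_1)
            self.layer2 = nn.Linear(n_hidden_1, n_hidden_2)
            self.layer3 = nn.Linear(n_hidden_2, out_dim)

        def forward(self, x):  # boilerplate: defines the forward pass
            x = self.layer1(x)
            x = self.layer2(x)
            x = self.layer3(x)
            return x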
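One point worth noting about this first version: linear layers stacked with nothing in between compose into a single linear map, which is why the next variant adds activation functions. The plain-Python sketch below checks this on two tiny layers with integer weights; the helper names and numbers are illustrative only.

```python
def matvec(W, x):
    # Matrix-vector product: one weighted sum per row of W.
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def matmul(A, B):
    # Matrix-matrix product A @ B.
    cols = list(zip(*B))
    return [[sum(a * b for a, b in zip(row, col)) for col in cols] for row in A]

def add(u, v):
    return [a + b for a, b in zip(u, v)]

W1, b1 = [[1, 2], [0, 1], [3, -1]], [1, 0, -1]   # layer 1: 2 inputs -> 3 outputs
W2, b2 = [[2, 0, 1], [1, 1, 1]], [0, 5]          # layer 2: 3 inputs -> 2 outputs

x = [4, -3]

# Two layers applied one after the other, with no activation in between.
stacked = add(matvec(W2, add(matvec(W1, x), b1)), b2)

# The same computation as a single layer: W = W2 @ W1, b = W2 @ b1 + b2.
W = matmul(W2, W1)
b = add(matvec(W2, b1), b2)
merged = add(matvec(W, x), b)

print(stacked, merged)  # [12, 15] [12, 15]
```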

B. A fully connected network with activation functions

    class Activate_Net(nn.Module):
        def __init__(self, in_dim, n_hidden_1, n_hidden_2, out_dim):
            super(Activate_Net, self).__init__()
            # nn.Sequential chains modules so they run in order
            self.layer1 = nn.Sequential(nn.Linear(in_dim, n_hidden_1), nn.ReLU(True))
            self.layer2 = nn.Sequential(nn.Linear(n_hidden_1, n_hidden_2), nn.ReLU(True))
            self.layer3 = nn.Sequential(nn.Linear(n_hidden_2, out_dim))  # no activation on the output layer

        def forward(self, x):
            x = self.layer1(x)
            x = self.layer2(x)
            x = self.layer3(x)
            return x

C. A fully connected network with batch normalization (BN)

The BN layer goes after the fully connected layer and before the activation function.
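To make that ordering concrete, here is a rough plain-Python sketch of the normalization step that `nn.BatchNorm1d` applies in training mode, followed by ReLU. It is a simplification: the learnable scale and shift and the running statistics are left out, and the function names are made up for illustration.

```python
def batch_norm(batch, eps=1e-5):
    """Normalize each feature (column) over the batch dimension."""
    n = len(batch)
    columns = list(zip(*batch))
    means = [sum(col) / n for col in columns]
    # Biased variance (divide by n), as used for normalization in training mode.
    variances = [sum((v - m) ** 2 for v in col) / n for col, m in zip(columns, means)]
    return [
        [(v - m) / (var + eps) ** 0.5 for v, m, var in zip(row, means, variances)]
        for row in batch
    ]

def relu(row):
    return [max(0.0, v) for v in row]

# Pretend these are the outputs of a fully connected layer for a batch of 3 samples.
# The two features have very different scales.
batch = [[1.0, 10.0], [3.0, 30.0], [5.0, 50.0]]

normalized = batch_norm(batch)                 # each column now has mean ~0 and variance ~1
activated = [relu(row) for row in normalized]  # the activation comes after BN

for row in activated:
    print(row)
```

After normalization both features sit on the same scale, so the activation sees inputs centred around zero regardless of how large the raw layer outputs were.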

    class Batch_Net(nn.Module):
        def __init__(self, in_dim, n_hidden_1, n_hidden_2, out_dim):
            super(Batch_Net, self).__init__()
            # nn.Sequential chains modules so they run in order: Linear -> BatchNorm1d -> ReLU
            self.layer1 = nn.Sequential(
                nn.Linear(in_dim, n_hidden_1), nn.BatchNorm1d(n_hidden_1), nn.ReLU(True))
            self.layer2 = nn.Sequential(
                nn.Linear(n_hidden_1, n_hidden_2), nn.BatchNorm1d(n_hidden_2), nn.ReLU(True))
            self.layer3 = nn.Sequential(nn.Linear(n_hidden_2, out_dim))

        def forward(self, x):
            x = self.layer1(x)
            x = self.layer2(x)
            x = self.layer3(x)
            return x

That completes the network definitions. Next, the three networks above are tested on the MNIST dataset.

Next, preprocess the data.

    import torch
    from torch import nn, optim
    from torch.utils.data import DataLoader
    from torchvision import datasets, transforms

    import net  # net.py holds the three network classes defined above

    # Hyperparameters
    batch_size = 64
    learning_rate = 1e-2
    num_epoches = 20

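    # Data preprocessing
    data_tf = transforms.Compose([  # chain the two transforms below
        transforms.ToTensor(),  # convert the image to a tensor
        # Normalize takes two arguments, the mean and the standard deviation:
        # it subtracts the mean and divides by the standard deviation.
        transforms.Normalize([0.5], [0.5]),
    ])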
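To see what `Normalize([0.5], [0.5])` does to pixel values, here is the arithmetic in plain Python. `ToTensor` first scales 8-bit pixel values to the range [0, 1]; subtracting 0.5 and dividing by 0.5 then maps that range to [-1, 1]. The helper names below are illustrative and are not torchvision functions.

```python
def to_unit_range(byte_value):
    # What ToTensor does to an 8-bit pixel: scale 0..255 to 0.0..1.0.
    return byte_value / 255

def normalize(pixel, mean=0.5, std=0.5):
    # What Normalize does per channel: subtract the mean, divide by the std.
    return (pixel - mean) / std

for p in (0.0, 0.5, 1.0):
    print(p, "->", normalize(p))
# 0.0 -> -1.0
# 0.5 -> 0.0
# 1.0 -> 1.0

white = normalize(to_unit_range(255))  # brightest pixel ends up at 1.0
black = normalize(to_unit_range(0))    # darkest pixel ends up at -1.0
```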

    # Download the datasets
    train_dataset = datasets.MNIST(
        root='./data', train=True, transform=data_tf, download=True)
    test_dataset = datasets.MNIST(root='./data', train=False, transform=data_tf)
    train_loader = DataLoader(train_dataset, batch_size=batch_size, shuffle=True)
    test_loader = DataLoader(test_dataset, batch_size=batch_size, shuffle=False)

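    # Build the network, then define the loss function and the optimizer
    model = net.simpleNet(28 * 28, 300, 100, 10)  # 28x28 input images, hidden layers of 300 and 100 units, 10 output classes
    if torch.cuda.is_available():
        model = model.cuda()
    criterion = nn.CrossEntropyLoss()  # cross-entropy loss
    optimizer = optim.SGD(model.parameters(), lr=learning_rate)  # stochastic gradient descent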
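The article does not show the training loop that sits between this setup and the evaluation. The block below is one possible minimal sketch of such a loop, not the author's code. To run without downloading anything, it assumes synthetic random data in place of MNIST and an `nn.Sequential` stand-in with the same layer sizes as `net.simpleNet(28*28, 300, 100, 10)`; the names `epoch_losses` and `num_epochs` are introduced here for illustration.

```python
import torch
from torch import nn, optim
from torch.utils.data import DataLoader, TensorDataset

torch.manual_seed(0)

# Synthetic stand-in for MNIST (assumption): 256 random "images" with random labels 0..9.
images = torch.randn(256, 1, 28, 28)
labels = torch.randint(0, 10, (256,))
train_loader = DataLoader(TensorDataset(images, labels), batch_size=64, shuffle=True)

# Stand-in with the same layer sizes as the simple three-layer network.
model = nn.Sequential(nn.Linear(28 * 28, 300), nn.Linear(300, 100), nn.Linear(100, 10))
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model = model.to(device)

criterion = nn.CrossEntropyLoss()
optimizer = optim.SGD(model.parameters(), lr=1e-2)

num_epochs = 2  # the article's script sets 20; kept small for this sketch
epoch_losses = []

model.train()  # training mode (matters for layers such as BatchNorm1d)
for epoch in range(num_epochs):
    running_loss = 0.0
    for img, label in train_loader:
        img = img.view(img.size(0), -1).to(device)  # flatten each image to 784 values
        label = label.to(device)

        out = model(img)                # forward pass
        loss = criterion(out, label)    # mean loss over the batch

        optimizer.zero_grad()           # clear gradients from the previous step
        loss.backward()                 # backpropagate
        optimizer.step()                # update the weights

        running_loss += loss.item() * label.size(0)
    epoch_losses.append(running_loss / len(train_loader.dataset))
    print('epoch {}: train loss {:.6f}'.format(epoch + 1, epoch_losses[-1]))
```

With the real script, `train_loader`, `model`, `criterion` and `optimizer` would be the objects defined above, and the loop would run for `num_epoches` epochs.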

Finally, evaluate the model.

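    # Model evaluation
    model.eval()  # evaluation mode (matters for layers such as BatchNorm1d)
    eval_loss = 0
    eval_acc = 0
    with torch.no_grad():  # gradients are not needed for evaluation
        for img, label in test_loader:
            img = img.view(img.size(0), -1)  # flatten each 28x28 image to 784 values
            if torch.cuda.is_available():
                img = img.cuda()
                label = label.cuda()
            out = model(img)
            loss = criterion(out, label)
            eval_loss += loss.item() * label.size(0)  # batch-mean loss times batch size
            _, pred = torch.max(out, 1)  # index of the largest score is the predicted class
            num_correct = (pred == label).sum()
            eval_acc += num_correct.item()
    print('Test Loss: {:.6f}, Acc: {:.6f}'.format(
        eval_loss / len(test_dataset),
        eval_acc / len(test_dataset)
    ))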
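The evaluation code multiplies each batch loss by `label.size(0)` before summing. That matters because `nn.CrossEntropyLoss` returns the mean over the batch by default, and the 10,000 MNIST test images do not divide evenly into batches of 64, so the last batch holds only 16 images. The plain-Python sketch below uses made-up per-batch losses to show how a size-weighted average differs from a naive average of batch means.

```python
def dataset_mean(batch_means, batch_sizes):
    # Weight each batch mean by its batch size, then divide by the sample count.
    total = sum(m * n for m, n in zip(batch_means, batch_sizes))
    return total / sum(batch_sizes)

batch_sizes = [64] * 156 + [16]      # 156 full batches plus one batch of 16 = 10,000 images
batch_means = [0.25] * 156 + [1.0]   # hypothetical per-batch mean losses

weighted = dataset_mean(batch_means, batch_sizes)
naive = sum(batch_means) / len(batch_means)

print(sum(batch_sizes))  # 10000
print(weighted)          # 0.2512
print(naive > weighted)  # True: the small last batch is over-weighted by the naive average
```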