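From Scratch: Training and Deploying a TensorFlow YOLOv5 Model

Overview:

Object detection is an important task in computer vision. This article covers YOLO (You Only Look Once: Unified, Real-Time Object Detection), one of the best-known end-to-end object detectors. From YOLOv1 through YOLOv5, the data processing and the network architecture have been refined repeatedly, and YOLOv5 offers smaller model sizes with competitive accuracy. Starting from scratch, this article shows how to build, train, and deploy YOLOv5 with TensorFlow. The source code for this example is available at https://github.com/Yunying-CN/Yolov5-TF

1.Installing TensorFlow 2.x

To shorten training time, it is best to train on a local or cloud server equipped with a GPU. This example uses Python 3.8 on 64-bit Linux; you can download the matching installation package, open a terminal in the directory where it is saved, and run the install command. The version installed here is tensorflow-gpu 2.3.1, paired with CUDA 10.1 and the corresponding cuDNN. You can also download and install it directly with pip; if the download is slow, switch to a different package index.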

$sudo apt-get install python3-pip python3-dev

$pip3 install --upgrade pip

$pip3 install tensorflow-gpu==2.3.1 -i https://pypi.tuna.tsinghua.edu.cn/simple

After installation, open Python in a terminal and import TensorFlow to check the version and confirm that the installation succeeded.

$python3

>>> import tensorflow as tf

>>> tf.__version__
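
Beyond the version string, it can help to confirm that TensorFlow actually sees the GPU. The sketch below is one way to do that, assuming TensorFlow 2.1 or later; the helper name `tf_status` is made up for this illustration, and it returns a message instead of failing when TensorFlow is not installed.

```python
import importlib.util


def tf_status():
    """Return a one-line summary of the TensorFlow install (hypothetical helper)."""
    if importlib.util.find_spec("tensorflow") is None:
        return "tensorflow is not installed"
    import tensorflow as tf
    # list_physical_devices reports the devices TensorFlow can actually use
    gpus = tf.config.list_physical_devices("GPU")
    return "tensorflow %s, visible GPUs: %d" % (tf.__version__, len(gpus))


print(tf_status())
```

If the GPU count is 0 on a machine that has a GPU, the CUDA and cuDNN versions are the first thing to check.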

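2.Training the YOLOv5 network

YOLO is a classic family of object detection networks, and YOLOv5 is among the most accurate and fastest models in the series. YOLOv5 is trained with supervised learning: each training sample must include the position of every object (a bounding box) and the class the object belongs to. The images in the dataset serve as the network input, and the object classes and coordinates serve as labels; training on them gives the network the ability to detect and recognize objects.

2.1●Dataset

This example uses the open Pascal VOC2012 dataset. Pascal VOC2012 is a standard benchmark that is frequently used to compare object detection and image segmentation networks and to evaluate models. It provides labeled data for supervised vision tasks and contains 20 classes: aeroplane, bicycle, bird, boat, bottle, bus, car, cat, chair, cow, dining table, dog, horse, motorbike, person, potted plant, sheep, sofa, train, and tv/monitor. The train split has 5,717 images with 13,609 objects, and the combined trainval split has 11,540 images with 27,450 objects. The dataset can be downloaded from the official site (http://host.robots.ox.ac.uk/pascal/VOC/voc2012/), or downloaded and extracted from a terminal with the commands below.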

$mkdir -p ./data/voc

$wget http://host.robots.ox.ac.uk/pascal/VOC/voc2012/VOCtrainval_11-May-2012.tar -O ./data/voc2012.tar

$tar -xf ./data/voc2012.tar -C ./data/voc

$ls ./data/voc

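The extracted VOCdevkit/VOC2012 directory contains five folders: Annotations, ImageSets, JPEGImages, SegmentationClass, and SegmentationObject. Annotations holds the label files for the detection task in .xml format, one per image and with the same base name as the image. Each .xml file records the size of the image plus the class and exact position of every labeled object in it. ImageSets has three subfolders, Layout, Main, and Segmentation; Main holds the split files for classification and detection. JPEGImages holds the images in .jpg format, SegmentationClass holds the images segmented by class, and SegmentationObject holds the images segmented by object.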

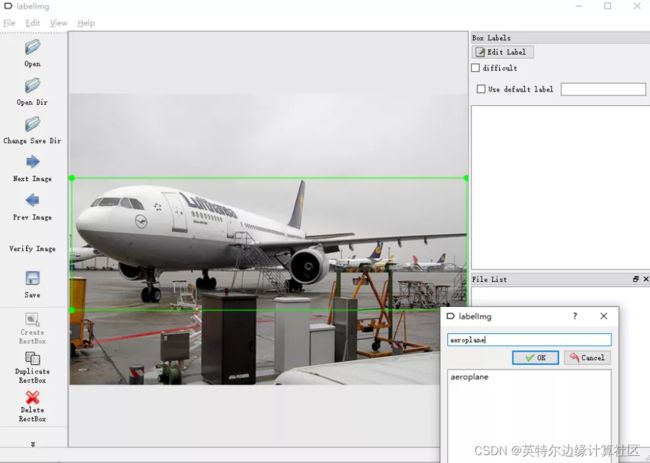
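
To make the annotation layout concrete, the sketch below parses a small hand-written XML string in the same shape as a VOC annotation and normalizes the box coordinates by the image size. The sample values and the function name are invented for illustration, and the standard-library xml.etree.ElementTree is used here instead of the lxml package that the conversion script imports.

```python
import xml.etree.ElementTree as ET

# Hand-written sample in the VOC annotation layout (values are made up)
SAMPLE_XML = """
<annotation>
  <filename>example.jpg</filename>
  <size><width>500</width><height>375</height><depth>3</depth></size>
  <object>
    <name>dog</name>
    <bndbox><xmin>50</xmin><ymin>75</ymin><xmax>250</xmax><ymax>300</ymax></bndbox>
  </object>
</annotation>
"""


def read_boxes(xml_text):
    """Return (filename, [(class_name, xmin, ymin, xmax, ymax), ...]) with coords in [0, 1]."""
    root = ET.fromstring(xml_text.strip())
    width = int(root.find("size/width").text)
    height = int(root.find("size/height").text)
    boxes = []
    for obj in root.findall("object"):
        bb = obj.find("bndbox")
        boxes.append((
            obj.find("name").text,
            float(bb.find("xmin").text) / width,
            float(bb.find("ymin").text) / height,
            float(bb.find("xmax").text) / width,
            float(bb.find("ymax").text) / height,
        ))
    return root.find("filename").text, boxes


filename, boxes = read_boxes(SAMPLE_XML)
print(filename, boxes)
```

Dividing by the width and height keeps the labels valid when the image is later resized to the network input size.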

To build your own dataset, you can use the LabelImg tool to draw a box around each object to be recognized, label its class, and save the names of all classes in a .names file.
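
A .names file is simply one class name per line, and the position of a name gives its integer label. The sketch below writes a three-class file to a temporary directory and builds the name-to-index map from it; the file name, the three classes, and the helper name are placeholders chosen for this example.

```python
import os
import tempfile


def load_class_map(names_path):
    """Map each class name to its line index, skipping blank lines."""
    with open(names_path, encoding="utf-8") as f:
        names = [line.strip() for line in f if line.strip()]
    return {name: idx for idx, name in enumerate(names)}


# Write a tiny placeholder .names file and read it back
tmp_dir = tempfile.mkdtemp()
names_path = os.path.join(tmp_dir, "custom.names")
with open(names_path, "w", encoding="utf-8") as f:
    f.write("cat\ndog\nperson\n")

class_map = load_class_map(names_path)
print(class_map)
```

The order of the lines matters: reordering the file changes every label index, so the same file should be used for conversion, training, and inference.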

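2.2●Generating the TFRecord file

To read data efficiently, the data can be serialized so that the network can stream it. TFRecord is a file format that stores a sequence of binary records contiguously, and arbitrary data can be converted into a form TensorFlow supports before being written to it. This makes it easier to match a TensorFlow dataset to the input pipeline of the network. The TFRecord file generated here must store each training image together with the class and position of every object in it.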

import os

from absl import app, flags, logging
from absl.flags import FLAGS
import tensorflow as tf
import lxml.etree
import tqdm

flags.DEFINE_string('data_dir', './data/voc/VOCdevkit/VOC2012/',
                    'path to PASCAL VOC dataset')
flags.DEFINE_enum('split', 'train', ['train', 'val'], 'specify train or val split')
flags.DEFINE_string('output_file', './data/voc2012_train.tfrecord', 'output dataset')
flags.DEFINE_string('classes', './data/voc2012.names', 'classes file')


def build_example(annotation, class_map):
    # Pack one image and its labels into a tf.train.Example
    img_path = os.path.join(
        FLAGS.data_dir, 'JPEGImages', annotation['filename'])
    img_raw = open(img_path, 'rb').read()
    width = int(annotation['size']['width'])
    height = int(annotation['size']['height'])

    xmin = []
    ymin = []
    xmax = []
    ymax = []
    classes = []
    classes_text = []
    if 'object' in annotation:
        for obj in annotation['object']:
            # Box coordinates are normalized to [0, 1] by the image size
            xmin.append(float(obj['bndbox']['xmin']) / width)
            ymin.append(float(obj['bndbox']['ymin']) / height)
            xmax.append(float(obj['bndbox']['xmax']) / width)
            ymax.append(float(obj['bndbox']['ymax']) / height)
            classes_text.append(obj['name'].encode('utf8'))
            classes.append(class_map[obj['name']])

    example = tf.train.Example(features=tf.train.Features(feature={
        'image/encoded': tf.train.Feature(bytes_list=tf.train.BytesList(value=[img_raw])),
        'image/object/bbox/xmin': tf.train.Feature(float_list=tf.train.FloatList(value=xmin)),
        'image/object/bbox/xmax': tf.train.Feature(float_list=tf.train.FloatList(value=xmax)),
        'image/object/bbox/ymin': tf.train.Feature(float_list=tf.train.FloatList(value=ymin)),
        'image/object/bbox/ymax': tf.train.Feature(float_list=tf.train.FloatList(value=ymax)),
        'image/object/class/text': tf.train.Feature(bytes_list=tf.train.BytesList(value=classes_text)),
        'image/object/class/label': tf.train.Feature(int64_list=tf.train.Int64List(value=classes)),
    }))
    return example


def parse_xml(xml):
    # Recursively turn an XML element into nested dicts; 'object' tags are collected in a list
    if not len(xml):
        return {xml.tag: xml.text}
    result = {}
    for child in xml:
        child_result = parse_xml(child)
        if child.tag != 'object':
            result[child.tag] = child_result[child.tag]
        else:
            if child.tag not in result:
                result[child.tag] = []
            result[child.tag].append(child_result[child.tag])
    return {xml.tag: result}


def main(_argv):
    # Class name -> integer label, taken from the line order of the .names file
    class_map = {name: idx for idx, name in enumerate(
        open(FLAGS.classes).read().splitlines())}

    writer = tf.io.TFRecordWriter(FLAGS.output_file)
    image_list = open(os.path.join(
        FLAGS.data_dir, 'ImageSets', 'Main', '%s.txt' % FLAGS.split)).read().splitlines()
    logging.info("Image list loaded: %d", len(image_list))
    for name in tqdm.tqdm(image_list):
        annotation_xml = os.path.join(
            FLAGS.data_dir, 'Annotations', name + '.xml')
        annotation_xml = lxml.etree.fromstring(open(annotation_xml, 'rb').read())
        annotation = parse_xml(annotation_xml)['annotation']
        tf_example = build_example(annotation, class_map)
        writer.write(tf_example.SerializeToString())
    writer.close()
    logging.info("Done")


if __name__ == '__main__':
    app.run(main)

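2.3●YOLOv5 network structure

The YOLOv5 detector comes in four versions, YOLOv5s, YOLOv5m, YOLOv5l, and YOLOv5x, each configured through a .yaml file. The yaml file defines the following parameters. nc is the number of object classes. depth_multiple (network depth) is the layer scaling factor for the BottleneckCSP/C3 modules: the number field of each such module is multiplied by it to get the final count of repeated layers. width_multiple (network width) is the channel scaling factor: the channel settings in the backbone and head sections are multiplied by it. These two parameters are enough to produce models of different size and complexity, and the four YOLOv5 versions differ in them. anchors lists the preset anchor boxes, nine sizes defined for a 640×640 input. The file also defines the backbone and the head. The head performs the final detection: it applies the anchor boxes to the feature maps and produces output vectors with class probabilities, objectness scores, and bounding boxes. The listing below uses YOLOv5s.yaml as the example.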

# Parameters

nc: 20 # number of classes

depth_multiple: 0.33 # model depth multiple

width_multiple: 0.50 # layer channel multiple

anchors:

- [10,13, 16,30, 33,23] # P3/8

- [30,61, 62,45, 59,119] # P4/16

- [116,90, 156,198, 373,326] # P5/32

# YOLOv5 backbone

backbone:

# [from, number, module, args]

[[-1, 1, Focus, [64, 3]], # 0-P1/2

[-1, 1, Conv, [128, 3, 2]], # 1-P2/4

[-1, 3, C3, [128]],

[-1, 1, Conv, [256, 3, 2]], # 3-P3/8

[-1, 9, C3, [256]],

[-1, 1, Conv, [512, 3, 2]], # 5-P4/16

[-1, 9, C3, [512]],

[-1, 1, Conv, [1024, 3, 2]], # 7-P5/32

[-1, 1, SPP, [1024, [5, 9, 13]]],

[-1, 3, C3, [1024, False]], # 9

]

# YOLOv5 head

head:

[[-1, 1, Conv, [512, 1, 1]],

[-1, 1, Upsample, [None, 2, 'nearest']],

[[-1, 6], 1, Concat, [-1]], # cat backbone P4

[-1, 3, C3, [512, False]], # 13

[-1, 1, Conv, [256, 1, 1]],

[-1, 1, Upsample, [None, 2, 'nearest']],

[[-1, 4], 1, Concat, [-1]], # cat backbone P3

[-1, 3, C3, [256, False]], # 17 (P3/8-small)

[-1, 1, Conv, [256, 3, 2]],

[[-1, 14], 1, Concat, [-1]], # cat head P4

[-1, 3, C3, [512, False]], # 20 (P4/16-medium)

[-1, 1, Conv, [512, 3, 2]],

[[-1, 10], 1, Concat, [-1]], # cat head P5

[-1, 3, C3, [1024, False]], # 23 (P5/32-large)

[[17, 20, 23], 1, Detect, [nc, anchors]], # Detect(P3, P4, P5)

]
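
The effect of the two multipliers can be checked by hand. The sketch below applies the same rounding rules that the model-parsing code later in this article uses: repeat counts are rounded and kept at 1 or more, and channel counts are rounded up to a multiple of 8. The function names are hypothetical, and the multiplier pairs are the values published with the upstream YOLOv5 configuration files, quoted here as an assumption.

```python
import math


def scale_depth(number, depth_multiple):
    """Scaled repeat count of a module; single-layer modules are left alone."""
    return max(round(number * depth_multiple), 1) if number > 1 else number


def scale_width(channels, width_multiple, divisor=8):
    """Scaled channel count, rounded up to a multiple of `divisor`."""
    return math.ceil(channels * width_multiple / divisor) * divisor


# (depth_multiple, width_multiple) pairs, assumed from the upstream YOLOv5 configs
VARIANTS = {"yolov5s": (0.33, 0.50), "yolov5m": (0.67, 0.75),
            "yolov5l": (1.00, 1.00), "yolov5x": (1.33, 1.25)}

for name, (depth, width) in VARIANTS.items():
    print(name,
          "repeats for number=9:", scale_depth(9, depth),
          "channels for 1024:", scale_width(1024, width))
```

With the smallest pair, a module declared with number 9 and 1024 channels shrinks to 3 repeats and 512 channels, which is where most of the size difference between the variants comes from.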

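2.4●YOLOv5 network modules

The YOLOv5 network consists of four parts: the input image; the backbone, which extracts feature maps from the image; the neck, which processes the feature maps before they enter the head; and the head, which predicts object classes and positions. These four parts are built by stacking a few different modules.

2.4.1. CBL module

CBL is the convolution module. In the YOLOv5 backbone it takes the form Convolution + Batch Normalization + Activation: the input goes through a convolution, batch normalization, and an activation function. The activation used here is LeakyReLU, which adds non-linearity to the network and speeds up convergence.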

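2.4.2. Focus module

The .yaml configuration shows that the backbone contains a Focus module. Focus slices the image: taking every second pixel along each axis yields four sub-images, so the height and width are halved and the number of channels grows fourfold. The operation resembles 2× downsampling but loses no image information. Taking YOLOv5s as an example, the slicing turns the original 640 × 640 × 3 image into a 320 × 320 × 12 feature map, which the module's convolution then processes.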
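
The slicing step can be reproduced on a tiny input without TensorFlow. The sketch below works on nested Python lists shaped height × width × channels and concatenates the four sub-images along the channel axis in the same order as the Focus layer shown later; the function name is made up for illustration, and the convolution that follows the slicing is left out.

```python
def focus_slice(img):
    """Space-to-depth slicing: H x W x C nested lists -> H/2 x W/2 x 4C."""
    height, width = len(img), len(img[0])
    assert height % 2 == 0 and width % 2 == 0, "height and width must be even"
    out = []
    for r in range(0, height, 2):
        row = []
        for c in range(0, width, 2):
            # Channel order: (even row, even col), (odd row, even col),
            # (even row, odd col), (odd row, odd col)
            row.append(img[r][c] + img[r + 1][c] + img[r][c + 1] + img[r + 1][c + 1])
        out.append(row)
    return out


# 4 x 4 image with one channel; each pixel holds its flat index
image = [[[r * 4 + c] for c in range(4)] for r in range(4)]
sliced = focus_slice(image)
print(len(sliced), len(sliced[0]), len(sliced[0][0]))  # 2 2 4
print(sliced[0][0])  # [0, 4, 1, 5]
```

Every input pixel appears exactly once in the output, which is why the operation halves the resolution without discarding information.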

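2.4.3. Bottleneck module

The Bottleneck module can change the number of channels through its convolutions. Bottleneck layers come in several forms; the standard one applies a 1×1 convolution followed by a 3×3 convolution and adds a shortcut connection from its input. BottleneckCSP stacks several standard bottlenecks. The C3 module in YOLOv5 is similar to BottleneckCSP, except that every convolution in C3 is followed by a BN layer and an activation function.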

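2.4.4. SPP module

In object detection the input image size is usually not fixed. To obtain feature maps of a uniform size, an SPP (spatial pyramid pooling) module was introduced starting with YOLOv3. A CBL module first halves the number of channels; the result then passes through three max-pooling layers with different kernel sizes, and their outputs are concatenated with the un-pooled input. A final CBL module halves the channels of the concatenated tensor. Because the pooling layers use stride 1 with padding, the height and width of the feature map stay the same after pooling.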
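
Two properties of this block are easy to verify by hand: stride-1 pooling with padding keeps the spatial size, and the channel count goes from c to c/2, then to 4 × c/2 after concatenation, and back to c. The sketch below shows both on plain Python lists; the function names are hypothetical, and the padding rule (border windows are simply clipped) is an assumption chosen to mimic 'SAME' padding for max pooling.

```python
def max_pool_same(fmap, k):
    """Stride-1 max pooling on a 2-D list; windows are clipped at the border."""
    height, width = len(fmap), len(fmap[0])
    r = k // 2
    out = []
    for i in range(height):
        row = []
        for j in range(width):
            window = [fmap[y][x]
                      for y in range(max(0, i - r), min(height, i + r + 1))
                      for x in range(max(0, j - r), min(width, j + r + 1))]
            row.append(max(window))
        out.append(row)
    return out


def spp_channels(c_out, n_pools=3):
    """Channel counts inside SPP: (after first conv, after concat, after last conv)."""
    hidden = c_out // 2
    return hidden, hidden * (n_pools + 1), c_out


fmap = [[1, 2, 3], [4, 5, 6], [7, 8, 9]]
pooled = max_pool_same(fmap, 3)
print(pooled)              # [[5, 6, 6], [8, 9, 9], [8, 9, 9]]
print(spp_channels(1024))  # (512, 2048, 1024)
```

The pooled map has the same 3 × 3 shape as the input, so the three pooled copies can be concatenated with the un-pooled tensor along the channel axis.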

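2.4.5. Upsample module

Before prediction, the network upsamples the feature maps twice, each time doubling the height and width, which yields feature maps at three different scales. This helps the network detect both relatively large and relatively small objects.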

import math

import numpy as np
import tensorflow as tf
from tensorflow import keras
from tensorflow.keras.layers import Layer, Conv2D, BatchNormalization, MaxPool2D

class Conv2d(keras.layers.Layer):
    # Plain convolution; w, if given, is expected to be a PyTorch-style layer whose weights are copied in
    def __init__(self, c1, c2, k, s=1, g=1, bias=True, w=None):
        super(Conv2d, self).__init__()
        assert g == 1, "TF v2.2 Conv2D does not support 'groups' argument"
        if w is None:
            kernel_init, bias_init = 'glorot_uniform', 'zeros'
        else:
            # PyTorch kernels are (out, in, h, w); Keras expects (h, w, in, out)
            kernel_init = keras.initializers.Constant(w.weight.permute(2, 3, 1, 0).numpy())
            bias_init = keras.initializers.Constant(w.bias.numpy()) if bias else 'zeros'
        self.conv = keras.layers.Conv2D(
            c2, k, s, 'VALID', use_bias=bias,
            kernel_initializer=kernel_init,
            bias_initializer=bias_init)

    def call(self, inputs):
        return self.conv(inputs)


class LeakyRelu(object):
    def __call__(self, x):
        return tf.nn.leaky_relu(x)


class Conv(Layer):
    # CBL block: Conv2D + BatchNormalization + LeakyReLU
    def __init__(self, filters, kernel_size, strides, padding='SAME', groups=1):
        super(Conv, self).__init__()
        self.conv = Conv2D(filters, kernel_size, strides, padding, groups=groups, use_bias=False,
                           kernel_initializer=tf.random_normal_initializer(stddev=0.01),
                           kernel_regularizer=tf.keras.regularizers.L2(5e-4))
        self.bn = BatchNormalization()
        self.activation = LeakyRelu()

    def call(self, x):
        return self.activation(self.bn(self.conv(x)))

class Focus(Layer):
    # Slice the input into four sub-images, stack them on the channel axis, then convolve
    def __init__(self, filters, kernel_size, strides=1, padding='SAME'):
        super(Focus, self).__init__()
        self.conv = Conv(filters, kernel_size, strides, padding)

    def call(self, x):
        return self.conv(tf.concat([x[..., ::2, ::2, :],
                                    x[..., 1::2, ::2, :],
                                    x[..., ::2, 1::2, :],
                                    x[..., 1::2, 1::2, :]],
                                   axis=-1))


class Bottleneck(Layer):
    # 1x1 convolution, 3x3 convolution, and an optional shortcut from the input
    def __init__(self, units, shortcut=True, expansion=0.5):
        super(Bottleneck, self).__init__()
        self.conv1 = Conv(int(units * expansion), 1, 1)
        self.conv2 = Conv(units, 3, 1)
        self.shortcut = shortcut

    def call(self, x):
        if self.shortcut:
            return x + self.conv2(self.conv1(x))
        return self.conv2(self.conv1(x))

class BottleneckCSP(Layer):
    def __init__(self, units, n_layer=1, shortcut=True, expansion=0.5):
        super(BottleneckCSP, self).__init__()
        units_e = int(units * expansion)
        self.conv1 = Conv(units_e, 1, 1)
        self.conv2 = Conv2D(units_e, 1, 1, use_bias=False, kernel_initializer=tf.random_normal_initializer(stddev=0.01))
        self.conv3 = Conv2D(units_e, 1, 1, use_bias=False, kernel_initializer=tf.random_normal_initializer(stddev=0.01))
        self.conv4 = Conv(units, 1, 1)
        self.bn = BatchNormalization(momentum=0.03)
        self.activation = LeakyRelu()
        self.modules = tf.keras.Sequential([Bottleneck(units_e, shortcut, expansion=1.0) for _ in range(n_layer)])

    def call(self, x):
        # Branch 1 runs through the stacked bottlenecks, branch 2 is a plain 1x1 convolution
        y1 = self.conv3(self.modules(self.conv1(x)))
        y2 = self.conv2(x)
        return self.conv4(self.activation(self.bn(tf.concat([y1, y2], axis=-1))))


class SPP(Layer):
    def __init__(self, units, kernels=(5, 9, 13)):
        super(SPP, self).__init__()
        units_e = units // 2  # hidden channels: half of the output channels
        self.conv1 = Conv(units_e, 1, 1)
        self.conv2 = Conv(units, 1, 1)
        self.modules = [MaxPool2D(pool_size=x, strides=1, padding='SAME') for x in kernels]

    def call(self, x):
        x = self.conv1(x)
        return self.conv2(tf.concat([x] + [module(x) for module in self.modules], axis=-1))

class SPPCSP(Layer):
    # Cross Stage Partial Networks
    def __init__(self, units, n=1, shortcut=False, expansion=0.5, kernels=(5, 9, 13)):
        super(SPPCSP, self).__init__()
        units_e = int(2 * units * expansion)
        self.conv1 = Conv(units_e, 1, 1)
        self.conv2 = Conv2D(units_e, 1, 1, use_bias=False, kernel_initializer=tf.random_normal_initializer(stddev=0.01))
        self.conv3 = Conv(units_e, 3, 1)
        self.conv4 = Conv(units_e, 1, 1)
        self.modules = [MaxPool2D(pool_size=x, strides=1, padding='same') for x in kernels]
        self.conv5 = Conv(units_e, 1, 1)
        self.conv6 = Conv(units_e, 3, 1)
        self.bn = BatchNormalization()
        self.act = LeakyRelu()
        self.conv7 = Conv(units, 1, 1)

    def call(self, x):
        x1 = self.conv4(self.conv3(self.conv1(x)))
        y1 = self.conv6(self.conv5(tf.concat([x1] + [module(x1) for module in self.modules], axis=-1)))
        y2 = self.conv2(x)
        return self.conv7(self.act(self.bn(tf.concat([y1, y2], axis=-1))))


class Upsample(Layer):
    def __init__(self, i=None, ratio=2, method='bilinear'):
        super(Upsample, self).__init__()
        self.ratio = ratio
        self.method = method

    def call(self, x):
        # Resize height and width by the given ratio
        return tf.image.resize(x, (tf.shape(x)[1] * self.ratio, tf.shape(x)[2] * self.ratio), method=self.method)

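2.5●Network and loss function

Reading the backbone and head definitions from the .yaml file, the modules defined above are stacked in sequence to complete the network.

In the loss computation, the classification and confidence terms are both computed with binary cross-entropy, and their weights are then adjusted in the manner of Focal Loss with its gamma and alpha parameters. The bounding-box term is computed from GIoU.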

from pathlib import Path


# Note: C3 and Detect are referenced below but are not shown in this listing.
def parse_model(yaml_dict):  # model_dict, input_channels(3)
    anchors, nc = yaml_dict['anchors'], yaml_dict['nc']
    depth_multiple, width_multiple = yaml_dict['depth_multiple'], yaml_dict['width_multiple']
    num_anchors = (len(anchors[0]) // 2) if isinstance(anchors, list) else anchors
    output_dims = num_anchors * (nc + 5)

    layers = []
    # from, number, module, args
    for i, (f, number, module, args) in enumerate(yaml_dict['backbone'] + yaml_dict['head']):
        # every component is a class: instantiate it here, call it in Model.forward
        module = eval(module) if isinstance(module, str) else module
        for j, arg in enumerate(args):
            try:
                args[j] = eval(arg) if isinstance(arg, str) else arg
            except NameError:
                pass  # plain strings such as 'nearest' stay as they are

        # depth_multiple scales the repeat count, width_multiple scales the channels
        number = max(round(number * depth_multiple), 1) if number > 1 else number
        if module in [Conv2D, Conv, Bottleneck, SPP, Focus, BottleneckCSP, C3]:
            c2 = args[0]
            c2 = math.ceil(c2 * width_multiple / 8) * 8 if c2 != output_dims else c2
            args = [c2, *args[1:]]
        if module in [BottleneckCSP, C3, SPPCSP]:
            args.insert(1, number)
            number = 1

        modules = tf.keras.Sequential([module(*args) for _ in range(number)]) if number > 1 else module(*args)
        modules.i, modules.f = i, f
        layers.append(modules)
    return layers


class Model(object):  # model, channels, classes
    def __init__(self, cfg='yolov5s.yaml', ch=3, nc=20, model=None, imgsz=(640, 640)):
        super(Model, self).__init__()
        if isinstance(cfg, dict):
            self.yaml = cfg  # model dict
        else:  # is *.yaml
            import yaml
            self.yaml_file = Path(cfg).name
            with open(cfg) as f:
                self.yaml = yaml.load(f, Loader=yaml.FullLoader)  # model dict
        self.imgsz = imgsz

        # Define model
        if nc and nc != self.yaml['nc']:
            print('Overriding %s nc=%g with nc=%g' % (cfg, self.yaml['nc'], nc))
            self.yaml['nc'] = nc  # override yaml value
        self.model = parse_model(self.yaml)
        module = self.model[-1]
        if isinstance(module, Detect):
            # convert the anchors to grid coordinates, 3 * 3 * 2
            module.anchors /= tf.reshape(module.stride, [-1, 1, 1])

    def __call__(self, img_size, name='yolo'):
        x = tf.keras.Input([img_size, img_size, 3])
        output = self.forward(x)
        return tf.keras.Model(inputs=x, outputs=output, name=name)

    def forward(self, inputs, tf_nms=False, agnostic_nms=False, topk_per_class=100, topk_all=100, iou_thres=0.45, conf_thres=0.25):
        y = []  # outputs of every layer
        x = inputs
        for i, m in enumerate(self.model):
            if m.f != -1:  # input does not come from the previous layer
                if isinstance(m.f, int):
                    x = y[m.f]
                else:
                    x = [x if j == -1 else y[j] for j in m.f]
            x = m(x)  # run
            y.append(x)
        return x

class Loss(object):
    def __init__(self, anchors, iou_thres, num_classes=20, img_size=640, label_smoothing=0):
        self.anchors = anchors
        self.strides = [8, 16, 32]
        self.iou_thres = iou_thres
        self.num_classes = num_classes
        self.img_size = img_size
        self.bce_conf = tf.keras.losses.BinaryCrossentropy(reduction=tf.keras.losses.Reduction.NONE)
        self.bce_class = tf.keras.losses.BinaryCrossentropy(reduction=tf.keras.losses.Reduction.NONE,
                                                            label_smoothing=label_smoothing)

    def __call__(self, y_true, y_pred):
        iou_loss_all = obj_loss_all = class_loss_all = tf.zeros(1)
        balance = [4.0, 1.0, 0.4] if len(y_pred) == 3 else [4.0, 1.0, 0.25, 0.06]

        for i, (pred, true) in enumerate(zip(y_pred, y_true)):
            true_box, true_obj, true_class = tf.split(true, (4, 1, -1), axis=-1)
            pred_box, pred_obj, pred_class = tf.split(pred, (4, 1, -1), axis=-1)
            if tf.shape(true_class)[-1] == 1 and self.num_classes > 1:
                true_class = tf.squeeze(tf.one_hot(tf.cast(true_class, tf.dtypes.int32), depth=self.num_classes, axis=-1), -2)

            box_scale = 2 - 1.0 * true_box[..., 2] * true_box[..., 3] / (self.img_size ** 2)
            obj_mask = tf.squeeze(true_obj, -1)  # obj or noobj
            background_mask = 1.0 - obj_mask
            conf_focal = tf.squeeze(tf.math.pow(true_obj - pred_obj, 2), -1)

            # giou loss
            iou = bbox_iou(pred_box, true_box, xyxy=False, giou=True)
            iou_loss = (1 - iou) * obj_mask * box_scale  # batch_size * grid * grid * 3

            # confidence loss
            conf_loss = self.bce_conf(true_obj, pred_obj)
            conf_loss = conf_focal * (obj_mask * conf_loss + background_mask * conf_loss)

            # class loss
            class_loss = obj_mask * self.bce_class(true_class, pred_class)

            iou_loss = tf.reduce_mean(tf.reduce_sum(iou_loss, axis=[1, 2, 3]))
            conf_loss = tf.reduce_mean(tf.reduce_sum(conf_loss, axis=[1, 2, 3]))
            class_loss = tf.reduce_mean(tf.reduce_sum(class_loss, axis=[1, 2, 3]))

            iou_loss_all += iou_loss * balance[i]
            obj_loss_all += conf_loss * balance[i]
            class_loss_all += class_loss * self.num_classes * balance[i]  # to balance the 3 loss

        return (iou_loss_all, obj_loss_all, class_loss_all)

def bbox_iou(bbox1, bbox2, xyxy=False, giou=False, diou=False, ciou=False, epsilon=1e-9):
    assert bbox1.shape == bbox2.shape
    # giou loss: https://arxiv.org/abs/1902.09630
    if xyxy:
        b1x1, b1y1, b1x2, b1y2 = bbox1[..., 0], bbox1[..., 1], bbox1[..., 2], bbox1[..., 3]
        b2x1, b2y1, b2x2, b2y2 = bbox2[..., 0], bbox2[..., 1], bbox2[..., 2], bbox2[..., 3]
    else:  # xywh -> xyxy
        b1x1, b1x2 = bbox1[..., 0] - bbox1[..., 2] / 2, bbox1[..., 0] + bbox1[..., 2] / 2
        b1y1, b1y2 = bbox1[..., 1] - bbox1[..., 3] / 2, bbox1[..., 1] + bbox1[..., 3] / 2
        b2x1, b2x2 = bbox2[..., 0] - bbox2[..., 2] / 2, bbox2[..., 0] + bbox2[..., 2] / 2
        b2y1, b2y2 = bbox2[..., 1] - bbox2[..., 3] / 2, bbox2[..., 1] + bbox2[..., 3] / 2

    # intersection area
    inter = tf.maximum(tf.minimum(b1x2, b2x2) - tf.maximum(b1x1, b2x1), 0) * \
            tf.maximum(tf.minimum(b1y2, b2y2) - tf.maximum(b1y1, b2y1), 0)

    # union area
    w1, h1 = b1x2 - b1x1 + epsilon, b1y2 - b1y1 + epsilon
    w2, h2 = b2x2 - b2x1 + epsilon, b2y2 - b2y1 + epsilon
    union = w1 * h1 + w2 * h2 - inter + epsilon

    # Giou
    iou = inter / union
    cw = tf.maximum(b1x2, b2x2) - tf.minimum(b1x1, b2x1)
    ch = tf.maximum(b1y2, b2y2) - tf.minimum(b1y1, b2y1)
    enclose_area = cw * ch + epsilon
    giou = iou - 1.0 * (enclose_area - union) / enclose_area
    return tf.clip_by_value(giou, -1, 1)
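
As a quick sanity check of the GIoU formula, the sketch below evaluates it for single boxes in plain Python using corner coordinates (x1, y1, x2, y2). It follows the same steps as the tensor version (intersection, union, smallest enclosing box) but is an independent illustration, not the training code; the function name is made up.

```python
def giou_xyxy(box_a, box_b, eps=1e-9):
    """GIoU of two boxes given as (x1, y1, x2, y2); the result lies in [-1, 1]."""
    ax1, ay1, ax2, ay2 = box_a
    bx1, by1, bx2, by2 = box_b
    # Intersection is zero when the boxes do not overlap
    inter = (max(min(ax2, bx2) - max(ax1, bx1), 0.0) *
             max(min(ay2, by2) - max(ay1, by1), 0.0))
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter + eps
    iou = inter / union
    # Smallest axis-aligned box that encloses both inputs
    enclose = (max(ax2, bx2) - min(ax1, bx1)) * (max(ay2, by2) - min(ay1, by1)) + eps
    return iou - (enclose - union) / enclose


print(giou_xyxy((0, 0, 2, 2), (0, 0, 2, 2)))  # identical boxes, close to 1
print(giou_xyxy((0, 0, 2, 2), (1, 0, 3, 2)))  # half overlap, close to 1/3
print(giou_xyxy((0, 0, 1, 1), (2, 0, 3, 1)))  # disjoint boxes, close to -1/3
```

Unlike plain IoU, which is 0 for every pair of disjoint boxes, GIoU keeps decreasing as the boxes move apart, so the box loss still provides a gradient when a prediction does not overlap its target.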

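2.6●Feeding in training data and setting training parameters

Once the network is built, the training data is read from the TFRecord file generated above. You need to set the number of classes, feed the data to the network in batches according to the batch size, and choose the number of epochs, the optimizer, the learning rate, and so on. The trained model is saved as a .pb or .pbtxt file.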

from absl import app, flags, logging
from absl.flags import FLAGS
import tensorflow as tf
import numpy as np
import cv2
import time

from models.yolo import *
from data.dataset import *

flags.DEFINE_string('dataset', './data/voc2012_train.tfrecord', 'path to dataset')
flags.DEFINE_string('val_dataset', './data/voc2012_val.tfrecord', 'path to validation dataset')
flags.DEFINE_string('yaml_dir', './models/yolov5s.yaml', 'path to yaml file')
flags.DEFINE_string('classes', './data/voc2012.names', 'path to classes file')
flags.DEFINE_integer('epochs', 20, 'number of epochs')
flags.DEFINE_integer('batch_size', 8, 'batch size')
flags.DEFINE_integer('img_size', 640, 'image size')
flags.DEFINE_float('learning_rate', 1e-3, 'learning rate')
flags.DEFINE_integer('num_classes', 20, 'number of classes in the model')
flags.DEFINE_boolean('multi_gpu', False, 'Use if wishing to train with more than 1 GPU.')
flags.DEFINE_float('label_smoothing', 0.02, 'label smoothing')
flags.DEFINE_integer('yolo_max_boxes', 100, 'yolo max boxes')


def main(_argv):
    train_dataset = load_tfrecord_dataset(FLAGS.batch_size,
                                          FLAGS.dataset, FLAGS.classes, FLAGS.img_size)
    Yolo = Model(cfg=FLAGS.yaml_dir)
    anchors = Yolo.model[-1].anchors
    stride = Yolo.model[-1].stride
    num_classes = FLAGS.num_classes

    anchor_label = AnchorLabeler(anchors,
                                 grids=FLAGS.img_size / stride,
                                 img_size=FLAGS.img_size,
                                 assign_method='wh',
                                 extend_offset='True')

    def transform(image, label):
        # Encode the ground-truth boxes into per-anchor training targets
        label_encoder = anchor_label.encode(label)
        return image, label_encoder

    train_dataset = train_dataset.map(transform, num_parallel_calls=tf.data.experimental.AUTOTUNE)
    train_dataset = train_dataset.batch(FLAGS.batch_size).prefetch(tf.data.experimental.AUTOTUNE)
    print(train_dataset)

    Yolo_loss = Loss(anchors, iou_thres=0.3,
                     num_classes=num_classes,
                     label_smoothing=FLAGS.label_smoothing,
                     img_size=FLAGS.img_size)
    optimizer = tf.keras.optimizers.Adam(learning_rate=FLAGS.learning_rate)
    Yolo = Yolo(FLAGS.img_size)  # build the Keras model

    for epoch in range(0, FLAGS.epochs):
        for step, (image, target) in enumerate(train_dataset):
            with tf.GradientTape() as tape:
                output = Yolo(image)
                iou_loss, conf_loss, prob_loss = Yolo_loss(target, output)
                pred_loss = [iou_loss, conf_loss, prob_loss]
                total_loss = tf.reduce_sum(pred_loss)
            grads = tape.gradient(total_loss, Yolo.trainable_variables)
            optimizer.apply_gradients(zip(grads, Yolo.trainable_variables))
            logging.info("{}_train_{}, {}, {}".format(epoch, step, total_loss.numpy(),
                                                      list(map(lambda x: np.sum(x.numpy()), pred_loss))))

    # Export the trained network in SavedModel format
    tf.saved_model.save(Yolo, '/data/Yolov5/weights/')


if __name__ == '__main__':
    app.run(main)

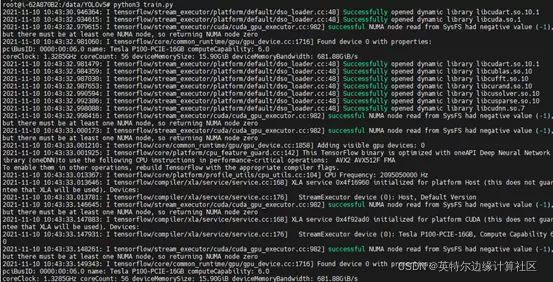

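Run the train.py script to start training. Make sure CUDA and cuDNN are installed correctly so that TensorFlow can use the GPU for training; the result is shown in the screenshot.

After training, the saved model file saved_model.pb and the variables folder are stored under the weights path of the project.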

3.Deployment

3.1●Installing the OpenVINO™ toolkit

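Go to the official website:

https://www.intel.cn/content/www/cn/zh/developer/tools/openvino-toolkit/overview.html

Select the operating system, version, and other options for your deployment target, then download and install the toolkit. Everything in this article was done with the 2021.4.1 LTS release on Windows.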

3.2●Converting to the OpenVINO™ toolkit IR format

$python mo_tf.py --saved_model_dir <path to the folder containing the .pb file> --input_shape [1,640,640,3] --output_dir <output folder path> --data_type FP32

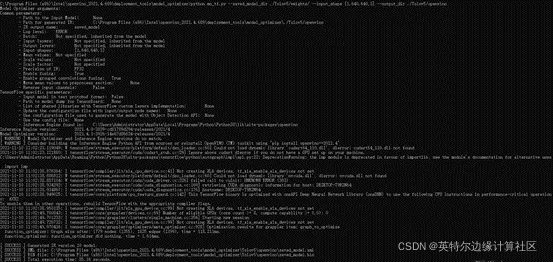

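After a successful run, the output folder contains a .xml file and a .bin file, which is how the OpenVINO™ toolkit stores a model; the deployment that follows is based on these two files. The result is shown in the screenshot.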

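3.3●Inference deployment

This example runs inference in C++. Deployment covers engine initialization, data preparation, inference, and result processing. Engine initialization reads the converted model files and obtains the input and output information. Data preparation resizes the input image to 640×640 and converts the channel order to RGB. The input is then copied into a blob and inference is run. The network returns three detection heads, corresponding to grid sizes of 80, 40, and 20, and each is parsed in turn. Finally, NMS removes redundant candidate boxes.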

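// Include headers
#include <inference_engine.hpp>
#include <opencv2/opencv.hpp>

using namespace InferenceEngine;
using namespace std;
using namespace cv;

// Helper functions, defined after main()
bool parse_yolov5(const Blob::Ptr &blob, int net_grid, float cof_threshold,
                  vector<Rect> &o_rect, vector<float> &o_rect_cof);
double sigmoid(double x);
vector<int> get_anchors(int net_grid);

int main() {
    // Initialize the inference engine
    Core ie;
    // Read the converted .xml and .bin files
    CNNNetwork network = ie.ReadNetwork("./openvino/yolov5s.xml", "./openvino/yolov5s.bin");

    // Get and configure the input and output formats
    // Input format information from the model
    InputsDataMap inputsInfo = network.getInputsInfo();
    InputInfo::Ptr& input = inputsInfo.begin()->second;
    string inputs_name = inputsInfo.begin()->first;
    input->setPrecision(Precision::FP32);  // the blob is filled with floats below
    ICNNNetwork::InputShapes inputShapes = network.getInputShapes();
    network.reshape(inputShapes);

    // Output format of the inference results
    OutputsDataMap outputsInfo = network.getOutputsInfo();
    vector<string> OutputsBlobs_names;
    for (auto& item_out : outputsInfo) {
        OutputsBlobs_names.push_back(item_out.first);
        item_out.second->setPrecision(Precision::FP32);
    }

    // Load the executable network; "CPU" is the inference device, "GPU" is also possible
    ExecutableNetwork executable_network = ie.LoadNetwork(network, "CPU");
    // Create the inference request
    InferRequest infer_request = executable_network.CreateInferRequest();

    // Load the input image
    Mat src = cv::imread("./img/test.jpg");
    Blob::Ptr lrInputBlob = infer_request.GetBlob(inputs_name);
    size_t h = lrInputBlob->getTensorDesc().getDims()[2];
    size_t w = lrInputBlob->getTensorDesc().getDims()[3];
    size_t image_size = h * w;
    Mat inframe = src.clone();
    cv::resize(src, src, Size(640, 640));
    cv::cvtColor(src, src, COLOR_BGR2RGB);

    InferenceEngine::LockedMemory<void> blobMapped = InferenceEngine::as<InferenceEngine::MemoryBlob>(lrInputBlob)->wmap();
    float* blob_data = blobMapped.as<float*>();
    // Copy the pixels in NCHW order, scaled to [0, 1]
    for (size_t row = 0; row < h; row++) {
        for (size_t col = 0; col < w; col++) {
            for (size_t ch = 0; ch < 3; ch++) {
                blob_data[image_size*ch + row * w + col] = float(src.at<Vec3b>(row, col)[ch]) / 255.0f;
            }
        }
    }

    // Run inference
    infer_request.Infer();

    // Confidence threshold and NMS threshold
    float _cof_threshold = 0.1;
    float _nms_area_threshold = 0.5;

    // Collect the results of each output layer
    vector<Rect> origin_rect;
    vector<float> origin_rect_cof;
    int s[3] = { 80,40,20 };  // assumes the outputs are ordered by grid size 80, 40, 20
    vector<Blob::Ptr> blobs;
    int i = 0;
    for (auto OutputsBlob_name : OutputsBlobs_names) {
        Blob::Ptr OutputBlob = infer_request.GetBlob(OutputsBlob_name);
        parse_yolov5(OutputBlob, s[i], _cof_threshold, origin_rect, origin_rect_cof);
        ++i;
    }

    // Post-processing: NMS fills final_id with the indices of the boxes to keep
    vector<int> final_id;
    cv::dnn::NMSBoxes(origin_rect, origin_rect_cof, _cof_threshold, _nms_area_threshold, final_id);

    // Draw the final results; boxes are in 640x640 coordinates, so scale them to the original image
    float sx = inframe.cols / 640.0f, sy = inframe.rows / 640.0f;
    for (size_t i = 0; i < final_id.size(); ++i)
    {
        int idx = final_id[i];
        Rect box = origin_rect[idx];
        Rect scaled(cvRound(box.x * sx), cvRound(box.y * sy), cvRound(box.width * sx), cvRound(box.height * sy));
        cv::rectangle(inframe, scaled, Scalar(140, 199, 0), 1, 8, 0);
    }
    cv::imwrite("./img/output.jpg", inframe);
}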
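
The non-maximum suppression step named in the pipeline description can be sketched in a few lines of Python. This is a generic greedy NMS over (x, y, width, height) boxes written for illustration, not the routine the C++ program uses; the function names and the sample boxes are made up, and the two thresholds play the same role as the confidence and NMS thresholds in the listing.

```python
def iou_xywh(a, b):
    """IoU of two boxes given as (left, top, width, height)."""
    ax2, ay2 = a[0] + a[2], a[1] + a[3]
    bx2, by2 = b[0] + b[2], b[1] + b[3]
    inter_w = max(min(ax2, bx2) - max(a[0], b[0]), 0)
    inter_h = max(min(ay2, by2) - max(a[1], b[1]), 0)
    inter = inter_w * inter_h
    union = a[2] * a[3] + b[2] * b[3] - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, score_threshold, iou_threshold):
    """Greedy NMS: return the indices of the boxes to keep, best score first."""
    order = sorted((i for i, s in enumerate(scores) if s >= score_threshold),
                   key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        # Keep a box only if it does not overlap too much with a better one
        if all(iou_xywh(boxes[i], boxes[k]) <= iou_threshold for k in keep):
            keep.append(i)
    return keep


boxes = [(10, 10, 100, 100), (12, 12, 100, 100), (200, 200, 50, 50), (0, 0, 10, 10)]
scores = [0.9, 0.8, 0.7, 0.05]
print(nms(boxes, scores, score_threshold=0.1, iou_threshold=0.5))  # [0, 2]
```

The second box is dropped because it overlaps the first by more than the threshold, and the last box is dropped because its score is below the confidence threshold.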

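Note that the raw network output has to be post-processed: center coordinates are converted to corner coordinates, and candidate boxes with low confidence are discarded.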

bool parse_yolov5(const Blob::Ptr &blob, int net_grid, float cof_threshold,
                  vector<Rect> &o_rect, vector<float> &o_rect_cof) {
    vector<int> anchors = get_anchors(net_grid);
    LockedMemory<const void> blobMapped = as<MemoryBlob>(blob)->rmap();
    const float *output_blob = blobMapped.as<float *>();
    // n classes give n + 5 values per box
    int item_size = 25;
    int anchor_n = 3;
    for (int n = 0; n < anchor_n; ++n)
        for (int i = 0; i < net_grid; ++i)
            for (int j = 0; j < net_grid; ++j)
            {
                // Offset of this anchor and grid cell in the flat output
                int base = n*net_grid*net_grid*item_size + i*net_grid*item_size + j*item_size;
                double box_prob = output_blob[base + 4];
                box_prob = sigmoid(box_prob);
                // If the box confidence is too low, the overall confidence is too low as well
                if (box_prob < cof_threshold)
                    continue;

                // Raw center coordinates and size
                double x = output_blob[base + 0];
                double y = output_blob[base + 1];
                double w = output_blob[base + 2];
                double h = output_blob[base + 3];

                // Find the most probable class
                double max_prob = 0;
                int idx = 0;
                for (int t = 5; t < item_size; ++t) {
                    double tp = output_blob[base + t];
                    tp = sigmoid(tp);
                    if (tp > max_prob) {
                        max_prob = tp;
                        idx = t - 5;  // class index
                    }
                }
                float cof = box_prob * max_prob;
                // Discard boxes whose confidence is below the threshold
                if (cof < cof_threshold)
                    continue;

                // Decode to pixels, then convert the center point to the top-left corner
                x = (sigmoid(x)*2 - 0.5 + j)*640.0f/net_grid;
                y = (sigmoid(y)*2 - 0.5 + i)*640.0f/net_grid;
                w = pow(sigmoid(w)*2, 2) * anchors[n*2];
                h = pow(sigmoid(h)*2, 2) * anchors[n*2 + 1];
                double r_x = x - w/2;
                double r_y = y - h/2;
                Rect rect = Rect(round(r_x), round(r_y), round(w), round(h));
                o_rect.push_back(rect);
                o_rect_cof.push_back(cof);
            }
    if (o_rect.size() == 0) return false;
    else return true;
}

double sigmoid(double x) {
    return (1 / (1 + exp(-x)));
}

vector<int> get_anchors(int net_grid) {
    vector<int> anchors(6);
    int anchor_80[6] = {10,13, 16,30, 33,23};
    int anchor_40[6] = {30,61, 62,45, 59,119};
    int anchor_20[6] = {116,90, 156,198, 373,326};
    if (net_grid == 80) { anchors.assign(anchor_80, anchor_80 + 6); }
    else if (net_grid == 40) { anchors.assign(anchor_40, anchor_40 + 6); }
    else if (net_grid == 20) { anchors.assign(anchor_20, anchor_20 + 6); }
    return anchors;
}
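
The decoding arithmetic is easier to follow on a single prediction. The Python sketch below applies the same formulas as the C++ function to one raw (x, y, w, h) tuple: the center is offset by the cell index and scaled by the stride (640 divided by the grid size), and the width and height are scaled by the anchor. The function name and the sample numbers are invented for this example.

```python
import math


def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))


def decode_box(raw, row, col, net_grid, anchor_wh, img_size=640):
    """Turn one raw (x, y, w, h) prediction into (left, top, width, height) in pixels."""
    stride = img_size / net_grid
    cx = (sigmoid(raw[0]) * 2 - 0.5 + col) * stride
    cy = (sigmoid(raw[1]) * 2 - 0.5 + row) * stride
    w = (sigmoid(raw[2]) * 2) ** 2 * anchor_wh[0]
    h = (sigmoid(raw[3]) * 2) ** 2 * anchor_wh[1]
    # Convert the center point to the top-left corner
    return cx - w / 2, cy - h / 2, w, h


# Raw zeros give sigmoid = 0.5, so the box sits at the cell center with the anchor's size
box = decode_box((0.0, 0.0, 0.0, 0.0), row=10, col=20, net_grid=80, anchor_wh=(10, 13))
print(box)  # (159.0, 77.5, 10.0, 13.0)
```

Since the sigmoid output lies between 0 and 1, the decoded center can move at most one cell away from its own cell in each direction, and the width and height can grow to at most four times the anchor size.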

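Building and running the main.cpp project above draws the detected bounding boxes on the image and saves the result as output.jpg in the img folder.

4.Performance testing

Intel® DevCloud for the Edge lets you actively prototype and experiment with computer-vision AI workloads on Intel hardware. You can test model performance with the OpenVINO™ toolkit on combinations of CPUs, GPUs, VPUs, and FPGAs. Intel® DevCloud runs code in Jupyter* Notebooks directly in the web browser and shows the visualized results immediately. The converted .xml and .bin files can be tested on different edge nodes to analyze performance; for the procedure, see https://bizwebcast.intel.cn/dev/articleDetails.html?id=95. The test results are listed in Table 4-1.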