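365-Day Deep Learning Training Camp - Week 10: Data Augmentation

- This post is a study log for the 365-Day Deep Learning Training Camp (365天深度学习训练营)

- Reference article: 365-Day Deep Learning Training Camp - Week 10: Data Augmentation (readable by camp members only)

- Original author: K同学啊 | tutoring and custom project work available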

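Week 10: Data Augmentation

Difficulty: Solid foundations ⭐⭐

Language: Python 3, TensorFlow 2

Requirements:

Learn to use data augmentation in code to improve accuracy (acc)

Explore and document additional data augmentation techniques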

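In this tutorial you will learn how to apply data augmentation and how it helps reach high recognition accuracy with only a small amount of data.

I will show two ways to apply data augmentation, and then how to write a custom augmentation function and plug it into our code. The two ways are:

● Embed the data augmentation module in the model

● Apply data augmentation in the Dataset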

My environment:

● Language: Python 3.6.5

● Editor: Jupyter Notebook

● Deep learning framework: TensorFlow 2.4.1

● Dataset sources: 和鲸 (Heywhale), 百度网盘 (Baidu Netdisk)

Preliminary setup

Set up the GPU

import warnings

import matplotlib.pyplot as plt
import numpy as np
import tensorflow as tf
from tensorflow.keras import layers

# Hide warnings
warnings.filterwarnings('ignore')

gpus = tf.config.list_physical_devices("GPU")

if gpus:
    tf.config.experimental.set_memory_growth(gpus[0], True)  # allocate GPU memory on demand
    tf.config.set_visible_devices([gpus[0]], "GPU")

# Print the GPU list to confirm whether a GPU is available
print(gpus)

[]

Load the data

data_dir = "./365-8-data/"
img_height = 224
img_width = 224
batch_size = 32

train_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.3,
    subset="training",
    seed=12,
    image_size=(img_height, img_width),
    batch_size=batch_size)

Found 3400 files belonging to 2 classes.

Using 2380 files for training.

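val_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    validation_split=0.3,
    # Must be "validation" here. The run logged below used "training",
    # so its validation and test batches were drawn from the training images.
    subset="validation",
    seed=12,
    image_size=(img_height, img_width),
    batch_size=batch_size)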

Found 3400 files belonging to 2 classes.

Using 2380 files for training.

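Since the original dataset has no test set, we need to create one. Use tf.data.experimental.cardinality to find out how many batches the validation set contains, then move 20% of them into a test set.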

val_batches = tf.data.experimental.cardinality(val_ds)

test_ds = val_ds.take(val_batches // 5)

val_ds = val_ds.skip(val_batches // 5)

print('Number of validation batches: %d' % tf.data.experimental.cardinality(val_ds))

print('Number of test batches: %d' % tf.data.experimental.cardinality(test_ds))

Number of validation batches: 60

Number of test batches: 15
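The batch counts printed above can be checked by hand. The following is a plain-Python sketch (the variable names are illustrative and not part of the notebook), assuming the dataset being split holds 2380 images, which is the count the log reports, and that the final partial batch is kept:

```python
import math

num_images = 2380   # file count reported in the log above
batch_size = 32

# The last batch holds fewer than 32 images but still counts as a batch.
total_batches = math.ceil(num_images / batch_size)

# take(n) keeps the first n batches; skip(n) keeps everything after them.
test_batches = total_batches // 5
val_batches = total_batches - test_batches

print(total_batches, val_batches, test_batches)  # 75 60 15
```

Because the split is done on batches rather than on images, the test set is always a whole number of batches.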

class_names = train_ds.class_names

print(class_names)

['cat', 'dog']

AUTOTUNE = tf.data.AUTOTUNE

def preprocess_image(image, label):
    # Scale pixel values from [0, 255] to [0, 1]
    return (image / 255.0, label)

# Normalize all three datasets
train_ds = train_ds.map(preprocess_image, num_parallel_calls=AUTOTUNE)
val_ds = val_ds.map(preprocess_image, num_parallel_calls=AUTOTUNE)
test_ds = test_ds.map(preprocess_image, num_parallel_calls=AUTOTUNE)

train_ds = train_ds.cache().prefetch(buffer_size=AUTOTUNE)
val_ds = val_ds.cache().prefetch(buffer_size=AUTOTUNE)

plt.figure(figsize=(15, 10))  # figure is 15 wide and 10 high

for images, labels in train_ds.take(1):
    for i in range(8):
        ax = plt.subplot(5, 8, i + 1)
        plt.imshow(images[i])
        plt.title(class_names[labels[i]])
        plt.axis("off")

Data augmentation

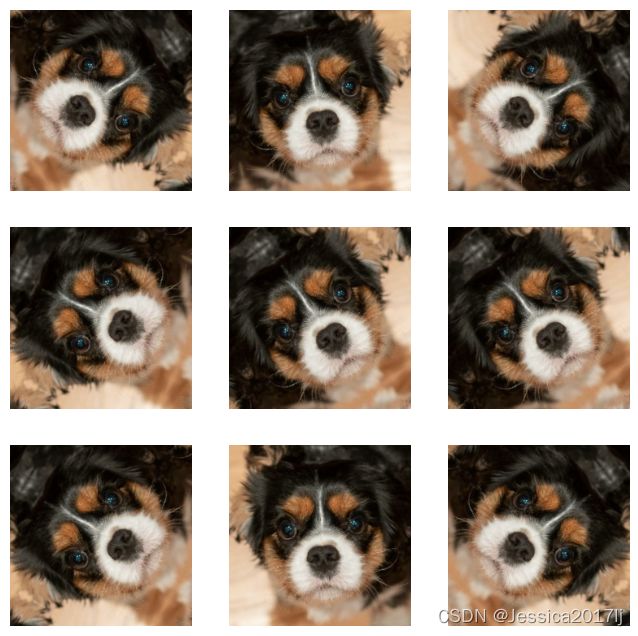

We can use tf.keras.layers.experimental.preprocessing.RandomFlip and tf.keras.layers.experimental.preprocessing.RandomRotation for data augmentation:

tf.keras.layers.experimental.preprocessing.RandomFlip: randomly flips each image horizontally and vertically.

tf.keras.layers.experimental.preprocessing.RandomRotation: randomly rotates each image.
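To make the flip directions concrete, here is a small NumPy sketch on a toy 2x3 array. It only illustrates what a horizontal and a vertical flip do to the pixel grid; it is not how the Keras layer is implemented, and the array values are made up for the example.

```python
import numpy as np

# A tiny 2x3 "image" so the effect of each flip is easy to read.
image = np.array([[1, 2, 3],
                  [4, 5, 6]])

horizontal = np.fliplr(image)        # mirror left <-> right
vertical = np.flipud(image)          # mirror top <-> bottom
both = np.flipud(np.fliplr(image))   # apply both flips

print(horizontal.tolist())  # [[3, 2, 1], [6, 5, 4]]
print(vertical.tolist())    # [[4, 5, 6], [1, 2, 3]]
print(both.tolist())        # [[6, 5, 4], [3, 2, 1]]
```

With the "horizontal_and_vertical" mode, any given image may come out unflipped, flipped one way, or flipped both ways.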

data_augmentation = tf.keras.Sequential([
    tf.keras.layers.experimental.preprocessing.RandomFlip("horizontal_and_vertical"),
    tf.keras.layers.experimental.preprocessing.RandomRotation(0.2),
])

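The first layer randomly flips images horizontally and vertically. The second layer rotates each image by a random angle; the argument 0.2 is a fraction of a full turn (2π), so the angle falls in [-0.2 × 2π, 0.2 × 2π], that is within ±72°, rather than 0.2 radians.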
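According to the Keras documentation, the `factor` argument of `RandomRotation` is expressed as a fraction of 2π rather than as an angle. The short calculation below converts the value used here into radians and degrees; the variable names are illustrative only.

```python
import math

factor = 0.2  # the value passed to RandomRotation

# The factor is a fraction of a full turn and applies in both directions.
max_radians = factor * 2 * math.pi
max_degrees = factor * 360

print(f"rotation range: +/-{max_radians:.3f} rad = +/-{max_degrees:.0f} degrees")
```

A smaller factor such as 0.05 (±18°) may be a gentler starting point when the orientation of the subject matters.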

# Add the image to a batch.
# `images` and `i` are left over from the plotting loop above.
image = tf.expand_dims(images[i], 0)

plt.figure(figsize=(8, 8))
for i in range(9):
    augmented_image = data_augmentation(image)
    ax = plt.subplot(3, 3, i + 1)
    plt.imshow(augmented_image[0])
    plt.axis("off")

WARNING:tensorflow:Using a while_loop for converting RngReadAndSkip cause there is no registered converter for this op.

WARNING:tensorflow:Using a while_loop for converting Bitcast cause there is no registered converter for this op.

WARNING:tensorflow:Using a while_loop for converting Bitcast cause there is no registered converter for this op.

WARNING:tensorflow:Using a while_loop for converting StatelessRandomUniformV2 cause there is no registered converter for this op.

WARNING:tensorflow:Using a while_loop for converting ImageProjectiveTransformV3 cause there is no registered converter for this op.

Ways to apply augmentation

Method 1: embed it in the model

model = tf.keras.Sequential([
    data_augmentation,
    layers.Conv2D(16, 3, padding='same', activation='relu'),
    layers.MaxPooling2D(),
])

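The advantage of this approach is:

● The augmentation work is accelerated by the GPU (if you train on a GPU)

Note: augmentation is applied only during training (Model.fit). It is not applied during evaluation (Model.evaluate) or prediction (Model.predict).

Method 2: apply data augmentation in the Dataset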

batch_size = 32
AUTOTUNE = tf.data.AUTOTUNE

def prepare(ds):
    ds = ds.map(lambda x, y: (data_augmentation(x, training=True), y), num_parallel_calls=AUTOTUNE)
    return ds

train_ds = prepare(train_ds)

Train the model

model = tf.keras.Sequential([
    layers.Conv2D(16, 3, padding='same', activation='relu'),
    layers.MaxPooling2D(),
    layers.Conv2D(32, 3, padding='same', activation='relu'),
    layers.MaxPooling2D(),
    layers.Conv2D(64, 3, padding='same', activation='relu'),
    layers.MaxPooling2D(),
    layers.Flatten(),
    layers.Dense(128, activation='relu'),
    layers.Dense(len(class_names))
])

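Before training, the model needs a few more settings, which are added in the compile step:

Loss function (loss): measures how far the model's predictions are from the true labels during training; training tries to minimize it.

Optimizer (optimizer): determines how the model is updated based on the data it sees and its loss function.

Metrics (metrics): used to monitor the training and testing steps. The example below uses accuracy, the fraction of images that are classified correctly.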

model.compile(optimizer='adam',
              loss=tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True),
              metrics=['accuracy'])
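Since the last Dense layer has no activation, the model outputs raw logits, which is why the loss is created with from_logits=True. As a rough illustration of what that loss computes for a single sample, here is a plain-Python sketch; the function name and the logit values are hypothetical, and this is not the Keras implementation.

```python
import math

def sparse_categorical_crossentropy_from_logits(logits, label):
    """Cross-entropy for one sample: -log(softmax(logits)[label])."""
    # Subtract the max logit first for numerical stability.
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    probs = [e / total for e in exps]
    return -math.log(probs[label]), probs

# Hypothetical logits for the two classes ['cat', 'dog'].
loss_right, probs = sparse_categorical_crossentropy_from_logits([2.0, -1.0], label=0)
loss_wrong, _ = sparse_categorical_crossentropy_from_logits([2.0, -1.0], label=1)

print(probs)       # roughly [0.953, 0.047]
print(loss_right)  # small loss: the true class received most of the probability
print(loss_wrong)  # large loss: the true class received little probability
```

The "sparse" part means the label is an integer class index (0 or 1 here) rather than a one-hot vector.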

epochs = 20

history = model.fit(
    train_ds,
    validation_data=val_ds,
    epochs=epochs
)

Epoch 1/20

75/75 [==============================] - 22s 289ms/step - loss: 0.6459 - accuracy: 0.6958 - val_loss: 0.3205 - val_accuracy: 0.8668

Epoch 2/20

75/75 [==============================] - 23s 305ms/step - loss: 0.2935 - accuracy: 0.8765 - val_loss: 0.2129 - val_accuracy: 0.9179

Epoch 3/20

75/75 [==============================] - 23s 308ms/step - loss: 0.1988 - accuracy: 0.9277 - val_loss: 0.1838 - val_accuracy: 0.9226

Epoch 4/20

75/75 [==============================] - 23s 309ms/step - loss: 0.1624 - accuracy: 0.9349 - val_loss: 0.1245 - val_accuracy: 0.9511

Epoch 5/20

75/75 [==============================] - 24s 311ms/step - loss: 0.1316 - accuracy: 0.9462 - val_loss: 0.1120 - val_accuracy: 0.9611

Epoch 6/20

75/75 [==============================] - 23s 307ms/step - loss: 0.1291 - accuracy: 0.9475 - val_loss: 0.0893 - val_accuracy: 0.9637

Epoch 7/20

75/75 [==============================] - 23s 307ms/step - loss: 0.1027 - accuracy: 0.9618 - val_loss: 0.0999 - val_accuracy: 0.9658

Epoch 8/20

75/75 [==============================] - 23s 310ms/step - loss: 0.0852 - accuracy: 0.9664 - val_loss: 0.0733 - val_accuracy: 0.9758

Epoch 9/20

75/75 [==============================] - 23s 310ms/step - loss: 0.0835 - accuracy: 0.9697 - val_loss: 0.0658 - val_accuracy: 0.9742

Epoch 10/20

75/75 [==============================] - 24s 312ms/step - loss: 0.0678 - accuracy: 0.9727 - val_loss: 0.0517 - val_accuracy: 0.9789

Epoch 11/20

75/75 [==============================] - 23s 308ms/step - loss: 0.0694 - accuracy: 0.9773 - val_loss: 0.0680 - val_accuracy: 0.9737

Epoch 12/20

75/75 [==============================] - 24s 319ms/step - loss: 0.0822 - accuracy: 0.9718 - val_loss: 0.0669 - val_accuracy: 0.9789

Epoch 13/20

75/75 [==============================] - 24s 323ms/step - loss: 0.0552 - accuracy: 0.9811 - val_loss: 0.0460 - val_accuracy: 0.9842

Epoch 14/20

75/75 [==============================] - 24s 313ms/step - loss: 0.0706 - accuracy: 0.9735 - val_loss: 0.0485 - val_accuracy: 0.9811

Epoch 15/20

75/75 [==============================] - 23s 309ms/step - loss: 0.0545 - accuracy: 0.9807 - val_loss: 0.0798 - val_accuracy: 0.9747

Epoch 16/20

75/75 [==============================] - 24s 314ms/step - loss: 0.0610 - accuracy: 0.9840 - val_loss: 0.0470 - val_accuracy: 0.9842

Epoch 17/20

75/75 [==============================] - 25s 334ms/step - loss: 0.0642 - accuracy: 0.9761 - val_loss: 0.0315 - val_accuracy: 0.9884

Epoch 18/20

75/75 [==============================] - 27s 351ms/step - loss: 0.0549 - accuracy: 0.9811 - val_loss: 0.0644 - val_accuracy: 0.9768

Epoch 19/20

75/75 [==============================] - 25s 329ms/step - loss: 0.0464 - accuracy: 0.9836 - val_loss: 0.0428 - val_accuracy: 0.9816

Epoch 20/20

75/75 [==============================] - 23s 299ms/step - loss: 0.0550 - accuracy: 0.9819 - val_loss: 0.0347 - val_accuracy: 0.9874

loss, acc = model.evaluate(test_ds)

print("Accuracy", acc)

15/15 [==============================] - 1s 51ms/step - loss: 0.0375 - accuracy: 0.9854

Accuracy 0.9854166507720947
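The reported accuracy can be turned back into a count of correct predictions. This is a small sanity check, assuming every one of the 15 test batches is full (15 × 32 = 480 images); the variable names are illustrative.

```python
test_batches = 15
batch_size = 32
reported_accuracy = 0.9854166507720947  # value printed by model.evaluate

# Assumption: all test batches are full, so there are 15 * 32 test images.
num_test_images = test_batches * batch_size
correct = round(reported_accuracy * num_test_images)

print(num_test_images, correct, num_test_images - correct)  # 480 473 7
```

Under that assumption the model misclassified 7 of the 480 test images.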

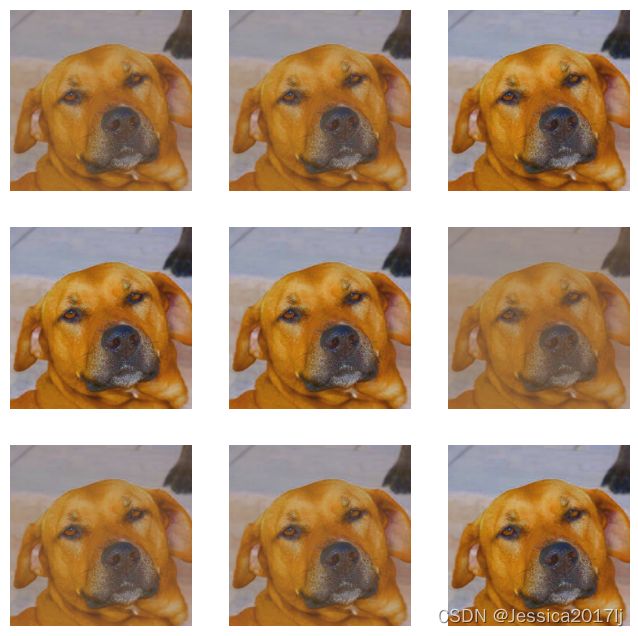

Custom augmentation function

import random

# Feel free to experiment here
def aug_img(image):
    seed = (random.randint(0, 9), 0)
    # Randomly change the image contrast
    contrasted = tf.image.stateless_random_contrast(image, lower=0.1, upper=1.0, seed=seed)
    return contrasted

image = tf.expand_dims(images[3]*255, 0)

print("Min and max pixel values:", image.numpy().min(), image.numpy().max())

Min and max pixel values: 2.4591687 241.47968

plt.figure(figsize=(8, 8))
for i in range(9):
    augmented_image = aug_img(image)
    ax = plt.subplot(3, 3, i + 1)
    plt.imshow(augmented_image[0].numpy().astype("uint8"))
    plt.axis("off")

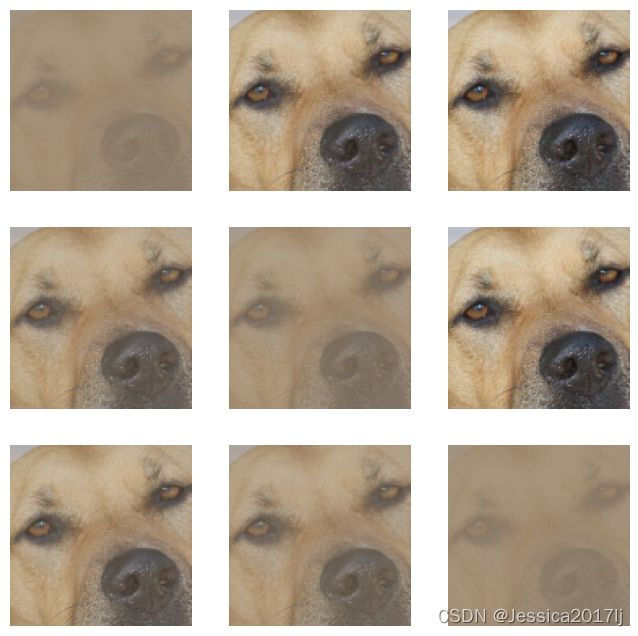
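As documented for tf.image.adjust_contrast, a contrast adjustment maps each pixel x to (x - mean) * factor + mean, where the mean is taken per channel; the random variant picks the factor from the range between lower and upper. The NumPy sketch below reproduces that formula on a toy single-channel image. The helper name and the pixel values are made up for illustration, and this is not TensorFlow's code.

```python
import numpy as np

def adjust_contrast(image, factor):
    """Scale each pixel's distance from its channel mean by `factor`."""
    # Mean over height and width, computed separately for every channel.
    mean = image.mean(axis=(0, 1), keepdims=True)
    return (image - mean) * factor + mean

# A 2x2 single-channel image with pixel values in [0, 255].
image = np.array([[[0.0], [100.0]],
                  [[200.0], [255.0]]])

low_contrast = adjust_contrast(image, 0.1)
print(low_contrast[..., 0])
```

With a factor of 0.1 all pixels are pulled close to the mean (138.75 here). Because the range used above tops out at 1.0, this augmentation only ever lowers contrast.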

Adjust image saturation

image = tf.expand_dims(images[3]*255, 0)
saturated = tf.image.adjust_saturation(image, 3)

plt.figure(figsize=(8, 8))
for i in range(9):
    augmented_image = aug_img(saturated)
    ax = plt.subplot(3, 3, i + 1)
    plt.imshow(augmented_image[0].numpy().astype("uint8"))
    plt.axis("off")

Change image brightness

image = tf.expand_dims(images[3]*255, 0)
# adjust_brightness adds delta to every pixel. The image here is a float tensor
# in [0, 255], so delta=0.4 is barely visible; try a larger delta (e.g. 0.4 * 255).
bright = tf.image.adjust_brightness(image, 0.4)

plt.figure(figsize=(8, 8))
for i in range(9):
    augmented_image = aug_img(bright)
    ax = plt.subplot(3, 3, i + 1)
    plt.imshow(augmented_image[0].numpy().astype("uint8"))
    plt.axis("off")

Crop the image

image = tf.expand_dims(images[3]*255, 0)
cropped = tf.image.central_crop(image, central_fraction=0.5)

plt.figure(figsize=(8, 8))
for i in range(9):
    augmented_image = aug_img(cropped)
    ax = plt.subplot(3, 3, i + 1)
    plt.imshow(augmented_image[0].numpy().astype("uint8"))
    plt.axis("off")
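A central crop changes the image size, which matters if the crop is later used during training. The NumPy sketch below shows the idea on an array with the same 224×224 shape as the images here; the helper is a hypothetical stand-in written for this example, not TensorFlow's implementation.

```python
import numpy as np

def central_crop(image, fraction):
    """Keep the central `fraction` of the height and width of an HWC image."""
    height, width = image.shape[:2]
    top = int((height - height * fraction) / 2)
    left = int((width - width * fraction) / 2)
    return image[top:height - top, left:width - left]

image = np.zeros((224, 224, 3))
cropped = central_crop(image, 0.5)
print(cropped.shape)  # (112, 112, 3)
```

Since the model above ends in Flatten and Dense layers, it expects a fixed input size, so a cropped image would need to be resized back to 224×224 (for example with tf.image.resize) before being fed to it.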

Rotate the image

image = tf.expand_dims(images[3]*255, 0)
rotated = tf.image.rot90(image)

plt.figure(figsize=(8, 8))
for i in range(9):
    augmented_image = aug_img(rotated)
    ax = plt.subplot(3, 3, i + 1)
    plt.imshow(augmented_image[0].numpy().astype("uint8"))
    plt.axis("off")

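So how do we apply a custom augmentation function to our data?

Refer to the preprocess_image function above: call aug_img inside preprocess_image so that augmentation happens during data preprocessing, and apply it to the training set only. One caveat: Dataset.map traces the Python function once, so the random.randint call in aug_img would then yield a single fixed seed. Inside map, draw the seed from a TensorFlow source such as tf.random.Generator instead.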

Prediction

# Use the trained model to look at some predictions
plt.figure(figsize=(18, 3))  # figure is 18 wide and 3 high
plt.suptitle("The prediction")

for images, labels in val_ds.take(1):
    for i in range(8):
        ax = plt.subplot(1, 8, i + 1)

        # Show the image
        plt.imshow(images[i].numpy())

        # Add a batch dimension to the image
        img_array = tf.expand_dims(images[i], 0)

        # Use the model to predict the class of the image
        predictions = model.predict(img_array)
        plt.title(class_names[np.argmax(predictions)])

        plt.axis("off")

1/1 [==============================] - 0s 67ms/step

1/1 [==============================] - 0s 19ms/step

1/1 [==============================] - 0s 18ms/step

1/1 [==============================] - 0s 18ms/step

1/1 [==============================] - 0s 18ms/step

1/1 [==============================] - 0s 18ms/step

1/1 [==============================] - 0s 18ms/step

1/1 [==============================] - 0s 18ms/step
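The model's last layer has no activation, so model.predict returns raw logits. The NumPy sketch below, using hypothetical logit values, shows how the class name is picked with argmax and how softmax could be applied if probabilities are wanted.

```python
import numpy as np

class_names = ['cat', 'dog']

# Hypothetical model output for one image: shape (1, 2), raw logits.
predictions = np.array([[-1.2, 3.4]])

# argmax over the whole array works here because the batch holds one image.
predicted_class = class_names[np.argmax(predictions)]

# Softmax turns logits into probabilities; it does not change the argmax.
exp = np.exp(predictions - predictions.max(axis=-1, keepdims=True))
probabilities = exp / exp.sum(axis=-1, keepdims=True)

print(predicted_class, probabilities.round(3))
```

For a batch with more than one image, np.argmax(predictions, axis=-1) would return one class index per image.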

I downloaded 4 images from the internet to test the model.

cd 365-8-data

C:\Users\jie liang\Downloads\365-8-data

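import pathlib
import warnings

# Hide warnings
warnings.filterwarnings('ignore')

data_dir = pathlib.Path("./test/")

# glob('*') lists the top-level entries of ./test/ (here the class folders), not the images inside them
entry_count = len(list(data_dir.glob('*')))
print("Number of top-level entries:", entry_count)

Number of top-level entries: 2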

testbyme_ds = tf.keras.preprocessing.image_dataset_from_directory(
    data_dir,
    seed=12,
    image_size=(img_height, img_width),
    batch_size=batch_size)

Found 4 files belonging to 2 classes.

Predicted results

plt.figure(figsize=(10, 4))  # figure is 10 wide and 4 high

for images, labels in testbyme_ds.take(1):
    for i in range(4):
        ax = plt.subplot(1, 4, i + 1)

        # Show the image
        plt.imshow(images[i].numpy().astype("uint8"))

        # Scale to [0, 1] like the training data (testbyme_ds is not passed
        # through preprocess_image), then add a batch dimension
        img_array = tf.expand_dims(images[i] / 255.0, 0)

        # Use the model to predict the class of the image
        predictions = model.predict(img_array)
        plt.title(class_names[np.argmax(predictions)])

        plt.axis("off")

1/1 [==============================] - 0s 17ms/step

1/1 [==============================] - 0s 17ms/step

1/1 [==============================] - 0s 18ms/step

1/1 [==============================] - 0s 19ms/step

Actual labels

plt.figure(figsize=(10, 4))  # figure is 10 wide and 4 high

for images, labels in testbyme_ds.take(1):
    for i in range(4):
        ax = plt.subplot(1, 4, i + 1)
        plt.imshow(images[i].numpy().astype("uint8"))
        plt.title(class_names[labels[i]])
        plt.axis("off")

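The predictions still deviate somewhat from the actual labels. Note that the model was trained on pixel values scaled to [0, 1], so images passed to model.predict need the same scaling; feeding raw [0, 255] values is one possible cause of such errors.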

![output_13_0.png](http://img.e-com-net.com/image/info8/525755bbb7ba4cbdbc3dcc90892029d0.jpg)