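How to use mmdetection (building a Faster R-CNN model with mmdetection)

Building a Faster R-CNN model with mmdetection

- Environment setup

- Usage

- Training the model with the generated .py file

- Running predictions with the trained model

- Reference links

- Appendix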

Environment setup

Operating system: Ubuntu 20.04

CUDA version: 10.2

PyTorch version: 1.6.0

TorchVision version: 0.7.0

mmdet version: 2.5.0

mmcv version: 1.1.5

IDE: PyCharm

Hardware: RTX 2070S × 2
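Before going further, it can help to confirm that the installed packages match the versions listed above. The snippet below is a minimal sketch of such a check, not part of mmdetection. The distribution names are my assumptions (for example, mmcv is often installed as "mmcv-full"), and the helper names installed_version and check_environment are made up for illustration.

```python
from importlib import metadata

# Versions listed in this post. The distribution names are assumptions:
# mmcv, for instance, may be installed as "mmcv-full" instead of "mmcv".
EXPECTED = {
    "torch": "1.6.0",
    "torchvision": "0.7.0",
    "mmdet": "2.5.0",
    "mmcv": "1.1.5",
}


def installed_version(name):
    """Return the installed version of a distribution, or None if absent."""
    try:
        return metadata.version(name)
    except metadata.PackageNotFoundError:
        return None


def check_environment(expected=EXPECTED):
    """Map each package name to (expected, installed, matches)."""
    report = {}
    for name, wanted in expected.items():
        found = installed_version(name)
        # Local build tags such as "1.6.0+cu102" still count as a match.
        matches = found is not None and found.split("+")[0] == wanted
        report[name] = (wanted, found, matches)
    return report


if __name__ == "__main__":
    for name, (wanted, found, ok) in check_environment().items():
        print(f"{name}: expected {wanted}, installed {found}, match={ok}")
```

A package that is not installed shows up with installed None and match=False, so the script can be run safely on a fresh machine.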

使用方法

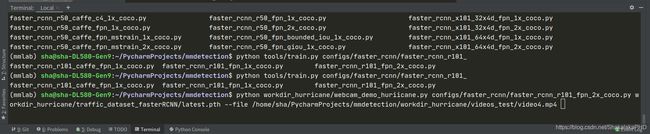

在PyCharm的终端中输入如下命令

python tools/train.py configs/faster_rcnn/faster_rcnn_r101_fpn_2x_coco.py --gpus 1 --work-dir workdir_hurricane/

- tools/train.py 表示调用tools文件夹下的train.py文件

- configs/faster_rcnn/faster_rcnn_r101_fpn_2x_coco.py表示我们的网络模型是faster_rcnn中backbone为resnet101,数据集的形势为coco数据集

- –gpus 1表示我们使用训练的GPU为1块,当然你也可以设置为2或者其他数量(如果你有多块GPU的话)

- –work-dir workdir_hurricane/ 表示我们指定的工作目录为当前文件件下的workdir_hurricane文件夹,生成的faster_rcnn_r101_fpn_2x_coco.py文件便存在这个文件夹下

此时便生成一个py文件并开始训练

这py文件就是我们需要的文件
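To see where the generated file ends up: as far as I can tell, tools/train.py in MMDetection 2.x dumps the fully resolved config into the work directory under the same file name as the input config. The sketch below reproduces only that path arithmetic. The function name dumped_config_path is hypothetical and does not exist in mmdetection.

```python
import os.path as osp


def dumped_config_path(config, work_dir):
    """Path where the resolved config is expected to be written.

    Assumption: the training script joins the work directory with the
    base name of the config file passed on the command line.
    """
    return osp.join(work_dir, osp.basename(config))


path = dumped_config_path(
    "configs/faster_rcnn/faster_rcnn_r101_fpn_2x_coco.py",
    "workdir_hurricane/",
)
print(path)
```

The printed path is the file that the next section passes back to tools/train.py.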

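Training the model with the generated .py file

Enter the following command in the PyCharm terminal:

python tools/train.py workdir_hurricane/faster_rcnn_r101_fpn_2x_coco.py --gpus 1

Training then starts. The results are as follows:

As you can see, both the weight files and the training logs are saved.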
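To check what training has written so far, you can list the work directory from Python. This is a minimal sketch, assuming that checkpoints are saved as .pth files and logs as .log and .log.json files directly inside the work directory, which is what I see with MMDetection 2.x. The function name summarize_work_dir is made up for illustration.

```python
from pathlib import Path


def summarize_work_dir(work_dir):
    """Collect checkpoint and log file names found directly in work_dir."""
    root = Path(work_dir)
    checkpoints = sorted(p.name for p in root.glob("*.pth"))
    # Text logs end in .log, JSON logs end in .log.json.
    logs = sorted(
        p.name for p in root.iterdir()
        if p.name.endswith((".log", ".log.json"))
    )
    return {"checkpoints": checkpoints, "logs": logs}
```

For example, summarize_work_dir("workdir_hurricane/") returns a dictionary with the two file lists, so you can confirm that a checkpoint such as latest.pth exists before running prediction.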

Running predictions with the trained model

Enter the following command in the PyCharm terminal:

python workdir_hurricane/webcam_demo_huriicane.py configs/faster_rcnn/faster_rcnn_r101_fpn_2x_coco.py workdir_hurricane/traffic_dataset_fasterRCNN/latest.pth --file /home/sha/PycharmProjects/mmdetection/workdir_hurricane/videos_test/video4.mp4

Note that webcam_demo_huriicane.py is adapted from webcam_demo.py in mmdetection's demo folder. Its code is as follows:

import argparse
import os
import sys

import cv2 as cv
import torch

from mmdet.apis import inference_detector, init_detector

# By default the annotated video is written next to this script.
dir_path = os.path.dirname(os.path.abspath(__file__))
output_video_path = os.path.join(dir_path, 'result.mp4')


def parse_args():
    parser = argparse.ArgumentParser(description='MMDetection webcam demo')
    parser.add_argument('config', help='test config file path')
    parser.add_argument('checkpoint', help='checkpoint file')
    parser.add_argument(
        '--device', type=str, default='cuda:0', help='CPU/CUDA device option')
    parser.add_argument(
        '--camera-id', type=int, default=0, help='camera device id')
    parser.add_argument(
        '--score-thr', type=float, default=0.5, help='bbox score threshold')
    parser.add_argument(
        '--file', type=str, help='path of the test video')
    parser.add_argument(
        '--out', type=str, default=output_video_path,
        help='path of the output video')
    return parser.parse_args()


def main():
    args = parse_args()
    print('*' * 50)
    print(args)
    print('*' * 50)

    if not args.file:
        print('No target file!')
        sys.exit(1)

    device = torch.device(args.device)
    print('device:', args.device)
    model = init_detector(args.config, args.checkpoint, device=device)

    # Open the input video and create a writer with the same size and FPS.
    camera = cv.VideoCapture(args.file)
    camera_width = int(camera.get(cv.CAP_PROP_FRAME_WIDTH))
    camera_height = int(camera.get(cv.CAP_PROP_FRAME_HEIGHT))
    print(camera_height, camera_width)
    fps = camera.get(cv.CAP_PROP_FPS)
    video_writer = cv.VideoWriter(args.out, cv.VideoWriter_fourcc(*'mp4v'),
                                  fps, (camera_width, camera_height))

    count = 0
    print('Press "Esc", "q" or "Q" to exit.')
    while True:
        torch.cuda.empty_cache()
        ret_val, img = camera.read()
        if not ret_val:
            # Either the video has ended or the frame could not be read.
            print('Load fail!')
            break

        result = inference_detector(model, img)
        # With show=False and no out_file, show_result returns the drawn frame.
        frame = model.show_result(
            img, result, score_thr=args.score_thr, wait_time=1, show=False)
        cv.imshow('frame', frame)
        video_writer.write(frame)
        count += 1
        print('Wrote frame {} to result successfully!'.format(count))

        # Poll the keyboard after the frame is shown so the window refreshes.
        ch = cv.waitKey(1)
        if ch == 27 or ch == ord('q') or ch == ord('Q'):
            break

    camera.release()
    video_writer.release()
    # destroyWindow() requires a window name; close every window instead.
    cv.destroyAllWindows()


if __name__ == '__main__':
    main()

python workdir_hurricane/webcam_demo_huriicane.py configs/faster_rcnn/faster_rcnn_r101_fpn_2x_coco.py workdir_hurricane/traffic_dataset_fasterRCNN/latest.pth --file /home/sha/PycharmProjects/mmdetection/workdir_hurricane/videos_test/video4.mp4

This command does the following:

It reads the MP4 video file /home/sha/PycharmProjects/mmdetection/workdir_hurricane/videos_test/video4.mp4 and runs detection on it with the trained weights.
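The --score-thr option of the script controls which detections get drawn. The sketch below shows that filtering on its own, without mmdetection. It assumes the result layout I see for box-only detectors in MMDetection 2.x: one array per class, each row being [x1, y1, x2, y2, score]. The data in fake_result is made up, and filter_by_score is a hypothetical helper, not a library function.

```python
def filter_by_score(result, score_thr=0.5):
    """Keep detections whose score is strictly above score_thr.

    result: one sequence per class; each row is [x1, y1, x2, y2, score].
    Returns a list of (class_index, row) pairs.
    """
    kept = []
    for class_index, rows in enumerate(result):
        for row in rows:
            if row[4] > score_thr:
                kept.append((class_index, list(row)))
    return kept


# Made-up detections for two classes (not real model output).
fake_result = [
    [[10, 20, 110, 220, 0.92], [15, 25, 100, 200, 0.30]],
    [[50, 60, 150, 160, 0.55]],
]

for class_index, (x1, y1, x2, y2, score) in filter_by_score(fake_result):
    print(class_index, (x1, y1, x2, y2), score)
```

Raising the threshold, for example --score-thr 0.8, leaves fewer but more confident boxes in the output video.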

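Reference links

While learning mmdetection, I found an uploader on Bilibili who explains it very well. If you follow this tutorial and cannot get things to run, refer to this video: https://www.bilibili.com/video/BV1jV411U7zb?p=7

The explanations there are very detailed. If you find them helpful, remember to like, coin, and favorite that uploader's video on Bilibili!

If some points are still unclear afterwards, I recommend reading the mmdetection documentation (see this link).

You can start with getting_started.md.

In keeping with the spirit of the internet, this post draws on mmdetection, so its citation is given below: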

@article{mmdetection,
  title   = {{MMDetection}: Open MMLab Detection Toolbox and Benchmark},
  author  = {Chen, Kai and Wang, Jiaqi and Pang, Jiangmiao and Cao, Yuhang and
             Xiong, Yu and Li, Xiaoxiao and Sun, Shuyang and Feng, Wansen and
             Liu, Ziwei and Xu, Jiarui and Zhang, Zheng and Cheng, Dazhi and
             Zhu, Chenchen and Cheng, Tianheng and Zhao, Qijie and Li, Buyu and
             Lu, Xin and Zhu, Rui and Wu, Yue and Dai, Jifeng and Wang, Jingdong
             and Shi, Jianping and Ouyang, Wanli and Loy, Chen Change and Lin, Dahua},
  journal = {arXiv preprint arXiv:1906.07155},
  year    = {2019}
}

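Appendix

Some readers may be interested in the contents of the generated faster_rcnn_r101_fpn_2x_coco.py file. An annotated config of the same kind is shown below.

This listing is copied from mmdetection's config.md. Note that it is the Mask R-CNN example (type='MaskRCNN' with a ResNet-50 backbone), so it also contains mask-related entries that a Faster R-CNN config does not have: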

model = dict(
    type='MaskRCNN',  # The name of detector
    pretrained='torchvision://resnet50',  # The ImageNet pretrained backbone to be loaded
    backbone=dict(  # The config of backbone
        type='ResNet',  # The type of the backbone, refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/models/backbones/resnet.py#L288 for more details.
        depth=50,  # The depth of backbone, usually it is 50 or 101 for ResNet and ResNext backbones.
        num_stages=4,  # Number of stages of the backbone.
        out_indices=(0, 1, 2, 3),  # The index of output feature maps produced in each stages
        frozen_stages=1,  # The weights in the first 1 stage are frozen
        norm_cfg=dict(  # The config of normalization layers.
            type='BN',  # Type of norm layer, usually it is BN or GN
            requires_grad=True),  # Whether to train the gamma and beta in BN
        norm_eval=True,  # Whether to freeze the statistics in BN
        style='pytorch'),  # The style of backbone, 'pytorch' means that stride 2 layers are in 3x3 conv, 'caffe' means stride 2 layers are in 1x1 convs.
    neck=dict(
        type='FPN',  # The neck of detector is FPN. We also support 'NASFPN', 'PAFPN', etc. Refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/models/necks/fpn.py#L10 for more details.
        in_channels=[256, 512, 1024, 2048],  # The input channels, this is consistent with the output channels of backbone
        out_channels=256,  # The output channels of each level of the pyramid feature map
        num_outs=5),  # The number of output scales
    rpn_head=dict(
        type='RPNHead',  # The type of RPN head is 'RPNHead', we also support 'GARPNHead', etc. Refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/models/dense_heads/rpn_head.py#L12 for more details.
        in_channels=256,  # The input channels of each input feature map, this is consistent with the output channels of neck
        feat_channels=256,  # Feature channels of convolutional layers in the head.
        anchor_generator=dict(  # The config of anchor generator
            type='AnchorGenerator',  # Most of methods use AnchorGenerator, SSD Detectors uses `SSDAnchorGenerator`. Refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/core/anchor/anchor_generator.py#L10 for more details
            scales=[8],  # Basic scale of the anchor, the area of the anchor in one position of a feature map will be scale * base_sizes
            ratios=[0.5, 1.0, 2.0],  # The ratio between height and width.
            strides=[4, 8, 16, 32, 64]),  # The strides of the anchor generator. This is consistent with the FPN feature strides. The strides will be taken as base_sizes if base_sizes is not set.
        bbox_coder=dict(  # Config of box coder to encode and decode the boxes during training and testing
            type='DeltaXYWHBBoxCoder',  # Type of box coder. 'DeltaXYWHBBoxCoder' is applied for most of methods. Refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/core/bbox/coder/delta_xywh_bbox_coder.py#L9 for more details.
            target_means=[0.0, 0.0, 0.0, 0.0],  # The target means used to encode and decode boxes
            target_stds=[1.0, 1.0, 1.0, 1.0]),  # The standard variance used to encode and decode boxes
        loss_cls=dict(  # Config of loss function for the classification branch
            type='CrossEntropyLoss',  # Type of loss for classification branch, we also support FocalLoss etc.
            use_sigmoid=True,  # RPN usually perform two-class classification, so it usually uses sigmoid function.
            loss_weight=1.0),  # Loss weight of the classification branch.
        loss_bbox=dict(  # Config of loss function for the regression branch.
            type='L1Loss',  # Type of loss, we also support many IoU Losses and smooth L1-loss, etc. Refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/models/losses/smooth_l1_loss.py#L56 for implementation.
            loss_weight=1.0)),  # Loss weight of the regression branch.
    roi_head=dict(  # RoIHead encapsulates the second stage of two-stage/cascade detectors.
        type='StandardRoIHead',  # Type of the RoI head. Refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/models/roi_heads/standard_roi_head.py#L10 for implementation.
        bbox_roi_extractor=dict(  # RoI feature extractor for bbox regression.
            type='SingleRoIExtractor',  # Type of the RoI feature extractor, most of methods uses SingleRoIExtractor. Refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/models/roi_heads/roi_extractors/single_level.py#L10 for details.
            roi_layer=dict(  # Config of RoI Layer
                type='RoIAlign',  # Type of RoI Layer, DeformRoIPoolingPack and ModulatedDeformRoIPoolingPack are also supported. Refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/ops/roi_align/roi_align.py#L79 for details.
                output_size=7,  # The output size of feature maps.
                sampling_ratio=0),  # Sampling ratio when extracting the RoI features. 0 means adaptive ratio.
            out_channels=256,  # output channels of the extracted feature.
            featmap_strides=[4, 8, 16, 32]),  # Strides of multi-scale feature maps. It should be consistent to the architecture of the backbone.
        bbox_head=dict(  # Config of box head in the RoIHead.
            type='Shared2FCBBoxHead',  # Type of the bbox head, Refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/models/roi_heads/bbox_heads/convfc_bbox_head.py#L177 for implementation details.
            in_channels=256,  # Input channels for bbox head. This is consistent with the out_channels in roi_extractor
            fc_out_channels=1024,  # Output feature channels of FC layers.
            roi_feat_size=7,  # Size of RoI features
            num_classes=80,  # Number of classes for classification
            bbox_coder=dict(  # Box coder used in the second stage.
                type='DeltaXYWHBBoxCoder',  # Type of box coder. 'DeltaXYWHBBoxCoder' is applied for most of methods.
                target_means=[0.0, 0.0, 0.0, 0.0],  # Means used to encode and decode box
                target_stds=[0.1, 0.1, 0.2, 0.2]),  # Standard variance for encoding and decoding. It is smaller since the boxes are more accurate. [0.1, 0.1, 0.2, 0.2] is a conventional setting.
            reg_class_agnostic=False,  # Whether the regression is class agnostic.
            loss_cls=dict(  # Config of loss function for the classification branch
                type='CrossEntropyLoss',  # Type of loss for classification branch, we also support FocalLoss etc.
                use_sigmoid=False,  # Whether to use sigmoid.
                loss_weight=1.0),  # Loss weight of the classification branch.
            loss_bbox=dict(  # Config of loss function for the regression branch.
                type='L1Loss',  # Type of loss, we also support many IoU Losses and smooth L1-loss, etc.
                loss_weight=1.0)),  # Loss weight of the regression branch.
        mask_roi_extractor=dict(  # RoI feature extractor for mask prediction.
            type='SingleRoIExtractor',  # Type of the RoI feature extractor, most of methods uses SingleRoIExtractor.
            roi_layer=dict(  # Config of RoI Layer that extracts features for instance segmentation
                type='RoIAlign',  # Type of RoI Layer, DeformRoIPoolingPack and ModulatedDeformRoIPoolingPack are also supported
                output_size=14,  # The output size of feature maps.
                sampling_ratio=0),  # Sampling ratio when extracting the RoI features.
            out_channels=256,  # Output channels of the extracted feature.
            featmap_strides=[4, 8, 16, 32]),  # Strides of multi-scale feature maps.
        mask_head=dict(  # Mask prediction head
            type='FCNMaskHead',  # Type of mask head, refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/models/roi_heads/mask_heads/fcn_mask_head.py#L21 for implementation details.
            num_convs=4,  # Number of convolutional layers in mask head.
            in_channels=256,  # Input channels, should be consistent with the output channels of mask roi extractor.
            conv_out_channels=256,  # Output channels of the convolutional layer.
            num_classes=80,  # Number of class to be segmented.
            loss_mask=dict(  # Config of loss function for the mask branch.
                type='CrossEntropyLoss',  # Type of loss used for segmentation
                use_mask=True,  # Whether to only train the mask in the correct class.
                loss_weight=1.0))))  # Loss weight of mask branch.
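As an aside on the anchor_generator entry above: the combination of scales, ratios, and strides determines the anchor shapes at each FPN level. The sketch below is my own pure-Python approximation of that arithmetic, assuming each stride is used as the base size and each ratio is height divided by width with the area kept constant. The function name anchor_sizes is made up, and the real AnchorGenerator may differ in details such as rounding and centering.

```python
import math


def anchor_sizes(strides, scales, ratios):
    """Return {stride: [(width, height), ...]} for every ratio/scale pair.

    Each stride is taken as the base size; ratio is height / width.
    """
    sizes = {}
    for stride in strides:
        per_level = []
        for ratio in ratios:
            h_ratio = math.sqrt(ratio)
            w_ratio = 1.0 / h_ratio
            for scale in scales:
                per_level.append((stride * scale * w_ratio,
                                  stride * scale * h_ratio))
        sizes[stride] = per_level
    return sizes


levels = anchor_sizes([4, 8, 16, 32, 64], [8], [0.5, 1.0, 2.0])
for stride, boxes in levels.items():
    print(stride, [(round(w, 1), round(h, 1)) for w, h in boxes])
```

Under these assumptions, the square anchor grows from 32 × 32 pixels at stride 4 to 512 × 512 pixels at stride 64, which is why small objects are matched on the fine levels and large objects on the coarse ones.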

train_cfg = dict(  # Config of training hyperparameters for rpn and rcnn
    rpn=dict(  # Training config of rpn
        assigner=dict(  # Config of assigner
            type='MaxIoUAssigner',  # Type of assigner, MaxIoUAssigner is used for many common detectors. Refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/core/bbox/assigners/max_iou_assigner.py#L10 for more details.
            pos_iou_thr=0.7,  # IoU >= threshold 0.7 will be taken as positive samples
            neg_iou_thr=0.3,  # IoU < threshold 0.3 will be taken as negative samples
            min_pos_iou=0.3,  # The minimal IoU threshold to take boxes as positive samples
            match_low_quality=True,  # Whether to match the boxes under low quality (see API doc for more details).
            ignore_iof_thr=-1),  # IoF threshold for ignoring bboxes
        sampler=dict(  # Config of positive/negative sampler
            type='RandomSampler',  # Type of sampler, PseudoSampler and other samplers are also supported. Refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/core/bbox/samplers/random_sampler.py#L8 for implementation details.
            num=256,  # Number of samples
            pos_fraction=0.5,  # The ratio of positive samples in the total samples.
            neg_pos_ub=-1,  # The upper bound of negative samples based on the number of positive samples.
            add_gt_as_proposals=False),  # Whether add GT as proposals after sampling.
        allowed_border=-1,  # The border allowed after padding for valid anchors.
        pos_weight=-1,  # The weight of positive samples during training.
        debug=False),  # Whether to set the debug mode
    rpn_proposal=dict(  # The config to generate proposals during training
        nms_across_levels=False,  # Whether to do NMS for boxes across levels
        nms_pre=2000,  # The number of boxes before NMS
        nms_post=1000,  # The number of boxes to be kept by NMS
        max_num=1000,  # The number of boxes to be used after NMS
        nms_thr=0.7,  # The threshold to be used during NMS
        min_bbox_size=0),  # The allowed minimal box size
    rcnn=dict(  # The config for the roi heads.
        assigner=dict(  # Config of assigner for second stage, this is different for that in rpn
            type='MaxIoUAssigner',  # Type of assigner, MaxIoUAssigner is used for all roi_heads for now. Refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/core/bbox/assigners/max_iou_assigner.py#L10 for more details.
            pos_iou_thr=0.5,  # IoU >= threshold 0.5 will be taken as positive samples
            neg_iou_thr=0.5,  # IoU < threshold 0.5 will be taken as negative samples
            min_pos_iou=0.5,  # The minimal IoU threshold to take boxes as positive samples
            match_low_quality=False,  # Whether to match the boxes under low quality (see API doc for more details).
            ignore_iof_thr=-1),  # IoF threshold for ignoring bboxes
        sampler=dict(
            type='RandomSampler',  # Type of sampler, PseudoSampler and other samplers are also supported. Refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/core/bbox/samplers/random_sampler.py#L8 for implementation details.
            num=512,  # Number of samples
            pos_fraction=0.25,  # The ratio of positive samples in the total samples.
            neg_pos_ub=-1,  # The upper bound of negative samples based on the number of positive samples.
            add_gt_as_proposals=True),  # Whether add GT as proposals after sampling.
        mask_size=28,  # Size of mask
        pos_weight=-1,  # The weight of positive samples during training.
        debug=False))  # Whether to set the debug mode
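As an aside on the assigner thresholds above: the sketch below shows how pos_iou_thr and neg_iou_thr split boxes into positive, negative, and ignored samples. It is a simplified illustration I wrote for this post, not the real MaxIoUAssigner, and it leaves out min_pos_iou, match_low_quality, and ignore_iof_thr. The names box_iou and label_box are hypothetical.

```python
def box_iou(a, b):
    """IoU of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def label_box(box, gt_boxes, pos_iou_thr=0.7, neg_iou_thr=0.3):
    """Label one box by its best IoU with any ground-truth box."""
    best = max((box_iou(box, gt) for gt in gt_boxes), default=0.0)
    if best >= pos_iou_thr:
        return 'positive'
    if best < neg_iou_thr:
        return 'negative'
    return 'ignored'


gt = [(0, 0, 100, 100)]
print(label_box((0, 0, 100, 90), gt))       # IoU 0.9 with the RPN thresholds
print(label_box((0, 0, 100, 50), gt))       # IoU 0.5 falls between 0.3 and 0.7
print(label_box((200, 200, 300, 300), gt))  # no overlap
print(label_box((0, 0, 100, 50), gt, pos_iou_thr=0.5, neg_iou_thr=0.5))
```

With the RPN thresholds there is a band between 0.3 and 0.7 that is ignored, while the rcnn setting of 0.5 for both thresholds leaves no such band.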

test_cfg = dict(  # Config for testing hyperparameters for rpn and rcnn
    rpn=dict(  # The config to generate proposals during testing
        nms_across_levels=False,  # Whether to do NMS for boxes across levels
        nms_pre=1000,  # The number of boxes before NMS
        nms_post=1000,  # The number of boxes to be kept by NMS
        max_num=1000,  # The number of boxes to be used after NMS
        nms_thr=0.7,  # The threshold to be used during NMS
        min_bbox_size=0),  # The allowed minimal box size
    rcnn=dict(  # The config for the roi heads.
        score_thr=0.05,  # Threshold to filter out boxes
        nms=dict(  # Config of nms in the second stage
            type='nms',  # Type of nms
            iou_thr=0.5),  # NMS threshold
        max_per_img=100,  # Max number of detections of each image
        mask_thr_binary=0.5))  # Threshold of mask prediction
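As an aside on the rcnn test settings above: score_thr, iou_thr, and max_per_img together decide which boxes survive post-processing. The sketch below is a plain greedy NMS for a single class, written only to illustrate the roles of these three values. It is not mmdetection's implementation, which runs on tensors and handles all classes together, and the names overlap and greedy_nms are hypothetical.

```python
def overlap(a, b):
    """IoU of two rows whose first four values are x1, y1, x2, y2."""
    iw = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    ih = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = iw * ih
    union = ((a[2] - a[0]) * (a[3] - a[1])
             + (b[2] - b[0]) * (b[3] - b[1]) - inter)
    return inter / union if union > 0 else 0.0


def greedy_nms(detections, score_thr=0.05, iou_thr=0.5, max_per_img=100):
    """Greedy NMS over rows [x1, y1, x2, y2, score] of one class."""
    # Drop low-score boxes, then visit the rest from best to worst.
    candidates = sorted((d for d in detections if d[4] > score_thr),
                        key=lambda d: d[4], reverse=True)
    kept = []
    for det in candidates:
        # Keep a box only if it does not overlap a better box too much.
        if all(overlap(det, k) <= iou_thr for k in kept):
            kept.append(det)
    return kept[:max_per_img]


# Made-up detections: two near-duplicates, one separate box, one weak box.
dets = [
    [0, 0, 100, 100, 0.9],
    [5, 5, 105, 105, 0.8],
    [200, 200, 300, 300, 0.7],
    [0, 0, 10, 10, 0.01],
]
print(greedy_nms(dets))
```

In this made-up example the second box is suppressed by the first, and the last box is removed by the score threshold before NMS even starts.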

dataset_type = 'CocoDataset'  # Dataset type, this will be used to define the dataset
data_root = 'data/coco/'  # Root path of data
img_norm_cfg = dict(  # Image normalization config to normalize the input images
    mean=[123.675, 116.28, 103.53],  # Mean values used to pre-training the pre-trained backbone models
    std=[58.395, 57.12, 57.375],  # Standard variance used to pre-training the pre-trained backbone models
    to_rgb=True)  # The channel orders of image used to pre-training the pre-trained backbone models

train_pipeline = [  # Training pipeline
    dict(type='LoadImageFromFile'),  # First pipeline to load images from file path
    dict(
        type='LoadAnnotations',  # Second pipeline to load annotations for current image
        with_bbox=True,  # Whether to use bounding box, True for detection
        with_mask=True,  # Whether to use instance mask, True for instance segmentation
        poly2mask=False),  # Whether to convert the polygon mask to instance mask, set False for acceleration and to save memory
    dict(
        type='Resize',  # Augmentation pipeline that resize the images and their annotations
        img_scale=(1333, 800),  # The largest scale of image
        keep_ratio=True),  # whether to keep the ratio between height and width.
    dict(
        type='RandomFlip',  # Augmentation pipeline that flip the images and their annotations
        flip_ratio=0.5),  # The ratio or probability to flip
    dict(
        type='Normalize',  # Augmentation pipeline that normalize the input images
        mean=[123.675, 116.28, 103.53],  # These keys are the same of img_norm_cfg since the
        std=[58.395, 57.12, 57.375],  # keys of img_norm_cfg are used here as arguments
        to_rgb=True),
    dict(
        type='Pad',  # Padding config
        size_divisor=32),  # The number the padded images should be divisible
    dict(type='DefaultFormatBundle'),  # Default format bundle to gather data in the pipeline
    dict(
        type='Collect',  # Pipeline that decides which keys in the data should be passed to the detector
        keys=['img', 'gt_bboxes', 'gt_labels', 'gt_masks'])
]
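As an aside on the Resize and Pad steps above: with keep_ratio=True the image is scaled so that its long edge is at most 1333 and its short edge at most 800, and Pad then rounds each side up to a multiple of 32. The sketch below reproduces this arithmetic as I understand it, without mmcv. The function names rescale_keep_ratio and pad_to_divisor are made up, and the rounding rule (add 0.5, then truncate) is my assumption about how the library rounds.

```python
import math


def rescale_keep_ratio(width, height, img_scale=(1333, 800)):
    """New (width, height) after an aspect-preserving resize."""
    long_edge, short_edge = max(img_scale), min(img_scale)
    factor = min(long_edge / max(width, height),
                 short_edge / min(width, height))
    return int(width * factor + 0.5), int(height * factor + 0.5)


def pad_to_divisor(width, height, size_divisor=32):
    """Round both sides up to the next multiple of size_divisor."""
    return (math.ceil(width / size_divisor) * size_divisor,
            math.ceil(height / size_divisor) * size_divisor)


resized = rescale_keep_ratio(1920, 1080)
padded = pad_to_divisor(*resized)
print(resized, padded)
```

For a 1920 × 1080 video frame, the long edge is the limiting side, so the frame is scaled to a width of 1333 before padding.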

test_pipeline = [
    dict(type='LoadImageFromFile'),  # First pipeline to load images from file path
    dict(
        type='MultiScaleFlipAug',  # An encapsulation that encapsulates the testing augmentations
        img_scale=(1333, 800),  # Decides the largest scale for testing, used for the Resize pipeline
        flip=False,  # Whether to flip images during testing
        transforms=[
            dict(type='Resize',  # Use resize augmentation
                 keep_ratio=True),  # Whether to keep the ratio between height and width, the img_scale set here will be suppressed by the img_scale set above.
            dict(type='RandomFlip'),  # Though RandomFlip is added in pipeline, it is not used because flip=False
            dict(
                type='Normalize',  # Normalization config, the values are from img_norm_cfg
                mean=[123.675, 116.28, 103.53],
                std=[58.395, 57.12, 57.375],
                to_rgb=True),
            dict(
                type='Pad',  # Padding config to pad images divisible by 32.
                size_divisor=32),
            dict(
                type='ImageToTensor',  # convert image to tensor
                keys=['img']),
            dict(
                type='Collect',  # Collect pipeline that collect necessary keys for testing.
                keys=['img'])
        ])
]

data = dict(
    samples_per_gpu=2,  # Batch size of a single GPU
    workers_per_gpu=2,  # Worker to pre-fetch data for each single GPU
    train=dict(  # Train dataset config
        type='CocoDataset',  # Type of dataset, refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/datasets/coco.py#L19 for details.
        ann_file='data/coco/annotations/instances_train2017.json',  # Path of annotation file
        img_prefix='data/coco/train2017/',  # Prefix of image path
        pipeline=[  # pipeline, this is passed by the train_pipeline created before.
            dict(type='LoadImageFromFile'),
            dict(
                type='LoadAnnotations',
                with_bbox=True,
                with_mask=True,
                poly2mask=False),
            dict(type='Resize', img_scale=(1333, 800), keep_ratio=True),
            dict(type='RandomFlip', flip_ratio=0.5),
            dict(
                type='Normalize',
                mean=[123.675, 116.28, 103.53],
                std=[58.395, 57.12, 57.375],
                to_rgb=True),
            dict(type='Pad', size_divisor=32),
            dict(type='DefaultFormatBundle'),
            dict(
                type='Collect',
                keys=['img', 'gt_bboxes', 'gt_labels', 'gt_masks'])
        ]),
    val=dict(  # Validation dataset config
        type='CocoDataset',
        ann_file='data/coco/annotations/instances_val2017.json',
        img_prefix='data/coco/val2017/',
        pipeline=[  # Pipeline is passed by test_pipeline created before
            dict(type='LoadImageFromFile'),
            dict(
                type='MultiScaleFlipAug',
                img_scale=(1333, 800),
                flip=False,
                transforms=[
                    dict(type='Resize', keep_ratio=True),
                    dict(type='RandomFlip'),
                    dict(
                        type='Normalize',
                        mean=[123.675, 116.28, 103.53],
                        std=[58.395, 57.12, 57.375],
                        to_rgb=True),
                    dict(type='Pad', size_divisor=32),
                    dict(type='ImageToTensor', keys=['img']),
                    dict(type='Collect', keys=['img'])
                ])
        ]),
    test=dict(  # Test dataset config, modify the ann_file for test-dev/test submission
        type='CocoDataset',
        ann_file='data/coco/annotations/instances_val2017.json',
        img_prefix='data/coco/val2017/',
        pipeline=[  # Pipeline is passed by test_pipeline created before
            dict(type='LoadImageFromFile'),
            dict(
                type='MultiScaleFlipAug',
                img_scale=(1333, 800),
                flip=False,
                transforms=[
                    dict(type='Resize', keep_ratio=True),
                    dict(type='RandomFlip'),
                    dict(
                        type='Normalize',
                        mean=[123.675, 116.28, 103.53],
                        std=[58.395, 57.12, 57.375],
                        to_rgb=True),
                    dict(type='Pad', size_divisor=32),
                    dict(type='ImageToTensor', keys=['img']),
                    dict(type='Collect', keys=['img'])
                ])
        ],
        samples_per_gpu=2  # Batch size of a single GPU used in testing
    ))
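As an aside on samples_per_gpu above: the mmdetection documentation notes that the default learning rate in its configs is meant for 8 GPUs with 2 images per GPU (batch size 16), and recommends scaling the learning rate linearly with the batch size. Since the commands in this post train on a single GPU, the sketch below works out the adjusted value. The function name scaled_lr is made up, and the defaults of 0.02 and 16 are taken from that documented convention.

```python
def scaled_lr(num_gpus, samples_per_gpu, base_lr=0.02, base_batch_size=16):
    """Learning rate scaled linearly with the total batch size."""
    return base_lr * (num_gpus * samples_per_gpu) / base_batch_size


for gpus in (1, 2, 8):
    print(gpus, "GPU(s):", scaled_lr(gpus, samples_per_gpu=2))
```

Under this rule, training with --gpus 1 and samples_per_gpu=2 suggests a learning rate of about 0.0025 rather than 0.02. Treat this as a starting point to tune, not a guarantee of convergence.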

evaluation = dict(  # The config to build the evaluation hook, refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/core/evaluation/eval_hooks.py#L7 for more details.
    interval=1,  # Evaluation interval
    metric=['bbox', 'segm'])  # Metrics used during evaluation
optimizer = dict(  # Config used to build optimizer, support all the optimizers in PyTorch whose arguments are also the same as those in PyTorch
    type='SGD',  # Type of optimizers, refer to https://github.com/open-mmlab/mmdetection/blob/master/mmdet/core/optimizer/default_constructor.py#L13 for more details
    lr=0.02,  # Learning rate of optimizers, see detail usages of the parameters in the documentation of PyTorch
    momentum=0.9,  # Momentum
    weight_decay=0.0001)  # Weight decay of SGD
optimizer_config = dict(  # Config used to build the optimizer hook, refer to https://github.com/open-mmlab/mmcv/blob/master/mmcv/runner/hooks/optimizer.py#L8 for implementation details.
    grad_clip=None)  # Most of the methods do not use gradient clip
lr_config = dict(  # Learning rate scheduler config used to register LrUpdater hook
    policy='step',  # The policy of scheduler, also support CosineAnnealing, Cyclic, etc. Refer to details of supported LrUpdater from https://github.com/open-mmlab/mmcv/blob/master/mmcv/runner/hooks/lr_updater.py#L9.
    warmup='linear',  # The warmup policy, also support `exp` and `constant`.
    warmup_iters=500,  # The number of iterations for warmup
    warmup_ratio=0.001,  # The ratio of the starting learning rate used for warmup
    step=[8, 11])  # Steps to decay the learning rate
total_epochs = 12  # Total epochs to train the model
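As an aside on lr_config above: the step policy with linear warmup can be written out as a small function. This is a sketch of my understanding of the schedule, not mmcv's hook code. The decay factor gamma=0.1 is an assumption (it does not appear in the config), epochs and iterations are counted from 0, and the name lr_at is hypothetical.

```python
def lr_at(epoch, iteration, base_lr=0.02, steps=(8, 11), gamma=0.1,
          warmup_iters=500, warmup_ratio=0.001):
    """Learning rate for a 0-based epoch and a 0-based global iteration."""
    # Step decay: multiply by gamma once for every milestone already reached.
    decays = sum(1 for s in steps if epoch >= s)
    lr = base_lr * gamma ** decays
    # Linear warmup: ramp from warmup_ratio * lr up to lr.
    if iteration < warmup_iters:
        k = (1 - iteration / warmup_iters) * (1 - warmup_ratio)
        lr *= 1 - k
    return lr


print(lr_at(0, 0))        # start of warmup
print(lr_at(0, 500))      # warmup finished
print(lr_at(8, 100000))   # after the first decay step
print(lr_at(11, 100000))  # after the second decay step
```

With these settings, the learning rate starts at roughly 0.001 times the base value, reaches 0.02 after 500 iterations, and is divided by 10 at epochs 8 and 11 of the 12-epoch schedule.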

checkpoint_config = dict(  # Config to set the checkpoint hook, Refer to https://github.com/open-mmlab/mmcv/blob/master/mmcv/runner/hooks/checkpoint.py for implementation.
    interval=1)  # The save interval is 1
log_config = dict(  # config to register logger hook
    interval=50,  # Interval to print the log
    hooks=[
        # dict(type='TensorboardLoggerHook')  # The Tensorboard logger is also supported
        dict(type='TextLoggerHook')
    ])  # The logger used to record the training process.
dist_params = dict(backend='nccl')  # Parameters to setup distributed training, the port can also be set.
log_level = 'INFO'  # The level of logging.
load_from = None  # load models as a pre-trained model from a given path. This will not resume training.
resume_from = None  # Resume checkpoints from a given path, the training will be resumed from the epoch when the checkpoint was saved.
workflow = [('train', 1)]  # Workflow for runner. [('train', 1)] means there is only one workflow and the workflow named 'train' is executed once. The workflow trains the model by 12 epochs according to the total_epochs.
work_dir = 'work_dir'  # Directory to save the model checkpoints and logs for the current experiments.