Study Notes for Introduction to Deep Learning: Deep Learning

Deep Learning

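Deep learning refers to deep neural networks with many stacked layers. Starting from the networks introduced so far, a deep network can be built simply by stacking more layers. This chapter looks at the properties, open problems, and possibilities of deep learning, and then gives an overview of the current state of the field.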

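1. Making the Network Deeper

We have already learned a good deal about neural networks: the various layers that make them up, techniques that make training effective, CNNs (which are especially effective for images), and methods for optimizing parameters. All of these are important techniques in deep learning. In this section we bring them together to build a deep network and take on handwritten digit recognition with the MNIST dataset.

1.1 Toward a Deeper Network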

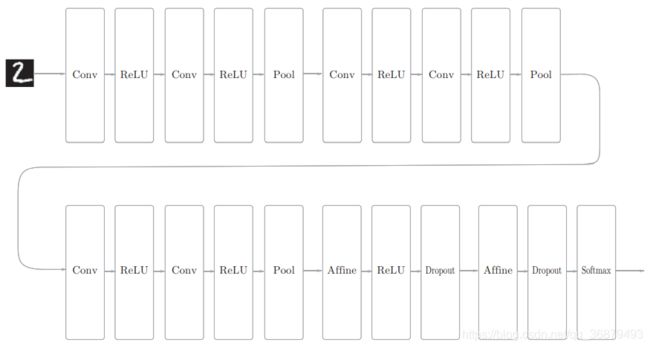

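Without further ado, let us build a CNN with the structure shown in the figure above, a network deeper than any we have built so far. It is modeled on VGG, which the next section introduces.

As the figure shows, this network has more layers than any network implemented so far. Every convolution layer uses a small 3 × 3 filter, and the number of channels grows as the layers get deeper: from the first convolution layer onward, the channel counts are 16, 16, 32, 32, 64, and 64. Pooling layers are inserted to gradually reduce the spatial size of the intermediate data, and Dropout layers are used after the fully connected layers near the output.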
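To see how the pooling layers shrink the intermediate data, the spatial sizes can be traced layer by layer. The sketch below is an illustration, not part of the original implementation: `conv_output_size`, `pool_output_size`, and `trace_spatial_sizes` are hypothetical helper names, and the padding values (1 for every convolution except the fourth, which uses 2) are taken from the default parameters of the implementation in this section. It assumes 28 × 28 MNIST input, stride-1 convolutions, and 2 × 2 pooling with stride 2.

```python
def conv_output_size(input_size, filter_size, pad, stride):
    """Spatial output size of a convolution: (H + 2P - FH) / S + 1."""
    return (input_size + 2 * pad - filter_size) // stride + 1


def pool_output_size(input_size, pool_size=2, stride=2):
    """Spatial output size of a pooling layer without padding."""
    return (input_size - pool_size) // stride + 1


def trace_spatial_sizes(input_size=28):
    """Return (layer_name, channels, spatial_size) after each conv/pool layer."""
    # (filter_num, pad) for each convolution; 3x3 filters and stride 1 throughout.
    conv_specs = [(16, 1), (16, 1), (32, 1), (32, 2), (64, 1), (64, 1)]
    size = input_size
    trace = []
    for i, (channels, pad) in enumerate(conv_specs, start=1):
        size = conv_output_size(size, filter_size=3, pad=pad, stride=1)
        trace.append((f"conv{i}", channels, size))
        if i % 2 == 0:  # a pooling layer follows every second convolution
            size = pool_output_size(size)
            trace.append((f"pool{i // 2}", channels, size))
    return trace


for name, channels, size in trace_spatial_sizes():
    print(f"{name}: {channels} x {size} x {size}")
```

Under these assumptions the last pooling layer outputs 64 channels of size 4 × 4, which matches the `64*4*4` input size of the first fully connected layer in the implementation.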

The network uses He initialization for the initial weights and Adam to update the weight parameters. In summary, it has the following characteristics.

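- Convolution layers based on small 3 × 3 filters;

- ReLU as the activation function;

- Dropout layers after the fully connected layers;

- Optimization with Adam;

- He initialization for the initial weights.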

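As these characteristics show, the network in the figure above combines many of the neural network techniques introduced earlier. We now train this network. To state the conclusion first: it reaches a recognition accuracy of 99.38%, which is excellent performance.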
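He initialization scales Gaussian random weights by sqrt(2 / n), where n is the number of inputs feeding each neuron. The following is a minimal sketch of that scaling for one 3 × 3 convolution layer with 16 input channels and 16 filters. The helper name `he_scale` and the fixed random seed are assumptions made for illustration; the implementation in this section computes the same scale inline.

```python
import numpy as np


def he_scale(fan_in):
    """Standard deviation recommended for layers followed by ReLU."""
    return np.sqrt(2.0 / fan_in)


# Each output neuron of a 3x3 convolution over 16 channels sees 16*3*3 inputs.
fan_in = 16 * 3 * 3
rng = np.random.default_rng(0)  # fixed seed only to make the sketch repeatable

# Weight tensor shaped (filter_num, input_channels, filter_height, filter_width).
W = he_scale(fan_in) * rng.standard_normal((16, 16, 3, 3))

print("target std:", he_scale(fan_in))
print("sample std:", W.std())
```

The sample standard deviation should land close to the target, which is the point of the scaling: activations keep a comparable spread from layer to layer.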

```python
import pickle
from collections import OrderedDict

import numpy as np

# Provides Convolution, Relu, Pooling, Affine, Dropout, and SoftmaxWithLoss
from common.layers import *


class DeepConvNet:
    """A high-accuracy ConvNet with a recognition rate above 99%.

    The network structure is:
        conv - relu - conv - relu - pool -
        conv - relu - conv - relu - pool -
        conv - relu - conv - relu - pool -
        affine - relu - dropout - affine - dropout - softmax
    """

    def __init__(self, input_dim=(1, 28, 28),
                 conv_param_1={'filter_num': 16, 'filter_size': 3, 'pad': 1, 'stride': 1},
                 conv_param_2={'filter_num': 16, 'filter_size': 3, 'pad': 1, 'stride': 1},
                 conv_param_3={'filter_num': 32, 'filter_size': 3, 'pad': 1, 'stride': 1},
                 # pad=2 grows 14x14 to 16x16, so the three poolings end at 4x4
                 conv_param_4={'filter_num': 32, 'filter_size': 3, 'pad': 2, 'stride': 1},
                 conv_param_5={'filter_num': 64, 'filter_size': 3, 'pad': 1, 'stride': 1},
                 conv_param_6={'filter_num': 64, 'filter_size': 3, 'pad': 1, 'stride': 1},
                 hidden_size=50, output_size=10):
        # Initialize weights ===========
        # Number of previous-layer neurons each neuron connects to, per layer
        # (TODO: compute automatically)
        pre_node_nums = np.array([1*3*3, 16*3*3, 16*3*3, 32*3*3, 32*3*3, 64*3*3, 64*4*4, hidden_size])
        # He initialization, recommended when using ReLU
        weight_init_scales = np.sqrt(2.0 / pre_node_nums)

        self.params = {}
        pre_channel_num = input_dim[0]
        conv_params = [conv_param_1, conv_param_2, conv_param_3,
                       conv_param_4, conv_param_5, conv_param_6]
        for idx, conv_param in enumerate(conv_params):
            self.params['W' + str(idx+1)] = weight_init_scales[idx] * np.random.randn(
                conv_param['filter_num'], pre_channel_num,
                conv_param['filter_size'], conv_param['filter_size'])
            self.params['b' + str(idx+1)] = np.zeros(conv_param['filter_num'])
            pre_channel_num = conv_param['filter_num']
        self.params['W7'] = weight_init_scales[6] * np.random.randn(64*4*4, hidden_size)
        self.params['b7'] = np.zeros(hidden_size)
        self.params['W8'] = weight_init_scales[7] * np.random.randn(hidden_size, output_size)
        self.params['b8'] = np.zeros(output_size)

        # Build layers ===========
        self.layers = []
        self.layers.append(Convolution(self.params['W1'], self.params['b1'],
                                       conv_param_1['stride'], conv_param_1['pad']))
        self.layers.append(Relu())
        self.layers.append(Convolution(self.params['W2'], self.params['b2'],
                                       conv_param_2['stride'], conv_param_2['pad']))
        self.layers.append(Relu())
        self.layers.append(Pooling(pool_h=2, pool_w=2, stride=2))
        self.layers.append(Convolution(self.params['W3'], self.params['b3'],
                                       conv_param_3['stride'], conv_param_3['pad']))
        self.layers.append(Relu())
        self.layers.append(Convolution(self.params['W4'], self.params['b4'],
                                       conv_param_4['stride'], conv_param_4['pad']))
        self.layers.append(Relu())
        self.layers.append(Pooling(pool_h=2, pool_w=2, stride=2))
        self.layers.append(Convolution(self.params['W5'], self.params['b5'],
                                       conv_param_5['stride'], conv_param_5['pad']))
        self.layers.append(Relu())
        self.layers.append(Convolution(self.params['W6'], self.params['b6'],
                                       conv_param_6['stride'], conv_param_6['pad']))
        self.layers.append(Relu())
        self.layers.append(Pooling(pool_h=2, pool_w=2, stride=2))
        self.layers.append(Affine(self.params['W7'], self.params['b7']))
        self.layers.append(Relu())
        self.layers.append(Dropout(0.5))
        self.layers.append(Affine(self.params['W8'], self.params['b8']))
        self.layers.append(Dropout(0.5))

        self.last_layer = SoftmaxWithLoss()

    def predict(self, x, train_flg=False):
        for layer in self.layers:
            # Only Dropout behaves differently between training and inference
            if isinstance(layer, Dropout):
                x = layer.forward(x, train_flg)
            else:
                x = layer.forward(x)
        return x

    def loss(self, x, t):
        y = self.predict(x, train_flg=True)
        return self.last_layer.forward(y, t)

    def accuracy(self, x, t, batch_size=100):
        # Convert one-hot labels to class indices
        if t.ndim != 1:
            t = np.argmax(t, axis=1)

        acc = 0.0
        for i in range(int(x.shape[0] / batch_size)):
            tx = x[i*batch_size:(i+1)*batch_size]
            tt = t[i*batch_size:(i+1)*batch_size]
            y = self.predict(tx, train_flg=False)
            y = np.argmax(y, axis=1)
            acc += np.sum(y == tt)

        return acc / x.shape[0]

    def gradient(self, x, t):
        # forward
        self.loss(x, t)

        # backward
        dout = 1
        dout = self.last_layer.backward(dout)

        tmp_layers = self.layers.copy()
        tmp_layers.reverse()
        for layer in tmp_layers:
            dout = layer.backward(dout)

        # Collect gradients from the layers that hold weights
        grads = {}
        for i, layer_idx in enumerate((0, 2, 5, 7, 10, 12, 15, 18)):
            grads['W' + str(i+1)] = self.layers[layer_idx].dW
            grads['b' + str(i+1)] = self.layers[layer_idx].db

        return grads

    def save_params(self, file_name="params.pkl"):
        params = {}
        for key, val in self.params.items():
            params[key] = val
        with open(file_name, 'wb') as f:
            pickle.dump(params, f)

    def load_params(self, file_name="params.pkl"):
        with open(file_name, 'rb') as f:
            params = pickle.load(f)
        for key, val in params.items():
            self.params[key] = val

        # Point the weighted layers at the loaded parameters
        for i, layer_idx in enumerate((0, 2, 5, 7, 10, 12, 15, 18)):
            self.layers[layer_idx].W = self.params['W' + str(i+1)]
            self.layers[layer_idx].b = self.params['b' + str(i+1)]
```
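The tuple `(0, 2, 5, 7, 10, 12, 15, 18)` used in `gradient` and `load_params` gives the positions in `self.layers` of the layers that hold weights. As a sanity check, those positions can be derived from the layer order. This is a minimal sketch in which plain strings stand in for the layer objects; `parameterized_layer_indices` is a hypothetical helper, not part of the original code.

```python
def parameterized_layer_indices(layer_names, with_params=("conv", "affine")):
    """Return the positions of layers that own weights (W) and biases (b)."""
    return tuple(i for i, name in enumerate(layer_names) if name in with_params)


# Layer order taken from the class docstring; the final softmax is kept
# separately as last_layer, so it is not part of this list.
conv_block = ["conv", "relu", "conv", "relu", "pool"]
layer_names = conv_block * 3 + ["affine", "relu", "dropout", "affine", "dropout"]

indices = parameterized_layer_indices(layer_names)
print(indices)  # (0, 2, 5, 7, 10, 12, 15, 18)
```

Deriving the indices this way would avoid having to update the hard-coded tuple by hand if the layer order ever changed.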

```python
# Training script; assumes the class above is saved as deep_convnet.py
import numpy as np
import matplotlib.pyplot as plt

from dataset.mnist import load_mnist
from deep_convnet import DeepConvNet
from common.trainer import Trainer

# Keep the images as (1, 28, 28) arrays so the convolution layers can use them
(x_train, t_train), (x_test, t_test) = load_mnist(flatten=False)

network = DeepConvNet()
trainer = Trainer(network, x_train, t_train, x_test, t_test,
                  epochs=20, mini_batch_size=100,
                  optimizer='Adam', optimizer_param={'lr': 0.001},
                  evaluate_sample_num_per_epoch=1000)
trainer.train()

# Save the parameters
network.save_params("deep_convnet_params.pkl")
print("Saved Network Parameters!")
```
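The training script selects Adam with a learning rate of 0.001. For reference, one Adam update in its standard textbook form can be sketched as follows. This is an illustration under assumptions: `adam_step` is a hypothetical function, the values `beta1=0.9`, `beta2=0.999`, and `eps=1e-8` are the commonly used defaults rather than values stated in these notes, and the optimizer inside `common.trainer` may be written differently.

```python
import numpy as np


def adam_step(param, grad, m, v, t, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
    """Apply one Adam update and return the new (param, m, v).

    m and v are running averages of the gradient and the squared gradient;
    t is the 1-based step count used for bias correction.
    """
    m = beta1 * m + (1.0 - beta1) * grad
    v = beta2 * v + (1.0 - beta2) * grad ** 2
    m_hat = m / (1.0 - beta1 ** t)  # correct the bias toward zero at early steps
    v_hat = v / (1.0 - beta2 ** t)
    param = param - lr * m_hat / (np.sqrt(v_hat) + eps)
    return param, m, v


# Toy usage: take 100 steps on f(w) = w^2, whose gradient is 2w.
w = np.array([1.0])
m = np.zeros_like(w)
v = np.zeros_like(w)
for t in range(1, 101):
    w, m, v = adam_step(w, 2.0 * w, m, v, t)
print(w)
```

Because the update divides by the running gradient magnitude, each early step moves the parameter by roughly the learning rate regardless of how large the raw gradient is.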

2. Application Examples of Deep Learning

- Object detection

- Image segmentation

- Image caption generation