Hand-Coding ResNet50: A Quick Reimplementation for Deep Learning Beginners and Interview Prep

References:

[1] ResNet hand-written code implementation (ResNet手写代码实现)

[2] ResNet50 reimplementation notes (ResNet50复现笔记)

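Intended audience: readers who already have some experience with PyTorch and `torch.nn` and a basic grasp of the ResNet architecture and its principles. This tutorial is a quick walk through the full ResNet pipeline, meant to help with algorithm-engineer interviews or to build engineering skills.

The code here is adapted, with minor changes, from code written by others. It follows the parameter configuration given in the paper and has been debugged to run end to end.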

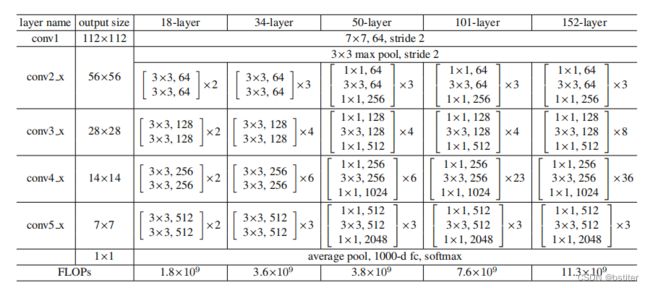

ResNet50 parameter configuration:

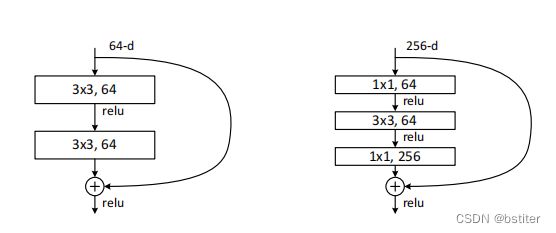

Each ResNet50 block has the structure shown on the right of the figure below:

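Points to watch when reimplementing:

- Each stage (the table contains four stages besides conv1) is built by repeating the block in the figure above several times; the stages differ only in out_channels. The first block of each stage uses a custom stride, and the remaining blocks use a fixed stride=1.

- The custom strides of the four stages are [1, 2, 2, 2].

- In networks shallower than ResNet50, each block contains two 3×3 kernels and out_channels stays unchanged. In ResNet50 and deeper, each block contains three kernels (1×1, 3×3, 1×1). Note that the third kernel multiplies out_channels by 4, so the next repeated block must take that expanded channel count as its input dimension.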
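The channel and stride bookkeeping in the list above can be sketched in plain Python before touching any `torch.nn` code. The helper `plan_blocks` and its parameter names are hypothetical, introduced here only for illustration; the stage widths (64, 128, 256, 512), the repeat counts [3, 4, 6, 3], and the stem width of 64 are taken from the rest of this tutorial.

```python
# Hypothetical helper (not part of the tutorial's model code): lists the
# (stage, in_channels, mid_channels, out_channels, stride) of every block.
def plan_blocks(layers=(3, 4, 6, 3), widths=(64, 128, 256, 512),
                strides=(1, 2, 2, 2), stem_channels=64, expansion=4):
    plan = []
    in_c = stem_channels
    for stage, (n, width, stride) in enumerate(zip(layers, widths, strides), start=1):
        for i in range(n):
            s = stride if i == 0 else 1   # only the first block uses the stage stride
            plan.append((stage, in_c, width, width * expansion, s))
            in_c = width * expansion      # the next block receives the expanded channels
    return plan


if __name__ == "__main__":
    for row in plan_blocks():
        print("stage{} in={:4d} mid={:3d} out={:4d} stride={}".format(*row))
```

Reading the printed rows top to bottom shows the point of the last bullet: inside a stage, every block after the first takes 4 times the stage width as its input.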

ResNetBlock (also called Bottleneck)

```python
import torch
import torch.nn as nn


class ResNetBlock(nn.Module):
    """Bottleneck block: 1x1 -> 3x3 -> 1x1 convolutions plus a shortcut."""

    def __init__(self, inp_c, out_c, stride=1):
        super().__init__()
        self.block = nn.Sequential(
            nn.Conv2d(inp_c, out_c, 1, stride=stride),
            nn.BatchNorm2d(out_c),
            nn.ReLU(),
            nn.Conv2d(out_c, out_c, 3, stride=1, padding=1),
            nn.BatchNorm2d(out_c),
            nn.ReLU(),
            # ResNet50 and deeper: the last kernel expands the channels 4x
            nn.Conv2d(out_c, out_c * 4, 1, stride=1),
            nn.BatchNorm2d(out_c * 4),
            # No ReLU here: the paper applies it after the shortcut is added
        )
        self.relu = nn.ReLU()
        self.downsample = None
        if stride != 1 or inp_c != out_c * 4:
            # Project the input so that its shape matches the block output
            self.downsample = nn.Sequential(
                nn.Conv2d(inp_c, out_c * 4, 1, stride=stride),
                nn.BatchNorm2d(out_c * 4),
            )

    def forward(self, x):
        residual = x
        out = self.block(x)
        if self.downsample is not None:
            residual = self.downsample(x)
        out = out + residual
        return self.relu(out)
```
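The condition that decides whether a block gets a `downsample` branch can be checked in isolation. The sketch below is a plain-Python mirror of that `if` test; the function name `needs_projection` is hypothetical and exists only for this illustration.

```python
# Hypothetical mirror of the block's `downsample` condition: the identity
# shortcut only works when neither the spatial size nor the channel count changes.
def needs_projection(inp_c, out_c, stride, expansion=4):
    return stride != 1 or inp_c != out_c * expansion


if __name__ == "__main__":
    # (inp_c, out_c, stride) for a few representative ResNet50 blocks
    cases = {
        "stage1, block 1": (64, 64, 1),    # channels grow 64 -> 256
        "stage1, block 2": (256, 64, 1),   # 256 -> 256, same spatial size
        "stage2, block 1": (256, 128, 2),  # channels grow and stride is 2
    }
    for name, args in cases.items():
        print(name, "needs projection:", needs_projection(*args))
```

Under these assumptions only the first block of each stage needs the 1×1 projection; the remaining blocks add the input back unchanged.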

ResNet50

```python
class ResNet(nn.Module):

    def __init__(self, layers, num_classes=1000):
        super().__init__()
        self.in_channel = 64

        # Stem (conv1 in the table): maps the 3 input channels to 64
        self.stem_block = nn.Sequential(
            nn.Conv2d(3, self.in_channel, kernel_size=7, stride=2, padding=3),
            nn.BatchNorm2d(self.in_channel),
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=3, stride=2, padding=1),
        )

        # Four stages
        self.stage1 = self.make_layer(ResNetBlock, 64, layers[0], stride=1)
        self.stage2 = self.make_layer(ResNetBlock, 128, layers[1], stride=2)
        self.stage3 = self.make_layer(ResNetBlock, 256, layers[2], stride=2)
        self.stage4 = self.make_layer(ResNetBlock, 512, layers[3], stride=2)

        # Head: 1000-way classification.
        # AvgPool2d(7) assumes the 7x7 feature map produced by a 224x224 input.
        self.avg_pool = nn.AvgPool2d(7)
        self.fc = nn.Linear(512 * 4, num_classes)

    def make_layer(self, block, out_c, num_blocks, stride):
        # Custom stride for the first block, then stride 1 for the rest
        stride_list = [stride] + [1] * (num_blocks - 1)
        block_list = []
        for stride_ in stride_list:
            block_list.append(block(self.in_channel, out_c, stride=stride_))
            # The block outputs out_c * 4 channels, not out_c
            self.in_channel = out_c * 4
        return nn.Sequential(*block_list)

    def forward(self, x):
        # Stem
        out = self.stem_block(x)
        # Stages
        out = self.stage1(out)
        out = self.stage2(out)
        out = self.stage3(out)
        out = self.stage4(out)
        # Head
        out = self.avg_pool(out)
        out = torch.flatten(out, 1)
        out = self.fc(out)
        return out
```
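To see why the head uses a 7×7 average pool followed by a `512 * 4`-wide linear layer, it helps to trace the spatial size through the network with the standard convolution output-size formula, floor((n + 2p - k) / s) + 1. The sketch below is plain Python; `conv_out` and `trace_spatial` are hypothetical helper names, and the kernel, stride, and padding values are the ones used in the model code above.

```python
def conv_out(size, kernel, stride, padding):
    """Output size of a conv or pooling layer along one spatial dimension."""
    return (size + 2 * padding - kernel) // stride + 1


def trace_spatial(size=224):
    """Spatial size after each part of the network (hypothetical helper)."""
    sizes = {}
    size = conv_out(size, kernel=7, stride=2, padding=3)
    sizes["conv1"] = size
    size = conv_out(size, kernel=3, stride=2, padding=1)
    sizes["maxpool"] = size
    for name, stride in zip(("stage1", "stage2", "stage3", "stage4"), (1, 2, 2, 2)):
        # Only the strided 1x1 conv in the first block changes the size;
        # the 3x3 conv (padding 1) and the stride-1 1x1 convs preserve it.
        size = conv_out(size, kernel=1, stride=stride, padding=0)
        sizes[name] = size
    # AvgPool2d(7) uses stride 7 by default (stride = kernel size)
    sizes["avg_pool"] = conv_out(size, kernel=7, stride=7, padding=0)
    return sizes


if __name__ == "__main__":
    print(trace_spatial(224))
    print(trace_spatial(448))
```

For a 224×224 input the pooled map is 1×1, so flattening yields exactly 2048 features. For a 448×448 input the pooled map is 2×2, so the flattened vector no longer matches `nn.Linear(512 * 4, num_classes)`; the fixed `AvgPool2d(7)` ties the model to inputs of roughly the training resolution.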

Main function

```python
def main():
    x = torch.rand(4, 3, 224, 224)  # batch size 4, 3 channels, 224x224
    net = ResNet([3, 4, 6, 3])  # number of blocks in each of the four stages
    output = net(x)
    print(output.shape)


if __name__ == '__main__':
    main()
```

Output

```text
torch.Size([4, 1000])
```
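The repeat counts `[3, 4, 6, 3]` are also where the "50" in the name comes from: one stem convolution, three convolutions per bottleneck block, and one fully connected layer. A small sketch of that arithmetic follows; `weighted_layers` is a hypothetical name, and the repeat counts for the 101- and 152-layer variants come from the ResNet paper rather than from this tutorial.

```python
# Hypothetical helper: counts conv1, the convolutions inside the blocks,
# and the final fc layer. Projection shortcuts are not counted.
def weighted_layers(layers, convs_per_block=3):
    return 1 + convs_per_block * sum(layers) + 1


if __name__ == "__main__":
    print(weighted_layers([3, 4, 6, 3]))    # configuration used in this tutorial
    print(weighted_layers([3, 4, 23, 3]))   # repeat counts for the 101-layer variant
    print(weighted_layers([3, 8, 36, 3]))   # repeat counts for the 152-layer variant
```

Assuming the `ResNet` class above, passing one of the other lists to the constructor should give the correspondingly deeper network, since `make_layer` only depends on the repeat counts.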