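- Astronomical Image Processing: Galaxy Classification and Celestial Object Localization

xcLeigh

Computer Vision, CV, Image Processing, Classification, Artificial Intelligence, AI, Computer Vision

Astronomical Image Processing: Galaxy Classification and Celestial Object Localization. Contents: 1. Preface; 2. Fundamentals of astronomical image processing (2.1 Acquiring astronomical images; 2.2 Astronomical image formats; 2.3 Basic processing workflow); 3. Preprocessing astronomical images (3.1 Denoising; 3.2 Flat-field correction; 3.3 Bias correction); 4. Galaxy classification (4.1 Galaxy classification systems; 4.2 Methods based on feature extraction; 4.3 Methods based on deep learning); 5. Celestial object localization (5.1 Celestial coordinate systems; 5.2 Methods based on star-map matching; 5.3 Methods based on deep learning); 6. Summary and outlook; To the reader. 1.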

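- Deep Learning: CNN (3)

飘涯

Preface: the previous post covered the basic LeNet; this one introduces several other network architectures. CNN-AlexNet: the network structure is shown in the figure below. As the figure shows, it trains on two GPUs and adds an LRN normalization layer, which in essence also serves to keep the activation function from saturating. Dropout is used to prevent overfitting. Fine-tuning AlexNet produced ZF-Net. CNN-GoogLeNet: GoogLeNet borrows from NIN, adding 1×1 convolution kernels followed by ReLU activations to the original convolution process. This not only improves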

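- MicroAlgo technology breakthrough: quantum algorithms for feedforward neural networks help transform neural networks

MicroTech2025

Quantum Computing, Algorithms, Neural Networks

With quantum computing and machine learning both advancing rapidly, industry is moving toward a new era that merges the two fields. Against this backdrop, MicroAlgo (NASDAQ: MLGO) has developed a quantum algorithm for feedforward neural networks that breaks through the performance bottlenecks classical neural networks face in training and evaluation. The algorithm builds on the classical feedforward and backpropagation algorithms and, drawing on the computing power of quantum hardware, greatly improves training and evaluation efficiency while bringing a natural resistance to overfitting. Feedforward neural networks are a core deep learning architecture, widely used in image classification,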

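- NVIDIA Triton Inference Server Explained

leo0308

Fundamentals, Robotics, Triton, Artificial Intelligence

1. Introduction to Triton Inference Server. Triton Inference Server (Triton for short, formerly NVIDIA TensorRT Inference Server) is an open-source, high-performance inference server from NVIDIA, designed for deploying AI models and serving inference. It supports multiple deep learning frameworks and hardware platforms, helping developers and enterprises deploy AI models to production efficiently. Triton is mainly used to turn model inference into a service, that is, to take a trained model and, through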

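- Java NLP Alchemy: From Bag-of-Words to Deep Learning, Building a Language Cube for the AI Era

墨夶

Java Learning Resources, Artificial Intelligence, java, Natural Language Processing

1. The "three musketeers" of Java NLP: frameworks and toolchain. 1.1 Apache OpenNLP: the "Swiss Army knife" of traditional NLP. Goal: implement text classification and entity recognition with a bag-of-words model. Code walkthrough: forging a document classifier. // OpenNLP document classifier (based on a bag-of-words model) import opennlp.tools.doccat.*; import opennlp.tools.util.*; public class DocumentClassifier { // train the model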

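- PyTorch & TensorFlow Crash Review: From Basic Syntax to Hands-On Model Deployment (with a Bridge to FPGA Porting)

阿牛的药铺

Algorithm Porting and Deployment, pytorch, tensorflow, FPGA Development

PyTorch & TensorFlow Crash Review: From Basic Syntax to Hands-On Model Deployment (with a Bridge to FPGA Porting). Introduction: why must algorithm-porting engineers master framework fundamentals? For roles that port optical-product algorithms to FPGAs (for example, visible-light or infrared image processing), deep learning frameworks are the "bridge" to deployment: the engineer uses PyTorch/TensorFlow to verify that an algorithm is feasible, then converts the trained model (such as a CNN or an object detector) into a format deployable on an FPGA (ONNX, TFLite). This article uses a "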

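- A Complete Guide to Representation Extraction in Deep Learning Models

ZhangJiQun&MXP

Teaching, 2024 Large Models and Compute, 2021 AI, python, Deep Learning, Artificial Intelligence, python, embedding, Language Models

Methods for extracting representations inside a model. In natural language processing (NLP), a "representation" is the conversion of text (words, phrases, sentences, documents, and so on) into a numerical form a computer can process (such as vectors or matrices); the core goal is to capture the semantics, syntax, and contextual dependencies of language. Representation techniques can be divided along dimensions such as "static vs. dynamic", "with or without context", and "whether knowledge is incorporated". 1. Traditional static representations (no context, mainly word-level): these methods assign each word a fixed vector and ignore its meaning in a specific context (they cannot resolve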

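- [Qualcomm] The Qualcomm SNPE Framework: Introduction, Download, and Usage

Jackilina_Stone

Artificial Intelligence, Qualcomm, SNPE

Contents: I. The Qualcomm SNPE framework (1. Introduction to SNPE; 2. QNN and SNPE; 3. Capabilities; 4. Workflow); II. Installing and using SNPE (1. Download; 2. Setup; 3. Overview of using SNPE). I. The Qualcomm SNPE framework. 1. Introduction to SNPE: SNPE (Snapdragon Neural Processing Engine) is a deep learning inference framework from Qualcomm aimed at mobile and IoT devices. SNPE provides a complete inference framework that supports a variety of deep learning models, including Pytor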

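- Deep Learning Series: Ascend NPU & the CANN Toolkit

Atticus-Orion

Host Computer Knowledge Series, Image Processing Series, Deep Learning Series, Deep Learning, Artificial Intelligence, NPU, Ascend, CANN

Introduction: the Ascend NPU is a neural network processor from Huawei with strong AI compute capability, and the CANN toolkit is a heterogeneous computing architecture for AI scenarios that unlocks the Ascend NPU's performance. Details follow. Ascend NPU architecture: it uses the Da Vinci architecture and is a system on a chip, composed mainly of purpose-built compute units, large-capacity storage units, and the corresponding control units. It integrates multiple CPU cores, including control CPUs and AI CPUs; the former control the overall operation of the processor, while the latter handle complex non-matrix computation. In addition, it has AI Core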

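- Deep Learning Image Classification Dataset: Peach Recognition and Classification

AI街潜水的八角

Deep Learning Image Datasets, Deep Learning, Classification, Artificial Intelligence

This is an image classification dataset suitable for convolutional neural networks such as ResNet and VGG, attention-based algorithms such as SENet and CBAM, and Transformer-based algorithms such as Vision Transformer. Dataset information: peach recognition classes: ['B1','M2','R0','S3']. The training set contains 6,637 images in total, with each class stored in its own folder. Image counts per subfolder: B1: 1,601 images; M2: 1,800 images; R0: 1,601 images; S3: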

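- NumPy: The @ Operator Explained

GG不是gg

numpy, numpy

NumPy: The @ Operator Explained. I. Origins and design goals of the @ operator (1. From mathematics to code: unifying the notation; 2. Design goals). II. Core syntax and rules of the @ operator (1. Basic usage: 2-D matrix multiplication; 2. Matrix semantics of 1-D vectors; 3. Higher-dimensional arrays: batched matrix operations; 4. Broadcasting: flexible shape matching). III. Key differences between @ and other kinds of multiplication (1. Compared with `np.dot()`; 2. Compared with element-wise multiplication `*`; 3. Compared with the `*` operator of `np.matrix`). IV. Typical use cases, from basic to advanced: 1. Deep learning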

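- NLP, Knowledge Graphs, and Large Models: Personal Study Notes

macken9999

Natural Language Processing, Knowledge Graphs, Large Models, Natural Language Processing, Knowledge Graphs, Learning

1. Research and applications of three technologies (natural language processing, knowledge graphs, dialogue systems): https://github.com/lihanghang/NLP-Knowledge-Graph Deep learning, natural language processing (NLP), knowledge graphs: the knowledge graph construction pipeline [ontology construction, knowledge extraction (entity extraction, relation extraction, attribute extraction), knowledge representation, knowledge fusion, knowledge storage], by 元気森林 on cnblogs (博客园): https://www.cnblogs.com/-402/p/16529422.htm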

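- Fixing Python Package Installation Failures: accelerate as an Example

When developing Python projects, we often run into dependencies that fail to install. This post uses the accelerate package as an example to look at the possible causes and fixes. It covers the common reasons Python packages fail to install, how to switch mirror sources, how to install a package manually, and some practical caveats. 1. Background: while developing a deep learning project, I needed to install the accelerate package to optimize model training. However, when I ran the following command: pip ins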

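- From RNNs to Transformer Attention: Tracing the Transformation of Neural Network Architectures

熊猫钓鱼>_>

Neural Networks, rnn, transformer

1. Introduction: in natural language processing and sequence modeling, neural network architectures have evolved significantly. The move from early recurrent neural networks (RNNs) to the modern Transformer architecture marks a major advance in how deep learning handles sequence data. This article compares the two architectures in depth, analyzes how they work and their strengths and weaknesses, and uses experimental results to show how their performance differs in practice. 2. Recurrent neural networks (RNNs). 2.1 Basic principle: a recurrent neural network is an architecture designed specifically for sequence data. The core idea of an RNN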

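- How to Implement Vehicle Recognition in Python

How to Implement Vehicle Recognition in Python. Contents: technical architecture; functional flow; recognition logic; user interface; enhancements; dependencies; main classes; demo. The system is a deep-learning-based vehicle recognition tool with the following core features. Technical architecture: uses a pretrained ResNet50 convolutional neural network (trained on the ImageNet dataset); integrates image-augmentation preprocessing (random cropping, rotation, flipping, and so on); uses majority voting to stabilize predictions; filters results by confidence score. Functional flow: the user selects the image to recognize through the GUI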

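- Python OpenCV Tutorial: A Complete Guide from Beginner to Expert [Book Giveaway at the End]

一键难忘

python, opencv, Programming Languages

Contents: Python OpenCV from Beginner to Expert. 1. Installing OpenCV; 2. Basic operations (2.1 Reading and displaying images; 2.2 Basic image operations); 3. Image processing (3.1 Image conversion; 3.2 Image thresholding; 3.3 Image smoothing); 4. Edge detection and contours (4.1 Canny edge detection; 4.2 Contour detection); 5. Advanced operations (5.1 Feature detection; 5.2 Object tracking; 5.3 Deep learning with OpenCV). Python OpenCV from Beginner to Expert [Book Giveaway at the End]. OpenCV (Ope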

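- Week 8: Cat and Dog Recognition with TensorFlow

降花绘

365-Day Deep Learning TensorFlow Series, tensorflow, Deep Learning, Artificial Intelligence

This is a free-for-members article from the 365-day deep learning training camp (copyright belongs to K同学啊). **Reference article: [TensorFlow Hands-On Introduction | 365-Day Deep Learning Training Camp, Week 8: Cat and Dog Recognition (readable by camp members)]** Author: K同学啊. Contents: I. What to learn this week (1. Build a VGG16 network yourself; 2. Understand model.train_on_batch(); 3. Learn about tqdm and use it to display a progress bar); II. Preface; III. Computer environment; IV. Preparation (1. Import the dependencies; 2.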

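- Deep Learning in Practice: Building Intelligent Models with TensorFlow and Keras

程序员Gloria

Python for Absolute Beginners, TensorFlow, python

Deep Learning in Practice: Building Intelligent Models with TensorFlow and Keras. Deep learning has become an important part of modern artificial intelligence, and Python is one of the main languages used to implement it. This article looks at how to build deep learning models with TensorFlow and Keras, with the necessary code examples and detailed explanations. 1. Introduction to deep learning: deep learning is a branch of machine learning that uses multi-layer neural networks to learn and represent complex patterns in data. It is widely used in image recognition, natural language processing, recommender systems, and other fields.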

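- AI in Vertical Domains: The Road to Innovation in Healthcare, Finance, and Autonomous Driving

AI in Vertical Domains: The Road to Innovation in Healthcare, Finance, and Autonomous Driving. I. Healthcare: AI-driven precision diagnosis and efficiency gains. 1. Medical imaging diagnosis: using deep learning, AI algorithms can now rapidly analyze X-ray, CT, MRI, and other images, helping doctors detect cancer, fractures, and other conditions. For example, Google DeepMind's AI system had a false-detection rate 9.4% lower than human experts in breast cancer screening; the AI system from China's Infervision (推想医疗) can analyze a lung CT scan within 20 seconds, buying critical time for emergency treatment. 2. Drug development: traditional drug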

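- Special Topic: 2025 Cloud Computing and AI Technology Research Trends Report | 200+ Report PDFs and Source Data Tables for Download

Original link: https://tecdat.cn/?p=42935 Keywords: 2025, cloud computing, AI technology, market trends, deep learning, public cloud, research report. Cloud computing and AI are visibly reshaping the business world. Over the past decade, global cloud services revenue has grown 8-fold, China's cloud computing market has passed 600 billion yuan, and the use of deep learning algorithms has surged 400-fold. Behind these numbers lie enterprises' shift from "self-built data centers" to "cloud-native development" and AI's leap from the "laboratory" to "industrial-scale applications". This report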

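- [Deep Learning Q&A] How to Detect and Fix Numerical Instability in RNN Training in Practice

云博士的AI课堂

Large Model Development and Practice, A Harvard Postdoc's Guide to Machine Learning and Deep Learning, Deep Learning, rnn, Artificial Intelligence, tensorflow, pytorch, Neural Networks, Machine Learning

Detecting and fixing numerical instability in RNN training in practice. Contents: introduction and background; how it works; code walkthrough and implementation; use cases and case studies; experiment design and results; performance analysis and comparison; common problems and solutions; novelty and differences; limitations and challenges; recommendations and further research; further reading and resources; figures and interactive content; style and plain-language explanation; discussion. 1. Introduction and background: recurrent neural networks (RNNs) perform well on sequence data, but training often suffers from vanishing and exploding gradients, which cause numerical instability. When the network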

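- [Deep Learning in Practice] Code Walkthrough of Three of the Best Current Image Classification Models

云博士的AI课堂

Large Model Development and Practice, A Harvard Postdoc's Guide to Machine Learning and Deep Learning, Deep Learning, Artificial Intelligence, Classification Models, Machine Learning, Transformer, EfficientNet, ConvNeXt

Below are three example models with outstanding accuracy on current image classification tasks, based on Swin Transformer, EfficientNet, and ConvNeXt respectively. Each example includes: training code (in PyTorch); fine-tuning from pretrained weights (the pretrained option can also be commented out to train from scratch); a dataset directory layout of dataset_root containing buy (first class of images) and nobuy (second class of images); a random 80% training / 20% validation split; and, once per epoch, output of the loss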

- Week 35: Exploring Optimizations for a Diabetes Prediction Model

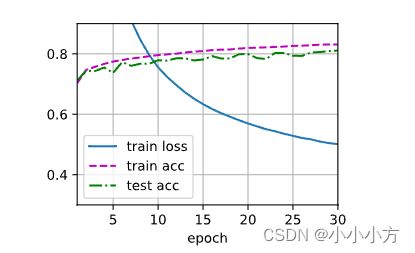

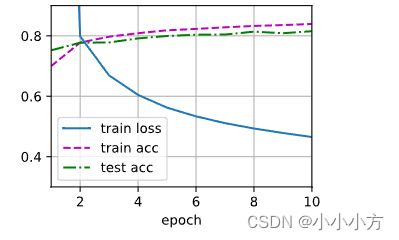

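Contents: Preface; 1. Check the GPU; 2. Inspect the data; 3. Split the dataset; 4. Create the model, compile, and train; 5. Compile and train the model; 6. Visualize the results; 7. Summary. Preface: this post is a learning log from the 365-day deep learning training camp; original author: K同学啊. 1. Check the GPU: import torch.nn as nn; import torch.nn.functional as F; import torchvision, torch  # set the hardware device: use the GPU if one is available, otherwise the CPU; device=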

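- Deep Learning Prerequisites

AmazingMQ

Deep Learning, Artificial Intelligence

1. Tensor. Definition: a tensor is an array of numerical values that may have multiple dimensions (axes). A tensor with one axis corresponds to a vector in mathematics, a tensor with two axes corresponds to a matrix, and tensors with more than two axes have no special mathematical name. import torch  # arange creates a row vector x containing the first 12 integers starting from 0. x = torch.arange(12); print("x=", x)  # x=tensor([0,1,2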

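- Rhizomatic Assemblage (RA) as a Next-Generation Collaborative Intelligence Paradigm: Theory, Architecture, and Applications

由数入道

Artificial Intelligence, Thinking Frameworks, Software Engineering, Agents

I. Introduction: a paradigm crisis and the call of a new continent. 1.1 The twilight of representationalism: the cognitive ceiling of current AI collaboration paradigms. Ever since Alan Turing planted the seed in "Computing Machinery and Intelligence", the long journey of artificial intelligence has been overshadowed by a powerful, implicit philosophical paradigm, which we call "representationalism" (Representationism). Whatever outward form it has taken, from early symbolic logic and expert systems to today's ubiquitous deep neural networks, its core belief has never wavered: the heart of intelligence lies in building a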

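- Manus AI and Multilingual Handwriting Recognition

Manus AI and Multilingual Handwriting Recognition. Background and overview: the state of handwriting recognition and its challenges; Manus AI's core technology and use cases; market demand for multilingual handwriting recognition and its difficulties. Manus AI's technical architecture: deep learning in handwriting recognition; model design for multilingual support; data preprocessing and feature extraction. Key challenges in multilingual handwriting recognition: handling the diversity of characters across languages; adapting to contextual semantics and writing styles; obtaining training data for low-resource languages. Solutions and optimization strategies: transfer learning for multilingual tasks; optimizing and slimming end-to-end models; user feed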

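- Deep Learning Classification of Benign and Malignant Lung Nodules on the LIDC-IDRI Lung Cancer Dataset (Full Python Code): Complete Walkthrough (Part 2)

Deep Learning Classification of Benign and Malignant Lung Nodules on the LIDC-IDRI Lung Cancer Dataset (Full Python Code): Complete Walkthrough (Part 2). 1. Environment setup and dataset preprocessing (1.1 Environment setup; 1.1 Dataset preprocessing); 2. Training and evaluating the deep learning model (2.1 Model training; 2.1 Model evaluation); a joke for fun; the full code; the model file; model explanation (to be continued); link to Part 1; link to Part 3. 1. Environment setup and dataset preprocessing. 1.1 Environment setup: it is recommended to set up the environment with ana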

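- Deep Learning Interactive Image Segmentation: Evolution and Breakthroughs

wang1776866571

Deep Learning Interactive Segmentation, Deep Learning, Artificial Intelligence, Interactive Segmentation

Note: this article is a systematic survey and personal summary, written during the author's graduate studies, of the public literature on interactive image segmentation. All content is original (with AI assistance) and does not directly copy or plagiarize others' work. The algorithms, models, and experimental conclusions mentioned are drawn from published academic papers in the field (see the reference list at the end). The aim is to give learners of interactive image segmentation a structured survey covering the field's evolution, core methods, key optimizations, and application prospects, in the hope of offering some inspiration for related research. Abstract: this article systematically surveys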

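- Frontier Crossover: A Fluid Mechanics Computing Stack Driven by Fluent and Deep Learning

m0_75133639

Fluid Mechanics, Deep Learning, Artificial Intelligence, Aerospace, fluent, Fluid Mechanics, Materials Science, CFD

Foundation module: solving the equations of fluid mechanics. 1. Numerical methods for the incompressible N-S equations (finite difference / finite element / pseudo-spectral); Fluent in industrial use: steady and transient flow, two-phase flow simulation (flow past a cylinder, water-entry problems); flow-field visualization and data export with Tecplot. 2. AI preprocessing of CFD data: dimensionality reduction of flow-field data with PCA/SVD; eigendecomposition and spatiotemporal feature extraction. Deep learning core: 3. Neural network architectures with embedded physics: physics-informed neural networks (PINN), which embed the N-S equations in the loss function (implemented in JAX); neural ordinary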

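- How to Train YOLOv8 on a Steel Pipe Surface Defect Detection Dataset (VOC + YOLO Formats, 1,159 Images, 3 Classes): Steps and Workflow

FL1623863129

Deep Learning Object Detection, Deep Learning, YOLO

[About the dataset] The dataset contains many augmented images: roughly 300 are originals and the rest are augmented. Dataset format: Pascal VOC format + YOLO format (no txt files with segmentation paths; only the jpg images and their corresponding VOC-format xml files and YOLO-format txt files). Number of images (jpg files): 1159. Number of annotations (xml files): 1159. Number of annotations (txt files): 1159. Number of annotation classes: 3. Repository: firc-dataset. Annotation class names (note that yo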

- Maven

Array_06

eclipsejdkmaven

Maven

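Maven is a software project management tool that, based on the Project Object Model (POM), uses descriptive information to manage a project's build, reporting, and documentation.

Beyond its build capabilities, Maven also provides high-level project management tools. Because Maven's default build rules are highly reusable, a simple project can often be built with two or three lines of Maven build script. Thanks to Maven's project-oriented approach, many Apache Jakarta projects use Maven for their releases, and companies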

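- The Difference Between queryForList and queryForMap in iBatis

bijian1013

java, ibatis

I. Overview

iBatis return values also come in two kinds, resultMap and resultClass; choosing between them can be summed up in two sentences:

1. When the result set's column names correspond exactly to the class's property names, resultClass can be used to specify the query result class directly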

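- LeetCode [Bit Manipulation]: #191 Computing the Hamming Weight

Cwind

java, Bit Manipulation, LeetCode, Algorithm, Solutions

Original problem: #191 Number of 1 Bits

Requirements:

Write a function that takes an unsigned integer and returns its Hamming weight. For example, the binary representation of 11 is '00000000000000000000000000001011', so the function should return 3.

Hamming weight: the number of non-zero characters in a string; for a binary string, this is the number of '1's.

Difficulty: Easy

Analysis:

Convert the decimal argument to binary, then count the 1s in it.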

“
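As a minimal sketch of the bit-counting idea described in the analysis (the original post's own solution is cut off here, so this is not necessarily the author's code), the version below clears the lowest set bit with `n & (n - 1)` until nothing is left. The class and method names are hypothetical, and a Java `int` is treated as the 32-bit unsigned input.

```java
// Hypothetical helper for illustration; not taken from the original post.
class HammingWeight {
    // Counts the 1 bits of n, treating the int as a 32-bit unsigned value.
    static int hammingWeight(int n) {
        int count = 0;
        while (n != 0) {
            n &= (n - 1); // clears the lowest set bit
            count++;
        }
        return count;
    }

    public static void main(String[] args) {
        System.out.println(hammingWeight(11)); // 11 is binary 1011, prints 3
        System.out.println(hammingWeight(-1)); // all 32 bits set, prints 32
    }
}
```

The loop runs once per set bit rather than once per bit position. The standard library's `Integer.bitCount(int)` returns the same count and is usually the simpler choice outside of an exercise.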

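- A Brief Look at Java Classes and Objects

15700786134

java

Java is an object-oriented programming language, and classes and objects are its most basic concepts. An object is a concrete thing: a person or a computer is an object. A class is an abstraction of objects, a collection of what multiple objects have in common; it contains attributes and methods, which are the traits shared by the objects belonging to that class. When an object is created from a class, that object has all of the class's attributes and methods. Compared with structured programming, the object-oriented approach fits human thinking better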

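- Two NICs with the Same IP on Linux

被触发

linux

Reposted from:

http://q2482696735.blog.163.com/blog/static/250606077201569029441/

I needed a machine with two network cards. At first I gave them IPs in the same subnet and found that data always went out through one card while the other carried no traffic. A quick search showed that many people have hit the same problem:

1.

About the strange behavior that appears when two NICs are given IPs in the same subnet and then connected to a switch. At the time I didn't think much about it and assumed it was spanning tree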

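- Android: App Cannot Be Reopened After Hiding It with the Home Button

肆无忌惮_

Android

I ran into a strange problem: after SplashActivity jumps to MainActivity, pressing the Home button and then trying to open the app again fails (it just flashes). Ending the task and reopening gives the same result; the only fix is to uninstall and reinstall. Each time, the log also printed the line "进入主程序" ("entering the main program"). It turned out that SplashActivity must be finished only after the jump has been made

Original code:

// Destroy this Activity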

fin

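- Example: Saving and Reading User Login Information with Cookies

知了ing

JavaScript, html

The request object's getCookies() method returns the collection of all cookie objects; a cookie's getName() method returns its name, which is how a cookie with a given name is found; and getValue() returns the cookie's value. To send a cookie object to the client, use the response object's addCookie() method.

The example below, which saves and reads user login information with cookies, should make this clearer.

(1) Create the index.jsp file. In this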

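- Java Object Pools

矮蛋蛋

java, ObjectPool

Original article:

http://www.blogjava.net/baoyaer/articles/218460.html

Jakarta object pools

☆ Why use an object pool

Used appropriately, object pooling can effectively reduce the cost of creating and initializing objects and improve system efficiency. The Jakarta Commons Pool component provides a complete set of facilities for implementing object pooling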

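- Removing Items from an ArrayList in Bulk by Condition with a for Loop

alleni123

java

The scenario is as follows:

ArrayList<Obj> list

Obj-> createTime, sid.

The list now needs to be cleaned up periodically based on each obj's createTime (to free memory).

-------------------------

The first approach that comes to mind is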

for(Obj o:list){

if(o.createTime-currentT>xxx){
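The snippet above is cut off, but it starts a for-each loop over the list. Removing elements through the list itself inside such a loop generally throws `ConcurrentModificationException`, so a common alternative is to remove through the iterator. The following is a sketch under stated assumptions: `Obj` mirrors the two fields named above with assumed types, and the class name, method name, and the age test (`now - createTime > maxAgeMillis`) are hypothetical rather than taken from the original post.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;

// Hypothetical illustration; names and field types are assumptions.
class ExpiredCleanup {
    static class Obj {
        final long createTime; // assumed to be epoch milliseconds
        final String sid;

        Obj(long createTime, String sid) {
            this.createTime = createTime;
            this.sid = sid;
        }
    }

    // Removes every entry older than maxAgeMillis, in place.
    static void removeExpired(List<Obj> list, long now, long maxAgeMillis) {
        Iterator<Obj> it = list.iterator();
        while (it.hasNext()) {
            Obj o = it.next();
            if (now - o.createTime > maxAgeMillis) {
                it.remove(); // safe: removal goes through the iterator
            }
        }
    }

    public static void main(String[] args) {
        List<Obj> list = new ArrayList<>();
        list.add(new Obj(1_000L, "old"));
        list.add(new Obj(9_000L, "fresh"));
        removeExpired(list, 10_000L, 5_000L);
        System.out.println(list.size()); // only "fresh" remains
    }
}
```

On Java 8 and later, `list.removeIf(o -> now - o.createTime > maxAgeMillis)` expresses the same cleanup in one line.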

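- Alibaba's "Gengdibao" (耕地宝) Takes On All the Other "-bao" Products

百合不是茶

Platform Strategy

The "Gengdibao" platform was launched jointly by Alibaba and farmers in Anhui as "the first Internet-customized private farm". Alibaba has put 100 million into it, mainly for agriculture: it pools the scattered plots held by farmers, which not only strengthens farmers' collective say over the land but also increases the circulation and utilization of land and raises its output, favoring large-scale, industrialized, high-tech agriculture. Alibaba's exploration in agriculture will trigger a new round of industry restructuring, but after collectivization individual farmers will have even less say, so the state should introduce corresponding laws and regulations to protect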

- Injecting Classes with Inheritance Relationships in Spring (1)

bijian1013

java, spring

Injecting the classes one by one

1. The AClass class

package com.bijian.spring.test2;

public class AClass {

String a;

String b;

public String getA() {

return a;

}

public void setA(Strin

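- Can You Become a Success During the Transition at Age 30?

bijian1013

Success

Many people take wrong turns when they are young and have achieved nothing by 30; such cases are common. But there are also people whose whole careers develop very well and who are already part of the professional elite by 30. Working as headhunters, we meet many managers around 30 and find that they often have fatal problems in their career paths. Before 30 their careers looked excellent, but in the stretch from 30 to 40, many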

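- [Velocity, Part 3] A Web Application Based on Servlet + Velocity

bit1129

velocity

What is VelocityViewServlet

org.apache.velocity.tools.view.VelocityViewServlet integrates Velocity into a Servlet-based web application, so that the application is built in a Servlet + Velocity style

General steps for Servlet + Velocity

1. Write a custom Servlet that implements VelocityViewServl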

- [Kafka, Part 12] On Kafka as a Commit Log Service

bit1129

service

Kafka is a distributed, partitioned, replicated commit log service. How should "commit log" be understood here?

A message is considered "committed" when all in sync replicas for that partition have applied i

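- Implementing Complex Control with NGINX + Lua

ronin47

lua nginx control

Installing the lua_nginx_module module

lua_nginx_module can be installed step by step, or you can simply use Taobao's OpenResty

Installation on CentOS and Debian is straightforward.

Here is how to install it on FreeBSD: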

fetch http://www.lua.org/ftp/lua-5.1.4.tar.gz

tar zxvf lua-5.1.4.tar.gz

cd lua-5.1.4

ma

- java-14. Given an array already sorted in ascending order and a number, find two numbers in the array whose sum is exactly the given number

bylijinnan

java

public class TwoElementEqualSum {

/**

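* Problem 14:

Problem: given an array already sorted in ascending order and a number,

find two numbers in the array whose sum is exactly the given number.

The required time complexity is O(n). If several pairs sum to the given number, output any one pair.

For example, given the array 1, 2, 4, 7, 11, 15 and the number 15. Since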
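The problem statement is cut off before the original solution, so the following is only a sketch of the usual two-pointer approach to this kind of problem, not necessarily the author's code. One index starts at each end of the sorted array; the left index moves right when the sum is too small and the right index moves left when it is too large, which gives O(n) time. The class and method names are hypothetical.

```java
// Hypothetical illustration of the two-pointer technique on a sorted array.
class TwoSumSorted {
    // Returns a pair of values from 'sorted' that adds up to target,
    // or null if no such pair exists. 'sorted' must be in ascending order.
    static int[] findPair(int[] sorted, int target) {
        int lo = 0;
        int hi = sorted.length - 1;
        while (lo < hi) {
            long sum = (long) sorted[lo] + sorted[hi]; // long avoids int overflow
            if (sum == target) {
                return new int[] { sorted[lo], sorted[hi] };
            } else if (sum < target) {
                lo++; // need a larger sum
            } else {
                hi--; // need a smaller sum
            }
        }
        return null;
    }

    public static void main(String[] args) {
        int[] pair = findPair(new int[] { 1, 2, 4, 7, 11, 15 }, 15);
        System.out.println(pair[0] + " + " + pair[1]); // prints 4 + 11
    }
}
```

Each step discards one element that cannot be part of any valid pair, so the loop runs at most n - 1 times.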

- Netty Source Code Study: HttpChunkAggregator, HttpRequestEncoder, HttpResponseDecoder

bylijinnan

java, netty

Today I looked at how Netty implements an HTTP server

org.jboss.netty.example.http.file.HttpStaticFileServerPipelineFactory:

pipeline.addLast("decoder", new HttpRequestDecoder());

pipeline.addLast("

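- Sensitive-Word Filtering in Java Based on a Multi-Way Tree

cngolon

Banned-Word Filtering, Banned-Word Replacement, Sensitive-Word Filtering, Multi-Way Tree

A toolkit for filtering sensitive words and keywords based on a multi-way tree, for use in Java

1. The toolkit ships with its own sensitive-word dictionary, which is loaded on the first call, so the first call may take longer. Once the class is loaded, filtering 5,000 characters on an ordinary PC takes about 80 ms for HTML and about 35 ms for plain text.

2. To use a custom dictionary, copy the jar into the lib directory under the project's WEB-INF and, in the WEB-INF/classes directory, create a

UTF-8 text file named words.dict,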

- Multithreading Notes

cuishikuan

Multithreading

Three threads T1, T2, and T3 must run in order: T1 first, then T2, then T3

public class T1 implements Runnable{

@Override
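The original code is cut off at this point. One common way to get the T1, T2, T3 ordering described above is `Thread.join()`: start a thread and wait for it to finish before starting the next. The sketch below is an illustration under that assumption, not the post's own solution; it uses lambdas instead of a separate `Runnable` class per thread, and the class and method names are hypothetical.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Hypothetical illustration of sequencing threads with join().
class OrderedThreads {
    // Runs three threads strictly one after another and returns the order
    // in which they did their work.
    static List<String> runInOrder() {
        List<String> log = Collections.synchronizedList(new ArrayList<>());
        Thread t1 = new Thread(() -> log.add("T1"), "T1");
        Thread t2 = new Thread(() -> log.add("T2"), "T2");
        Thread t3 = new Thread(() -> log.add("T3"), "T3");
        try {
            t1.start();
            t1.join(); // wait for T1 to finish before starting T2
            t2.start();
            t2.join(); // wait for T2 to finish before starting T3
            t3.start();
            t3.join();
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // restore the interrupt flag
            throw new IllegalStateException("interrupted while waiting", e);
        }
        return log;
    }

    public static void main(String[] args) {
        System.out.println(runInOrder()); // prints [T1, T2, T3]
    }
}
```

Because each thread is only started after the previous one has finished, nothing runs concurrently here; the point is just to show how `join()` enforces the order.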

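- Integrating Spring with ActiveMQ

dalan_123

java spring jms

To integrate Spring with ActiveMQ, the following needs to be clear. 1. There are two kinds of ConnectionFactory: (a) the ConnectionFactory that Spring uses to manage connections to the ActiveMQ server, which is what is said to produce the connection to the JMS server; (b) the ConnectionFactory that actually creates the connection to the JMS server, which still has to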

- MySQL Time Columns: INT or DateTime?

dcj3sjt126com

mysql

Environment: Windows XP; PHP Version 5.2.9; MySQL Server 5.1

Step 1: create a table date_test (variable-length rows, INT time)

CREATE TABLE `test`.`date_test` (`id` INT NOT NULL AUTO_INCREMENT ,`start_time` INT NOT NULL ,`some_content`

- Parcel: unable to marshal value

dcj3sjt126com

marshal

When passing a List<xxInfo> between two activities, a "Parcel: unable to marshal value" exception occurs. In the MainActivity page (MainActivity passes a List<xxInfo> to NextActivity): Intent intent = new Intent(this, Next

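- Viewing Linux Processes, Part 1 (ps)

eksliang

linux ps, linux ps -l, linux ps aux

ps: captures a snapshot of the processes running at a given point in time

Please credit the source when reposting: http://eksliang.iteye.com/admin/blogs/2119469

http://eksliang.iteye.com

The man page for ps is not easy to read, because many different Unix systems use ps to inspect process status; in order to meet the needs of the different versions, this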

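- Why Can a Third-Party App Start Earlier Than a System App?

gqdy365

System

There is plenty of material online about the Android application startup order, so I won't go into detail here, nor will I cover ROM startup or the bootloader. The software boot flow is roughly: start the kernel -> run servicemanager and start a number of native services by command (including wifi, power, rild, surfaceflinger, mediaserver, and so on) -> start the first process in Dalvik, Zygot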

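- Sending JSONP Requests with App Framework (3)

hw1287789687

jsonp, Cross-Domain Requests, Sending jsonp, ajax Requests, Jailbreak Requests

How do you send a JSONP request in App Framework?

For using jsonp, see: http://json-p.org/

How do you send an Ajax request?

(1) Login

/***

* Member login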

* @param username

* @param password

*/

var user_login=function(username,password){

// aler

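- A Treat: A Curated Collection of "Resource Collections"

justjavac

Resources

If you find it useful, you can follow it on GitHub: https://github.com/justjavac/awesome-awesomeness-zh_CN General

free-programming-books-zh_CN: free Chinese-language books on computer programming

Collection of great blogs: hacke2/hacke2.github.io#2

ResumeSample: programmer résumés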

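- Creating RESTful Web Services with Java Technology

macroli

java, Programming, Web, REST

Reposted from: http://www.ibm.com/developerworks/cn/web/wa-jaxrs/

JAX-RS (JSR-311) [Java API for RESTful Web Services] is a Java™ API that makes developing RESTful Java services quick and easy. The API provides an annotation-based model for describing distributed resources. Annotations are used to provide a resource's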

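- Installing Oracle 11g on 64-bit CentOS 6.5 (x86_64): Detailed Steps and Caveats

超声波

oracle, linux

Preface:

Our project is going live in the next couple of days, and I am responsible for deploying the whole thing to the server, so the first job is to install Oracle on it. The server runs 64-bit CentOS 6.5 and the database version is 11g. I ran into many pitfalls during the installation, but in the end I worked through them by various means and got it installed. I am writing this post specifically to record the complete installation process and the things to watch out for along the way, in the hope that it helps newcomers who are just getting started.

Problems you may encounter during installation (note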

- How to Set Keep-Alive and Timeout in HttpClient 4.3

supben

httpclient

ConnectionKeepAliveStrategy kaStrategy = new DefaultConnectionKeepAliveStrategy() {

@Override

public long getKeepAliveDuration(HttpResponse response, HttpContext context) {

long keepAlive

- New in Spring 4.2: The Upgraded @Import Annotation

wiselyman

spring 4

3.1 @Import

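Before 4.2, the @Import annotation only supported importing configuration classes

In 4.2, the @Import annotation also supports importing an ordinary Java class and declaring it as a bean

3.2 Example

Demo Java class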

package com.wisely.spring4_2.imp;

public class DemoService {

public void doSomethin