Transformer Backbones: A Step-by-Step Walkthrough of PVT_V1

Preface

Paper: PVT1

Code: github

The author is impressive, with papers at all the top CV conferences.

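Series articles

Transformer Backbones: A Step-by-Step Walkthrough of ViT

Transformer Backbones: A Step-by-Step Walkthrough of DeiT

Transformer Backbones: A Step-by-Step Walkthrough of T2T-ViT

Transformer Backbones: A Step-by-Step Walkthrough of TNT

Transformer Backbones: A Step-by-Step Walkthrough of PVT_V1

Transformer Backbones: A Step-by-Step Walkthrough of PVT_V2

Transformer Backbones: A Step-by-Step Walkthrough of Swin

Transformer Backbones: A Step-by-Step Walkthrough of PatchConvNet

More to come…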

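Motivation

Starting point: address the shortcomings of ViT.

- Shortcoming 1: ViT outputs a single-scale feature map, unlike ResNet, whose four stages output feature maps at four scales. Multi-scale feature maps are very useful for downstream tasks, largely because ResNet used to be the mainstream backbone and many structures were designed around it (for example FPN, which fuses features from different scales into a representation carrying both shallow and deep semantics). A transformer backbone that also outputs four scales can therefore replace ResNet directly.

- Shortcoming 2: Even at a common input size (the authors' example: a shorter side of 800 pixels, the standard COCO setting), ViT incurs heavy computation and memory costs.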

Network analysis

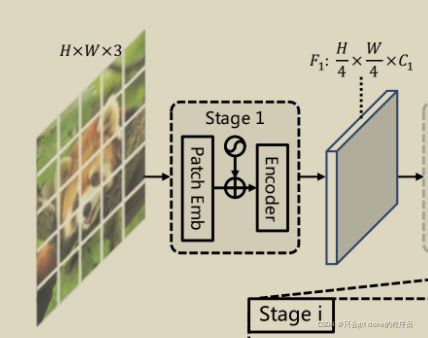

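The author mentioned on Zhihu that they tried many designs, and experiments showed that simply stacking blocks works best. The complete network structure is shown in the figure: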


Judging from the figure, the structure is not complicated and largely mirrors ResNet. Roughly:

H * W * 3 -> stage1 block -> H/4 * W/4 * C1 -> stage2 block -> H/8 * W/8 * C2 -> stage3 block -> H/16 * W/16 * C3 -> stage4 block -> H/32 * W/32 * C4
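The shape progression above can be checked with a few lines of plain Python. This is only a sketch: the helper name `pvt_stage_shapes` is made up for illustration, the per-stage strides (4, 2, 2, 2) follow the patch sizes used in pvt.py, and the channel widths (64, 128, 320, 512) are the `embed_dims` passed by the pvt_tiny to pvt_large constructors.

```python
def pvt_stage_shapes(height, width, strides=(4, 2, 2, 2), channels=(64, 128, 320, 512)):
    """Return the (H, W, C) output shape of every stage for an H x W input."""
    shapes = []
    for stride, ch in zip(strides, channels):
        # Each stage's patch embedding downsamples by its stride.
        height, width = height // stride, width // stride
        shapes.append((height, width, ch))
    return shapes


shapes = pvt_stage_shapes(224, 224)
for i, shape in enumerate(shapes, start=1):
    print(f"stage{i}: {shape}")
```

For a 224 x 224 input the four stages end up at 1/4, 1/8, 1/16 and 1/32 of the input resolution, which is the same pyramid a ResNet exposes to FPN-style necks.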

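So once you understand what happens inside a stage block, you understand the whole network. This article walks through the part shown in the figures below together with the author's code; the remaining stages are essentially the same and are not repeated: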

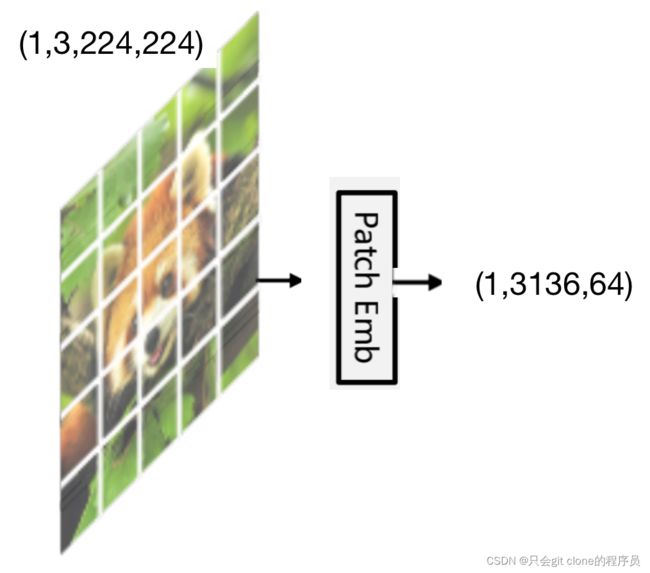

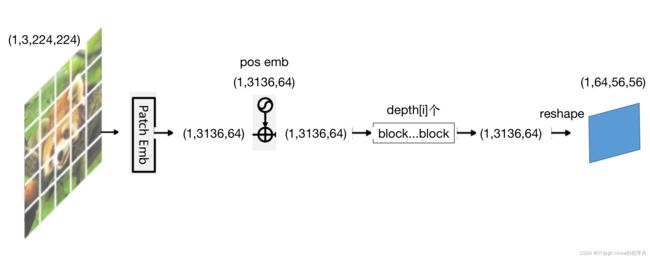

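Patch Emb:

1. The input data has shape (bs, channel, H, W). For convenience we use a single image with batch size 1, so the input is (1, 3, 224, 224).

Corresponding code:

    model = pvt_small(**cfg)
    data = torch.randn((1, 3, 224, 224))
    output = model(data)

2. The input first goes through the Patch emb operation of the stage 1 block. This splits the 224*224 image into small 4*4 patches. It is implemented as a convolution: a 4*4 kernel is applied to the 224*224 image with a stride of 4.

Corresponding code:

    # 64 is the output feature dimension of the first stage, i.e. C1 in the figure
    self.proj = nn.Conv2d(in_channels=3, out_channels=64, kernel_size=4, stride=4)
    print(x.shape)  # torch.Size([1, 3, 224, 224])
    x = self.proj(x)
    print(x.shape)  # torch.Size([1, 64, 56, 56])

Each point of the 56*56 map now represents one 4*4 patch of the original image.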

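3. Flatten the 1*64*56*56 tensor starting from dimension 2, which merges the two spatial dimensions.

Corresponding code:

    print(x.shape)  # torch.Size([1, 64, 56, 56])
    x = x.flatten(2)
    print(x.shape)  # torch.Size([1, 64, 3136])

The 224*224 image is now represented by 3136 positions (one per patch), each carrying a 64-dimensional feature.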

4. For convenience in the later computation, swap the second and third dimensions, then apply layer norm.

Corresponding code:

    print(x.shape)  # torch.Size([1, 64, 3136])
    x = x.transpose(1, 2)
    print(x.shape)  # torch.Size([1, 3136, 64])
    x = self.norm(x)
    print(x.shape)  # torch.Size([1, 3136, 64])

That completes the Patch emb operation. The complete code:

    def forward(self, x):
        B, C, H, W = x.shape    # 1, 3, 224, 224
        x = self.proj(x)        # convolution, output 1, 64, 56, 56
        x = x.flatten(2)        # flatten, output 1, 64, 3136
        x = x.transpose(1, 2)   # swap dimensions, output 1, 3136, 64
        x = self.norm(x)        # layer norm, output 1, 3136, 64
        H, W = H // 4, W // 4   # final height and width are 56, 56
        return x, (H, W)
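To see why a convolution with kernel size 4 and stride 4 really is "split into 4*4 patches, then project each patch", the sketch below compares the two paths numerically. It is an illustration rather than code from the PVT repository: the variable names are made up, and `F.unfold` is used here only as a convenient way to cut out the patches explicitly.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

torch.manual_seed(0)
x = torch.randn(1, 3, 224, 224)
proj = nn.Conv2d(3, 64, kernel_size=4, stride=4)

# Path A: the convolution used in the article, then flatten + transpose.
tokens_conv = proj(x).flatten(2).transpose(1, 2)      # (1, 3136, 64)

# Path B: cut out every 4x4 patch explicitly, then apply a linear projection
# that reuses the convolution's weights and bias.
patches = F.unfold(x, kernel_size=4, stride=4)        # (1, 3*4*4, 3136)
patches = patches.transpose(1, 2)                     # (1, 3136, 48)
weight = proj.weight.reshape(64, -1)                  # (64, 48)
tokens_linear = patches @ weight.t() + proj.bias      # (1, 3136, 64)

print(tokens_conv.shape)
print(torch.allclose(tokens_conv, tokens_linear, atol=1e-4))
```

Both paths produce the same token tensor up to floating-point error, so the convolution is simply the more compact way to write the patch projection.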

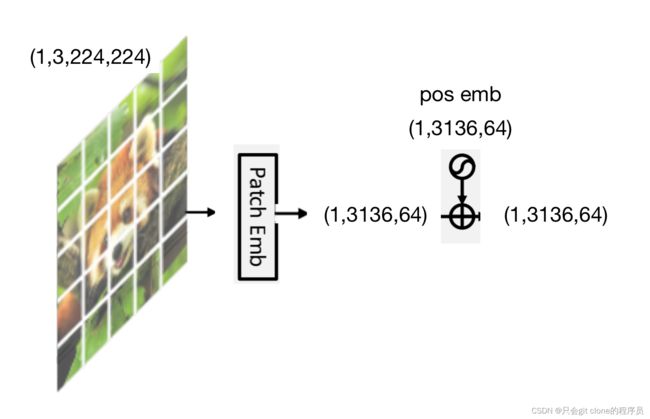

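Position embedding

1. This part is basically the same as ViT's position encoding: create a learnable parameter whose size matches the tensor produced by Patch emb, i.e. (1, 3136, 64).

Corresponding code:

    pos_embed = nn.Parameter(torch.zeros(1, 3136, 64))

2. The position embedding is also used the same way as in ViT: it is added element-wise to the output x, so the shape does not change.

Corresponding code:

    print(x.shape)  # torch.Size([1, 3136, 64])
    x = x + pos_embed
    print(x.shape)  # torch.Size([1, 3136, 64])

3. After the addition, the author applies dropout for regularization.

Corresponding code:

    pos_drop = nn.Dropout(p=drop_rate)
    x = pos_drop(x)

That completes the position embedding operation. The complete code:

    x = x + pos_embed
    x = pos_drop(x)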
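The steps above assume a 224*224 input, where the embedding has exactly one entry per patch. The `_get_pos_embed` method in pvt.py additionally resizes the embedding bilinearly when the patch grid differs. A minimal sketch of both cases follows; the helper name `resize_pos_embed` and the 256*256 example input are assumptions made for illustration.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

pos_embed = nn.Parameter(torch.zeros(1, 56 * 56, 64))
nn.init.trunc_normal_(pos_embed, std=0.02)
pos_drop = nn.Dropout(p=0.0)


def resize_pos_embed(pos_embed, old_hw, new_hw):
    """Bilinearly resize a (1, H*W, C) position embedding to a new patch grid."""
    (h0, w0), (h1, w1) = old_hw, new_hw
    grid = pos_embed.reshape(1, h0, w0, -1).permute(0, 3, 1, 2)   # (1, C, h0, w0)
    grid = F.interpolate(grid, size=(h1, w1), mode="bilinear")
    return grid.reshape(1, -1, h1 * w1).permute(0, 2, 1)          # (1, h1*w1, C)


# 224x224 input: 56x56 patches, the embedding is added as-is.
x = torch.randn(1, 56 * 56, 64)
x = pos_drop(x + pos_embed)

# 256x256 input: 64x64 patches, so the embedding is resized first.
x_big = torch.randn(1, 64 * 64, 64)
x_big = pos_drop(x_big + resize_pos_embed(pos_embed, (56, 56), (64, 64)))

print(x.shape, x_big.shape)
```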

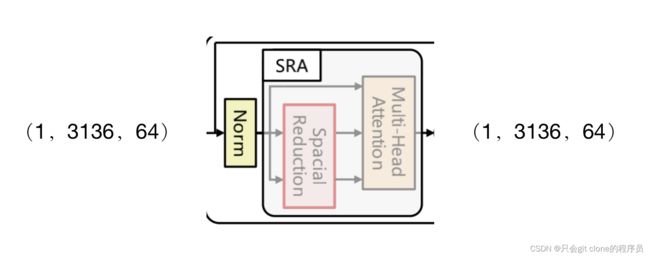

Encoder

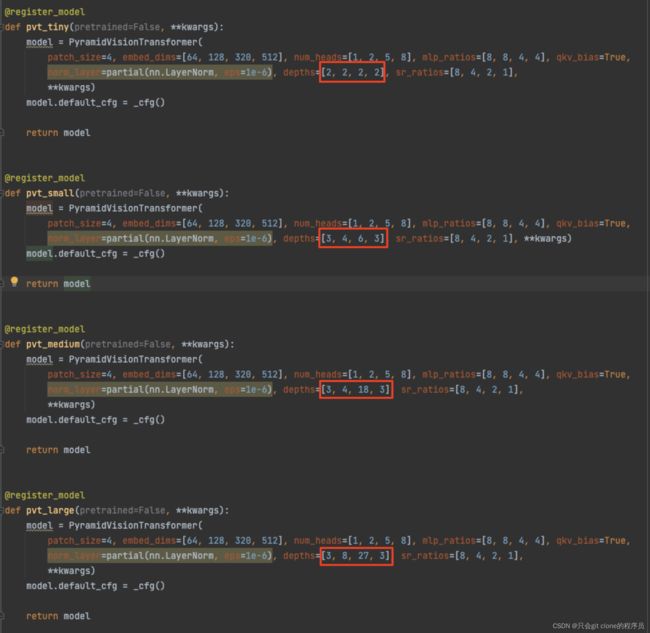

The encoder of stage i consists of depth[i] blocks. From pvt_tiny to pvt_large, the main difference is the depth parameter:

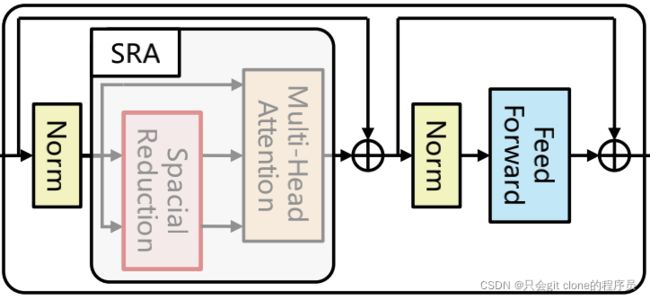

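For example, in pvt_tiny every encoder consists of two blocks. The structure of each block is shown in the figure:

The input to the first block of the first encoder is the tensor obtained after position embedding, as analyzed above, so its size is (1, 3136, 64). Meanwhile, after Patch emb the image has become 56*56.

1. As the figure shows, a copy of the input is first kept for the residual connection. The input x then passes through a layer norm layer, which leaves the dimensions unchanged, then through the author's modified multi-head attention layer (SRA, explained later), and the result is added to the saved copy of the input.

Corresponding code:

    print(x.shape)  # (1, 3136, 64)
    x = x + self.drop_path(self.attn(self.norm1(x), H, W))
    print(x.shape)  # (1, 3136, 64)

2. A copy of the features coming out of the SRA layer is kept for the residual connection. The features then pass through a layer norm layer (dimensions unchanged) and into the feed forward layer (explained later), and the result is added to the saved copy.

Corresponding code:

    print(x.shape)  # (1, 3136, 64)
    x = x + self.drop_path(self.mlp(self.norm2(x)))
    print(x.shape)  # (1, 3136, 64)

So a block does not change the shape of the tensor. The complete code:

    def forward(self, x, H, W):
        x = x + self.drop_path(self.attn(self.norm1(x), H, W))  # SRA layer
        x = x + self.drop_path(self.mlp(self.norm2(x)))         # feed forward layer
        return x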

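3. After depth[i] blocks the tensor is still (1, 3136, 64). It only needs to be restored to image layout before it can be fed to the next stage. A reshape followed by a permute does this, giving features in (bs, channel, H, W) layout, here (1, 64, 56, 56).

Corresponding code:

    print(x.shape)  # 1, 3136, 64
    x = x.reshape(B, H, W, -1)
    print(x.shape)  # 1, 56, 56, 64
    x = x.permute(0, 3, 1, 2).contiguous()
    print(x.shape)  # 1, 64, 56, 56

The tensor fed into stage 2 is therefore (1, 64, 56, 56). This completes the walkthrough of the data through the first stage.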
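The reshape + permute at the end of a stage is the exact inverse of the flatten + transpose at the end of Patch emb. The short check below illustrates this on a random tensor, and also shows that a bare reshape to (B, C, H, W) without the permute would scramble the layout. It is a sketch for illustration; the variable names are not from pvt.py.

```python
import torch

B, C, H, W = 1, 64, 56, 56
feat = torch.randn(B, C, H, W)

# Feature map -> token sequence, as done at the end of Patch emb.
tokens = feat.flatten(2).transpose(1, 2)              # (1, 3136, 64)

# Token sequence -> feature map, as done at the end of a stage.
restored = tokens.reshape(B, H, W, -1).permute(0, 3, 1, 2).contiguous()

# A plain reshape gives the right shape but mixes channels with positions.
wrong = tokens.reshape(B, C, H, W)

print(restored.shape)
print(torch.equal(restored, feat))   # layout recovered exactly
print(torch.equal(wrong, feat))      # same shape, different contents
```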

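Finally, stacking a different number of blocks in each encoder yields pvt_tiny, pvt_small, pvt_medium and pvt_large.

The complete diagram is as follows:

So, after stage 1 the input tensor of shape (1, 3, 224, 224) has become a tensor of shape (1, 64, 56, 56). This tensor is fed into the next stage, which repeats the computation above, and that completes the PVT design.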
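As a quick summary of how the four variants differ, the snippet below tabulates the `depths` arguments passed by the pvt_tiny to pvt_large constructors in pvt.py and counts the total number of blocks. The dictionary name `PVT_DEPTHS` is introduced here only for illustration.

```python
# depths copied from the pvt_tiny / pvt_small / pvt_medium / pvt_large constructors in pvt.py
PVT_DEPTHS = {
    "pvt_tiny": [2, 2, 2, 2],
    "pvt_small": [3, 4, 6, 3],
    "pvt_medium": [3, 4, 18, 3],
    "pvt_large": [3, 8, 27, 3],
}

# Total number of transformer blocks across the four stages.
total_blocks = {name: sum(depths) for name, depths in PVT_DEPTHS.items()}

for name, depths in PVT_DEPTHS.items():
    print(f"{name:<11} depths={depths} total_blocks={total_blocks[name]}")
```

Most of the extra depth in the larger variants goes into stage 3, which works on the 1/16-resolution feature map.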

SRA

If attention is unfamiliar, see: Transformer explained in detail

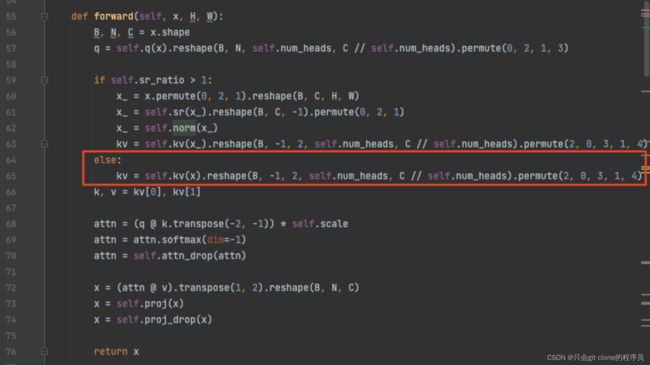

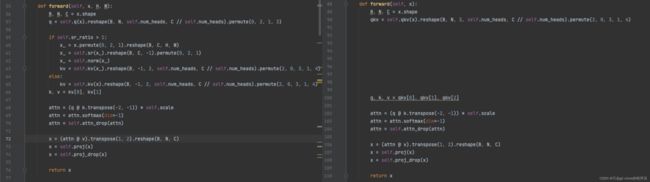

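SRA is just a small modification of the attention module in ViT (it is still attention), made to save a considerable amount of computation. Start with the code diff; some line breaks were added to align the code for comparison:

1. If the parameter self.sr_ratio is 1, PVT's attention computes the same thing as ViT's attention.

2. So we only analyze the part that differs.

Corresponding code:

    self.sr = nn.Conv2d(dim, dim, kernel_size=sr_ratio, stride=sr_ratio)
    x_ = x.permute(0, 2, 1).reshape(B, C, H, W)
    x_ = self.sr(x_).reshape(B, C, -1).permute(0, 2, 1)
    x_ = self.norm(x_)
    kv = self.kv(x_).reshape(B, -1, 2, self.num_heads, C // self.num_heads).permute(2, 0, 3, 1, 4)

2.1: The incoming x has shape (1, 3136, 64).

2.2: permute first swaps the dimensions to give (1, 64, 3136).

2.3: reshape gives (1, 64, 56, 56).

2.4: self.sr(x_) is a convolution whose kernel size and stride are both sr_ratio. Here the value is 8, so the 56*56 map shrinks to one eighth in height and width, i.e. one sixty-fourth in area, and the output shape is (1, 64, 7, 7).

2.5: reshape(B, C, -1) gives (1, 64, 49).

2.6: permute(0, 2, 1) gives (1, 49, 64).

2.7: Layer norm leaves the shape unchanged.

2.8: kv = self.kv(x_).reshape(B, -1, 2, self.num_heads, C // self.num_heads).permute(2, 0, 3, 1, 4) generates k and v from x, just as in ViT. The difference is that here x has first been shrunk by the convolution.

The shapes on this line change as follows: (1, 49, 64) -> (1, 49, 128) -> (1, 49, 2, 1, 64) -> (2, 1, 1, 49, 64)

2.9: From kv with shape (2, 1, 1, 49, 64), taking index 0 and index 1 gives k and v, corresponding to k, v = kv[0], kv[1]. So k and v each have shape (1, 1, 49, 64).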

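3. The remaining code is the same as in ViT: with q, k and v generated from x, q is matrix-multiplied with all the k, softmax is applied, and the result is used to weight v.

Corresponding code:

    attn = (q @ k.transpose(-2, -1)) * self.scale  # (1, 1, 3136, 64) @ (1, 1, 64, 49) = (1, 1, 3136, 49)
    attn = attn.softmax(dim=-1)
    attn = self.attn_drop(attn)
    # (1, 1, 3136, 49) @ (1, 1, 49, 64) = (1, 1, 3136, 64); transpose + reshape then give (1, 3136, 64)
    x = (attn @ v).transpose(1, 2).reshape(B, N, C)
    x = self.proj(x)
    x = self.proj_drop(x)
    # x: (1, 3136, 64)

4. So the x entering the attention module has size (1, 3136, 64), and the output shape is also (1, 3136, 64).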
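The shape bookkeeping above can be reproduced with a stripped-down, single-head version of SRA. This is a sketch for checking shapes only, not the author's module: the class name `SRASketch` is made up, dropout is omitted, and `chunk` is used instead of the reshape/permute trick to split k and v (equivalent when there is one head).

```python
import torch
import torch.nn as nn


class SRASketch(nn.Module):
    """Single-head spatial-reduction attention, for shape checking only."""

    def __init__(self, dim=64, sr_ratio=8):
        super().__init__()
        self.scale = dim ** -0.5
        self.q = nn.Linear(dim, dim)
        self.kv = nn.Linear(dim, dim * 2)
        self.sr = nn.Conv2d(dim, dim, kernel_size=sr_ratio, stride=sr_ratio)
        self.norm = nn.LayerNorm(dim)
        self.proj = nn.Linear(dim, dim)

    def forward(self, x, H, W):
        B, N, C = x.shape
        q = self.q(x)                                          # (B, N, C)
        x_ = x.permute(0, 2, 1).reshape(B, C, H, W)            # back to a feature map
        x_ = self.sr(x_).reshape(B, C, -1).permute(0, 2, 1)    # (B, N / sr_ratio**2, C)
        k, v = self.kv(self.norm(x_)).chunk(2, dim=-1)         # each (B, N / sr_ratio**2, C)
        attn = (q @ k.transpose(-2, -1)) * self.scale          # (B, N, N / sr_ratio**2)
        attn = attn.softmax(dim=-1)
        return self.proj(attn @ v), attn


x = torch.randn(1, 3136, 64)
out, attn = SRASketch()(x, 56, 56)

full_entries = 3136 * 3136                               # attention map without reduction
reduced_entries = attn.shape[-2] * attn.shape[-1]        # attention map with sr_ratio=8
print(out.shape, attn.shape, full_entries // reduced_entries)
```

With sr_ratio = 8 in stage 1, each of the 3136 queries attends to 49 keys instead of 3136, so the attention map is 64 times smaller while the output keeps the full (1, 3136, 64) shape.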

feed forward

This part is relatively simple: it is just a module built from an MLP.

1. Complete code:

    class Mlp(nn.Module):
        def __init__(self, in_features, hidden_features=None, out_features=None, act_layer=nn.GELU, drop=0.):
            super().__init__()
            out_features = out_features or in_features
            hidden_features = hidden_features or in_features
            self.fc1 = nn.Linear(in_features, hidden_features)
            self.act = act_layer()
            self.fc2 = nn.Linear(hidden_features, out_features)
            self.drop = nn.Dropout(drop)

        def forward(self, x):
            x = self.fc1(x)
            x = self.act(x)
            x = self.drop(x)
            x = self.fc2(x)
            x = self.drop(x)
            return x

The input to forward is the residual sum of the attention output and the original input, after layer norm. In stage 1 of pvt_small the hidden width is 64 * 8 = 512, because mlp_ratio is 8 there.

Input size is (1, 3136, 64)

fc1 outputs (1, 3136, 512)

act is the GELU activation and outputs (1, 3136, 512)

drop outputs (1, 3136, 512)

fc2 outputs (1, 3136, 64)

drop outputs (1, 3136, 64)
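The shape list above can be verified by running a tensor through the same sequence of layers and recording the shape after each one. The sketch below uses `nn.Sequential` purely for brevity; it is an assumption-labeled stand-in for the `Mlp` class, with the stage-1 values dim = 64 and mlp_ratio = 8 taken from the pvt_small configuration in pvt.py.

```python
import torch
import torch.nn as nn

dim, mlp_ratio = 64, 8              # stage-1 values used by pvt_small in pvt.py
hidden = int(dim * mlp_ratio)       # hidden width of the MLP

# Same layer order as Mlp.forward: fc1 -> act -> drop -> fc2 -> drop.
mlp = nn.Sequential(
    nn.Linear(dim, hidden),
    nn.GELU(),
    nn.Dropout(0.0),
    nn.Linear(hidden, dim),
    nn.Dropout(0.0),
)

x = torch.randn(1, 3136, dim)
shapes = []
for layer in mlp:
    x = layer(x)
    shapes.append(tuple(x.shape))

n_params = sum(p.numel() for p in mlp.parameters())
print(shapes)
print(n_params)
```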

Complete test code

    # dependencies
    python3 -m pip install timm

    # run
    python3 pvt.py

The pvt.py code comes from the author's github linked at the top of this post.

    import torch
    import torch.nn as nn
    import torch.nn.functional as F
    from functools import partial

    from timm.models.layers import DropPath, to_2tuple, trunc_normal_
    from timm.models.registry import register_model
    from timm.models.vision_transformer import _cfg

    __all__ = [
        'pvt_tiny', 'pvt_small', 'pvt_medium', 'pvt_large'
    ]

    class Mlp(nn.Module):
        def __init__(self, in_features, hidden_features=None, out_features=None, act_layer=nn.GELU, drop=0.):
            super().__init__()
            out_features = out_features or in_features
            hidden_features = hidden_features or in_features
            self.fc1 = nn.Linear(in_features, hidden_features)
            self.act = act_layer()
            self.fc2 = nn.Linear(hidden_features, out_features)
            self.drop = nn.Dropout(drop)

        def forward(self, x):
            x = self.fc1(x)
            x = self.act(x)
            x = self.drop(x)
            x = self.fc2(x)
            x = self.drop(x)
            return x

    class Attention(nn.Module):
        def __init__(self, dim, num_heads=8, qkv_bias=False, qk_scale=None, attn_drop=0., proj_drop=0., sr_ratio=1):
            super().__init__()
            assert dim % num_heads == 0, f"dim {dim} should be divided by num_heads {num_heads}."

            self.dim = dim
            self.num_heads = num_heads
            head_dim = dim // num_heads
            self.scale = qk_scale or head_dim ** -0.5

            self.q = nn.Linear(dim, dim, bias=qkv_bias)
            self.kv = nn.Linear(dim, dim * 2, bias=qkv_bias)
            self.attn_drop = nn.Dropout(attn_drop)
            self.proj = nn.Linear(dim, dim)
            self.proj_drop = nn.Dropout(proj_drop)

            self.sr_ratio = sr_ratio
            if sr_ratio > 1:
                # spatial reduction: shrink the feature map before computing k and v
                self.sr = nn.Conv2d(dim, dim, kernel_size=sr_ratio, stride=sr_ratio)
                self.norm = nn.LayerNorm(dim)

        def forward(self, x, H, W):
            B, N, C = x.shape
            q = self.q(x).reshape(B, N, self.num_heads, C // self.num_heads).permute(0, 2, 1, 3)

            if self.sr_ratio > 1:
                x_ = x.permute(0, 2, 1).reshape(B, C, H, W)
                x_ = self.sr(x_).reshape(B, C, -1).permute(0, 2, 1)
                x_ = self.norm(x_)
                kv = self.kv(x_).reshape(B, -1, 2, self.num_heads, C // self.num_heads).permute(2, 0, 3, 1, 4)
            else:
                kv = self.kv(x).reshape(B, -1, 2, self.num_heads, C // self.num_heads).permute(2, 0, 3, 1, 4)
            k, v = kv[0], kv[1]

            attn = (q @ k.transpose(-2, -1)) * self.scale
            attn = attn.softmax(dim=-1)
            attn = self.attn_drop(attn)

            x = (attn @ v).transpose(1, 2).reshape(B, N, C)
            x = self.proj(x)
            x = self.proj_drop(x)
            return x

    class Block(nn.Module):
        def __init__(self, dim, num_heads, mlp_ratio=4., qkv_bias=False, qk_scale=None, drop=0., attn_drop=0.,
                     drop_path=0., act_layer=nn.GELU, norm_layer=nn.LayerNorm, sr_ratio=1):
            super().__init__()
            self.norm1 = norm_layer(dim)
            self.attn = Attention(
                dim,
                num_heads=num_heads, qkv_bias=qkv_bias, qk_scale=qk_scale,
                attn_drop=attn_drop, proj_drop=drop, sr_ratio=sr_ratio)
            # NOTE: drop path for stochastic depth, we shall see if this is better than dropout here
            self.drop_path = DropPath(drop_path) if drop_path > 0. else nn.Identity()
            self.norm2 = norm_layer(dim)
            mlp_hidden_dim = int(dim * mlp_ratio)
            self.mlp = Mlp(in_features=dim, hidden_features=mlp_hidden_dim, act_layer=act_layer, drop=drop)

        def forward(self, x, H, W):
            x = x + self.drop_path(self.attn(self.norm1(x), H, W))
            x = x + self.drop_path(self.mlp(self.norm2(x)))
            return x

    class PatchEmbed(nn.Module):
        """ Image to Patch Embedding
        """

        def __init__(self, img_size=224, patch_size=16, in_chans=3, embed_dim=768):
            super().__init__()
            img_size = to_2tuple(img_size)
            patch_size = to_2tuple(patch_size)

            self.img_size = img_size
            self.patch_size = patch_size
            # assert img_size[0] % patch_size[0] == 0 and img_size[1] % patch_size[1] == 0, \
            #     f"img_size {img_size} should be divided by patch_size {patch_size}."
            self.H, self.W = img_size[0] // patch_size[0], img_size[1] // patch_size[1]
            self.num_patches = self.H * self.W
            self.proj = nn.Conv2d(in_chans, embed_dim, kernel_size=patch_size, stride=patch_size)
            self.norm = nn.LayerNorm(embed_dim)

        def forward(self, x):
            B, C, H, W = x.shape

            x = self.proj(x)
            x = x.flatten(2)
            x = x.transpose(1, 2)
            x = self.norm(x)
            H, W = H // self.patch_size[0], W // self.patch_size[1]

            return x, (H, W)

    class PyramidVisionTransformer(nn.Module):
        def __init__(self, img_size=224, patch_size=16, in_chans=3, num_classes=1000, embed_dims=[64, 128, 256, 512],
                     num_heads=[1, 2, 4, 8], mlp_ratios=[4, 4, 4, 4], qkv_bias=False, qk_scale=None, drop_rate=0.,
                     attn_drop_rate=0., drop_path_rate=0., norm_layer=nn.LayerNorm,
                     depths=[3, 4, 6, 3], sr_ratios=[8, 4, 2, 1], num_stages=4):
            super().__init__()
            self.num_classes = num_classes
            self.depths = depths
            self.num_stages = num_stages

            dpr = [x.item() for x in torch.linspace(0, drop_path_rate, sum(depths))]  # stochastic depth decay rule
            cur = 0

            for i in range(num_stages):
                patch_embed = PatchEmbed(img_size=img_size if i == 0 else img_size // (2 ** (i + 1)),
                                         patch_size=patch_size if i == 0 else 2,
                                         in_chans=in_chans if i == 0 else embed_dims[i - 1],
                                         embed_dim=embed_dims[i])
                # the last stage gets one extra position for the cls token
                num_patches = patch_embed.num_patches if i != num_stages - 1 else patch_embed.num_patches + 1
                pos_embed = nn.Parameter(torch.zeros(1, num_patches, embed_dims[i]))
                pos_drop = nn.Dropout(p=drop_rate)

                block = nn.ModuleList([Block(
                    dim=embed_dims[i], num_heads=num_heads[i], mlp_ratio=mlp_ratios[i], qkv_bias=qkv_bias,
                    qk_scale=qk_scale, drop=drop_rate, attn_drop=attn_drop_rate, drop_path=dpr[cur + j],
                    norm_layer=norm_layer, sr_ratio=sr_ratios[i])
                    for j in range(depths[i])])
                cur += depths[i]

                setattr(self, f"patch_embed{i + 1}", patch_embed)
                setattr(self, f"pos_embed{i + 1}", pos_embed)
                setattr(self, f"pos_drop{i + 1}", pos_drop)
                setattr(self, f"block{i + 1}", block)

            self.norm = norm_layer(embed_dims[3])

            # cls_token
            self.cls_token = nn.Parameter(torch.zeros(1, 1, embed_dims[3]))

            # classification head
            self.head = nn.Linear(embed_dims[3], num_classes) if num_classes > 0 else nn.Identity()

            # init weights
            for i in range(num_stages):
                pos_embed = getattr(self, f"pos_embed{i + 1}")
                trunc_normal_(pos_embed, std=.02)
            trunc_normal_(self.cls_token, std=.02)
            self.apply(self._init_weights)

        def _init_weights(self, m):
            if isinstance(m, nn.Linear):
                trunc_normal_(m.weight, std=.02)
                if isinstance(m, nn.Linear) and m.bias is not None:
                    nn.init.constant_(m.bias, 0)
            elif isinstance(m, nn.LayerNorm):
                nn.init.constant_(m.bias, 0)
                nn.init.constant_(m.weight, 1.0)

        @torch.jit.ignore
        def no_weight_decay(self):
            # return {'pos_embed', 'cls_token'} # has pos_embed may be better
            return {'cls_token'}

        def get_classifier(self):
            return self.head

        def reset_classifier(self, num_classes, global_pool=''):
            self.num_classes = num_classes
            # cls_token has the final-stage embedding width (embed_dims[3])
            embed_dim = self.cls_token.shape[-1]
            self.head = nn.Linear(embed_dim, num_classes) if num_classes > 0 else nn.Identity()

        def _get_pos_embed(self, pos_embed, patch_embed, H, W):
            if H * W == self.patch_embed1.num_patches:
                return pos_embed
            else:
                # resize the position embedding to the current patch grid
                return F.interpolate(
                    pos_embed.reshape(1, patch_embed.H, patch_embed.W, -1).permute(0, 3, 1, 2),
                    size=(H, W), mode="bilinear").reshape(1, -1, H * W).permute(0, 2, 1)

        def forward_features(self, x):
            B = x.shape[0]

            for i in range(self.num_stages):
                patch_embed = getattr(self, f"patch_embed{i + 1}")
                pos_embed = getattr(self, f"pos_embed{i + 1}")
                pos_drop = getattr(self, f"pos_drop{i + 1}")
                block = getattr(self, f"block{i + 1}")
                x, (H, W) = patch_embed(x)

                if i == self.num_stages - 1:
                    # last stage: prepend the cls token and keep its position embedding separate
                    cls_tokens = self.cls_token.expand(B, -1, -1)
                    x = torch.cat((cls_tokens, x), dim=1)
                    pos_embed_ = self._get_pos_embed(pos_embed[:, 1:], patch_embed, H, W)
                    pos_embed = torch.cat((pos_embed[:, 0:1], pos_embed_), dim=1)
                else:
                    pos_embed = self._get_pos_embed(pos_embed, patch_embed, H, W)

                x = pos_drop(x + pos_embed)
                for blk in block:
                    x = blk(x, H, W)
                if i != self.num_stages - 1:
                    # tokens back to (B, C, H, W) for the next stage
                    x = x.reshape(B, H, W, -1).permute(0, 3, 1, 2).contiguous()

            x = self.norm(x)

            return x[:, 0]

        def forward(self, x):
            x = self.forward_features(x)
            x = self.head(x)

            return x

    def _conv_filter(state_dict, patch_size=16):
        """ convert patch embedding weight from manual patchify + linear proj to conv"""
        out_dict = {}
        for k, v in state_dict.items():
            if 'patch_embed.proj.weight' in k:
                v = v.reshape((v.shape[0], 3, patch_size, patch_size))
            out_dict[k] = v

        return out_dict

    @register_model
    def pvt_tiny(pretrained=False, **kwargs):
        model = PyramidVisionTransformer(
            patch_size=4, embed_dims=[64, 128, 320, 512], num_heads=[1, 2, 5, 8], mlp_ratios=[8, 8, 4, 4], qkv_bias=True,
            norm_layer=partial(nn.LayerNorm, eps=1e-6), depths=[2, 2, 2, 2], sr_ratios=[8, 4, 2, 1],
            **kwargs)
        model.default_cfg = _cfg()

        return model


    @register_model
    def pvt_small(pretrained=False, **kwargs):
        model = PyramidVisionTransformer(
            patch_size=4, embed_dims=[64, 128, 320, 512], num_heads=[1, 2, 5, 8], mlp_ratios=[8, 8, 4, 4], qkv_bias=True,
            norm_layer=partial(nn.LayerNorm, eps=1e-6), depths=[3, 4, 6, 3], sr_ratios=[8, 4, 2, 1], **kwargs)
        model.default_cfg = _cfg()

        return model


    @register_model
    def pvt_medium(pretrained=False, **kwargs):
        model = PyramidVisionTransformer(
            patch_size=4, embed_dims=[64, 128, 320, 512], num_heads=[1, 2, 5, 8], mlp_ratios=[8, 8, 4, 4], qkv_bias=True,
            norm_layer=partial(nn.LayerNorm, eps=1e-6), depths=[3, 4, 18, 3], sr_ratios=[8, 4, 2, 1],
            **kwargs)
        model.default_cfg = _cfg()

        return model


    @register_model
    def pvt_large(pretrained=False, **kwargs):
        model = PyramidVisionTransformer(
            patch_size=4, embed_dims=[64, 128, 320, 512], num_heads=[1, 2, 5, 8], mlp_ratios=[8, 8, 4, 4], qkv_bias=True,
            norm_layer=partial(nn.LayerNorm, eps=1e-6), depths=[3, 8, 27, 3], sr_ratios=[8, 4, 2, 1],
            **kwargs)
        model.default_cfg = _cfg()

        return model


    @register_model
    def pvt_huge_v2(pretrained=False, **kwargs):
        model = PyramidVisionTransformer(
            patch_size=4, embed_dims=[128, 256, 512, 768], num_heads=[2, 4, 8, 12], mlp_ratios=[8, 8, 4, 4], qkv_bias=True,
            norm_layer=partial(nn.LayerNorm, eps=1e-6), depths=[3, 10, 60, 3], sr_ratios=[8, 4, 2, 1],
            # drop_rate=0.0, drop_path_rate=0.02)
            **kwargs)
        model.default_cfg = _cfg()

        return model

    if __name__ == '__main__':
        cfg = dict(
            num_classes=2,
            pretrained=False
        )
        model = pvt_small(**cfg)
        data = torch.randn((1, 3, 224, 224))
        output = model(data)
        print(output.shape)  # torch.Size([1, 2])