[ML1] Decision Tree Algorithm (ID3): Theory and Hands-On Practice

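First, we need to understand each step of the decision tree algorithm in depth, starting with the most basic variant, ID3. The resources below cover it in detail; be sure to work through the derivations yourself.

Official documentation: https://scikit-learn.org/stable/modules/tree.html

With that groundwork in place, here is a brief summary.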

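I. Theory

0. Criteria for evaluating classification and prediction algorithms in machine learning:

Accuracy

Speed

Robustness

Scalability

Interpretability

1. What is a decision tree?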

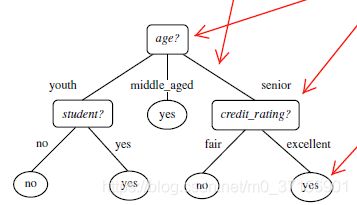

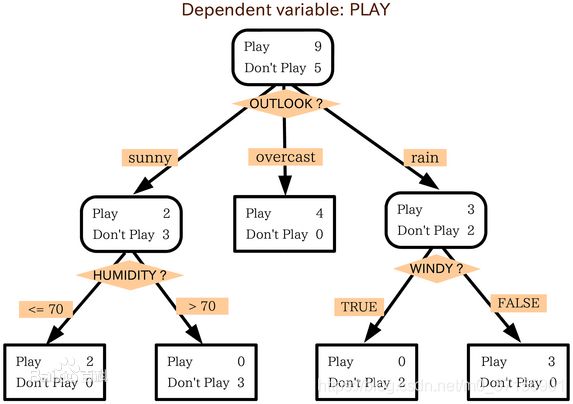

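A decision tree is a flowchart-like tree structure: each internal node represents a test on an attribute, each branch represents an outcome of that test, and each leaf node represents a class or a class distribution. The topmost node of the tree is the root node.

2. Decision trees are an important algorithm among the classification methods in machine learning.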

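3.1 Entropy:

Information is an abstract notion; how can it be measured?

In 1948, Shannon introduced the concept of "information entropy".

The amount of information in a message is directly related to its uncertainty: to figure out something highly uncertain, or something we know nothing about, we need a lot of information. In other words, the amount of information equals the amount of uncertainty.

Example: guessing the World Cup champion. If you know nothing about the teams, how many guesses do you need?

In practice, the teams do not all have the same chance of winning.

Information is measured in bits.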

$$H(X) = -\sum_{x} P(x)\,\log_2 P(x)$$

The greater the uncertainty of a variable, the greater its entropy.
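As a quick check of the World Cup example, here is a minimal sketch of the entropy calculation. The 32-team field and the skewed odds are assumptions made for illustration; the text above does not give either.

```python
import math


def entropy(probabilities):
    """Shannon entropy, in bits, of a discrete probability distribution."""
    return -sum(p * math.log2(p) for p in probabilities if p > 0)


# Assumption: 32 teams, all equally likely to win (we know nothing).
uniform = [1 / 32] * 32

# Assumption: hypothetical odds with one favourite at 50%;
# the other 31 teams share the remaining 50% equally.
skewed = [0.5] + [0.5 / 31] * 31

print(entropy(uniform))  # 5.0 bits: five yes/no questions are enough
print(entropy(skewed))   # less than 5 bits: unequal odds mean less uncertainty
```

With equal odds the entropy reaches its maximum of log2(32) = 5 bits; any prior knowledge about the teams lowers it.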

3.1 Decision tree induction algorithm (ID3)

1970s-1980s: J. Ross Quinlan developed the ID3 algorithm.

Choosing the attribute to test at a node

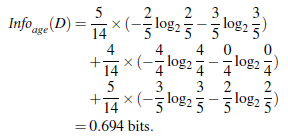

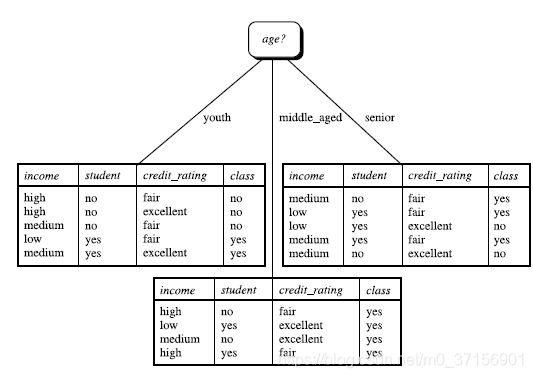

Information gain: Gain(A) = Info(D) - Info_A(D)

That is, how much information is gained by using attribute A to split the samples at a node.

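For the data in Section IV (9 "yes" and 5 "no" samples), splitting on age gives:

$$\mathrm{Info}(D) = -\frac{9}{14}\log_2\frac{9}{14} - \frac{5}{14}\log_2\frac{5}{14} = 0.940 \text{ bits}$$

$$\mathrm{Info}_{age}(D) = \frac{5}{14}\left(-\frac{2}{5}\log_2\frac{2}{5} - \frac{3}{5}\log_2\frac{3}{5}\right) + \frac{4}{14}\cdot 0 + \frac{5}{14}\left(-\frac{3}{5}\log_2\frac{3}{5} - \frac{2}{5}\log_2\frac{2}{5}\right) = 0.694 \text{ bits}$$

$$\mathrm{Gain}(age) = \mathrm{Info}(D) - \mathrm{Info}_{age}(D) = 0.940 - 0.694 = 0.246 \text{ bits}$$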

Similarly, Gain(income) = 0.029, Gain(student) = 0.151, and Gain(credit_rating) = 0.048.

Since age has the highest information gain, it is chosen as the root node.
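The gains can be recomputed from the table in Section IV with a few lines of plain Python. This is a minimal sketch; the helper names (`info`, `info_after_split`, `gain`) are my own. The figures quoted above are rounded from intermediate values, so the third decimal place may differ slightly from what this prints.

```python
import math
from collections import Counter

ATTRIBUTES = ["age", "income", "student", "credit_rating"]

# Rows copied from the Section IV table: the four attributes, then the class.
ROWS = [
    ("youth", "high", "no", "fair", "no"),
    ("youth", "high", "no", "excellent", "no"),
    ("middle_aged", "high", "no", "fair", "yes"),
    ("senior", "medium", "no", "fair", "yes"),
    ("senior", "low", "yes", "fair", "yes"),
    ("senior", "low", "yes", "excellent", "no"),
    ("middle_aged", "low", "yes", "excellent", "yes"),
    ("youth", "medium", "no", "fair", "no"),
    ("youth", "low", "yes", "fair", "yes"),
    ("senior", "medium", "yes", "fair", "yes"),
    ("youth", "medium", "yes", "excellent", "yes"),
    ("middle_aged", "medium", "no", "excellent", "yes"),
    ("middle_aged", "high", "yes", "fair", "yes"),
    ("senior", "medium", "no", "excellent", "no"),
]


def info(labels):
    """Info(D): entropy in bits of a list of class labels."""
    total = len(labels)
    return -sum((n / total) * math.log2(n / total)
                for n in Counter(labels).values())


def info_after_split(rows, col):
    """Info_A(D): weighted entropy after partitioning on column `col`."""
    groups = {}
    for row in rows:
        groups.setdefault(row[col], []).append(row[-1])
    return sum(len(g) / len(rows) * info(g) for g in groups.values())


def gain(rows, col):
    """Gain(A) = Info(D) - Info_A(D)."""
    return info([row[-1] for row in rows]) - info_after_split(rows, col)


gains = {name: gain(ROWS, i) for i, name in enumerate(ATTRIBUTES)}
for name, value in gains.items():
    print(f"Gain({name}) = {value:.3f}")

best = max(gains, key=gains.get)
print("root attribute:", best)
```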

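Algorithm:

- The tree starts as a single node representing the training samples (step 1).
- If the samples all belong to the same class, the node becomes a leaf and is labeled with that class (steps 2 and 3).
- Otherwise, the algorithm uses an entropy-based measure called information gain as a heuristic to select the attribute that best separates the samples into classes (step 6). This attribute becomes the "test" or "decision" attribute at the node (step 7). In this version of the algorithm, all attributes are categorical, that is, discrete-valued; continuous attributes must be discretized.
- A branch is created for each known value of the test attribute, and the samples are partitioned accordingly (steps 8-10).
- The algorithm applies the same process recursively to build a decision tree for the samples in each partition. Once an attribute has been used at a node, it need not be considered in any of that node's descendants (step 13).
- The recursive partitioning stops only when one of the following conditions holds:
  - (a) All samples at the given node belong to the same class (steps 2 and 3).
  - (b) No attributes remain on which the samples can be further partitioned (step 4). In this case, majority voting is used (step 5): the node is converted into a leaf and labeled with the majority class among its samples. Alternatively, the class distribution of the node's samples can be stored.
  - (c) A branch test_attribute = a_i has no samples (step 11). In this case, a leaf is created with the majority class in samples (step 12).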
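The steps above can be sketched in plain Python as follows. This is an illustrative implementation under my own naming (`id3`, `best_attribute`, `classify`, and the nested-dict tree format are not from any library), run on the data from Section IV.

```python
import math
from collections import Counter

NAMES = ["age", "income", "student", "credit_rating"]
# Rows copied from the Section IV table (RID dropped; last field is the class).
DATA = """
youth high no fair no
youth high no excellent no
middle_aged high no fair yes
senior medium no fair yes
senior low yes fair yes
senior low yes excellent no
middle_aged low yes excellent yes
youth medium no fair no
youth low yes fair yes
senior medium yes fair yes
youth medium yes excellent yes
middle_aged medium no excellent yes
middle_aged high yes fair yes
senior medium no excellent no
"""

rows, labels = [], []
for line in DATA.strip().splitlines():
    *values, label = line.split()
    rows.append(dict(zip(NAMES, values)))
    labels.append(label)


def entropy(labels):
    total = len(labels)
    return -sum((n / total) * math.log2(n / total)
                for n in Counter(labels).values())


def best_attribute(rows, labels, attributes):
    """Return the attribute with the highest information gain."""
    def gain(attr):
        remainder = 0.0
        for value in {r[attr] for r in rows}:
            subset = [l for r, l in zip(rows, labels) if r[attr] == value]
            remainder += len(subset) / len(labels) * entropy(subset)
        return entropy(labels) - remainder
    return max(attributes, key=gain)


def id3(rows, labels, attributes, domains):
    if len(set(labels)) == 1:          # (a) all samples in one class
        return labels[0]
    majority = Counter(labels).most_common(1)[0][0]
    if not attributes:                 # (b) no attributes left: majority vote
        return majority
    attr = best_attribute(rows, labels, attributes)
    remaining = [a for a in attributes if a != attr]  # never reuse attr below
    node = {attr: {}}
    for value in domains[attr]:        # one branch per known value
        pairs = [(r, l) for r, l in zip(rows, labels) if r[attr] == value]
        if not pairs:                  # (c) empty branch: majority class leaf
            node[attr][value] = majority
        else:
            sub_rows = [r for r, _ in pairs]
            sub_labels = [l for _, l in pairs]
            node[attr][value] = id3(sub_rows, sub_labels, remaining, domains)
    return node


def classify(tree, row):
    """Follow branches until a leaf (a plain class label) is reached."""
    while isinstance(tree, dict):
        attr = next(iter(tree))
        tree = tree[attr][row[attr]]
    return tree


domains = {a: sorted({r[a] for r in rows}) for a in NAMES}
tree = id3(rows, labels, NAMES, domains)
print(tree)
```

On this dataset the root test is age, the youth branch is resolved by student, the senior branch by credit_rating, and middle_aged is a pure "yes" leaf.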

3.1 Other algorithms:

C4.5: Quinlan

Classification and Regression Trees (CART): L. Breiman, J. Friedman, R. Olshen, C. Stone

What they share: all are greedy, top-down algorithms.

How they differ: the attribute selection measure. ID3 uses information gain, C4.5 uses gain ratio, and CART uses the Gini index.
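To make the three measures concrete, here is a small sketch that evaluates each of them for the age attribute from Section IV. The function names are my own. One simplification to be aware of: CART normally considers binary splits, whereas `gini_of_split` below scores the three-way split on age directly, purely for comparison.

```python
import math
from collections import Counter

# age column and class column, in the row order of the Section IV table
age = ["youth", "youth", "middle_aged", "senior", "senior", "senior",
       "middle_aged", "youth", "youth", "senior", "youth", "middle_aged",
       "middle_aged", "senior"]
labels = ["no", "no", "yes", "yes", "yes", "no", "yes",
          "no", "yes", "yes", "yes", "yes", "yes", "no"]


def entropy(items):
    total = len(items)
    return -sum((n / total) * math.log2(n / total)
                for n in Counter(items).values())


def gini(items):
    """Gini impurity: 1 - sum of squared class proportions."""
    total = len(items)
    return 1.0 - sum((n / total) ** 2 for n in Counter(items).values())


def partition(values, labels):
    """Group class labels by attribute value."""
    groups = {}
    for value, label in zip(values, labels):
        groups.setdefault(value, []).append(label)
    return list(groups.values())


def information_gain(values, labels):      # ID3
    groups = partition(values, labels)
    remainder = sum(len(g) / len(labels) * entropy(g) for g in groups)
    return entropy(labels) - remainder


def gain_ratio(values, labels):            # C4.5
    split_info = entropy(values)           # entropy of the partition sizes
    if split_info == 0:
        return 0.0
    return information_gain(values, labels) / split_info


def gini_of_split(values, labels):         # CART-style impurity (multiway here)
    groups = partition(values, labels)
    return sum(len(g) / len(labels) * gini(g) for g in groups)


print(f"information gain: {information_gain(age, labels):.3f}")
print(f"gain ratio:       {gain_ratio(age, labels):.3f}")
print(f"Gini before:      {gini(labels):.3f}")
print(f"Gini after split: {gini_of_split(age, labels):.3f}")
```

Gain ratio divides the gain by the entropy of the split itself, which penalizes attributes with many distinct values; the Gini index measures impurity without logarithms.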

3.2 How are continuous attributes handled?
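The question is left open here. One common answer, used by later algorithms such as C4.5 and CART rather than by plain ID3, is to turn a continuous attribute into a binary test "value <= threshold": sort the values, try the midpoint between each pair of adjacent distinct values, and keep the threshold with the highest information gain. A minimal sketch, with made-up ages and labels:

```python
import math
from collections import Counter


def entropy(labels):
    total = len(labels)
    return -sum((n / total) * math.log2(n / total)
                for n in Counter(labels).values())


def best_threshold(values, labels):
    """Return (threshold, gain) of the best binary split value <= threshold."""
    pairs = sorted(zip(values, labels))
    base = entropy(labels)
    best_gain, best_cut = 0.0, None
    for (v1, _), (v2, _) in zip(pairs, pairs[1:]):
        if v1 == v2:
            continue                      # no boundary between equal values
        cut = (v1 + v2) / 2               # candidate: midpoint of neighbours
        left = [l for v, l in pairs if v <= cut]
        right = [l for v, l in pairs if v > cut]
        remainder = (len(left) * entropy(left)
                     + len(right) * entropy(right)) / len(pairs)
        if base - remainder > best_gain:
            best_gain, best_cut = base - remainder, cut
    return best_cut, best_gain


# Hypothetical numeric ages and labels, for illustration only.
ages = [22, 25, 28, 41, 45, 52]
buys = ["no", "no", "no", "yes", "yes", "yes"]

cut, gain = best_threshold(ages, buys)
print(cut, gain)  # 34.5 separates the two classes perfectly (gain of 1 bit)
```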

4. Tree pruning (to avoid overfitting)

4.1 Pre-pruning

4.2 Post-pruning
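Pre-pruning stops growing the tree early, while post-pruning grows the full tree first and then cuts branches back. In scikit-learn, both can be tried roughly as sketched below. The iris dataset and the specific parameter values are my own choices for illustration; `ccp_alpha` (cost-complexity pruning) requires scikit-learn 0.22 or later.

```python
from sklearn.datasets import load_iris
from sklearn.tree import DecisionTreeClassifier

X, y = load_iris(return_X_y=True)

# Unpruned reference tree.
full = DecisionTreeClassifier(criterion="entropy", random_state=0).fit(X, y)

# Pre-pruning: limit growth up front (depth and leaf size chosen arbitrarily).
pre = DecisionTreeClassifier(criterion="entropy", max_depth=2,
                             min_samples_leaf=5, random_state=0).fit(X, y)

# Post-pruning: compute the cost-complexity pruning path of the full tree,
# then refit with one of the candidate alphas (here simply the middle one).
path = full.cost_complexity_pruning_path(X, y)
alphas = path.ccp_alphas
alpha = max(float(alphas[len(alphas) // 2]), 0.0)
post = DecisionTreeClassifier(criterion="entropy", ccp_alpha=alpha,
                              random_state=0).fit(X, y)

print("full:", full.tree_.node_count, "nodes")
print("pre-pruned:", pre.tree_.node_count, "nodes, depth", pre.get_depth())
print("post-pruned:", post.tree_.node_count, "nodes")
```

In practice the limits and the value of alpha would be chosen by cross-validation rather than picked by hand.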

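5. Advantages of decision trees:

Intuitive, easy to understand, and effective on small datasets

6. Disadvantages of decision trees:

Handles continuous variables poorly

Errors grow quickly when there are many classes

Scalability is only moderate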

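II. Hands-on: building your own decision tree

1. Python

2. Python machine learning library: scikit-learn

2.1 Features:

Simple and efficient tools for data mining and machine learning analysis

Open to all users and highly reusable across different needs

Built on NumPy, SciPy, and matplotlib

Open source and commercially usable under the BSD license

2.2 Problem areas covered:

Classification, regression, clustering, dimensionality reduction

Model selection, preprocessing

3. Using scikit-learn

Install scikit-learn: pip, easy_install, or the Windows installer

Install the required packages NumPy, SciPy, and matplotlib; alternatively, use Anaconda, which bundles NumPy, SciPy, and other common scientific computing packages

Things to watch when installing: the Python interpreter version (2.7 or 3.4?) and whether the system is 32-bit or 64-bit

Documentation: http://scikit-learn.org/stable/modules/tree.html

Walk through the Python code

Install Graphviz: http://www.graphviz.org/

Configure the environment variables so that the dot command can be found

Convert the dot file to PDF to visualize the decision tree: dot -Tpdf iris.dot -o output.pdf

III. Decision tree in code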

    # csv is part of the standard library; used to read the CSV file
    import csv

    from sklearn import preprocessing, tree
    # DictVectorizer converts categorical feature values into dummy (one-hot) variables
    from sklearn.feature_extraction import DictVectorizer

    # 1. Preprocessing: load the data and bring it into a uniform shape
    featureList = []  # one dict per sample: {attribute name: value}
    labelList = []    # class label of each sample

    # Python 2 used open("buyComputer.csv", "rb") and reader.next() instead
    with open("buyComputer.csv", "rt", newline="") as myData:
        reader = csv.reader(myData)
        # Header row:
        # ['RID', 'age', 'income', 'student', 'credit_rating', 'class_buys_computer']
        headers = next(reader)
        for row in reader:
            # e.g. row = ['1', 'youth', 'high', 'no', 'fair', 'no']
            labelList.append(row[-1])
            # Skip the RID column (index 0) and the label column (last)
            rowDict = {headers[i]: row[i] for i in range(1, len(row) - 1)}
            # e.g. {'age': 'youth', 'income': 'high', 'student': 'no', 'credit_rating': 'fair'}
            featureList.append(rowDict)

    # Vectorize features
    vec = DictVectorizer()
    dummyX = vec.fit_transform(featureList).toarray()
    # dummyX[0] is [0. 0. 1. 0. 1. 1. 0. 0. 1. 0.]

    # get_feature_names_out() needs scikit-learn >= 1.0;
    # older releases used get_feature_names(), which was later removed
    featureNames = list(vec.get_feature_names_out())
    # ['age=middle_aged', 'age=senior', 'age=youth', 'credit_rating=excellent',
    #  'credit_rating=fair', 'income=high', 'income=low', 'income=medium',
    #  'student=no', 'student=yes']

    # labelList: ['no', 'no', 'yes', 'yes', 'yes', 'no', 'yes', 'no', 'yes',
    #             'yes', 'yes', 'yes', 'yes', 'no']

    # Vectorize class labels
    lb = preprocessing.LabelBinarizer()
    dummyY = lb.fit_transform(labelList)
    # dummyY is [[0] [0] [1] [1] ...]

    # 2. Call the library: train a decision tree classifier using entropy
    clf = tree.DecisionTreeClassifier(criterion="entropy")
    clf = clf.fit(dummyX, dummyY)

    # 3. Visualize the model
    # feature_names maps the 0/1 columns back to readable names such as age=youth
    with open("dsTree.dot", "w") as f:
        tree.export_graphviz(clf, feature_names=featureNames, out_file=f)

    # Predict a new sample: copy the first row, then change age from
    # youth to middle_aged. copy() keeps dummyX itself unmodified.
    oneRowX = dummyX[0, :]
    newRowX = oneRowX.copy()
    newRowX[0] = 1  # age=middle_aged
    newRowX[2] = 0  # age=youth

    # predict() expects a 2-D array, hence reshape(1, -1)
    predictY = clf.predict(newRowX.reshape(1, -1))
    print(predictY)

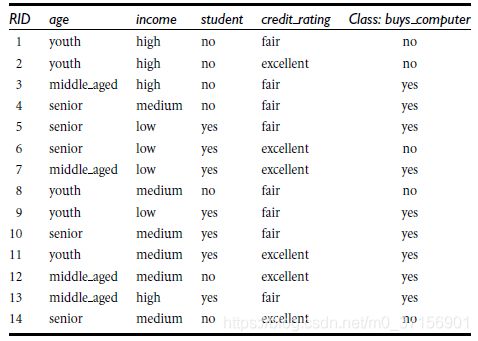

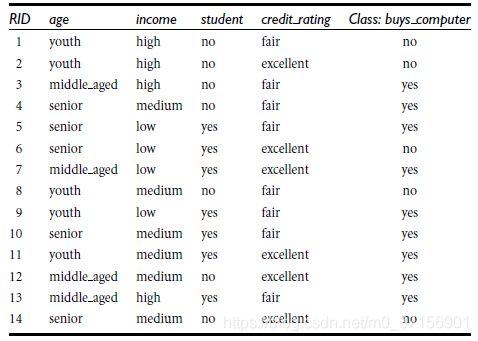
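To make the dummy-variable step in the code above less of a black box, here is a plain-Python sketch of the same kind of encoding. `one_hot` is a hypothetical helper written for illustration, not scikit-learn's implementation; it only mimics the sorted "name=value" column layout that appears in the commented output above.

```python
def one_hot(records):
    """Encode a list of {attribute: value} dicts as 0/1 rows.

    Columns are the sorted "attribute=value" strings seen in the data.
    """
    names = sorted({f"{k}={v}" for rec in records for k, v in rec.items()})
    index = {name: i for i, name in enumerate(names)}
    matrix = []
    for rec in records:
        row = [0.0] * len(names)
        for k, v in rec.items():
            row[index[f"{k}={v}"]] = 1.0
        matrix.append(row)
    return names, matrix


# Three rows from Section IV (RIDs 1, 6, 12); together they cover every value.
records = [
    {"age": "youth", "income": "high", "student": "no", "credit_rating": "fair"},
    {"age": "senior", "income": "low", "student": "yes", "credit_rating": "excellent"},
    {"age": "middle_aged", "income": "medium", "student": "no", "credit_rating": "excellent"},
]

names, matrix = one_hot(records)
print(names)
print(matrix[0])  # [0.0, 0.0, 1.0, 0.0, 1.0, 1.0, 0.0, 0.0, 1.0, 0.0]
```

The first row matches the first row of dummyX shown in the comments: positions 0 and 2 are age=middle_aged and age=youth, which is why the prediction step flips exactly those two entries.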

IV. Experimental data

| RID | age | income | student | credit_rating | class_buys_computer |
| --- | --- | --- | --- | --- | --- |
| 1 | youth | high | no | fair | no |
| 2 | youth | high | no | excellent | no |
| 3 | middle_aged | high | no | fair | yes |
| 4 | senior | medium | no | fair | yes |
| 5 | senior | low | yes | fair | yes |
| 6 | senior | low | yes | excellent | no |
| 7 | middle_aged | low | yes | excellent | yes |
| 8 | youth | medium | no | fair | no |
| 9 | youth | low | yes | fair | yes |
| 10 | senior | medium | yes | fair | yes |
| 11 | youth | medium | yes | excellent | yes |
| 12 | middle_aged | medium | no | excellent | yes |
| 13 | middle_aged | high | yes | fair | yes |
| 14 | senior | medium | no | excellent | no |