MODNet 图片抠像

引用来源

@article{MODNet,

author = {Zhanghan Ke and Kaican Li and Yurou Zhou and Qiuhua Wu and Xiangyu Mao and Qiong Yan and Rynson W.H. Lau},

title = {Is a Green Screen Really Necessary for Real-Time Portrait Matting?},

journal={ArXiv},

volume={abs/2011.11961},

year = {2020},

}

利用神经网络实现图片抠像,虽然比不上PS高手精细的抠像成果,但实现自动抠像还是蛮不错滴。本文介绍MODNet抠像。

克隆MODNet到本地目录 MODNet

git clone https://github.com/ZHKKKe/MODNet

requirements.txt包括如下:

numpy

gdown

opencv-python

pillow

torch == 1.1.0

torchvision == 0.3.0

安装运行所需的环境

pip install -r requirements.txt

预训练模型在这里 :

modnet_photographic_portrait_matting.ckpt

模型百度网盘:在这里

密码:gchf

把模型下载到目录:MODNet/pretrained,下面运行需要加载此模型。

现在,工作目录是MODNet,在其目录下建立输入图片和输出图片的目录:

input-img, output-img

把需要抠图的图片放到input-img

MODNet目录下,运行

python -m demo.image_matting.colab.inference-1 \

--input-path input-img \

--output-path output-img \

--ckpt-path pretrained/modnet_photographic_portrait_matting.ckpt

现在可以从output-img中找到已经抠好的图片xxx_fg.png,遮罩图片xxx_matte.png

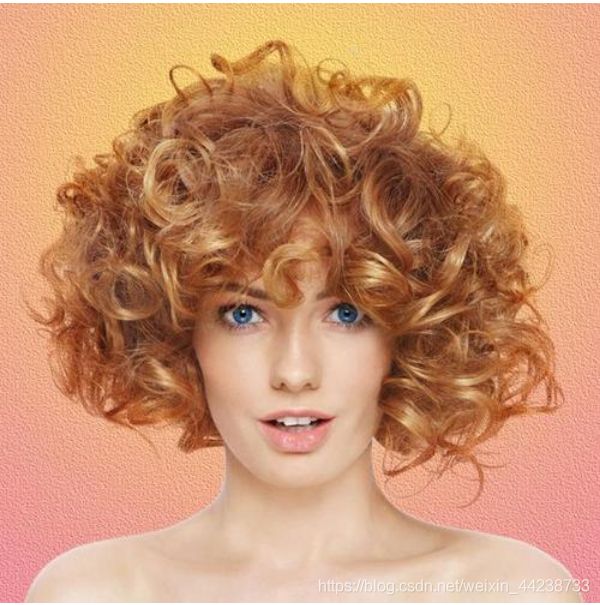

看看MODNet模型的抠图效果

python程序如下。原作者的程序中只给出遮罩matte,没有抠图结果。鄙人不才,添加了抠出的前景图片,供参考。

import os

import sys

import argparse

import numpy as np

from PIL import Image

import torch

import torch.nn as nn

import torch.nn.functional as F

import torchvision.transforms as transforms

from src.models.modnet import MODNet

if __name__ == '__main__':

# define cmd arguments

parser = argparse.ArgumentParser()

parser.add_argument('--input-path', type=str, help='path of input images')

parser.add_argument('--output-path', type=str, help='path of output images')

parser.add_argument('--ckpt-path', type=str, help='path of pre-trained MODNet')

args = parser.parse_args()

# check input arguments

if not os.path.exists(args.input_path):

print('Cannot find input path: {0}'.format(args.input_path))

exit()

if not os.path.exists(args.output_path):

print('Cannot find output path: {0}'.format(args.output_path))

exit()

if not os.path.exists(args.ckpt_path):

print('Cannot find ckpt path: {0}'.format(args.ckpt_path))

exit()

# define hyper-parameters

ref_size = 512

# define image to tensor transform

im_transform = transforms.Compose(

[

transforms.ToTensor(),

transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))

]

)

# create MODNet and load the pre-trained ckpt

modnet = MODNet(backbone_pretrained=False)

modnet = nn.DataParallel(modnet).cuda()

modnet.load_state_dict(torch.load(args.ckpt_path))

modnet.eval()

# 注:程序中的数字仅表示某张输入图片尺寸,如1080x1440,此处只为记住其转换过程。

# inference images

im_names = os.listdir(args.input_path)

for im_name in im_names:

print('Process image: {0}'.format(im_name))

# read image

im = Image.open(os.path.join(args.input_path, im_name))

# unify image channels to 3

im = np.asarray(im)

if len(im.shape) == 2:

im = im[:, :, None]

if im.shape[2] == 1:

im = np.repeat(im, 3, axis=2)

elif im.shape[2] == 4:

im = im[:, :, 0:3]

im_org = im # 保存numpy原始数组 (1080,1440,3)

# convert image to PyTorch tensor

im = Image.fromarray(im)

im = im_transform(im)

# add mini-batch dim

im = im[None, :, :, :]

# resize image for input

im_b, im_c, im_h, im_w = im.shape

if max(im_h, im_w) < ref_size or min(im_h, im_w) > ref_size:

if im_w >= im_h:

im_rh = ref_size

im_rw = int(im_w / im_h * ref_size)

elif im_w < im_h:

im_rw = ref_size

im_rh = int(im_h / im_w * ref_size)

else:

im_rh = im_h

im_rw = im_w

im_rw = im_rw - im_rw % 32

im_rh = im_rh - im_rh % 32

im = F.interpolate(im, size=(im_rh, im_rw), mode='area')

# inference

_, _, matte = modnet(im.cuda(), True) # 从模型获得的 matte ([1,1,512, 672])

# resize and save matte,foreground picture

matte = F.interpolate(matte, size=(im_h, im_w), mode='area') #内插,扩展到([1,1,1080,1440]) 范围[0,1]

matte = matte[0][0].data.cpu().numpy() # torch 张量转换成numpy (1080, 1440)

matte_name = im_name.split('.')[0] + '_matte.png'

Image.fromarray(((matte * 255).astype('uint8')), mode='L').save(os.path.join(args.output_path, matte_name))

matte_org = np.repeat(np.asarray(matte)[:, :, None], 3, axis=2) # 扩展到 (1080, 1440, 3) 以便和im_org计算

foreground = im_org * matte_org + np.full(im_org.shape, 255) * (1 - matte_org) # 计算前景,获得抠像

fg_name = im_name.split('.')[0] + '_fg.png'

Image.fromarray(((foreground).astype('uint8')), mode='RGB').save(os.path.join(args.output_path, fg_name))