CANN Training Camp Season 3, Advanced Class: Model Training and Tuning with the Ascend PyTorch Framework - Digit Recognition


Environment Setup

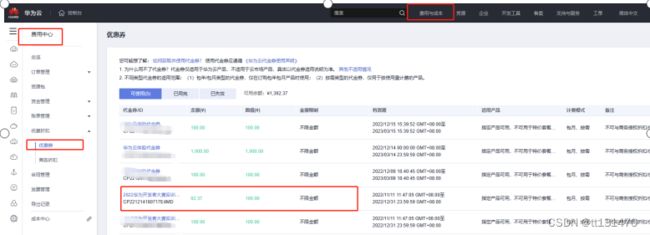

The approved image request and the vouchers credited to the account are shown below:

An ECS server was then created from this image with the following configuration:

| Item | Configuration |
| --- | --- |
| Specifications | AI-accelerated, ai1s.large.4, 2 vCPUs, 8 GiB |
| Image | 6.0.RC1.alpha001_new (shared image), version: Ubuntu 18.04 server 64bit |

Logging In to the ECS Server

Use Xshell to connect to the ECS server over SSH as the root user:

The default login shell of the HwHiAiUser user must be changed to bash. As root, open /etc/passwd with vi and change the HwHiAiUser entry to:

HwHiAiUser:x:1000:1000::/home/HwHiAiUser:/bin/bash
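Each /etc/passwd entry has seven colon-separated fields, and only the last one (the login shell) changes here. The sketch below, using a hypothetical helper `parse_passwd_line`, splits the entry above to show which field is which. It only parses a string; on the server itself, Python's standard `pwd.getpwnam("HwHiAiUser").pw_shell` reads the live value, and `usermod -s /bin/bash HwHiAiUser` is a common alternative to editing the file by hand.

```python
# Field names of an /etc/passwd entry, in order
PASSWD_FIELDS = ("name", "password", "uid", "gid", "gecos", "home", "shell")


def parse_passwd_line(line):
    """Hypothetical helper: split one /etc/passwd entry into its seven fields."""
    values = line.rstrip("\n").split(":")
    if len(values) != len(PASSWD_FIELDS):
        raise ValueError("expected 7 fields, got %d" % len(values))
    return dict(zip(PASSWD_FIELDS, values))


entry = parse_passwd_line("HwHiAiUser:x:1000:1000::/home/HwHiAiUser:/bin/bash")
print(entry["shell"])  # /bin/bash
```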

Building and Running the Project

About the MNIST Dataset

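MNIST is a public dataset, often called the "hello world" of deep learning, and is commonly used as a benchmark to check whether a model or framework works as expected.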

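MNIST consists of images of the handwritten digits 0 to 9 together with their labels: 60,000 training samples and 10,000 test samples, each a 28 × 28-pixel grayscale image. The data comes from the U.S. National Institute of Standards and Technology (NIST). The training set was written by 250 different people, 50% of them high school students and 50% employees of the Census Bureau.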
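The raw MNIST files (which torchvision downloads and unpacks) use the IDX format: two zero bytes, a data-type byte (0x08 for unsigned bytes), a dimension count, then each dimension as a big-endian 32-bit integer. As a minimal sketch, the hypothetical helper `parse_idx_header` below decodes such a header. The header bytes are built by hand to match the 60,000 × 28 × 28 training images described above; no real file is read.

```python
import struct

# IDX data-type codes (0x08 = unsigned byte, used by MNIST)
IDX_TYPES = {0x08: "ubyte", 0x09: "byte", 0x0B: "short",
             0x0C: "int", 0x0D: "float", 0x0E: "double"}


def parse_idx_header(data):
    """Hypothetical helper: return (type_name, dims) from an IDX header."""
    zero1, zero2, type_code, ndim = struct.unpack(">BBBB", data[:4])
    if zero1 != 0 or zero2 != 0:
        raise ValueError("not an IDX file: the first two bytes must be zero")
    dims = struct.unpack(">" + "I" * ndim, data[4:4 + 4 * ndim])
    return IDX_TYPES[type_code], dims


# Hand-built header shaped like the training-image file: 3 dims, 60000 x 28 x 28
header = bytes([0, 0, 0x08, 3]) + struct.pack(">III", 60000, 28, 28)
type_name, dims = parse_idx_header(header)
print(type_name, dims)    # ubyte (60000, 28, 28)
print(dims[1] * dims[2])  # 784 pixels per image
```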

Code:

```python
import torch
import torch.nn as nn
import torch.optim as optim
from torch.utils.data import DataLoader
from torchvision import datasets, transforms


class LeNet(nn.Module):
    def __init__(self):
        super(LeNet, self).__init__()
        # 1x28x28 -> Conv2d(k=3, s=1, p=2) -> 6x30x30 -> MaxPool -> 6x15x15
        self.conv1 = nn.Sequential(
            nn.Conv2d(1, 6, 3, 1, 2),
            nn.ReLU(),
            nn.MaxPool2d(2, 2),
        )
        # 6x15x15 -> Conv2d(k=5) -> 16x11x11 -> MaxPool -> 16x5x5
        self.conv2 = nn.Sequential(
            nn.Conv2d(6, 16, 5),
            nn.ReLU(),
            nn.MaxPool2d(2, 2),
        )
        self.fc1 = nn.Sequential(
            nn.Linear(16 * 5 * 5, 120),
            nn.BatchNorm1d(120),
            nn.ReLU(),
        )
        self.fc2 = nn.Sequential(
            nn.Linear(120, 84),
            # Batch normalization speeds up convergence. It is usually placed
            # after the fully connected layer and before the activation.
            nn.BatchNorm1d(84),
            nn.ReLU(),
        )
        # Output raw logits: nn.CrossEntropyLoss applies log-softmax itself,
        # so no Softmax layer is needed here.
        self.fc3 = nn.Linear(84, 10)

    def forward(self, x):
        x = self.conv1(x)
        x = self.conv2(x)
        x = x.view(x.size(0), -1)  # flatten to (batch, 16*5*5)
        x = self.fc1(x)
        x = self.fc2(x)
        x = self.fc3(x)
        return x
```
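The input size of `nn.Linear(16 * 5 * 5, 120)` follows from the convolution and pooling output-size formula floor((n + 2p - k) / s) + 1, assuming dilation 1 and PyTorch's default floor rounding. The hypothetical helper `conv_out` below traces a 28 × 28 MNIST image through the layers of `LeNet` in plain Python.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Hypothetical helper: output side length of Conv2d/MaxPool2d (dilation 1)."""
    return (size + 2 * padding - kernel) // stride + 1


side = 28                                             # MNIST input: 1 x 28 x 28
side = conv_out(side, kernel=3, stride=1, padding=2)  # conv1 -> 30
side = conv_out(side, kernel=2, stride=2)             # pool  -> 15
side = conv_out(side, kernel=5)                       # conv2 -> 11
side = conv_out(side, kernel=2, stride=2)             # pool  -> 5
flat = 16 * side * side                               # 16 channels after conv2
print(side, flat)  # 5 400
```

If the first convolution's padding were removed, the flattened size would change and `fc1` would need a different input dimension.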

```python
# Fall back to the CPU when no CUDA device is available
device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

batch_size = 64
LR = 0.001
Momentum = 0.9

# Download the dataset (skipped if it already exists under ./data/)
train_dataset = datasets.MNIST(root='./data/',
                               train=True,
                               transform=transforms.ToTensor(),
                               download=True)
test_dataset = datasets.MNIST(root='./data/',
                              train=False,
                              transform=transforms.ToTensor(),
                              download=True)

# Build the data iterators
train_loader = DataLoader(dataset=train_dataset,
                          batch_size=batch_size,
                          shuffle=True)
test_loader = DataLoader(dataset=test_dataset,
                         batch_size=batch_size,
                         shuffle=False)

# Optional: visualize one batch of training images
# (ToTensor only scales pixels to [0, 1], so no un-normalization is needed)
# import cv2
# import torchvision
# images, labels = next(iter(train_loader))
# img = torchvision.utils.make_grid(images)   # 3 x H x W grid
# img = img.numpy().transpose(1, 2, 0)        # H x W x C for OpenCV
# cv2.imshow('win', img)
# key_pressed = cv2.waitKey(0)
```
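With `batch_size = 64` and the `DataLoader` default `drop_last=False`, the final partial batch is kept. The plain-Python arithmetic below, using only the dataset sizes stated earlier, counts the batches per epoch. This is relevant to `nn.BatchNorm1d`, which raises an error in training mode for a batch containing a single sample; here the last training batch holds 32 samples, so that case does not arise.

```python
import math

batch_size = 64
num_train, num_test = 60000, 10000

# drop_last=False keeps the last, smaller batch, hence ceil()
train_batches = math.ceil(num_train / batch_size)
test_batches = math.ceil(num_test / batch_size)
last_train = num_train - (train_batches - 1) * batch_size  # size of final batch
print(train_batches, test_batches, last_train)  # 938 157 32
```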

```python
net = LeNet().to(device)
criterion = nn.CrossEntropyLoss()  # loss function
optimizer = optim.SGD(net.parameters(), lr=LR, momentum=Momentum)
num_epochs = 1

if __name__ == '__main__':
    for epoch in range(num_epochs):
        net.train()  # training mode: BatchNorm uses per-batch statistics
        sum_loss = 0.0
        for i, (inputs, labels) in enumerate(train_loader):
            inputs, labels = inputs.to(device), labels.to(device)

            optimizer.zero_grad()              # reset the gradients
            outputs = net(inputs)              # forward pass
            loss = criterion(outputs, labels)  # compute the loss
            loss.backward()                    # backpropagation
            optimizer.step()                   # one parameter update

            sum_loss += loss.item()
            if i % 100 == 99:  # report the average loss every 100 batches
                print('[%d,%d] loss:%.03f' % (epoch + 1, i + 1, sum_loss / 100))
                sum_loss = 0.0

    # Evaluate on the test set
    net.eval()  # evaluation mode: BatchNorm uses its running statistics
    correct = 0
    total = 0
    with torch.no_grad():  # gradients are not needed for evaluation
        for images, labels in test_loader:
            images, labels = images.to(device), labels.to(device)
            output_test = net(images)
            # predicted holds the index of the largest logit, i.e. the digit
            _, predicted = torch.max(output_test, 1)
            total += labels.size(0)
            correct += (predicted == labels).sum().item()

    print("correct1: ", correct)
    print("Test acc: {0}".format(correct / total))
```
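The training loop prints only when `i % 100 == 99`, so with 938 batches per epoch it reports 9 times, after batches 100, 200, ..., 900. The hypothetical helper `report_steps` below mirrors that condition in plain Python. A side effect of this schedule is that the losses of the last 38 batches are added to `sum_loss` but never reported.

```python
def report_steps(num_batches, every=100):
    """Hypothetical helper: 1-based batch numbers at which the loss is printed."""
    return [i + 1 for i in range(num_batches) if i % every == every - 1]


steps = report_steps(938)               # 938 training batches per epoch
print(len(steps), steps[0], steps[-1])  # 9 100 900
unreported = 938 - steps[-1]            # batches after the last report
print(unreported)                       # 38
```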