Predicting Future Site Closures from Historical Data with Machine Learning Algorithms (Matlab Implementation)

Contents

1 Overview

2 Results

3 References

4 Matlab Code

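1 Overview

Application background:

Analyzing a sequence of historical observations makes it possible to forecast on a reasoned basis, anticipate future trends, and give business decisions a sound footing. This is also a prerequisite for evidence-based decision-making.

Time series analysis:

A time series is a set of data points arranged in chronological order.

Time series analysis is the statistical technique of identifying the patterns of variation in such data and using them for forecasting.

I. Introduction to Time Series Analysis

Time series analysis has three basic characteristics:

- It assumes that the existing trend will continue into the future.

- The data on which the forecast is based are irregular.

- It does not consider causal relationships between the phenomena involved.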
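The three characteristics above can be illustrated with a short sketch. The series below is made up for illustration only (it is not the data in `P1.txt`), and a straight-line trend is just one possible model: the fit uses nothing but the time index as input (no causal factors), the observations fluctuate irregularly around the trend, and the forecast simply extends the fitted trend forward.

```matlab
% Hypothetical series: 12 time steps with irregular fluctuations.
% These values are illustrative assumptions, not data from the article.
t = (1:12)';
y = [10 12 11 15 16 15 19 21 20 24 25 27]';

% Fit a first-degree polynomial (straight-line trend) to the history.
% p(1) is the slope, p(2) is the intercept.
p = polyfit(t, y, 1);

% Extend the fitted trend three steps beyond the observed data.
t_future = (13:15)';
y_forecast = polyval(p, t_future);

fprintf('Estimated trend: %.3f per time step\n', p(1));
disp([t_future, y_forecast]);  % columns: time step, forecast value
```

Because the forecast is a pure extrapolation, it is only as good as the assumption that the historical trend persists.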

2 Results

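3 References

[1] Yang Xuewei. Research on a stock index futures price prediction model based on machine learning algorithms [J]. Software Engineering, 2022, 25(12): 1-8. DOI: 10.19644/j.cnki.issn2096-1472.2022.012.001.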

4 Matlab Code

%% Machine Learning Online Class - Exercise 1: Linear Regression

% Instructions

% ------------

%

% This file contains code that helps you get started on the

% linear exercise. You will need to complete the following functions

% in this exercise:

%

% warmUpExercise.m

% plotData.m

% gradientDescent.m

% computeCost.m

% gradientDescentMulti.m

% computeCostMulti.m

% featureNormalize.m

% normalEqn.m

%

% For this exercise, you will not need to change any code in this file,

% or any other files other than those mentioned above.

%

% Main script (partial code):

%% Initialization

clear ; close all; clc

%% ======================= Part 2: Plotting =======================

fprintf('Plotting Data ...\n')

data = load('P1.txt');

X = data(:, 1); y = data(:, 2);

m = length(y); % number of training examples

% Plot Data

% Note: You have to complete the code in plotData.m

plotData(X, y);

fprintf('Program paused. Press enter to continue.\n');

pause;

%% =================== Part 3: Cost and Gradient descent ===================

X = [ones(m, 1), data(:,1)]; % Add a column of ones to x

theta = zeros(2, 1); % initialize fitting parameters

% Some gradient descent settings

iterations = 1500;

alpha = 0.01;

fprintf('\nTesting the cost function ...\n')

% compute and display initial cost

J = computeCost(X, y, theta);

fprintf('With theta = [0 ; 0]\nCost computed = %f\n', J);

% further testing of the cost function

J = computeCost(X, y, [-1 ; 2]);

fprintf('\nWith theta = [-1 ; 2]\nCost computed = %f\n', J);

fprintf('Program paused. Press enter to continue.\n');

pause;
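`computeCost`, called above, is one of the functions the header comment says must be completed, and its source is not listed here. A minimal sketch follows, assuming the usual half mean squared error cost for linear regression, J(theta) = 1/(2m) * sum((X*theta - y).^2). The `computeCost.m` actually used to produce the results may differ. Save it as `computeCost.m` on the Matlab path.

```matlab
function J = computeCost(X, y, theta)
% COMPUTECOST  Half mean squared error of a linear model (assumed form).
%   X     : m-by-n design matrix, first column all ones
%   y     : m-by-1 vector of targets
%   theta : n-by-1 parameter vector
    m = length(y);                 % number of training examples
    residuals = X * theta - y;     % prediction error for each example
    J = (residuals' * residuals) / (2 * m);
end
```

For example, with `X = [1 1; 1 2; 1 3]` and `y = [1; 2; 3]`, the exact fit `theta = [0; 1]` gives a cost of 0.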

fprintf('\nRunning Gradient Descent ...\n')

% run gradient descent

theta = gradientDescent(X, y, theta, alpha, iterations);

% print theta to screen

fprintf('Theta found by gradient descent:\n');

fprintf('%f\n', theta);

%fprintf('Expected theta values (approx)\n');

% Plot the linear fit

hold on; % keep previous plot visible

plot(X(:,2), X*theta, '-')

legend('Training data', 'Linear regression')

hold off % don't overlay any more plots on this figure

fprintf('Program paused. Press enter to continue.\n');

pause;

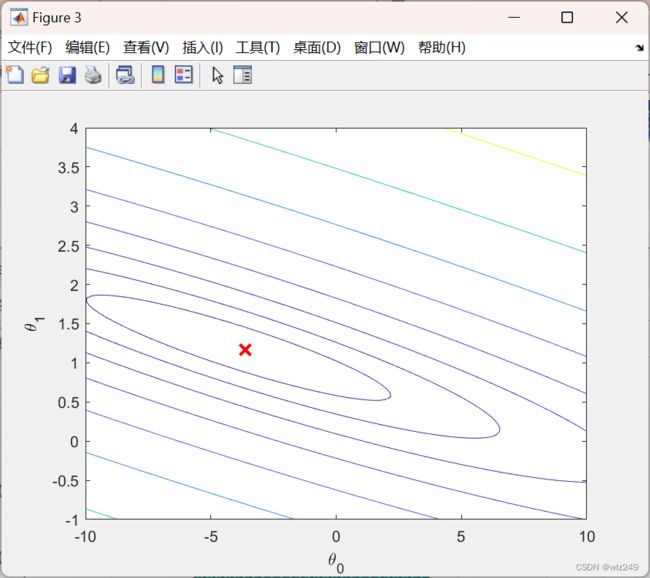
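The call to `gradientDescent` in Part 3 relies on a function whose source is not listed here. The sketch below is one plausible implementation, assuming plain batch gradient descent on the half mean squared error cost. The second output `J_history` is an addition for monitoring convergence, and the script's single-output call works unchanged. Save it as `gradientDescent.m`.

```matlab
function [theta, J_history] = gradientDescent(X, y, theta, alpha, num_iters)
% GRADIENTDESCENT  Batch gradient descent for linear regression (sketch).
%   X         : m-by-n design matrix, first column all ones
%   y         : m-by-1 vector of targets
%   theta     : n-by-1 initial parameters
%   alpha     : learning rate
%   num_iters : number of iterations
    m = length(y);
    J_history = zeros(num_iters, 1);
    for iter = 1:num_iters
        % Gradient of J(theta) = 1/(2m) * sum((X*theta - y).^2)
        gradient = (X' * (X * theta - y)) / m;
        % Update all parameters simultaneously
        theta = theta - alpha * gradient;
        % Record the cost after the update
        residuals = X * theta - y;
        J_history(iter) = (residuals' * residuals) / (2 * m);
    end
end
```

A simple sanity check is to compare the returned `theta` with the least-squares solution `X \ y`. With a suitably small `alpha` and enough iterations, the two should agree closely and `J_history` should not increase.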

%% ============= Part 4: Visualizing J(theta_0, theta_1) =============

fprintf('Visualizing J(theta_0, theta_1) ...\n')

% Grid over which we will calculate J

theta0_vals = linspace(-10, 10, 100);

theta1_vals = linspace(-1, 4, 100);

% initialize J_vals to a matrix of 0's

J_vals = zeros(length(theta0_vals), length(theta1_vals));

% Fill out J_vals

for i = 1:length(theta0_vals)

    for j = 1:length(theta1_vals)

        t = [theta0_vals(i); theta1_vals(j)];

        J_vals(i,j) = computeCost(X, y, t);

    end

end