Inception V4 and Inception-ResNet-v2: Network Architecture and Source Code Walkthrough

I. Network Architecture

Overall network structure

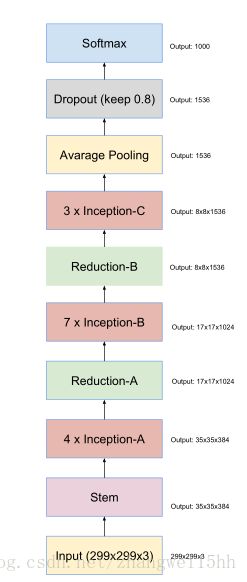

1. Inception V4

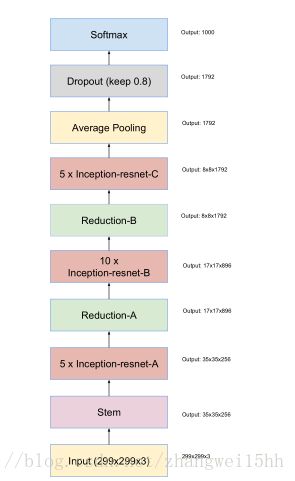

2. Inception-ResNet-v2

3. The two networks differ mainly in their Inception and Inception-ResNet blocks. The sections below compare the structure of these two kinds of block.
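To make the contrast concrete, here is a minimal NumPy sketch of the two ways of combining branches. It is an illustration under simplifying assumptions, not the slim implementation: `conv1x1`, `inception_style`, and `inception_resnet_style` are hypothetical helper names, each branch is reduced to a single 1x1 convolution, and the depths and `scale` value are arbitrary. A plain Inception block concatenates its branches, so the output depth is the sum of the branch depths. An Inception-ResNet block projects that concatenation back to the input depth with a linear 1x1 convolution and adds it to the input.

```python
import numpy as np

def conv1x1(x, weights):
    """1x1 convolution on an NHWC tensor, i.e. a matmul over the channel axis."""
    return x @ weights

def relu(x):
    return np.maximum(x, 0.0)

def inception_style(x, branch_weights):
    """Plain Inception: run every branch, then concatenate along channels."""
    branches = [relu(conv1x1(x, w)) for w in branch_weights]
    return np.concatenate(branches, axis=3)

def inception_resnet_style(x, branch_weights, up_weights, scale=1.0):
    """Inception-ResNet: the concatenated branches become a scaled residual."""
    mixed = inception_style(x, branch_weights)
    up = conv1x1(mixed, up_weights)      # linear projection, no activation
    return relu(x + scale * up)          # shortcut connection

rng = np.random.default_rng(0)
x = rng.normal(size=(1, 4, 4, 8))                      # NHWC input, depth 8
branch_weights = [rng.normal(size=(8, 3)), rng.normal(size=(8, 4))]
up_weights = rng.normal(size=(3 + 4, 8))               # project back to depth 8

concat_out = inception_style(x, branch_weights)
residual_out = inception_resnet_style(x, branch_weights, up_weights, scale=0.2)
print(concat_out.shape)    # (1, 4, 4, 7): sum of the branch depths
print(residual_out.shape)  # (1, 4, 4, 8): same depth as the input
```

Because the residual form must add its result to the input, its output depth is fixed by the input, whereas the concatenating form is free to change depth.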

II. Network Components and Source Code

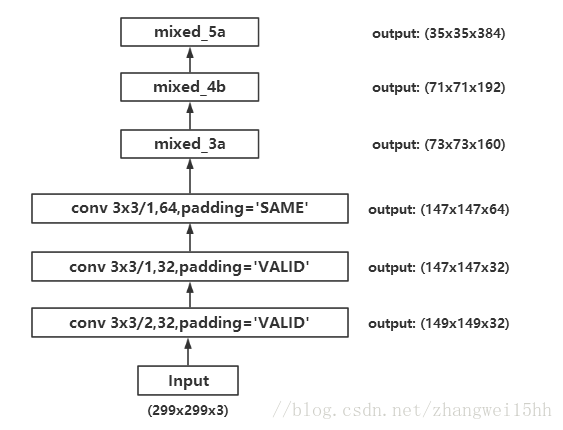

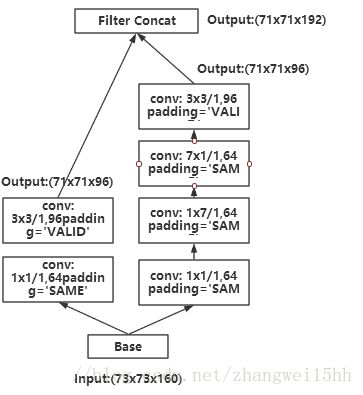

2.1 Stem

2.1.1 Expanded structure diagram

2.1.2 Code for the first three conv layers

with tf.variable_scope(scope, 'InceptionV4', [inputs]):

with slim.arg_scope([slim.conv2d, slim.max_pool2d, slim.avg_pool2d],

stride=1, padding='SAME'):

# 299 x 299 x 3

net = slim.conv2d(inputs, 32, [3, 3], stride=2,

padding='VALID', scope='Conv2d_1a_3x3')

if add_and_check_final('Conv2d_1a_3x3', net): return net, end_points

# 149 x 149 x 32

net = slim.conv2d(net, 32, [3, 3], padding='VALID',

scope='Conv2d_2a_3x3')

if add_and_check_final('Conv2d_2a_3x3', net): return net, end_points

# 147 x 147 x 32

net = slim.conv2d(net, 64, [3, 3], scope='Conv2d_2b_3x3')

if add_and_check_final('Conv2d_2b_3x3', net): return net, end_points

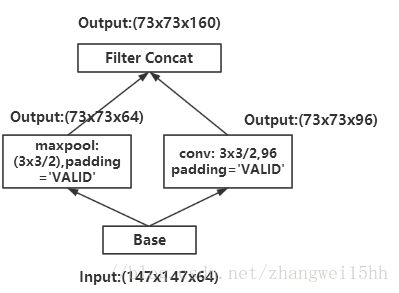

# 147 x 147 x 642.1.3 mixed_3a

Code:

    with tf.variable_scope('Mixed_3a'):
        with tf.variable_scope('Branch_0'):
            branch_0 = slim.max_pool2d(net, [3, 3], stride=2, padding='VALID',
                                       scope='MaxPool_0a_3x3')
        with tf.variable_scope('Branch_1'):
            branch_1 = slim.conv2d(net, 96, [3, 3], stride=2, padding='VALID',
                                   scope='Conv2d_0a_3x3')
        net = tf.concat(axis=3, values=[branch_0, branch_1])
        if add_and_check_final('Mixed_3a', net): return net, end_points

2.1.4 Mixed_4a

with tf.variable_scope('Mixed_4a'):

with tf.variable_scope('Branch_0'):

branch_0 = slim.conv2d(net, 64, [1, 1], scope='Conv2d_0a_1x1')

branch_0 = slim.conv2d(branch_0, 96, [3, 3], padding='VALID',

scope='Conv2d_1a_3x3')

with tf.variable_scope('Branch_1'):

branch_1 = slim.conv2d(net, 64, [1, 1], scope='Conv2d_0a_1x1')

branch_1 = slim.conv2d(branch_1, 64, [1, 7], scope='Conv2d_0b_1x7')

branch_1 = slim.conv2d(branch_1, 64, [7, 1], scope='Conv2d_0c_7x1')

branch_1 = slim.conv2d(branch_1, 96, [3, 3], padding='VALID',

scope='Conv2d_1a_3x3')

net = tf.concat(axis=3, values=[branch_0, branch_1])

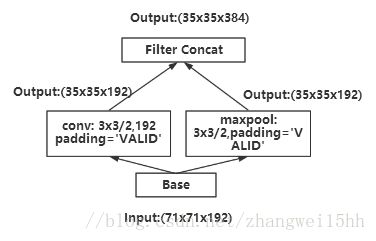

if add_and_check_final('Mixed_4a', net): return net, end_points2.1.5 mixed_5a

Code:

    with tf.variable_scope('Mixed_5a'):
        with tf.variable_scope('Branch_0'):
            branch_0 = slim.conv2d(net, 192, [3, 3], stride=2, padding='VALID',
                                   scope='Conv2d_1a_3x3')
        with tf.variable_scope('Branch_1'):
            branch_1 = slim.max_pool2d(net, [3, 3], stride=2, padding='VALID',
                                       scope='MaxPool_1a_3x3')
        net = tf.concat(axis=3, values=[branch_0, branch_1])
        if add_and_check_final('Mixed_5a', net): return net, end_points

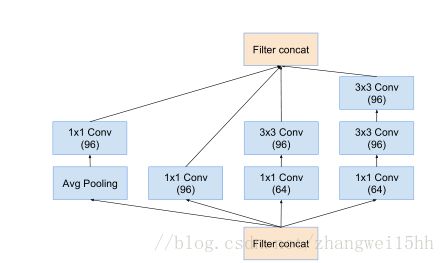
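The shape comments in the stem code can be checked by hand. The sketch below traces the spatial size and depth through the stem using TensorFlow's padding rules (`VALID`: floor((size - kernel) / stride) + 1; `SAME`: ceil(size / stride)). The helper `out_size` and the `trace` list are hypothetical names introduced only for this illustration; the layer parameters are copied from the code above.

```python
def out_size(size, kernel, stride, padding):
    """Spatial output size of a conv/pool layer under TensorFlow's padding rules."""
    if padding == 'VALID':
        return (size - kernel) // stride + 1
    if padding == 'SAME':
        return -(-size // stride)  # ceil(size / stride)
    raise ValueError('unknown padding: %r' % padding)

size, depth = 299, 3
trace = []

# First three convolutions: (name, output depth, kernel, stride, padding).
for name, filters, kernel, stride, padding in [
        ('Conv2d_1a_3x3', 32, 3, 2, 'VALID'),
        ('Conv2d_2a_3x3', 32, 3, 1, 'VALID'),
        ('Conv2d_2b_3x3', 64, 3, 1, 'SAME')]:
    size, depth = out_size(size, kernel, stride, padding), filters
    trace.append((name, size, depth))

# Mixed_3a: stride-2 max pool (keeps depth) concatenated with a stride-2 conv (96).
size, depth = out_size(size, 3, 2, 'VALID'), depth + 96
trace.append(('Mixed_3a', size, depth))

# Mixed_4a: both branches end in a 3x3 VALID conv with 96 filters.
size, depth = out_size(size, 3, 1, 'VALID'), 96 + 96
trace.append(('Mixed_4a', size, depth))

# Mixed_5a: stride-2 conv (192) concatenated with a stride-2 max pool (keeps depth).
size, depth = out_size(size, 3, 2, 'VALID'), 192 + depth
trace.append(('Mixed_5a', size, depth))

for name, s, d in trace:
    print('%-14s %3d x %3d x %d' % (name, s, s, d))
```

Under these rules the stem takes a 299 x 299 x 3 input down to 35 x 35 x 384, which is the input shape of the first Inception-A block.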

2.2 Inception A and Inception-ResNet-A

2.2.1 Inception A

def block_inception_a(inputs, scope=None, reuse=None):

"""Builds Inception-A block for Inception v4 network."""

# By default use stride=1 and SAME padding

with slim.arg_scope([slim.conv2d, slim.avg_pool2d, slim.max_pool2d],

stride=1, padding='SAME'):

with tf.variable_scope(scope, 'BlockInceptionA', [inputs], reuse=reuse):

with tf.variable_scope('Branch_0'):

branch_0 = slim.conv2d(inputs, 96, [1, 1], scope='Conv2d_0a_1x1')

with tf.variable_scope('Branch_1'):

branch_1 = slim.conv2d(inputs, 64, [1, 1], scope='Conv2d_0a_1x1')

branch_1 = slim.conv2d(branch_1, 96, [3, 3], scope='Conv2d_0b_3x3')

with tf.variable_scope('Branch_2'):

branch_2 = slim.conv2d(inputs, 64, [1, 1], scope='Conv2d_0a_1x1')

branch_2 = slim.conv2d(branch_2, 96, [3, 3], scope='Conv2d_0b_3x3')

branch_2 = slim.conv2d(branch_2, 96, [3, 3], scope='Conv2d_0c_3x3')

with tf.variable_scope('Branch_3'):

branch_3 = slim.avg_pool2d(inputs, [3, 3], scope='AvgPool_0a_3x3')

branch_3 = slim.conv2d(branch_3, 96, [1, 1], scope='Conv2d_0b_1x1')

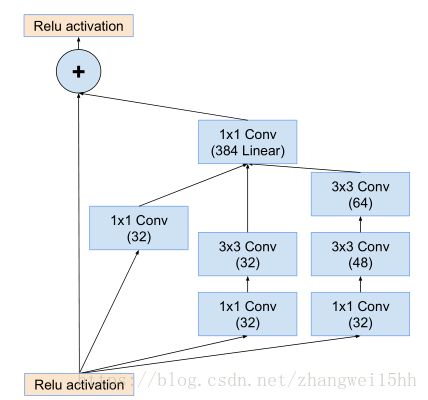

return tf.concat(axis=3, values=[branch_0, branch_1, branch_2, branch_3])2.2.2 Inception-Resnet-A

def block35(net, scale=1.0, activation_fn=tf.nn.relu, scope=None, reuse=None):

"""Builds the 35x35 resnet block."""

with tf.variable_scope(scope, 'Block35', [net], reuse=reuse):

with tf.variable_scope('Branch_0'):

tower_conv = slim.conv2d(net, 32, 1, scope='Conv2d_1x1')

with tf.variable_scope('Branch_1'):

tower_conv1_0 = slim.conv2d(net, 32, 1, scope='Conv2d_0a_1x1')

tower_conv1_1 = slim.conv2d(tower_conv1_0, 32, 3, scope='Conv2d_0b_3x3')

with tf.variable_scope('Branch_2'):

tower_conv2_0 = slim.conv2d(net, 32, 1, scope='Conv2d_0a_1x1')

tower_conv2_1 = slim.conv2d(tower_conv2_0, 48, 3, scope='Conv2d_0b_3x3')

tower_conv2_2 = slim.conv2d(tower_conv2_1, 64, 3, scope='Conv2d_0c_3x3')

mixed = tf.concat(axis=3, values=[tower_conv, tower_conv1_1, tower_conv2_2])

up = slim.conv2d(mixed, net.get_shape()[3], 1, normalizer_fn=None,

activation_fn=None, scope='Conv2d_1x1')

scaled_up = up * scale

if activation_fn == tf.nn.relu6:

# Use clip_by_value to simulate bandpass activation.

scaled_up = tf.clip_by_value(scaled_up, -6.0, 6.0)

net += scaled_up

if activation_fn:

net = activation_fn(net)

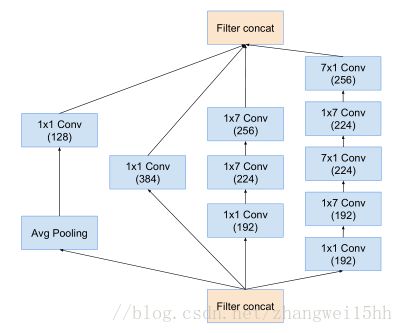

return net2.3 Inception B 与 Inception-Resnet-B

2.3.1 Inception B
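The Inception-B block below uses stacked 1x7 and 7x1 convolutions, which together cover a 7x7 receptive field. A quick weight count suggests why this is cheaper than a full 7x7 kernel. The comparison layer (a single 7x7 convolution from 192 to 256 channels) is a hypothetical alternative chosen for illustration and does not appear in the source; `conv_weights` is likewise a helper name invented here, and biases and batch-norm parameters are ignored.

```python
def conv_weights(in_depth, out_depth, kernel_h, kernel_w):
    """Number of weights in a conv layer, ignoring biases and batch-norm parameters."""
    return kernel_h * kernel_w * in_depth * out_depth

# Branch_1 of Inception-B after its 1x1 conv: 192 -> 224 (1x7) -> 256 (7x1).
factorized = conv_weights(192, 224, 1, 7) + conv_weights(224, 256, 7, 1)

# Hypothetical alternative for comparison: one 7x7 conv from 192 to 256 channels.
full_7x7 = conv_weights(192, 256, 7, 7)

print(factorized)             # 702464
print(full_7x7)               # 2408448
print(full_7x7 / factorized)  # roughly 3.4
```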

    def block_inception_b(inputs, scope=None, reuse=None):
        """Builds Inception-B block for Inception v4 network."""
        # By default use stride=1 and SAME padding
        with slim.arg_scope([slim.conv2d, slim.avg_pool2d, slim.max_pool2d],
                            stride=1, padding='SAME'):
            with tf.variable_scope(scope, 'BlockInceptionB', [inputs], reuse=reuse):
                with tf.variable_scope('Branch_0'):
                    branch_0 = slim.conv2d(inputs, 384, [1, 1], scope='Conv2d_0a_1x1')
                with tf.variable_scope('Branch_1'):
                    branch_1 = slim.conv2d(inputs, 192, [1, 1], scope='Conv2d_0a_1x1')
                    branch_1 = slim.conv2d(branch_1, 224, [1, 7], scope='Conv2d_0b_1x7')
                    branch_1 = slim.conv2d(branch_1, 256, [7, 1], scope='Conv2d_0c_7x1')
                with tf.variable_scope('Branch_2'):
                    branch_2 = slim.conv2d(inputs, 192, [1, 1], scope='Conv2d_0a_1x1')
                    branch_2 = slim.conv2d(branch_2, 192, [7, 1], scope='Conv2d_0b_7x1')
                    branch_2 = slim.conv2d(branch_2, 224, [1, 7], scope='Conv2d_0c_1x7')
                    branch_2 = slim.conv2d(branch_2, 224, [7, 1], scope='Conv2d_0d_7x1')
                    branch_2 = slim.conv2d(branch_2, 256, [1, 7], scope='Conv2d_0e_1x7')
                with tf.variable_scope('Branch_3'):
                    branch_3 = slim.avg_pool2d(inputs, [3, 3], scope='AvgPool_0a_3x3')
                    branch_3 = slim.conv2d(branch_3, 128, [1, 1], scope='Conv2d_0b_1x1')
                return tf.concat(axis=3, values=[branch_0, branch_1, branch_2, branch_3])

2.3.2 Inception-ResNet-B

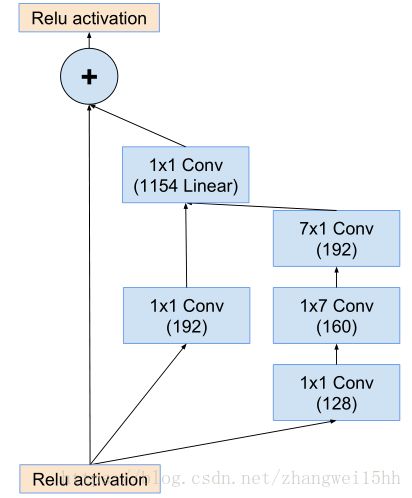

    def block17(net, scale=1.0, activation_fn=tf.nn.relu, scope=None, reuse=None):
        """Builds the 17x17 resnet block."""
        with tf.variable_scope(scope, 'Block17', [net], reuse=reuse):
            with tf.variable_scope('Branch_0'):
                tower_conv = slim.conv2d(net, 192, 1, scope='Conv2d_1x1')
            with tf.variable_scope('Branch_1'):
                tower_conv1_0 = slim.conv2d(net, 128, 1, scope='Conv2d_0a_1x1')
                tower_conv1_1 = slim.conv2d(tower_conv1_0, 160, [1, 7],
                                            scope='Conv2d_0b_1x7')
                tower_conv1_2 = slim.conv2d(tower_conv1_1, 192, [7, 1],
                                            scope='Conv2d_0c_7x1')
            mixed = tf.concat(axis=3, values=[tower_conv, tower_conv1_2])
            up = slim.conv2d(mixed, net.get_shape()[3], 1, normalizer_fn=None,
                             activation_fn=None, scope='Conv2d_1x1')
            scaled_up = up * scale
            if activation_fn == tf.nn.relu6:
                # Use clip_by_value to simulate bandpass activation.
                scaled_up = tf.clip_by_value(scaled_up, -6.0, 6.0)
            net += scaled_up
            if activation_fn:
                net = activation_fn(net)
        return net

2.4 Inception C and Inception-ResNet-C

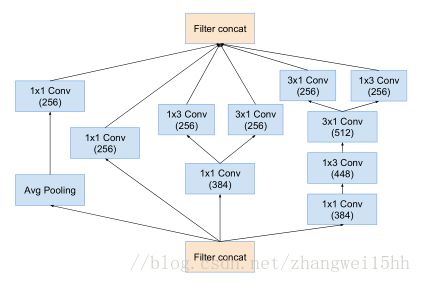

2.4.1 Inception C

    def block_inception_c(inputs, scope=None, reuse=None):
        """Builds Inception-C block for Inception v4 network."""
        # By default use stride=1 and SAME padding
        with slim.arg_scope([slim.conv2d, slim.avg_pool2d, slim.max_pool2d],
                            stride=1, padding='SAME'):
            with tf.variable_scope(scope, 'BlockInceptionC', [inputs], reuse=reuse):
                with tf.variable_scope('Branch_0'):
                    branch_0 = slim.conv2d(inputs, 256, [1, 1], scope='Conv2d_0a_1x1')
                with tf.variable_scope('Branch_1'):
                    branch_1 = slim.conv2d(inputs, 384, [1, 1], scope='Conv2d_0a_1x1')
                    branch_1 = tf.concat(axis=3, values=[
                        slim.conv2d(branch_1, 256, [1, 3], scope='Conv2d_0b_1x3'),
                        slim.conv2d(branch_1, 256, [3, 1], scope='Conv2d_0c_3x1')])
                with tf.variable_scope('Branch_2'):
                    branch_2 = slim.conv2d(inputs, 384, [1, 1], scope='Conv2d_0a_1x1')
                    branch_2 = slim.conv2d(branch_2, 448, [3, 1], scope='Conv2d_0b_3x1')
                    branch_2 = slim.conv2d(branch_2, 512, [1, 3], scope='Conv2d_0c_1x3')
                    branch_2 = tf.concat(axis=3, values=[
                        slim.conv2d(branch_2, 256, [1, 3], scope='Conv2d_0d_1x3'),
                        slim.conv2d(branch_2, 256, [3, 1], scope='Conv2d_0e_3x1')])
                with tf.variable_scope('Branch_3'):
                    branch_3 = slim.avg_pool2d(inputs, [3, 3], scope='AvgPool_0a_3x3')
                    branch_3 = slim.conv2d(branch_3, 256, [1, 1], scope='Conv2d_0b_1x1')
                return tf.concat(axis=3, values=[branch_0, branch_1, branch_2, branch_3])

2.4.2 Inception-ResNet-C

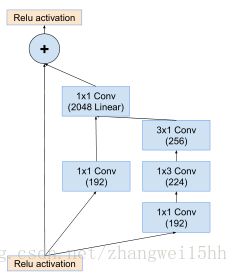

    def block8(net, scale=1.0, activation_fn=tf.nn.relu, scope=None, reuse=None):
        """Builds the 8x8 resnet block."""
        with tf.variable_scope(scope, 'Block8', [net], reuse=reuse):
            with tf.variable_scope('Branch_0'):
                tower_conv = slim.conv2d(net, 192, 1, scope='Conv2d_1x1')
            with tf.variable_scope('Branch_1'):
                tower_conv1_0 = slim.conv2d(net, 192, 1, scope='Conv2d_0a_1x1')
                tower_conv1_1 = slim.conv2d(tower_conv1_0, 224, [1, 3],
                                            scope='Conv2d_0b_1x3')
                tower_conv1_2 = slim.conv2d(tower_conv1_1, 256, [3, 1],
                                            scope='Conv2d_0c_3x1')
            mixed = tf.concat(axis=3, values=[tower_conv, tower_conv1_2])
            up = slim.conv2d(mixed, net.get_shape()[3], 1, normalizer_fn=None,
                             activation_fn=None, scope='Conv2d_1x1')
            scaled_up = up * scale
            if activation_fn == tf.nn.relu6:
                # Use clip_by_value to simulate bandpass activation.
                scaled_up = tf.clip_by_value(scaled_up, -6.0, 6.0)
            net += scaled_up
            if activation_fn:
                net = activation_fn(net)
        return net

2.5 Reduction A

def block_reduction_a(inputs, scope=None, reuse=None):

"""Builds Reduction-A block for Inception v4 network."""

# By default use stride=1 and SAME padding

with slim.arg_scope([slim.conv2d, slim.avg_pool2d, slim.max_pool2d],

stride=1, padding='SAME'):

with tf.variable_scope(scope, 'BlockReductionA', [inputs], reuse=reuse):

with tf.variable_scope('Branch_0'):

branch_0 = slim.conv2d(inputs, 384, [3, 3], stride=2, padding='VALID',

scope='Conv2d_1a_3x3')

with tf.variable_scope('Branch_1'):

branch_1 = slim.conv2d(inputs, 192, [1, 1], scope='Conv2d_0a_1x1')

branch_1 = slim.conv2d(branch_1, 224, [3, 3], scope='Conv2d_0b_3x3')

branch_1 = slim.conv2d(branch_1, 256, [3, 3], stride=2,

padding='VALID', scope='Conv2d_1a_3x3')

with tf.variable_scope('Branch_2'):

branch_2 = slim.max_pool2d(inputs, [3, 3], stride=2, padding='VALID',

scope='MaxPool_1a_3x3')

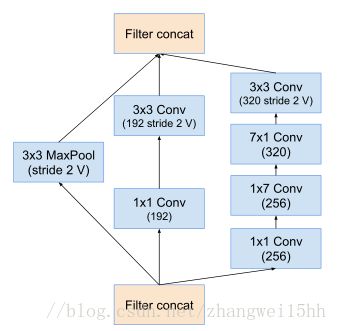

return tf.concat(axis=3, values=[branch_0, branch_1, branch_2])2.6 Reduction B

    def block_reduction_b(inputs, scope=None, reuse=None):
        """Builds Reduction-B block for Inception v4 network."""
        # By default use stride=1 and SAME padding
        with slim.arg_scope([slim.conv2d, slim.avg_pool2d, slim.max_pool2d],
                            stride=1, padding='SAME'):
            with tf.variable_scope(scope, 'BlockReductionB', [inputs], reuse=reuse):
                with tf.variable_scope('Branch_0'):
                    branch_0 = slim.conv2d(inputs, 192, [1, 1], scope='Conv2d_0a_1x1')
                    branch_0 = slim.conv2d(branch_0, 192, [3, 3], stride=2,
                                           padding='VALID', scope='Conv2d_1a_3x3')
                with tf.variable_scope('Branch_1'):
                    branch_1 = slim.conv2d(inputs, 256, [1, 1], scope='Conv2d_0a_1x1')
                    branch_1 = slim.conv2d(branch_1, 256, [1, 7], scope='Conv2d_0b_1x7')
                    branch_1 = slim.conv2d(branch_1, 320, [7, 1], scope='Conv2d_0c_7x1')
                    branch_1 = slim.conv2d(branch_1, 320, [3, 3], stride=2,
                                           padding='VALID', scope='Conv2d_1a_3x3')
                with tf.variable_scope('Branch_2'):
                    branch_2 = slim.max_pool2d(inputs, [3, 3], stride=2, padding='VALID',
                                               scope='MaxPool_1a_3x3')
                return tf.concat(axis=3, values=[branch_0, branch_1, branch_2])
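Both reduction blocks halve the spatial size with stride-2 VALID layers and grow the depth by concatenating their convolution branches with a max-pool branch that passes the input depth through unchanged. The sketch below works through that arithmetic for the Inception V4 path. `valid_out` and `reduction` are hypothetical helper names, and the 35 x 35 x 384 starting shape assumes the Inception-A blocks keep the stem's output shape (four branches of 96 channels each).

```python
def valid_out(size, kernel, stride):
    """Output size of a VALID-padded conv/pool layer."""
    return (size - kernel) // stride + 1

def reduction(size, depth, conv_branch_depths):
    """Stride-2 reduction: conv branches plus a max-pool branch that keeps depth."""
    return valid_out(size, 3, 2), sum(conv_branch_depths) + depth

# Reduction-A: conv branches end in 384 and 256 filters.
size_a, depth_a = reduction(35, 384, [384, 256])

# Inception-B keeps the shape; its four branches concatenate to the same depth.
depth_b = 384 + 256 + 256 + 128

# Reduction-B: conv branches end in 192 and 320 filters.
size_b, depth_b_out = reduction(size_a, depth_b, [192, 320])

print(size_a, depth_a)       # 17 1024
print(size_b, depth_b_out)   # 8 1536
```

The final 8 x 8 x 1536 shape agrees with the comment at the top of the `Logits` scope in the next section.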

2.7 Remaining parts
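As a rough orientation for the code in this section, the classifier head amounts to global average pooling, dropout, flattening, a fully connected layer, and a softmax. The NumPy sketch below mirrors those steps at inference time, where dropout is the identity. It is not the slim code: `classifier_head` is a hypothetical name, and the batch size, class count, and random weights are placeholders.

```python
import numpy as np

def classifier_head(net, weights, biases):
    """Global average pool -> flatten -> fully connected -> softmax (inference)."""
    pooled = net.mean(axis=(1, 2), keepdims=True)           # N x 1 x 1 x C
    flat = pooled.reshape(pooled.shape[0], -1)              # N x C
    # Dropout is the identity at inference time, so it is omitted here.
    logits = flat @ weights + biases                        # N x num_classes
    shifted = logits - logits.max(axis=1, keepdims=True)    # numerical stability
    exp = np.exp(shifted)
    return logits, exp / exp.sum(axis=1, keepdims=True)

rng = np.random.default_rng(0)
net = rng.normal(size=(2, 8, 8, 1536))    # stand-in for the 8 x 8 x 1536 features
num_classes = 10                          # hypothetical class count
weights = rng.normal(scale=0.01, size=(1536, num_classes))
biases = np.zeros(num_classes)

logits, predictions = classifier_head(net, weights, biases)
print(logits.shape, predictions.shape)    # (2, 10) (2, 10)
```

Taking the mean over axes 1 and 2 gives the same result as an average pool whose kernel covers the whole feature map, which is why the slim code can fall back to `tf.reduce_mean` when the spatial size is not statically known.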

2.7.1 Average pooling

    with tf.variable_scope('Logits'):
        # 8 x 8 x 1536
        kernel_size = net.get_shape()[1:3]
        if kernel_size.is_fully_defined():
            net = slim.avg_pool2d(net, kernel_size, padding='VALID',
                                  scope='AvgPool_1a')
        else:
            net = tf.reduce_mean(net, [1, 2], keep_dims=True,
                                 name='global_pool')
        end_points['global_pool'] = net
        if not num_classes:
            return net, end_points
        # 1 x 1 x 1536

2.7.2 Dropout

    net = slim.dropout(net, dropout_keep_prob, scope='Dropout_1b')
    net = slim.flatten(net, scope='PreLogitsFlatten')
    end_points['PreLogitsFlatten'] = net
    # 1536

2.7.3 Softmax

    logits = slim.fully_connected(net, num_classes, activation_fn=None,
                                  scope='Logits')
    end_points['Logits'] = logits
    end_points['Predictions'] = tf.nn.softmax(logits, name='Predictions')

References:

1. https://github.com/tensorflow/models/blob/master/research/slim/nets/inception_v4.py

2. https://arxiv.org/pdf/1602.07261.pdf