MaskRCNN-Benchmark (PyTorch version): Training on Your Own Data, plus a Guide to Avoiding Pitfalls

I. Installation

Repository: MaskRCNN-Benchmark (PyTorch version), https://github.com/facebookresearch/maskrcnn-benchmark

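First read the environment requirements in the official README. Don't just dive in and install everything, or the program is very likely to throw errors later!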

- PyTorch 1.0 from a nightly release. It will not work with 1.0 nor 1.0.1. Installation instructions can be found in https://pytorch.org/get-started/locally/

- torchvision from master

- cocoapi

- yacs

- matplotlib

- GCC >= 4.9

- OpenCV
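The GCC >= 4.9 requirement is easy to check before building. Below is a minimal sketch using only the standard library. It assumes `gcc` is on PATH, and the helper names are made up for illustration. Newer GCC releases may print only the major version for `-dumpversion` (e.g. `9`), so the parser accepts that form too:

```python
import re
import shutil
import subprocess


def parse_gcc_version(text):
    """Turn `gcc -dumpversion` output (e.g. '4.8.5' or '9') into (major, minor)."""
    match = re.match(r"\s*(\d+)(?:\.(\d+))?", text)
    if match is None:
        raise ValueError("unrecognized version string: %r" % text)
    return int(match.group(1)), int(match.group(2) or 0)


def gcc_is_new_enough(text, minimum=(4, 9)):
    """True if the parsed version is at least `minimum` (default: GCC 4.9)."""
    return parse_gcc_version(text) >= minimum


if __name__ == "__main__":
    if shutil.which("gcc") is None:
        print("gcc not found on PATH")
    else:
        out = subprocess.run(["gcc", "-dumpversion"], stdout=subprocess.PIPE,
                             universal_newlines=True).stdout
        try:
            ok = gcc_is_new_enough(out)
            print("gcc", out.strip(), "OK" if ok else "is too old (need >= 4.9)")
        except ValueError as err:
            print(err)
```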

# first, make sure that your conda is setup properly with the right environment
# for that, check that `which conda`, `which pip` and `which python` points to the
# right path. From a clean conda env, this is what you need to do
conda create --name maskrcnn_benchmark
conda activate maskrcnn_benchmark

# this installs the right pip and dependencies for the fresh python
conda install ipython

# maskrcnn_benchmark and coco api dependencies
pip install ninja yacs cython matplotlib tqdm opencv-python

# follow PyTorch installation in https://pytorch.org/get-started/locally/
# we give the instructions for CUDA 9.0
conda install -c pytorch pytorch-nightly torchvision cudatoolkit=9.0

export INSTALL_DIR=$PWD

# install pycocotools
cd $INSTALL_DIR
git clone https://github.com/cocodataset/cocoapi.git
cd cocoapi/PythonAPI
python setup.py build_ext install

# install apex
cd $INSTALL_DIR
git clone https://github.com/NVIDIA/apex.git
cd apex
python setup.py install --cuda_ext --cpp_ext

# install PyTorch Detection
cd $INSTALL_DIR
git clone https://github.com/facebookresearch/maskrcnn-benchmark.git
cd maskrcnn-benchmark
# the following will install the lib with
# symbolic links, so that you can modify
# the files if you want and won't need to
# re-build it
python setup.py build develop

unset INSTALL_DIR

# or if you are on macOS
# MACOSX_DEPLOYMENT_TARGET=10.9 CC=clang CXX=clang++ python setup.py build develop

Follow the steps above one at a time and don't skip any, or the program is very likely to fail later!
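Once everything is installed, a quick way to confirm that the pieces can be found from the active environment is to look them up with `importlib`. This is a minimal sketch. The module names are my assumption of what the steps above install (for example, `opencv-python` is imported as `cv2`):

```python
import importlib.util

# Assumed import names for the packages installed above.
REQUIRED = ["torch", "torchvision", "pycocotools", "yacs", "matplotlib",
            "cv2", "apex", "maskrcnn_benchmark"]


def missing_modules(names):
    """Return the module names that cannot be found on the current sys.path."""
    missing = []
    for name in names:
        try:
            found = importlib.util.find_spec(name) is not None
        except (ImportError, ValueError):
            found = False
        if not found:
            missing.append(name)
    return missing


if __name__ == "__main__":
    print("missing:", missing_modules(REQUIRED) or "none")
```

`find_spec` only locates a module without importing it, so it will not catch a compiled extension that fails to load. Actually importing the package in Python is the stronger check.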

II. Data preparation

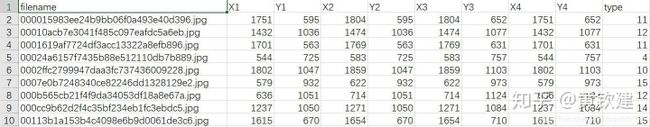

我要制作的原始数据格式是训练文件在一个文件(train),标注文件是csv格式,内容如下:
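The conversion and prediction scripts below treat each CSV row as a box given by four corners: (X1, Y1) is the top-left corner, (X2, Y2) the top-right, (X3, Y3) the bottom-right and (X4, Y4) the bottom-left. COCO, by contrast, stores a box as [x, y, width, height]. The following is a minimal sketch of both directions, assuming axis-aligned boxes. The function names are hypothetical:

```python
def corners_to_coco(x1, y1, x3, y3):
    """Top-left (x1, y1) and bottom-right (x3, y3) -> COCO [x, y, width, height]."""
    if x3 <= x1 or y3 <= y1:
        raise ValueError("expected x3 > x1 and y3 > y1")
    return [x1, y1, x3 - x1, y3 - y1]


def xyxy_to_corners(x_min, y_min, x_max, y_max):
    """A predicted (x_min, y_min, x_max, y_max) box -> the four CSV corners."""
    return {"X1": x_min, "Y1": y_min,   # top-left
            "X2": x_max, "Y2": y_min,   # top-right
            "X3": x_max, "Y3": y_max,   # bottom-right
            "X4": x_min, "Y4": y_max}   # bottom-left


if __name__ == "__main__":
    print(corners_to_coco(10, 20, 50, 80))  # prints [10, 20, 40, 60]
    print(xyxy_to_corners(10, 20, 50, 80))
```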

Step 1: split all labeled images into a training set and a validation set, stored in two separate folders. The code:

#!/usr/bin/env python
# coding=UTF-8
'''
@Description:
@Author: HuangQinJian
@LastEditors: HuangQinJian
@Date: 2019-05-01 12:56:08
@LastEditTime: 2019-05-01 13:11:38
'''
import os
import random
import shutil

# output folders for the two splits
os.makedirs('trained/', exist_ok=True)
os.makedirs('val/', exist_ok=True)

val_rate = 0.15  # fraction of the images used for validation
img_path = 'train/'
img_list = os.listdir(img_path)

random.shuffle(img_list)
total_num = len(img_list)
val_num = int(total_num * val_rate)
train_num = total_num - val_num

# the first train_num shuffled images go to trained/, the rest to val/
for img_name in img_list[:train_num]:
    shutil.copy(os.path.join(img_path, img_name), os.path.join('trained/', img_name))
for img_name in img_list[train_num:]:
    shutil.copy(os.path.join(img_path, img_name), os.path.join('val/', img_name))

Step 2: convert the CSV annotations to COCO format. The code:

#!/usr/bin/env python
# coding=UTF-8
'''
@Description:
@Author: HuangQinJian
@LastEditors: HuangQinJian
@Date: 2019-04-23 11:28:23
@LastEditTime: 2019-05-01 13:15:57
'''
import json
import os

import cv2
import pandas as pd

START_BOUNDING_BOX_ID = 1
# category name -> id; this dict is shared by every convert() call, so the
# training and validation JSON files use the same category ids
PRE_DEFINE_CATEGORIES = {}


def convert(csv_path, img_path, json_file):
    """
    csv_path : path of the CSV file
    img_path : folder containing the images
    json_file : path where the generated JSON file is saved
    """
    json_dict = {"images": [], "type": "instances", "annotations": [],
                 "categories": []}
    bnd_id = START_BOUNDING_BOX_ID
    categories = PRE_DEFINE_CATEGORIES
    csv = pd.read_csv(csv_path)
    img_nameList = os.listdir(img_path)
    print("Total number of images: {0}".format(len(img_nameList)))
    for i, img_name in enumerate(img_nameList):
        image_id = i + 1
        # two specific images in my dataset are skipped; adjust for your data
        if img_name in ('60f3ea2534804c9b806e7d5ae1e229cf.jpg',
                        '6b292bacb2024d9b9f2d0620f489b1e4.jpg'):
            continue
        # may need to change depending on your CSV format
        lines = csv[csv.filename == img_name]
        img = cv2.imread(os.path.join(img_path, img_name))
        height, width, _ = img.shape
        image = {'file_name': img_name, 'height': height, 'width': width,
                 'id': image_id}
        print(image)
        json_dict['images'].append(image)
        for _, row in lines.iterrows():
            # may need to change depending on your CSV format
            category = str(row['type'])
            if category not in categories:
                categories[category] = len(categories)
            category_id = categories[category]
            # may need to change: (X1, Y1) is the top-left corner, (X3, Y3) the bottom-right
            xmin = int(row['X1'])
            ymin = int(row['Y1'])
            xmax = int(row['X3'])
            ymax = int(row['Y3'])
            assert xmax > xmin
            assert ymax > ymin
            o_width = xmax - xmin
            o_height = ymax - ymin
            # COCO boxes are [x, y, width, height]
            ann = {'area': o_width * o_height, 'iscrowd': 0,
                   'image_id': image_id,
                   'bbox': [xmin, ymin, o_width, o_height],
                   'category_id': category_id, 'id': bnd_id, 'ignore': 0,
                   'segmentation': []}
            json_dict['annotations'].append(ann)
            bnd_id = bnd_id + 1
    for cate, cid in categories.items():
        cat = {'supercategory': 'none', 'id': cid, 'name': cate}
        json_dict['categories'].append(cat)
    with open(json_file, 'w') as json_fp:
        json.dump(json_dict, json_fp, indent=4)


if __name__ == '__main__':
    # csv_path = 'data/train_label_fix.csv'
    # img_path = 'data/train/'
    # json_file = 'train.json'
    csv_path = 'train_label_fix.csv'
    img_path = 'trained/'
    json_file = 'trained.json'
    convert(csv_path, img_path, json_file)

    csv_path = 'train_label_fix.csv'
    img_path = 'val/'
    json_file = 'val.json'
    convert(csv_path, img_path, json_file)

Step 3: visualize the converted COCO annotations to make sure the conversion is correct. The code:

(Note: this step requires cocoapi to be installed first, and installing it can run into some problems.)

#!/usr/bin/env python
# coding=UTF-8
'''
@Description:
@Author: HuangQinJian
@LastEditors: HuangQinJian
@Date: 2019-04-23 13:43:24
@LastEditTime: 2019-04-30 21:29:26
'''
import os

import cv2
import numpy as np
from pycocotools.coco import COCO

# the image folder and JSON file produced in step 2
img_path = 'trained/'
annFile = 'trained.json'
out_dir = 'anno_image_coco/'
os.makedirs(out_dir, exist_ok=True)


def draw_rectangle(coordinates, image, image_name):
    # each coordinate is [x_min, y_min, x_max, y_max]
    for x_min, y_min, x_max, y_max in coordinates:
        # top-left corner, bottom-right corner
        cv2.rectangle(image,
                      (int(np.rint(x_min)), int(np.rint(y_min))),
                      (int(np.rint(x_max)), int(np.rint(y_max))),
                      (0, 255, 0),
                      2)
    # cv2 loads images as BGR, so save with cv2 too to keep the colors right
    cv2.imwrite(os.path.join(out_dir, image_name), image)


# initialize the COCO api for the annotation file
coco = COCO(annFile)

# display COCO categories and supercategories
cats = coco.loadCats(coco.getCatIds())
nms = [cat['name'] for cat in cats]
print('COCO categories: \n{}\n'.format(' '.join(nms)))
nms = set([cat['supercategory'] for cat in cats])
print('COCO supercategories: \n{}'.format(' '.join(nms)))

# draw the first 7 images; loop over all of coco.getImgIds() to draw everything
for img_id in coco.getImgIds()[:7]:
    img = coco.loadImgs(img_id)[0]
    image_name = img['file_name']
    # catIds=[] shows the boxes of all categories; specific categories can also be given
    annIds = coco.getAnnIds(imgIds=img['id'], catIds=[], iscrowd=None)
    anns = coco.loadAnns(annIds)
    img_raw = cv2.imread(os.path.join(img_path, image_name))
    coordinates = []
    for ann in anns:
        x, y, w, h = ann['bbox']
        coordinates.append([x, y, x + w, y + h])
    draw_rectangle(coordinates, img_raw, image_name)

III. Configuration files

Training on your own dataset may require changes in many places. Here are the most common ones:

- Change the dataset paths in maskrcnn_benchmark/config/paths_catalog.py:

class DatasetCatalog(object):
    # Adjust the paths to your own setup!
    DATA_DIR = ""
    DATASETS = {
        "coco_2017_train": {
            "img_dir": "coco/train2017",
            "ann_file": "coco/annotations/instances_train2017.json"
        },
        "coco_2017_val": {
            "img_dir": "coco/val2017",
            "ann_file": "coco/annotations/instances_val2017.json"
        },
        # Change to the path of your training set!
        "coco_2014_train": {
            "img_dir": "/data1/hqj/traffic-sign-identification/trained",
            "ann_file": "/data1/hqj/traffic-sign-identification/trained.json"
        },
        # Change to the path of your validation set!
        "coco_2014_val": {
            "img_dir": "/data1/hqj/traffic-sign-identification/val",
            "ann_file": "/data1/hqj/traffic-sign-identification/val.json"
        },
        # Change to the path of your test set!
        "coco_2014_test": {
            "img_dir": "/data1/hqj/traffic-sign-identification/test"
            ...

- The config file under configs/:

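This file has many parameters that usually need adjusting for your own task, so I'll just list my configuration (based on e2e_faster_rcnn_X_101_32x8d_FPN_1x.yaml; the parameters I commented must be changed, e.g. NUM_CLASSES):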

INPUT:

MIN_SIZE_TRAIN: (1000,)

MAX_SIZE_TRAIN: 1667

MIN_SIZE_TEST: 1000

MAX_SIZE_TEST: 1667

MODEL:

META_ARCHITECTURE: "GeneralizedRCNN"

WEIGHT: "catalog://ImageNetPretrained/FAIR/20171220/X-101-32x8d"

BACKBONE:

CONV_BODY: "R-101-FPN"

RPN:

USE_FPN: True

BATCH_SIZE_PER_IMAGE: 128

ANCHOR_SIZES: (16, 32, 64, 128, 256)

ANCHOR_STRIDE: (4, 8, 16, 32, 64)

PRE_NMS_TOP_N_TRAIN: 2000

PRE_NMS_TOP_N_TEST: 1000

POST_NMS_TOP_N_TEST: 1000

FPN_POST_NMS_TOP_N_TEST: 1000

FPN_POST_NMS_TOP_N_TRAIN: 1000

ASPECT_RATIOS : (1.0,)

FPN:

USE_GN: True

ROI_HEADS:

# 是否使用FPN

USE_FPN: True

ROI_BOX_HEAD:

USE_GN: True

POOLER_RESOLUTION: 7

POOLER_SCALES: (0.25, 0.125, 0.0625, 0.03125)

POOLER_SAMPLING_RATIO: 2

FEATURE_EXTRACTOR: "FPN2MLPFeatureExtractor"

PREDICTOR: "FPNPredictor"

# 修改成自己任务所需要检测的类别数+1

NUM_CLASSES: 22

RESNETS:

BACKBONE_OUT_CHANNELS: 256

STRIDE_IN_1X1: False

NUM_GROUPS: 32

WIDTH_PER_GROUP: 8

DATASETS:

# paths_catalog.py文件中的配置,数据集指定时如果仅有一个数据集不要忘了逗号(如:("coco_2014_val",))

TRAIN: ("coco_2014_train",)

TEST: ("coco_2014_val",)

DATALOADER:

SIZE_DIVISIBILITY: 32

SOLVER:

BASE_LR: 0.001

WEIGHT_DECAY: 0.0001

STEPS: (240000, 320000)

MAX_ITER: 360000

# 很重要的设置,具体可以参见官网说明:https://github.com/facebookresearch/maskrcnn-benchmark/blob/master/README.md

IMS_PER_BATCH: 1

# 保存模型的间隔

CHECKPOINT_PERIOD: 18000

# 输出文件路径

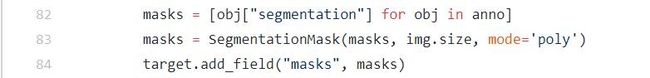

OUTPUT_DIR: "./weight/"- 如果只做检测任务的话,删除

maskrcnn-benchmark/maskrcnn_benchmark/data/datasets/coco.py中 82-84这三行比较保险。

- Line 90 of maskrcnn_benchmark/engine/trainer.py sets the logging interval (the default is every 20 iterations, which I found too frequent; adjust to taste).
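Two things depend on the categories in the generated JSON. The first is NUM_CLASSES, which is the number of categories + 1 for the background. The second is how the model's predicted labels map back to category names. As far as I can tell from maskrcnn-benchmark's `COCODataset`, labels are numbered 1..N in the order returned by `COCO.getCatIds()`. Without filters, that is the order of the JSON's `"categories"` list. Below is a stdlib-only sketch under that assumption. The helper `class_info` is hypothetical, and the JSON should be the training one, since that is what the model was trained on:

```python
import json
import os


def class_info(coco_dict):
    """Return (NUM_CLASSES, {contiguous_label: category_name}) for a COCO-style dict.

    Assumes labels are assigned 1..N in the order of the "categories" list,
    with 0 reserved for the background class.
    """
    cats = coco_dict["categories"]
    label_to_name = {i + 1: cat["name"] for i, cat in enumerate(cats)}
    return len(cats) + 1, label_to_name


if __name__ == "__main__":
    path = "trained.json"  # the training JSON written in data-preparation step 2
    if os.path.exists(path):
        with open(path) as f:
            num_classes, label_to_name = class_info(json.load(f))
        print("NUM_CLASSES:", num_classes)
        print(label_to_name)
    else:
        print(path, "not found")
```

With this mapping, `label_to_name[label]` turns a label predicted by the model back into the original `type` string from the CSV.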

IV. Model training

- Single GPU

The official README gives:

python /path_to_maskrcnn_benchmark/tools/train_net.py --config-file "/path/to/config/file.yaml"

By default this uses the first GPU. To pick a specific GPU, use:

# 3 is the ID of the GPU to use
CUDA_VISIBLE_DEVICES=3 python /path_to_maskrcnn_benchmark/tools/train_net.py --config-file "/path/to/config/file.yaml"

If you run out of memory, you need to adjust the parameters; see the out-of-memory section of the official README.

- Multi-GPU

The official README gives:

export NGPUS=8
python -m torch.distributed.launch --nproc_per_node=$NGPUS /path_to_maskrcnn_benchmark/tools/train_net.py --config-file "path/to/config/file.yaml" MODEL.RPN.FPN_POST_NMS_TOP_N_TRAIN images_per_gpu x 1000

Here "images_per_gpu x 1000" is a placeholder: replace it with the actual number, e.g. 2000 for 2 images per GPU. Without CUDA_VISIBLE_DEVICES, the processes use GPUs 0 to NGPUS-1. To pick specific GPUs, use:

# --nproc_per_node=4 means 4 GPUs are used
CUDA_VISIBLE_DEVICES=0,1,2,3 python -m torch.distributed.launch --nproc_per_node=4 /path_to_maskrcnn_benchmark/tools/train_net.py --config-file "path/to/config/file.yaml"

Unfortunately, multi-GPU training never ran successfully on my server. Any help is welcome!

Issue: Multi-GPU training error
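The README's out-of-memory and multi-GPU advice comes down to two rules. First, rescale the schedule with the total batch size (the linear scaling rule: the learning rate scales with the batch, and the iteration counts scale inversely). Second, set MODEL.RPN.FPN_POST_NMS_TOP_N_TRAIN to 1000 × images per GPU. Below is a minimal sketch of that arithmetic. The reference numbers in the usage line (BASE_LR 0.02, MAX_ITER 90000, STEPS (60000, 80000) at 16 images per batch) are the 1x defaults as I remember them, and with them the sketch reproduces the README's single-GPU example. Read the real values from your own yaml:

```python
import shlex


def scale_schedule(base_lr, max_iter, steps, ref_batch, new_batch, images_per_gpu):
    """Rescale a reference schedule to a new total batch size (linear scaling rule).

    base_lr, max_iter, steps: values of the reference config at ref_batch images/iteration.
    new_batch: the new SOLVER.IMS_PER_BATCH (total over all GPUs).
    images_per_gpu: new_batch divided by the number of GPUs.
    """
    factor = new_batch / ref_batch
    return {
        "SOLVER.IMS_PER_BATCH": new_batch,
        # the learning rate scales linearly with the batch size...
        "SOLVER.BASE_LR": base_lr * factor,
        # ...and iteration counts scale inversely, keeping the number of epochs fixed
        "SOLVER.MAX_ITER": int(round(max_iter / factor)),
        "SOLVER.STEPS": tuple(int(round(s / factor)) for s in steps),
        # README rule: 1000 x images per GPU
        "MODEL.RPN.FPN_POST_NMS_TOP_N_TRAIN": 1000 * images_per_gpu,
    }


def to_cli_args(overrides):
    """Format the overrides as KEY VALUE pairs to append after --config-file."""
    args = []
    for key, value in overrides.items():
        args += [key, str(value)]
    return args


if __name__ == "__main__":
    # 16 images per batch -> 2 images on a single GPU
    overrides = scale_schedule(0.02, 90000, (60000, 80000),
                               ref_batch=16, new_batch=2, images_per_gpu=2)
    print(" ".join(shlex.quote(a) for a in to_cli_args(overrides)))
```

The printed pairs can be appended to the train_net.py command. The STEPS tuple is quoted so the shell passes it as a single argument.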

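V. Model validation

- In the config file, set (under MODEL)

WEIGHT: "../weight/model_final.pth" (this should point to the weights saved after training; a relative path is resolved against the directory you run the command from)

- Run:

CUDA_VISIBLE_DEVICES=5 python tools/test_net.py --config-file "/path/to/config/file.yaml" TEST.IMS_PER_BATCH 8

TEST.IMS_PER_BATCH 8 can also be set directly in the config file:

TEST:
  IMS_PER_BATCH: 8

VI. Model prediction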

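- In the config file, set (under MODEL)

WEIGHT: "../weight/model_final.pth" (this should point to the weights saved after training)

- In demo/predictor.py, replace CATEGORIES with the object classes of your own data (this only matters if you want to visualize the results, so you can leave it unchanged otherwise; see the examples under demo/):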

class COCODemo(object):
    # COCO categories for pretty print
    CATEGORIES = [
        "__background",
        ...
    ]

- Create a new file demo/predict.py (the places you need to change are commented):

#!/usr/bin/env python
# coding=UTF-8
'''
@Description:
@Author: HuangQinJian
@LastEditors: HuangQinJian
@Date: 2019-05-01 12:36:04
@LastEditTime: 2019-05-03 17:29:23
'''
import os

import numpy as np
import pandas as pd
from PIL import Image
from tqdm import tqdm

from maskrcnn_benchmark.config import cfg
from predictor import COCODemo

# replace with your own config file (relative paths are resolved against
# the directory you run the script from)
config_file = "../configs/e2e_faster_rcnn_R_50_FPN_1x.yaml"
# update the config options with the config file
cfg.merge_from_file(config_file)
# manual override some options
cfg.merge_from_list(["MODEL.DEVICE", "cuda"])


def load(img_path):
    pil_image = Image.open(img_path).convert("RGB")
    # convert to BGR format
    image = np.array(pil_image)[:, :, [2, 1, 0]]
    return image


# adjust to your needs
coco_demo = COCODemo(
    cfg,
    min_image_size=1600,
    confidence_threshold=0.7,
)

# path to the test images
imgs_dir = '/data1/hqj/traffic-sign-identification/test'
img_names = os.listdir(imgs_dir)

rows = []
empty_img_name = []
for img_name in tqdm(img_names):
    path = os.path.join(imgs_dir, img_name)
    image = load(path)
    # compute predictions (note: compute_prediction() returns all boxes;
    # confidence_threshold is only applied by select_top_predictions())
    predictions = coco_demo.compute_prediction(image)
    scores = predictions.get_field("scores").numpy()
    if len(scores) == 0:
        # nothing detected in this image
        empty_img_name.append(img_name)
        continue
    # keep only the highest-scoring box
    best = np.argmax(scores)
    x1, y1, x2, y2 = (round(float(v)) for v in predictions.bbox[best].numpy())
    # labels are maskrcnn-benchmark's contiguous ids (1..NUM_CLASSES-1), which
    # may differ from the raw 'type' values in your CSV
    label = predictions.get_field("labels").numpy()[best]
    # four corners: top-left, top-right, bottom-right, bottom-left
    rows.append({'filename': img_name,
                 'X1': x1, 'Y1': y1, 'X2': x2, 'Y2': y1,
                 'X3': x2, 'Y3': y2, 'X4': x1, 'Y4': y2,
                 'type': label})
    print(label)

print(empty_img_name)
submit_v4 = pd.DataFrame(rows, columns=['filename', 'X1', 'Y1', 'X2', 'Y2',
                                        'X3', 'Y3', 'X4', 'Y4', 'type'])
empty_v4 = pd.DataFrame({'filename': empty_img_name})
submit_v4.to_csv('submit_v4.csv', index=None)
empty_v4.to_csv('empty_v4.csv', index=None)

- Run (from the demo/ folder, so that the relative paths in predict.py resolve):

cd demo
CUDA_VISIBLE_DEVICES=5 python predict.py

VII. Closing remarks

- If you modify any code in the maskrcnn-benchmark folder, be sure to rebuild it (python setup.py build develop)!

For more content, follow my blog: 是大黄鸭 BLOG.