Learn NLP with Transformer (Chapter 6)

BERT应用

Task06 BERT应用

本次学习参照Datawhale开源学习:https://github.com/datawhalechina/learn-nlp-with-transformers

内容大体源自原文,结合自己学习思路有所调整。

个人总结:一、BERT 预训练任务包括Masked Language Model(MLM训练模型根据上下文理解单词的意思)和Next Sentence Prediction(NSP训练模型理解预测句子间的关系)。二、 Fine-tune 包括句子分类、多项选择、词分类、问答任务等。

本文基于 Transformers 版本 4.4.2(2021 年 3 月 19 日发布)项目中,pytorch 版的 BERT 相关代码,从代码结构、具体实现与原理,以及使用的角度进行分析,包含以下内容:

Bert解决NLP任务

- BertForSequenceClassification

- BertForMultiChoice

- BertForTokenClassification

- BertForQuestionAnswering

BERT训练与优化

- Pre-Training

- Fine-Tuning

- AdamW

- Warmup

6 BERT应用

6.1 BERT-based Models

首先,以下所有的模型都是基于BertPreTrainedModel这一抽象基类,BertPreTrainedModel的功能:

用于初始化模型权重,同时维护继承自PreTrainedModel的一些标记身份或者加载模型时的类变量。

下面,首先从预训练模型开始分析。

6.1.1 BertForPreTraining

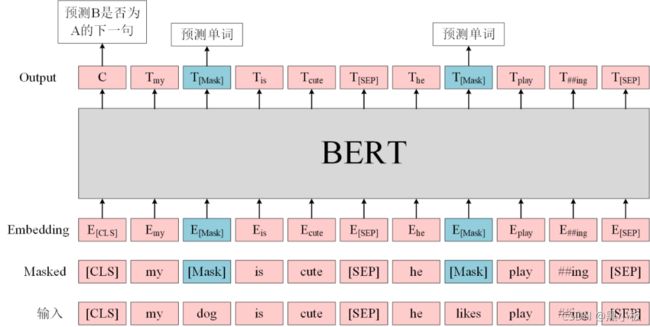

BERT 预训练任务包括两个:

- Masked Language Model(MLM):在句子中随机用

[MASK]替换一部分单词,然后将句子传入 BERT 中编码每一个单词的信息,最终用[MASK]的编码信息预测该位置的正确单词,这一任务旨在训练模型根据上下文理解单词的意思; - Next Sentence Prediction(NSP):将句子对 A 和 B 输入 BERT,使用

[CLS]的编码信息进行预测 B 是否 A 的下一句,这一任务旨在训练模型理解预测句子间的关系。

而对应到代码中,这一融合两个任务的模型就是BertForPreTraining,其中包含两个组件:

class BertForPreTraining(BertPreTrainedModel):

def __init__(self, config):

super().__init__(config)

self.bert = BertModel(config)

self.cls = BertPreTrainingHeads(config)

self.init_weights()

# ...

这里的BertModel在上一章节中已经详细介绍了(注意,这里设置的是默认add_pooling_layer=True,即会提取[CLS]对应的输出用于 NSP 任务),而BertPreTrainingHeads则是负责两个任务的预测模块:

class BertPreTrainingHeads(nn.Module):

def __init__(self, config):

super().__init__()

self.predictions = BertLMPredictionHead(config)

self.seq_relationship = nn.Linear(config.hidden_size, 2)

def forward(self, sequence_output, pooled_output):

prediction_scores = self.predictions(sequence_output)

seq_relationship_score = self.seq_relationship(pooled_output)

return prediction_scores, seq_relationship_score

又是一层封装:BertPreTrainingHeads包裹了BertLMPredictionHead 和一个代表 NSP 任务的线性层。这里不把 NSP 对应的任务也封装一个BertXXXPredictionHead。

其实是有封装这个类的,不过它叫做BertOnlyNSPHead,在这里用不上

继续下探BertPreTrainingHeads:

class BertLMPredictionHead(nn.Module):

def __init__(self, config):

super().__init__()

self.transform = BertPredictionHeadTransform(config)

# The output weights are the same as the input embeddings, but there is

# an output-only bias for each token.

self.decoder = nn.Linear(config.hidden_size, config.vocab_size, bias=False)

self.bias = nn.Parameter(torch.zeros(config.vocab_size))

# Need a link between the two variables so that the bias is correctly resized with `resize_token_embeddings`

self.decoder.bias = self.bias

def forward(self, hidden_states):

hidden_states = self.transform(hidden_states)

hidden_states = self.decoder(hidden_states)

return hidden_states

这个类用于预测[MASK]位置的输出在每个词作为类别的分类输出,注意到:

- 该类重新初始化了一个全 0 向量作为预测权重的 bias;

- 该类的输出形状为[batch_size, seq_length, vocab_size],即预测每个句子每个词是什么类别的概率值(注意这里没有做 softmax);

- 又一个封装的类:BertPredictionHeadTransform,用来完成一些线性变换:

class BertPredictionHeadTransform(nn.Module):

def __init__(self, config):

super().__init__()

self.dense = nn.Linear(config.hidden_size, config.hidden_size)

if isinstance(config.hidden_act, str):

self.transform_act_fn = ACT2FN[config.hidden_act]

else:

self.transform_act_fn = config.hidden_act

self.LayerNorm = nn.LayerNorm(config.hidden_size, eps=config.layer_norm_eps)

def forward(self, hidden_states):

hidden_states = self.dense(hidden_states)

hidden_states = self.transform_act_fn(hidden_states)

hidden_states = self.LayerNorm(hidden_states)

return hidden_states

回到BertForPreTraining,继续看两块 loss 是怎么处理的。它的前向传播和BertModel的有所不同,多了labels和next_sentence_label 两个输入:

-

labels:形状为[batch_size, seq_length] ,代表 MLM 任务的标签,注意这里对于原本未被遮盖的词设置为 -100,被遮盖词才会有它们对应的 id,和任务设置是反过来的。

- 例如,原始句子是I want to [MASK] an apple,这里我把单词eat给遮住了输入模型,对应的label设置为[-100, -100, -100, 【eat对应的id】, -100, -100];

- 为什么要设置为 -100 而不是其他数?因为torch.nn.CrossEntropyLoss默认的ignore_index=-100,也就是说对于标签为 100 的类别输入不会计算 loss。

-

next_sentence_label:这一个输入很简单,就是 0 和 1 的二分类标签。

# ...

def forward(

self,

input_ids=None,

attention_mask=None,

token_type_ids=None,

position_ids=None,

head_mask=None,

inputs_embeds=None,

labels=None,

next_sentence_label=None,

output_attentions=None,

output_hidden_states=None,

return_dict=None,

): ...

接下来两部分 loss 的组合:

# ...

total_loss = None

if labels is not None and next_sentence_label is not None:

loss_fct = CrossEntropyLoss()

masked_lm_loss = loss_fct(prediction_scores.view(-1, self.config.vocab_size), labels.view(-1))

next_sentence_loss = loss_fct(seq_relationship_score.view(-1, 2), next_sentence_label.view(-1))

total_loss = masked_lm_loss + next_sentence_loss

# ...

直接相加,就是这么单纯的策略。

当然,这份代码里面也包含了对于只想对单个目标进行预训练的 BERT 模型(具体细节不作展开):

- BertForMaskedLM:只进行 MLM 任务的预训练;

- 基于BertOnlyMLMHead,而后者也是对BertLMPredictionHead的另一层封装;

- BertLMHeadModel:这个和上一个的区别在于,这一模型是作为 decoder 运行的版本;

- 同样基于BertOnlyMLMHead;

- BertForNextSentencePrediction:只进行 NSP 任务的预训练。

- 基于BertOnlyNSPHead,内容就是一个线性层。

from transformers import BertTokenizer, BertForPreTraining

import torch

tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')

model = BertForPreTraining.from_pretrained('bert-base-uncased')

inputs = tokenizer("Hello, my dog is cute", return_tensors="pt")

outputs = model(**inputs)

prediction_logits = outputs.prediction_logits

seq_relationship_logits = outputs.seq_relationship_logits

Some weights of BertForPreTraining were not initialized from the model checkpoint at bert-base-uncased and are newly initialized: ['cls.predictions.decoder.bias']

You should probably TRAIN this model on a down-stream task to be able to use it for predictions and inference.

from transformers import BertTokenizer, BertLMHeadModel, BertConfig

import torch

tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')

config = BertConfig.from_pretrained("bert-base-uncased")

config.is_decoder = True

model = BertLMHeadModel.from_pretrained('bert-base-uncased', config=config)

inputs = tokenizer("Hello, my dog is cute", return_tensors="pt")

outputs = model(**inputs)

prediction_logits = outputs.logits

Some weights of the model checkpoint at bert-base-uncased were not used when initializing BertLMHeadModel: ['cls.seq_relationship.weight', 'cls.seq_relationship.bias']

- This IS expected if you are initializing BertLMHeadModel from the checkpoint of a model trained on another task or with another architecture (e.g. initializing a BertForSequenceClassification model from a BertForPreTraining model).

- This IS NOT expected if you are initializing BertLMHeadModel from the checkpoint of a model that you expect to be exactly identical (initializing a BertForSequenceClassification model from a BertForSequenceClassification model).

from transformers import BertTokenizer, BertForNextSentencePrediction

import torch

tokenizer = BertTokenizer.from_pretrained('bert-base-uncased')

model = BertForNextSentencePrediction.from_pretrained('bert-base-uncased')

prompt = "In Italy, pizza served in formal settings, such as at a restaurant, is presented unsliced."

next_sentence = "The sky is blue due to the shorter wavelength of blue light."

encoding = tokenizer(prompt, next_sentence, return_tensors='pt')

outputs = model(**encoding, labels=torch.LongTensor([1]))

logits = outputs.logits

assert logits[0, 0] < logits[0, 1] # next sentence was random

Downloading: 100%|██████████| 440M/440M [00:30<00:00, 14.5MB/s]

Some weights of the model checkpoint at bert-base-uncased were not used when initializing BertForNextSentencePrediction: ['cls.predictions.bias', 'cls.predictions.transform.dense.weight', 'cls.predictions.transform.dense.bias', 'cls.predictions.decoder.weight', 'cls.predictions.transform.LayerNorm.weight', 'cls.predictions.transform.LayerNorm.bias']

- This IS expected if you are initializing BertForNextSentencePrediction from the checkpoint of a model trained on another task or with another architecture (e.g. initializing a BertForSequenceClassification model from a BertForPreTraining model).

- This IS NOT expected if you are initializing BertForNextSentencePrediction from the checkpoint of a model that you expect to be exactly identical (initializing a BertForSequenceClassification model from a BertForSequenceClassification model).

接下来介绍的是各种 Fine-tune 模型,基本都是分类任务:

6.1.2 BertForSequenceClassification

这一模型用于句子分类(也可以是回归)任务,比如 GLUE benchmark 的各个任务。

- 句子分类的输入为句子(对),输出为单个分类标签。

结构上很简单,就是BertModel(有 pooling)过一个 dropout 后接一个线性层输出分类:

class BertForSequenceClassification(BertPreTrainedModel):

def __init__(self, config):

super().__init__(config)

self.num_labels = config.num_labels

self.bert = BertModel(config)

self.dropout = nn.Dropout(config.hidden_dropout_prob)

self.classifier = nn.Linear(config.hidden_size, config.num_labels)

self.init_weights()

# ...

在前向传播时,和上面预训练模型一样需要传入labels输入。

-

如果初始化的num_labels=1,那么就默认为回归任务,使用 MSELoss;

-

否则认为是分类任务。

from transformers.models.bert.tokenization_bert import BertTokenizer

from transformers.models.bert.modeling_bert import BertForSequenceClassification

tokenizer = BertTokenizer.from_pretrained("bert-base-cased-finetuned-mrpc")

model = BertForSequenceClassification.from_pretrained("bert-base-cased-finetuned-mrpc")

classes = ["not paraphrase", "is paraphrase"]

sequence_0 = "The company HuggingFace is based in New York City"

sequence_1 = "Apples are especially bad for your health"

sequence_2 = "HuggingFace's headquarters are situated in Manhattan"

# The tokekenizer will automatically add any model specific separators (i.e. and ) and tokens to the sequence, as well as compute the attention masks.

paraphrase = tokenizer(sequence_0, sequence_2, return_tensors="pt")

not_paraphrase = tokenizer(sequence_0, sequence_1, return_tensors="pt")

paraphrase_classification_logits = model(**paraphrase).logits

not_paraphrase_classification_logits = model(**not_paraphrase).logits

paraphrase_results = torch.softmax(paraphrase_classification_logits, dim=1).tolist()[0]

not_paraphrase_results = torch.softmax(not_paraphrase_classification_logits, dim=1).tolist()[0]

# Should be paraphrase

for i in range(len(classes)):

print(f"{classes[i]}: {int(round(paraphrase_results[i] * 100))}%")

# Should not be paraphrase

for i in range(len(classes)):

print(f"{classes[i]}: {int(round(not_paraphrase_results[i] * 100))}%")

Downloading: 100%|██████████| 213k/213k [00:00<00:00, 596kB/s]

Downloading: 100%|██████████| 29.0/29.0 [00:00<00:00, 12.4kB/s]

Downloading: 100%|██████████| 436k/436k [00:00<00:00, 808kB/s]

Downloading: 100%|██████████| 433/433 [00:00<00:00, 166kB/s]

Downloading: 100%|██████████| 433M/433M [00:29<00:00, 14.5MB/s]

not paraphrase: 10%

is paraphrase: 90%

not paraphrase: 94%

is paraphrase: 6%

6.1.3 BertForMultipleChoice

这一模型用于多项选择,如 RocStories/SWAG 任务。

- 多项选择任务的输入为一组分次输入的句子,输出为选择某一句子的单个标签。

结构上与句子分类相似,只不过线性层输出维度为 1,即每次需要将每个样本的多个句子的输出拼接起来作为每个样本的预测分数。 - 实际上,具体操作时是把每个 batch 的多个句子一同放入的,所以一次处理的输入为[batch_size, num_choices]数量的句子,因此相同 batch 大小时,比句子分类等任务需要更多的显存,在训练时需要小心。

6.1.4 BertForTokenClassification

这一模型用于序列标注(词分类),如 NER 任务。

- 序列标注任务的输入为单个句子文本,输出为每个 token 对应的类别标签。

由于需要用到每个 token对应的输出而不只是某几个,所以这里的BertModel不用加入 pooling 层; - 同时,这里将

_keys_to_ignore_on_load_unexpected这一个类参数设置为[r"pooler"],也就是在加载模型时对于出现不需要的权重不发生报错。

from transformers import BertForTokenClassification, BertTokenizer

import torch

model = BertForTokenClassification.from_pretrained("dbmdz/bert-large-cased-finetuned-conll03-english")

tokenizer = BertTokenizer.from_pretrained("bert-base-cased")

label_list = [

"O", # Outside of a named entity

"B-MISC", # Beginning of a miscellaneous entity right after another miscellaneous entity

"I-MISC", # Miscellaneous entity

"B-PER", # Beginning of a person's name right after another person's name

"I-PER", # Person's name

"B-ORG", # Beginning of an organisation right after another organisation

"I-ORG", # Organisation

"B-LOC", # Beginning of a location right after another location

"I-LOC" # Location

]

sequence = "Hugging Face Inc. is a company based in New York City. Its headquarters are in DUMBO, therefore very close to the Manhattan Bridge."

# Bit of a hack to get the tokens with the special tokens

tokens = tokenizer.tokenize(tokenizer.decode(tokenizer.encode(sequence)))

inputs = tokenizer.encode(sequence, return_tensors="pt")

outputs = model(inputs).logits

predictions = torch.argmax(outputs, dim=2)

Downloading: 100%|██████████| 998/998 [00:00<00:00, 382kB/s]

Downloading: 100%|██████████| 1.33G/1.33G [01:30<00:00, 14.7MB/s]

for token, prediction in zip(tokens, predictions[0].numpy()):

print((token, model.config.id2label[prediction]))

('[CLS]', 'O')

('Hu', 'I-ORG')

('##gging', 'I-ORG')

('Face', 'I-ORG')

('Inc', 'I-ORG')

('.', 'O')

('is', 'O')

('a', 'O')

('company', 'O')

('based', 'O')

('in', 'O')

('New', 'I-LOC')

('York', 'I-LOC')

('City', 'I-LOC')

('.', 'O')

('Its', 'O')

('headquarters', 'O')

('are', 'O')

('in', 'O')

('D', 'I-LOC')

('##UM', 'I-LOC')

('##BO', 'I-LOC')

(',', 'O')

('therefore', 'O')

('very', 'O')

('close', 'O')

('to', 'O')

('the', 'O')

('Manhattan', 'I-LOC')

('Bridge', 'I-LOC')

('.', 'O')

('[SEP]', 'O')

6.1.5 BertForQuestionAnswering

这一模型用于解决问答任务,例如 SQuAD 任务。

- 问答任务的输入为问题 +(对于 BERT 只能是一个)回答组成的句子对,输出为起始位置和结束位置用于标出回答中的具体文本。

这里需要两个输出,即对起始位置的预测和对结束位置的预测,两个输出的长度都和句子长度一样,从其中挑出最大的预测值对应的下标作为预测的位置。 - 对超出句子长度的非法 label,会将其压缩(torch.clamp_)到合理范围。

以上就是关于 BERT 源码的介绍,下面介绍一些关于 BERT 模型实用的训练细节。

from transformers import AutoTokenizer, AutoModelForQuestionAnswering

import torch

tokenizer = AutoTokenizer.from_pretrained("bert-large-uncased-whole-word-masking-finetuned-squad")

model = AutoModelForQuestionAnswering.from_pretrained("bert-large-uncased-whole-word-masking-finetuned-squad")

text = " Transformers (formerly known as pytorch-transformers and pytorch-pretrained-bert) provides general-purpose architectures (BERT, GPT-2, RoBERTa, XLM, DistilBert, XLNet…) for Natural Language Understanding (NLU) and Natural Language Generation (NLG) with over 32+ pretrained models in 100+ languages and deep interoperability between TensorFlow 2.0 and PyTorch."

questions = [

"How many pretrained models are available in Transformers?",

"What does Transformers provide?",

" Transformers provides interoperability between which frameworks?",

]

for question in questions:

inputs = tokenizer(question, text, add_special_tokens=True, return_tensors="pt")

input_ids = inputs["input_ids"].tolist()[0]

outputs = model(**inputs)

answer_start_scores = outputs.start_logits

answer_end_scores = outputs.end_logits

answer_start = torch.argmax(

answer_start_scores

) # Get the most likely beginning of answer with the argmax of the score

answer_end = torch.argmax(answer_end_scores) + 1 # Get the most likely end of answer with the argmax of the score

answer = tokenizer.convert_tokens_to_string(tokenizer.convert_ids_to_tokens(input_ids[answer_start:answer_end]))

print(f"Question: {question}")

print(f"Answer: {answer}")

Downloading: 100%|██████████| 443/443 [00:00<00:00, 186kB/s]

Downloading: 100%|██████████| 232k/232k [00:00<00:00, 438kB/s]

Downloading: 100%|██████████| 466k/466k [00:00<00:00, 845kB/s]

Downloading: 100%|██████████| 28.0/28.0 [00:00<00:00, 10.5kB/s]

Downloading: 100%|██████████| 1.34G/1.34G [01:28<00:00, 15.1MB/s]

Question: How many pretrained models are available in Transformers?

Answer: over 32 +

Question: What does Transformers provide?

Answer: general - purpose architectures

Question: Transformers provides interoperability between which frameworks?

Answer: tensorflow 2. 0 and pytorch

6.2 BERT训练和优化

6.2.1 Pre-Training

预训练阶段,除了众所周知的 15%、80% mask 比例,有一个值得注意的地方就是参数共享。

不止 BERT,所有 huggingface 实现的 PLM 的 word embedding 和 masked language model 的预测权重在初始化过程中都是共享的:

class PreTrainedModel(nn.Module, ModuleUtilsMixin, GenerationMixin):

# ...

def tie_weights(self):

"""

Tie the weights between the input embeddings and the output embeddings.

If the :obj:`torchscript` flag is set in the configuration, can't handle parameter sharing so we are cloning

the weights instead.

"""

output_embeddings = self.get_output_embeddings()

if output_embeddings is not None and self.config.tie_word_embeddings:

self._tie_or_clone_weights(output_embeddings, self.get_input_embeddings())

if self.config.is_encoder_decoder and self.config.tie_encoder_decoder:

if hasattr(self, self.base_model_prefix):

self = getattr(self, self.base_model_prefix)

self._tie_encoder_decoder_weights(self.encoder, self.decoder, self.base_model_prefix)

# ...

至于为什么,应该是因为 word_embedding 和 prediction 权重太大了,以 bert-base 为例,其尺寸为(30522, 768),降低训练难度。

6.2.2 Fine-Tuning

微调也就是下游任务阶段,也有两个值得注意的地方。

6.2.2.1 AdamW

首先介绍一下 BERT 的优化器:AdamW(AdamWeightDecayOptimizer)。

这一优化器来自 ICLR 2017 的 Best Paper:《Fixing Weight Decay Regularization in Adam》中提出的一种用于修复 Adam 的权重衰减错误的新方法。论文指出,L2 正则化和权重衰减在大部分情况下并不等价,只在 SGD 优化的情况下是等价的;而大多数框架中对于 Adam+L2 正则使用的是权重衰减的方式,两者不能混为一谈。

AdamW 是在 Adam+L2 正则化的基础上进行改进的算法,与一般的 Adam+L2 的区别如下:

关于 AdamW 的分析可以参考:

- AdamW and Super-convergence is now the fastest way to train neural nets [1]

- paperplanet:都 9102 年了,别再用 Adam + L2 regularization了 [2]

通常,我们会选择模型的 weight 部分参与 decay 过程,而另一部分(包括 LayerNorm 的 weight)不参与(代码最初来源应该是 Huggingface 的示例)

# model: a Bert-based-model object

# learning_rate: default 2e-5 for text classification

param_optimizer = list(model.named_parameters())

no_decay = ['bias', 'LayerNorm.bias', 'LayerNorm.weight']

optimizer_grouped_parameters = [

{'params': [p for n, p in param_optimizer if not any(

nd in n for nd in no_decay)], 'weight_decay': 0.01},

{'params': [p for n, p in param_optimizer if any(

nd in n for nd in no_decay)], 'weight_decay': 0.0}

]

optimizer = AdamW(optimizer_grouped_parameters,

lr=learning_rate)

# ...

6.2.2.2 Warmup

BERT 的训练中另一个特点在于 Warmup,其含义为:

在训练初期使用较小的学习率(从 0 开始),在一定步数(比如 1000 步)内逐渐提高到正常大小(比如上面的 2e-5),避免模型过早进入局部最优而过拟合;

- 在训练后期再慢慢将学习率降低到 0,避免后期训练还出现较大的参数变化。

- 在 Huggingface 的实现中,可以使用多种 warmup 策略:

TYPE_TO_SCHEDULER_FUNCTION = {

SchedulerType.LINEAR: get_linear_schedule_with_warmup,

SchedulerType.COSINE: get_cosine_schedule_with_warmup,

SchedulerType.COSINE_WITH_RESTARTS: get_cosine_with_hard_restarts_schedule_with_warmup,

SchedulerType.POLYNOMIAL: get_polynomial_decay_schedule_with_warmup,

SchedulerType.CONSTANT: get_constant_schedule,

SchedulerType.CONSTANT_WITH_WARMUP: get_constant_schedule_with_warmup,

}

具体而言:

- CONSTANT:保持固定学习率不变;

- CONSTANT_WITH_WARMUP:在每一个 step 中线性调整学习率;

- LINEAR:上文提到的两段式调整;

- COSINE:和两段式调整类似,只不过采用的是三角函数式的曲线调整;

- COSINE_WITH_RESTARTS:训练中将上面 COSINE 的调整重复 n 次;

- POLYNOMIAL:按指数曲线进行两段式调整。

具体使用参考transformers/optimization.py:

最常用的还是get_linear_scheduler_with_warmup即线性两段式调整学习率的方案。

def get_scheduler(

name: Union[str, SchedulerType],

optimizer: Optimizer,

num_warmup_steps: Optional[int] = None,

num_training_steps: Optional[int] = None,

): ...