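Inception-V4 and Inception-ResNet: Model Overview and Implementation Code

Overview of Inception-V4 and Inception-ResNet

Review of the Inception architectures

GoogLeNet (Inception-V1)

BN-Inception (uses batch normalization to help the whole training process)

Inception-V2 and V3

Stacking the three kinds of Inception modules gives V2

Adding the downsampling modules and other optimizations gives V3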

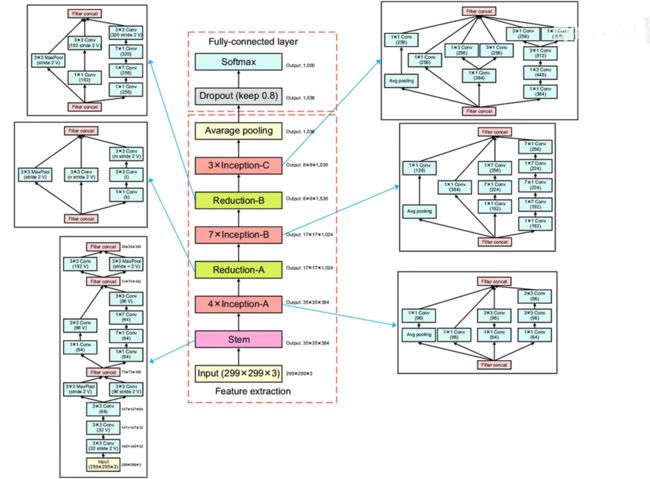

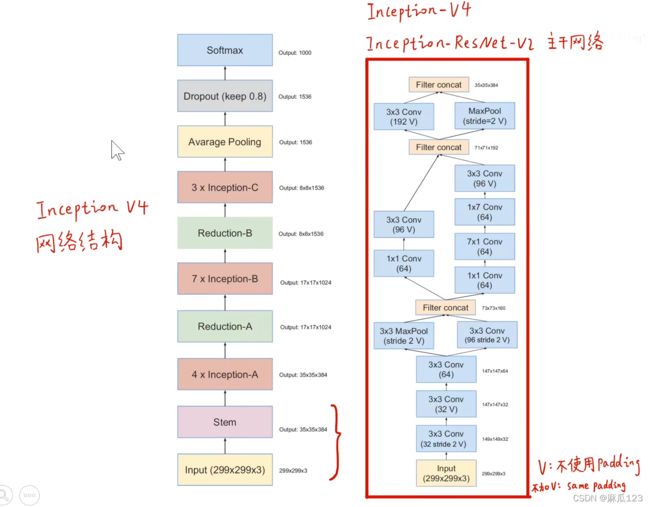

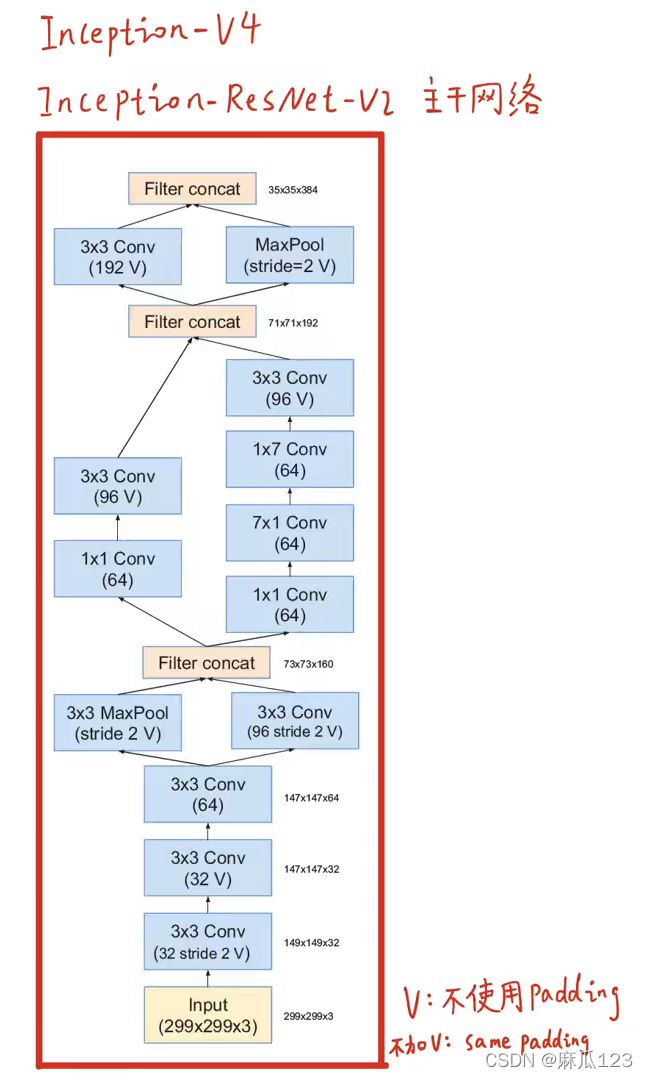

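Inception-V4

Inception-V4 performance

Top-1 and top-5 error

Parameter count and computational efficiency

Inference time

Comparison based on 2017 data

In this comparison Inception-V4 reaches the highest top-1 accuracy, and its trade-off between parameter count and accuracy is also among the best.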
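To make the top-1 and top-5 error metrics concrete, here is a minimal sketch in plain Python. The function name `topk_error` and the toy scores are illustrative assumptions, not data from the paper or from the comparison above.

```python
def topk_error(scores, labels, k):
    """Fraction of samples whose true label is NOT among the k highest scores."""
    misses = 0
    for row, label in zip(scores, labels):
        # indices of the k largest scores for this sample
        topk = sorted(range(len(row)), key=lambda i: row[i], reverse=True)[:k]
        if label not in topk:
            misses += 1
    return misses / len(labels)


# toy scores for 3 samples over 4 classes (made-up numbers)
scores = [
    [0.1, 0.6, 0.2, 0.1],
    [0.5, 0.1, 0.3, 0.1],
    [0.3, 0.1, 0.1, 0.5],
]
labels = [1, 2, 0]
top1 = topk_error(scores, labels, k=1)
top2 = topk_error(scores, labels, k=2)
print(top1, top2)
```

With k=5 and 1000-class ImageNet scores, the same function would give the top-5 error.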

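Detailed structure of Inception-V4

In the right half of the figure, V marks a layer with no padding (a "valid" convolution or pooling), so its output is spatially smaller than its input.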
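The effect of the unpadded (V) layers can be traced with the usual output-size formula. The sketch below is plain Python; the helper name `out_size` is an assumption for illustration, and the layer list follows the strides and paddings used in the code later in this post, for a 299 x 299 input.

```python
def out_size(size, kernel, stride=1, padding=0):
    """Spatial size after a convolution or pooling layer (no dilation)."""
    return (size + 2 * padding - kernel) // stride + 1


size = 299
trace = [size]
# (kernel, stride, padding); padding=0 corresponds to the layers marked V
layers = [
    (3, 2, 0),  # stem conv 3x3, stride 2, V  -> 149
    (3, 1, 0),  # stem conv 3x3, V            -> 147
    (3, 1, 1),  # stem conv 3x3, padded       -> 147
    (3, 2, 0),  # stem pool/conv, stride 2, V -> 73
    (3, 1, 0),  # stem conv 3x3, V            -> 71
    (3, 2, 0),  # stem pool/conv, stride 2, V -> 35
    (3, 2, 0),  # Reduction-A, stride 2, V    -> 17
    (3, 2, 0),  # Reduction-B, stride 2, V    -> 8
]
for kernel, stride, padding in layers:
    size = out_size(size, kernel, stride, padding)
    trace.append(size)
print(trace)  # [299, 149, 147, 147, 73, 71, 35, 17, 8]
```

The final 8 x 8 map is why the PyTorch network below can use an average pool with kernel size 8.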

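Comparison of results

Single-model, single-crop, multi-model, and multi-crop comparison

Multi-crop: several smaller images are derived from one image by rescaling, cropping, and so on, and the network's predictions on them are combined

Multi-model: several network models are combined into an ensemble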
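Both multi-crop and multi-model evaluation combine several predictions for the same image. One common way to do this is to average the class probabilities; the sketch below assumes that rule (the post does not say which rule the paper uses), and the logits are hypothetical.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(v - m) for v in logits]
    total = sum(exps)
    return [e / total for e in exps]


def average_predictions(logit_sets):
    """Average class probabilities over several crops or several models."""
    probs = [softmax(logits) for logits in logit_sets]
    n = len(probs)
    return [sum(p[c] for p in probs) / n for c in range(len(probs[0]))]


# hypothetical logits for one image: 3 crops (or 3 models), 3 classes
crop_logits = [
    [2.0, 1.0, 0.1],
    [0.5, 2.5, 0.3],
    [0.2, 2.2, 0.1],
]
avg = average_predictions(crop_logits)
predicted = max(range(len(avg)), key=lambda c: avg[c])
print(predicted)
```

Here the first crop alone would vote for class 0, but the averaged prediction picks class 1.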

Inception-V4 source code
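The PyTorch modules in this section call a `BasicConv2d` helper that the post never lists. A minimal sketch follows, assuming it is the usual convolution + batch normalization + ReLU block; this definition is an assumption, not taken from the post.

```python
import torch
import torch.nn as nn


class BasicConv2d(nn.Module):
    """Conv2d -> BatchNorm2d -> ReLU (assumed definition, not from the post)."""

    def __init__(self, in_channels, out_channels, **kwargs):
        super().__init__()
        # a conv bias is redundant when batch norm follows directly
        self.conv = nn.Conv2d(in_channels, out_channels, bias=False, **kwargs)
        self.bn = nn.BatchNorm2d(out_channels)
        self.relu = nn.ReLU(inplace=True)

    def forward(self, x):
        return self.relu(self.bn(self.conv(x)))


# shape check on a random batch: 3x3, stride 2, no padding ("valid")
x = torch.randn(2, 3, 299, 299)
y = BasicConv2d(3, 32, kernel_size=3, stride=2)(x)
print(y.shape)  # torch.Size([2, 32, 149, 149])
```

Extra keyword arguments such as `kernel_size`, `stride`, and `padding` are forwarded to `nn.Conv2d`, which matches how the modules call the helper.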

Module A

import torch
import torch.nn as nn

# BasicConv2d (assumed to be Conv2d + BatchNorm2d + ReLU) must be defined before these classes.

class InceptionA(nn.Module):
    def __init__(self, in_channels, out_channels):
        super(InceptionA, self).__init__()
        # out_channels is unused; the output always has 4 * 96 = 384 channels
        # branch1: avgpool --> conv1*1(96)
        self.b1_1 = nn.AvgPool2d(kernel_size=3, padding=1, stride=1)
        self.b1_2 = BasicConv2d(in_channels, 96, kernel_size=1)
        # branch2: conv1*1(96)
        self.b2 = BasicConv2d(in_channels, 96, kernel_size=1)
        # branch3: conv1*1(64) --> conv3*3(96)
        self.b3_1 = BasicConv2d(in_channels, 64, kernel_size=1)
        self.b3_2 = BasicConv2d(64, 96, kernel_size=3, padding=1)
        # branch4: conv1*1(64) --> conv3*3(96) --> conv3*3(96)
        self.b4_1 = BasicConv2d(in_channels, 64, kernel_size=1)
        self.b4_2 = BasicConv2d(64, 96, kernel_size=3, padding=1)
        self.b4_3 = BasicConv2d(96, 96, kernel_size=3, padding=1)

    def forward(self, x):
        y1 = self.b1_2(self.b1_1(x))
        y2 = self.b2(x)
        y3 = self.b3_2(self.b3_1(x))
        y4 = self.b4_3(self.b4_2(self.b4_1(x)))
        outputsA = [y1, y2, y3, y4]
        # concatenate the four branches along the channel dimension
        return torch.cat(outputsA, 1)

Module B

class InceptionB(nn.Module):
    def __init__(self, in_channels, out_channels):
        super(InceptionB, self).__init__()
        # out_channels is unused; the output has 128 + 384 + 256 + 256 = 1024 channels
        # branch1: avgpool --> conv1*1(128)
        self.b1_1 = nn.AvgPool2d(kernel_size=3, padding=1, stride=1)
        self.b1_2 = BasicConv2d(in_channels, 128, kernel_size=1)
        # branch2: conv1*1(384)
        self.b2 = BasicConv2d(in_channels, 384, kernel_size=1)
        # branch3: conv1*1(192) --> conv1*7(224) --> conv7*1(256)
        self.b3_1 = BasicConv2d(in_channels, 192, kernel_size=1)
        self.b3_2 = BasicConv2d(192, 224, kernel_size=(1, 7), padding=(0, 3))
        # 7*1 here rather than a second 1*7, matching the TensorFlow implementation listed later
        self.b3_3 = BasicConv2d(224, 256, kernel_size=(7, 1), padding=(3, 0))
        # branch4: conv1*1(192) --> conv1*7(192) --> conv7*1(224) --> conv1*7(224) --> conv7*1(256)
        self.b4_1 = BasicConv2d(in_channels, 192, kernel_size=1, stride=1)
        self.b4_2 = BasicConv2d(192, 192, kernel_size=(1, 7), padding=(0, 3))
        self.b4_3 = BasicConv2d(192, 224, kernel_size=(7, 1), padding=(3, 0))
        self.b4_4 = BasicConv2d(224, 224, kernel_size=(1, 7), padding=(0, 3))
        self.b4_5 = BasicConv2d(224, 256, kernel_size=(7, 1), padding=(3, 0))

    def forward(self, x):
        y1 = self.b1_2(self.b1_1(x))
        y2 = self.b2(x)
        y3 = self.b3_3(self.b3_2(self.b3_1(x)))
        y4 = self.b4_5(self.b4_4(self.b4_3(self.b4_2(self.b4_1(x)))))
        outputsB = [y1, y2, y3, y4]
        return torch.cat(outputsB, 1)
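Module B replaces large square kernels with pairs of 1*7 and 7*1 convolutions. A rough weight count shows why this is cheaper. The sketch below uses the widths of `b4_2` and `b4_3` from branch4; the single 7*7 layer it is compared against is hypothetical and does not appear in the network.

```python
def conv_weights(in_ch, out_ch, kh, kw):
    """Number of weights in a kh x kw convolution (bias and batch norm ignored)."""
    return in_ch * out_ch * kh * kw


# b4_2 and b4_3 of Module B: 1x7 (192 -> 192), then 7x1 (192 -> 224)
factorized = conv_weights(192, 192, 1, 7) + conv_weights(192, 224, 7, 1)
# hypothetical unfactorized alternative: one full 7x7 convolution (192 -> 224)
full = conv_weights(192, 224, 7, 7)
print(factorized, full)  # 559104 2107392
```

The factorized pair covers the same 7 x 7 receptive field with roughly a quarter of the weights.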

Module C

class InceptionC(nn.Module):
    def __init__(self, in_channels, out_channels):
        super(InceptionC, self).__init__()
        # out_channels is unused; the output has 6 * 256 = 1536 channels
        # branch1: avgpool --> conv1*1(256)
        self.b1_1 = nn.AvgPool2d(kernel_size=3, padding=1, stride=1)
        self.b1_2 = BasicConv2d(in_channels, 256, kernel_size=1)
        # branch2: conv1*1(256)
        self.b2 = BasicConv2d(in_channels, 256, kernel_size=1)
        # branch3: conv1*1(384) --> conv1*3(256) & conv3*1(256)
        self.b3_1 = BasicConv2d(in_channels, 384, kernel_size=1)
        self.b3_2_1 = BasicConv2d(384, 256, kernel_size=(1, 3), padding=(0, 1))
        self.b3_2_2 = BasicConv2d(384, 256, kernel_size=(3, 1), padding=(1, 0))
        # branch4: conv1*1(384) --> conv1*3(448) --> conv3*1(512) --> conv3*1(256) & conv1*3(256)
        self.b4_1 = BasicConv2d(in_channels, 384, kernel_size=1, stride=1)
        self.b4_2 = BasicConv2d(384, 448, kernel_size=(1, 3), padding=(0, 1))
        self.b4_3 = BasicConv2d(448, 512, kernel_size=(3, 1), padding=(1, 0))
        self.b4_4_1 = BasicConv2d(512, 256, kernel_size=(3, 1), padding=(1, 0))
        self.b4_4_2 = BasicConv2d(512, 256, kernel_size=(1, 3), padding=(0, 1))

    def forward(self, x):
        y1 = self.b1_2(self.b1_1(x))
        y2 = self.b2(x)
        # compute each shared prefix once, then split into the two parallel heads
        y3 = self.b3_1(x)
        y3_1 = self.b3_2_1(y3)
        y3_2 = self.b3_2_2(y3)
        y4 = self.b4_3(self.b4_2(self.b4_1(x)))
        y4_1 = self.b4_4_1(y4)
        y4_2 = self.b4_4_2(y4)
        outputsC = [y1, y2, y3_1, y3_2, y4_1, y4_2]
        return torch.cat(outputsC, 1)

Reduction (downsampling) module A

class ReductionA(nn.Module):
    def __init__(self, in_channels, out_channels, k, l, m, n):
        super(ReductionA, self).__init__()
        # out_channels is unused; the output has in_channels + n + m channels
        # branch1: maxpool3*3(stride2 valid)
        self.b1 = nn.MaxPool2d(kernel_size=3, stride=2)
        # branch2: conv3*3(n stride2 valid)
        self.b2 = BasicConv2d(in_channels, n, kernel_size=3, stride=2)
        # branch3: conv1*1(k) --> conv3*3(l) --> conv3*3(m stride2 valid)
        self.b3_1 = BasicConv2d(in_channels, k, kernel_size=1)
        self.b3_2 = BasicConv2d(k, l, kernel_size=3, padding=1)
        self.b3_3 = BasicConv2d(l, m, kernel_size=3, stride=2)

    def forward(self, x):
        y1 = self.b1(x)
        y2 = self.b2(x)
        y3 = self.b3_3(self.b3_2(self.b3_1(x)))
        outputsRedA = [y1, y2, y3]
        return torch.cat(outputsRedA, 1)
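The `k, l, m, n` arguments let one Reduction-A definition serve several network variants. The sketch below lists the filter settings as given in Table 1 of the Inception-v4 paper (worth double-checking against the paper) and computes the output width for the Inception-v4 case used in this post. The dictionary and function names are illustrative assumptions.

```python
# (k, l, m, n) filter settings for Reduction-A, per Table 1 of the paper
REDUCTION_A_FILTERS = {
    "Inception-v4": (192, 224, 256, 384),
    "Inception-ResNet-v1": (192, 192, 256, 384),
    "Inception-ResNet-v2": (256, 256, 384, 384),
}


def reduction_a_out_channels(in_channels, k, l, m, n):
    """Channels after concatenating the max-pool, conv(n) and conv(k -> l -> m) branches."""
    # the max-pool branch keeps in_channels; k and l are only intermediate widths
    return in_channels + n + m


k, l, m, n = REDUCTION_A_FILTERS["Inception-v4"]
out_channels = reduction_a_out_channels(384, k, l, m, n)
print(out_channels)  # 1024
```

The result matches the 1024 input channels that Module B expects.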

Reduction (downsampling) module B

class ReductionB(nn.Module):
    def __init__(self, in_channels, out_channels):
        super(ReductionB, self).__init__()
        # out_channels is unused; the output has in_channels + 192 + 320 channels
        # branch1: maxpool3*3(stride2 valid)
        self.b1 = nn.MaxPool2d(kernel_size=3, stride=2)
        # branch2: conv1*1(192) --> conv3*3(192 stride2 valid)
        self.b2_1 = BasicConv2d(in_channels, 192, kernel_size=1)
        self.b2_2 = BasicConv2d(192, 192, kernel_size=3, stride=2)
        # branch3: conv1*1(256) --> conv1*7(256) --> conv7*1(320) --> conv3*3(320 stride2 valid)
        self.b3_1 = BasicConv2d(in_channels, 256, kernel_size=1)
        self.b3_2 = BasicConv2d(256, 256, kernel_size=(1, 7), padding=(0, 3))
        self.b3_3 = BasicConv2d(256, 320, kernel_size=(7, 1), padding=(3, 0))
        self.b3_4 = BasicConv2d(320, 320, kernel_size=3, stride=2)

    def forward(self, x):
        y1 = self.b1(x)
        y2 = self.b2_2(self.b2_1(x))
        y3 = self.b3_4(self.b3_3(self.b3_2(self.b3_1(x))))
        outputsRedB = [y1, y2, y3]
        return torch.cat(outputsRedB, 1)

Stem network

class Stem(nn.Module):
    def __init__(self, in_channels, out_channels):
        super(Stem, self).__init__()
        # out_channels is unused; the output has 192 + 192 = 384 channels
        # conv3*3(32 stride2 valid)
        self.conv1 = BasicConv2d(in_channels, 32, kernel_size=3, stride=2)
        # conv3*3(32 valid)
        self.conv2 = BasicConv2d(32, 32, kernel_size=3)
        # conv3*3(64)
        self.conv3 = BasicConv2d(32, 64, kernel_size=3, padding=1)
        # maxpool3*3(stride2 valid) & conv3*3(96 stride2 valid)
        self.maxpool4 = nn.MaxPool2d(kernel_size=3, stride=2)
        self.conv4 = BasicConv2d(64, 96, kernel_size=3, stride=2)
        # conv1*1(64) --> conv3*3(96 valid)
        self.conv5_1_1 = BasicConv2d(160, 64, kernel_size=1)
        self.conv5_1_2 = BasicConv2d(64, 96, kernel_size=3)
        # conv1*1(64) --> conv7*1(64) --> conv1*7(64) --> conv3*3(96 valid)
        self.conv5_2_1 = BasicConv2d(160, 64, kernel_size=1)
        self.conv5_2_2 = BasicConv2d(64, 64, kernel_size=(7, 1), padding=(3, 0))
        self.conv5_2_3 = BasicConv2d(64, 64, kernel_size=(1, 7), padding=(0, 3))
        self.conv5_2_4 = BasicConv2d(64, 96, kernel_size=3)
        # conv3*3(192 stride2 valid)
        self.conv6 = BasicConv2d(192, 192, kernel_size=3, stride=2)
        # maxpool3*3(stride2 valid)
        self.maxpool6 = nn.MaxPool2d(kernel_size=3, stride=2)

    def forward(self, x):
        # shared trunk, computed once and fed to both downsampling branches
        y0 = self.conv3(self.conv2(self.conv1(x)))
        y1_1 = self.maxpool4(y0)
        y1_2 = self.conv4(y0)
        y1 = torch.cat([y1_1, y1_2], 1)
        y2_1 = self.conv5_1_2(self.conv5_1_1(y1))
        y2_2 = self.conv5_2_4(self.conv5_2_3(self.conv5_2_2(self.conv5_2_1(y1))))
        y2 = torch.cat([y2_1, y2_2], 1)
        y3_1 = self.conv6(y2)
        y3_2 = self.maxpool6(y2)
        y3 = torch.cat([y3_1, y3_2], 1)
        return y3

Full network

class Googlenetv4(nn.Module):
    def __init__(self):
        super(Googlenetv4, self).__init__()
        self.stem = Stem(3, 384)
        # each repeated block needs its own weights, so build separate instances
        # instead of calling one module several times
        self.icpA = nn.Sequential(*[InceptionA(384, 384) for _ in range(4)])
        self.redA = ReductionA(384, 1024, 192, 224, 256, 384)
        self.icpB = nn.Sequential(*[InceptionB(1024, 1024) for _ in range(7)])
        self.redB = ReductionB(1024, 1536)
        self.icpC = nn.Sequential(*[InceptionC(1536, 1536) for _ in range(3)])
        # kernel_size=8 assumes a 299 x 299 input, which yields an 8 x 8 feature map here
        self.avgpool = nn.AvgPool2d(kernel_size=8)
        # nn.Dropout takes the drop probability; a keep probability of 0.8 means p=0.2
        self.dropout = nn.Dropout(p=0.2)
        self.linear = nn.Linear(1536, 1000)

    def forward(self, x):
        # Stem Module
        out = self.stem(x)
        # InceptionA Module * 4
        out = self.icpA(out)
        # ReductionA Module
        out = self.redA(out)
        # InceptionB Module * 7
        out = self.icpB(out)
        # ReductionB Module
        out = self.redB(out)
        # InceptionC Module * 3
        out = self.icpC(out)
        # Average Pooling
        out = self.avgpool(out)
        out = out.view(out.size(0), -1)
        # Dropout
        out = self.dropout(out)
        # Linear: returns logits; softmax is left to the loss function or to inference code
        out = self.linear(out)
        return out
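The channel arguments passed between the modules can be cross-checked by summing the branch widths of each module. The sketch below is plain Python bookkeeping with numbers read off the PyTorch classes above; it does not run the network.

```python
# output channels of each branch, read off the PyTorch classes
branch_widths = {
    "InceptionA": [96, 96, 96, 96],
    "InceptionB": [128, 384, 256, 256],
    "InceptionC": [256, 256, 256, 256, 256, 256],
}
# widths the network expects, taken from the Googlenetv4 constructor arguments
expected = {"InceptionA": 384, "InceptionB": 1024, "InceptionC": 1536}

totals = {name: sum(widths) for name, widths in branch_widths.items()}

# reduction modules also pass their input through a max-pool branch
red_a = 384 + 384 + 256   # pool + conv(n) + conv(m)
red_b = 1024 + 192 + 320  # pool + two conv branches
print(totals, red_a, red_b)
```

Each Inception module keeps the channel count unchanged, so the width only grows in the two reduction modules (384 to 1024 to 1536).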

The code above is a PyTorch version. The official implementation is written in TensorFlow; the complete TensorFlow implementation of Inception-V4 follows.

# Copyright 2016 The TensorFlow Authors. All Rights Reserved.
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
# http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.
# ==============================================================================
"""Contains the definition of the Inception V4 architecture.

As described in http://arxiv.org/abs/1602.07261.

  Inception-v4, Inception-ResNet and the Impact of Residual Connections
    on Learning
  Christian Szegedy, Sergey Ioffe, Vincent Vanhoucke, Alex Alemi
"""
from __future__ import absolute_import
from __future__ import division
from __future__ import print_function

import tensorflow.compat.v1 as tf
import tf_slim as slim

from nets import inception_utils

def block_inception_a(inputs, scope=None, reuse=None):
  """Builds Inception-A block for Inception v4 network."""
  # By default use stride=1 and SAME padding
  with slim.arg_scope([slim.conv2d, slim.avg_pool2d, slim.max_pool2d],
                      stride=1, padding='SAME'):
    with tf.variable_scope(
        scope, 'BlockInceptionA', [inputs], reuse=reuse):
      with tf.variable_scope('Branch_0'):
        branch_0 = slim.conv2d(inputs, 96, [1, 1], scope='Conv2d_0a_1x1')
      with tf.variable_scope('Branch_1'):
        branch_1 = slim.conv2d(inputs, 64, [1, 1], scope='Conv2d_0a_1x1')
        branch_1 = slim.conv2d(branch_1, 96, [3, 3], scope='Conv2d_0b_3x3')
      with tf.variable_scope('Branch_2'):
        branch_2 = slim.conv2d(inputs, 64, [1, 1], scope='Conv2d_0a_1x1')
        branch_2 = slim.conv2d(branch_2, 96, [3, 3], scope='Conv2d_0b_3x3')
        branch_2 = slim.conv2d(branch_2, 96, [3, 3], scope='Conv2d_0c_3x3')
      with tf.variable_scope('Branch_3'):
        branch_3 = slim.avg_pool2d(inputs, [3, 3], scope='AvgPool_0a_3x3')
        branch_3 = slim.conv2d(branch_3, 96, [1, 1], scope='Conv2d_0b_1x1')
      return tf.concat(axis=3, values=[branch_0, branch_1, branch_2, branch_3])

def block_reduction_a(inputs, scope=None, reuse=None):
  """Builds Reduction-A block for Inception v4 network."""
  # By default use stride=1 and SAME padding
  with slim.arg_scope([slim.conv2d, slim.avg_pool2d, slim.max_pool2d],
                      stride=1, padding='SAME'):
    with tf.variable_scope(
        scope, 'BlockReductionA', [inputs], reuse=reuse):
      with tf.variable_scope('Branch_0'):
        branch_0 = slim.conv2d(inputs, 384, [3, 3], stride=2, padding='VALID',
                               scope='Conv2d_1a_3x3')
      with tf.variable_scope('Branch_1'):
        branch_1 = slim.conv2d(inputs, 192, [1, 1], scope='Conv2d_0a_1x1')
        branch_1 = slim.conv2d(branch_1, 224, [3, 3], scope='Conv2d_0b_3x3')
        branch_1 = slim.conv2d(branch_1, 256, [3, 3], stride=2,
                               padding='VALID', scope='Conv2d_1a_3x3')
      with tf.variable_scope('Branch_2'):
        branch_2 = slim.max_pool2d(inputs, [3, 3], stride=2, padding='VALID',
                                   scope='MaxPool_1a_3x3')
      return tf.concat(axis=3, values=[branch_0, branch_1, branch_2])

def block_inception_b(inputs, scope=None, reuse=None):
  """Builds Inception-B block for Inception v4 network."""
  # By default use stride=1 and SAME padding
  with slim.arg_scope([slim.conv2d, slim.avg_pool2d, slim.max_pool2d],
                      stride=1, padding='SAME'):
    with tf.variable_scope(
        scope, 'BlockInceptionB', [inputs], reuse=reuse):
      with tf.variable_scope('Branch_0'):
        branch_0 = slim.conv2d(inputs, 384, [1, 1], scope='Conv2d_0a_1x1')
      with tf.variable_scope('Branch_1'):
        branch_1 = slim.conv2d(inputs, 192, [1, 1], scope='Conv2d_0a_1x1')
        branch_1 = slim.conv2d(branch_1, 224, [1, 7], scope='Conv2d_0b_1x7')
        branch_1 = slim.conv2d(branch_1, 256, [7, 1], scope='Conv2d_0c_7x1')
      with tf.variable_scope('Branch_2'):
        branch_2 = slim.conv2d(inputs, 192, [1, 1], scope='Conv2d_0a_1x1')
        branch_2 = slim.conv2d(branch_2, 192, [7, 1], scope='Conv2d_0b_7x1')
        branch_2 = slim.conv2d(branch_2, 224, [1, 7], scope='Conv2d_0c_1x7')
        branch_2 = slim.conv2d(branch_2, 224, [7, 1], scope='Conv2d_0d_7x1')
        branch_2 = slim.conv2d(branch_2, 256, [1, 7], scope='Conv2d_0e_1x7')
      with tf.variable_scope('Branch_3'):
        branch_3 = slim.avg_pool2d(inputs, [3, 3], scope='AvgPool_0a_3x3')
        branch_3 = slim.conv2d(branch_3, 128, [1, 1], scope='Conv2d_0b_1x1')
      return tf.concat(axis=3, values=[branch_0, branch_1, branch_2, branch_3])

def block_reduction_b(inputs, scope=None, reuse=None):
  """Builds Reduction-B block for Inception v4 network."""
  # By default use stride=1 and SAME padding
  with slim.arg_scope([slim.conv2d, slim.avg_pool2d, slim.max_pool2d],
                      stride=1, padding='SAME'):
    with tf.variable_scope(
        scope, 'BlockReductionB', [inputs], reuse=reuse):
      with tf.variable_scope('Branch_0'):
        branch_0 = slim.conv2d(inputs, 192, [1, 1], scope='Conv2d_0a_1x1')
        branch_0 = slim.conv2d(branch_0, 192, [3, 3], stride=2,
                               padding='VALID', scope='Conv2d_1a_3x3')
      with tf.variable_scope('Branch_1'):
        branch_1 = slim.conv2d(inputs, 256, [1, 1], scope='Conv2d_0a_1x1')
        branch_1 = slim.conv2d(branch_1, 256, [1, 7], scope='Conv2d_0b_1x7')
        branch_1 = slim.conv2d(branch_1, 320, [7, 1], scope='Conv2d_0c_7x1')
        branch_1 = slim.conv2d(branch_1, 320, [3, 3], stride=2,
                               padding='VALID', scope='Conv2d_1a_3x3')
      with tf.variable_scope('Branch_2'):
        branch_2 = slim.max_pool2d(inputs, [3, 3], stride=2, padding='VALID',
                                   scope='MaxPool_1a_3x3')
      return tf.concat(axis=3, values=[branch_0, branch_1, branch_2])

def block_inception_c(inputs, scope=None, reuse=None):
  """Builds Inception-C block for Inception v4 network."""
  # By default use stride=1 and SAME padding
  with slim.arg_scope([slim.conv2d, slim.avg_pool2d, slim.max_pool2d],
                      stride=1, padding='SAME'):
    with tf.variable_scope(
        scope, 'BlockInceptionC', [inputs], reuse=reuse):
      with tf.variable_scope('Branch_0'):
        branch_0 = slim.conv2d(inputs, 256, [1, 1], scope='Conv2d_0a_1x1')
      with tf.variable_scope('Branch_1'):
        branch_1 = slim.conv2d(inputs, 384, [1, 1], scope='Conv2d_0a_1x1')
        branch_1 = tf.concat(axis=3, values=[
            slim.conv2d(branch_1, 256, [1, 3], scope='Conv2d_0b_1x3'),
            slim.conv2d(branch_1, 256, [3, 1], scope='Conv2d_0c_3x1')])
      with tf.variable_scope('Branch_2'):
        branch_2 = slim.conv2d(inputs, 384, [1, 1], scope='Conv2d_0a_1x1')
        branch_2 = slim.conv2d(branch_2, 448, [3, 1], scope='Conv2d_0b_3x1')
        branch_2 = slim.conv2d(branch_2, 512, [1, 3], scope='Conv2d_0c_1x3')
        branch_2 = tf.concat(axis=3, values=[
            slim.conv2d(branch_2, 256, [1, 3], scope='Conv2d_0d_1x3'),
            slim.conv2d(branch_2, 256, [3, 1], scope='Conv2d_0e_3x1')])
      with tf.variable_scope('Branch_3'):
        branch_3 = slim.avg_pool2d(inputs, [3, 3], scope='AvgPool_0a_3x3')
        branch_3 = slim.conv2d(branch_3, 256, [1, 1], scope='Conv2d_0b_1x1')
      return tf.concat(axis=3, values=[branch_0, branch_1, branch_2, branch_3])

def inception_v4_base(inputs, final_endpoint='Mixed_7d', scope=None):
  """Creates the Inception V4 network up to the given final endpoint.

  Args:
    inputs: a 4-D tensor of size [batch_size, height, width, 3].
    final_endpoint: specifies the endpoint to construct the network up to.
      It can be one of [ 'Conv2d_1a_3x3', 'Conv2d_2a_3x3', 'Conv2d_2b_3x3',
      'Mixed_3a', 'Mixed_4a', 'Mixed_5a', 'Mixed_5b', 'Mixed_5c', 'Mixed_5d',
      'Mixed_5e', 'Mixed_6a', 'Mixed_6b', 'Mixed_6c', 'Mixed_6d', 'Mixed_6e',
      'Mixed_6f', 'Mixed_6g', 'Mixed_6h', 'Mixed_7a', 'Mixed_7b', 'Mixed_7c',
      'Mixed_7d']
    scope: Optional variable_scope.

  Returns:
    logits: the logits outputs of the model.
    end_points: the set of end_points from the inception model.

  Raises:
    ValueError: if final_endpoint is not set to one of the predefined values,
  """
  end_points = {}

  def add_and_check_final(name, net):
    end_points[name] = net
    return name == final_endpoint

  with tf.variable_scope(scope, 'InceptionV4', [inputs]):
    with slim.arg_scope([slim.conv2d, slim.max_pool2d, slim.avg_pool2d],
                        stride=1, padding='SAME'):
      # 299 x 299 x 3
      net = slim.conv2d(inputs, 32, [3, 3], stride=2,
                        padding='VALID', scope='Conv2d_1a_3x3')
      if add_and_check_final('Conv2d_1a_3x3', net): return net, end_points
      # 149 x 149 x 32
      net = slim.conv2d(net, 32, [3, 3], padding='VALID',
                        scope='Conv2d_2a_3x3')
      if add_and_check_final('Conv2d_2a_3x3', net): return net, end_points
      # 147 x 147 x 32
      net = slim.conv2d(net, 64, [3, 3], scope='Conv2d_2b_3x3')
      if add_and_check_final('Conv2d_2b_3x3', net): return net, end_points
      # 147 x 147 x 64
      with tf.variable_scope('Mixed_3a'):
        with tf.variable_scope('Branch_0'):
          branch_0 = slim.max_pool2d(net, [3, 3], stride=2, padding='VALID',
                                     scope='MaxPool_0a_3x3')
        with tf.variable_scope('Branch_1'):
          branch_1 = slim.conv2d(net, 96, [3, 3], stride=2, padding='VALID',
                                 scope='Conv2d_0a_3x3')
        net = tf.concat(axis=3, values=[branch_0, branch_1])
        if add_and_check_final('Mixed_3a', net): return net, end_points

      # 73 x 73 x 160
      with tf.variable_scope('Mixed_4a'):
        with tf.variable_scope('Branch_0'):
          branch_0 = slim.conv2d(net, 64, [1, 1], scope='Conv2d_0a_1x1')
          branch_0 = slim.conv2d(branch_0, 96, [3, 3], padding='VALID',
                                 scope='Conv2d_1a_3x3')
        with tf.variable_scope('Branch_1'):
          branch_1 = slim.conv2d(net, 64, [1, 1], scope='Conv2d_0a_1x1')
          branch_1 = slim.conv2d(branch_1, 64, [1, 7], scope='Conv2d_0b_1x7')
          branch_1 = slim.conv2d(branch_1, 64, [7, 1], scope='Conv2d_0c_7x1')
          branch_1 = slim.conv2d(branch_1, 96, [3, 3], padding='VALID',
                                 scope='Conv2d_1a_3x3')
        net = tf.concat(axis=3, values=[branch_0, branch_1])
        if add_and_check_final('Mixed_4a', net): return net, end_points

      # 71 x 71 x 192
      with tf.variable_scope('Mixed_5a'):
        with tf.variable_scope('Branch_0'):
          branch_0 = slim.conv2d(net, 192, [3, 3], stride=2, padding='VALID',
                                 scope='Conv2d_1a_3x3')
        with tf.variable_scope('Branch_1'):
          branch_1 = slim.max_pool2d(net, [3, 3], stride=2, padding='VALID',
                                     scope='MaxPool_1a_3x3')
        net = tf.concat(axis=3, values=[branch_0, branch_1])
        if add_and_check_final('Mixed_5a', net): return net, end_points

      # 35 x 35 x 384
      # 4 x Inception-A blocks
      for idx in range(4):
        block_scope = 'Mixed_5' + chr(ord('b') + idx)
        net = block_inception_a(net, block_scope)
        if add_and_check_final(block_scope, net): return net, end_points

      # 35 x 35 x 384
      # Reduction-A block
      net = block_reduction_a(net, 'Mixed_6a')
      if add_and_check_final('Mixed_6a', net): return net, end_points

      # 17 x 17 x 1024
      # 7 x Inception-B blocks
      for idx in range(7):
        block_scope = 'Mixed_6' + chr(ord('b') + idx)
        net = block_inception_b(net, block_scope)
        if add_and_check_final(block_scope, net): return net, end_points

      # 17 x 17 x 1024
      # Reduction-B block
      net = block_reduction_b(net, 'Mixed_7a')
      if add_and_check_final('Mixed_7a', net): return net, end_points

      # 8 x 8 x 1536
      # 3 x Inception-C blocks
      for idx in range(3):
        block_scope = 'Mixed_7' + chr(ord('b') + idx)
        net = block_inception_c(net, block_scope)
        if add_and_check_final(block_scope, net): return net, end_points
  raise ValueError('Unknown final endpoint %s' % final_endpoint)

def inception_v4(inputs, num_classes=1001, is_training=True,
                 dropout_keep_prob=0.8,
                 reuse=None,
                 scope='InceptionV4',
                 create_aux_logits=True):
  """Creates the Inception V4 model.

  Args:
    inputs: a 4-D tensor of size [batch_size, height, width, 3].
    num_classes: number of predicted classes. If 0 or None, the logits layer
      is omitted and the input features to the logits layer (before dropout)
      are returned instead.
    is_training: whether is training or not.
    dropout_keep_prob: float, the fraction to keep before final layer.
    reuse: whether or not the network and its variables should be reused. To be
      able to reuse 'scope' must be given.
    scope: Optional variable_scope.
    create_aux_logits: Whether to include the auxiliary logits.

  Returns:
    net: a Tensor with the logits (pre-softmax activations) if num_classes
      is a non-zero integer, or the non-dropped input to the logits layer
      if num_classes is 0 or None.
    end_points: the set of end_points from the inception model.
  """
  end_points = {}
  with tf.variable_scope(
      scope, 'InceptionV4', [inputs], reuse=reuse) as scope:
    with slim.arg_scope([slim.batch_norm, slim.dropout],
                        is_training=is_training):
      net, end_points = inception_v4_base(inputs, scope=scope)

      with slim.arg_scope([slim.conv2d, slim.max_pool2d, slim.avg_pool2d],
                          stride=1, padding='SAME'):
        # Auxiliary Head logits
        if create_aux_logits and num_classes:
          with tf.variable_scope('AuxLogits'):
            # 17 x 17 x 1024
            aux_logits = end_points['Mixed_6h']
            aux_logits = slim.avg_pool2d(aux_logits, [5, 5], stride=3,
                                         padding='VALID',
                                         scope='AvgPool_1a_5x5')
            aux_logits = slim.conv2d(aux_logits, 128, [1, 1],
                                     scope='Conv2d_1b_1x1')
            aux_logits = slim.conv2d(aux_logits, 768,
                                     aux_logits.get_shape()[1:3],
                                     padding='VALID', scope='Conv2d_2a')
            aux_logits = slim.flatten(aux_logits)
            aux_logits = slim.fully_connected(aux_logits, num_classes,
                                              activation_fn=None,
                                              scope='Aux_logits')
            end_points['AuxLogits'] = aux_logits

        # Final pooling and prediction
        # TODO(sguada,arnoegw): Consider adding a parameter global_pool which
        # can be set to False to disable pooling here (as in resnet_*()).
        with tf.variable_scope('Logits'):
          # 8 x 8 x 1536
          kernel_size = net.get_shape()[1:3]
          if kernel_size.is_fully_defined():
            net = slim.avg_pool2d(net, kernel_size, padding='VALID',
                                  scope='AvgPool_1a')
          else:
            net = tf.reduce_mean(
                input_tensor=net,
                axis=[1, 2],
                keepdims=True,
                name='global_pool')
          end_points['global_pool'] = net
          if not num_classes:
            return net, end_points
          # 1 x 1 x 1536
          net = slim.dropout(net, dropout_keep_prob, scope='Dropout_1b')
          net = slim.flatten(net, scope='PreLogitsFlatten')
          end_points['PreLogitsFlatten'] = net
          # 1536
          logits = slim.fully_connected(net, num_classes, activation_fn=None,
                                        scope='Logits')
          end_points['Logits'] = logits
          end_points['Predictions'] = tf.nn.softmax(logits, name='Predictions')
    return logits, end_points
inception_v4.default_image_size = 299

inception_v4_arg_scope = inception_utils.inception_arg_scope

References:

[Close reading of AI papers] Inception V4 and Inception-ResNet image classification algorithms (Bilibili)

Inception-v4 (GoogLeNet-v4) model framework (PyTorch), Leung WaiHo's blog (CSDN)