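- Setting up a Python environment with Anaconda in PyCharm: a tutorial on configuring an Anaconda environment in PyCharm

weixin_39796652

This article walks through configuring an Anaconda environment in PyCharm, shared here for reference. Deploying an Anaconda environment in PyCharm. PyCharm: a Python editor (Community Edition). Anaconda: an open-source Python distribution focused on data analysis that bundles a large number of scientific packages. Basic environment commands (preparation): `conda --version` shows the Anaconda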

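- Python Poetry: adding a specific branch of a Git repository

waketzheng

git

I'm not sure how to do this from the command line, but it can be done by editing pyproject.toml. Example: `pypika-tortoise = {git = "https://github.com/henadzit/pypika-tortoise", branch = "do-not-use-builder"}` Reference: WIP Do not copy pypika query by henadzit · Pull Request #1851 · tortoise/torto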

- The following modules are *disabled* in configure script: _sqlite3

waketzheng

python

Unable to upgrade past 3.6.9 - #24 by Rosuav - Python Help - Discussions on Python.org. `sudo apt install libsqlite3-dev` `cd Python-3.13.1` `./configure --enable-optimizations --enable-loadable-sqlite-extensions` `make` `sudo make altins`

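- A fast, efficient guide to deploying DeepSeek locally with a visual chat interface

大富大贵7

Programmer knowledge base 1, Programmer knowledge base 2, Programmer knowledge base 3, Experience sharing

Tech article: a fast, efficient guide to deploying DeepSeek locally with a visual chat interface. Abstract: As natural language processing (NLP) advances, DeepSeek, described here as a deep-learning-based semantic search technology, is widely used in text understanding, dialogue systems, information retrieval, and other areas. This article explores how to deploy DeepSeek locally quickly and efficiently, and how to combine it with visualization tools to monitor and analyze conversations. Through detailed steps, case studies, and code examples, it helps developers better understand and apply DeepSeek. In addition, this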

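- Installing Ta-lib 0.6.x for Python on CentOS 7 [talib cannot be installed directly; the ta_lib C++ library must be installed first]

weixin_43343144

Server operations

Standard procedure: "Installing Ta-lib for Python on CentOS 7 [talib cannot be installed directly; the ta_lib C++ library must be installed first]" (CSDN blog). For other versions, see the following. Official documentation: ta-lib · PyPI. You must download the matching release [ta-lib-0.6.4-src.tar.gz] for the installation to succeed. `$ wget https://github.com/ta-lib/ta-lib/releases/do`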

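- [Kivy App] What is Pyjnius?

Botiway

Mobile apps, Kivy, python

Pyjnius is a Python library for accessing Java classes and methods from Python; it is especially suited to calling Android APIs from Kivy or other Python applications. The following covers what Pyjnius is and how to install and use it. 1. What is Pyjnius? Pyjnius is a Python-to-Java bridge that lets Python code call Java classes and methods directly. It is built on the Java Native Interface (JNI) and is mainly used in the following scenarios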

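- A PyQt5-based Python program for timed camera image capture, with a GUI packaged to run standalone

夏时summer time

python qt digital camera camera

A timed and manual camera image capture program written with Python and PyQt5. Versions: Python 3.6, pyqt5 5.15.4, pyqt5-tools 5.15.4.3.2, plus the commonly used cv2 and numpy packages. `from PyQt5 import QtCore, QtGui, QtWidgets` `import cv2` `import numpy as np` `from datetime`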

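- "AI Medical System Development in Practice", Issue 6: building an intelligent triage system

骆驼_代码狂魔

Programmer's toolkit, artificial intelligence, django, python, neo4j, knowledge graph

Follow me; all later articles will be freely available as we advance AI in healthcare together. Core topic: how do you build an intelligent triage system with 95% accuracy? Technical breakthrough: a hybrid model design that combines BERT with a knowledge graph. 1. Triage architecture design. python: BERT-based intent recognition model (PyTorch). `from transformers import BertTokenizer, BertForSequenceClassification` `import torch` `class TriageMod`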

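- How should a quantitative trading system handle training and deploying machine learning models?

openwin_top

Quantitative trading system development, machine learning, artificial intelligence, quantitative trading

In a quantitative trading system, training and deploying machine learning models must follow a rigorous process to ensure the models' reliability, performance, and security. A detailed description with examples follows. 1. Data collection and preprocessing. Data collection: in quantitative trading, data is the most important asset. The collected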

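- Downloading and installing Python on a Mac

小小酥*

Download Python. Official site: https://www.python.org/. On the site, click Download and choose the Mac OS X version. 2. Installation: macOS generally ships with a Python 2.x environment; you can also download the latest version from https://www.python.org/downloads/mac-osx/ and install it. 3. Setting environment variables: programs and executables can live in many directories, and those paths may well not be in the executable search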

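- Uploading and downloading with minIO in Python

身似山河挺脊梁

python

Prerequisites: VS Code + Python 3.9. minIO provides Python examples. 1. Generate a temporary file in Python 2. Write some data 3. Upload to minIO 4. Get a shareable link 5. Send a notification. `# Create a client` `minioClient = Minio(endpoint='xx', access_key='xx', secret_key='xx', secure=False)` `# Generate the file name` `current_datetime = datetime.dat`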

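- Understanding Python context managers in depth

……-……

python programming language

Contents: 1. What is a context manager? 2. The magic of the with statement 3. Two ways to create a context manager (3.1 Class-based implementation, 3.2 Using the contextlib module) 4. Exception handling. 1. What is a context manager? A context manager is Python's mechanism for allocating and releasing resources at precisely defined points. It implements the context management protocol through the two magic methods `__enter__()` and `__exit__()`, ensuring that resources are cleaned up correctly even when the code raises an error. # Classic file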
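The excerpt above stops before its own example, so here is a minimal sketch of the two approaches it lists (class-based and `contextlib`). The names `ManagedResource`, `managed_resource`, and `demo` are illustrative assumptions, not taken from the article.

```python
from contextlib import contextmanager


class ManagedResource:
    """Class-based context manager: __enter__ acquires, __exit__ releases."""

    def __init__(self, name):
        self.name = name
        self.events = []  # records the lifecycle so the order is visible

    def __enter__(self):
        self.events.append("acquire")
        return self

    def __exit__(self, exc_type, exc_value, traceback):
        # Runs even when the with-body raises; returning False re-raises.
        self.events.append("release")
        return False


@contextmanager
def managed_resource(events):
    """Generator-based equivalent built with contextlib (hypothetical helper)."""
    events.append("acquire")
    try:
        yield events
    finally:
        # The finally clause plays the role of __exit__.
        events.append("release")


def demo():
    res = ManagedResource("demo")
    try:
        with res:
            raise ValueError("simulated failure")
    except ValueError:
        pass  # the resource was still released before we got here

    log = []
    with managed_resource(log):
        log.append("work")
    return res.events, log
```

Calling `demo()` shows that the release step runs in both styles, including when the `with` body raises.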

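- [Appium] Conquering Android automation with Appium: an open-source tool with 10.5k+ GitHub stars, explained through hands-on Python code!

山河不见老

python testing appium android automation

Appium. Contents: 1. Why are developers all using Appium? 2. Environment setup: configured in 5 minutes (2.1 Core toolchain, 2.2 Connecting an Android device) 3. Hands-on scripting: writing automation from scratch (3.1 Example 1: log in to WeChat automatically and send a message, 3.2 Example 2: dynamic screen swiping and data scraping) 4. Pitfalls to avoid (4.1 Optimizing element location, 4.2 Improving stability, 4.3 Cloud device integration) 5. Ecosystem: automation beyond Android. 1. Why are developers all using Appium? Star-certified: more than 10.5k GitHub stars, and an active community that keeps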

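- An audio processing example built with Streamlit

大霸王龙

Audio/video ffmpeg

An audio processing example built with Streamlit, covering recording, speech-to-text, file download, and progress display, and combining several technical approaches. 1. Environment setup: `# Install dependencies` `pip install streamlit streamlit-webrtc audio-recorder-streamlit openai-whisper python-dotx` 2. Complete example code: `import streamlit as st` `from audio_recorder_stre`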

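- npm error, gyp error: wrong VS version, incompatible msvs_version

澎湖Java架构师

Front end html npm node.js front end

npm error, gyp error, wrong VS version, incompatible msvs_version. Contents: Windows SDK errors; Running the GYP update command; Option 1; Option 2. Running the GYP update command: `npm install -g node-gyp`. The latest GYP no longer appears to support Python 2.7, and npm will prompt you to upgrade to a 3.*.* version. When installing Node.js, be sure to tick the following option, which automatically detects and installs the missing environment. Option 1: run CMD (PowerShell also works) as administrator and run the update tool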

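- A closer look at ArangoDB graph database applications and Python practice

eahba

Databases python programming language

In today's data-driven era, demand for efficient processing and analysis of connected data keeps growing. ArangoDB, a scalable graph database system, speeds up getting value from connected data. This article shows how to connect to and operate ArangoDB from Python, and how to combine it with a graph question-answering chain to gain insights from the data. Technical background: ArangoDB is a multi-model database that supports document, graph, and key-value storage. Its strong graph storage and query capabilities make it an ideal choice for handling complex data relationships. Through JSON support and a single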

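- Can you learn to program without knowing English?

P5688346

Artificial intelligence

Hello everyone. Today's topic is whether you can learn Python programming with weak English, something many people are unsure about. A detailed explanation follows. Source code download: source code for this article. Any discussion of artificial intelligence has to mention the Python programming language. Most people assume a programming language means lots of code and screens full of English letters, which gives them a headache at the mere thought, and they conclude that without English they cannot learn Python well. So can you learn Python without English? Let's take a look. In fact, if you want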

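- 1. Python fundamentals

MeyrlNotFound

python programming language

1. Introduction to Python and environment setup • Learn about Python's history, features, and application areas. History of Python: Python is a high-level programming language created by Guido van Rossum, who began work on it in 1989. Its design goal is code that is easy to read, write, and maintain, which improves development efficiency and code quality. Since its creation, Python has grown from a simple system administration tool into a language used widely across many fields. Features of Python: 1. Easy to learn: Python's syntax is concise and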

- npm error gyp info

计算机辅助工程

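npm front end node.js

When installing Node.js packages with npm you may run into various errors, and gyp errors are among the more common. gyp (used through node-gyp) is a build tool that Node.js uses to compile C++ code. These errors usually occur with npm packages that need to compile native modules. Some common causes and fixes follow. Python not installed or incompatible version: node-gyp uses Python to run gyp. Make sure Python is installed on your system and that its version is compatible with node-gyp. The usual recommendation is Pyt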

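- Stock quantitative trading development with yfinance

数字化转型2025

python programming language

Below is a Python stock quantitative analysis script that covers data retrieval, technical indicator calculation, strategy backtesting, and visualization: python `import yfinance as yf` `import pandas as pd` `import numpy as np` `import matplotlib.pyplot as plt` `import seaborn as sns` `from backtesting import Backtest, Strategy` `from backtesti`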

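- sqlmap notes

君如尘

Network security, penetration testing notes, notes

1. Runtime environment: sqlmap is written in Python, so first make sure Python is installed on your system. sqlmap supports Python 2.6, 2.7, and Python 3.4 and above. 2. Common commands. General form: `python sqlmap.py -r <injection target> --<option>`. `-r` POST request; `-u` GET request; `--level=` test level; `--risk=` test risk; `-v` verbosity level; `-p` inject through a specific parameter; `--threads` change the thread count to speed things up; `--ba`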

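- uv, a Python environment deployment tool

Honnnnnn

uv

Taking the previously used pipenv as an example: generate a requirements file from Pipfile.lock, convert the requirements file into pyproject.toml, and finally generate uv.lock. Export requirements.txt from the current virtual environment: `pip freeze > requirements.txt` (if the project did not use an env but a plain requirements.txt file, skip the requirements conversion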

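- leetcode-hot100-python, topic 3: sliding window

༺ Dorothy ༻

leetcode hot100 leetcode python algorithms

1. Longest Substring Without Repeating Characters (Medium). Given a string s, find the length of the longest substring that contains no repeated characters. Example 1: Input: s = "abcabcbb" Output: 3. Explanation: the longest substring without repeating characters is "abc", so its length is 3. Example 2: Input: s = "bbbbb" Output: 1. Explanation: the longest substring without repeating characters is "b", so its length is 1. Example 3: Input: s = "pwwkew" Output: 3. Explanation: the longest substring without repeating characters is "wke", so its length is 3. Note that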
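The excerpt ends before any solution is shown. One common sliding-window approach is sketched below; it is not necessarily the article's own code, and the function name `length_of_longest_substring` is an assumption.

```python
def length_of_longest_substring(s: str) -> int:
    """Length of the longest substring of s with no repeated character."""
    last_seen = {}  # character -> index of its most recent occurrence
    left = 0        # left edge of the current window (inclusive)
    best = 0
    for right, ch in enumerate(s):
        # If ch already occurs inside the window, move the left edge past it.
        if ch in last_seen and last_seen[ch] >= left:
            left = last_seen[ch] + 1
        last_seen[ch] = right
        best = max(best, right - left + 1)
    return best
```

Each character is visited once, so the running time is linear in the length of the string.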

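- Python uv: install, upgrade, uninstall

云客Coder

python uv programming language

Contents: Install; Verify; Upgrade; Set up autocompletion; Uninstall; uv commands. See the official documentation: https://docs.astral.sh/uv/getting-started/installation/ Install: `pip install uv`. Verify: after installing, run the following command to check that the installation succeeded: `uv --version`. `% uv --version` `uv 0.6.3 (a0b9f22a2 2025-02-24)` Upgrade: `uv self update` will re-run the installer and may modify your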

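- Building a decentralized prediction market with Python: from concept to implementation

Echo_Wish

Python in practice! python decentralization programming language

Building a decentralized prediction market with Python: from concept to implementation. Hello everyone, I'm Echo_Wish. Today we'll dig into a cutting-edge blockchain application, the decentralized prediction market, and learn how to build a simple prediction market platform in Python. A prediction market is a place where outcomes emerge from participants' predictions about future events; it is commonly used to forecast political events, financial market trends, sports results, and so on. Traditional prediction markets such as Augur and Polymarket are built on decentralized platforms and use blockchain technology to ensure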

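- A Python program for automatic login and logout on the Nanjing University of Science and Technology (NJUST) campus network

JimesMz

python programming language

The program in this article was developed for the NJUST and NJUST-FREE campus networks of Nanjing University of Science and Technology; it will not work at other schools. Contents: Purpose; Usage; References. Purpose: today I suddenly wanted to automate campus network login in code, so I searched online. Zhihu has some tutorials and CSDN has some complete code, but neither following the tutorials nor running the existing code got me logged in. The NJUST campus network is also paid, and I wanted a "logout" feature, so I wrote my own with help from Kimi. My skills are limited, so the focus is on getting it working. Usage: please make sure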

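- Python web scraping notes, part 1 (from a MOOC): getting started with the Requests library

小灰不停前进

#Python python pycharm web scraping

Python web scraping notes, part 1. General code framework: `import requests` `def getHTMLText(url):` `try:` `r = requests.get(url, timeout=30)` `r.raise_for_status()  # raises HTTPError for 4xx/5xx status codes` `r.encoding = r.apparent_encoding` `return r.text` `except:` `return "An exception occurred"` `if __name_`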

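- Calling the fofa API from Python and writing the results to a CSV file

YOHO !GIRL

Network mapping python network security

Contents: Preface; 1. Purpose; 2. Research; 3. Writing the code (1. Importing libraries, 2. Reading data, 3. Writing to a CSV file); Summary. Preface: the previous article covered several of the current mainstream network detection systems and briefly introduced how to use their web pages. Link: "A collection of cyberspace mapping engines: Zoomeye, fofa, 360, shodan, censys, 鹰图". However, when we need to do secondary development against a single engine, the web pages no longer meet our needs, and we have to consult the API documentation for some simple data processing. Next, I'll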

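- SenseVoice deployment notes

安静六角

Open-source software

I recently tried SenseVoice (an open-source speech-to-text model from an Alibaba team). The results are good, it can be deployed locally, it has a web UI, and transcription quality held up in a test of more than ten thousand characters. First set up conda and CUDA; other articles cover that. Steps: 1. Create a virtual environment: `conda create -n mainenv python=3.10` 2. Then install the dependencies: `conda activate mainenv` `pip install -r C:\Users\xx\Documents\P`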

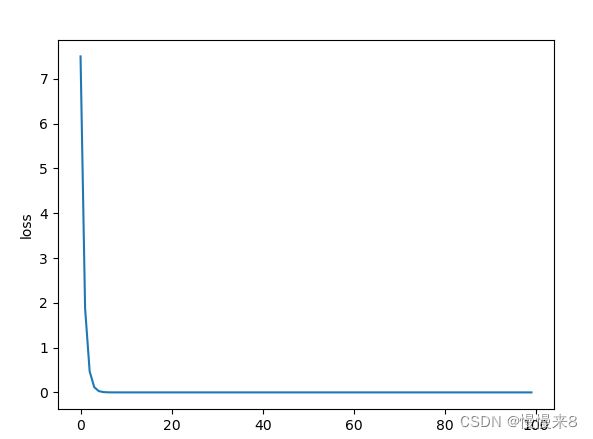

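- Research and implementation of deep-learning-based animal image recognition in Python

Java老徐

Python graduation project, python, deep learning, programming language, deep-learning animal image recognition, Python animal image recognition

About the author: ✌Programmer Brother Xu, 7 years at major tech companies. 120k+ followers across platforms, CSDN blog expert, recognized author on Juejin, Huawei Cloud, Alibaba Cloud, InfoQ, and other platforms, focused on Java and hands-on graduation projects✌ Contact details for the source code are at the end of the article. Recommended columns, subscribe so you can find them next time: the most complete list of computer software graduation project topics for 2022-2024: 1000 popular topics ✅ Java project showcase "100 sets"; Java WeChat mini-program projects "100 sets". If you are interested, bookmark it first, and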

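- The collections framework

天子之骄

java data structures collections framework

The collections framework

The collections framework can be thought of as a container; it mainly covers abstract data structures such as maps, sets, arrays, and lists.

In essence, the Java collections framework consists mainly of interfaces for operating on objects. Different interfaces describe different data types.

Brief introduction:

The Collection interface is the most basic interface; List and Set derive from it, and List in turn has LinkLi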

- A Table Driven method example

bijian1013

java enum Table Driven

Example 1:

/**

* Driver age bands

* In the insurance industry, drivers are classified into age bands

* Driver age bands: 01-[18,25); 02-[25,30); 03-[30,35); 04-[35,40); 05-[40,45); 06-[45,50); 07-[50,55); 08-[55,+∞)


*/

public class AgePeriodTest {

//if...el
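
The Java class above is cut off before the table-driven version appears. As a rough illustration of the idea in Python, the sketch below replaces an if/else chain with a lookup table of band lower bounds. The names `LOWER_BOUNDS`, `CODES`, and `age_period`, and the choice to return `None` for ages under 18, are assumptions rather than the article's code.

```python
from bisect import bisect_right

# Lower bound of each age band in ascending order; CODES lines up by index.
# Bands are half-open: [18,25) -> "01", ..., [55, +inf) -> "08".
LOWER_BOUNDS = [18, 25, 30, 35, 40, 45, 50, 55]
CODES = ["01", "02", "03", "04", "05", "06", "07", "08"]


def age_period(age):
    """Return the band code for a driver's age via table lookup."""
    # bisect_right counts how many lower bounds are <= age.
    idx = bisect_right(LOWER_BOUNDS, age) - 1
    if idx < 0:
        return None  # under 18: not covered by the table (assumed behaviour)
    return CODES[idx]
```

Adding or changing a band then means editing the two tables, not the control flow.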

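- jQuery summary

cuishikuan

java jquery Ajax Web jquery methods

1. The `$.trim` method removes extra whitespace from the start and end of a string, e.g. `$.trim(' Hello ') // Hello` 2. The `$.contains` method returns a boolean indicating whether one DOM element (the second argument) is a descendant of another DOM element (the first argument), e.g. `$.contains(document.documentElement, document.body);` 3. $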

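- How the concept of object orientation came about

麦田的设计者

java object-oriented procedural

In object orientation, everything revolves around objects: organizing code and encapsulating data.

In Taiwan, object-oriented programming is translated as 面向物件 programming (物件 meaning "thing"), which shows how strongly this style of programming emphasizes entities.

Drawing on the history of programming languages, let's talk about procedural and object-oriented programming.

The C language was created at Bell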

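- Linux network interface bonding

被触发

linux

I just installed RedHat Linux Enterprise AS 4 on an IBM Xserver machine and configured dual-NIC bonding to improve network reliability.

1. Environment

My RedHat Linux Enterprise AS 4 machine has a dual-port Intel gigabit NIC; `ifconfig -a` shows two interfaces, eth0 and eth1.

2. Dual-NIC bonding steps:

2.1 Edit /etc/sysconfig/network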

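- XML basic syntax

肆无忌惮_

xml

1. What is XML?

XML stands for Extensible Markup Language. It closely resembles HTML. XML's purpose is to transport data rather than display it. XML tags are not predefined; you define your own. XML is designed to be self-describing. It is a W3C recommendation.

2. Why learn XML?

To solve the problem of data exchange formats between programs

To serve as configuration files

To act as a small database

3. XML and HTM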

- Adding your favorite fonts to a web page

知了ing

fonts stopwatch css

@font-face {

font-family: miaobiao; /* name given to the font */

font-style: normal;

font-weight: 400;

src: url('font/DS-DIGI-e.eot'); /* font file */

}

Usage:

<label style="font-size:18px;font-famil

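- A Redis range query application: finding the city for an IP address

矮蛋蛋

redis

Original article:

http://www.tuicool.com/articles/BrURbqV

Requirement

Find the city that corresponds to an IP address

Previous solution

Oracle table (ip_country):

Looking up the city for an IP:

1. Convert an IP in a.b.c.d form to a number; for example, 210.21.224.34 becomes 3524648994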

2. select city from ip_
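
Step 1 above, turning a dotted IPv4 address into a single integer, can be sketched in Python as follows. The function names are illustrative assumptions; the standard-library `ipaddress` module does the conversion, and the manual version shows the arithmetic behind it.

```python
import ipaddress


def ip_to_int(ip: str) -> int:
    """Convert a dotted IPv4 string to its 32-bit integer value."""
    return int(ipaddress.IPv4Address(ip))


def ip_to_int_manual(ip: str) -> int:
    """Same conversion by hand: a*2^24 + b*2^16 + c*2^8 + d."""
    a, b, c, d = (int(part) for part in ip.split("."))
    return (a << 24) | (b << 16) | (c << 8) | d
```

For the address in the text, both functions give 3524648994, matching the value quoted above.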

- Computing a percentage from two input integers

alleni123

java

public static String getPercent(int x, int total){

double result=(x*1.0)/(total*1.0);

System.out.println(result);

DecimalFormat df1=new DecimalFormat("0.0000%");

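- Baihe: how to learn a programming language

百合不是茶

java mobile development

For someone who has never touched a programming language, starting straight away with object orientation is hard: even if you accept it mentally, I don't think your intuition can keep up, and struggling is perfectly normal. However much the teacher explains and however much you listen in class, I think you still won't learn it. The main reason is that you have never thought about how to go about learning a programming language. I remember that in my first year, when Kingsoft Network came to recruit at 湖大, a student from our school who had only been at university for a few days was hired by Kingsoft Network. Someone who has just arrived at university can go and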

- Starting Tomcat automatically at boot on Linux

bijian1013

tomcat

Method 1:

Change Tomcat/bin/startup.sh to:

export JAVA_HOME=/home/java1.6.0_27

export CLASSPATH=$CLASSPATH:$JAVA_HOME/lib/tools.jar:$JAVA_HOME/lib/dt.jar:.

export PATH=$JAVA_HOME/bin:$PATH

export CATALINA_H

- A Spring AOP example

bijian1013

java spring AOP

1.AdviceMethods.java

package com.bijian.study.spring.aop.schema;

public class AdviceMethods {

public void preGreeting() {

System.out.println("--how are you!--");

}

}

2.beans.x

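- [Gson 8] The GsonBuilder serialization and deserialization option enableComplexMapKeySerialization

bit1129

serialization

What the enableComplexMapKeySerialization option means

When Gson serializes a Map, by default it calls the key's toString method to obtain the key of the JSON string. For simple types and strings this is fine, but for complex data objects, if the object does not override toString, the default toString yields a string built from the class name and the object's hash code.

GsonBuilder is used to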

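- [Spark 91] Issues worth noting when integrating Spark Streaming with Kafka

bit1129

Stream

For real-time computation, Spark Streaming included, data reliability comes in three levels:

1. At most once: data is received at most once, and may not be received at all

2. At least once: data is received at least once, and may be received more than once

3. Exactly once: data is guaranteed to be processed, and processed only once

For details, read this several times: http://spark.apache.org/docs/lates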

- A shell script for batch-checking whether ports are in use

ronin47

#!/bin/bash

cat ports | while read line

do

# nc -z -w 10 $line

nc -z -w 2 $line 58422 >/dev/null 2>&1

if [ $? -eq 0 ]; then

echo $line:ok

else

echo $line:fail

fi

done

Here ports can be a file

- java-2. Designing a stack with a min function

bylijinnan

java

For the detailed approach, see: http://zhedahht.blog.163.com/blog/static/25411174200712895228171/

import java.util.ArrayList;

import java.util.List;

public class MinStack {

//maybe we can use origin array rathe

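- Netty source code study: ChannelHandler

bylijinnan

java netty

Generally speaking, a "stateful" ChannelHandler should not be "shared", while a "stateless" ChannelHandler may be "shared".

For example, ObjectEncoder is "shared", but ObjectDecoder is not,

because on each call to the decode method the data may not have been fully received (incomplete);

only by "accumulating" it with the data received in the previous decode call can it possibly form complete data, so it is "stateful".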

p

- Generating random numbers in Java

cngolon

java

Method 1:

/**

* Generate a random number

* @author

[email protected]

* @return

*/

public synchronized static String getChargeSequenceNum(String pre){

StringBuffer sequenceNum = new StringBuffer();

Date dateTime = new D

- Reading and writing massive data with POI

ctrain

Massive data

import java.io.FileOutputStream;

import java.io.OutputStream;

import org.apache.poi.xssf.streaming.SXSSFRow;

import org.apache.poi.xssf.streaming.SXSSFSheet;

import org.apache.poi.xssf.streaming

- MySQL date formatting: using date_format in detail

daizj

mysql date_format date format conversion date formatting

Detailed usage of the date conversion function

DATE_FORMAT(date,format) Formats the date value according to the format string. The following specifiers may be used in the format string. The

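- A programmer shares 8 years of development experience

dcj3sjt126com

Programmers

Many people in China think IT is a career for the young, and that after 30 there is little chance of further development. In reality that is not the case. I have been doing .NET and Java development for 8 years, and here I'd like to draw on my own experience and discuss this with you.

Be clear about why you are entering the field

Many people go into IT because of the "high pay": once you learn a bit of HTML and DIV+CSS, becoming a page developer is not hard, and being a page developer is more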

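- A fade-in/fade-out effect for an Android welcome screen

dcj3sjt126com

android

Many Android apps open with a welcome screen, and fade-in/fade-out effects are very widely used. Let's implement one.

The main code is as follows:

package com.myaibang.activity;

import android.app.Activity;

import android.content.Intent;

import android.os.Bundle;

import android.os.CountDown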

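- Linux review notes: common compression commands

eksliang

tar extraction linux common compression commands linux compression commands tar compression

When reposting, please cite the source: http://eksliang.iteye.com/blog/2109693

Common file extensions for compressed files on Linux

*.gz files compressed by gzip

*.bz2 files compressed by bzip2

*.tar data packed by tar, without compression

*.tar.gz packed by tar, then compressed with gzip

*.tar.bz2 packed by tar, then compressed with bzip2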

*.zi
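
The article itself covers the command-line tools. As a side illustration in Python, the standard-library `tarfile` module produces the same `.tar`, `.tar.gz`, and `.tar.bz2` formats. The helper name `roundtrip` and the file names are assumptions made for this sketch.

```python
import os
import tarfile
import tempfile


def roundtrip(compression: str):
    """Pack one file into a tar archive and list the archive's contents.

    compression is "" (plain .tar), "gz" (.tar.gz) or "bz2" (.tar.bz2).
    """
    with tempfile.TemporaryDirectory() as tmp:
        src = os.path.join(tmp, "hello.txt")
        with open(src, "w") as fh:
            fh.write("hello")

        ext = ".tar" + ("." + compression if compression else "")
        archive = os.path.join(tmp, "demo" + ext)

        # "w:" writes an uncompressed tar; "w:gz" / "w:bz2" add compression.
        with tarfile.open(archive, "w:" + compression) as tar:
            tar.add(src, arcname="hello.txt")

        # "r:*" lets tarfile detect the compression when reading.
        with tarfile.open(archive, "r:*") as tar:
            return tar.getnames()
```

The `w:gz` and `w:bz2` modes correspond to the tar-then-gzip and tar-then-bzip2 combinations in the list above.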

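- Sending shell commands from an Android application

gqdy365

android

In our project the app needs to control the LCD lights directly by sending shell commands. By rights, the solution vendor should tune the LCD light driver and then expose an interface to the app through a service, so that the app simply calls it; that is the proper process. But our vendor uses an MTK solution, nobody there knows how to modify it, and they only got the driver working, leaving the application to implement light control itself. What a pain!

Fine, we'll send the commands ourselves!

1. About shell commands:

As we know, shell commands come with Linux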

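- Reading text files losslessly in Java

hw1287789687

reading files lossless reading reading text files charset

How do you read a text file losslessly in Java?

The following version is lossy: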

@Deprecated

public static String getFullContent(File file, String charset) {

BufferedReader reader = null;

if (!file.exists()) {

System.out.println("getFull

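- An index of Firebase articles

justjavac

firebase

Awesome Firebase

The recent news of Google acquiring Firebase has brought Firebase back into view, so I created this GitHub project.

Firebase is a cloud service for data synchronization. Unlike Dropbox, which syncs "files", Firebase syncs "data". It serves web developers, helping them build applications with "real-time" features.

Developers only need to include one API library file to use standard RE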

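- Key points for learning C++

lx.asymmetric

C++ notes

1. The three characteristics of object orientation in C++: encapsulation, inheritance, and polymorphism.

2. Identifier naming rules: begin with a letter or underscore, and consist of letters, digits, or underscores; must not clash with a reserved keyword.

3. C++ constants include integer, floating-point, boolean, character, and string constants.

4. Operators can be divided by function into six categories: arithmetic, bitwise, relational, logical, assignment, and conditional operators.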


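- Converting between Java beans and XML

q821424508

javabean xml xml and bean conversion java bean and xml conversion

I've been working on a WeChat official account these past few days.

Along the way I looked for a Java bean to XML tool. I tried several, but whether because of misconfiguration or something else, each had some problems,

so on impulse I wrote my own Java bean and XML conversion tool. It works well enough for me, though it has some constraints,

and I'm posting it here as a record.

Incidentally, this conversion tool supports properties that are collections, arrays, and non-primitive objects.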

packag

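- Beginner C: bitwise operations

1140566087

bitwise operations c

Chapter 10: Bitwise operations. 1. Operands of bitwise operators must be integer or character data; in VC6.0 an int occupies 4 bytes. 2. Bitwise operators: `~` bitwise NOT; `<<` left shift; `>>` right shift; `&` bitwise AND; `^` bitwise XOR; `|` bitwise OR. Their precedence runs from high to low in that order (`<<` and `>>` share the same level). 3. What the bitwise operators do: a. Bitwise NOT: ~01001101 = 101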
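
The article is about C, but the same six operators exist in Python with the same relative precedence, so a short Python sketch can show what they do to the bit pattern 01001101 used above. Python integers are unbounded, so results are masked to 8 bits to mimic a one-byte value. The function name `bit_ops_demo` and the chosen operands are assumptions for illustration.

```python
def bit_ops_demo():
    """Apply each bitwise operator to 0b01001101, keeping results in 8 bits."""
    a = 0b01001101
    return {
        "not": ~a & 0xFF,        # mask needed: ~a is negative in Python
        "shl": (a << 1) & 0xFF,  # left shift by one bit
        "shr": a >> 2,           # right shift by two bits
        "and": a & 0b00001111,   # keep only the low four bits
        "xor": a ^ 0b11111111,   # flip every bit
        "or": a | 0b10000000,    # set the top bit
    }


# Precedence: << binds tighter than &, then ^, then |.
PRECEDENCE_CHECK = (1 | 2 ^ 3 & 4 << 1) == (1 | (2 ^ (3 & (4 << 1))))
```

Formatting a result with `format(value, "08b")` prints it as an 8-bit pattern that can be compared with the worked examples in the chapter.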

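- 14. Spring 4.1 highlights: scripting

wiselyman

spring4

14.1 Scripting

The main difference between scripting languages and static languages such as Java is that scripting languages need no compilation; the source runs directly.

If some code needs frequent changes, each change requires at least compiling, packaging, and redeploying, which is quite tedious;

and our application may not be allowed to restart, which is also common in practice.

Scripting in Spring offers a solution for these scenarios: dynamically loaded beans.

Spring supports script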