Learning MediaPipe: Hand Gesture Recognition on Windows (2)

Preface

The previous post covered setting up the environment and briefly described how to build hand_tracking.

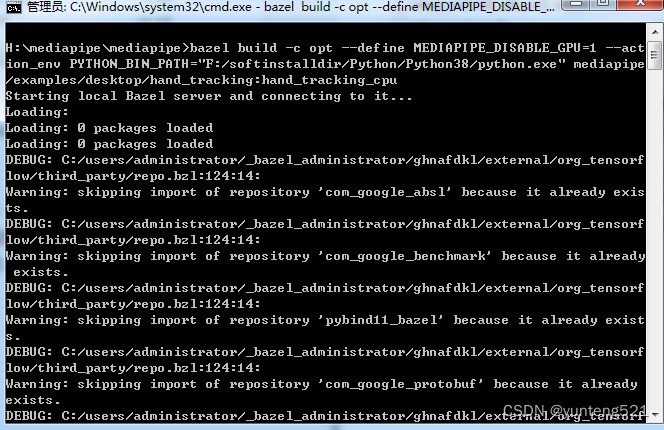

1: Build the CPU version of hand_tracking

The directory layout is as follows:

cd mediapipe  # the directory that contains WORKSPACE; Python here is at F:/softinstalldir/Python/Python38/python.exe

bazel build -c opt --define MEDIAPIPE_DISABLE_GPU=1 --action_env PYTHON_BIN_PATH="F:/softinstalldir/Python/Python38/python.exe" mediapipe/examples/desktop/hand_tracking:hand_tracking_cpu

Note: if a package cannot be found, download it manually; see "Learning MediaPipe: Installation and Build on Windows (1)".

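Build time depends on the local machine's CPU; on my old machine (i5 3470) the first build took a long time. It also depends on the network, because many packages are downloaded during the build.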

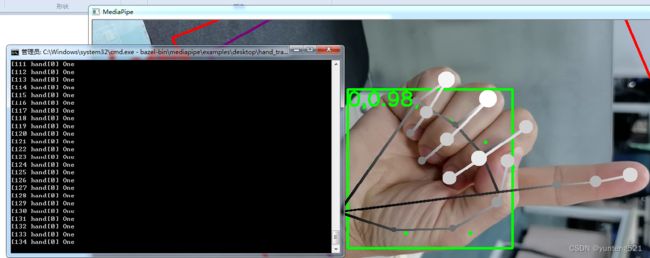

2: Modify the code to print the recognized gesture
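The modified loop in the listing below multiplies each normalized landmark by the frame width and height to obtain pixel coordinates before the angles are computed. Here is a minimal standalone sketch of that step. `NormalizedPoint` is a hypothetical stand-in for `mediapipe::NormalizedLandmark`, and `PixelPoint` and `ToPixels` are illustrative names that do not appear in MediaPipe or in the original file.

```cpp
#include <vector>

// Hypothetical stand-in for mediapipe::NormalizedLandmark: x and y are
// fractions of the image width and height (nominally in [0, 1]).
struct NormalizedPoint {
  float x;
  float y;
};

// Same layout as PoseInfo in hand_tracking_data.h.
struct PixelPoint {
  float x;
  float y;
};

// Scales normalized coordinates to pixel coordinates for a frame of the
// given size (frame_cols is the width, frame_rows is the height).
std::vector<PixelPoint> ToPixels(const std::vector<NormalizedPoint>& points,
                                 int frame_cols, int frame_rows) {
  std::vector<PixelPoint> pixels;
  pixels.reserve(points.size());
  for (const NormalizedPoint& p : points) {
    pixels.push_back({p.x * frame_cols, p.y * frame_rows});
  }
  return pixels;
}
```

Working in pixel coordinates rather than normalized ones matters for the angle test: on a non-square frame, normalized x and y use different scales, which would distort the angles.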

// Copyright 2019 The MediaPipe Authors.

//

// Licensed under the Apache License, Version 2.0 (the "License");

// you may not use this file except in compliance with the License.

// You may obtain a copy of the License at

//

// http://www.apache.org/licenses/LICENSE-2.0

//

// Unless required by applicable law or agreed to in writing, software

// distributed under the License is distributed on an "AS IS" BASIS,

// WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

// See the License for the specific language governing permissions and

// limitations under the License.

//

// An example of sending OpenCV webcam frames into a MediaPipe graph.

#include

mediapipe::Packet packet_landmarks;

if (m_pPoller_landmarks->QueueSize() > 0)

{

if (m_pPoller_landmarks->Next(&packet_landmarks)) {

// std::vector<mediapipe::NormalizedLandmarkList> output_landmarks = packet_landmarks.Get<std::vector<mediapipe::NormalizedLandmarkList>>();

// for (int m = 0; m < output_landmarks.size(); ++m) {

// LOG(INFO) << "output_landmarks.size." << output_landmarks.size();

// break;

// }

std::vector<mediapipe::NormalizedLandmarkList> output_landmarks = packet_landmarks.Get<std::vector<mediapipe::NormalizedLandmarkList>>();

for (int m = 0; m < output_landmarks.size(); ++m) {

// LOG(INFO) << "output_landmarks.size."<< output_landmarks.size(); // 1 means one hand, 2 means two hands

std::vector<PoseInfo> singleHandGestureInfo;

singleHandGestureInfo.clear();

// break;

mediapipe::NormalizedLandmarkList single_hand_NormalizedLandmarkList = output_landmarks[m];

for (int i = 0; i < single_hand_NormalizedLandmarkList.landmark_size(); ++i)

{

PoseInfo info;

const mediapipe::NormalizedLandmark landmark = single_hand_NormalizedLandmarkList.landmark(i);

info.x = landmark.x() * camera_frame.cols;

info.y = landmark.y() * camera_frame.rows;

singleHandGestureInfo.push_back(info);

}

int handres = GestureRecognition(singleHandGestureInfo);

// LOG(INFO) << "[" << m << "] " << GetGestureResult(handres);

std::cout << "[" << nframe << "] hand[" << m << "] " << GetGestureResult(handres) << std::endl;

}

}

}else{

LOG(INFO) << "output_landmarks.size is zero.";

}

// Convert back to opencv for display or saving.

cv::Mat output_frame_mat = mediapipe::formats::MatView(&output_frame);

cv::cvtColor(output_frame_mat, output_frame_mat, cv::COLOR_RGB2BGR);

if (save_video) {

if (!writer.isOpened()) {

LOG(INFO) << "Prepare video writer.";

writer.open(absl::GetFlag(FLAGS_output_video_path),

mediapipe::fourcc('a', 'v', 'c', '1'), // .mp4

capture.get(cv::CAP_PROP_FPS), output_frame_mat.size());

RET_CHECK(writer.isOpened());

}

writer.write(output_frame_mat);

} else {

cv::imshow(kWindowName, output_frame_mat);

// Press any key to exit.

const int pressed_key = cv::waitKey(5);

if (pressed_key >= 0 && pressed_key != 255) grab_frames = false;

}

}

LOG(INFO) << "Shutting down.";

if (writer.isOpened()) writer.release();

MP_RETURN_IF_ERROR(graph.CloseInputStream(kInputStream));

return graph.WaitUntilDone();

}

int main(int argc, char** argv) {

google::InitGoogleLogging(argv[0]);

absl::ParseCommandLine(argc, argv);

absl::Status run_status = RunMPPGraph();

if (!run_status.ok()) {

LOG(ERROR) << "Failed to run the graph: " << run_status.message();

return EXIT_FAILURE;

} else {

LOG(INFO) << "Success!";

}

return EXIT_SUCCESS;

}

// Gesture recognition

int GestureRecognition(const std::vector<PoseInfo>& single_hand_joint_vector)

{

if (single_hand_joint_vector.size() != 21)

return -1;

// Thumb angle

Vector2D thumb_vec1;

thumb_vec1.x = single_hand_joint_vector[0].x - single_hand_joint_vector[2].x;

thumb_vec1.y = single_hand_joint_vector[0].y - single_hand_joint_vector[2].y;

Vector2D thumb_vec2;

thumb_vec2.x = single_hand_joint_vector[3].x - single_hand_joint_vector[4].x;

thumb_vec2.y = single_hand_joint_vector[3].y - single_hand_joint_vector[4].y;

float thumb_angle = Vector2DAngle(thumb_vec1, thumb_vec2);

//std::cout << "thumb_angle = " << thumb_angle << std::endl;

//std::cout << "thumb.y = " << single_hand_joint_vector[0].y << std::endl;

// Index finger angle

Vector2D index_vec1;

index_vec1.x = single_hand_joint_vector[0].x - single_hand_joint_vector[6].x;

index_vec1.y = single_hand_joint_vector[0].y - single_hand_joint_vector[6].y;

Vector2D index_vec2;

index_vec2.x = single_hand_joint_vector[7].x - single_hand_joint_vector[8].x;

index_vec2.y = single_hand_joint_vector[7].y - single_hand_joint_vector[8].y;

float index_angle = Vector2DAngle(index_vec1, index_vec2);

//std::cout << "index_angle = " << index_angle << std::endl;

// Middle finger angle

Vector2D middle_vec1;

middle_vec1.x = single_hand_joint_vector[0].x - single_hand_joint_vector[10].x;

middle_vec1.y = single_hand_joint_vector[0].y - single_hand_joint_vector[10].y;

Vector2D middle_vec2;

middle_vec2.x = single_hand_joint_vector[11].x - single_hand_joint_vector[12].x;

middle_vec2.y = single_hand_joint_vector[11].y - single_hand_joint_vector[12].y;

float middle_angle = Vector2DAngle(middle_vec1, middle_vec2);

//std::cout << "middle_angle = " << middle_angle << std::endl;

// Ring finger angle

Vector2D ring_vec1;

ring_vec1.x = single_hand_joint_vector[0].x - single_hand_joint_vector[14].x;

ring_vec1.y = single_hand_joint_vector[0].y - single_hand_joint_vector[14].y;

Vector2D ring_vec2;

ring_vec2.x = single_hand_joint_vector[15].x - single_hand_joint_vector[16].x;

ring_vec2.y = single_hand_joint_vector[15].y - single_hand_joint_vector[16].y;

float ring_angle = Vector2DAngle(ring_vec1, ring_vec2);

//std::cout << "ring_angle = " << ring_angle << std::endl;

// Little finger angle

Vector2D pink_vec1;

pink_vec1.x = single_hand_joint_vector[0].x - single_hand_joint_vector[18].x;

pink_vec1.y = single_hand_joint_vector[0].y - single_hand_joint_vector[18].y;

Vector2D pink_vec2;

pink_vec2.x = single_hand_joint_vector[19].x - single_hand_joint_vector[20].x;

pink_vec2.y = single_hand_joint_vector[19].y - single_hand_joint_vector[20].y;

float pink_angle = Vector2DAngle(pink_vec1, pink_vec2);

//std::cout << "pink_angle = " << pink_angle << std::endl;

// Classify the gesture from the finger angles

float angle_threshold = 65;

float thumb_angle_threshold = 40;

int result = -1;

if ((thumb_angle > thumb_angle_threshold) && (index_angle > angle_threshold) && (middle_angle > angle_threshold) && (ring_angle > angle_threshold) && (pink_angle > angle_threshold))

result = Gesture::Fist;

else if ((thumb_angle > 5) && (index_angle < angle_threshold) && (middle_angle > angle_threshold) && (ring_angle > angle_threshold) && (pink_angle > angle_threshold))

result = Gesture::One;

else if ((thumb_angle > thumb_angle_threshold) && (index_angle < angle_threshold) && (middle_angle < angle_threshold) && (ring_angle > angle_threshold) && (pink_angle > angle_threshold))

result = Gesture::Two;

else if ((thumb_angle > thumb_angle_threshold) && (index_angle < angle_threshold) && (middle_angle < angle_threshold) && (ring_angle < angle_threshold) && (pink_angle > angle_threshold))

result = Gesture::Three;

else if ((thumb_angle > thumb_angle_threshold) && (index_angle < angle_threshold) && (middle_angle < angle_threshold) && (ring_angle < angle_threshold) && (pink_angle < angle_threshold))

result = Gesture::Four;

else if ((thumb_angle < thumb_angle_threshold) && (index_angle < angle_threshold) && (middle_angle < angle_threshold) && (ring_angle < angle_threshold) && (pink_angle < angle_threshold))

result = Gesture::Five;

else if ((thumb_angle < thumb_angle_threshold) && (index_angle > angle_threshold) && (middle_angle > angle_threshold) && (ring_angle > angle_threshold) && (pink_angle < angle_threshold))

result = Gesture::Six;

else if ((thumb_angle < thumb_angle_threshold) && (index_angle > angle_threshold) && (middle_angle > angle_threshold) && (ring_angle > angle_threshold) && (pink_angle > angle_threshold))

result = Gesture::ThumbUp;

else if ((thumb_angle > 5) && (index_angle > angle_threshold) && (middle_angle < angle_threshold) && (ring_angle < angle_threshold) && (pink_angle < angle_threshold))

result = Gesture::Ok;

else

result = -1;

return result;

}

float Vector2DAngle(const Vector2D& vec1, const Vector2D& vec2)

{

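const double PI = 3.141592653;

float t = (vec1.x * vec2.x + vec1.y * vec2.y) / (sqrt(pow(vec1.x, 2) + pow(vec1.y, 2)) * sqrt(pow(vec2.x, 2) + pow(vec2.y, 2)));

// Clamp against rounding error so acos() never sees a value outside [-1, 1].

if (t > 1.0f) t = 1.0f;

if (t < -1.0f) t = -1.0f;

float angle = acos(t) * (180 / PI);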

return angle;

}

std::string GetGestureResult(int result)

{

std::string result_str = "None";

switch (result)

{

case 1:

result_str = "One";

break;

case 2:

result_str = "Two";

break;

case 3:

result_str = "Three";

break;

case 4:

result_str = "Four";

break;

case 5:

result_str = "Five";

break;

case 6:

result_str = "Six";

break;

case 7:

result_str = "ThumbUp";

break;

case 8:

result_str = "Ok";

break;

case 9:

result_str = "Fist";

break;

default:

break;

}

return result_str;

}
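GestureRecognition above treats a finger as bent when the angle between two vectors exceeds a threshold: one vector runs from a middle joint to the wrist (landmark 0), the other from the fingertip to the joint before it. For a straight finger the two vectors point the same way and the angle is near 0; for a curled finger it grows toward 180. The standalone sketch below illustrates that test for a single finger. `Vec2`, `AngleDegrees`, and `IsFingerBent` are illustrative names that are not in the original file, and the clamp before `acos` is an added safeguard.

```cpp
#include <cmath>

struct Vec2 {
  float x;
  float y;
};

// Angle between two 2D vectors in degrees, in the range [0, 180].
// The result is NaN if either vector has zero length.
float AngleDegrees(const Vec2& a, const Vec2& b) {
  const double kPi = 3.14159265358979323846;
  double dot = a.x * b.x + a.y * b.y;
  double norm = std::sqrt(a.x * a.x + a.y * a.y) *
                std::sqrt(b.x * b.x + b.y * b.y);
  double c = dot / norm;
  // Clamp so rounding error cannot push the cosine outside [-1, 1].
  if (c > 1.0) c = 1.0;
  if (c < -1.0) c = -1.0;
  return static_cast<float>(std::acos(c) * 180.0 / kPi);
}

// True when the finger counts as bent: the angle between
// (wrist - mid_joint) and (last_joint - tip) exceeds threshold_degrees.
bool IsFingerBent(const Vec2& wrist, const Vec2& mid_joint,
                  const Vec2& last_joint, const Vec2& tip,
                  float threshold_degrees) {
  Vec2 v1 = {wrist.x - mid_joint.x, wrist.y - mid_joint.y};
  Vec2 v2 = {last_joint.x - tip.x, last_joint.y - tip.y};
  return AngleDegrees(v1, v2) > threshold_degrees;
}
```

For the index finger, the original code corresponds to calling `IsFingerBent` with landmarks 0, 6, 7, and 8 and a threshold of 65 degrees. Because only x and y are used, the result depends on how the hand is oriented toward the camera.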

hand_tracking_data.h (this file is adapted from someone else's work)

#ifndef HANDS_TRACKING_DATA_H

#define HANDS_TRACKING_DATA_H

struct PoseInfo {

float x;

float y;

};

typedef PoseInfo Point2D;

typedef PoseInfo Vector2D;

struct GestureRecognitionResult

{

int m_Gesture_Recognition_Result[2] = {-1,-1};

int m_HandUp_HandDown_Detect_Result[2] = {-1,-1};

};

enum Gesture

{

NoGesture = -1,

One = 1,

Two = 2,

Three = 3,

Four = 4,

Five = 5,

Six = 6,

ThumbUp = 7,

Ok = 8,

Fist = 9

};

#endif // !HANDS_TRACKING_DATA_H
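GetGestureResult switches on the raw integers 1 to 9, which have to be kept in sync with the Gesture enum by hand. One possible alternative, sketched here as an assumption rather than as the author's code, is to switch on the enumerator names. `GestureName` is a hypothetical helper, and the enum is repeated only so that the sketch is self-contained.

```cpp
#include <string>

// Copy of the Gesture enum from hand_tracking_data.h.
enum Gesture {
  NoGesture = -1,
  One = 1,
  Two = 2,
  Three = 3,
  Four = 4,
  Five = 5,
  Six = 6,
  ThumbUp = 7,
  Ok = 8,
  Fist = 9
};

// Maps a GestureRecognition result to a printable name.
// Any value without a named gesture (including -1) maps to "None".
std::string GestureName(int result) {
  switch (result) {
    case Gesture::One:     return "One";
    case Gesture::Two:     return "Two";
    case Gesture::Three:   return "Three";
    case Gesture::Four:    return "Four";
    case Gesture::Five:    return "Five";
    case Gesture::Six:     return "Six";
    case Gesture::ThumbUp: return "ThumbUp";
    case Gesture::Ok:      return "Ok";
    case Gesture::Fist:    return "Fist";
    default:               return "None";
  }
}
```

With this form, renumbering an enumerator in the header no longer requires a matching edit in the switch.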

Modify BUILD

cc_library(

name = "demo_run_graph_main",

srcs = ["demo_run_graph_main.cc","hand_tracking_data.h"], # hand_tracking_data.h is added here

deps = [

"//mediapipe/framework/formats:landmark_cc_proto", # this line is added

"//mediapipe/framework:calculator_framework",

.............................................

.............................................

],

)

4: The next post covers the Android implementation of gesture recognition