Deploying a k8s Cluster with kubeadm

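Contents

- Deploying k8s with kubeadm

- 1. Preparation

- Tips

- 2. Installation steps

- 1. Install Docker

- 2. Configure a domestic (China) registry mirror

- 3. Add the Alibaba Cloud yum repository for k8s

- 4. Install kubeadm, kubelet, and kubectl

- 5. Verify the installation

- 6. Deploy the Kubernetes master node

- 7. On the master, run the commands printed in the previous step

- 8. Join the worker nodes to the master by running this command on each node (the command is printed when the master is initialized in step 6)

- 9. Deploy the network plugin for communication between nodes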

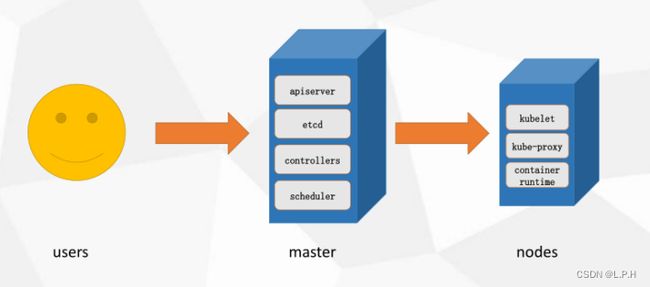

Deploying k8s with kubeadm

1. Create the master node

kubeadm init

2. Join the worker nodes to the master's cluster

kubeadm join <master node IP and port>

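Kubernetes deployment requirements

(1) One or more machines running CentOS 7.x (x86_64);

(2) Hardware: at least 2 GB of RAM and at least 2 CPU cores;

(3) All machines in the cluster can reach each other over the network;

(4) All machines in the cluster can reach the internet, since images must be pulled;

(5) Swap is disabled.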
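The hardware and swap requirements above can be checked before anything is installed. A minimal sketch, assuming a Linux host that exposes /proc/meminfo and /proc/swaps; the function names and the 1700 MB memory floor are illustrative choices and not part of the original guide (a "2 GB" machine reports slightly less than 2048 MB of usable memory):

```shell
#!/usr/bin/env bash
# Hypothetical helpers. Each takes an optional argument so it can be tried
# against a sample file or value instead of the live system.

# mem_ok [FILE]: succeed if MemTotal is at least 1700 MB (assumed floor).
mem_ok() {
  local kb
  kb=$(awk '/^MemTotal:/ {print $2}' "${1:-/proc/meminfo}")
  [ "${kb:-0}" -ge $((1700 * 1024)) ]
}

# cpu_ok [COUNT]: succeed if the machine (or the given count) has >= 2 CPUs.
cpu_ok() {
  local n="${1:-$(nproc)}"
  [ "$n" -ge 2 ]
}

# swap_off [FILE]: succeed if no swap device is listed after the header line.
swap_off() {
  awk 'NR > 1 { found = 1 } END { exit found + 0 }' "${1:-/proc/swaps}"
}
```

On a real node, `mem_ok && cpu_ok && swap_off && echo ready` runs all three checks against the live system.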

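1. Preparation

Tips

If the machines are VMware virtual machines, their IP addresses may change, so configure a static IP. Edit the settings below if they already exist in the file, add them if they do not, then reboot.

# Edit the ifcfg-ens33 file

vi /etc/sysconfig/network-scripts/ifcfg-ens33

BOOTPROTO="static" # use a static address [author's note: set this to dhcp instead if the static setting leaves the VM without network access]

IPADDR="192.168.226.132" # the fixed IP address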

- Disable the firewall

systemctl stop firewalld

systemctl disable firewalld

- Disable SELinux

sed -i 's/enforcing/disabled/' /etc/selinux/config # permanent

setenforce 0 # temporary

- Disable swap (Kubernetes requires it: by default the kubelet will not start while swap is enabled)

sed -ri 's/.*swap.*/#&/' /etc/fstab # permanent

swapoff -a # temporary
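One caveat with the sed command above: running it a second time prepends another # to lines that are already commented. A minimal sketch of a re-runnable variant, tried on a copy of the file rather than on /etc/fstab itself; the function name is hypothetical, and the pattern assumes swap entries carry the word swap as a whitespace-delimited field:

```shell
#!/usr/bin/env bash
# comment_swap FILE: print FILE with active swap entries commented out.
# Lines that already start with '#' are left untouched, so applying the
# filter repeatedly gives the same result as applying it once.
comment_swap() {
  sed -E '/^[[:space:]]*#/!s/^.*[[:space:]]swap[[:space:]].*$/#&/' "$1"
}
```

To use it on a node, write the output to a temporary file, compare it with /etc/fstab, and copy it into place only after keeping a backup.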

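- Set the hostnames (give each machine its own name with hostnamectl set-hostname <name>, then add all of the names to /etc/hosts)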

cat >> /etc/hosts << EOF

192.168.85.132 k8s-master

192.168.85.130 k8s-node1

192.168.85.133 k8s-node2

EOF
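Because cat >> appends unconditionally, re-running the block above duplicates the entries. A small sketch of an append-if-missing helper; add_host is a hypothetical name, and the file path is a parameter so the function can be tried on a scratch file before touching /etc/hosts:

```shell
#!/usr/bin/env bash
# add_host FILE IP NAME: append "IP NAME" to FILE unless NAME already
# appears as a hostname field on a non-comment line of FILE.
add_host() {
  local file="$1" ip="$2" name="$3"
  if ! awk -v n="$name" '
        $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$file" 2>/dev/null; then
    printf '%s %s\n' "$ip" "$name" >> "$file"
  fi
}
```

For example, `add_host /etc/hosts 192.168.85.132 k8s-master` can be run any number of times and leaves a single entry.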

- Configure the bridge parameters

cat > /etc/sysctl.d/k8s.conf << EOF

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

sysctl --system # apply the settings
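The net.bridge.* keys exist only while the br_netfilter kernel module is loaded, so sysctl --system may report them as missing on a fresh machine. A hedged sketch that confirms both values after the module is loaded; the function name and the directory parameter are illustrative, and loading the module requires root:

```shell
#!/usr/bin/env bash
# bridge_nf_enabled [DIR]: succeed only if both bridge-nf-call files under
# DIR (default: /proc/sys/net/bridge) exist and contain 1.
bridge_nf_enabled() {
  local dir="${1:-/proc/sys/net/bridge}" key
  for key in bridge-nf-call-iptables bridge-nf-call-ip6tables; do
    [ "$(cat "$dir/$key" 2>/dev/null)" = "1" ] || return 1
  done
}

# On a real node (as root) the sequence would be:
#   modprobe br_netfilter && sysctl --system && bridge_nf_enabled && echo ok
```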

- Synchronize the time

yum install ntpdate -y # install the tool if it is not already present

ntpdate time.windows.com

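2. Installation steps

Install Docker, kubeadm, kubelet, and kubectl on every node.

This guide uses Docker as the Kubernetes container runtime (supported through dockershim up to v1.23), so install Docker first.

- Install wget and vim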

yum install wget -y

yum install vim -y

1. Install Docker

Add the Docker yum repository

wget https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo -O /etc/yum.repos.d/docker-ce.repo

List the available Docker versions

yum list docker-ce --showduplicates|sort -r

# Output

[root@localhost ~]# yum list docker-ce --showduplicates | sort -r

Loaded plugins: fastestmirror

Installed Packages

Available Packages

* updates: mirrors.huaweicloud.com

Loading mirror speeds from cached hostfile

* extras: mirrors.nju.edu.cn

docker-ce.x86_64 3:20.10.9-3.el7 docker-ce-stable

docker-ce.x86_64 3:20.10.9-3.el7 @docker-ce-stable

docker-ce.x86_64 3:20.10.8-3.el7 docker-ce-stable

docker-ce.x86_64 3:20.10.7-3.el7 docker-ce-stable

docker-ce.x86_64 3:20.10.6-3.el7 docker-ce-stable

# Copy only the middle part: 3:20.10.9-3.el7 means version 20.10.9

Install a specific Docker version

yum install docker-ce-20.10.9 -y
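The "copy only the middle part" rule above can be written as two parameter expansions. A minimal sketch; the function name is hypothetical, and it assumes the epoch:version-release layout shown in the listing:

```shell
#!/usr/bin/env bash
# yum_version_only STRING: strip the leading "epoch:" and the trailing
# "-release" from a yum version column such as 3:20.10.9-3.el7.
yum_version_only() {
  local v="${1#*:}"          # drop everything up to the first ':'
  printf '%s\n' "${v%%-*}"   # drop everything from the first '-'
}

yum_version_only '3:20.10.9-3.el7'   # prints 20.10.9
```

The result can be passed straight to yum, for example `yum install "docker-ce-$(yum_version_only '3:20.10.9-3.el7')" -y`.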

2. Configure a domestic (China) registry mirror

sudo vi /etc/docker/daemon.json

{

"registry-mirrors" : ["https://q5bf287q.mirror.aliyuncs.com", "https://registry.docker-cn.com","http://hub-mirror.c.163.com"],

"exec-opts": ["native.cgroupdriver=systemd"]

}

Enable Docker at boot and start it now

systemctl enable --now docker.service
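Hand-editing daemon.json in vi is easy to get wrong, and Docker will not start if the file is not valid JSON. As an alternative sketch, the same content can be written with a heredoc; write_daemon_json is a hypothetical helper, the mirror URLs are the ones listed above, and the target path is a parameter so it can be tried outside /etc/docker first:

```shell
#!/usr/bin/env bash
# write_daemon_json PATH: write the registry mirrors and the systemd cgroup
# driver setting from this guide to PATH.
write_daemon_json() {
  cat > "$1" << 'EOF'
{
  "registry-mirrors": [
    "https://q5bf287q.mirror.aliyuncs.com",
    "https://registry.docker-cn.com",
    "http://hub-mirror.c.163.com"
  ],
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}
```

On a node this would be `write_daemon_json /etc/docker/daemon.json` followed by `systemctl restart docker`; `docker info` then shows the configured mirrors and the cgroup driver.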

3. Add the Alibaba Cloud yum repository for k8s

cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

4. Install kubeadm, kubelet, and kubectl

yum install kubelet-1.23.6 kubeadm-1.23.6 kubectl-1.23.6 -y

Uninstall k8s (only if needed; the versions below are from an earlier v1.26.0 install)

kubeadm reset -f

yum remove -y kubelet-1.26.0 kubeadm-1.26.0 kubectl-1.26.0

rm -rf /etc/cni /etc/kubernetes /var/lib/dockershim /var/lib/etcd /var/lib/kubelet /var/run/kubernetes ~/.kube/*

Enable kubelet at boot

systemctl enable kubelet.service

5. Verify the installation

yum list installed | grep kubelet

yum list installed | grep kubeadm

yum list installed | grep kubectl

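kubelet: runs on every node in the cluster and is responsible for starting Pods and containers;

kubeadm: a tool for initializing the cluster;

kubectl: the Kubernetes command-line tool, used to deploy and manage applications, inspect resources, and create, delete, and update components.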

6. Deploy the Kubernetes master node

# kubeadm init --apiserver-advertise-address=192.168.85.129 --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.26.0 --service-cidr=10.96.0.0/12 --pod-network-cidr=10.244.0.0/16 # this version is too new and no longer works with Docker

kubeadm init --apiserver-advertise-address=192.168.85.132 --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.23.6 --service-cidr=10.96.0.0/16 --pod-network-cidr=10.244.0.0/16
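The same settings can also be kept in a file and passed with --config, which is easier to review and re-run than a long command line. A sketch under assumptions: it uses the kubeadm.k8s.io/v1beta3 config API (one of the versions kubeadm v1.23 accepts), and write_kubeadm_config is a hypothetical helper name:

```shell
#!/usr/bin/env bash
# write_kubeadm_config PATH ADVERTISE_IP: write a kubeadm config file that
# mirrors the flags used in this step.
write_kubeadm_config() {
  cat > "$1" << EOF
apiVersion: kubeadm.k8s.io/v1beta3
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: "$2"
---
apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
kubernetesVersion: v1.23.6
imageRepository: registry.aliyuncs.com/google_containers
networking:
  serviceSubnet: 10.96.0.0/16
  podSubnet: 10.244.0.0/16
EOF
}
```

Usage would be `write_kubeadm_config kubeadm-config.yaml 192.168.85.132` followed by `kubeadm init --config kubeadm-config.yaml`.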

Output on success:

[root@k8s-master system]# kubeadm init --apiserver-advertise-address=192.168.85.132 --image-repository registry.aliyuncs.com/google_containers --kubernetes-version v1.23.6 --service-cidr=10.96.0.0/16 --pod-network-cidr=10.244.0.0/16

[init] Using Kubernetes version: v1.23.6

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [k8s-master kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.85.132]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [k8s-master localhost] and IPs [192.168.85.132 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [k8s-master localhost] and IPs [192.168.85.132 127.0.0.1 ::1]

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Starting the kubelet

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[apiclient] All control plane components are healthy after 11.012407 seconds

[upload-config] Storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.23" in namespace kube-system with the configuration for the kubelets in the cluster

NOTE: The "kubelet-config-1.23" naming of the kubelet ConfigMap is deprecated. Once the UnversionedKubeletConfigMap feature gate graduates to Beta the default name will become just "kubelet-config". Kubeadm upgrade will handle this transition transparently.

[upload-certs] Skipping phase. Please see --upload-certs

[mark-control-plane] Marking the node k8s-master as control-plane by adding the labels: [node-role.kubernetes.io/master(deprecated) node-role.kubernetes.io/control-plane node.kubernetes.io/exclude-from-external-load-balancers]

[mark-control-plane] Marking the node k8s-master as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: z7srgx.xy1cf18s9saqkiij

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to get nodes

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] Creating the "cluster-info" ConfigMap in the "kube-public" namespace

[kubelet-finalize] Updating "/etc/kubernetes/kubelet.conf" to point to a rotatable kubelet client certificate and key

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

# The commands above are the ones to run next

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

# How to add worker nodes

kubeadm join 192.168.85.132:6443 --token z7srgx.xy1cf18s9saqkiij \

--discovery-token-ca-cert-hash sha256:69acc269317fe7baf0838909e1a4b9514dc73520d99c94af31941f53c261b58a

[root@k8s-master system]# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

registry.aliyuncs.com/google_containers/kube-apiserver v1.23.6 8fa62c12256d 8 months ago 135MB

registry.aliyuncs.com/google_containers/kube-controller-manager v1.23.6 df7b72818ad2 8 months ago 125MB

registry.aliyuncs.com/google_containers/kube-scheduler v1.23.6 595f327f224a 8 months ago 53.5MB

registry.aliyuncs.com/google_containers/kube-proxy v1.23.6 4c0375452406 8 months ago 112MB

registry.aliyuncs.com/google_containers/etcd 3.5.1-0 25f8c7f3da61 14 months ago 293MB

registry.aliyuncs.com/google_containers/coredns v1.8.6 a4ca41631cc7 15 months ago 46.8MB

registry.aliyuncs.com/google_containers/pause 3.6 6270bb605e12 16 months ago 683kB

[root@k8s-master system]#

Possible errors:

The kubelet version may be too new: Kubernetes dropped its built-in Docker support (dockershim) in v1.24, so install v1.23.6 as shown above.

Error 1:

[ERROR CRI]: container runtime is not running: output: E0104 17:29:26.251535 1676 remote_runtime.go:948] "Status from runtime service failed" err="rpc error: code = Unimplemented desc = unknown service runtime.v1alpha2.RuntimeService"

time="2023-01-04T17:29:26+08:00" level=fatal msg="getting status of runtime: rpc error: code = Unimplemented desc = unknown service runtime.v1alpha2.RuntimeService"

, error: exit status 1

Solution:

rm /etc/containerd/config.toml

systemctl restart containerd

kubeadm init # redeploy the node

If initialization fails, run kubeadm reset and then kubeadm init again.

kubeadm reset

Error 2:

[kubelet-check] The HTTP call equal to 'curl -sSL http://localhost:10248/healthz' failed with error: Get "http://localhost:10248/healthz": dial tcp [::1]:10248: connect: connection refused.

[kubelet-check] It seems like the kubelet isn't running or healthy.

(The two lines above repeat four times in the output.)

Solution:

# Edit the kubelet startup configuration file /usr/lib/systemd/system/kubelet.service.d/10-kubeadm.conf and append the following to ExecStart:

--feature-gates SupportPodPidsLimit=false --feature-gates SupportNodePidsLimit=false

# After editing, run:

systemctl daemon-reload && systemctl restart kubelet

Error 3:

[init] Using Kubernetes version: v1.26.0

[preflight] Running pre-flight checks

error execution phase preflight: [preflight] Some fatal errors occurred:

[ERROR CRI]: container runtime is not running: output: E0105 14:58:45.055862 8435 remote_runtime.go:948] "Status from runtime service failed" err="rpc error: code = Unimplemented desc = unknown service runtime.v1alpha2.RuntimeService"

time="2023-01-05T14:58:45+08:00" level=fatal msg="getting status of runtime: rpc error: code = Unimplemented desc = unknown service runtime.v1alpha2.RuntimeService"

, error: exit status 1

[preflight] If you know what you are doing, you can make a check non-fatal with `--ignore-preflight-errors=...`

To see the stack trace of this error execute with --v=5 or higher

Solution:

rm -rf /etc/containerd/config.toml

systemctl restart containerd

Expected output afterwards:

[init] Using Kubernetes version: v1.26.0

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'
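The guide attributes the CRI errors above to pairing a Docker-only setup with a Kubernetes release that is too new. A small sketch that flags this before kubeadm init runs; the function name is hypothetical, and it encodes only the rule stated above, that Docker support through dockershim ended with v1.24:

```shell
#!/usr/bin/env bash
# docker_supported VERSION: succeed if VERSION (for example v1.23.6) is a
# 1.x release older than v1.24, the release that removed dockershim.
docker_supported() {
  local v="${1#v}" major minor
  major="${v%%.*}"   # text before the first dot
  v="${v#*.}"        # drop "major."
  minor="${v%%.*}"   # text before the next dot
  [ "$major" -eq 1 ] && [ "$minor" -lt 24 ]
}
```

For example, `docker_supported v1.23.6 || echo "pick an older release or another runtime"` prints nothing, while the same check for v1.26.0 prints the warning.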

7. On the master, run the commands printed in the previous step

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

List the nodes

kubectl get nodes

Output:

[root@k8s-master system]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master NotReady control-plane,master 9m37s v1.23.6

8. Join the worker nodes to the master by running this command on each node (the command is printed when the master is initialized in step 6)

kubeadm join 192.168.85.132:6443 --token z7srgx.xy1cf18s9saqkiij \

--discovery-token-ca-cert-hash sha256:69acc269317fe7baf0838909e1a4b9514dc73520d99c94af31941f53c261b58a

Output:

[root@k8s-node1 ~]# kubeadm join 192.168.85.132:6443 --token z7srgx.xy1cf18s9saqkiij \

> --discovery-token-ca-cert-hash sha256:69acc269317fe7baf0838909e1a4b9514dc73520d99c94af31941f53c261b58a

[preflight] Running pre-flight checks

[preflight] Reading configuration from the cluster...

[preflight] FYI: You can look at this config file with 'kubectl -n kube-system get cm kubeadm-config -o yaml'

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Starting the kubelet

[kubelet-start] Waiting for the kubelet to perform the TLS Bootstrap...

This node has joined the cluster:

* Certificate signing request was sent to apiserver and a response was received.

* The Kubelet was informed of the new secure connection details.

Run 'kubectl get nodes' on the control-plane to see this node join the cluster.
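Bootstrap tokens expire (after 24 hours by default), so the join command printed in step 6 stops working for nodes added later; running kubeadm token create --print-join-command on the master prints a fresh one. The sketch below only checks that a token has the expected shape before it is pasted into a join command; valid_token is a hypothetical helper:

```shell
#!/usr/bin/env bash
# valid_token TOKEN: succeed if TOKEN matches the bootstrap token format
# <6 chars>.<16 chars>, made of lowercase letters and digits only.
valid_token() {
  printf '%s\n' "$1" | grep -Eq '^[a-z0-9]{6}\.[a-z0-9]{16}$'
}
```

For the token used in this guide, `valid_token z7srgx.xy1cf18s9saqkiij && echo ok` prints ok.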

9. Deploy the network plugin for communication between nodes

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

The download sometimes fails:

[root@k8s-master docker]# wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

--2023-01-05 16:28:08-- https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

Resolving raw.githubusercontent.com (raw.githubusercontent.com)... 0.0.0.0, ::

Connecting to raw.githubusercontent.com (raw.githubusercontent.com)|0.0.0.0|:443... failed: Connection refused.

Connecting to raw.githubusercontent.com (raw.githubusercontent.com)|::|:443... failed: Connection refused.

In that case, create the file by hand: open the link in a browser and copy its contents.

kube-flannel.yml

---
apiVersion: policy/v1beta1
kind: PodSecurityPolicy
metadata:
  name: psp.flannel.unprivileged
  annotations:
    seccomp.security.alpha.kubernetes.io/allowedProfileNames: docker/default
    seccomp.security.alpha.kubernetes.io/defaultProfileName: docker/default
    apparmor.security.beta.kubernetes.io/allowedProfileNames: runtime/default
    apparmor.security.beta.kubernetes.io/defaultProfileName: runtime/default
spec:
  privileged: false
  volumes:
  - configMap
  - secret
  - emptyDir
  - hostPath
  allowedHostPaths:
  - pathPrefix: "/etc/cni/net.d"
  - pathPrefix: "/etc/kube-flannel"
  - pathPrefix: "/run/flannel"
  readOnlyRootFilesystem: false
  # Users and groups
  runAsUser:
    rule: RunAsAny
  supplementalGroups:
    rule: RunAsAny
  fsGroup:
    rule: RunAsAny
  # Privilege Escalation
  allowPrivilegeEscalation: false
  defaultAllowPrivilegeEscalation: false
  # Capabilities
  allowedCapabilities: ['NET_ADMIN', 'NET_RAW']
  defaultAddCapabilities: []
  requiredDropCapabilities: []
  # Host namespaces
  hostPID: false
  hostIPC: false
  hostNetwork: true
  hostPorts:
  - min: 0
    max: 65535
  # SELinux
  seLinux:
    # SELinux is unused in CaaSP
    rule: 'RunAsAny'
---
kind: ClusterRole
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
rules:
- apiGroups: ['extensions']
  resources: ['podsecuritypolicies']
  verbs: ['use']
  resourceNames: ['psp.flannel.unprivileged']
- apiGroups:
  - ""
  resources:
  - pods
  verbs:
  - get
- apiGroups:
  - ""
  resources:
  - nodes
  verbs:
  - list
  - watch
- apiGroups:
  - ""
  resources:
  - nodes/status
  verbs:
  - patch
---
kind: ClusterRoleBinding
apiVersion: rbac.authorization.k8s.io/v1
metadata:
  name: flannel
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: flannel
subjects:
- kind: ServiceAccount
  name: flannel
  namespace: kube-system
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: flannel
  namespace: kube-system
---
kind: ConfigMap
apiVersion: v1
metadata:
  name: kube-flannel-cfg
  namespace: kube-system
  labels:
    tier: node
    app: flannel
data:
  cni-conf.json: |
    {
      "name": "cbr0",
      "cniVersion": "0.3.1",
      "plugins": [
        {
          "type": "flannel",
          "delegate": {
            "hairpinMode": true,
            "isDefaultGateway": true
          }
        },
        {
          "type": "portmap",
          "capabilities": {
            "portMappings": true
          }
        }
      ]
    }
  net-conf.json: |
    {
      "Network": "10.244.0.0/16",
      "Backend": {
        "Type": "vxlan"
      }
    }
---
apiVersion: apps/v1
kind: DaemonSet
metadata:
  name: kube-flannel-ds
  namespace: kube-system
  labels:
    tier: node
    app: flannel
spec:
  selector:
    matchLabels:
      app: flannel
  template:
    metadata:
      labels:
        tier: node
        app: flannel
    spec:
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
            - matchExpressions:
              - key: kubernetes.io/os
                operator: In
                values:
                - linux
      hostNetwork: true
      priorityClassName: system-node-critical
      tolerations:
      - operator: Exists
        effect: NoSchedule
      serviceAccountName: flannel
      initContainers:
      - name: install-cni
        image: quay.io/coreos/flannel:v0.13.0
        command:
        - cp
        args:
        - -f
        - /etc/kube-flannel/cni-conf.json
        - /etc/cni/net.d/10-flannel.conflist
        volumeMounts:
        - name: cni
          mountPath: /etc/cni/net.d
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      containers:
      - name: kube-flannel
        image: quay.io/coreos/flannel:v0.13.0
        command:
        - /opt/bin/flanneld
        args:
        - --ip-masq
        - --kube-subnet-mgr
        resources:
          requests:
            cpu: "100m"
            memory: "50Mi"
          limits:
            cpu: "100m"
            memory: "50Mi"
        securityContext:
          privileged: false
          capabilities:
            add: ["NET_ADMIN", "NET_RAW"]
        env:
        - name: POD_NAME
          valueFrom:
            fieldRef:
              fieldPath: metadata.name
        - name: POD_NAMESPACE
          valueFrom:
            fieldRef:
              fieldPath: metadata.namespace
        volumeMounts:
        - name: run
          mountPath: /run/flannel
        - name: flannel-cfg
          mountPath: /etc/kube-flannel/
      volumes:
      - name: run
        hostPath:
          path: /run/flannel
      - name: cni
        hostPath:
          path: /etc/cni/net.d
      - name: flannel-cfg
        configMap:
          name: kube-flannel-cfg

Apply the kube-flannel.yml file

kubectl apply -f kube-flannel.yml

Output:

[root@k8s-master k8s]# kubectl apply -f kube-flannel.yml

namespace/kube-flannel created

clusterrole.rbac.authorization.k8s.io/flannel configured

clusterrolebinding.rbac.authorization.k8s.io/flannel configured

serviceaccount/flannel created

configmap/kube-flannel-cfg created

daemonset.apps/kube-flannel-ds created

Check the running pods (a single pod can run several containers)

kubectl get pods -n kube-system

Startup may take a while.

# After it succeeds

[root@k8s-master k8s]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master Ready control-plane,master 52m v1.23.6

k8s-node1 Ready <none> 30m v1.23.6

k8s-node2 Ready <none> 24m v1.23.6
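Since the nodes take a while to become Ready, a polling loop saves re-typing the command. A minimal sketch; not_ready_nodes and wait_for_nodes are hypothetical names, and the parser assumes the default kubectl get nodes column layout shown above:

```shell
#!/usr/bin/env bash
# not_ready_nodes: read "kubectl get nodes" output on stdin and print the
# names of nodes whose STATUS column is anything other than Ready.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# wait_for_nodes [TRIES]: poll every 5 seconds (60 tries by default) until
# kubectl answers and every node is Ready; fail if the tries run out.
wait_for_nodes() {
  local tries="${1:-60}" out pending
  while [ "$tries" -gt 0 ]; do
    if out=$(kubectl get nodes 2>/dev/null); then
      pending=$(printf '%s\n' "$out" | not_ready_nodes)
      if [ -z "$pending" ]; then
        return 0
      fi
      echo "still waiting for:" $pending
    fi
    sleep 5
    tries=$((tries - 1))
  done
  return 1
}
```

kubectl also offers a built-in alternative, `kubectl wait --for=condition=Ready node --all --timeout=300s`, which avoids parsing the table.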

Deployment is complete!