Hardware Decoding on Xavier NX with OpenCV + GStreamer

Contents

- Preface

- I. What is NVCODEC?

- II. Building OpenCV

- 1. Preparing the environment

- 2. Building

- 3. Test code

- Summary

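Preface

I have recently been working on a project on the NX that feeds frames from an RTSP stream into AI inference. It turned out that OpenCV's VideoCapture was decoding in software, which consumed a lot of CPU and hurt real-world performance. The fix was to move decoding onto the hardware decoder and take that load off the CPU; the rest of this post walks through how. I am using an NX, so this tutorial is based on the Xavier NX. It may well work on other Jetson products, but you will need to test that yourself.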

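I. What is NVCODEC?

I have covered this at length in other posts, so I won't repeat it here. In short, it is the hardware video encoding and decoding that Nvidia provides: a dedicated hardware block that is very good at processing video. That does not mean it uses no CPU or memory at all.

There are several ways to use hardware decoding. Here I cover the OpenCV + GStreamer combination; the other approaches are left for you to explore.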

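II. Building OpenCV

1. Preparing the environment

First you need an NX that has already been flashed, and you must tick the CUDA-related options when flashing, because this tutorial needs CUDA and cuDNN. Then install the required packages. There are a lot of them, so using a mirror inside China is recommended.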

    sudo apt-get update
    sudo apt-get dist-upgrade -y --autoremove
    sudo apt-get install -y \
        build-essential \
        cmake \
        git \
        gfortran \
        libatlas-base-dev \
        libavcodec-dev \
        libavformat-dev \
        libavresample-dev \
        libcanberra-gtk3-module \
        libdc1394-22-dev \
        libeigen3-dev \
        libglew-dev \
        libgstreamer-plugins-base1.0-dev \
        libgstreamer-plugins-good1.0-dev \
        libgstreamer1.0-dev \
        libgtk-3-dev \
        libjpeg-dev \
        libjpeg8-dev \
        libjpeg-turbo8-dev \
        liblapack-dev \
        liblapacke-dev \
        libopenblas-dev \
        libpng-dev \
        libpostproc-dev \
        libswscale-dev \
        libtbb-dev \
        libtbb2 \
        libtesseract-dev \
        libtiff-dev \
        libv4l-dev \
        libxine2-dev \
        libxvidcore-dev \
        libx264-dev \
        pkg-config \
        python-dev \
        python-numpy \
        python3-dev \
        python3-numpy \
        python3-matplotlib \
        qv4l2 \
        v4l-utils \
        v4l2ucp \
        zlib1g-dev

Next, download opencv-4.5.1 and opencv_contrib-4.5.1. I used version 4.5.1; other versions are untested here, so try them at your own risk.

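2. Building

If you selected OpenCV when flashing, you need to remove that bundled OpenCV before going further. If the bundled build already meets the requirement, there is no need to rebuild; if it does not, read on.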
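One way to check whether an installed OpenCV already meets the requirement is to look for the GStreamer entry in its build summary. The sketch below assumes the summary returned by `cv2.getBuildInformation()` contains a line of the form `GStreamer: YES (version)`; `has_gstreamer` is a hypothetical helper name, not part of OpenCV.

```python
import re


def has_gstreamer(build_info):
    """Return True if an OpenCV build summary reports GStreamer as enabled.

    Assumes the summary has a line like 'GStreamer:   YES (1.14.5)'.
    """
    for line in build_info.splitlines():
        match = re.match(r"\s*GStreamer:\s*(\S+)", line)
        if match:
            return match.group(1).upper() == "YES"
    # No GStreamer line at all: treat the build as lacking support.
    return False


# Usage on the board (needs the cv2 module to be importable):
#   import cv2
#   print(has_gstreamer(cv2.getBuildInformation()))
```

If this prints `False`, or `cv2` cannot be imported at all, rebuilding as described next is the way to go.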

    cd opencv-4.5.1
    mkdir build && cd build
    cmake -D CMAKE_BUILD_TYPE=RELEASE \
        -D CMAKE_INSTALL_PREFIX=/usr/local \
        -D ENABLE_PRECOMPILED_HEADERS=OFF \
        -D INSTALL_C_EXAMPLES=OFF \
        -D INSTALL_PYTHON_EXAMPLES=OFF \
        -D BUILD_opencv_python2=OFF \
        -D BUILD_opencv_python3=ON \
        -D PYTHON_DEFAULT_EXECUTABLE=$(/usr/bin/python3 -c "import sys; print(sys.executable)") \
        -D PYTHON3_EXECUTABLE=$(/usr/bin/python3 -c "import sys; print(sys.executable)") \
        -D PYTHON3_NUMPY_INCLUDE_DIRS=$(/usr/bin/python3 -c "import numpy; print (numpy.get_include())") \
        -D PYTHON3_PACKAGES_PATH=$(/usr/bin/python3 -c "from distutils.sysconfig import get_python_lib; print(get_python_lib())") \
        -D WITH_V4L=ON \
        -D WITH_LIBV4L=ON \
        -D WITH_CUDA=ON \
        -D CUDA_CUDA_LIBRARY=ON \
        -D WITH_CUDNN=ON \
        -D CUDNN_VERSION='8.0' \
        -D ENABLE_FAST_MATH=ON \
        -D CUDA_FAST_MATH=ON \
        -D WITH_CUBLAS=ON \
        -D WITH_OPENGL=ON \
        -D WITH_FFMPEG=ON \
        -D CUDA_ARCH_BIN=5.3,6.2,7.2 \
        -D CUDA_ARCH_PTX= \
        -D EIGEN_INCLUDE_PATH=/usr/include/eigen3 \
        -D ENABLE_NEON=ON \
        -D OPENCV_DNN_CUDA=ON \
        -D OPENCV_ENABLE_NONFREE=ON \
        -D OPENCV_GENERATE_PKGCONFIG=ON \
        -D WITH_GSTREAMER=ON \
        -D OPENCV_EXTRA_MODULES_PATH=../../opencv_contrib-4.5.1/modules ..

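If you don't want OpenCV tied to NumPy, drop the NumPy option, and drop FFMPEG if you don't need it. Once CMake finishes configuring, check its summary to confirm that the libraries you enabled were actually found: each one must show YES, followed by a version number. I am on Ubuntu 18.04, so your version numbers should be close to mine; the exact values don't matter as long as the library is found. Then build:

    make -j5
    sudo make install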

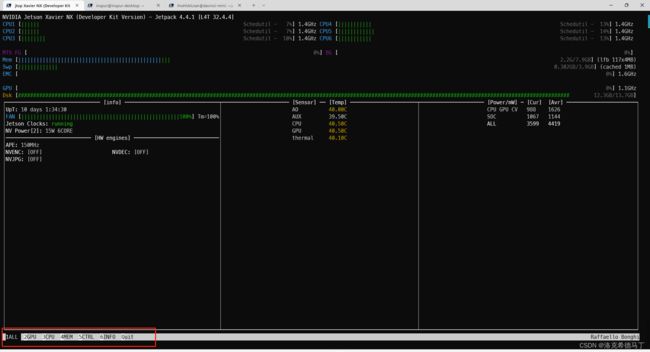

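In my testing, 5 build threads worked well, provided the 6-core setting is enabled. You can set that in jtop.

Look at the row marked with the red box: these pages are switched with the number keys. Switch to 5 (the control page).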

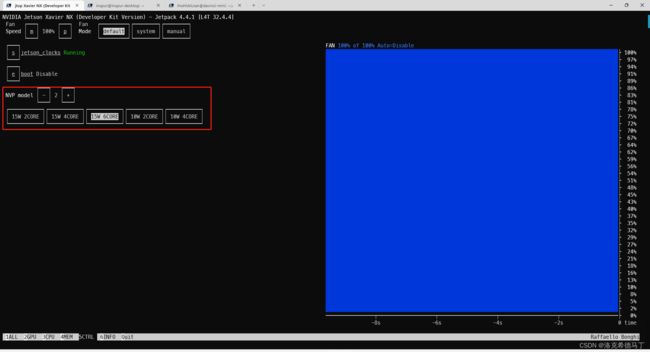

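Press 's' to turn on jetson_clocks; the GPU frequency then goes from 114 MHz up to 1.1 GHz.

NVP model sets the performance mode. With 15W 2CORE only 2 CPU cores are available, each running at up to 1.9 GHz; with 15W 6CORE each core tops out at 1.4 GHz. Set it according to your actual needs.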
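Since the number of usable cores depends on the power mode, the `-j` value can be derived rather than hard-coded. A minimal sketch, assuming a "leave one core free" rule of thumb (my reading of the 5-threads-on-6-cores choice above, not a measured optimum) and assuming `os.cpu_count()` reflects the cores the current mode leaves online; `suggest_make_jobs` is a hypothetical helper name.

```python
import os


def suggest_make_jobs(online_cores=None):
    """Suggest a value for `make -j`, leaving one core free when possible.

    The cores-minus-one rule is an assumption, not a benchmarked result.
    """
    if online_cores is None:
        online_cores = os.cpu_count() or 1
    return max(1, online_cores - 1)


# In 6-core mode this suggests 5; in 2-core mode it suggests 1.
print("make -j{}".format(suggest_make_jobs()))
```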

3. Test code

    # --------------------------------------------------------
    # Camera sample code for Tegra X2/X1
    #
    # This program could capture and display video from
    # IP CAM, USB webcam, or the Tegra onboard camera.
    # Refer to the following blog post for how to set up
    # and run the code:
    # https://jkjung-avt.github.io/tx2-camera-with-python/
    #
    # Written by JK Jung

I turned off the display; turn it back on if you need it.
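For reference, here is a minimal sketch of how an RTSP stream might be opened through the hardware decoder using OpenCV's GStreamer backend. This is not the sample code credited above. It assumes an H.264 stream and assumes that the `nvv4l2decoder` and `nvvidconv` elements are available in the GStreamer installation that ships with JetPack; the function names, default sizes, and the URL in the usage comment are placeholders.

```python
def build_rtsp_pipeline(uri, width=1280, height=720, latency=200):
    """Build a GStreamer pipeline string for hardware H.264 decoding.

    Element names are assumptions about the Jetson GStreamer plugins;
    adjust them if your JetPack release provides different ones.
    """
    return (
        f"rtspsrc location={uri} latency={latency} ! "
        "rtph264depay ! h264parse ! "
        # Assumed hardware decoder element on the Jetson.
        "nvv4l2decoder ! "
        # Scale and convert out of the decoder's native format.
        "nvvidconv ! "
        f"video/x-raw, width=(int){width}, height=(int){height}, "
        "format=(string)BGRx ! "
        # Final conversion to the BGR layout OpenCV expects.
        "videoconvert ! video/x-raw, format=(string)BGR ! "
        # Keep only the newest frame so inference never lags behind.
        "appsink drop=true max-buffers=1"
    )


def open_rtsp(uri, **kwargs):
    """Open the stream with OpenCV; requires a GStreamer-enabled build."""
    import cv2  # imported lazily so the string builder works without OpenCV

    cap = cv2.VideoCapture(build_rtsp_pipeline(uri, **kwargs), cv2.CAP_GSTREAMER)
    if not cap.isOpened():
        raise RuntimeError("could not open pipeline; check GStreamer support")
    return cap


# Usage on the board (placeholder URL):
#   cap = open_rtsp("rtsp://192.168.1.10:554/stream", width=1280, height=720)
#   ok, frame = cap.read()
#   cap.release()
```

Passing `cv2.CAP_GSTREAMER` explicitly makes OpenCV fail fast if the build lacks GStreamer, rather than silently falling back to another backend.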

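Summary

I am on JetPack 4.4.2. The preinstalled OpenCV (4.5.4) comes without GStreamer, so it has to be removed and rebuilt. As long as the dependencies are installed, the build goes through in one pass; I did not hit any problems. It does differ from how you would build on an x86 server.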