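iOS Audio and Video (2): Video Capture with AVFoundation

- 1. Media capture concepts
- 2. Video capture walkthrough
  - 2.1 Creating the preview view
  - 2.2 Setting up the capture session
  - 2.3 Starting and stopping the session
  - 2.4 Switching cameras
  - 2.5 Adjusting focus and exposure; flash and torch modes
    - 2.5.1 Focus
    - 2.5.2 Exposure
    - 2.5.3 Flash
    - 2.5.4 Torch
  - 2.6 Capturing still images
  - 2.7 Capturing video
  - 2.8 Video zoom
  - 2.9 Video editing
  - 2.10 High-frame-rate capture
  - 2.11 Face detection
  - 2.12 QR code recognition
- 3. Examples
  - 3.1 Photo and video capture demo (Swift version)
    - 3.1.1 Configuring the capture session
    - 3.1.2 Requesting authorization for the input devices
    - 3.1.3 Switching between the front and rear cameras
    - 3.1.4 Handling interruptions and errors
    - 3.1.5 Capturing a photo
    - 3.1.6 Tracking results through the photo capture delegate
    - 3.1.7 Capturing Live Photos
    - 3.1.8 Capturing depth data and the Portrait Effects Matte
    - 3.1.9 Capturing semantic segmentation mattes
    - 3.1.10 Saving photos to the user's photo library
    - 3.1.11 Recording movie files
    - 3.1.12 Taking photos while recording video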

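- The previous post, "iOS Audio and Video (1): AVFoundation Core Classes", gave a brief overview of AVFoundation's core classes and touched on its video capture features.

- Demo downloads for this post: Swift video capture demo, Objective-C camera capture demo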

1. 媒体捕捉概念

- 理解捕捉媒体,需要先了解一些基本概念:

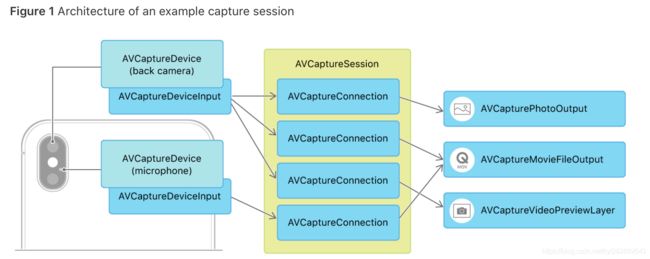

- 捕捉会话:

AVCaptureSession 是管理捕获活动并协调从输入设备到捕获输出的数据流的对象。 AVCaptureSession 用于连接输入和输出的资源,从物理设备如摄像头和麦克风等获取数据流,输出到一个或多个目的地。 AVCaptureSession 可以额外配置一个会话预设值(session preset),用于控制捕捉数据的格式和质量,预设值默认值为 AVCaptureSessionPresetHigh。

要执行实时捕获,需要实例化AVCaptureSession对象并添加适当的输入和输出。下面的代码片段演示了如何配置捕获设备来录制音频。

// Create the capture session.
let captureSession = AVCaptureSession()

// Find the default audio device.
guard let audioDevice = AVCaptureDevice.default(for: .audio) else { return }

do {
    // Wrap the audio device in a capture device input.
    let audioInput = try AVCaptureDeviceInput(device: audioDevice)
    // If the input can be added, add it to the session.
    if captureSession.canAddInput(audioInput) {
        captureSession.addInput(audioInput)
    }
} catch {
    // Configuration failed. Handle error.
}

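Call startRunning() to start the flow of data from the inputs to the outputs, and stopRunning() to stop it.

Note:

startRunning() is a blocking call that can take some time, so perform session setup and startup on a serial queue rather than the main queue; this keeps the UI responsive. See Apple's sample "AVCam: Building a Camera App" for an implementation example.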
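The advice in the note can be sketched in Swift roughly as follows. This is a minimal sketch rather than code from the demo project: the `SessionRunner` class name and the queue label are assumptions made for illustration.

```swift
import AVFoundation

/// Hypothetical wrapper; the class name and queue label are illustrative only.
final class SessionRunner {
    let session = AVCaptureSession()

    // A serial queue keeps start/stop calls ordered and off the main thread.
    private let sessionQueue = DispatchQueue(label: "example.session.queue")

    func start() {
        sessionQueue.async { [session] in
            if !session.isRunning {
                session.startRunning()   // blocking call, so never on the main queue
            }
        }
    }

    func stop() {
        sessionQueue.async { [session] in
            if session.isRunning {
                session.stopRunning()
            }
        }
    }
}
```

Using one serial queue for every session operation also means the isRunning check and the start or stop call cannot interleave with each other.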

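- Capture device:

AVCaptureDevice represents a device that supplies input (such as audio or video) to a capture session and exposes controls for hardware-specific capture features. It defines a uniform interface over physical devices along with a large number of control methods. The default device for a given media type is obtained like this:

self.activeVideoDevice = [AVCaptureDevice defaultDeviceWithMediaType:AVMediaTypeVideo];

- An AVCaptureDevice object represents one physical capture device and the properties associated with it. You use it to configure the underlying hardware, and it provides input data (such as audio or video) to an AVCaptureSession.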

- Capture device input:

An AVCaptureDevice cannot be added to an AVCaptureSession directly; it must first be wrapped in an AVCaptureDeviceInput.

NSError *videoError = nil;
self.captureVideoInput = [AVCaptureDeviceInput deviceInputWithDevice:self.activeVideoDevice error:&videoError];
if (self.captureVideoInput) {
    if ([self.captureSession canAddInput:self.captureVideoInput]) {
        [self.captureSession addInput:self.captureVideoInput];
    }
} else if (videoError) {
    // Creating the input failed; handle videoError here.
}

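- Capture outputs:

AVCaptureOutput is the abstract base class for the destinations that receive a capture session's data. The framework provides these higher-level concrete subclasses:

- AVCaptureStillImageOutput: still photos (deprecated since iOS 10; use AVCapturePhotoOutput instead)
- AVCaptureMovieFileOutput: movie files
- AVCaptureAudioFileOutput: audio files (available on macOS only)
- AVCaptureAudioDataOutput: raw audio sample buffers
- AVCaptureVideoDataOutput: raw video sample buffers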

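- Capture connection:

AVCaptureConnection represents a connection between a specific pair of capture input and capture output objects in a session. Connections let you determine which inputs supply video and which supply audio, disable a particular connection, or access individual audio channels.

- A capture input has one or more input ports (instances of AVCaptureInputPort, exposed in Swift as AVCaptureInput.Port). A capture output can accept data from one or more sources; for example, an AVCaptureMovieFileOutput accepts both video and audio.

You can add an AVCaptureConnection to a session with addConnection(_:) only if canAddConnection(_:) returns true. When you use addInput(_:) or addOutput(_:), the session automatically forms connections between all compatible inputs and outputs, so you only need to add connections manually when you added an input or output without connections. You can also use a connection to enable or disable the flow of data from a given input or to a given output.

- Capture preview:

AVCaptureVideoPreviewLayer is a CALayer subclass that displays a live preview of the captured video.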

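2. Video capture walkthrough

- The project code for this walkthrough can be downloaded here: Objective-C camera capture demo

- The project is written in Objective-C, and most of the functionality is implemented in THCameraController.

- Its main interface is declared in the header file THCameraController.h: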

#import <AVFoundation/AVFoundation.h>

extern NSString *const THThumbnailCreatedNotification;

@protocol THCameraControllerDelegate <NSObject>

// 1. Called on the delegate when an error occurs
- (void)deviceConfigurationFailedWithError:(NSError *)error;
- (void)mediaCaptureFailedWithError:(NSError *)error;
- (void)assetLibraryWriteFailedWithError:(NSError *)error;

@end

@interface THCameraController : NSObject

@property (weak, nonatomic) id<THCameraControllerDelegate> delegate;
@property (nonatomic, strong, readonly) AVCaptureSession *captureSession;

// 2. Set up and configure the capture session
- (BOOL)setupSession:(NSError **)error;
- (void)startSession;
- (void)stopSession;

// 3. Switch between cameras
- (BOOL)switchCameras;
- (BOOL)canSwitchCameras;

@property (nonatomic, readonly) NSUInteger cameraCount;
@property (nonatomic, readonly) BOOL cameraHasTorch;            // torch available
@property (nonatomic, readonly) BOOL cameraHasFlash;            // flash available
@property (nonatomic, readonly) BOOL cameraSupportsTapToFocus;  // tap-to-focus supported
@property (nonatomic, readonly) BOOL cameraSupportsTapToExpose; // tap-to-expose supported
@property (nonatomic) AVCaptureTorchMode torchMode;             // torch mode
@property (nonatomic) AVCaptureFlashMode flashMode;             // flash mode

// 4. Focus, exposure, and resetting both
- (void)focusAtPoint:(CGPoint)point;
- (void)exposeAtPoint:(CGPoint)point;
- (void)resetFocusAndExposureModes;

// 5. Still image and video capture

// Capture a still image
- (void)captureStillImage;

// Video recording
// Start recording
- (void)startRecording;
// Stop recording
- (void)stopRecording;
// Whether a recording is in progress
- (BOOL)isRecording;
// Duration recorded so far
- (CMTime)recordedDuration;

@end

- The app also needs permission to use the camera and microphone (with the NSCameraUsageDescription and NSMicrophoneUsageDescription keys in Info.plist). Without that permission, creating captureVideoInput fails.

/// Checks the AVAuthorization status.
/// targetAVMediaType: the media type to check, AVMediaTypeVideo or AVMediaTypeAudio.
/// grantedCallback: called on the main queue if the user grants access after being prompted.
/// Returns YES only if access has already been authorized.
- (BOOL)ifAVAuthorizationValid:(NSString *)targetAVMediaType grantedCallback:(void (^)(void))grantedCallback
{
    NSString *mediaType = targetAVMediaType;
    BOOL result = NO;
    if ([AVCaptureDevice respondsToSelector:@selector(authorizationStatusForMediaType:)]) {
        AVAuthorizationStatus authStatus = [AVCaptureDevice authorizationStatusForMediaType:mediaType];
        switch (authStatus) {
            case AVAuthorizationStatusNotDetermined: { // Authorization has not been requested yet
                [AVCaptureDevice requestAccessForMediaType:targetAVMediaType completionHandler:^(BOOL granted) {
                    dispatch_async(dispatch_get_main_queue(), ^{
                        if (granted && grantedCallback) {
                            grantedCallback();
                        }
                    });
                }];
                break;
            }
            case AVAuthorizationStatusDenied: { // Explicitly denied by the user
                // METSettingPermissionAlertView is the project's own alert helper.
                if ([mediaType isEqualToString:AVMediaTypeVideo]) {
                    [METSettingPermissionAlertView showAlertViewWithPermissionType:METSettingPermissionTypeCamera]; // Ask the user to enable camera access
                } else if ([mediaType isEqualToString:AVMediaTypeAudio]) {
                    [METSettingPermissionAlertView showAlertViewWithPermissionType:METSettingPermissionTypeMicrophone]; // Ask the user to enable microphone access
                }
                break;
            }
            case AVAuthorizationStatusRestricted: { // Restricted; the user cannot change it
                break;
            }
            case AVAuthorizationStatusAuthorized: { // Already authorized
                result = YES;
                break;
            }
            default: // Fallback
                break;
        }
    }
    return result;
}
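For comparison, the same authorization flow might look like this in Swift. It is a sketch only: the function name `requestCaptureAccess` is hypothetical, and unlike the Objective-C method it reports every outcome through a single completion handler instead of returning a value.

```swift
import AVFoundation

/// Hypothetical helper: reports whether capture of `mediaType` is allowed,
/// prompting the user first if no decision has been made yet.
func requestCaptureAccess(for mediaType: AVMediaType,
                          completion: @escaping (Bool) -> Void) {
    switch AVCaptureDevice.authorizationStatus(for: mediaType) {
    case .authorized:
        completion(true)
    case .notDetermined:
        AVCaptureDevice.requestAccess(for: mediaType) { granted in
            // The handler runs on an arbitrary queue; hop to main for UI work.
            DispatchQueue.main.async { completion(granted) }
        }
    case .denied, .restricted:
        // The app cannot prompt again; direct the user to Settings instead.
        completion(false)
    @unknown default:
        completion(false)
    }
}
```

A caller would typically invoke it with `.video` before configuring the session and with `.audio` before adding the microphone input.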

2.1 Creating the preview view

- You can add an AVCaptureVideoPreviewLayer directly to a view's layer:

self.previewLayer = [[AVCaptureVideoPreviewLayer alloc] init];
[self.previewLayer setVideoGravity:AVLayerVideoGravityResizeAspectFill];
[self.previewLayer setSession:self.cameraHelper.captureSession];
self.previewLayer.frame = CGRectMake(0, 0, SCREEN_WIDTH, SCREEN_HEIGHT - 50);
[self.previewImageView.layer addSublayer:self.previewLayer];

- Alternatively, override the view's layerClass class method so that the view's backing layer is itself an AVCaptureVideoPreviewLayer:

+ (Class)layerClass {
    return [AVCaptureVideoPreviewLayer class];
}

- (AVCaptureSession *)session {
    return [(AVCaptureVideoPreviewLayer *)self.layer session];
}

- (void)setSession:(AVCaptureSession *)session {
    [(AVCaptureVideoPreviewLayer *)self.layer setSession:session];
}

- AVCaptureVideoPreviewLayer defines two methods for converting between the layer's (screen) coordinate space and the capture device's coordinate space. In device coordinates the top-left corner is (0,0) and the bottom-right corner is (1,1).

- (CGPoint)captureDevicePointOfInterestForPoint:(CGPoint)pointInLayer converts a point from layer coordinates to device coordinates.
- (CGPoint)pointForCaptureDevicePointOfInterest:(CGPoint)captureDevicePointOfInterest converts a point from device coordinates to layer coordinates.
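In Swift the layer-to-device conversion is exposed as `captureDevicePointConverted(fromLayerPoint:)`. A minimal sketch of using it for a tap location follows; the wrapper function name is an assumption for illustration, and the result is only meaningful once the layer is attached to a configured session.

```swift
import AVFoundation
import CoreGraphics

/// Hypothetical helper: converts a tap in the preview layer into a
/// device point of interest in the normalized (0,0)-(1,1) space.
func devicePoint(forTapAt layerPoint: CGPoint,
                 in previewLayer: AVCaptureVideoPreviewLayer) -> CGPoint {
    // Swift spelling of captureDevicePointOfInterestForPoint:
    return previewLayer.captureDevicePointConverted(fromLayerPoint: layerPoint)
}
```

The returned point can be passed straight to methods such as focusAtPoint: or exposeAtPoint:, which expect device coordinates.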

2.2 Setting up the capture session

- First, create the capture session:

self.captureSession = [[AVCaptureSession alloc] init];
[self.captureSession setSessionPreset:(self.isVideoMode) ? AVCaptureSessionPreset1280x720 : AVCaptureSessionPresetPhoto];

- The preset depends on whether you are shooting video or photos. Next, configure the session inputs:

- (void)configSessionInput
{
    // Camera input
    NSError *videoError = nil;
    self.activeVideoDevice = [AVCaptureDevice defaultDeviceWithMediaType:AVMediaTypeVideo];
    self.flashMode = self.activeVideoDevice.flashMode;
    self.captureVideoInput = [AVCaptureDeviceInput deviceInputWithDevice:self.activeVideoDevice error:&videoError];
    if (self.captureVideoInput) {
        if ([self.captureSession canAddInput:self.captureVideoInput]) {
            [self.captureSession addInput:self.captureVideoInput];
        }
    } else if (videoError) {
        // Handle the camera input error here.
    }

    if (self.isVideoMode) {
        // Microphone input
        NSError *audioError = nil;
        AVCaptureDeviceInput *audioInput = [AVCaptureDeviceInput deviceInputWithDevice:[AVCaptureDevice defaultDeviceWithMediaType:AVMediaTypeAudio] error:&audioError];
        if (audioInput) {
            if ([self.captureSession canAddInput:audioInput]) {
                [self.captureSession addInput:audioInput];
            }
        } else if (audioError) {
            // Handle the microphone input error here.
        }
    }
}

- Both the camera and the microphone are wrapped in an AVCaptureDeviceInput before being added to the session. Next, configure the session outputs:

- (void)configSessionOutput
{
    if (self.isVideoMode) {
        // Movie output
        self.movieFileOutput = [[AVCaptureMovieFileOutput alloc] init];
        if ([self.captureSession canAddOutput:self.movieFileOutput]) {
            [self.captureSession addOutput:self.movieFileOutput];
        }
    } else {
        // Still image output
        self.imageOutput = [[AVCaptureStillImageOutput alloc] init];
        // outputSettings requests JPEG-encoded images
        self.imageOutput.outputSettings = @{AVVideoCodecKey : AVVideoCodecJPEG};
        if ([self.captureSession canAddOutput:self.imageOutput]) {
            [self.captureSession addOutput:self.imageOutput];
        }
    }
}

- You can of course do all of this in a single method that sets up the whole capture session:

- (BOOL)setupSession:(NSError **)error {
    // Create the capture session. AVCaptureSession is the central hub of capture.
    self.captureSession = [[AVCaptureSession alloc] init];

    /*
     Available presets include:
     AVCaptureSessionPresetHigh
     AVCaptureSessionPresetMedium
     AVCaptureSessionPresetLow
     AVCaptureSessionPreset640x480
     AVCaptureSessionPreset1280x720
     AVCaptureSessionPresetPhoto
     */
    // Set the capture quality / resolution
    self.captureSession.sessionPreset = AVCaptureSessionPresetHigh;

    // Get the default video capture device; on iOS this is the rear camera
    AVCaptureDevice *videoDevice = [AVCaptureDevice defaultDeviceWithMediaType:AVMediaTypeVideo];

    // A device must be wrapped in an AVCaptureDeviceInput before it can be added to the session
    AVCaptureDeviceInput *videoInput = [AVCaptureDeviceInput deviceInputWithDevice:videoDevice error:error];

    // Check that videoInput was created
    if (videoInput) {
        // canAddInput: tests whether the input can be added to the session
        if ([self.captureSession canAddInput:videoInput]) {
            // Add videoInput to the session
            [self.captureSession addInput:videoInput];
            self.activeVideoInput = videoInput;
        }
    } else {
        return NO;
    }

    // Get the default audio capture device, i.e. the built-in microphone
    AVCaptureDevice *audioDevice = [AVCaptureDevice defaultDeviceWithMediaType:AVMediaTypeAudio];

    // Create a capture device input for it
    AVCaptureDeviceInput *audioInput = [AVCaptureDeviceInput deviceInputWithDevice:audioDevice error:error];

    // Check that audioInput was created
    if (audioInput) {
        // canAddInput: tests whether the input can be added to the session
        if ([self.captureSession canAddInput:audioInput]) {
            // Add audioInput to the session
            [self.captureSession addInput:audioInput];
        }
    } else {
        return NO;
    }

    // AVCaptureStillImageOutput captures still images from the camera
    self.imageOutput = [[AVCaptureStillImageOutput alloc] init];

    // Settings dictionary: request JPEG-encoded images
    self.imageOutput.outputSettings = @{AVVideoCodecKey : AVVideoCodecJPEG};

    // Add the output to the session if it can be added
    if ([self.captureSession canAddOutput:self.imageOutput]) {
        [self.captureSession addOutput:self.imageOutput];
    }

    // AVCaptureMovieFileOutput records QuickTime movies to the file system
    self.movieOutput = [[AVCaptureMovieFileOutput alloc] init];

    // Add the output to the session if it can be added
    if ([self.captureSession canAddOutput:self.movieOutput]) {
        [self.captureSession addOutput:self.movieOutput];
    }

    // Serial queue used for session start/stop and thumbnail generation
    self.videoQueue = dispatch_queue_create("com.kongyulu.VideoQueue", NULL);

    return YES;
}

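2.3 Starting and stopping the session

- You can start and stop the session within a view controller's life cycle. Because these calls are synchronous and relatively slow, run them off the main thread, for example on a dedicated queue:

- (void)startSession {
    // Only start if the session is not already running
    if (![self.captureSession isRunning]) {
        // startRunning blocks for a while, so call it asynchronously
        dispatch_async(self.videoQueue, ^{
            [self.captureSession startRunning];
        });
    }
}

- (void)stopSession {
    // Only stop if the session is running
    if ([self.captureSession isRunning]) {
        // Stop asynchronously as well
        dispatch_async(self.videoQueue, ^{
            [self.captureSession stopRunning];
        });
    }
}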

2.4 Switching cameras

- Most iOS devices have both a front and a rear camera. The AVCaptureDevicePosition enumeration identifies which is which:

typedef NS_ENUM(NSInteger, AVCaptureDevicePosition) {
    AVCaptureDevicePositionUnspecified = 0, // unspecified
    AVCaptureDevicePositionBack        = 1, // rear camera
    AVCaptureDevicePositionFront       = 2, // front camera
};

- Next, get the currently active camera and the inactive one:

- (AVCaptureDevice *)activeCamera {
    // The device behind the session's current video input
    return self.activeVideoInput.device;
}

// Returns the camera that is currently not active
- (AVCaptureDevice *)inactiveCamera {
    // Found by looking up the camera opposite the active one; nil if the device has only one camera
    AVCaptureDevice *device = nil;
    if (self.cameraCount > 1) {
        if ([self activeCamera].position == AVCaptureDevicePositionBack) {
            device = [self cameraWithPosition:AVCaptureDevicePositionFront];
        } else {
            device = [self cameraWithPosition:AVCaptureDevicePositionBack];
        }
    }
    return device;
}

- Checking whether more than one camera is available:

// Whether more than one camera is available
- (BOOL)canSwitchCameras {
    return self.cameraCount > 1;
}

- The number of available video capture devices:

// Number of available video capture devices
- (NSUInteger)cameraCount {
    return [[AVCaptureDevice devicesWithMediaType:AVMediaTypeVideo] count];
}

- The active device comes from the current AVCaptureDeviceInput; the opposite camera is then found by position:

#pragma mark - Device Configuration

- (AVCaptureDevice *)cameraWithPosition:(AVCaptureDevicePosition)position {
    // Get the available video devices
    NSArray *devices = [AVCaptureDevice devicesWithMediaType:AVMediaTypeVideo];
    // Return the first device whose position matches
    for (AVCaptureDevice *device in devices) {
        if (device.position == position) {
            return device;
        }
    }
    return nil;
}
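On iOS 10 and later, devicesWithMediaType: is deprecated in favor of AVCaptureDevice.DiscoverySession. A minimal Swift sketch of the same position lookup is shown below; the function name `camera(at:)` is hypothetical, and restricting the search to the built-in wide-angle camera is an assumption made to keep the example short.

```swift
import AVFoundation

/// Hypothetical helper: returns the built-in wide-angle camera at `position`,
/// or nil if the device has no such camera.
func camera(at position: AVCaptureDevice.Position) -> AVCaptureDevice? {
    let discovery = AVCaptureDevice.DiscoverySession(
        deviceTypes: [.builtInWideAngleCamera],
        mediaType: .video,
        position: position)
    return discovery.devices.first
}
```

Calling `camera(at: .front)` and `camera(at: .back)` gives the two candidates needed for switching.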

- Switching cameras; first check that switching is possible at all:

// Switch cameras
- (BOOL)switchCameras {
    // Bail out if there is only one camera
    if (![self canSwitchCameras]) {
        return NO;
    }

    // Get the camera opposite the active one
    NSError *error;
    AVCaptureDevice *videoDevice = [self inactiveCamera];

    // Wrap it in an AVCaptureDeviceInput
    AVCaptureDeviceInput *videoInput = [AVCaptureDeviceInput deviceInputWithDevice:videoDevice error:&error];

    // Check that videoInput was created
    if (videoInput) {
        // Mark the beginning of a configuration change
        [self.captureSession beginConfiguration];

        // Remove the current video input from the session
        [self.captureSession removeInput:self.activeVideoInput];

        // Check whether the new input can be added
        if ([self.captureSession canAddInput:videoInput]) {
            // It can: make videoInput the session's video input
            [self.captureSession addInput:videoInput];
            // and record it as the active input
            self.activeVideoInput = videoInput;
        } else {
            // It cannot: put the original video input back
            [self.captureSession addInput:self.activeVideoInput];
        }

        // commitConfiguration applies all pending changes together as one batch
        [self.captureSession commitConfiguration];
    } else {
        // Creating the AVCaptureDeviceInput failed; let the delegate handle the error
        [self.delegate deviceConfigurationFailedWithError:error];
        return NO;
    }
    return YES;
}

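Note:

- AVCaptureDevice defines many methods for controlling the cameras on an iOS device. Focus, exposure and white balance can each be adjusted and locked independently. Focus and exposure can also be set from a specific point of interest, which is what makes tap-to-focus and tap-to-expose possible. It also lets you use the device's LED as a photo flash or as a torch.

- Before changing any camera setting, always test whether the device supports the change. Not every camera supports every feature. For example, a front camera may not support focusing, because the subject is usually only about an arm's length away, whereas most rear cameras support full-range focusing. Applying an unsupported setting raises an exception and crashes the app, so check for support first.

- Once you have the target device, wrap it in an AVCaptureDeviceInput and reconfigure the session:

// Wrapping the changes in beginConfiguration / commitConfiguration applies them as a single atomic update.
[self.captureSession beginConfiguration]; // begin configuring the new video input
[self.captureSession removeInput:self.captureVideoInput]; // the old input must be removed before the new one can be added
if ([self.captureSession canAddInput:newInput]) {
    [self.captureSession addInput:newInput];
    self.activeVideoDevice = newActiveDevice;
    self.captureVideoInput = newInput;
} else {
    [self.captureSession addInput:self.captureVideoInput];
}
[self.captureSession commitConfiguration];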

2.5 Adjusting focus and exposure; flash and torch modes

2.5.1 Focus

- For focusing, isFocusPointOfInterestSupported tells you whether the device supports focusing on a point of interest, and isFocusModeSupported: tells you whether a given focus mode is supported (AVCaptureFocusModeAutoFocus is single-shot autofocus). Once both checks pass, apply the focus settings:

#pragma mark - Focus Methods (tap-to-focus)

- (BOOL)cameraSupportsTapToFocus {
    // Ask the active camera whether it supports point-of-interest focus
    return [[self activeCamera] isFocusPointOfInterestSupported];
}

- (void)focusAtPoint:(CGPoint)point {
    AVCaptureDevice *device = [self activeCamera];

    // Point-of-interest focus and autofocus mode must both be supported
    if (device.isFocusPointOfInterestSupported && [device isFocusModeSupported:AVCaptureFocusModeAutoFocus]) {
        NSError *error;
        // Lock the device for configuration
        if ([device lockForConfiguration:&error]) {
            // Set the point of interest (in device coordinates)
            device.focusPointOfInterest = point;
            // Setting the focus mode triggers focusing at that point
            device.focusMode = AVCaptureFocusModeAutoFocus;
            // Release the lock
            [device unlockForConfiguration];
        } else {
            // On failure, hand the error to the delegate
            [self.delegate deviceConfigurationFailedWithError:error];
        }
    }
}

2.5.2 Exposure

- First ask the device whether it supports exposing for a point of interest:

- (BOOL)cameraSupportsTapToExpose {
    // Ask the active camera whether it supports point-of-interest exposure
    return [[self activeCamera] isExposurePointOfInterestSupported];
}

- Exposure works much like focus. The core method is:

static const NSString *THCameraAdjustingExposureContext;

- (void)exposeAtPoint:(CGPoint)point {
    AVCaptureDevice *device = [self activeCamera];
    AVCaptureExposureMode exposureMode = AVCaptureExposureModeContinuousAutoExposure;

    // Point-of-interest exposure and continuous auto exposure must both be supported
    if (device.isExposurePointOfInterestSupported && [device isExposureModeSupported:exposureMode]) {
        NSError *error;
        // Lock the device for configuration
        if ([device lockForConfiguration:&error]) {
            // Apply the desired values
            device.exposurePointOfInterest = point;
            device.exposureMode = exposureMode;

            // Check whether the device supports locking the exposure
            if ([device isExposureModeSupported:AVCaptureExposureModeLocked]) {
                // It does: use KVO to watch the device's adjustingExposure property
                [device addObserver:self forKeyPath:@"adjustingExposure" options:NSKeyValueObservingOptionNew context:&THCameraAdjustingExposureContext];
            }

            // Release the lock
            [device unlockForConfiguration];
        } else {
            [self.delegate deviceConfigurationFailedWithError:error];
        }
    }
}

- The controller observes the device's adjustingExposure property with KVO to find out when the exposure adjustment has finished:

- (void)observeValueForKeyPath:(NSString *)keyPath
                      ofObject:(id)object
                        change:(NSDictionary *)change
                       context:(void *)context {
    // Check that the context is THCameraAdjustingExposureContext
    if (context == &THCameraAdjustingExposureContext) {
        // Get the device
        AVCaptureDevice *device = (AVCaptureDevice *)object;

        // Has the device stopped adjusting exposure, and can its exposureMode be set to locked?
        if (!device.isAdjustingExposure && [device isExposureModeSupported:AVCaptureExposureModeLocked]) {
            // Stop observing adjustingExposure so no further change notifications arrive
            [object removeObserver:self forKeyPath:@"adjustingExposure" context:&THCameraAdjustingExposureContext];

            // Dispatch back to the main queue asynchronously
            dispatch_async(dispatch_get_main_queue(), ^{
                NSError *error;
                if ([device lockForConfiguration:&error]) {
                    // Lock the exposure at its current value
                    device.exposureMode = AVCaptureExposureModeLocked;
                    // Release the lock
                    [device unlockForConfiguration];
                } else {
                    [self.delegate deviceConfigurationFailedWithError:error];
                }
            });
        }
    } else {
        [super observeValueForKeyPath:keyPath ofObject:object change:change context:context];
    }
}

- Focus and exposure can also be reset:

// Reset focus and exposure
- (void)resetFocusAndExposureModes {
    AVCaptureDevice *device = [self activeCamera];

    AVCaptureFocusMode focusMode = AVCaptureFocusModeContinuousAutoFocus;
    // Are point-of-interest focus and continuous autofocus both supported?
    BOOL canResetFocus = [device isFocusPointOfInterestSupported] && [device isFocusModeSupported:focusMode];

    AVCaptureExposureMode exposureMode = AVCaptureExposureModeContinuousAutoExposure;
    // Are point-of-interest exposure and continuous auto exposure both supported?
    BOOL canResetExposure = [device isExposurePointOfInterestSupported] && [device isExposureModeSupported:exposureMode];

    // Recall that device space runs from (0,0) at the top left to (1,1) at the bottom right, so the center is (0.5,0.5)
    CGPoint centerPoint = CGPointMake(0.5f, 0.5f);

    NSError *error;
    // Lock the device for configuration
    if ([device lockForConfiguration:&error]) {
        // Reset focus if possible: set the point first, then the mode
        if (canResetFocus) {
            device.focusPointOfInterest = centerPoint;
            device.focusMode = focusMode;
        }
        // Reset exposure if possible: set the point first, then the mode
        if (canResetExposure) {
            device.exposurePointOfInterest = centerPoint;
            device.exposureMode = exposureMode;
        }
        // Release the lock
        [device unlockForConfiguration];
    } else {
        [self.delegate deviceConfigurationFailedWithError:error];
    }
}

2.5.3 Flash

- Besides focus, the flash and the torch are also easy to control.

- Flash and torch are two distinct modes, defined as follows:

typedef NS_ENUM(NSInteger, AVCaptureFlashMode) {
    AVCaptureFlashModeOff  = 0,
    AVCaptureFlashModeOn   = 1,
    AVCaptureFlashModeAuto = 2,
};

typedef NS_ENUM(NSInteger, AVCaptureTorchMode) {
    AVCaptureTorchModeOff  = 0,
    AVCaptureTorchModeOn   = 1,
    AVCaptureTorchModeAuto = 2,
};

- Typically the flash is configured for taking photos and the torch for recording video. The code for each mode follows.

- Checking for a flash and setting the flash mode:

// Whether the active camera has a flash
- (BOOL)cameraHasFlash {
    return [[self activeCamera] hasFlash];
}

// Current flash mode
- (AVCaptureFlashMode)flashMode {
    return [[self activeCamera] flashMode];
}

// Set the flash mode
- (void)setFlashMode:(AVCaptureFlashMode)flashMode {
    // Get the active camera
    AVCaptureDevice *device = [self activeCamera];

    // Check that the requested flash mode is supported
    if ([device isFlashModeSupported:flashMode]) {
        // If so, lock the device for configuration
        NSError *error;
        if ([device lockForConfiguration:&error]) {
            // Change the flash mode
            device.flashMode = flashMode;
            // Done; release the lock
            [device unlockForConfiguration];
        } else {
            [self.delegate deviceConfigurationFailedWithError:error];
        }
    }
}

2.5.4 Torch

- Checking for torch support:

// Whether the active camera has a torch
- (BOOL)cameraHasTorch {
    return [[self activeCamera] hasTorch];
}

- Reading and setting the torch mode, which turns the torch on or off:

// Current torch mode
- (AVCaptureTorchMode)torchMode {
    return [[self activeCamera] torchMode];
}

// Turn the torch on or off
- (void)setTorchMode:(AVCaptureTorchMode)torchMode {
    AVCaptureDevice *device = [self activeCamera];
    if ([device isTorchModeSupported:torchMode]) {
        NSError *error;
        if ([device lockForConfiguration:&error]) {
            device.torchMode = torchMode;
            [device unlockForConfiguration];
        } else {
            [self.delegate deviceConfigurationFailedWithError:error];
        }
    }
}

2.6 Capturing still images

- When setting up the capture session we added an AVCaptureStillImageOutput instance to it (note: AVCaptureStillImageOutput has been deprecated since iOS 10 in favor of AVCapturePhotoOutput). This output is what captures still images. Start by getting its video connection:

AVCaptureConnection *connection = [self.cameraHelper.imageOutput connectionWithMediaType:AVMediaTypeVideo];
if ([connection isVideoOrientationSupported]) {
    [connection setVideoOrientation:self.cameraHelper.videoOrientation];
}
if (!connection.enabled || !connection.isActive) { // the connection is not usable
    // Handle the invalid state
    return;
}

- After getting an AVCaptureConnection from the AVCaptureStillImageOutput instance, set the connection's orientation. There are two ways to determine the value:

- from Core Motion gravity data
- from UIDevice

- Using gravity data to update the orientation:

// Monitor gravity and adjust the orientation accordingly
CMMotionManager *motionManager = [[CMMotionManager alloc] init];
motionManager.deviceMotionUpdateInterval = 1 / 15.0;
if (motionManager.deviceMotionAvailable) {
    [motionManager startDeviceMotionUpdatesToQueue:[NSOperationQueue currentQueue]
                                       withHandler:^(CMDeviceMotion *motion, NSError *error) {
        double x = motion.gravity.x;
        double y = motion.gravity.y;
        if (fabs(y) >= fabs(x)) { // the y component dominates
            if (y >= 0) { // top of the device points down
                self.videoOrientation = AVCaptureVideoOrientationPortraitUpsideDown; // UIDeviceOrientationPortraitUpsideDown
            } else { // top of the device points up
                self.videoOrientation = AVCaptureVideoOrientationPortrait; // UIDeviceOrientationPortrait
            }
        } else {
            if (x >= 0) { // top of the device points right
                self.videoOrientation = AVCaptureVideoOrientationLandscapeLeft; // UIDeviceOrientationLandscapeRight
            } else { // top of the device points left
                self.videoOrientation = AVCaptureVideoOrientationLandscapeRight; // UIDeviceOrientationLandscapeLeft
            }
        }
    }];
    self.motionManager = motionManager;
} else {
    self.videoOrientation = AVCaptureVideoOrientationPortrait;
}
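The branching in the gravity handler above can be isolated as a pure function, which makes the mapping easy to check without a device. The sketch below is illustrative only: `VideoOrientation` is a stand-in enum introduced here so the example has no framework dependency, and the function name is hypothetical.

```swift
/// Stand-in for AVCaptureVideoOrientation (assumption: used only for this sketch).
enum VideoOrientation {
    case portrait, portraitUpsideDown, landscapeLeft, landscapeRight
}

/// Maps the x and y components of the gravity vector to a video orientation,
/// following the same branches as the Core Motion handler.
func videoOrientation(gravityX x: Double, gravityY y: Double) -> VideoOrientation {
    if abs(y) >= abs(x) {
        // Gravity lies mostly along the device's long axis: portrait family.
        return y >= 0 ? .portraitUpsideDown : .portrait
    } else {
        // Gravity lies mostly along the short axis: landscape family.
        return x >= 0 ? .landscapeLeft : .landscapeRight
    }
}
```

For a device held upright, gravity is roughly (0, -1), which maps to `.portrait`; with the right edge pointing down, gravity is roughly (1, 0), which maps to `.landscapeLeft`.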

- Next, call the capture method to obtain a CMSampleBufferRef (a Core Foundation style object defined by Core Media). The AVCaptureStillImageOutput class method jpegStillImageNSDataRepresentation: converts it to NSData:

@weakify(self)
[self.cameraHelper.imageOutput captureStillImageAsynchronouslyFromConnection:connection completionHandler:^(CMSampleBufferRef imageDataSampleBuffer, NSError *error) {
    @strongify(self)
    if (!error && imageDataSampleBuffer) {
        NSData *imageData = [AVCaptureStillImageOutput jpegStillImageNSDataRepresentation:imageDataSampleBuffer];
        if (!imageData) { return; }
        UIImage *image = [UIImage imageWithData:imageData];
        if (!image) { return; }
        // Use or save the image here.
    }
}];

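- Finally, save the resulting image. Note that the Assets Library framework has been superseded by the Photos framework (PHPhotoLibrary), available since iOS 8, so PHPhotoLibrary is used here to save the image:

__block NSString *imageIdentifier = nil;
[[PHPhotoLibrary sharedPhotoLibrary] performChanges:^{
    PHAssetChangeRequest *changeRequest = [PHAssetChangeRequest creationRequestForAssetFromImage:targetImage];
    imageIdentifier = changeRequest.placeholderForCreatedAsset.localIdentifier;
} completionHandler:^(BOOL success, NSError * _Nullable error) {
    // Handle success or failure here.
}];

- The imageIdentifier obtained while saving can later be used to look the image up in the photo library.

- The complete still-image capture code looks like this: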

#pragma mark - Image Capture Methods

/*
 AVCaptureStillImageOutput is an AVCaptureOutput subclass used to capture still images.
 */
- (void)captureStillImage {
    // Get the video connection
    AVCaptureConnection *connection = [self.imageOutput connectionWithMediaType:AVMediaTypeVideo];

    // The app UI only supports portrait, but if the user shoots in landscape
    // the orientation of the resulting photo must be adjusted.
    // Check whether the connection supports setting the video orientation
    if (connection.isVideoOrientationSupported) {
        // Apply the current orientation
        connection.videoOrientation = [self currentVideoOrientation];
    }

    // Completion handler: receives the sample buffer that holds the image data
    id handler = ^(CMSampleBufferRef sampleBuffer, NSError *error) {
        if (sampleBuffer != NULL) {
            NSData *imageData = [AVCaptureStillImageOutput jpegStillImageNSDataRepresentation:sampleBuffer];
            UIImage *image = [[UIImage alloc] initWithData:imageData];
            // Key step: once the image is captured, pass it on to be saved
            [self writeImageToAssetsLibrary:image];
        } else {
            NSLog(@"NULL sampleBuffer:%@", [error localizedDescription]);
        }
    };

    // Capture the still image
    [self.imageOutput captureStillImageAsynchronouslyFromConnection:connection completionHandler:handler];
}

// Get the current video orientation
- (AVCaptureVideoOrientation)currentVideoOrientation {
    AVCaptureVideoOrientation orientation;

    // Map the UIDevice orientation to a video orientation
    switch ([UIDevice currentDevice].orientation) {
        case UIDeviceOrientationPortrait:
            orientation = AVCaptureVideoOrientationPortrait;
            break;
        case UIDeviceOrientationLandscapeRight:
            orientation = AVCaptureVideoOrientationLandscapeLeft;
            break;
        case UIDeviceOrientationPortraitUpsideDown:
            orientation = AVCaptureVideoOrientationPortraitUpsideDown;
            break;
        default:
            orientation = AVCaptureVideoOrientationLandscapeRight;
            break;
    }
    return orientation;
}

/*
 Assets Library framework
 Gives code access to the iOS photo library.
 Note: this touches the user's photo library, so the photo library usage description
 must be added to Info.plist; otherwise the app crashes.
 */
- (void)writeImageToAssetsLibrary:(UIImage *)image {
    // Create an ALAssetsLibrary instance
    ALAssetsLibrary *library = [[ALAssetsLibrary alloc] init];

    // Argument 1: the image (a CGImageRef, hence image.CGImage)
    // Argument 2: the orientation, cast to NSUInteger
    // Argument 3: completion block for success or failure
    [library writeImageToSavedPhotosAlbum:image.CGImage
                              orientation:(NSUInteger)image.imageOrientation
                          completionBlock:^(NSURL *assetURL, NSError *error) {
        if (!error) {
            // On success, post a notification so the UI can draw the thumbnail in its bottom-left corner
            [self postThumbnailNotifification:image];
        } else {
            // On failure, log the error
            id message = [error localizedDescription];
            NSLog(@"%@", message);
        }
    }];
}

// Post the thumbnail notification
- (void)postThumbnailNotifification:(UIImage *)image {
    // Back on the main queue
    dispatch_async(dispatch_get_main_queue(), ^{
        // Post the notification
        NSNotificationCenter *nc = [NSNotificationCenter defaultCenter];
        [nc postNotificationName:THThumbnailCreatedNotification object:image];
    });
}
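Because AVCaptureStillImageOutput is deprecated, newer code would normally use AVCapturePhotoOutput instead. The following Swift sketch shows the general shape of that flow under stated assumptions: the `PhotoCaptureHandler` class, its `onImageData` closure and the `capturePhoto(with:delegate:)` function are hypothetical names, and the photo output is assumed to have been added to a running session already.

```swift
import AVFoundation

/// Hypothetical delegate object; class and property names are illustrative.
final class PhotoCaptureHandler: NSObject, AVCapturePhotoCaptureDelegate {
    /// Called with the encoded image data when a capture succeeds.
    var onImageData: ((Data) -> Void)?

    func photoOutput(_ output: AVCapturePhotoOutput,
                     didFinishProcessingPhoto photo: AVCapturePhoto,
                     error: Error?) {
        // fileDataRepresentation() returns the encoded photo, or nil on failure.
        guard error == nil, let data = photo.fileDataRepresentation() else { return }
        onImageData?(data)
    }
}

/// Requests a single JPEG photo from an output that is already part of a session.
func capturePhoto(with output: AVCapturePhotoOutput, delegate: PhotoCaptureHandler) {
    let settings = AVCapturePhotoSettings(format: [AVVideoCodecKey: AVVideoCodecType.jpeg])
    output.capturePhoto(with: settings, delegate: delegate)
}
```

The caller has to keep the delegate object alive until the callback arrives, and a fresh AVCapturePhotoSettings instance is needed for every capture.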

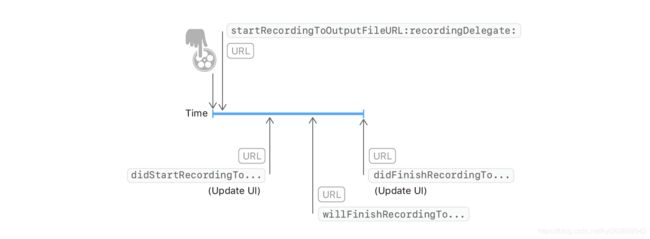

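2.7 Capturing video

- In a QuickTime movie the metadata (the movie header) normally sits at the start of the file, which lets a player read it quickly to determine the file's contents, structure and sample locations. While recording, however, the header cannot be created until all samples have been captured, at which point it is appended to the end of the file. If the app crashes or is interrupted during recording, the header is never written and an unreadable file is left on disk.

- For this reason AVCaptureMovieFileOutput records movies in fragments. A minimal header is written when recording starts, and as recording proceeds further fragments are written at regular intervals, building up the complete header step by step. By default a fragment is written every 10 seconds; this can be changed through the movieFragmentInterval property.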
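As a small illustration of changing the fragment interval, here is a hedged Swift sketch. The helper name `setFragmentInterval` and the 5-second value used in the usage note are assumptions for the example, not settings taken from the demo project.

```swift
import AVFoundation

/// Hypothetical helper: sets how often a movie file output writes a fragment.
func setFragmentInterval(_ output: AVCaptureMovieFileOutput, seconds: Double) {
    // A timescale of 600 is a common choice for video timing.
    output.movieFragmentInterval = CMTime(seconds: seconds, preferredTimescale: 600)
}
```

Calling `setFragmentInterval(movieOutput, seconds: 5)` before recording starts would halve the default interval, so less footage is lost if recording is interrupted.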

- First, start recording:

AVCaptureConnection *videoConnection = [self.cameraHelper.movieFileOutput connectionWithMediaType:AVMediaTypeVideo];
if ([videoConnection isVideoOrientationSupported]) {
    [videoConnection setVideoOrientation:self.cameraHelper.videoOrientation];
}
if ([videoConnection isVideoStabilizationSupported]) {
    [videoConnection setPreferredVideoStabilizationMode:AVCaptureVideoStabilizationModeAuto];
}
[videoConnection setVideoScaleAndCropFactor:1.0];

// Only start if nothing is being recorded and the video connection is usable
if (![self.cameraHelper.movieFileOutput isRecording] && videoConnection.isActive && videoConnection.isEnabled) {
    self.countTimer = [NSTimer scheduledTimerWithTimeInterval:1 target:self selector:@selector(refreshTimeLabel) userInfo:nil repeats:YES];
    NSString *fileName = [NSString stringWithFormat:@"%.0f.mov", [[NSDate date] timeIntervalSince1970] * 1000];
    NSString *urlString = [NSTemporaryDirectory() stringByAppendingPathComponent:fileName];
    NSURL *url = [NSURL fileURLWithPath:urlString];
    [self.cameraHelper.movieFileOutput startRecordingToOutputFileURL:url recordingDelegate:self];
    [self.captureButton setTitle:@"Stop" forState:UIControlStateNormal];
} else {
    // Already recording, or the connection is unavailable.
}

- Setting preferredVideoStabilizationMode improves the steadiness and quality of recorded video. The stabilization is only visible in the recorded movie, not in the live preview.

- The movie is written to a temporary file first. When recording ends, the AVCaptureFileOutputRecordingDelegate method captureOutput:didFinishRecordingToOutputFileAtURL:fromConnections:error: is called, and at that point you can save the video and generate a thumbnail.

- (void)saveVideo:(NSURL *)videoURL
{
    __block NSString *imageIdentifier;
    @weakify(self)
    [[PHPhotoLibrary sharedPhotoLibrary] performChanges:^{
        // Save the video
        PHAssetChangeRequest *changeRequest = [PHAssetChangeRequest creationRequestForAssetFromVideoAtFileURL:videoURL];
        imageIdentifier = changeRequest.placeholderForCreatedAsset.localIdentifier;
    } completionHandler:^(BOOL success, NSError * _Nullable error) {
        dispatch_async(dispatch_get_main_queue(), ^{
            @strongify(self)
            [self resetTimeCounter];
            if (!success) {
                // Handle the error
            } else {
                PHAsset *asset = [PHAsset fetchAssetsWithLocalIdentifiers:@[imageIdentifier] options:nil].firstObject;
                if (asset && asset.mediaType == PHAssetMediaTypeVideo) {
                    PHVideoRequestOptions *options = [[PHVideoRequestOptions alloc] init];
                    options.version = PHVideoRequestOptionsVersionCurrent;
                    options.deliveryMode = PHVideoRequestOptionsDeliveryModeAutomatic;
                    [[PHImageManager defaultManager] requestAVAssetForVideo:asset options:options resultHandler:^(AVAsset * _Nullable obj, AVAudioMix * _Nullable audioMix, NSDictionary * _Nullable info) {
                        @strongify(self)
                        [self resolveAVAsset:obj identifier:asset.localIdentifier];
                    }];
                }
            }
        });
    }];
}

- (void)resolveAVAsset:(AVAsset *)asset identifier:(NSString *)identifier
{
    if (!asset) {
        return;
    }
    if (![asset isKindOfClass:[AVURLAsset class]]) {
        return;
    }
    AVURLAsset *urlAsset = (AVURLAsset *)asset;
    NSURL *url = urlAsset.URL;
    // Reads the whole movie into memory; keep this only if the raw data is actually needed
    NSData *data = [NSData dataWithContentsOfURL:url];

    AVAssetImageGenerator *generator = [AVAssetImageGenerator assetImageGeneratorWithAsset:asset];
    // Take the video's orientation into account so the thumbnail is not rotated incorrectly
    generator.appliesPreferredTrackTransform = YES;
    CMTime snaptime = kCMTimeZero;
    CGImageRef cgImageRef = [generator copyCGImageAtTime:snaptime actualTime:NULL error:nil];
    if (cgImageRef) {
        UIImage *assetImage = [UIImage imageWithCGImage:cgImageRef];
        CGImageRelease(cgImageRef);
        // Use assetImage as the thumbnail here.
    }
}

- To recap, the video capture flow is as follows.

- (1) Check whether a recording is in progress

// Whether a recording is in progress
- (BOOL)isRecording {
    return self.movieOutput.isRecording;
}

- (2) Start recording

// Start recording
- (void)startRecording {
    if (![self isRecording]) {
        // Get the video connection, used to configure core properties of the captured video
        AVCaptureConnection *videoConnection = [self.movieOutput connectionWithMediaType:AVMediaTypeVideo];

        // Check whether the videoOrientation property can be set
        if ([videoConnection isVideoOrientationSupported]) {
            // If so, apply the current orientation
            videoConnection.videoOrientation = [self currentVideoOrientation];
        }

        // Video stabilization can noticeably improve quality; it only affects recorded movie files
        if ([videoConnection isVideoStabilizationSupported]) {
            videoConnection.preferredVideoStabilizationMode = AVCaptureVideoStabilizationModeAuto;
        }

        AVCaptureDevice *device = [self activeCamera];

        // Smooth autofocus slows the lens down while focusing. Normally the camera refocuses
        // quickly as the user moves, which can be visible in the recording.
        if (device.isSmoothAutoFocusSupported) {
            NSError *error;
            if ([device lockForConfiguration:&error]) {
                device.smoothAutoFocusEnabled = YES;
                [device unlockForConfiguration];
            } else {
                [self.delegate deviceConfigurationFailedWithError:error];
            }
        }

        // Get a unique file system URL to write the movie to
        self.outputURL = [self uniqueURL];

        // Start recording. Argument 1: destination URL. Argument 2: recording delegate.
        [self.movieOutput startRecordingToOutputFileURL:self.outputURL recordingDelegate:self];
    }
}

- (CMTime)recordedDuration {
    return self.movieOutput.recordedDuration;
}

// Unique file system URL for the movie
- (NSURL *)uniqueURL {
    NSFileManager *fileManager = [NSFileManager defaultManager];

    // temporaryDirectoryWithTemplateString: creates a uniquely named directory to write into.
    // It is a helper category method from the demo project, not a Foundation API.
    NSString *dirPath = [fileManager temporaryDirectoryWithTemplateString:@"kamera.XXXXXX"];
    if (dirPath) {
        NSString *filePath = [dirPath stringByAppendingPathComponent:@"kamera_movie.mov"];
        return [NSURL fileURLWithPath:filePath];
    }
    return nil;
}
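If the project's temporaryDirectoryWithTemplateString: helper is not available, a unique output location can be built with Foundation alone. The sketch below is one possible approach, not the demo's implementation: the function name is hypothetical, and a UUID replaces the "XXXXXX" template to make the directory name unique.

```swift
import Foundation

/// Hypothetical helper: returns a movie URL inside a newly created,
/// uniquely named temporary directory, or nil if the directory cannot be created.
func uniqueMovieURL(fileManager: FileManager = .default) -> URL? {
    let directory = fileManager.temporaryDirectory
        .appendingPathComponent("kamera.\(UUID().uuidString)", isDirectory: true)
    do {
        try fileManager.createDirectory(at: directory, withIntermediateDirectories: true)
    } catch {
        return nil
    }
    return directory.appendingPathComponent("kamera_movie.mov")
}
```

Each call yields a different directory, so a new recording never overwrites a movie that is still being saved to the photo library.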

- (3)停止录制

//停止录制

- (void)stopRecording {

//是否正在录制

if ([self isRecording]) {

[self.movieOutput stopRecording];

}

}

- (4)捕获视频回调函数AVCaptureFileOutputRecordingDelegate

#pragma mark - AVCaptureFileOutputRecordingDelegate

- (void)captureOutput:(AVCaptureFileOutput *)captureOutput

didFinishRecordingToOutputFileAtURL:(NSURL *)outputFileURL

fromConnections:(NSArray *)connections

error:(NSError *)error {

//错误

if (error) {

[self.delegate mediaCaptureFailedWithError:error];

}else

{

// Write the recorded movie to the photo library

[self writeVideoToAssetsLibrary:[self.outputURL copy]];

}

self.outputURL = nil;

}

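- (5) Save the recorded video to the photo library

// Write the captured video

- (void)writeVideoToAssetsLibrary:(NSURL *)videoURL {

// An ALAssetsLibrary instance provides the interface for writing video (the class is deprecated since iOS 9 in favor of the Photos framework)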

ALAssetsLibrary *library = [[ALAssetsLibrary alloc]init];

// Before writing, check that the video is compatible with the Saved Photos album (checking before writing is a good habit)

if ([library videoAtPathIsCompatibleWithSavedPhotosAlbum:videoURL]) {

// Create the completion block

ALAssetsLibraryWriteVideoCompletionBlock completionBlock;

completionBlock = ^(NSURL *assetURL,NSError *error)

{

if (error) {

[self.delegate assetLibraryWriteFailedWithError:error];

}else

{

// Generate a thumbnail of the video to show in the UI

[self generateThumbnailForVideoAtURL:videoURL];

}

};

// Perform the actual write to the assets library

[library writeVideoAtPathToSavedPhotosAlbum:videoURL completionBlock:completionBlock];

}

}

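- (6) Generate a video thumbnail

// Generate the thumbnail shown in the lower-left corner of the UI

- (void)generateThumbnailForVideoAtURL:(NSURL *)videoURL {

// Do the work on videoQueue, off the main thread

dispatch_async(self.videoQueue, ^{

// Create a new AVAsset and AVAssetImageGenerator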

AVAsset *asset = [AVAsset assetWithURL:videoURL];

AVAssetImageGenerator *imageGenerator = [AVAssetImageGenerator assetImageGeneratorWithAsset:asset];

// Set maximumSize to width 100 and height 0, so the height is computed from the video's aspect ratio

imageGenerator.maximumSize = CGSizeMake(100.0f, 0.0f);

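// Apply the track's preferred transform (such as orientation changes) when generating the thumbnail; without it the thumbnail orientation may be wrong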

imageGenerator.appliesPreferredTrackTransform = YES;

// Get the CGImageRef. Note that you own it and must release it yourself

CGImageRef imageRef = [imageGenerator copyCGImageAtTime:kCMTimeZero actualTime:NULL error:nil];

// Convert the image to a UIImage

UIImage *image = [UIImage imageWithCGImage:imageRef];

// Release imageRef to avoid a memory leak

CGImageRelease(imageRef);

// Return to the main thread

dispatch_async(dispatch_get_main_queue(), ^{

// Post a notification carrying the latest image

[self postThumbnailNotifification:image];

});

});

}

2.8 Video Zoom

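- iOS 7.0 added the videoZoomFactor property to AVCaptureDevice, which zooms both the video output and the capture. Its minimum value is 1.0, and its maximum is given by the active format:

self.cameraHelper.activeVideoDevice.activeFormat.videoMaxZoomFactor;

- You can therefore also use this property to tell whether a device supports zoom: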

- (BOOL)cameraSupportsZoom

{

return self.cameraHelper.activeVideoDevice.activeFormat.videoMaxZoomFactor > 1.0f;

}

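- The device zooms by center-cropping the image captured by the camera sensor. When the zoom factor is small, the cropped image is still at least as large as the output size, so it can be returned without upscaling. When the zoom factor is large, the device has to scale the cropped image up to the output size, which costs image quality. The exact crossover point is given by the format's read-only videoZoomFactorUpscaleThreshold property.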

// Measured at roughly 2.0 on an iPhone 6s and an iPhone 8 Plus

self.cameraHelper.activeVideoDevice.activeFormat.videoZoomFactorUpscaleThreshold;

- A UISlider whose value ranges from 0.0 to 1.0 can control the zoom value. The code is as follows:

{

[self.slider addTarget:self action:@selector(sliderValueChange:) forControlEvents:UIControlEventValueChanged];

}

- (void)sliderValueChange:(id)sender

{

UISlider *slider = (UISlider *)sender;

[self setZoomValue:slider.value];

}

- (CGFloat)maxZoomFactor

{

return MIN(self.cameraHelper.activeVideoDevice.activeFormat.videoMaxZoomFactor, 4.0f);

}

- (void)setZoomValue:(CGFloat)zoomValue

{

if (!self.cameraHelper.activeVideoDevice.isRampingVideoZoom) {

NSError *error;

if ([self.cameraHelper.activeVideoDevice lockForConfiguration:&error]) {

CGFloat zoomFactor = pow([self maxZoomFactor], zoomValue);

self.cameraHelper.activeVideoDevice.videoZoomFactor = zoomFactor;

[self.cameraHelper.activeVideoDevice unlockForConfiguration];

}

}

}

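- First, note that the device must be locked before its properties are configured; otherwise an exception is raised. Second, zoom grows exponentially while the incoming slider value is linear, so a pow operation converts the slider value into the zoom factor. In addition, videoMaxZoomFactor can be very large (16 on an iPhone 8 Plus), and zooming an image that far is of little use, so a manual upper limit is set, here 4.0.

- The zoom above takes effect immediately. The methods below instead ramp smoothly to a zoom factor at a given rate: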

- (void)rampZoomToValue:(CGFloat)zoomValue {

CGFloat zoomFactor = pow([self maxZoomFactor], zoomValue);

NSError *error;

if ([self.activeCamera lockForConfiguration:&error]) {

[self.activeCamera rampToVideoZoomFactor:zoomFactor

withRate:THZoomRate];

[self.activeCamera unlockForConfiguration];

} else {

[self.delegate deviceConfigurationFailedWithError:error];

}

}

- (void)cancelZoom {

NSError *error;

if ([self.activeCamera lockForConfiguration:&error]) {

[self.activeCamera cancelVideoZoomRamp];

[self.activeCamera unlockForConfiguration];

} else {

[self.delegate deviceConfigurationFailedWithError:error];

}

}
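The exponential slider-to-zoom mapping used by the zoom methods above can be checked in isolation. The sketch below is plain Swift with no AVFoundation dependency; the function name `zoomFactor(forSliderValue:deviceMaxZoom:cap:)` is hypothetical, and the 4.0 cap mirrors the limit chosen in this section.

```swift
import Foundation

/// Maps a linear slider position in 0...1 to a zoom factor in 1...maxZoom,
/// so that equal slider steps multiply the zoom by an equal ratio.
func zoomFactor(forSliderValue value: Double,
                deviceMaxZoom: Double,
                cap: Double = 4.0) -> Double {
    // Never exceed the device limit or the manual cap, and never go below 1.0.
    let maxZoom = max(1.0, min(deviceMaxZoom, cap))
    // Clamp the slider value so out-of-range input cannot produce an invalid factor.
    let clamped = min(max(value, 0.0), 1.0)
    return pow(maxZoom, clamped)
}
```

With a device maximum of 16 and the cap of 4.0, the slider midpoint maps to a factor of about 2.0, so each half of the slider doubles the zoom.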

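- We can also observe the device's videoZoomFactor to learn the current zoom value (RACObserve is a ReactiveCocoa macro built on key-value observing):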

[RACObserve(self, activeVideoDevice.videoZoomFactor) subscribeNext:^(id x) {

NSLog(@"videoZoomFactor: %f", self.activeVideoDevice.videoZoomFactor);

}];

- Likewise, observing the device's rampingVideoZoom tells us whether a smooth zoom ramp is in progress:

[RACObserve(self, activeVideoDevice.rampingVideoZoom) subscribeNext:^(id x) {

NSLog(@"rampingVideoZoom : %@", (self.activeVideoDevice.rampingVideoZoom)?@"true":@"false");

}];

2.9 Video Editing

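- AVCaptureMovieFileOutput makes it easy to capture video, but it gives no access to the video data itself; for that, use AVCaptureVideoDataOutput. AVCaptureVideoDataOutput is an AVCaptureOutput subclass that provides direct access to the video frames captured by the camera sensor. Its audio counterpart is AVCaptureAudioDataOutput.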

- AVCaptureVideoDataOutput has a delegate object conforming to the AVCaptureVideoDataOutputSampleBufferDelegate protocol, which has two main methods:

- (void)captureOutput:(AVCaptureOutput *)output didOutputSampleBuffer:(CMSampleBufferRef)sampleBuffer fromConnection:(AVCaptureConnection *)connection; // Called whenever a new video frame is written; the data is decoded or re-encoded according to the output's videoSettings

- (void)captureOutput:(AVCaptureOutput *)output didDropSampleBuffer:(CMSampleBufferRef)sampleBuffer fromConnection:(AVCaptureConnection *)connection; // Called whenever a late video frame is dropped, usually because the method above did something too time-consuming

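- CMSampleBufferRef is a Core Foundation-style object provided by the Core Media framework, used to move digital samples through the media pipeline. This lets us process the Core Video frame inside each CMSampleBufferRef, as in the following code: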

const size_t BYTES_PER_PIXEL = 4; // Assumes a 32BGRA pixel buffer

CVPixelBufferRef pixelBuffer = CMSampleBufferGetImageBuffer(sampleBuffer); // The CVPixelBufferRef holds the pixel data in main memory

CVPixelBufferLockBaseAddress(pixelBuffer, 0); // Lock the memory block before touching it

size_t bufferWidth = CVPixelBufferGetWidth(pixelBuffer);

size_t bufferHeight = CVPixelBufferGetHeight(pixelBuffer); // Pixel width and height

size_t bytesPerRow = CVPixelBufferGetBytesPerRow(pixelBuffer); // Rows may be padded, so do not assume width * 4

unsigned char *baseAddress = (unsigned char *)CVPixelBufferGetBaseAddress(pixelBuffer); // Start of the pixel buffer

for (size_t row = 0; row < bufferHeight; row++) {

unsigned char *pixel = baseAddress + row * bytesPerRow; // First pixel of this row

for (size_t column = 0; column < bufferWidth; column++) { // Visit every pixel

unsigned char grayPixel = (pixel[0] + pixel[1] + pixel[2]) / 3;

pixel[0] = pixel[1] = pixel[2] = grayPixel;

pixel += BYTES_PER_PIXEL;

}

}

CIImage *ciImage = [CIImage imageWithCVPixelBuffer:pixelBuffer]; // Create a CIImage from the buffer

CVPixelBufferUnlockBaseAddress(pixelBuffer, 0); // Release the lock
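The per-pixel averaging above can be tried without a camera by running it over a plain byte array. The following Swift sketch assumes a BGRA layout with 4 bytes per pixel; the function name `convertToGrayscale` and the explicit `bytesPerRow` parameter are illustrative choices, the latter modeling the row padding a real pixel buffer may carry.

```swift
/// Converts a BGRA buffer (4 bytes per pixel) to grayscale in place.
/// `bytesPerRow` may exceed `width * 4` when rows carry padding bytes.
func convertToGrayscale(_ buffer: inout [UInt8],
                        width: Int,
                        height: Int,
                        bytesPerRow: Int) {
    precondition(bytesPerRow >= width * 4, "row stride too small")
    precondition(buffer.count >= bytesPerRow * height, "buffer too small")
    for row in 0..<height {
        // Start from the row's own offset so padding bytes are skipped.
        var offset = row * bytesPerRow
        for _ in 0..<width {
            let sum = Int(buffer[offset]) + Int(buffer[offset + 1]) + Int(buffer[offset + 2])
            let gray = UInt8(sum / 3)
            buffer[offset] = gray      // blue
            buffer[offset + 1] = gray  // green
            buffer[offset + 2] = gray  // red
            offset += 4                // alpha (offset + 3) is left untouched
        }
    }
}
```

Walking rows by `bytesPerRow` rather than by `width * 4` keeps the loop correct when the buffer is padded.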

- CMSampleBufferRef also carries format information for each frame. The CMFormatDescription.h header defines many functions for reading it.

CMFormatDescriptionRef formatDescription = CMSampleBufferGetFormatDescription(sampleBuffer);

CMMediaType mediaType = CMFormatDescriptionGetMediaType(formatDescription);

- You can also read the timing information:

CMTime presentation = CMSampleBufferGetPresentationTimeStamp(sampleBuffer); // The sample's original presentation timestamp

CMTime decode = CMSampleBufferGetDecodeTimeStamp(sampleBuffer); // The sample's decode timestamp

- And read attached metadata:

CFDictionaryRef exif = (CFDictionaryRef)CMGetAttachment(sampleBuffer, kCGImagePropertyExifDictionary, NULL);

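- Configuring an AVCaptureVideoDataOutput is much like configuring an AVCaptureMovieFileOutput, except that you must specify its delegate and callback queue. To guarantee that video frames are delivered in order, the queue must be a serial queue.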

self.videoDataOutput = [[AVCaptureVideoDataOutput alloc] init];

self.videoDataOutput.videoSettings = @{(id)kCVPixelBufferPixelFormatTypeKey: @(kCVPixelFormatType_32BGRA)}; // The camera's native format is bi-planar 420v, a YUV format, whereas OpenGL ES commonly uses BGRA

if ([self.captureSession canAddOutput:self.videoDataOutput]) {

[self.captureSession addOutput:self.videoDataOutput];

[self.videoDataOutput setSampleBufferDelegate:self queue:dispatch_get_main_queue()];

}
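The serial-queue requirement for the sample buffer delegate can be illustrated without a capture session. The sketch below is an assumption-laden toy, not capture code: `FrameLog`, `processFramesSerially`, and the queue label are hypothetical names, and integer indices stand in for video frames.

```swift
import Foundation

/// Collects frame indices. Marked @unchecked Sendable because every access
/// below is funneled through one serial queue.
final class FrameLog: @unchecked Sendable {
    var indices: [Int] = []
}

/// Enqueues `count` frame indices asynchronously on a serial queue and
/// returns the order in which they were handled.
func processFramesSerially(count: Int) -> [Int] {
    // DispatchQueue is serial unless the .concurrent attribute is passed.
    let queue = DispatchQueue(label: "com.example.videoDataQueue")
    let log = FrameLog()
    for index in 0..<count {
        queue.async { log.indices.append(index) }
    }
    // A sync block runs after all previously enqueued work on a serial queue.
    return queue.sync { log.indices }
}
```

A dedicated serial queue like this one also keeps frame processing off the main queue, which the main-queue configuration shown above does not.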

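2.10 High Frame Rate Capture

- Beyond the ordinary video capture covered above, we can also use high frame rate capture. It was added in iOS 7 and gives more lifelike results and better clarity, with clearly improved detail and smoother motion, especially when recording fast-moving content. It also enables high-quality slow-motion video.

- The basic approach is: first get all supported formats, as AVCaptureDeviceFormat objects, from the device's formats property; then read each format's videoSupportedFrameRateRanges property to learn its minimum frame rate, maximum frame rate, and frame durations; then set the device's format and frame durations manually.

- The implementation is as follows:

- First write a category on AVCaptureDevice. The following finds the supported format with the highest frame rate: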

AVCaptureDeviceFormat *maxFormat = nil;

AVFrameRateRange *maxFrameRateRange = nil;

for (AVCaptureDeviceFormat *format in self.formats) {

FourCharCode codecType = CMVideoFormatDescriptionGetCodecType(format.formatDescription);

// codecType is an unsigned 32-bit value made up of four bytes, one per character; typical values are "420v" or "420f". The 420v format is selected here.

if (codecType == kCVPixelFormatType_420YpCbCr8BiPlanarVideoRange) {

NSArray *frameRateRanges = format.videoSupportedFrameRateRanges;

for (AVFrameRateRange *range in frameRateRanges) {

if (range.maxFrameRate > maxFrameRateRange.maxFrameRate) {

maxFormat = format;

maxFrameRateRange = range;

}

}

} else {

}

}

- We can decide whether the device supports high frame rates by checking whether the maximum frame rate is greater than 30:

- (BOOL)isHighFrameRate {

return self.frameRateRange.maxFrameRate > 30.0f;

}

- Next, we can apply the configuration:

if ([self hasMediaType:AVMediaTypeVideo] && [self lockForConfiguration:error] && [self.activeCamera supportsHighFrameRateCapture]) {

CMTime minFrameDuration = self.frameRateRange.minFrameDuration;

self.activeFormat = self.format;

self.activeVideoMinFrameDuration = minFrameDuration;

self.activeVideoMaxFrameDuration = minFrameDuration;

[self unlockForConfiguration];

}

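- The device is locked first, then both the minimum and maximum frame durations are set to minFrameDuration. Frame duration is the reciprocal of frame rate, so the maximum frame rate corresponds to the minimum frame duration.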
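The selection logic and the reciprocal relationship can be sketched with plain value types. In this Swift sketch, `FrameRateRange` and `CaptureFormat` are hypothetical stand-ins for AVFrameRateRange and AVCaptureDeviceFormat, and durations are expressed in seconds rather than as CMTime values.

```swift
/// Hypothetical stand-in for AVFrameRateRange.
struct FrameRateRange {
    let minFrameRate: Double
    let maxFrameRate: Double
}

/// Hypothetical stand-in for AVCaptureDeviceFormat.
struct CaptureFormat {
    let name: String
    let ranges: [FrameRateRange]
}

/// Returns the format and range with the highest maximum frame rate, if any.
func bestHighFrameRateFormat(in formats: [CaptureFormat])
    -> (format: CaptureFormat, range: FrameRateRange)? {
    var best: (format: CaptureFormat, range: FrameRateRange)?
    for format in formats {
        for range in format.ranges
        where range.maxFrameRate > (best?.range.maxFrameRate ?? 0) {
            best = (format: format, range: range)
        }
    }
    return best
}

/// Frame duration in seconds is the reciprocal of the frame rate,
/// so the highest frame rate gives the shortest duration.
func minFrameDuration(for range: FrameRateRange) -> Double {
    return 1.0 / range.maxFrameRate
}
```

For a range that tops out at 240 frames per second, the minimum frame duration is 1/240 of a second.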

- During playback, setting different rate values on AVPlayer gives variable-speed playback. In tests on an iPhone 8 Plus, a rate between 0 and 0.5 still played at an actual rate of 0.5.

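- Also remember to set the audioTimePitchAlgorithm property of AVPlayerItem, which specifies how audio is played when the video plays at different rates. AVAudioTimePitchAlgorithmSpectral or AVAudioTimePitchAlgorithmTimeDomain is usually a good choice. The available values are:

- AVAudioTimePitchAlgorithmLowQualityZeroLatency: low quality; suitable for fast-forward, rewind, or low-quality speech

- AVAudioTimePitchAlgorithmTimeDomain: moderate quality and lower computational cost; suitable for speech

- AVAudioTimePitchAlgorithmSpectral: highest quality and most expensive to compute; preserves the original pitch

- AVAudioTimePitchAlgorithmVarispeed: high-quality playback with no pitch correction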

- AVFoundation also provides face detection and QR code recognition.

2.11 Face Detection

- Face detection uses an AVCaptureMetadataOutput as the output. First add it to the capture session:

self.metaDataOutput = [[AVCaptureMetadataOutput alloc] init];

if ([self.captureSession canAddOutput:self.metaDataOutput]) {

[self.captureSession addOutput:self.metaDataOutput];

NSArray *metaDataObjectType = @[AVMetadataObjectTypeFace];

self.metaDataOutput.metadataObjectTypes = metaDataObjectType;

[self.metaDataOutput setMetadataObjectsDelegate:self queue:dispatch_get_main_queue()];

}

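- As shown, you need to set the metadataObjectTypes property of the AVCaptureMetadataOutput to an array containing AVMetadataObjectTypeFace, which represents face metadata objects. Then set its delegate, which conforms to the AVCaptureMetadataOutputObjectsDelegate protocol, and the callback queue. When a face is detected, the following method is called: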

- (void)captureOutput:(AVCaptureOutput *)output didOutputMetadataObjects:(NSArray<__kindof AVMetadataObject *> *)metadataObjects fromConnection:(AVCaptureConnection *)connection

{

if (self.detectFaces) {

self.detectFaces(metadataObjects);

}

}

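- Here metadataObjects is an array of AVMetadataObject instances; in this case they can all be treated as AVMetadataFaceObject, a subclass of AVMetadataObject. An AVMetadataFaceObject has four important properties:

- faceID: identifies each detected face

- rollAngle: the roll angle of the face, that is, how far the head tilts toward a shoulder

- yawAngle: the yaw angle, that is, the rotation of the face around the y-axis

- bounds: the region of the detected face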

@weakify(self)

self.cameraHelper.detectFaces = ^(NSArray *faces) {

@strongify(self)

NSMutableArray *transformedFaces = [NSMutableArray array];

for (AVMetadataFaceObject *face in faces) {

AVMetadataObject *transformedFace = [self.previewLayer transformedMetadataObjectForMetadataObject:face];

[transformedFaces addObject:transformedFace];

}

NSMutableArray *lostFaces = [self.faceLayers.allKeys mutableCopy];

for (AVMetadataFaceObject *face in transformedFaces) {

NSNumber *faceId = @(face.faceID);

[lostFaces removeObject:faceId];

CALayer *layer = self.faceLayers[faceId];

if (!layer) {

layer = [CALayer layer];

layer.borderWidth = 5.0f;

layer.borderColor = [UIColor colorWithRed:0.188 green:0.517 blue:0.877 alpha:1.000].CGColor;

[self.previewLayer addSublayer:layer];

self.faceLayers[faceId] = layer;

}

layer.transform = CATransform3DIdentity;

layer.frame = face.bounds;

if (face.hasRollAngle) {

layer.transform = CATransform3DConcat(layer.transform, [self transformForRollAngle:face.rollAngle]);

}

if (face.hasYawAngle) {

NSLog(@"%f", face.yawAngle);

layer.transform = CATransform3DConcat(layer.transform, [self transformForYawAngle:face.yawAngle]);

}

}

for (NSNumber *faceID in lostFaces) {

CALayer *layer = self.faceLayers[faceID];

[layer removeFromSuperlayer];

[self.faceLayers removeObjectForKey:faceID];

}

};

// Rotate around Z-axis

- (CATransform3D)transformForRollAngle:(CGFloat)rollAngleInDegrees {

CGFloat rollAngleInRadians = THDegreesToRadians(rollAngleInDegrees);

return CATransform3DMakeRotation(rollAngleInRadians, 0.0f, 0.0f, 1.0f);

}

// Rotate around Y-axis

- (CATransform3D)transformForYawAngle:(CGFloat)yawAngleInDegrees {

CGFloat yawAngleInRadians = THDegreesToRadians(yawAngleInDegrees);

CATransform3D yawTransform = CATransform3DMakeRotation(yawAngleInRadians, 0.0f, -1.0f, 0.0f);

return CATransform3DConcat(yawTransform, [self orientationTransform]);

}

- (CATransform3D)orientationTransform {

CGFloat angle = 0.0;

switch ([UIDevice currentDevice].orientation) {

case UIDeviceOrientationPortraitUpsideDown:

angle = M_PI;

break;

case UIDeviceOrientationLandscapeRight:

angle = -M_PI / 2.0f;

break;

case UIDeviceOrientationLandscapeLeft:

angle = M_PI / 2.0f;

break;

default: // as UIDeviceOrientationPortrait

angle = 0.0;

break;

}

return CATransform3DMakeRotation(angle, 0.0f, 0.0f, 1.0f);

}

static CGFloat THDegreesToRadians(CGFloat degrees) {

return degrees * M_PI / 180;

}

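- We use a dictionary to manage the layers that each display one face; the key is the faceID. On each callback we update the face layers that already exist and remove those no longer needed. Then, for each face, its rollAngle and yawAngle are applied through a transform to rotate the displayed layer.

- One more point: the transformedMetadataObjectForMetadataObject: method converts data from the device coordinate space into the view coordinate space. Device coordinates range from (0, 0) to (1, 1).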
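The dictionary bookkeeping described above (create a layer for a new faceID, keep it while the face stays visible, remove it once the face is lost) can be sketched independently of Core Animation. In this Swift sketch, `FaceOverlayTracker` is a hypothetical type and the generic `Overlay` parameter stands in for CALayer.

```swift
/// Tracks which face IDs currently have an overlay.
/// `Overlay` is a stand-in for CALayer in this sketch.
final class FaceOverlayTracker<Overlay> {
    private(set) var overlays: [Int: Overlay] = [:]
    private let makeOverlay: () -> Overlay

    init(makeOverlay: @escaping () -> Overlay) {
        self.makeOverlay = makeOverlay
    }

    /// Creates overlays for newly seen faces and returns the overlays of
    /// faces that are no longer visible, so the caller can remove them.
    func update(visibleFaceIDs: [Int]) -> [Overlay] {
        // Start by assuming every tracked face is lost, then rule faces out.
        var lost = Set(overlays.keys)
        for id in visibleFaceIDs {
            lost.remove(id)
            if overlays[id] == nil {
                overlays[id] = makeOverlay()
            }
        }
        return lost.sorted().compactMap { overlays.removeValue(forKey: $0) }
    }
}
```

In a real view, the returned overlays would be the layers to pass to removeFromSuperlayer.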

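2.12 QR Code Recognition

- Machine-readable codes include one-dimensional barcodes and two-dimensional codes. AVFoundation supports several 1D barcodes and three 2D codes, the most common of which is the QR code.

- Scanning codes again relies on AVMetadataObject instances, delivered by an AVCaptureMetadataOutput. First add the output to the capture session: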

self.metaDataOutput = [[AVCaptureMetadataOutput alloc] init];

if ([self.captureSession canAddOutput:self.metaDataOutput]) {

[self.captureSession addOutput:self.metaDataOutput];

[self.metaDataOutput setMetadataObjectsDelegate:self queue:dispatch_get_main_queue()];

NSArray *types = @[AVMetadataObjectTypeQRCode];

self.metaDataOutput.metadataObjectTypes = types;

}

- Then implement the delegate method:

- (void)captureOutput:(AVCaptureOutput *)output didOutputMetadataObjects:(NSArray<__kindof AVMetadataObject *> *)metadataObjects fromConnection:(AVCaptureConnection *)connection

{

[metadataObjects enumerateObjectsUsingBlock:^(__kindof AVMetadataObject * _Nonnull obj, NSUInteger idx, BOOL * _Nonnull stop) {

if ([obj isKindOfClass:[AVMetadataMachineReadableCodeObject class]]) {

NSLog(@"%@", ((AVMetadataMachineReadableCodeObject*)obj).stringValue);

}

}];

}

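- An AVMetadataMachineReadableCodeObject has three important properties:

- stringValue: the information encoded in the code

- bounds: the rectangular bounds of the code

- corners: an array of corner-point dictionaries, which describes the code's region more precisely than bounds

- With these properties we can highlight the code's region in the UI.

- First, note that the coordinates of an AVMetadataMachineReadableCodeObject obtained from the capture session are in the device coordinate space and need to be converted: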

- (NSArray *)transformedCodesFromCodes:(NSArray *)codes {

NSMutableArray *transformedCodes = [NSMutableArray array];

[codes enumerateObjectsUsingBlock:^(id _Nonnull obj, NSUInteger idx, BOOL * _Nonnull stop) {

AVMetadataObject *transformedCode = [self.previewLayer transformedMetadataObjectForMetadataObject:obj];

[transformedCodes addObject:transformedCode];

}];

return [transformedCodes copy];

}

- Next, because the bounds property of each AVMetadataMachineReadableCodeObject is a CGRect, a UIBezierPath can be created from it directly:

- (UIBezierPath *)bezierPathForBounds:(CGRect)bounds {

return [UIBezierPath bezierPathWithRect:bounds];

}

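- The corners property, by contrast, is an array of dictionaries, so each CGPoint must be created manually and the points then joined with lines to build a UIBezierPath: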

- (UIBezierPath *)bezierPathForCorners:(NSArray *)corners {

UIBezierPath *path = [UIBezierPath bezierPath];

for (int i = 0; i < corners.count; i++) {

CGPoint point = [self pointForCorner:corners[i]];

if (i == 0) {

[path moveToPoint:point];

} else {

[path addLineToPoint:point];

}

}

[path closePath];

return path;

}

- (CGPoint)pointForCorner:(NSDictionary *)corner {

CGPoint point;

CGPointMakeWithDictionaryRepresentation((CFDictionaryRef)corner, &point);

return point;

}

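- Each entry in corners is a dictionary that the convenience function CGPointMakeWithDictionaryRepresentation can convert into a CGPoint. A corners array generally contains 4 corner dictionaries. Once you have the two UIBezierPath objects for each code, you can add the corresponding CALayers to the view to show the highlighted regions.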
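The corner-to-outline conversion can be sketched without UIKit. The Swift sketch below makes two assumptions that should be verified against real data: that each corner dictionary uses the keys "X" and "Y" (the names used by Core Graphics point dictionaries), and that an outline needs at least three points. `Point`, `point(fromCorner:)`, and `outline(fromCorners:)` are hypothetical names.

```swift
/// Minimal stand-in for CGPoint in this sketch.
struct Point: Equatable {
    let x: Double
    let y: Double
}

/// Parses one corner dictionary. The "X"/"Y" key names are an assumption.
func point(fromCorner corner: [String: Double]) -> Point? {
    guard let x = corner["X"], let y = corner["Y"] else { return nil }
    return Point(x: x, y: y)
}

/// Returns the outline as an ordered list of points, or nil when fewer
/// than three corners can be parsed (too few to enclose a region).
func outline(fromCorners corners: [[String: Double]]) -> [Point]? {
    let points = corners.compactMap(point(fromCorner:))
    return points.count >= 3 ? points : nil
}
```

The resulting points would be joined in order (move to the first, add lines to the rest, then close the path), as bezierPathForCorners: does above.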

3. Examples

3.1 Photo Capture and Video Recording Demo (Swift Version)

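- This demo comes from Apple's official documentation; see the article AVCam: Building a Camera App for details. It captures photos with depth data and records video using the front and rear cameras of an iPhone or iPad. It is built against a recent iOS SDK and requires iOS 13.0 or later.

- The iOS Camera app lets you capture photos and movies from both the front and rear cameras. Depending on your device, the Camera app also supports still capture of depth data, portrait effects matte, and Live Photos.

- The AVCam sample project shows how to implement these capture features in your own camera app. It uses the basic capabilities of the built-in front and rear iPhone and iPad cameras.

- To run AVCam, you need an iOS device running iOS 13 or later. Because Xcode has no access to the device camera, the sample does not work in Simulator. AVCam hides the buttons for modes the current device does not support, such as portrait effects matte delivery on an iPhone 7 Plus.

- The project's code structure is shown in the figure below: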

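3.1.1 Configure a Capture Session

- An AVCaptureSession accepts input data from capture devices such as the camera and microphone. After receiving the input, the AVCaptureSession marshals that data to the appropriate outputs for processing, eventually producing a movie file or a still photo. After configuring the capture session's inputs and outputs, you tell it to start and, later, stop capture.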

private let session = AVCaptureSession()

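- AVCam selects the rear camera by default and configures a camera capture session to stream content to a video preview view. PreviewView is a custom UIView subclass backed by an AVCaptureVideoPreviewLayer. AVFoundation has no PreviewView class; the sample code creates one to facilitate session management.

- All interaction with the AVCaptureSession, including its inputs and outputs, is delegated to a dedicated serial dispatch queue (sessionQueue) so that it does not block the main queue. Perform any configuration that changes the session's topology or disrupts its running video streams on a separate dispatch queue, because session configuration always blocks execution of other tasks until the queue has processed the change. Similarly, the sample code dispatches other tasks to the session queue, such as resuming an interrupted session, toggling capture modes, switching cameras, and writing media to a file, so that their processing does not block or delay user interaction with the app.

- In contrast, the code dispatches tasks that affect the UI (such as updating the preview view) to the main queue, because AVCaptureVideoPreviewLayer, a subclass of CALayer, is the backing layer for the sample's preview view. You must manipulate UIView subclasses on the main thread for them to display in a timely, interactive fashion.

- In viewDidLoad, AVCam creates a session and assigns it to the preview view: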

previewView.session = session

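3.1.2 Request Authorization for Access to Input Devices

- Once the session is configured, it is ready to accept input. Each AVCaptureDevice, whether a camera or a microphone, requires the user to authorize access. AVFoundation enumerates the authorization state with AVAuthorizationStatus, which tells the app whether the user has restricted or denied access to a capture device.

- For more information about preparing your app's Info.plist for custom authorization requests, see Requesting Authorization for Media Capture on iOS.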

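3.1.3 Switch Between the Rear and Front Cameras

- The changeCamera method handles switching between cameras when the user taps a button in the UI. It uses a discovery session, which lists available device types in order of preference, and accepts the first device in its devices array. For example, the videoDeviceDiscoverySession in AVCam queries the device the app is running on for available input devices. Furthermore, if a user's device has a broken camera, that camera does not appear in the devices array.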

switch currentPosition {

case .unspecified, .front:

preferredPosition = .back

preferredDeviceType = .builtInDualCamera

case .back:

preferredPosition = .front

preferredDeviceType = .builtInTrueDepthCamera

@unknown default:

print("Unknown capture position. Defaulting to back, dual-camera.")

preferredPosition = .back

preferredDeviceType = .builtInDualCamera

}

- If the discovery session finds a camera in the proper position, changeCamera removes the previous input from the capture session and adds the new camera as an input.

// Remove the existing device input first, because AVCaptureSession doesn't support

// simultaneous use of the rear and front cameras.

self.session.removeInput(self.videoDeviceInput)

if self.session.canAddInput(videoDeviceInput) {

NotificationCenter.default.removeObserver(self, name: .AVCaptureDeviceSubjectAreaDidChange, object: currentVideoDevice)

NotificationCenter.default.addObserver(self, selector: #selector(self.subjectAreaDidChange), name: .AVCaptureDeviceSubjectAreaDidChange, object: videoDeviceInput.device)

self.session.addInput(videoDeviceInput)

self.videoDeviceInput = videoDeviceInput

} else {

self.session.addInput(self.videoDeviceInput)

}
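The preference-with-fallback choice that changeCamera makes can be sketched with plain enums. This Swift sketch does not use AVFoundation: `Position`, `DeviceType`, `Camera`, and both functions are hypothetical stand-ins, and the fallback rule (exact match on position and type first, then any camera at the preferred position) is an assumption about how the discovery results are filtered.

```swift
enum Position { case front, back, unspecified }
enum DeviceType { case wideAngle, dualCamera, trueDepth }

/// Hypothetical stand-in for AVCaptureDevice.
struct Camera: Equatable {
    let name: String
    let position: Position
    let type: DeviceType
}

/// Mirrors the switch statement above: flip the position and pick the
/// preferred device type for the new side.
func preferredTarget(after current: Position) -> (position: Position, type: DeviceType) {
    switch current {
    case .unspecified, .front:
        return (position: .back, type: .dualCamera)
    case .back:
        return (position: .front, type: .trueDepth)
    }
}

/// Prefers an exact match on position and type, then falls back to any
/// camera at the preferred position. Returns nil if none is available.
func nextCamera(after current: Position, in available: [Camera]) -> Camera? {
    let target = preferredTarget(after: current)
    if let exact = available.first(where: {
        $0.position == target.position && $0.type == target.type
    }) {
        return exact
    }
    return available.first(where: { $0.position == target.position })
}
```

On a device without a dual camera, switching from the front camera falls back to the rear wide-angle camera.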

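3.1.4 Handle Interruptions and Errors

- Interruptions such as phone calls, notifications from other apps, and music playback may occur during a capture session. Handle these interruptions by adding observers to listen for AVCaptureSessionWasInterrupted: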

NotificationCenter.default.addObserver(self,

selector: #selector(sessionWasInterrupted),

name: .AVCaptureSessionWasInterrupted,

object: session)

NotificationCenter.default.addObserver(self,

selector: #selector(sessionInterruptionEnded),

name: .AVCaptureSessionInterruptionEnded,

object: session)

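- When AVCam receives an interruption notification, it can pause or suspend the session with an option to resume activity when the interruption ends. AVCam registers sessionWasInterrupted as a handler for the notification, to inform the user when the capture session is interrupted: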

if reason == .audioDeviceInUseByAnotherClient || reason == .videoDeviceInUseByAnotherClient {

showResumeButton = true

} else if reason == .videoDeviceNotAvailableWithMultipleForegroundApps {

// Fade-in a label to inform the user that the camera is unavailable.

cameraUnavailableLabel.alpha = 0

cameraUnavailableLabel.isHidden = false

UIView.animate(withDuration: 0.25) {

self.cameraUnavailableLabel.alpha = 1

}

} else if reason == .videoDeviceNotAvailableDueToSystemPressure {

print("Session stopped running due to shutdown system pressure level.")

}

- The camera view controller observes AVCaptureSessionRuntimeError to receive a notification when an error occurs:

NotificationCenter.default.addObserver(self,

selector: #selector(sessionRuntimeError),

name: .AVCaptureSessionRuntimeError,

object: session)

- When a runtime error occurs, restart the capture session:

// If media services were reset, and the last start succeeded, restart the session.

if error.code == .mediaServicesWereReset {

sessionQueue.async {

if self.isSessionRunning {

self.session.startRunning()

self.isSessionRunning = self.session.isRunning

} else {

DispatchQueue.main.async {

self.resumeButton.isHidden = false

}

}

}

} else {

resumeButton.isHidden = false

}

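- The capture session may also stop if the device sustains system pressure, such as overheating. The camera won't degrade capture quality or drop frames on its own; to avoid surprising your users, you can have your app manually lower the frame rate, turn off depth, or modulate performance based on feedback from AVCaptureDevice.SystemPressureState: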

let pressureLevel = systemPressureState.level

if pressureLevel == .serious || pressureLevel == .critical {

if self.movieFileOutput == nil || self.movieFileOutput?.isRecording == false {

do {

try self.videoDeviceInput.device.lockForConfiguration()

print("WARNING: Reached elevated system pressure level: \(pressureLevel). Throttling frame rate.")

self.videoDeviceInput.device.activeVideoMinFrameDuration = CMTime(value: 1, timescale: 20)

self.videoDeviceInput.device.activeVideoMaxFrameDuration = CMTime(value: 1, timescale: 15)

self.videoDeviceInput.device.unlockForConfiguration()

} catch {

print("Could not lock device for configuration: \(error)")

}

}

} else if pressureLevel == .shutdown {

print("Session stopped running due to shutdown system pressure level.")

}
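The decision the snippet above makes can be expressed as a pure function, which makes it easy to check. In this Swift sketch, `PressureLevel` and `PressureResponse` are hypothetical mirrors of the AVFoundation types; the 15 to 20 frames-per-second window is read off the frame durations in the code above (1/20 s minimum duration, 1/15 s maximum duration).

```swift
/// Hypothetical mirror of AVCaptureDevice.SystemPressureState.Level.
enum PressureLevel { case nominal, fair, serious, critical, shutdown }

enum PressureResponse: Equatable {
    case none
    case throttle(minFPS: Int, maxFPS: Int)
    case sessionStopped
}

/// Decides how to react to a pressure level, following the sample above:
/// throttle only when no movie is being recorded.
func response(to level: PressureLevel, isRecording: Bool) -> PressureResponse {
    switch level {
    case .serious, .critical:
        // A 1/20 s minimum duration caps the rate at 20 fps;
        // a 1/15 s maximum duration keeps it at or above 15 fps.
        return isRecording ? .none : .throttle(minFPS: 15, maxFPS: 20)
    case .shutdown:
        return .sessionStopped
    case .nominal, .fair:
        return .none
    }
}
```

Keeping the policy separate from the device configuration calls lets it be tested without hardware.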

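3.1.5 Capture a Photo

- Taking a photo happens on the session queue. The process begins by updating the AVCapturePhotoOutput connection to match the video orientation of the video preview layer. This lets the camera accurately capture what the user sees onscreen: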

if let photoOutputConnection = self.photoOutput.connection(with: .video) {

photoOutputConnection.videoOrientation = videoPreviewLayerOrientation!

}

- After aligning the outputs, AVCam proceeds to create AVCapturePhotoSettings to configure capture parameters such as focus, flash, and resolution:

var photoSettings = AVCapturePhotoSettings()

// Capture HEIF photos when supported. Enable auto-flash and high-resolution photos.

if self.photoOutput.availablePhotoCodecTypes.contains(.hevc) {

photoSettings = AVCapturePhotoSettings(format: [AVVideoCodecKey: AVVideoCodecType.hevc])

}

if self.videoDeviceInput.device.isFlashAvailable {

photoSettings.flashMode = .auto

}

photoSettings.isHighResolutionPhotoEnabled = true

if !photoSettings.__availablePreviewPhotoPixelFormatTypes.isEmpty {

photoSettings.previewPhotoFormat = [kCVPixelBufferPixelFormatTypeKey as String: photoSettings.__availablePreviewPhotoPixelFormatTypes.first!]

}

// Live Photo capture is not supported in movie mode.

if self.livePhotoMode == .on && self.photoOutput.isLivePhotoCaptureSupported {

let livePhotoMovieFileName = NSUUID().uuidString

let livePhotoMovieFilePath = (NSTemporaryDirectory() as NSString).appendingPathComponent((livePhotoMovieFileName as NSString).appendingPathExtension("mov")!)

photoSettings.livePhotoMovieFileURL = URL(fileURLWithPath: livePhotoMovieFilePath)

}

photoSettings.isDepthDataDeliveryEnabled = (self.depthDataDeliveryMode == .on

&& self.photoOutput.isDepthDataDeliveryEnabled)

photoSettings.isPortraitEffectsMatteDeliveryEnabled = (self.portraitEffectsMatteDeliveryMode == .on

&& self.photoOutput.isPortraitEffectsMatteDeliveryEnabled)

if photoSettings.isDepthDataDeliveryEnabled {

if !self.photoOutput.availableSemanticSegmentationMatteTypes.isEmpty {

photoSettings.enabledSemanticSegmentationMatteTypes = self.selectedSemanticSegmentationMatteTypes

}

}

photoSettings.photoQualityPrioritization = self.photoQualityPrioritizationMode

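- The sample uses a separate object, PhotoCaptureProcessor, as the photo capture delegate to isolate each capture life cycle. This clear separation of capture cycles is necessary for Live Photos, where a single capture cycle may involve capturing several frames.

- Each time the user presses the central shutter button, AVCam captures a photo with the previously configured settings by calling capturePhoto(with:delegate:):

self.photoOutput.capturePhoto(with: photoSettings, delegate: photoCaptureProcessor)

- The capturePhoto method accepts two parameters:

- An AVCapturePhotoSettings object that encapsulates the settings the user configures through the app, such as exposure, flash, focus, and torch.

- A delegate conforming to the AVCapturePhotoCaptureDelegate protocol, to respond to subsequent callbacks that the system delivers during photo capture.

- Once the app calls capturePhoto(with:delegate:), the process for starting photography is over. From then on, operations on that individual photo capture happen in delegate callbacks.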

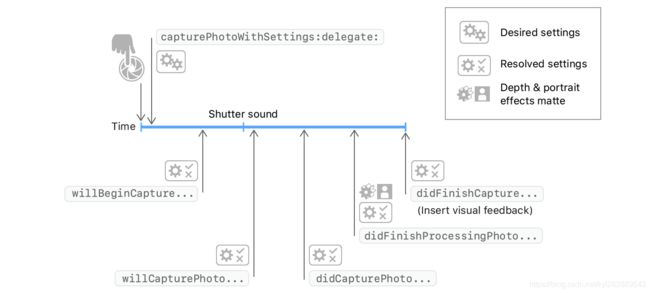

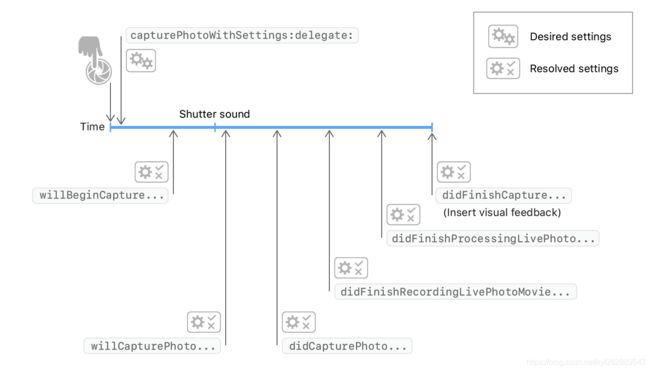

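3.1.6 Track Results Through a Photo Capture Delegate

- When you call capturePhoto, photoOutput(_:willBeginCaptureFor:) arrives first. The resolved settings represent the actual settings the camera will apply for the upcoming photo. AVCam uses this method only for behavior specific to Live Photos. AVCam tells whether the photo is a Live Photo by checking its livePhotoMovieDimensions size; if it is, AVCam increments a count to track Live Photos in progress: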

self.sessionQueue.async {

if capturing {

self.inProgressLivePhotoCapturesCount += 1

} else {

self.inProgressLivePhotoCapturesCount -= 1

}

let inProgressLivePhotoCapturesCount = self.inProgressLivePhotoCapturesCount

DispatchQueue.main.async {

if inProgressLivePhotoCapturesCount > 0 {

self.capturingLivePhotoLabel.isHidden = false

} else if inProgressLivePhotoCapturesCount == 0 {

self.capturingLivePhotoLabel.isHidden = true

} else {

print("Error: In progress Live Photo capture count is less than 0.")

}

}

}
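The counting logic above can be isolated in a small value type. This Swift sketch is an illustration only: `LivePhotoCaptureCounter` is a hypothetical name, and clamping the count at zero is a design choice of the sketch, whereas the sample above logs an error when the count drops below zero.

```swift
/// Tracks overlapping Live Photo captures and reports whether the
/// "Live" label should be visible.
struct LivePhotoCaptureCounter {
    private(set) var inProgress = 0

    /// Call with true when a capture begins and false when it ends.
    /// Returns true while at least one capture is still in progress.
    @discardableResult
    mutating func captureChanged(isCapturing: Bool) -> Bool {
        // Clamp at zero so an unmatched "end" cannot make the count negative.
        inProgress = max(0, inProgress + (isCapturing ? 1 : -1))
        return inProgress > 0
    }
}
```

Because Live Photo captures can overlap, the label stays visible until the last one finishes.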

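- photoOutput(_:willCapturePhotoFor:) arrives right after the system plays the shutter sound. AVCam uses this opportunity to flash the screen, alerting the user that the camera captured a photo. The sample code implements this flash by animating the preview view layer's opacity from 0 to 1.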

// Flash the screen to signal that AVCam took a photo.

DispatchQueue.main.async {

self.previewView.videoPreviewLayer.opacity = 0

UIView.animate(withDuration: 0.25) {

self.previewView.videoPreviewLayer.opacity = 1

}

}

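- photoOutput(_:didFinishProcessingPhoto:error:) arrives when the system finishes processing depth data and a portrait effects matte. AVCam checks for a portrait effects matte and depth metadata at this stage: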

self.sessionQueue.async {

self.inProgressPhotoCaptureDelegates[photoCaptureProcessor.requestedPhotoSettings.uniqueID] = nil

}

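- You can apply other visual effects in this delegate method, such as animating a preview thumbnail of the captured photo.

- For more information about tracking photo progress through delegate callbacks, see Tracking Photo Capture Progress.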

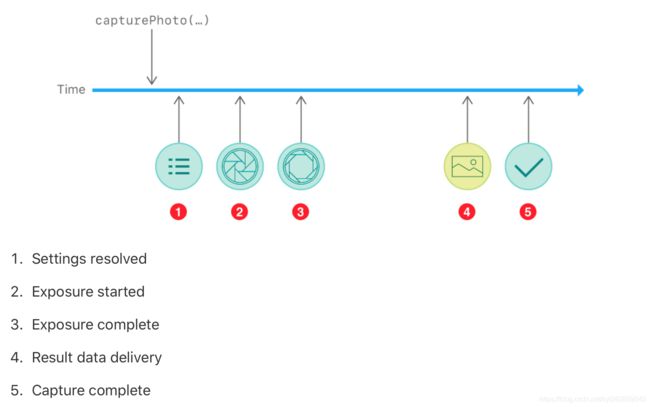

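Capturing a photo with an iOS device camera is a complex process involving physical camera mechanisms, image signal processing, the operating system, and your app. While your app can ignore many stages of this process and simply wait for the final result, you can create a more responsive camera interface by monitoring each step.

After calling capturePhoto(with:delegate:), your delegate object can follow five major steps in the process (or more, depending on your photo settings). Depending on your capture workflow and the capture UI you want to create, your delegate can handle some or all of these steps. In order, the corresponding AVCapturePhotoCaptureDelegate callbacks are photoOutput(_:willBeginCaptureFor:), photoOutput(_:willCapturePhotoFor:), photoOutput(_:didCapturePhotoFor:), photoOutput(_:didFinishProcessingPhoto:error:), and photoOutput(_:didFinishCaptureFor:error:).

The capture system provides an AVCaptureResolvedPhotoSettings object at each step of this process. Because multiple captures can be in progress at the same time, each resolved photo settings object has a uniqueID whose value matches the uniqueID of the AVCapturePhotoSettings you used to take the photo.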
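The uniqueID matching described above is what allows several captures to be tracked at once. The Swift sketch below shows the idea with a plain dictionary; `CaptureRegistry` is a hypothetical type, and the generic `Processor` parameter stands in for a delegate object such as PhotoCaptureProcessor.

```swift
/// Keeps one processor alive per in-flight capture, keyed by the
/// uniqueID shared by the requested and the resolved photo settings.
final class CaptureRegistry<Processor> {
    private var inProgress: [Int64: Processor] = [:]

    /// Number of captures that have started but not yet finished.
    var count: Int {
        return inProgress.count
    }

    /// Call just before starting a capture.
    func register(_ processor: Processor, uniqueID: Int64) {
        inProgress[uniqueID] = processor
    }

    /// Looks up the processor for a callback's resolved settings ID.
    func processor(forResolvedID id: Int64) -> Processor? {
        return inProgress[id]
    }

    /// Call when the capture has finished, releasing the processor.
    func finish(uniqueID: Int64) {
        inProgress[uniqueID] = nil
    }
}
```

This mirrors the inProgressPhotoCaptureDelegates dictionary in the sample, which sets an entry to nil once its capture is complete.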

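3.1.7 Capture Live Photos

- When you enable Live Photo capture, the camera takes one still image and a short movie around the moment of capture. The app triggers Live Photo capture the same way as still photo capture: through a single call to capturePhoto(with:delegate:), where you pass the URL for the Live Photo's short video through the livePhotoMovieFileURL property. You can enable Live Photos at the AVCapturePhotoOutput level, or configure them at the AVCapturePhotoSettings level on a per-capture basis.

- Since Live Photo capture creates a short movie file, AVCam must express where to save the movie file as a URL. Also, because Live Photo captures can overlap, the code must keep track of the number of in-progress Live Photo captures to ensure that the Live Photo label stays visible during these captures. The photoOutput(_:willBeginCaptureFor:) delegate method in the previous section implements this tracking counter.

- photoOutput(_:didFinishRecordingLivePhotoMovieForEventualFileAt:resolvedSettings:) fires when recording of the short movie ends. AVCam dismisses the Live badge here. Because the camera has finished recording the short movie, AVCam executes the Live Photo handler, decrementing the completion counter:

livePhotoCaptureHandler(false)

- photoOutput(_:didFinishProcessingLivePhotoToMovieFileAt:duration:photoDisplayTime:resolvedSettings:error:) fires last, indicating that the movie is fully written to disk and ready for consumption. AVCam uses this opportunity to display any capture errors and redirect the saved file URL to its final output location: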

if error != nil {

print("Error processing Live Photo companion movie: \(String(describing: error))")

return

}

livePhotoCompanionMovieURL = outputFileURL

- For more information about incorporating Live Photo capture into your app, see Capturing Still and Live Photos.

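3.1.8 Capture Depth Data and Portrait Effects Matte

- Using AVCapturePhotoOutput, AVCam queries the capture device to see whether its configuration can deliver depth data and a portrait effects matte to still images. If the input device supports either of these modes, and you enable them in the capture settings, the camera attaches depth and portrait effects matte as auxiliary metadata on a per-photo request basis. If the device supports delivery of depth data, portrait effects matte, or Live Photos, the app shows a button used to toggle the settings for enabling or disabling the feature.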

if self.photoOutput.isDepthDataDeliverySupported {

self.photoOutput.isDepthDataDeliveryEnabled = true

DispatchQueue.main.async {

self.depthDataDeliveryButton.isEnabled = true

}

}

if self.photoOutput.isPortraitEffectsMatteDeliverySupported {

self.photoOutput.isPortraitEffectsMatteDeliveryEnabled = true

DispatchQueue.main.async {

self.portraitEffectsMatteDeliveryButton.isEnabled = true

}

}

if !self.photoOutput.availableSemanticSegmentationMatteTypes.isEmpty {

self.photoOutput.enabledSemanticSegmentationMatteTypes = self.photoOutput.availableSemanticSegmentationMatteTypes

self.selectedSemanticSegmentationMatteTypes = self.photoOutput.availableSemanticSegmentationMatteTypes

DispatchQueue.main.async {

self.semanticSegmentationMatteDeliveryButton.isEnabled = (self.depthDataDeliveryMode == .on) ? true : false

}

}

DispatchQueue.main.async {

self.livePhotoModeButton.isHidden = false

self.depthDataDeliveryButton.isHidden = false

self.portraitEffectsMatteDeliveryButton.isHidden = false

self.semanticSegmentationMatteDeliveryButton.isHidden = false

self.photoQualityPrioritizationSegControl.isHidden = false

self.photoQualityPrioritizationSegControl.isEnabled = true

}

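- The camera stores depth and portrait effects matte metadata as auxiliary images, discoverable and addressable through the Image I/O API. AVCam accesses this metadata by searching for an auxiliary image of type kCGImageAuxiliaryDataTypePortraitEffectsMatte: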

if var portraitEffectsMatte = photo.portraitEffectsMatte {

if let orientation = photo.metadata[String(kCGImagePropertyOrientation)] as? UInt32 {

portraitEffectsMatte = portraitEffectsMatte.applyingExifOrientation(CGImagePropertyOrientation(rawValue: orientation)!)

}

let portraitEffectsMattePixelBuffer = portraitEffectsMatte.mattingImage

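- For more information about depth data capture, see Capturing Photos with Depth.

On iOS devices with a rear dual camera or a front TrueDepth camera, the capture system can record depth information. A depth map is like an image; however, instead of each pixel providing a color, it indicates the distance from the camera to that part of the image (either in absolute terms, or relative to other pixels in the depth map).

You can use a depth map together with a photo to create image-processing effects that treat foreground and background elements of the photo differently, like the Portrait mode in the iOS Camera app. By saving color and depth data separately, you can even apply and change these effects long after the photo has been captured.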

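3.1.9 Capture Semantic Segmentation Mattes

- Using AVCapturePhotoOutput, AVCam can also capture semantic segmentation matte images, which segment a person's hair, skin, and teeth into distinct matte images. Capturing these auxiliary images along with your primary photo simplifies applying photo effects, such as changing a person's hair color or brightening their smile.

You enable the capture of these auxiliary images by setting the photo output's enabledSemanticSegmentationMatteTypes property to your preferred values (hair, skin, and teeth). To capture all supported types, set this property to match the photo output's availableSemanticSegmentationMatteTypes property.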

// Capture all available semantic segmentation matte types.

photoOutput.enabledSemanticSegmentationMatteTypes =

photoOutput.availableSemanticSegmentationMatteTypes

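- When the photo output finishes capturing a photo, you retrieve the associated segmentation matte images by calling the photo's semanticSegmentationMatte(for:) method. This method returns an AVSemanticSegmentationMatte that contains the matte image and additional metadata you can use when processing the image. The sample app adds the semantic segmentation matte image data to an array so you can write it to the user's photo library.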

// Find the semantic segmentation matte image for the specified type.

guard var segmentationMatte = photo.semanticSegmentationMatte(for: ssmType) else { return }

// Retrieve the photo orientation and apply it to the matte image.

if let orientation = photo.metadata[String(kCGImagePropertyOrientation)] as? UInt32,

let exifOrientation = CGImagePropertyOrientation(rawValue: orientation) {

// Apply the Exif orientation to the matte image.

segmentationMatte = segmentationMatte.applyingExifOrientation(exifOrientation)

}

var imageOption: CIImageOption!

// Switch on the AVSemanticSegmentationMatteType value.

switch ssmType {

case .hair:

imageOption = .auxiliarySemanticSegmentationHairMatte

case .skin:

imageOption = .auxiliarySemanticSegmentationSkinMatte

case .teeth:

imageOption = .auxiliarySemanticSegmentationTeethMatte

default:

print("This semantic segmentation type is not supported!")

return

}

guard let perceptualColorSpace = CGColorSpace(name: CGColorSpace.sRGB) else { return }

// Create a new CIImage from the matte's underlying CVPixelBuffer.

let ciImage = CIImage( cvImageBuffer: segmentationMatte.mattingImage,

options: [imageOption: true,

.colorSpace: perceptualColorSpace])

// Get the HEIF representation of this image.

guard let imageData = context.heifRepresentation(of: ciImage,

format: .RGBA8,

colorSpace: perceptualColorSpace,

options: [.depthImage: ciImage]) else { return }

// Add the image data to the SSM data array for writing to the photo library.

semanticSegmentationMatteDataArray.append(imageData)

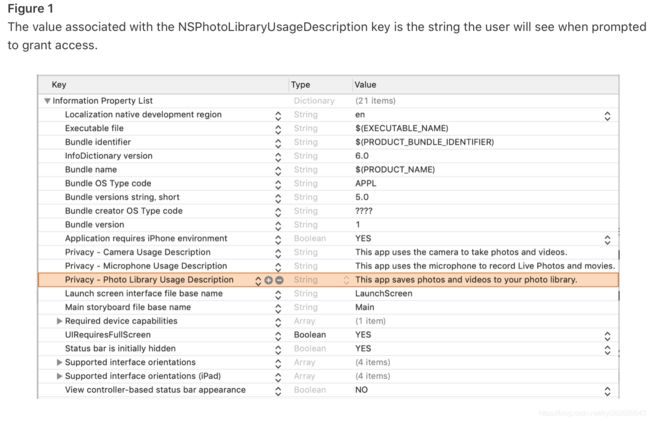

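3.1.10 Save Photos to the User's Photo Library

- Before you can save an image or movie to the user's photo library, you must first request access to that library. The process for requesting write authorization mirrors capture device authorization: show an alert with text that you provide in Info.plist.

- AVCam checks for authorization in the fileOutput(_:didFinishRecordingTo:from:error:) callback method, where the AVCaptureOutput provides the media data to save as output:

PHPhotoLibrary.requestAuthorization { status in

- For more information about requesting access to the user's photo library, see Requesting Authorization to Access Photos.

- Users must explicitly grant your app permission to access Photos. Prepare your app by providing a purpose string: a localizable message, added to your app's Info.plist file, that tells the user why your app needs access to their photo library. Then, when Photos prompts the user to grant access, the alert displays the purpose string you provided, in the locale selected on the user's device.

- The first time your app uses PHAsset, PHCollection, PHAssetCollection, or PHCollectionList methods to fetch content from the library, or uses one of the photo library's change-request methods to change library content, Photos automatically and asynchronously prompts the user to request authorization.

- After the user grants permission, the system remembers the choice for future use in your app, but the user can change this choice at any time using the Settings app. If the user has denied your app photo library access, has not yet responded to the permission prompt, or cannot grant access because of restrictions, any attempt to fetch photo library content returns an empty PHFetchResult object, and any attempt to make changes to the photo library fails. If the authorization status is still undetermined, you can call the requestAuthorization(_:) method to prompt the user for photo library access permission.

- To use classes that interact with the photo library, such as PHAsset, PHPhotoLibrary, and PHImageManager, your app's Info.plist file must include user-facing text for the NSPhotoLibraryUsageDescription key, which the system displays when asking the user for access. Without this key, apps on iOS 10 or later will crash.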

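3.1.11 Record Movie Files

- AVCam supports video capture by querying and adding input devices with the .video qualifier. The app defaults to the rear dual camera, but if the device has no dual camera, it defaults to the wide-angle camera.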

if let dualCameraDevice = AVCaptureDevice.default(.builtInDualCamera, for: .video, position: .back) {

defaultVideoDevice = dualCameraDevice

} else if let backCameraDevice = AVCaptureDevice.default(.builtInWideAngleCamera, for: .video, position: .back) {

// If a rear dual camera is not available, default to the rear wide angle camera.

defaultVideoDevice = backCameraDevice

} else if let frontCameraDevice = AVCaptureDevice.default(.builtInWideAngleCamera, for: .video, position: .front) {

// If the rear wide angle camera isn't available, default to the front wide angle camera.

defaultVideoDevice = frontCameraDevice

}
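The listing above only selects a device. Adding it to the session as an input might look like the sketch below, which assumes an already created AVCaptureSession. `addVideoInput` is a hypothetical helper name, not an AVFoundation API.

```swift
import AVFoundation

/// Wraps the chosen camera in a device input and adds it to the session.
/// Returns the input on success, or nil if it could not be created or added.
/// `addVideoInput` is a hypothetical helper name.
func addVideoInput(for device: AVCaptureDevice,
                   to session: AVCaptureSession) -> AVCaptureDeviceInput? {
    // Creating the input throws if the device cannot be opened.
    guard let input = try? AVCaptureDeviceInput(device: device) else { return nil }

    // Group the change so the session applies it atomically.
    session.beginConfiguration()
    defer { session.commitConfiguration() }

    guard session.canAddInput(input) else { return nil }
    session.addInput(input)
    return input
}
```

Calling this on the session's serial queue, rather than on the main queue, keeps the UI responsive while the session reconfigures.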

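- Instead of passing settings to the system, as you do for still photos, you pass an output URL, as you do for Live Photos. The delegate callbacks provide the same URL, so the app does not need to store it in an intermediate variable.

- Once the user taps Record to begin capturing, AVCam calls startRecording(to:recordingDelegate:):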

movieFileOutput.startRecording(to: URL(fileURLWithPath: outputFilePath), recordingDelegate: self)
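The call above uses an `outputFilePath` that the listing does not define. One way to build such a path is a unique file name in the temporary directory, sketched below. `makeTemporaryMoviePath` is a hypothetical helper name, and the "mov" extension is an assumption.

```swift
import Foundation

/// Returns a unique file path in the temporary directory for a new movie file.
/// `makeTemporaryMoviePath` is a hypothetical helper; the default extension is an assumption.
func makeTemporaryMoviePath(fileExtension: String = "mov") -> String {
    // A UUID keeps consecutive recordings from overwriting each other.
    let fileName = UUID().uuidString
    return URL(fileURLWithPath: NSTemporaryDirectory())
        .appendingPathComponent(fileName)
        .appendingPathExtension(fileExtension)
        .path
}
```

The resulting string can then be wrapped with URL(fileURLWithPath:) exactly as in the startRecording call above.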

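- Just as capturePhoto triggers delegate callbacks for still capture, startRecording triggers a series of delegate callbacks for movie recording.

- Track the progress of the movie recording through this chain of delegate callbacks. Instead of implementing AVCapturePhotoCaptureDelegate, implement AVCaptureFileOutputRecordingDelegate. Because the movie-recording delegate callbacks need to interact with the capture session, AVCam makes CameraViewController the delegate instead of creating a separate delegate object.

- fileOutput(_:didStartRecordingTo:from:) fires when the file output starts writing data to a file. AVCam uses this opportunity to change the Record button into a Stop button: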

DispatchQueue.main.async {

self.recordButton.isEnabled = true

self.recordButton.setImage(#imageLiteral(resourceName: "CaptureStop"), for: [])

}

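- fileOutput(_:didFinishRecordingTo:from:error:) fires last, indicating that the movie has been fully written to disk and is ready to use. AVCam uses this opportunity to move the temporarily saved movie from the given URL to the user's photo library or to the app's documents folder: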

PHPhotoLibrary.shared().performChanges({

let options = PHAssetResourceCreationOptions()

options.shouldMoveFile = true

let creationRequest = PHAssetCreationRequest.forAsset()

creationRequest.addResource(with: .video, fileURL: outputFileURL, options: options)

}, completionHandler: { success, error in

if !success {

print("AVCam couldn't save the movie to your photo library: \(String(describing: error))")

}

cleanup()

}

)
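The completion handler above calls cleanup(), which the listing does not show. A plausible part of that step is deleting the temporary movie file if it is still on disk, for example when the save failed and the file was not moved. The sketch below covers only that part; `removeTemporaryMovie` is a hypothetical name.

```swift
import Foundation

/// Deletes the temporary movie file if it still exists.
/// Returns true when no file remains at `url` afterwards.
/// `removeTemporaryMovie` is a hypothetical helper, not AVCam's cleanup().
@discardableResult
func removeTemporaryMovie(at url: URL) -> Bool {
    let fileManager = FileManager.default
    // Nothing to do if the file was already moved or deleted.
    guard fileManager.fileExists(atPath: url.path) else { return true }
    do {
        try fileManager.removeItem(at: url)
        return true
    } catch {
        print("Could not remove file at \(url): \(error)")
        return false
    }
}
```

Because the function returns true when the file is already gone, calling it twice for the same URL is harmless.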

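- If AVCam goes into the background (for example, when the user accepts an incoming phone call), the app must ask the user for permission to continue recording. AVCam requests time from the system to perform the save by using a background task. This background task ensures that there is enough time to write the file to the photo library even when AVCam moves to the background. To conclude background execution, AVCam calls endBackgroundTask(_:) in fileOutput(_:didFinishRecordingTo:from:error:) after saving the recorded file.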

self.backgroundRecordingID = UIApplication.shared.beginBackgroundTask(expirationHandler: nil)
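The pairing of beginBackgroundTask and endBackgroundTask(_:) described above can be sketched as a small wrapper. This is an illustration under assumptions, not AVCam's code: `RecordingBackgroundTask` is a hypothetical type, and the sketch assumes both methods are called from the same thread.

```swift
import UIKit

/// Keeps track of one background task so it is ended exactly once.
/// `RecordingBackgroundTask` is a hypothetical wrapper type.
final class RecordingBackgroundTask {
    private var identifier: UIBackgroundTaskIdentifier = .invalid

    /// Asks the system for extra execution time, for example when recording starts.
    func begin() {
        guard identifier == .invalid else { return }
        identifier = UIApplication.shared.beginBackgroundTask { [weak self] in
            // The granted time is about to run out: end the task to avoid termination.
            self?.end()
        }
    }

    /// Ends the task, for example after the movie has been saved.
    func end() {
        guard identifier != .invalid else { return }
        let finished = identifier
        identifier = .invalid
        UIApplication.shared.endBackgroundTask(finished)
    }
}
```

Resetting the stored identifier before calling endBackgroundTask(_:) prevents the same task from being ended twice if both the save path and the expiration handler run.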

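3.1.12 Taking Photos While Recording a Movie

- Like the iOS Camera app, AVCam can take photos while it is recording a movie. AVCam captures these photos at the same resolution as the video. The implementation is as follows: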

let movieFileOutput = AVCaptureMovieFileOutput()

if self.session.canAddOutput(movieFileOutput) {

self.session.beginConfiguration()

self.session.addOutput(movieFileOutput)

self.session.sessionPreset = .high

if let connection = movieFileOutput.connection(with: .video) {

if connection.isVideoStabilizationSupported {

connection.preferredVideoStabilizationMode = .auto

}

}

self.session.commitConfiguration()

DispatchQueue.main.async {

captureModeControl.isEnabled = true

}

self.movieFileOutput = movieFileOutput

DispatchQueue.main.async {

self.recordButton.isEnabled = true

/*

For photo captures during movie recording, Speed quality photo processing is prioritized

to avoid frame drops during recording.

*/

self.photoQualityPrioritizationSegControl.selectedSegmentIndex = 0

self.photoQualityPrioritizationSegControl.sendActions(for: UIControl.Event.valueChanged)

}

}
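The listing above prepares the session for movie recording and switches photo processing to speed priority. Taking the still itself while a recording is in progress could then look like the sketch below. `captureStillDuringRecording` is a hypothetical helper name, and the sketch assumes both outputs are already attached to a running session.

```swift
import AVFoundation

/// Takes a still photo only while a movie recording is in progress.
/// `captureStillDuringRecording` is a hypothetical helper name.
func captureStillDuringRecording(photoOutput: AVCapturePhotoOutput,
                                 movieFileOutput: AVCaptureMovieFileOutput,
                                 delegate: AVCapturePhotoCaptureDelegate) {
    guard movieFileOutput.isRecording else { return }

    let settings = AVCapturePhotoSettings()
    // Prioritize speed, as the comment in the listing suggests, to avoid dropped video frames.
    settings.photoQualityPrioritization = .speed

    // The caller should keep `delegate` alive until the capture callbacks have finished.
    photoOutput.capturePhoto(with: settings, delegate: delegate)
}
```

A fresh AVCapturePhotoSettings instance is created for every capture, because a settings object cannot be reused across capture requests.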

- The complete code is available for download here: Swift video capture demo