BAM注意力机制——pytorch实现

论文传送门:BAM: Bottleneck Attention Module

BAM的目的:

为网络添加注意力机制。

BAM的结构:

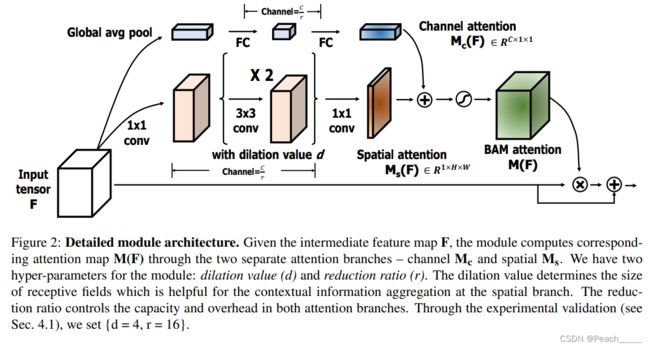

①通道注意力机制(Channel attention branch):与SEblock相似;

②空间注意力机制(Spatial attention branch):1x1卷积进行维度缩减,2个3x3空洞卷积(dilated convolution)增加感受野,1x1卷积输出单通道权重;

③二者并联。

import torch

import torch.nn as nn

class ChannelAttention(nn.Module): # Channel attention branch

def __init__(self, channels, r=16): # r: reduction ratio

super(ChannelAttention, self).__init__()

hidden_channels = channels // r

self.attn = nn.Sequential(

nn.AdaptiveAvgPool2d(1), # avgpool

nn.Conv2d(channels, hidden_channels, 1, 1, 0), # 1x1conv,代替全连接

nn.Conv2d(hidden_channels, channels, 1, 1, 0), # 1x1conv,代替全连接

nn.BatchNorm2d(channels) # bn

)

def forward(self, x):

return self.attn(x) # Mc(F) = BN(MLP(AvgPool(F))) = BN(W1(W0AvgPool(F)+b0)+b1),对应原文公式(3),(B,C,1,1)

class SpatialAttention(nn.Module): # Spatial attention branch

def __init__(self, channels, r=16, d=4): # r: reduction ratio; d: dilation value

super(SpatialAttention, self).__init__()

hidden_channels = channels // r

self.attn = nn.Sequential(

nn.Conv2d(channels, hidden_channels, 1, 1, 0, bias=False), # 1x1conv

# 对于kernel_size=3,stride=1的卷积,padding=dilation,保证卷积前后尺寸不变

nn.Conv2d(hidden_channels, hidden_channels, 3, 1, d, d, bias=False), # dilated conv

nn.Conv2d(hidden_channels, hidden_channels, 3, 1, d, d, bias=False), # dilated conv

nn.Conv2d(hidden_channels, 1, 1, 1, 0, bias=False), # 1x1conv,输出通道为1

nn.BatchNorm2d(1) # bn

)

def forward(self, x):

return self.attn(x) # Ms(F) = BN(f31×1(f23×3(f13×3(f01×1(F))))),对应原文公式(4),(B,1,H,W)

class BAM(nn.Module): # BAM

def __init__(self, channels, r=16, d=4):

super(BAM, self).__init__()

self.channel_attention = ChannelAttention(channels, r) # channel attention branch

self.spatial_attention = SpatialAttention(channels, r, d) # spatial attention branch

self.sigmoid = nn.Sigmoid() # sigmoid

def forward(self, x):

_, c, h, w = x.shape # b,c,h,w

channel_weights = torch.repeat_interleave(

self.channel_attention(x), repeats=h * w, dim=2

).view(-1, c, h,w) # (B,C,1,1) -> (B,C,H,W)

spatial_weights = torch.repeat_interleave(

self.spatial_attention(x), repeats=c, dim=1

) # (B,1,H,W) -> (B,C,H,W)

weights = self.sigmoid(channel_weights + spatial_weights) # M(F) = σ(Mc(F)+Ms(F)),对应原文公式(2)

return x + x * weights # F0 = F+F⊗M(F),对应原文公式(1)