动手学深度学习——批量归一化

1、批量归一化

损失出现在最后,后面的层训练较快;

数据在最底部,底部的层训练的慢;底部层一变化,所有都得跟着变;最后的那些层需要重新学习多次;导致收敛变慢;

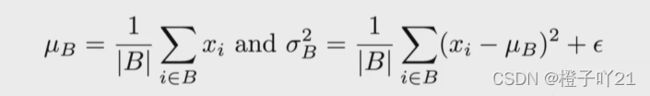

固定小批量里面的均差和方差:

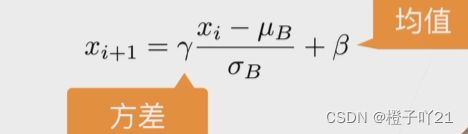

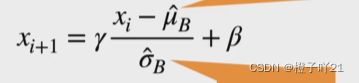

然后再做额外的调整(可学习的参数):

2、批量归一化层

可学习的参数为γ和β;

作用在全连接层和卷积层输出上,激活函数前;全连接层和卷积层输入上;

对全连接层,作用在特征维;

对于卷积层,作用在通道维上。

3、批量归一化在做什么

最初是想用它来减少内部变量转移;后续指出它可能就是通过在每个小批量里加入噪音来控制模型复杂度。

因此没有必要跟丢弃法混合使用。

4、总结

批量归一化固定小批量中的均值和方差,然后学习出适合的便宜和缩放;

可以加速收敛速度,但一般不改变模型精度。

5、代码实现

"""批量归一化 从零开始实现"""

import time

import torch

from torch import nn, optim

import torch.nn.functional as F

import sys

sys.path.append("..")

import d2lzh_pytorch as d2l

device = torch.device('cuda' if torch.cuda.is_available() else'cpu')

def batch_norm(is_training, X, gamma, beta, moving_mean,moving_var, eps, momentum):

# 判断当前模式是训练模式还是预测模式

if not is_training:

# 如果是在预测模式下,直接使⽤传⼊的移动平均所得的均值和⽅差

X_hat = (X - moving_mean) / torch.sqrt(moving_var + eps)

else:

assert len(X.shape) in (2, 4)

if len(X.shape) == 2:

# 使⽤全连接层的情况,计算特征维上的均值和⽅差

mean = X.mean(dim=0)

var = ((X - mean) ** 2).mean(dim=0)

else:

# 使⽤⼆维卷积层的情况,计算通道维上(axis=1)的均值和⽅差。这⾥我们需要保持

mean = X.mean(dim=0, keepdim=True).mean(dim=2,keepdim=True).mean(dim=3, keepdim=True)

var = ((X - mean) ** 2).mean(dim=0,keepdim=True).mean(dim=2, keepdim=True).mean(dim=3, keepdim=True)

# 训练模式下⽤当前的均值和⽅差做标准化

X_hat = (X - mean) / torch.sqrt(var + eps)

# 更新移动平均的均值和⽅差

moving_mean = momentum * moving_mean + (1.0 - momentum) *mean

moving_var = momentum * moving_var + (1.0 - momentum) * var

Y = gamma * X_hat + beta # 拉伸和偏移

return Y, moving_mean, moving_var

#创建一个正确的BatchNorm图层

class BatchNorm(nn.Module):

def __init__(self, num_features, num_dims):

super(BatchNorm, self).__init__()

if num_dims == 2:

shape = (1, num_features)

else:

shape = (1, num_features, 1, 1)

# 参与求梯度和迭代的拉伸和偏移参数,分别初始化成0和1

self.gamma = nn.Parameter(torch.ones(shape))

self.beta = nn.Parameter(torch.zeros(shape))

# 不参与求梯度和迭代的变量,全在内存上初始化成0

self.moving_mean = torch.zeros(shape)

self.moving_var = torch.zeros(shape)

def forward(self, X):

# 如果X不在内存上,将moving_mean和moving_var复制到X所在显存上

if self.moving_mean.device != X.device:

self.moving_mean = self.moving_mean.to(X.device)

self.moving_var = self.moving_var.to(X.device)

# 保存更新过的moving_mean和moving_var, Module实例的traning属性默认为true, 调⽤.eval()后设成false

Y, self.moving_mean, self.moving_var = batch_norm(self.training, X, self.gamma, self.beta, self.moving_mean,self.moving_var, eps=1e-5, momentum=0.9)

return Y

""" 使⽤批量归⼀化层的LeNet"""

net = nn.Sequential( nn.Conv2d(1, 6, 5),

# in_channels, out_channels,kernel_size

BatchNorm(6, num_dims=4),

nn.Sigmoid(),

nn.MaxPool2d(2, 2), # kernel_size, stride

nn.Conv2d(6, 16, 5),

BatchNorm(16, num_dims=4),

nn.Sigmoid(),

nn.MaxPool2d(2, 2),

d2l.FlattenLayer(),

nn.Linear(16*4*4, 120),

BatchNorm(120, num_dims=2),

nn.Sigmoid(),

nn.Linear(120, 84),

BatchNorm(84, num_dims=2),

nn.Sigmoid(),

nn.Linear(84, 10)

)

#训练修改后的模型

batch_size = 256

train_iter, test_iter =d2l.load_data_fashion_mnist(batch_size=batch_size)

lr, num_epochs = 0.001, 5

optimizer = torch.optim.Adam(net.parameters(), lr=lr)

d2l.train_ch5(net, train_iter, test_iter, batch_size, optimizer,device, num_epochs)

"""批量归一化 简介实现"""

net = nn.Sequential(

nn.Conv2d(1, 6, 5), # in_channels, out_channels,kernel_size

nn.BatchNorm2d(6),

nn.Sigmoid(),

nn.MaxPool2d(2, 2), # kernel_size, stride

nn.Conv2d(6, 16, 5),

nn.BatchNorm2d(16),

nn.Sigmoid(),

nn.MaxPool2d(2, 2),

d2l.FlattenLayer(),

nn.Linear(16*4*4, 120),

nn.BatchNorm1d(120),

nn.Sigmoid(),

nn.Linear(120, 84),

nn.BatchNorm1d(84),

nn.Sigmoid(),

nn.Linear(84, 10)

)

#使⽤同样的超参数进⾏训练。

batch_size = 256

train_iter, test_iter =d2l.load_data_fashion_mnist(batch_size=batch_size)

lr, num_epochs = 0.001, 5

optimizer = torch.optim.Adam(net.parameters(), lr=lr)

d2l.train_ch5(net, train_iter, test_iter, batch_size, optimizer,device, num_epochs)