PyTorch Basics (5): Building an Inception Network Model

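In the previous post I described how to build a basic CNN. Since then I have studied a somewhat more advanced architecture, Inception. Its core idea is that you do not have to decide by hand which filter size to use, or whether to use pooling at all. Instead, you add all the candidate options to the network in parallel, concatenate their outputs, and let the network learn these parameters itself, so it decides which combination of filters to rely on.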

In this post I train an Inception network on the MNIST dataset. The key part is building the Inception block of the architecture.

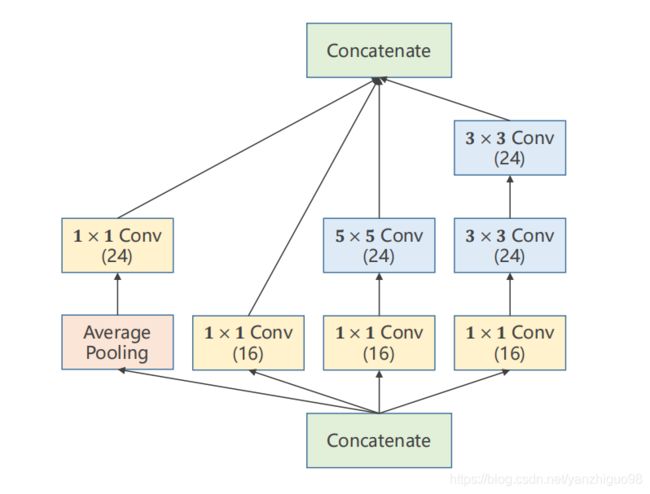

1. The Inception module diagram

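The figure above shows that the module has four branches. Their outputs are concatenated along the channel dimension, giving 24 + 24 + 24 + 16 = 88 channels. This number is the key to the module, and it makes the dimensions later in the network easier to work out.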
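Here is a minimal sketch of the concatenation step. Hypothetical all-zero tensors stand in for the four branch outputs, and the batch size of 1 and the 12×12 feature maps are assumed sizes. Calling `torch.cat` along `dim=1` adds up the channel counts. This works only because every branch keeps the same height and width.

```python
import torch

# Hypothetical stand-ins for the four branch outputs (batch 1, 12x12 maps),
# with the channel counts from the figure
branch1x1 = torch.zeros(1, 16, 12, 12)
branch5x5 = torch.zeros(1, 24, 12, 12)
branch3x3 = torch.zeros(1, 24, 12, 12)
branch_pool = torch.zeros(1, 24, 12, 12)

# dim=1 is the channel dimension in (N, C, H, W); H and W must match across branches
out = torch.cat([branch1x1, branch5x5, branch3x3, branch_pool], dim=1)
print(out.shape)  # torch.Size([1, 88, 12, 12])
```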

2. Import libraries, preprocess the data, and build the data loaders

```python
import torch
from torchvision import datasets
from torchvision import transforms
import torch.nn.functional as F
from torch.utils.data import DataLoader

# Preprocessing: convert images to tensors and normalize with the MNIST mean/std
transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.1307,), (0.3081,))
])

# Load the dataset (download=True only downloads if the files are not already under root)
train_dataset = datasets.MNIST(
    root='../dataset/mnist',
    download=True,
    train=True,
    transform=transform
)
test_dataset = datasets.MNIST(
    root='../dataset/mnist',
    download=True,
    train=False,
    transform=transform
)

# Build the data loaders
train_loader = DataLoader(
    dataset=train_dataset,
    batch_size=64,
    shuffle=True
)
test_loader = DataLoader(
    dataset=test_dataset,
    batch_size=64,
    shuffle=False  # the test set does not need shuffling
)
```

This part is the same as the data-loading code in the previous post. It uses PyTorch's `datasets` and `DataLoader` to build the training and test sets.

3. Building the Inception module (key part)

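This is the focus of this post. I wrote this code by following an existing example. I certainly could not have written it from scratch at first.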

```python
# Inception module
class InceptionA(torch.nn.Module):
    def __init__(self, in_channels):
        super(InceptionA, self).__init__()
        # Branch 1: 1x1 conv, 16 output channels
        self.branch1x1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)

        # Branch 2: 1x1 conv then 5x5 conv, 24 output channels
        self.branch5x5_1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch5x5_2 = torch.nn.Conv2d(16, 24, kernel_size=5, padding=2)

        # Branch 3: 1x1 conv then two 3x3 convs, 24 output channels
        self.branch3x3_1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch3x3_2 = torch.nn.Conv2d(16, 24, kernel_size=3, padding=1)
        self.branch3x3_3 = torch.nn.Conv2d(24, 24, kernel_size=3, padding=1)

        # Branch 4: average pooling then 1x1 conv, 24 output channels
        self.branch_pool = torch.nn.Conv2d(in_channels, 24, kernel_size=1)

    def forward(self, x):
        branch1x1 = self.branch1x1(x)

        branch5x5 = self.branch5x5_1(x)
        branch5x5 = self.branch5x5_2(branch5x5)

        branch3x3 = self.branch3x3_1(x)
        branch3x3 = self.branch3x3_2(branch3x3)
        branch3x3 = self.branch3x3_3(branch3x3)

        branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)
        branch_pool = self.branch_pool(branch_pool)

        outputs = [branch1x1, branch5x5, branch3x3, branch_pool]
        # Concatenate along the channel dimension: 16 + 24 + 24 + 24 = 88 channels
        return torch.cat(outputs, dim=1)
```

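This Inception code is reusable because its only constructor argument is the number of input channels, which you set to fit the model that contains it. For example, the network might pass in 10 channels or 20. Either way the module works, and it always produces 88 output channels.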
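The sketch below is one quick way to check the reusability claim. The class restates the `InceptionA` module above in compact form, with the same layers but shorter variable names, so the snippet runs on its own. The input sizes (batch 2, 12×12 and 4×4) are assumptions chosen to match the two places `Net` uses the module.

```python
import torch
import torch.nn.functional as F


class InceptionA(torch.nn.Module):
    """Compact restatement of the module above: 16 + 24 + 24 + 24 = 88 output channels."""

    def __init__(self, in_channels):
        super().__init__()
        self.branch1x1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch5x5_1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch5x5_2 = torch.nn.Conv2d(16, 24, kernel_size=5, padding=2)
        self.branch3x3_1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch3x3_2 = torch.nn.Conv2d(16, 24, kernel_size=3, padding=1)
        self.branch3x3_3 = torch.nn.Conv2d(24, 24, kernel_size=3, padding=1)
        self.branch_pool = torch.nn.Conv2d(in_channels, 24, kernel_size=1)

    def forward(self, x):
        b1 = self.branch1x1(x)
        b5 = self.branch5x5_2(self.branch5x5_1(x))
        b3 = self.branch3x3_3(self.branch3x3_2(self.branch3x3_1(x)))
        bp = self.branch_pool(F.avg_pool2d(x, kernel_size=3, stride=1, padding=1))
        return torch.cat([b1, b5, b3, bp], dim=1)


# Only in_channels differs; the output always has 88 channels and keeps H x W
out10 = InceptionA(in_channels=10)(torch.randn(2, 10, 12, 12))
out20 = InceptionA(in_channels=20)(torch.randn(2, 20, 4, 4))
print(out10.shape)  # torch.Size([2, 88, 12, 12])
print(out20.shape)  # torch.Size([2, 88, 4, 4])
```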

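```python
# Branch 1: 1x1 conv, 16 output channels
self.branch1x1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
```

The first branch is simple. Its input channel count is whatever is passed to `InceptionA`, it outputs 16 channels, and its kernel is 1×1. This convolution produces 16 channels and leaves the height and width unchanged.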
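A 1×1 convolution keeps the height and width because it applies the same linear map to the channel vector at every pixel. The sketch below checks `Conv2d(..., kernel_size=1)` against an explicit `torch.einsum` over the channel dimension. The value `in_channels=10` and the random inputs are illustrative assumptions.

```python
import torch

torch.manual_seed(0)
in_channels = 10  # illustrative value (what incep1 receives in Net)
conv1x1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)

x = torch.randn(2, in_channels, 12, 12)
y = conv1x1(x)

# Weight shape is (16, in_channels, 1, 1); drop the 1x1 spatial dimensions
W = conv1x1.weight.squeeze(-1).squeeze(-1)  # (16, in_channels)
b = conv1x1.bias                            # (16,)
# Same linear map applied to the channel vector at every (h, w) position
manual = torch.einsum("oc,nchw->nohw", W, x) + b.view(1, -1, 1, 1)

print(y.shape)                                # torch.Size([2, 16, 12, 12])
print(torch.allclose(y, manual, atol=1e-5))  # True
```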

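```python
# Branch 2: 24 output channels
self.branch5x5_1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
self.branch5x5_2 = torch.nn.Conv2d(16, 24, kernel_size=5, padding=2)
```

The second branch has two convolutional layers. The first works like branch 1: it takes the module's input channels and outputs 16 channels, as the Inception diagram shows. The second is a "same" convolution, so the height and width do not change. Here is a handy trick for building a same convolution (stride 1) with an odd kernel size m: set padding to m/2 rounded down (m // 2). For a 5×5 kernel that gives padding=2. This branch outputs 24 channels.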
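The padding rule follows from the output-size formula of `torch.nn.Conv2d` (with dilation 1): H_out = floor((H + 2p - k) / s) + 1. With s = 1 and p = k // 2 for an odd k, this gives H_out = H. The sketch below checks a few odd kernel sizes on an assumed 12×12 input. `conv_out_size` is a hypothetical helper written only for this check.

```python
import torch


def conv_out_size(h, k, p, s=1):
    # Conv2d output size (dilation 1): floor((h + 2p - k) / s) + 1
    return (h + 2 * p - k) // s + 1


x = torch.randn(1, 16, 12, 12)
sizes = {}
for k in (1, 3, 5, 7):
    conv = torch.nn.Conv2d(16, 24, kernel_size=k, padding=k // 2)
    sizes[k] = conv(x).shape[-1]
print(sizes)  # {1: 12, 3: 12, 5: 12, 7: 12}

# With an even kernel, k // 2 padding makes the map one pixel larger
print(conv_out_size(12, 4, 4 // 2))  # 13
```

This is why the trick is stated for odd kernel sizes, which are the only kind the Inception module uses.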

```python
# Branch 3: 24 output channels
self.branch3x3_1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
self.branch3x3_2 = torch.nn.Conv2d(16, 24, kernel_size=3, padding=1)
self.branch3x3_3 = torch.nn.Conv2d(24, 24, kernel_size=3, padding=1)
```

The third branch has three convolutional layers: a 1×1 convolution followed by two 3×3 same convolutions (padding=1).
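The sketch below traces the shapes through branch 3, assuming 10 input channels and a 12×12 feature map. For brevity it wraps the three layers in `torch.nn.Sequential`. That is equivalent here because the branch simply applies the layers one after another.

```python
import torch

in_channels = 10  # assumed input channel count
branch3x3 = torch.nn.Sequential(
    torch.nn.Conv2d(in_channels, 16, kernel_size=1),   # 1x1: in_channels -> 16
    torch.nn.Conv2d(16, 24, kernel_size=3, padding=1),  # same conv: 16 -> 24
    torch.nn.Conv2d(24, 24, kernel_size=3, padding=1),  # same conv: 24 -> 24
)

x = torch.randn(1, in_channels, 12, 12)
shapes = []
for layer in branch3x3:
    x = layer(x)
    shapes.append(tuple(x.shape))
print(shapes)  # [(1, 16, 12, 12), (1, 24, 12, 12), (1, 24, 12, 12)]
```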

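```python
# Branch 4: 24 output channels
self.branch_pool = torch.nn.Conv2d(in_channels, 24, kernel_size=1)
```

The fourth branch uses "same" pooling. In `forward`, `F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)` averages over 3×3 windows and keeps the height and width. Pooling does not change the number of channels, so a 1×1 convolution then maps them to 24.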
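The sketch below runs the pooling branch on an assumed all-ones 10×12×12 input. One detail is worth knowing. `F.avg_pool2d` defaults to `count_include_pad=True`, so the zero padding counts toward the average. Border values therefore shrink (a corner becomes 4/9), while the shape stays the same.

```python
import torch
import torch.nn.functional as F

in_channels = 10  # assumed input channel count
x = torch.ones(1, in_channels, 12, 12)

# "Same" pooling: 3x3 window, stride 1, padding 1 keeps C, H and W
pooled = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)
print(pooled.shape)  # torch.Size([1, 10, 12, 12])

# Padding zeros are counted: a corner averages 4 ones over 9 cells, the interior stays 1
print(pooled[0, 0, 0, 0].item(), pooled[0, 0, 5, 5].item())

# The 1x1 convolution then maps the channels to 24
branch_pool = torch.nn.Conv2d(in_channels, 24, kernel_size=1)
print(branch_pool(pooled).shape)  # torch.Size([1, 24, 12, 12])
```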

4. Building Net (key part)

```python
# Build the network
class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = torch.nn.Conv2d(1, 10, kernel_size=5)
        self.conv2 = torch.nn.Conv2d(88, 20, kernel_size=5)  # input: 88-channel Inception output

        self.incep1 = InceptionA(in_channels=10)
        self.incep2 = InceptionA(in_channels=20)

        self.mp = torch.nn.MaxPool2d(2)
        self.fc = torch.nn.Linear(1408, 10)  # 88 channels * 4 * 4 = 1408

    def forward(self, x):
        batch_size = x.size(0)
        x = self.mp(F.relu(self.conv1(x)))  # (N, 10, 12, 12)
        x = self.incep1(x)                  # (N, 88, 12, 12)
        x = self.mp(F.relu(self.conv2(x)))  # (N, 20, 4, 4)
        x = self.incep2(x)                  # (N, 88, 4, 4)
        x = x.view(batch_size, -1)          # (N, 1408)
        x = self.fc(x)
        return x


model = Net()
```

To understand the model, take one image, pass it through the model, and work out the dimensions at each stage.
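The sketch below follows that approach for one 1×28×28 MNIST image. It uses the standard output-size formula for convolution and pooling, floor((H + 2p - k) / s) + 1. `conv_out` is a hypothetical helper. The Inception steps appear only as comments because they keep H×W and output 88 channels. The final 88 × 4 × 4 = 1408 is why `fc` is `Linear(1408, 10)`.

```python
def conv_out(h, k, p=0, s=1):
    # Output size of Conv2d / MaxPool2d (dilation 1): floor((h + 2p - k) / s) + 1
    return (h + 2 * p - k) // s + 1


INCEPTION_CHANNELS = 16 + 24 + 24 + 24  # 88, from the four branches

h = 28                     # input:        1 x 28 x 28
h = conv_out(h, k=5)       # conv1 (k=5):  10 x 24 x 24
h = conv_out(h, k=2, s=2)  # MaxPool2d(2): 10 x 12 x 12
# incep1 keeps H x W:                      88 x 12 x 12
h = conv_out(h, k=5)       # conv2 (k=5):  20 x 8 x 8
h = conv_out(h, k=2, s=2)  # MaxPool2d(2): 20 x 4 x 4
# incep2 keeps H x W:                      88 x 4 x 4
flat = INCEPTION_CHANNELS * h * h
print(h, flat)  # 4 1408
```

You can also pass `torch.zeros(1, 1, 28, 28)` through the layers of `model` one at a time and print each `.shape`. That gives the same numbers.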

5. Training and testing the network

```python
# Loss function
criterion = torch.nn.CrossEntropyLoss()

# Optimizer
optimizer = torch.optim.SGD(model.parameters(), lr=0.01, momentum=0.5)


# Train the network
def train(epoch):
    running_loss = 0.0
    for batch_idx, data in enumerate(train_loader, 0):
        inputs, labels = data
        optimizer.zero_grad()

        outputs = model(inputs)
        loss = criterion(outputs, labels)
        loss.backward()
        optimizer.step()

        running_loss += loss.item()
        if batch_idx % 300 == 299:
            # Average loss over the last 300 batches
            print('[%d %3d] %.3f' % (epoch + 1, batch_idx + 1, running_loss / 300))
            running_loss = 0.0


# Test the network
def test():
    total = 0
    correct = 0
    with torch.no_grad():
        for data in test_loader:
            inputs, labels = data
            outputs = model(inputs)
            _, predicted = torch.max(outputs, dim=1)  # index of the highest score = predicted class
            total += labels.size(0)
            correct += (predicted == labels).sum().item()
    print('Accuracy: %.3f%%' % (correct / total * 100))


if __name__ == '__main__':
    for epoch in range(10):
        train(epoch)
        test()
```

6. Full code

```python
import torch
from torchvision import datasets
from torchvision import transforms
import torch.nn.functional as F
from torch.utils.data import DataLoader

# Preprocessing: convert images to tensors and normalize with the MNIST mean/std
transform = transforms.Compose([
    transforms.ToTensor(),
    transforms.Normalize((0.1307,), (0.3081,))
])

# Load the dataset (download=True only downloads if the files are not already under root)
train_dataset = datasets.MNIST(
    root='../dataset/mnist',
    download=True,
    train=True,
    transform=transform
)
test_dataset = datasets.MNIST(
    root='../dataset/mnist',
    download=True,
    train=False,
    transform=transform
)

# Build the data loaders
train_loader = DataLoader(
    dataset=train_dataset,
    batch_size=64,
    shuffle=True
)
test_loader = DataLoader(
    dataset=test_dataset,
    batch_size=64,
    shuffle=False  # the test set does not need shuffling
)


# Inception module
class InceptionA(torch.nn.Module):
    def __init__(self, in_channels):
        super(InceptionA, self).__init__()
        # Branch 1: 1x1 conv, 16 output channels
        self.branch1x1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)

        # Branch 2: 1x1 conv then 5x5 conv, 24 output channels
        self.branch5x5_1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch5x5_2 = torch.nn.Conv2d(16, 24, kernel_size=5, padding=2)

        # Branch 3: 1x1 conv then two 3x3 convs, 24 output channels
        self.branch3x3_1 = torch.nn.Conv2d(in_channels, 16, kernel_size=1)
        self.branch3x3_2 = torch.nn.Conv2d(16, 24, kernel_size=3, padding=1)
        self.branch3x3_3 = torch.nn.Conv2d(24, 24, kernel_size=3, padding=1)

        # Branch 4: average pooling then 1x1 conv, 24 output channels
        self.branch_pool = torch.nn.Conv2d(in_channels, 24, kernel_size=1)

    def forward(self, x):
        branch1x1 = self.branch1x1(x)

        branch5x5 = self.branch5x5_1(x)
        branch5x5 = self.branch5x5_2(branch5x5)

        branch3x3 = self.branch3x3_1(x)
        branch3x3 = self.branch3x3_2(branch3x3)
        branch3x3 = self.branch3x3_3(branch3x3)

        branch_pool = F.avg_pool2d(x, kernel_size=3, stride=1, padding=1)
        branch_pool = self.branch_pool(branch_pool)

        outputs = [branch1x1, branch5x5, branch3x3, branch_pool]
        # Concatenate along the channel dimension: 16 + 24 + 24 + 24 = 88 channels
        return torch.cat(outputs, dim=1)


# Build the network
class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = torch.nn.Conv2d(1, 10, kernel_size=5)
        self.conv2 = torch.nn.Conv2d(88, 20, kernel_size=5)  # input: 88-channel Inception output

        self.incep1 = InceptionA(in_channels=10)
        self.incep2 = InceptionA(in_channels=20)

        self.mp = torch.nn.MaxPool2d(2)
        self.fc = torch.nn.Linear(1408, 10)  # 88 channels * 4 * 4 = 1408

    def forward(self, x):
        batch_size = x.size(0)
        x = self.mp(F.relu(self.conv1(x)))  # (N, 10, 12, 12)
        x = self.incep1(x)                  # (N, 88, 12, 12)
        x = self.mp(F.relu(self.conv2(x)))  # (N, 20, 4, 4)
        x = self.incep2(x)                  # (N, 88, 4, 4)
        x = x.view(batch_size, -1)          # (N, 1408)
        x = self.fc(x)
        return x


model = Net()
print(model)

# Loss function
criterion = torch.nn.CrossEntropyLoss()

# Optimizer
optimizer = torch.optim.SGD(model.parameters(), lr=0.01, momentum=0.5)


# Train the network
def train(epoch):
    running_loss = 0.0
    for batch_idx, data in enumerate(train_loader, 0):
        inputs, labels = data
        optimizer.zero_grad()

        outputs = model(inputs)
        loss = criterion(outputs, labels)
        loss.backward()
        optimizer.step()

        running_loss += loss.item()
        if batch_idx % 300 == 299:
            # Average loss over the last 300 batches
            print('[%d %3d] %.3f' % (epoch + 1, batch_idx + 1, running_loss / 300))
            running_loss = 0.0


# Test the network
def test():
    total = 0
    correct = 0
    with torch.no_grad():
        for data in test_loader:
            inputs, labels = data
            outputs = model(inputs)
            _, predicted = torch.max(outputs, dim=1)  # index of the highest score = predicted class
            total += labels.size(0)
            correct += (predicted == labels).sum().item()
    print('Accuracy: %.3f%%' % (correct / total * 100))


if __name__ == '__main__':
    for epoch in range(10):
        train(epoch)
        test()
```

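After studying these network models for the past few days, deep learning feels a lot like building with blocks: in the end, it comes down to who can build the best structure...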