PyTorch Study Notes 8: Neural Networks in Practice

Contents

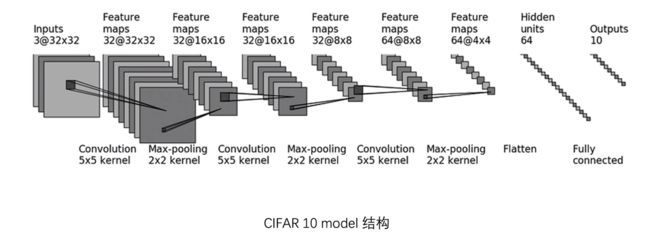

CIFAR-10 model

Model structure and convolution parameter calculation

Model code

Using Sequential

Displaying the model with add_graph() from SummaryWriter

Main text

CIFAR-10 model

Model structure and convolution parameter calculation

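Each image in the CIFAR10 dataset has three channels and a height and width of 32×32. The image passes through three rounds of convolution followed by max pooling, is flattened, and then goes through two linear layers to produce the final output. Every convolution uses a 5×5 kernel and every max pooling uses a 2×2 kernel. The process in detail is as follows.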

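Each image has shape (3, 32, 32). Convolution 1 turns it into (32, 32, 32). With the default stride and padding (stride=1, padding=0), a 5×5 convolution would shrink the height and width to 28, giving (32, 28, 28), so non-default values have to be set here. The padding and stride values can be worked out from the output-size formula in the official Conv2d documentation.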

Since dilated convolution is not called for, dilation keeps its default value of 1.

H_out = 32 = (32 + 2*padding - 1*(5-1) - 1)/stride + 1

One solution is stride=1, padding=2.
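The formula above can be checked with a few lines of plain Python. This is only a sketch: the helper name conv2d_out_size and the small search ranges are chosen here for illustration and are not part of PyTorch.

```python
def conv2d_out_size(size, kernel_size, stride=1, padding=0, dilation=1):
    """Output height (or width) of a 2D convolution, following the Conv2d output-size formula."""
    # Integer floor division plays the role of the floor in the formula.
    return (size + 2 * padding - dilation * (kernel_size - 1) - 1) // stride + 1


# Default stride=1, padding=0: a 5x5 kernel shrinks 32 to 28.
default_size = conv2d_out_size(32, 5)
print(default_size)

# stride=1, padding=2 keeps the size at 32.
padded_size = conv2d_out_size(32, 5, stride=1, padding=2)
print(padded_size)

# Brute-force search over a few small values (the ranges are arbitrary, for illustration only).
solutions = [
    (stride, padding)
    for stride in range(1, 4)
    for padding in range(0, 5)
    if conv2d_out_size(32, 5, stride, padding) == 32
]
print(solutions)
```

Within these small ranges, stride=1 with padding=2 is the only combination that keeps the 32×32 size.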

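Pooling 1 then reduces the shape to (32, 16, 16). Convolution 2 leaves the shape unchanged (its padding and stride are the same as for convolution 1; in general, for a 5×5 kernel, stride=1 with padding=2 is one solution whenever the height and width must stay the same after convolution). Pooling 2 gives (32, 8, 8), convolution 3 gives (64, 8, 8), and pooling 3 gives (64, 4, 4). Flattening yields 1024 values (64*4*4), the first linear layer maps these to 64, and the second linear layer outputs 10.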
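As a quick cross-check of the hand calculation, the layers can be applied one at a time to a dummy tensor while recording the shape after each step. This is a minimal sketch; the batch size of 1 and the variable names are arbitrary choices made here.

```python
import torch
from torch import nn

# Layers in the order described above; every convolution uses kernel size 5 and padding 2.
layers = [
    nn.Conv2d(3, 32, 5, padding=2),
    nn.MaxPool2d(2),
    nn.Conv2d(32, 32, 5, padding=2),
    nn.MaxPool2d(2),
    nn.Conv2d(32, 64, 5, padding=2),
    nn.MaxPool2d(2),
    nn.Flatten(),
    nn.Linear(1024, 64),
    nn.Linear(64, 10),
]

x = torch.ones((1, 3, 32, 32))  # one dummy image; the batch size of 1 is arbitrary
shapes = []
with torch.no_grad():
    for layer in layers:
        x = layer(x)
        shapes.append(tuple(x.shape))
        print(f"{layer.__class__.__name__:10s} -> {tuple(x.shape)}")
```

Ignoring the leading batch dimension, the printed shapes should follow the sequence worked out above, ending with 1024, 64 and 10.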

Model code

    import torch
    from torch import nn
    from torch.nn import Conv2d, MaxPool2d, Flatten, Linear


    class Model(nn.Module):
        def __init__(self):
            super(Model, self).__init__()
            self.conv1 = Conv2d(3, 32, 5, padding=2)    # (3, 32, 32) -> (32, 32, 32)
            self.maxpool1 = MaxPool2d(2)                # -> (32, 16, 16)
            self.conv2 = Conv2d(32, 32, 5, padding=2)   # -> (32, 16, 16)
            self.maxpool2 = MaxPool2d(2)                # -> (32, 8, 8)
            self.conv3 = Conv2d(32, 64, 5, padding=2)   # -> (64, 8, 8)
            self.maxpool3 = MaxPool2d(2)                # -> (64, 4, 4)
            self.flatten = Flatten()                    # -> 1024
            self.linear1 = Linear(1024, 64)             # -> 64
            self.linear2 = Linear(64, 10)               # -> 10

        def forward(self, x):
            x = self.conv1(x)
            x = self.maxpool1(x)
            x = self.conv2(x)
            x = self.maxpool2(x)
            x = self.conv3(x)
            x = self.maxpool3(x)
            x = self.flatten(x)
            x = self.linear1(x)
            x = self.linear2(x)
            return x

The following few lines can be used to check the code for errors:

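(torch.ones creates a tensor filled with ones whose shape matches a batch of CIFAR10 images; (64, 3, 32, 32) can be read as 64 images, each with 3 channels and a height and width of 32.)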

    model = Model()
    Input = torch.ones((64, 3, 32, 32))
    Output = model(Input)
    print(Output.shape)

Output:

    torch.Size([64, 10])

This matches what we expected.
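To see why this kind of dummy-input check is useful, here is a sketch of what happens when a size is wrong. The value 10240 is a deliberate mistake introduced here for illustration; it does not appear in the original model.

```python
import torch
from torch import nn

flatten = nn.Flatten()
wrong_linear = nn.Linear(10240, 64)     # deliberate mistake: should be 1024
features = torch.ones((64, 64, 4, 4))   # shape after the third pooling layer

error_message = None
try:
    wrong_linear(flatten(features))
except RuntimeError as err:
    # The flattened input has 1024 features per image, which does not match 10240.
    error_message = str(err)
    print("caught:", error_message)
```

Because the mismatch raises an error on the first forward pass, running the model once on a tensor of ones catches such mistakes before any training starts.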

Using Sequential

    import torch
    from torch import nn
    from torch.nn import Conv2d, MaxPool2d, Flatten, Linear, Sequential


    class Model(nn.Module):
        def __init__(self):
            super(Model, self).__init__()
            # self.conv1 = Conv2d(3, 32, 5, padding=2)
            # self.maxpool1 = MaxPool2d(2)
            # self.conv2 = Conv2d(32, 32, 5, padding=2)
            # self.maxpool2 = MaxPool2d(2)
            # self.conv3 = Conv2d(32, 64, 5, padding=2)
            # self.maxpool3 = MaxPool2d(2)
            # self.flatten = Flatten()
            # self.linear1 = Linear(1024, 64)
            # self.linear2 = Linear(64, 10)
            self.model1 = Sequential(
                Conv2d(3, 32, 5, padding=2),
                MaxPool2d(2),
                Conv2d(32, 32, 5, padding=2),
                MaxPool2d(2),
                Conv2d(32, 64, 5, padding=2),
                MaxPool2d(2),
                Flatten(),
                Linear(1024, 64),
                Linear(64, 10)
            )

        def forward(self, x):
            # x = self.conv1(x)
            # x = self.maxpool1(x)
            # x = self.conv2(x)
            # x = self.maxpool2(x)
            # x = self.conv3(x)
            # x = self.maxpool3(x)
            # x = self.flatten(x)
            # x = self.linear1(x)
            # x = self.linear2(x)
            x = self.model1(x)
            return x


    model = Model()
    Input = torch.ones((64, 3, 32, 32))
    Output = model(Input)
    print(Output.shape)

Output:

    torch.Size([64, 10])

Using nn.Sequential makes the code much more concise.
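A Sequential container behaves like an ordered list of layers: it supports len(), indexing and iteration, and calling it applies the layers in order. The shortened three-layer container below is an assumption made here to keep the sketch small; it is not the full model.

```python
import torch
from torch import nn

# A shortened container for illustration (only the first conv/pool pair plus Flatten).
model1 = nn.Sequential(
    nn.Conv2d(3, 32, 5, padding=2),
    nn.MaxPool2d(2),
    nn.Flatten(),
)

print(len(model1))   # number of layers in the container
print(model1[0])     # the first layer, a Conv2d

x = torch.ones((2, 3, 32, 32))
with torch.no_grad():
    # Applying the layers by hand, one after another...
    manual = x
    for layer in model1:
        manual = layer(manual)
    # ...gives the same result as calling the container directly.
    same = torch.allclose(model1(x), manual)
print(same, tuple(manual.shape))
```

This is the reason forward() in the Sequential version needs only a single call to self.model1.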

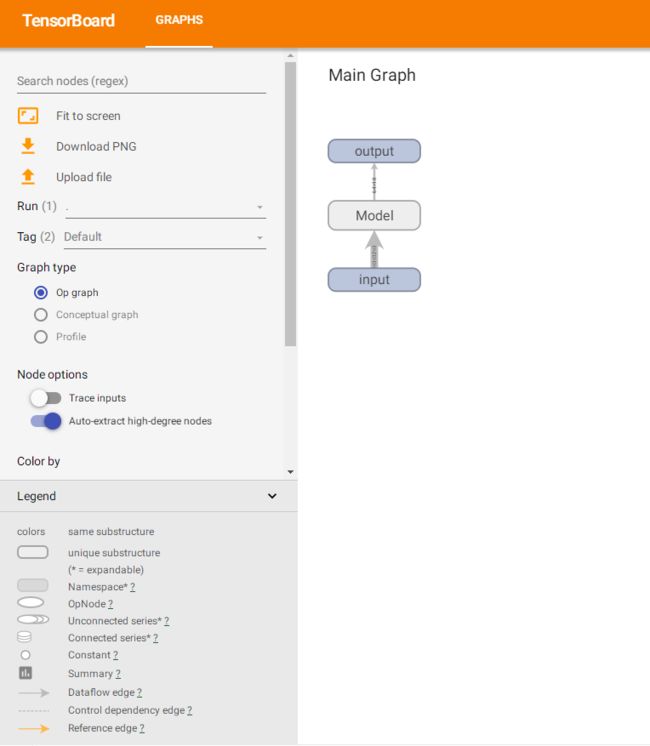

Displaying the model with add_graph() from SummaryWriter

    import torch
    from torch import nn
    from torch.nn import Conv2d, MaxPool2d, Flatten, Linear, Sequential
    from torch.utils.tensorboard import SummaryWriter


    class Model(nn.Module):
        def __init__(self):
            super(Model, self).__init__()
            self.model1 = Sequential(
                Conv2d(3, 32, 5, padding=2),
                MaxPool2d(2),
                Conv2d(32, 32, 5, padding=2),
                MaxPool2d(2),
                Conv2d(32, 64, 5, padding=2),
                MaxPool2d(2),
                Flatten(),
                Linear(1024, 64),
                Linear(64, 10)
            )

        def forward(self, x):
            x = self.model1(x)
            return x


    model = Model()
    Input = torch.ones((64, 3, 32, 32))
    Output = model(Input)
    print(Output.shape)

    writer = SummaryWriter("P22_sequential")
    writer.add_graph(model, Input)
    writer.close()

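After opening TensorBoard (for example with tensorboard --logdir=P22_sequential), a new Graphs tab appears.

You can double-click a module, or hold down the mouse button and drag, to inspect the modules.

The shape changes at each step of the network are shown very clearly.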