Extracting Video Features with a Pretrained TimeSformer Model

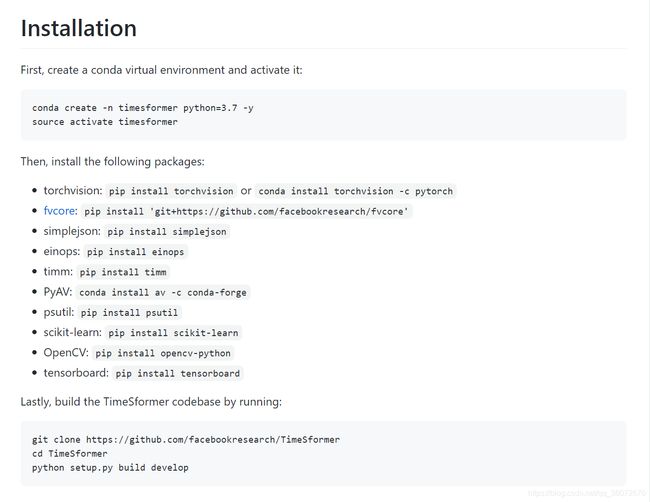

I. Installing TimeSformer

GitHub: facebookresearch/TimeSformer, the official PyTorch implementation of the paper "Is Space-Time Attention All You Need for Video Understanding?"

Follow the official installation steps. torchvision is installed together with PyTorch. I installed PyTorch 1.8 here; pick the version that matches your own CUDA version.

For the PyTorch install commands, see the "Previous PyTorch Versions" page on the PyTorch website:

    conda install pytorch==1.8.0 torchvision==0.9.0 torchaudio==0.8.0 cudatoolkit=10.2 -c pytorch

Everything else can be installed by following the official steps.
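After installation, a quick check that the packages can be imported can save debugging time later. The following is a minimal sketch, not part of the official instructions; the helper name `module_versions` is made up for illustration, and it assumes the packages are importable as `torch`, `torchvision` and `timesformer`.

```python
import importlib


def module_versions(names):
    """Map each module name to its version string, or None if it cannot be imported."""
    versions = {}
    for name in names:
        try:
            module = importlib.import_module(name)
        except ImportError:
            versions[name] = None
        else:
            # Not every module defines __version__, so fall back to a placeholder
            versions[name] = getattr(module, '__version__', 'unknown')
    return versions


if __name__ == '__main__':
    for name, version in module_versions(['torch', 'torchvision', 'timesformer']).items():
        print(name, version if version else 'NOT INSTALLED')
```

If `torch` is reported as installed, `torch.cuda.is_available()` tells you whether the CUDA build matches your driver.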

II. Processing the videos and adapting the dataloader

1. Extracting frames from the videos

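The model takes images as input, so each video must first be split into frames that are saved to disk. The number of frames finally fed to the model depends on the model's parameters (8, 16, or 32). The original strategy divides the video into equal segments and picks one frame from each; you can change this if you like.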
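To make the sampling strategy concrete, here is a minimal sketch of uniform segment sampling in plain Python. It mirrors the offset computation used in the dataloader later in this post; the function name `segment_offsets` is only for illustration, and it assumes frames are numbered from 1 (as in `00001.jpg`).

```python
def segment_offsets(num_frames, num_segments=8):
    """Return 1-based frame indices, one from the middle of each segment."""
    average_duration = num_frames // num_segments
    return [int(average_duration / 2.0 + average_duration * x) + 1
            for x in range(num_segments)]


print(segment_offsets(80))  # [6, 16, 26, 36, 46, 56, 66, 76]
print(segment_offsets(5))   # [1, 1, 1, 1, 1, 1, 1, 1]
```

Note the second case: when a video has fewer frames than segments, every offset collapses to frame 1, so a very short clip yields eight copies of the same frame.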

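First, prepare a file that lists the videos so they can be processed in batches. I generate a txt file in which each line has the form video name + '\t' + video path, using the following code: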

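    import os

    path = '/home/videos'         # directory to walk
    txt_path = '/home/video.txt'  # output list file

    with open(txt_path, 'w', encoding='utf-8') as f:
        for root, dirs, names in os.walk(path):
            for name in names:
                ext = os.path.splitext(name)[1]  # file extension
                if ext.lower() == '.mp4':
                    video_path = os.path.join(root, name)  # full path of the mp4 file
                    video_name = name.split('.')[0]        # file name up to the first dot
                    f.write(video_name + '\t' + video_path + '\n')

The resulting txt file looks like this (name and path are separated by a tab):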

    vi1231926809 /home/video/vi1231926809.mp4
    vi3522215705 /home/video/vi3522215705.mp4
    vi3172646169 /home/video/vi3172646169.mp4

Then extract the frames with ffmpeg:

    import os
    import subprocess

    OUT_DATA_DIR = '/home/video_pics'  # root directory for the extracted frames
    txt_path = '/home/video.txt'       # list file generated above

    with open(txt_path, 'r', encoding='utf-8') as f:
        for i, line in enumerate(f, start=1):
            line = line.rstrip('\n')
            video_name = line.split('\t')[0].split('.')[0]
            video_path = line.split('\t')[1]
            dst_path = os.path.join(OUT_DATA_DIR, video_name)
            os.makedirs(dst_path, exist_ok=True)
            print(i)  # progress counter
            # -r 1 writes one frame per second; -q:v 2 keeps JPEG quality high.
            # Frames are saved as 00001.jpg, 00002.jpg, ...
            cmd = ['ffmpeg', '-i', video_path, '-r', '1', '-q:v', '2',
                   '-f', 'image2', os.path.join(dst_path, '%05d.jpg')]
            # Passing an argument list (no shell) avoids quoting problems in paths
            subprocess.call(cmd, stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)
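Because ffmpeg's output is discarded, a failed or very short extraction goes unnoticed. The following sketch counts the extracted frames per video and reports the ones with fewer frames than the model needs. The helper names `count_frames` and `too_short` are made up for illustration, and the threshold of 8 assumes the 8-frame model used in this post.

```python
import os


def count_frames(frames_root):
    """Map each sub-directory of frames_root to its number of .jpg files."""
    counts = {}
    for name in sorted(os.listdir(frames_root)):
        video_dir = os.path.join(frames_root, name)
        if os.path.isdir(video_dir):
            counts[name] = sum(1 for f in os.listdir(video_dir) if f.endswith('.jpg'))
    return counts


def too_short(counts, min_frames=8):
    """Return the names of videos with fewer than min_frames frames."""
    return [name for name, n in counts.items() if n < min_frames]


if __name__ == '__main__':
    frames_root = '/home/video_pics'  # same path as OUT_DATA_DIR in the script above
    if os.path.isdir(frames_root):
        counts = count_frames(frames_root)
        print(len(counts), 'videos,', len(too_short(counts)), 'with fewer than 8 frames')
```

Videos that end up with zero frames usually indicate an unreadable file and are worth re-running with ffmpeg's output visible.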

2. Modifying the dataloader

    import os

    # Group transforms (GroupScale, GroupCenterCrop, Stack, ...) come from this module
    from models.transforms import *

    import numpy as np
    import torchvision
    from PIL import Image
    from torch.utils.data import Dataset


    class VideoClassificationDataset(Dataset):
        def __init__(self, opt, mode):
            super().__init__()
            self.mode = mode  # to load train/val/test data
            self.feats_dir = opt['feats_dir']  # list file: video name + '\t' + frame directory

            if self.mode != 'inference':
                print(f'load feats from {self.feats_dir}')
                with open(self.feats_dir) as f:
                    self.feat_class_list = f.readlines()
                # Number of videos to extract: one per line of the list file
                # (the original hard-coded 5000 here)
                self.n = len(self.feat_class_list)

            mean = [0.485, 0.456, 0.406]
            std = [0.229, 0.224, 0.225]

            # Transform parameters for the model
            transform_params = {
                "side_size": 256,
                "crop_size": 224,
                "num_segments": 8,
                "sampling_rate": 5
            }

            transform_train = torchvision.transforms.Compose([
                GroupMultiScaleCrop(transform_params["crop_size"], [1, .875, .75, .66]),
                GroupRandomHorizontalFlip(is_flow=False),
                Stack(roll=False),
                ToTorchFormatTensor(div=True),
                GroupNormalize(mean, std),
            ])

            transform_val = torchvision.transforms.Compose([
                GroupScale(int(transform_params["side_size"])),
                GroupCenterCrop(transform_params["crop_size"]),
                Stack(roll=False),
                ToTorchFormatTensor(div=True),
                GroupNormalize(mean, std),
            ])

            self.transform_params = transform_params
            self.transform_train = transform_train
            self.transform_val = transform_val
            print("Finished initializing dataloader.")

        def __getitem__(self, ix):
            """Return a dict that is further passed to collate_fn."""
            ix = ix % self.n
            fc_feat = self._load_video(ix)
            return {'fc_feats': fc_feat, 'video_id': ix}

        def __len__(self):
            return self.n

        def _load_video(self, idx):
            prefix = '{:05d}.jpg'
            # The second column of the list file is the directory holding the frames
            frame_dir = self.feat_class_list[idx].rstrip('\n').split('\t')[1]
            num_segments = self.transform_params["num_segments"]
            video_data = {}
            if self.mode == 'val':
                images = []
                frame_list = os.listdir(frame_dir)
                average_duration = len(frame_list) // num_segments
                # offsets are the sampled frame indices: the middle of each segment
                offsets = np.array([int(average_duration / 2.0 + average_duration * x)
                                    for x in range(num_segments)])
                offsets = offsets + 1  # ffmpeg numbers the frames from 00001.jpg
                for seg_ind in offsets:
                    p = int(seg_ind)
                    seg_img = Image.open(os.path.join(frame_dir, prefix.format(p))).convert('RGB')
                    images.append(seg_img)
                video_data = self.transform_val(images)
                # Split the stacked channel axis into (-1, num_segments, H, W)
                video_data = video_data.view((-1, num_segments) + video_data.size()[1:])
            return video_data
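The final `view` call is worth checking against the layout that `Stack` produces. The sketch below uses plain Python lists and string labels instead of tensors. It assumes (this is not confirmed by the post) that `Stack` in `models.transforms` concatenates the RGB frames along the channel axis in frame-major order, i.e. f0R, f0G, f0B, f1R, ..., as TSN-style transforms commonly do. Under that assumption, splitting the channel axis as `(-1, num_segments)` mixes frames and colour channels, whereas splitting it as `(num_segments, 3)` and then swapping the two axes gives one row per colour.

```python
def chunk(seq, size):
    """Split seq into consecutive rows of length size (like a row-major view)."""
    return [seq[i:i + size] for i in range(0, len(seq), size)]


num_segments = 4  # small value so the rows are easy to read
# Assumed frame-major channel order after Stack: f0R, f0G, f0B, f1R, ...
channels = [f'f{t}{c}' for t in range(num_segments) for c in 'RGB']

# Equivalent of view(-1, num_segments): 3 rows of num_segments entries
as_in_post = chunk(channels, num_segments)

# Equivalent of view(num_segments, 3).transpose(0, 1): one row per colour
per_colour = [list(row) for row in zip(*chunk(channels, 3))]

for row in as_in_post:
    print(row)
for row in per_colour:
    print(row)
```

If the assumption holds for your copy of `models.transforms`, the tensor equivalent of the second variant would be `video_data.view((num_segments, -1) + video_data.size()[1:]).transpose(0, 1)`. Printing a few values from a single frame is an easy way to confirm which layout you actually get.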

III. Extracting video features and saving them as npy files

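Correction: to keep the features consistent, the model should be put in eval() mode when extracting them, so that the same video always yields the same features.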

    import argparse
    import os

    import numpy as np
    import torch
    from torch.utils.data import DataLoader

    from dataloader import VideoClassificationDataset
    from timesformer.models.vit import TimeSformer

    device = torch.device("cuda:0")
    out_dir = '/home/video_feature'  # where the .npy files are written

    if __name__ == '__main__':
        opt = argparse.ArgumentParser()
        opt.add_argument('test_list_dir', help="Path of the txt file that lists the videos to process.")
        opt = vars(opt.parse_args())
        test_opts = {'feats_dir': opt['test_list_dir']}

        # ================= build the model ======================
        model = TimeSformer(img_size=224, num_classes=400, num_frames=8, attention_type='divided_space_time',
                            pretrained_model='/home/user04/extract_feature/TimeSformer_divST_8x32_224_K400.pyth')
        model = model.eval().to(device)  # eval() mode, see the correction above
        print(model)

        # ================ load the data ========================
        print("Use", torch.cuda.device_count(), 'gpus')
        test_dataset = VideoClassificationDataset(test_opts, 'val')
        test_loader = DataLoader(test_dataset, batch_size=1, num_workers=6, shuffle=False)

        # =================== extract features ========================
        # Read the video names from the same list file, so they match the loader order
        with open(opt['test_list_dir'], 'r') as file1:
            file1_list = file1.readlines()
        os.makedirs(out_dir, exist_ok=True)

        with torch.no_grad():  # inference only, no gradients needed
            for i, data in enumerate(test_loader):
                model_input = data['fc_feats'].to(device)
                name_feature = file1_list[i].rstrip().split('\t')[0].split('.')[0]
                out = model(model_input)
                out = out.squeeze(0)
                out = out.cpu().numpy()
                np.save(os.path.join(out_dir, name_feature + '.npy'), out)
                print(i + 1)  # progress counter

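Place the two .py files above (dataloader.py and extract.py) in the same directory as the TimeSformer folder.

The final extraction command is:

    python extract.py /home/video.txt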

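The txt file for this step has to be regenerated: each line is the video name followed by the directory that holds that video's extracted frames, separated by a tab (the dataloader splits each line on '\t'). You can generate it yourself.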
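One possible way to regenerate that list is sketched below. It assumes the frames were extracted into one sub-directory per video, as the ffmpeg script earlier in this post does; the function name `write_frame_list` is made up for illustration.

```python
import os


def write_frame_list(frames_root, txt_path):
    """Write one 'video name<TAB>frame directory' line per sub-directory."""
    count = 0
    with open(txt_path, 'w', encoding='utf-8') as f:
        for name in sorted(os.listdir(frames_root)):
            frame_dir = os.path.join(frames_root, name)
            if os.path.isdir(frame_dir):
                f.write(name + '\t' + frame_dir + '\n')
                count += 1
    return count


if __name__ == '__main__':
    # Paths follow the earlier examples; adjust them to your setup.
    if os.path.isdir('/home/video_pics'):
        print(write_frame_list('/home/video_pics', '/home/video.txt'), 'videos listed')
```

Keep in mind that writing to /home/video.txt overwrites the earlier list of video paths, so use a different file name if you still need it.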

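The final video feature is a 768-dimensional vector, which you can save in whatever data type you prefer. Note that 768 is the embedding width of the backbone: if the forward pass still applies the 400-class classification head (num_classes=400), the output would be 400 class scores instead, so check the shape of out and take the features from before the head if necessary.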

IV. Extracting features from a single input video

    import os
    import subprocess

    # Group transforms (GroupScale, GroupCenterCrop, Stack, ...) come from this module
    from models.transforms import *

    import numpy as np
    import torch
    import torchvision
    from PIL import Image
    from timesformer.models.vit import TimeSformer

    device = torch.device("cuda:0")


    def get_input(image_path):
        """Sample frames from the directory image_path and return the model input tensor."""
        prefix = '{:05d}.jpg'
        mean = [0.485, 0.456, 0.406]
        std = [0.229, 0.224, 0.225]
        transform_params = {
            "side_size": 256,
            "crop_size": 224,
            "num_segments": 8,
            "sampling_rate": 5
        }
        transform_val = torchvision.transforms.Compose([
            GroupScale(int(transform_params["side_size"])),
            GroupCenterCrop(transform_params["crop_size"]),
            Stack(roll=False),
            ToTorchFormatTensor(div=True),
            GroupNormalize(mean, std),
        ])
        num_segments = transform_params["num_segments"]

        images = []
        frame_list = os.listdir(image_path)
        average_duration = len(frame_list) // num_segments
        # offsets are the sampled frame indices: the middle of each segment
        offsets = np.array([int(average_duration / 2.0 + average_duration * x)
                            for x in range(num_segments)])
        offsets = offsets + 1  # ffmpeg numbers the frames from 00001.jpg
        for seg_ind in offsets:
            p = int(seg_ind)
            seg_img = Image.open(os.path.join(image_path, prefix.format(p))).convert('RGB')
            images.append(seg_img)

        video_data = transform_val(images)
        # Split the stacked channel axis into (-1, num_segments, H, W)
        video_data = video_data.view((-1, num_segments) + video_data.size()[1:])
        return video_data


    def extract(modal, data):
        out_image_dir = '/home/extract_feature/extract_image'
        if modal != 'video':
            raise ValueError('unsupported modal: {}'.format(modal))

        # ================= build the model ======================
        model = TimeSformer(img_size=224, num_classes=400, num_frames=8, attention_type='divided_space_time',
                            pretrained_model='/home/user04/extract_feature/TimeSformer_divST_8x32_224_K400.pyth')
        model = model.eval().to(device)

        # ================= extract frames ======================
        video_name = os.path.basename(data).split('.')[0]
        out_image_path = os.path.join(out_image_dir, video_name)
        os.makedirs(out_image_path, exist_ok=True)
        cmd = ['ffmpeg', '-i', data, '-r', '1', '-q:v', '2',
               '-f', 'image2', os.path.join(out_image_path, '%05d.jpg')]
        subprocess.call(cmd, stdout=subprocess.DEVNULL, stderr=subprocess.DEVNULL)

        # ================= extract features ======================
        model_input = get_input(out_image_path).unsqueeze(0).to(device)  # add the batch dimension
        print(model_input.shape)
        with torch.no_grad():  # inference only, no gradients needed
            out = model(model_input)
        out = out.squeeze(0)
        out = out.cpu().numpy()
        return out


    video_path = '/home/vi0114457/vi0114457.mp4'
    modal = 'video'
    out = extract(modal, video_path)