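Contents

- I. Kubernetes Single-Master Deployment
  - 1.1 Topology and Host Assignment
  - 1.2 Pre-deployment Preparation
  - 1.3 etcd Cluster Deployment
    - 1.3.1 Create the k8s directory on the master, upload the etcd scripts, and download the cfssl certificate tools
    - 1.3.2 Create the CA and generate the signed certificates
    - 1.3.3 Download the etcd binary package
    - 1.3.4 Create the etcd directories
    - 1.3.5 Start etcd; it blocks while waiting for the other members to join
    - 1.3.6 Copy the certificates to the other nodes
    - 1.3.7 Edit the configuration file on the node hosts
    - 1.3.8 Check the etcd cluster health
  - 1.4 Docker Engine Deployment
  - 1.5 flannel Network Configuration
    - 1.5.1 Write the allocated subnet range into etcd for flannel to use
    - 1.5.2 Extract the flannel archive on all node hosts
    - 1.5.3 Create the working directory on the node hosts
    - 1.5.4 Write the flannel.sh script on both node hosts
    - 1.5.5 Enable the flannel network
    - 1.5.6 Edit the Docker unit file to connect Docker to the flannel network
    - 1.5.7 Check the network interfaces on the node hosts
    - 1.5.8 Check the subnet flannel assigned to Docker
    - 1.5.9 Test container connectivity between the two nodes
  - 1.6 Master Component Deployment
    - 1.6.1 Write the certificate script
    - 1.6.2 Generate the certificates
    - 1.6.3 Extract the Kubernetes server archive
    - 1.6.4 Copy the binaries into the Kubernetes working directory
    - 1.6.5 Create the token file for the kubelet-bootstrap user
    - 1.6.6 Start the apiserver (storing its data in the etcd cluster) and check its status
    - 1.6.7 Start the scheduler service
    - 1.6.8 Start the controller-manager
    - 1.6.9 Check the status of the master components and etcd
  - 1.7 Deploy kubelet and kube-proxy on the Node Hosts
    - 1.7.1 Copy kubelet and kube-proxy from master01 to the node hosts
    - 1.7.2 Extract node.zip on the node hosts
    - 1.7.3 Create the kubeconfig directory on master01
    - 1.7.4 Generate the kubeconfig files and copy them to the node hosts
    - 1.7.5 Bind the bootstrap user to a role so it can request certificate signing from the apiserver (critical)
  - 1.8 Generate the kubelet and kubelet.config Files on node01
    - 1.8.1 On the master, check node01's request and the certificate status
    - 1.8.2 Approve the certificate and check its status again
    - 1.8.3 List the cluster nodes
    - 1.8.4 Start the proxy service on node01
  - 1.9 node02 Deployment
    - 1.9.1 Copy the kubelet and kube-proxy service files to node02
    - 1.9.2 Delete the copied certificates; node02 will request its own
    - 1.9.3 Edit the kubelet, kubelet.config, and kube-proxy configuration files
    - 1.9.4 Start the services
    - 1.9.5 On the master, review and approve the request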

I. Kubernetes Single-Master Deployment

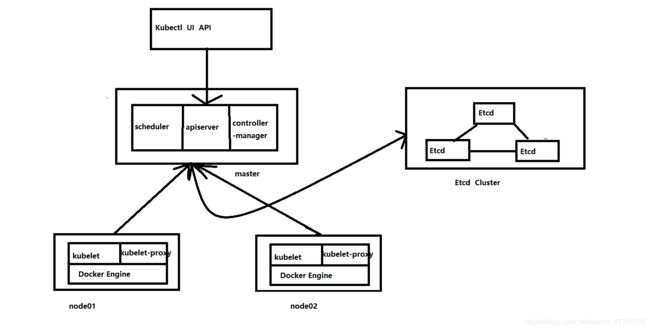

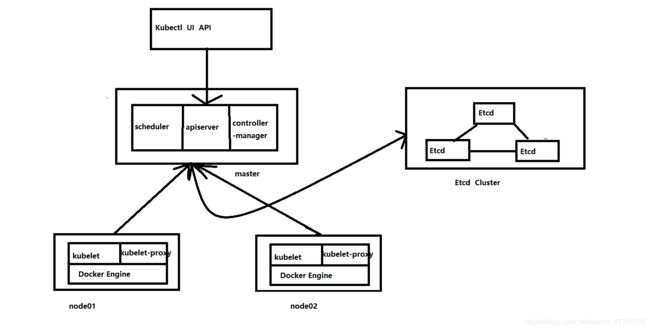

1.1 Topology and Host Assignment

| Hostname | IP address | Components to deploy |
| --- | --- | --- |
| master01 | 192.168.233.100 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd |
| node01 | 192.168.233.200 | kubelet, kube-proxy, docker, flannel, etcd |
| node02 | 192.168.233.180 | kubelet, kube-proxy, docker, flannel, etcd |

1.2 Pre-deployment Preparation

[root@localhost ~]# hostnamectl set-hostname master01 ## Rename the other two hosts the same way

[root@localhost ~]# su

[root@master01 ~]# systemctl stop firewalld && systemctl disable firewalld ## Only master01 is shown here; do the same on both node hosts

Removed symlink /etc/systemd/system/multi-user.target.wants/firewalld.service.

Removed symlink /etc/systemd/system/dbus-org.fedoraproject.FirewallD1.service

1.3 etcd Cluster Deployment

1.3.1 Create the k8s directory on the master, upload the etcd scripts, and download the cfssl certificate tools

[root@master01 ~]# mkdir k8s

[root@master01 ~]# cd k8s/

[root@master01 k8s]# ls ## The two scripts were uploaded from the host machine

etcd-cert.sh etcd.sh

[root@master01 k8s]# mkdir etcd-cert

[root@master01 k8s]# mv etcd-cert.sh etcd-cert ## Move the script into its directory

[root@master01 k8s]# vim cfssl.sh

curl -L https://pkg.cfssl.org/R1.2/cfssl_linux-amd64 -o /usr/local/bin/cfssl

curl -L https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64 -o /usr/local/bin/cfssljson

curl -L https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64 -o /usr/local/bin/cfssl-certinfo

chmod +x /usr/local/bin/cfssl /usr/local/bin/cfssljson /usr/local/bin/cfssl-certinfo

[root@master01 k8s]# bash cfssl.sh ## Run the script that downloads the tools

[root@master01 k8s]# ls /usr/local/bin/

cfssl cfssl-certinfo cfssljson ## cfssl generates certificates; cfssljson writes certificate files from cfssl's JSON output; cfssl-certinfo displays certificate information
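Before moving on, it can help to confirm that the three tools are actually on `PATH`. The following is a minimal sketch; the function name `check_tools` is hypothetical and not part of the original scripts.

```shell
# check_tools: print the name of every listed tool that is not on PATH.
# (Hypothetical helper, not part of the original scripts.)
check_tools() {
    missing=""
    for tool in "$@"; do
        if ! command -v "$tool" >/dev/null 2>&1; then
            missing="$missing $tool"
        fi
    done
    # Drop the leading space before printing.
    echo "${missing# }"
}

missing_tools=$(check_tools cfssl cfssljson cfssl-certinfo)
if [ -n "$missing_tools" ]; then
    echo "missing: $missing_tools"
else
    echo "all cfssl tools found"
fi
```

An empty result means every tool was found; anything printed after `missing:` still needs to be downloaded or made executable.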

1.3.2 Create the CA and generate the signed certificates

[root@master01 k8s]# cd etcd-cert/

[root@master01 etcd-cert]#

## Define the CA signing configuration

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

## Define the CA certificate signing request

cat > ca-csr.json <<EOF

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

EOF

## Generate the CA certificate, producing ca-key.pem and ca.pem

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

## Define the server certificate that authenticates traffic between the three etcd members

cat > server-csr.json <
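The `server-csr.json` heredoc is cut off in the source, so its real content is unknown. The sketch below is only an assumption of what such a request might look like: it lists the three etcd host IPs from the table in 1.1, reuses the `www` profile and the `names` fields defined earlier, and assumes a `CN` of `etcd`. The `ETCD_CSR_DIR` variable is hypothetical; it defaults to a temporary directory so that nothing in the working directory is overwritten.

```shell
# Assumed reconstruction; the original heredoc is truncated in the source.
ETCD_CSR_DIR=${ETCD_CSR_DIR:-$(mktemp -d)}

cat > "$ETCD_CSR_DIR/server-csr.json" <<EOF
{
    "CN": "etcd",
    "hosts": [
        "192.168.233.100",
        "192.168.233.200",
        "192.168.233.180"
    ],
    "key": {
        "algo": "rsa",
        "size": 2048
    },
    "names": [
        {
            "C": "CN",
            "L": "Beijing",
            "ST": "Beijing"
        }
    ]
}
EOF

# Sign the request only when cfssl and the CA files are available.
if command -v cfssl >/dev/null 2>&1 && [ -f ca.pem ] && [ -f ca-key.pem ] && [ -f ca-config.json ]; then
    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json \
        -profile=www "$ETCD_CSR_DIR/server-csr.json" | cfssljson -bare server
else
    echo "cfssl or CA files not found; wrote $ETCD_CSR_DIR/server-csr.json only"
fi
```

Signing with `-bare server` would produce the `server.pem` and `server-key.pem` files that section 1.3.4 copies to `/opt/etcd/ssl`.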

1.3.3 Download the etcd binary package

## The package is already downloaded; upload it together with the flannel and kubernetes-server archives

[root@master01 k8s]# ls

cfssl.sh etcd.sh flannel-v0.10.0-linux-amd64.tar.gz

etcd-cert etcd-v3.3.10-linux-amd64.tar.gz kubernetes-server-linux-amd64.tar.gz

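[root@master01 k8s]# tar zxvf etcd-v3.3.10-linux-amd64.tar.gz ## Extract the archive

1.3.4 Create the etcd directories

- Create directories for the etcd configuration, binaries, and certificates, then move the files into place

[root@master01 k8s]# mkdir /opt/etcd/{cfg,bin,ssl} -p ## Configuration, binaries, certificates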

[root@master01 k8s]# ls /opt/etcd/

bin cfg ssl

[root@master01 k8s]# mv etcd-v3.3.10-linux-amd64/etcd etcd-v3.3.10-linux-amd64/etcdctl /opt/etcd/bin/

## Copy the certificates

[root@master01 k8s]# cp etcd-cert/*.pem /opt/etcd/ssl/

[root@master01 k8s]# ls /opt/etcd/ssl

ca-key.pem ca.pem server-key.pem server.pem

1.3.5 Start etcd; it blocks while waiting for the other members to join

[root@master01 k8s]# vim etcd.sh

#!/bin/bash

# example: ./etcd.sh etcd01 192.168.1.10 etcd02=https://192.168.1.11:2380,etcd03=https://192.168.1.12:2380

ETCD_NAME=$1

ETCD_IP=$2

ETCD_CLUSTER=$3

WORK_DIR=/opt/etcd

cat <<EOF >$WORK_DIR/cfg/etcd

#[Member]

ETCD_NAME="${ETCD_NAME}"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

# 2380 is the peer port used for traffic between etcd members

ETCD_LISTEN_PEER_URLS="https://${ETCD_IP}:2380"

# 2379 is the client port used by everything outside the cluster

ETCD_LISTEN_CLIENT_URLS="https://${ETCD_IP}:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://${ETCD_IP}:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://${ETCD_IP}:2379"

ETCD_INITIAL_CLUSTER="etcd01=https://${ETCD_IP}:2380,${ETCD_CLUSTER}"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

cat <<EOF >/usr/lib/systemd/system/etcd.service

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=${WORK_DIR}/cfg/etcd

ExecStart=${WORK_DIR}/bin/etcd \

--name=\${ETCD_NAME} \

--data-dir=\${ETCD_DATA_DIR} \

--listen-peer-urls=\${ETCD_LISTEN_PEER_URLS} \

--listen-client-urls=\${ETCD_LISTEN_CLIENT_URLS},http://127.0.0.1:2379 \

--advertise-client-urls=\${ETCD_ADVERTISE_CLIENT_URLS} \

--initial-advertise-peer-urls=\${ETCD_INITIAL_ADVERTISE_PEER_URLS} \

--initial-cluster=\${ETCD_INITIAL_CLUSTER} \

--initial-cluster-token=\${ETCD_INITIAL_CLUSTER_TOKEN} \

--initial-cluster-state=new \

--cert-file=${WORK_DIR}/ssl/server.pem \

--key-file=${WORK_DIR}/ssl/server-key.pem \

--peer-cert-file=${WORK_DIR}/ssl/server.pem \

--peer-key-file=${WORK_DIR}/ssl/server-key.pem \

--trusted-ca-file=${WORK_DIR}/ssl/ca.pem \

--peer-trusted-ca-file=${WORK_DIR}/ssl/ca.pem

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable etcd

systemctl restart etcd

## The command blocks here while it waits for the other members to join

[root@master01 k8s]# bash etcd.sh etcd01 192.168.233.100 etcd02=https://192.168.233.200:2380,etcd03=https://192.168.233.180:2380

[root@master01 k8s]# ps -ef | grep etcd ## The etcd process is running

root 17402 1 15 10月05 ? 00:31:43 /opt/etcd/bin/etcd --name=etcd01 --data-dir=/var/lib/etcd/default.etcd --listen-peer-urls=https://192.168.233.100:2380 --listen-client-urls=https://192.168.233.100:2379,http://127.0.0.1:2379 --advertise-client-urls=https://192.168.233.100:2379 --initial-advertise-peer-urls=https://192.168.233.100:2380 --initial-cluster=etcd01=https://192.168.233.100:2380,etcd02=https://192.168.233.200:2380,etcd03=https://192.168.233.180:2380 --initial-cluster-token=etcd-cluster --initial-cluster-state=new --cert-file=/opt/etcd/ssl/server.pem --key-file=/opt/etcd/ssl/server-key.pem --peer-cert-file=/opt/etcd/ssl/server.pem --peer-key-file=/opt/etcd/ssl/server-key.pem --trusted-ca-file=/opt/etcd/ssl/ca.pem --peer-trusted-ca-file=/opt/etcd/ssl/ca.pem

root 18075 1 9 10月05 ? 00:16:50 /opt/kubernetes/bin/kube-apiserver --logtostderr=true --v=4 --etcd-servers=https://192.168.233.100:2379,https://192.168.233.200:2379,https://192.168.233.180:2379 --bind-address=192.168.233.100 --secure-port=6443 --advertise-address=192.168.233.100 --allow-privileged=true --service-cluster-ip-range=10.0.0.0/24 --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction --authorization-mode=RBAC,Node --kubelet-https=true --enable-bootstrap-token-auth --token-auth-file=/opt/kubernetes/cfg/token.csv --service-node-port-range=30000-50000 --tls-cert-file=/opt/kubernetes/ssl/server.pem --tls-private-key-file=/opt/kubernetes/ssl/server-key.pem --client-ca-file=/opt/kubernetes/ssl/ca.pem --service-account-key-file=/opt/kubernetes/ssl/ca-key.pem --etcd-cafile=/opt/etcd/ssl/ca.pem --etcd-certfile=/opt/etcd/ssl/server.pem --etcd-keyfile=/opt/etcd/ssl/server-key.pem

root 22146 21991 0 00:49 pts/0 00:00:00 grep --color=auto etcd
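The third argument to `etcd.sh` is easy to mistype. As an illustration only, the helper below (the name `build_initial_cluster` is hypothetical) assembles a `name=https://ip:2380` list from name and IP pairs, which is the format etcd expects for `--initial-cluster`.

```shell
# build_initial_cluster: join "name ip" argument pairs into etcd's
# comma-separated name=https://ip:2380 list.
# (Hypothetical helper, not part of the original etcd.sh.)
build_initial_cluster() {
    result=""
    while [ "$#" -ge 2 ]; do
        entry="$1=https://$2:2380"
        if [ -z "$result" ]; then
            result="$entry"
        else
            result="$result,$entry"
        fi
        shift 2
    done
    echo "$result"
}

# Peers to pass as the third argument of etcd.sh on master01.
build_initial_cluster etcd02 192.168.233.200 etcd03 192.168.233.180
```

The printed value could then be passed along, for example `bash etcd.sh etcd01 192.168.233.100 "$(build_initial_cluster etcd02 192.168.233.200 etcd03 192.168.233.180)"`.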

1.3.6 Copy the certificates to the other nodes

[root@master01 k8s]# scp -r /opt/etcd/ root@192.168.233.200:/opt/

[root@master01 k8s]# scp -r /opt/etcd/ root@192.168.233.180:/opt

## Copy the systemd unit file to the other nodes

[root@master01 k8s]# scp /usr/lib/systemd/system/etcd.service root@192.168.233.200:/usr/lib/systemd/system/

[root@master01 k8s]# scp /usr/lib/systemd/system/etcd.service root@192.168.233.180:/usr/lib/systemd/system/

1.3.7 Edit the configuration file on the node hosts

[root@node01 ~]# vim /opt/etcd/cfg/etcd

#[Member]

ETCD_NAME="etcd02" ## Was etcd01; use etcd03 on node02

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.233.200:2380" ## Use 192.168.233.180 on node02

ETCD_LISTEN_CLIENT_URLS="https://192.168.233.200:2379" ## Use 192.168.233.180 on node02

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.233.200:2380" ## Use 192.168.233.180 on node02

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.233.200:2379" ## Use 192.168.233.180 on node02

ETCD_INITIAL_CLUSTER="etcd01=https://192.168.233.100:2380,etcd02=https://192.168.233.200:2380,etcd03=https://192.168.233.180:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

## Start etcd

[root@node01 ssl]# systemctl start etcd

[root@node01 ssl]# systemctl status etcd
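The manual edits above can also be scripted. The sketch below is one possible approach, not the author's method: a hypothetical function `retarget_etcd_cfg` rewrites the member name and the four listen/advertise URLs while leaving `ETCD_INITIAL_CLUSTER` untouched. It is demonstrated on a temporary copy rather than on `/opt/etcd/cfg/etcd`.

```shell
# retarget_etcd_cfg FILE NAME IP: set the member name and point the
# listen/advertise URLs at IP. ETCD_INITIAL_CLUSTER is not modified.
# (Hypothetical helper, shown on a temporary file.)
retarget_etcd_cfg() {
    cfg_file=$1
    member_name=$2
    member_ip=$3
    sed \
        -e "s|^ETCD_NAME=.*|ETCD_NAME=\"$member_name\"|" \
        -e "/^ETCD_LISTEN_/s|https://[0-9.]*:|https://$member_ip:|" \
        -e "/^ETCD_INITIAL_ADVERTISE_/s|https://[0-9.]*:|https://$member_ip:|" \
        -e "/^ETCD_ADVERTISE_/s|https://[0-9.]*:|https://$member_ip:|" \
        "$cfg_file" > "$cfg_file.tmp" && mv "$cfg_file.tmp" "$cfg_file"
}

# Demo input: the file as it arrives from master01.
demo_cfg=$(mktemp)
cat > "$demo_cfg" <<'EOF'
#[Member]
ETCD_NAME="etcd01"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://192.168.233.100:2380"
ETCD_LISTEN_CLIENT_URLS="https://192.168.233.100:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.233.100:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://192.168.233.100:2379"
ETCD_INITIAL_CLUSTER="etcd01=https://192.168.233.100:2380,etcd02=https://192.168.233.200:2380,etcd03=https://192.168.233.180:2380"
EOF

retarget_etcd_cfg "$demo_cfg" etcd02 192.168.233.200
cat "$demo_cfg"
```

On node02 the same call would use `etcd03` and `192.168.233.180`.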

1.3.8 Check the etcd cluster health

[root@master01 etcd-cert]# /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.233.100:2379,https://192.168.233.200:2379,https://192.168.233.180:2379" cluster-health

member 3eae9a550e2e3ec is healthy: got healthy result from https://192.168.233.100:2379

member 26cd4dcf17bc5cbd is healthy: got healthy result from https://192.168.233.200:2379

member 2fcd2df8a9411750 is healthy: got healthy result from https://192.168.233.180:2379

cluster is healthy
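When this check is scripted, counting the healthy members is more reliable than reading the output by eye. A minimal sketch follows, assuming the `cluster-health` output format shown above; the function name `count_healthy` is hypothetical.

```shell
# count_healthy: read `etcdctl cluster-health` output on stdin and print
# how many members reported healthy. (Hypothetical helper.)
count_healthy() {
    grep -c '^member .* is healthy'
}

# Demo using the output captured above.
count_healthy <<'EOF'
member 3eae9a550e2e3ec is healthy: got healthy result from https://192.168.233.100:2379
member 26cd4dcf17bc5cbd is healthy: got healthy result from https://192.168.233.200:2379
member 2fcd2df8a9411750 is healthy: got healthy result from https://192.168.233.180:2379
cluster is healthy
EOF
```

For this three-member cluster the expected count is 3; the final `cluster is healthy` summary line is deliberately not counted.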

1.4 Docker Engine Deployment

- Install the Docker engine on all node hosts

- https://blog.csdn.net/weixin_47219725/article/details/108691608

1.5 flannel Network Configuration

1.5.1 Write the allocated subnet range into etcd for flannel to use

[root@localhost etcd-cert]# /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.233.100:2379,https://192.168.233.200:2379,https://192.168.233.180:2379" set /coreos.com/network/config '{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}'

{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}

[root@localhost etcd-cert]# /opt/etcd/bin/etcdctl --ca-file=ca.pem --cert-file=server.pem --key-file=server-key.pem --endpoints="https://192.168.233.100:2379,https://192.168.233.200:2379,https://192.168.233.180:2379" get /coreos.com/network/config

{ "Network": "172.17.0.0/16", "Backend": {"Type": "vxlan"}}

1.5.2 Extract the flannel archive on all node hosts

## Every host that runs pods needs the flannel network

## Copy flannel-v0.10.0-linux-amd64.tar.gz from master01 to every node host (flannel is only needed on the nodes)

[root@master01 k8s]# scp flannel-v0.10.0-linux-amd64.tar.gz root@192.168.233.200:/root

[root@master01 k8s]# scp flannel-v0.10.0-linux-amd64.tar.gz root@192.168.233.180:/root

[root@node01 ~]# tar zxvf flannel-v0.10.0-linux-amd64.tar.gz

flanneld

mk-docker-opts.sh

README.md

1.5.3 Create the working directory on the node hosts

- Create the Kubernetes working directory on each node and move the two flannel files into it

[root@node01 ~]# mkdir /opt/kubernetes/{cfg,bin,ssl} -p

[root@node01 ~]# mv mk-docker-opts.sh flanneld /opt/kubernetes/bin/

1.5.4 Write the flannel.sh script on both node hosts

[root@node01 opt]# vim flannel.sh

#!/bin/bash

ETCD_ENDPOINTS=${1:-"http://127.0.0.1:2379"} ## Defaults to port 2379, the etcd client port

cat <<EOF >/opt/kubernetes/cfg/flanneld

FLANNEL_OPTIONS="--etcd-endpoints=${ETCD_ENDPOINTS} \

-etcd-cafile=/opt/etcd/ssl/ca.pem \

-etcd-certfile=/opt/etcd/ssl/server.pem \

-etcd-keyfile=/opt/etcd/ssl/server-key.pem"

EOF

cat <<EOF >/usr/lib/systemd/system/flanneld.service

[Unit]

Description=Flanneld overlay address etcd agent

After=network-online.target network.target

Before=docker.service

[Service]

Type=notify

EnvironmentFile=/opt/kubernetes/cfg/flanneld

ExecStart=/opt/kubernetes/bin/flanneld --ip-masq \$FLANNEL_OPTIONS

ExecStartPost=/opt/kubernetes/bin/mk-docker-opts.sh -k DOCKER_NETWORK_OPTIONS -d /run/flannel/subnet.env

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

systemctl daemon-reload

systemctl enable flanneld

systemctl restart flanneld

1.5.5 Enable the flannel network

[root@node01 opt]# bash flannel.sh https://192.168.233.100:2379,https://192.168.233.200:2379,https://192.168.233.180:2379

Created symlink from /etc/systemd/system/multi-user.target.wants/flanneld.service to /usr/lib/systemd/system/flanneld.service.

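1.5.6 Edit the Docker unit file to connect Docker to the flannel network

[root@node01 opt]# vim /usr/lib/systemd/system/docker.service

EnvironmentFile=/run/flannel/subnet.env ## Add this line

ExecStart=/usr/bin/dockerd -H fd:// $DOCKER_NETWORK_OPTIONS --containerd=/run/containerd/containerd.sock ## Modify this line: add $DOCKER_NETWORK_OPTIONS

ExecReload=/bin/kill -s HUP $MAINPID

## The rest of the file is omitted. After saving, run systemctl daemon-reload and systemctl restart docker so that Docker picks up the new options.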

1.5.7 Check the network interfaces on the node hosts

[root@node01 ~]# ifconfig

docker0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.48.1 netmask 255.255.255.0 broadcast 172.17.48.255

inet6 fe80::42:9fff:fe81:3f9f prefixlen 64 scopeid 0x20<link>

ether 02:42:9f:81:3f:9f txqueuelen 0 (Ethernet)

RX packets 6698 bytes 272659 (266.2 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 14177 bytes 12413297 (11.8 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

ens33: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.233.200 netmask 255.255.255.0 broadcast 192.168.233.255

inet6 fe80::1199:c740:2050:ac62 prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:e7:9d:50 txqueuelen 1000 (Ethernet)

RX packets 1542039 bytes 710889987 (677.9 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 1334778 bytes 625197407 (596.2 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.48.0 netmask 255.255.255.255 broadcast 0.0.0.0

inet6 fe80::bc83:53ff:fee6:6ac prefixlen 64 scopeid 0x20<link>

ether be:83:53:e6:06:ac txqueuelen 0 (Ethernet)

RX packets 4 bytes 336 (336.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 4 bytes 336 (336.0 B)

TX errors 0 dropped 27 overruns 0 carrier 0 collisions 0

#################################################################################

[root@node02 ~]# ifconfig

docker0: flags=4099<UP,BROADCAST,MULTICAST> mtu 1500

inet 172.17.52.1 netmask 255.255.255.0 broadcast 172.17.52.255

inet6 fe80::42:5cff:fe66:d1a5 prefixlen 64 scopeid 0x20<link>

ether 02:42:5c:66:d1:a5 txqueuelen 0 (Ethernet)

RX packets 7084 bytes 286773 (280.0 KiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 14595 bytes 12427451 (11.8 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

ens33: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1500

inet 192.168.233.180 netmask 255.255.255.0 broadcast 192.168.233.255

inet6 fe80::9c9:3acb:1c5f:375a prefixlen 64 scopeid 0x20<link>

ether 00:0c:29:71:b6:ad txqueuelen 1000 (Ethernet)

RX packets 1168559 bytes 785593565 (749.2 MiB)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 735210 bytes 57001175 (54.3 MiB)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

flannel.1: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.52.0 netmask 255.255.255.255 broadcast 0.0.0.0

inet6 fe80::9030:b4ff:fee7:15bc prefixlen 64 scopeid 0x20<link>

ether 92:30:b4:e7:15:bc txqueuelen 0 (Ethernet)

RX packets 4 bytes 336 (336.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 4 bytes 336 (336.0 B)

TX errors 0 dropped 26 overruns 0 carrier 0 collisions 0

1.5.8 Check the subnet flannel assigned to Docker

[root@node01 ~]# cat /run/flannel/subnet.env

DOCKER_OPT_BIP="--bip=172.17.48.1/24"

DOCKER_OPT_IPMASQ="--ip-masq=false"

DOCKER_OPT_MTU="--mtu=1450"

DOCKER_NETWORK_OPTIONS=" --bip=172.17.48.1/24 --ip-masq=false --mtu=1450"

[root@node02 ~]# cat /run/flannel/subnet.env

DOCKER_OPT_BIP="--bip=172.17.52.1/24"

DOCKER_OPT_IPMASQ="--ip-masq=false"

DOCKER_OPT_MTU="--mtu=1450"

DOCKER_NETWORK_OPTIONS=" --bip=172.17.52.1/24 --ip-masq=false --mtu=1450"
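Each node should receive its own /24 out of the 172.17.0.0/16 range written to etcd in 1.5.1. The sketch below shows one way to check that from `subnet.env`; both function names are hypothetical, and the prefix match is a simplification that only works because the configured network is a /16.

```shell
# bip_from_env FILE: print the CIDR that follows --bip= in DOCKER_OPT_BIP.
# (Hypothetical helper.)
bip_from_env() {
    sed -n 's/^DOCKER_OPT_BIP="--bip=\(.*\)"$/\1/p' "$1"
}

# in_flannel_net CIDR: succeed if the address lies in 172.17.0.0/16.
# A plain prefix match is enough for a /16 network.
in_flannel_net() {
    case "$1" in
        172.17.*) return 0 ;;
        *) return 1 ;;
    esac
}

# Demo using node01's file content from above, written to a temp file.
demo_env=$(mktemp)
cat > "$demo_env" <<'EOF'
DOCKER_OPT_BIP="--bip=172.17.48.1/24"
DOCKER_OPT_IPMASQ="--ip-masq=false"
DOCKER_OPT_MTU="--mtu=1450"
DOCKER_NETWORK_OPTIONS=" --bip=172.17.48.1/24 --ip-masq=false --mtu=1450"
EOF

bip=$(bip_from_env "$demo_env")
if in_flannel_net "$bip"; then
    echo "$bip is inside the flannel network"
else
    echo "$bip is outside the flannel network"
fi
```

On a real node the file to inspect would be `/run/flannel/subnet.env`.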

1.5.9 Test container connectivity between the two nodes

[root@node02 ~]# docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

d440f7bc0ec4 centos:7 "/bin/bash" 6 days ago Exited (127) 6 days ago vigorous_shamir

[root@node02 ~]# docker start d440f7bc0ec4

d440f7bc0ec4

[root@node02 ~]# docker exec -it d440f7bc0ec4 /bin/bash

[root@d440f7bc0ec4 /]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.52.2 netmask 255.255.255.0 broadcast 172.17.52.255

ether 02:42:ac:11:34:02 txqueuelen 0 (Ethernet)

RX packets 7 bytes 586 (586.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

loop txqueuelen 1000 (Local Loopback)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

###########################################

[root@promote ~]# docker ps -a

e721762e5978 centos:7 "/bin/bash" 6 days ago Exited (0) 6 days ago dreamy_matsumoto

[root@promote ~]# docker start e721762e5978

e721762e5978

[root@promote ~]# docker exec -it e721762e5978 /bin/bash

[root@e721762e5978 /]#

[root@e721762e5978 /]# ifconfig

eth0: flags=4163<UP,BROADCAST,RUNNING,MULTICAST> mtu 1450

inet 172.17.48.3 netmask 255.255.255.0 broadcast 172.17.48.255

ether 02:42:ac:11:30:03 txqueuelen 0 (Ethernet)

RX packets 8 bytes 656 (656.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

lo: flags=73<UP,LOOPBACK,RUNNING> mtu 65536

inet 127.0.0.1 netmask 255.0.0.0

loop txqueuelen 1000 (Local Loopback)

RX packets 0 bytes 0 (0.0 B)

RX errors 0 dropped 0 overruns 0 frame 0

TX packets 0 bytes 0 (0.0 B)

TX errors 0 dropped 0 overruns 0 carrier 0 collisions 0

## From the container on node01, ping the container on node02

[root@e721762e5978 /]# ping 172.17.52.2

PING 172.17.52.2 (172.17.52.2) 56(84) bytes of data.

64 bytes from 172.17.52.2: icmp_seq=1 ttl=62 time=1.01 ms

64 bytes from 172.17.52.2: icmp_seq=2 ttl=62 time=0.878 ms

64 bytes from 172.17.52.2: icmp_seq=3 ttl=62 time=3.74 ms

64 bytes from 172.17.52.2: icmp_seq=4 ttl=62 time=0.538 ms

^C

--- 172.17.52.2 ping statistics ---

4 packets transmitted, 4 received, 0% packet loss, time 3005ms

rtt min/avg/max/mdev = 0.538/1.543/3.744/1.282 ms

1.6 Master Component Deployment

1.6.1 Write the certificate script

[root@master01 k8s]# unzip master.zip

[root@master01 k8s]# mkdir /opt/kubernetes/{cfg,bin,ssl} -p

[root@master01 k8s]# mkdir k8s-cert

[root@master01 k8s]# cd k8s-cert/

[root@master01 k8s-cert]# vim k8s-cert.sh ## Create the certificate script

cat > ca-config.json <<EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

cat > ca-csr.json <<EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

#-----------------------

cat > server-csr.json <

cat > admin-csr.json <<EOF

{

"CN": "admin",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "system:masters",

"OU": "System"

}

]

}

EOF

cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes admin-csr.json | cfssljson -bare admin

#-----------------------

cat > kube-proxy-csr.json <
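The `kube-proxy-csr.json` heredoc is also cut off in the source. The sketch below is an assumption modeled on the `admin-csr.json` block above, not the author's actual file. It uses `system:kube-proxy` as the `CN`, which is the user name that Kubernetes' built-in `system:node-proxier` binding expects; the `O` value of `k8s` is borrowed from `ca-csr.json`. The `PROXY_CSR_DIR` variable is hypothetical and defaults to a temporary directory.

```shell
# Assumed reconstruction; the original heredoc is truncated in the source.
PROXY_CSR_DIR=${PROXY_CSR_DIR:-$(mktemp -d)}

cat > "$PROXY_CSR_DIR/kube-proxy-csr.json" <<EOF
{
    "CN": "system:kube-proxy",
    "hosts": [],
    "key": {
        "algo": "rsa",
        "size": 2048
    },
    "names": [
        {
            "C": "CN",
            "L": "BeiJing",
            "ST": "BeiJing",
            "O": "k8s",
            "OU": "System"
        }
    ]
}
EOF

# Sign the request only when cfssl and the CA files are available.
if command -v cfssl >/dev/null 2>&1 && [ -f ca.pem ] && [ -f ca-key.pem ] && [ -f ca-config.json ]; then
    cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json \
        -profile=kubernetes "$PROXY_CSR_DIR/kube-proxy-csr.json" | cfssljson -bare kube-proxy
else
    echo "cfssl or CA files not found; wrote $PROXY_CSR_DIR/kube-proxy-csr.json only"
fi
```

Signing with `-bare kube-proxy` would yield the `kube-proxy.pem` and `kube-proxy-key.pem` files listed in 1.6.2.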

1.6.2 Generate the certificates

[root@master01 k8s-cert]# bash k8s-cert.sh ## Generate the certificates

[root@master01 k8s-cert]# ls

admin.csr admin.pem ca-csr.json k8s-cert.sh kube-proxy-key.pem server-csr.json

admin-csr.json ca-config.json ca-key.pem kube-proxy.csr kube-proxy.pem server-key.pem

admin-key.pem ca.csr ca.pem kube-proxy-csr.json server.csr server.pem

[root@master01 k8s-cert]# ls *.pem

admin-key.pem ca-key.pem kube-proxy-key.pem server-key.pem

admin.pem ca.pem kube-proxy.pem server.pem

[root@master01 k8s-cert]# cp ca*.pem server*.pem /opt/kubernetes/ssl/ ## Copy the certificates into the working directory

[root@master01 k8s-cert]# ls /opt/kubernetes/ssl/

ca-key.pem ca.pem server-key.pem server.pem

1.6.3 Extract the Kubernetes server archive

[root@master01 k8s-cert]# cd ..

[root@master01 k8s]# ls

cfssl.sh etcd-v3.3.10-linux-amd64 k8s-cert

etcd-cert etcd-v3.3.10-linux-amd64.tar.gz kubernetes-server-linux-amd64.tar.gz

etcd.sh flannel-v0.10.0-linux-amd64.tar.gz master.zip

[root@master01 k8s]# tar zxvf kubernetes-server-linux-amd64.tar.gz

1.6.4 Copy the binaries into the Kubernetes working directory

[root@master01 k8s]# cd kubernetes/server/bin/

[root@master01 bin]# cp kube-controller-manager kube-scheduler kubectl kube-apiserver /opt/kubernetes/bin/

[root@master01 bin]# ls /opt/kubernetes/bin/

kube-apiserver kube-controller-manager kubectl kube-scheduler

1.6.5 Create the token file for the kubelet-bootstrap user

## A random token can be generated with: head -c 16 /dev/urandom | od -An -t x | tr -d ' '

[root@master01 k8s]# vim /opt/kubernetes/cfg/token.csv

6c65aa8248e15a0cb4bf17b280fa7be1,kubelet-bootstrap,10001,"system:kubelet-bootstrap"
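The token generation and the file write can be combined in one step. The sketch below wraps the command from the comment above in a hypothetical function `gen_token` and writes a line in the same `token,user,uid,"group"` layout. `TOKEN_FILE` is also hypothetical and defaults to a temporary file, so the real `/opt/kubernetes/cfg/token.csv` is not touched.

```shell
# gen_token: print 16 random bytes as 32 lowercase hex characters.
# (Hypothetical wrapper around the command shown above.)
gen_token() {
    head -c 16 /dev/urandom | od -An -t x | tr -d ' \n'
}

# Write to a temporary file by default instead of the real token.csv.
TOKEN_FILE=${TOKEN_FILE:-$(mktemp)}
token=$(gen_token)
echo "$token,kubelet-bootstrap,10001,\"system:kubelet-bootstrap\"" > "$TOKEN_FILE"
cat "$TOKEN_FILE"
```

Whatever token is generated here must be the same one that the kubeconfig script in 1.7.3 passes to `--token`.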

1.6.6 Start the apiserver (storing its data in the etcd cluster) and check its status

[root@master01 kubernetes]# bash apiserver.sh 192.168.233.100 https://192.168.233.100:2379,https://192.168.233.200:2379,https://192.168.233.180:2379

[root@master01 kubernetes]# ls /opt/kubernetes/cfg/

kube-apiserver token.csv

[root@master01 kubernetes]# netstat -ntap |grep kube

[root@master01 kubernetes]# ps aux |grep kube

[root@master01 kubernetes]# vim /opt/kubernetes/cfg/kube-apiserver

... (content omitted)

--secure-port=6443 \ ## The HTTPS port the apiserver listens on (6443, not the standard HTTPS port 443)

... (content omitted)

[root@localhost k8s]# netstat -ntap |grep 6443

tcp 0 0 192.168.233.100:6443 0.0.0.0:* LISTEN 18075/kube-apiserve

tcp 0 0 192.168.233.100:44770 192.168.233.100:6443 ESTABLISHED 18075/kube-apiserve

tcp 0 0 192.168.233.100:6443 192.168.233.100:44770 ESTABLISHED 18075/kube-apiserve

1.6.7 Start the scheduler service

[root@master kubernetes]# ./scheduler.sh 127.0.0.1

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-scheduler.service to /usr/lib/systemd/system/kube-scheduler.service.

[root@master kubernetes]# systemctl status kube-scheduler

1.6.8 Start the controller-manager

[root@master kubernetes]# ./controller-manager.sh 127.0.0.1

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-controller-manager.service to /usr/lib/systemd/system/kube-controller-manager.service.

[root@master kubernetes]# systemctl status kube-controller-manager

1.6.9 Check the status of the master components and etcd

[root@master01 k8s]# /opt/kubernetes/bin/kubectl get cs

NAME STATUS MESSAGE ERROR

controller-manager Healthy ok

scheduler Healthy ok

etcd-1 Healthy {"health":"true"}

etcd-2 Healthy {"health":"true"}

etcd-0 Healthy {"health":"true"}

1.7 Deploy kubelet and kube-proxy on the Node Hosts

1.7.1 Copy kubelet and kube-proxy from master01 to the node hosts

[root@master kubernetes]# cd /root/k8s/kubernetes/server/bin/

[root@master bin]# ls

apiextensions-apiserver kube-apiserver.docker_tag kube-proxy

cloud-controller-manager kube-apiserver.tar kube-proxy.docker_tag

cloud-controller-manager.docker_tag kube-controller-manager kube-proxy.tar

cloud-controller-manager.tar kube-controller-manager.docker_tag kube-scheduler

hyperkube kube-controller-manager.tar kube-scheduler.docker_tag

kubeadm kubectl kube-scheduler.tar

kube-apiserver kubelet

[root@master bin]# scp kubelet kube-proxy root@192.168.233.200:/opt/kubernetes/bin

[root@master bin]# scp kubelet kube-proxy root@192.168.233.180:/opt/kubernetes/bin

1.7.2 Extract node.zip on the node hosts

[root@node01 ~]# ls

anaconda-ks.cfg flannel-v0.10.0-linux-amd64.tar.gz node.zip

[root@node01 ~]# unzip node.zip

[root@node01 ~]# ls

anaconda-ks.cfg flannel-v0.10.0-linux-amd64.tar.gz kubelet.sh node.zip proxy.sh

1.7.3 Create the kubeconfig directory on master01

[root@master01 bin]# cd /root/k8s/

[root@master01 k8s]# mkdir kubeconfig

[root@master01 k8s]# cd kubeconfig/

[root@master01 kubeconfig]# vim kubeconfig

APISERVER=$1

SSL_DIR=$2

# Create the kubelet bootstrapping kubeconfig

export KUBE_APISERVER="https://$APISERVER:6443"

# Set the cluster parameters

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=bootstrap.kubeconfig

# Set the client authentication parameters

# The token below must match the one in /opt/kubernetes/cfg/token.csv

kubectl config set-credentials kubelet-bootstrap \

--token=6c65aa8248e15a0cb4bf17b280fa7be1 \

--kubeconfig=bootstrap.kubeconfig

# Set the context parameters

kubectl config set-context default \

--cluster=kubernetes \

--user=kubelet-bootstrap \

--kubeconfig=bootstrap.kubeconfig

# Set the default context

kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

#----------------------

# Create the kube-proxy kubeconfig file

kubectl config set-cluster kubernetes \

--certificate-authority=$SSL_DIR/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-credentials kube-proxy \

--client-certificate=$SSL_DIR/kube-proxy.pem \

--client-key=$SSL_DIR/kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

[root@master01 kubeconfig]# export PATH=$PATH:/opt/kubernetes/bin ## Add kubectl to PATH (this line can also be appended to /etc/profile)
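The kubeconfig script hard-codes the bootstrap token, so it silently goes stale if `token.csv` is regenerated. One alternative, sketched here with a hypothetical function `read_bootstrap_token`, is to read the token from the file at run time. The demo uses a temporary copy of the line from 1.6.5.

```shell
# read_bootstrap_token FILE: print the token (first CSV field) of the
# kubelet-bootstrap user. (Hypothetical helper.)
read_bootstrap_token() {
    awk -F, '$2 == "kubelet-bootstrap" { print $1; exit }' "$1"
}

# Demo with a temporary copy of the token.csv line shown in 1.6.5.
demo_csv=$(mktemp)
cat > "$demo_csv" <<'EOF'
6c65aa8248e15a0cb4bf17b280fa7be1,kubelet-bootstrap,10001,"system:kubelet-bootstrap"
EOF

BOOTSTRAP_TOKEN=$(read_bootstrap_token "$demo_csv")
echo "$BOOTSTRAP_TOKEN"
```

In the script, `--token=$BOOTSTRAP_TOKEN` could then replace the literal value, with the function pointed at `/opt/kubernetes/cfg/token.csv`.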

1.7.4 Generate the kubeconfig files and copy them to the node hosts

[root@master kubeconfig]# bash kubeconfig 192.168.233.100 /root/k8s/k8s-cert/

[root@master kubeconfig]# ls

bootstrap.kubeconfig kubeconfig kube-proxy.kubeconfig

[root@master kubeconfig]# scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.233.200:/opt/kubernetes/cfg

[root@master kubeconfig]# scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.233.180:/opt/kubernetes/cfg

1.7.5 Bind the bootstrap user to a role so it can request certificate signing from the apiserver (critical)

[root@master01 kubeconfig]# kubectl create clusterrolebinding kubelet-bootstrap --clusterrole=system:node-bootstrapper --user=kubelet-bootstrap

clusterrolebinding.rbac.authorization.k8s.io/kubelet-bootstrap created

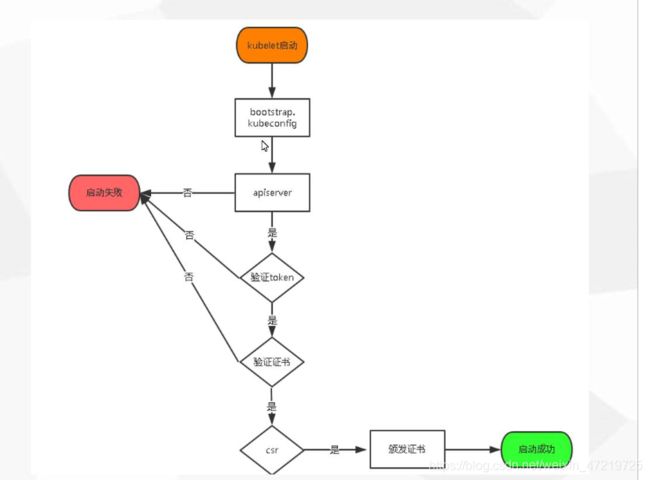

1.8 Generate the kubelet and kubelet.config Files on node01

[root@node01 ~]# bash kubelet.sh 192.168.233.200

Created symlink from /etc/systemd/system/multi-user.target.wants/kubelet.service to /usr/lib/systemd/system/kubelet.service.

1.8.1 On the master, check node01's request and the certificate status

[root@master01 kubeconfig]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-xmi9gQiUIFuyZ9KAIKFIyf4JiQOuPN1tACjVzu_SH6s 71s kubelet-bootstrap Pending

## Pending: the node is waiting for the cluster to issue its certificate

1.8.2 Approve the certificate and check its status again

[root@master01 kubeconfig]# kubectl certificate approve node-csr-xmi9gQiUIFuyZ9KAIKFIyf4JiQOuPN1tACjVzu_SH6s

certificatesigningrequest.certificates.k8s.io/node-csr-xmi9gQiUIFuyZ9KAIKFIyf4JiQOuPN1tACjVzu_SH6s approved

[root@master01 kubeconfig]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-xmi9gQiUIFuyZ9KAIKFIyf4JiQOuPN1tACjVzu_SH6s 3m9s kubelet-bootstrap Approved,Issued ## The node has been approved to join the cluster
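Copying the long CSR name by hand is error-prone. As a sketch, the hypothetical filter `pending_csrs` below extracts the names of all pending requests from `kubectl get csr` output, assuming the column layout shown above with CONDITION as the last column.

```shell
# pending_csrs: read `kubectl get csr` output on stdin and print the NAME
# of every request whose last column is Pending. (Hypothetical helper.)
pending_csrs() {
    awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Demo using the output captured in 1.8.1.
pending_csrs <<'EOF'
NAME AGE REQUESTOR CONDITION
node-csr-xmi9gQiUIFuyZ9KAIKFIyf4JiQOuPN1tACjVzu_SH6s 71s kubelet-bootstrap Pending
EOF
```

On the master this could be chained as `kubectl get csr | pending_csrs | xargs kubectl certificate approve`, which approves every pending request at once, so it is only appropriate when all of them are expected.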

1.8.3 List the cluster nodes

## List the cluster nodes; node01 has joined successfully

[root@master01 kubeconfig]# kubectl get node

NAME STATUS ROLES AGE VERSION

192.168.233.200 Ready <none> 118s v1.12.3

1.8.4 Start the proxy service on node01

[root@node01 ~]# bash proxy.sh 192.168.233.200

Created symlink from /etc/systemd/system/multi-user.target.wants/kube-proxy.service to /usr/lib/systemd/system/kube-proxy.service.

[root@node01 ~]# systemctl status kube-proxy.service

[root@node01 ~]# systemctl enable kube-proxy.service

[root@node01 ~]# systemctl enable kubelet

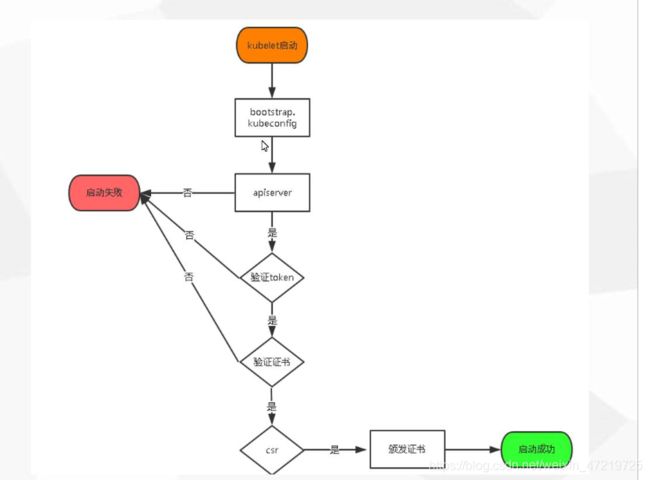

1.9 node02 Deployment

- Copy the existing /opt/kubernetes directory from node01 to node02 and adjust it there

[root@node01 ~]# scp -r /opt/kubernetes/ root@192.168.233.180:/opt/

1.9.1 Copy the kubelet and kube-proxy service files to node02

[root@node01 ~]# scp /usr/lib/systemd/system/{kubelet,kube-proxy}.service root@192.168.233.180:/usr/lib/systemd/system/

1.9.2 Delete the copied certificates; node02 will request its own

[root@node02 ~]# cd /opt/kubernetes/ssl/

[root@node02 ssl]# rm -rf *

1.9.3 Edit the kubelet, kubelet.config, and kube-proxy configuration files

[root@node02 ssl]# cd ../cfg/

[root@node02 cfg]# vim kubelet

--hostname-override=192.168.233.180 \ ## Change to node02's IP address

[root@node02 cfg]# vim kubelet.config

address: 192.168.233.180 ## Change to node02's IP address

[root@node02 cfg]# vim kube-proxy

--hostname-override=192.168.233.180 \ ## Change to node02's IP address
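Since all three edits replace node01's address with node02's, they can be done in one pass. The sketch below is an illustration under that assumption, not the author's procedure; `retarget_node_cfg` is a hypothetical name, and the demo runs against stand-in files in a temporary directory rather than `/opt/kubernetes/cfg`.

```shell
# retarget_node_cfg DIR OLD_IP NEW_IP: replace OLD_IP with NEW_IP in the
# kubelet, kubelet.config and kube-proxy files under DIR.
# (Hypothetical helper.)
retarget_node_cfg() {
    cfg_dir=$1
    old_ip=$2
    new_ip=$3
    # Escape the dots so they match literally in the sed pattern.
    old_re=$(printf '%s' "$old_ip" | sed 's/\./\\./g')
    for name in kubelet kubelet.config kube-proxy; do
        file="$cfg_dir/$name"
        [ -f "$file" ] || continue
        sed "s/$old_re/$new_ip/g" "$file" > "$file.tmp" && mv "$file.tmp" "$file"
    done
}

# Demo on stand-in files that contain only the relevant lines.
demo_dir=$(mktemp -d)
echo '--hostname-override=192.168.233.200 \' > "$demo_dir/kubelet"
echo 'address: 192.168.233.200' > "$demo_dir/kubelet.config"
echo '--hostname-override=192.168.233.200 \' > "$demo_dir/kube-proxy"

retarget_node_cfg "$demo_dir" 192.168.233.200 192.168.233.180
cat "$demo_dir/kubelet" "$demo_dir/kubelet.config" "$demo_dir/kube-proxy"
```

It is worth reviewing the files afterwards, because a blanket replacement would also change any other occurrence of node01's address.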

1.9.4 Start the services

[root@node02 cfg]# systemctl start kubelet.service

[root@node02 cfg]# systemctl enable kubelet.service

[root@node02 cfg]# systemctl start kube-proxy.service

[root@node02 cfg]# systemctl enable kube-proxy.service

1.9.5 On the master, review and approve the request

[root@master01 k8s]# kubectl get csr

NAME AGE REQUESTOR CONDITION

node-csr-OaH9HpIKh6AKlfdjEKm4C6aJ0UT_1YxNaa70yEAxnsU 15s kubelet-bootstrap Pending

## Approve the request to join the cluster

[root@master01 k8s]# kubectl certificate approve node-csr-OaH9HpIKh6AKlfdjEKm4C6aJ0UT_1YxNaa70yEAxnsU

## List the nodes in the cluster

[root@localhost k8s]# kubectl get node

NAME STATUS ROLES AGE VERSION

192.168.195.150 Ready <none> 21h v1.12.3

192.168.195.151 Ready <none> 37s v1.12.3