AI Experiment Series (6): Implementing Gradient Checking in Python

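When building a neural network in practice, forward propagation is relatively easy to implement and is usually correct, whereas backpropagation is harder to get right and is a frequent source of bugs. For projects that demand high accuracy, gradient checking is especially important.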

How Gradient Checking Works

In calculus, the derivative (gradient) can be defined as

$$\frac{\partial J}{\partial \theta} = \lim_{\varepsilon \to 0} \frac{J(\theta + \varepsilon) - J(\theta - \varepsilon)}{2\varepsilon}$$

To verify that the $\frac{\partial J}{\partial \theta}$ computed by backpropagation is accurate, we can compute it a second way using the formula above: run forward propagation to obtain $J(\theta + \varepsilon)$ and $J(\theta - \varepsilon)$ for a small $\varepsilon$, derive $\frac{\partial J}{\partial \theta}$ from them, and check whether it matches the backpropagation result.
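To make the formula concrete, here is a minimal sketch on a one-parameter toy function. The cost $J(\theta) = \theta^3$ and the function names are assumptions chosen only for illustration; they are not part of the experiment's code.

```python
def J(theta):
    # Toy cost function, chosen only for illustration.
    return theta ** 3

def dJ_analytic(theta):
    # Exact derivative of the toy cost: 3 * theta^2.
    return 3 * theta ** 2

def dJ_numeric(theta, epsilon=1e-7):
    # Centered-difference approximation from the formula above.
    return (J(theta + epsilon) - J(theta - epsilon)) / (2 * epsilon)

theta = 2.0
print("analytic:", dJ_analytic(theta))
print("numeric: ", dJ_numeric(theta))
```

The two printed values should agree to several decimal places. A large gap would suggest that the analytic derivative is wrong.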

Implementing Gradient Checking in Python

import numpy as np

def gradient_check_n_test_case():
    # Fixed seed so the test case is reproducible
    np.random.seed(1)
    x = np.random.randn(4, 3)   # 4 input features, 3 examples
    y = np.array([1, 1, 0])     # binary labels
    W1 = np.random.randn(5, 4)
    b1 = np.random.randn(5, 1)
    W2 = np.random.randn(3, 5)
    b2 = np.random.randn(3, 1)
    W3 = np.random.randn(1, 3)
    b3 = np.random.randn(1, 1)
    parameters = {"W1": W1,
                  "b1": b1,
                  "W2": W2,
                  "b2": b2,
                  "W3": W3,
                  "b3": b3}
    return x, y, parameters

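Next, implement forward propagation and backpropagation separately (two errors are deliberately planted in the backpropagation code).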

def forward_propagation_n(X, Y, parameters):
    # relu and sigmoid are helper functions that are not shown in this post
    m = X.shape[1]  # number of examples
    W1 = parameters["W1"]
    b1 = parameters["b1"]
    W2 = parameters["W2"]
    b2 = parameters["b2"]
    W3 = parameters["W3"]
    b3 = parameters["b3"]

    # RELU -> RELU -> SIGMOID
    Z1 = np.dot(W1, X) + b1
    A1 = relu(Z1)
    Z2 = np.dot(W2, A1) + b2
    A2 = relu(Z2)
    Z3 = np.dot(W3, A2) + b3
    A3 = sigmoid(Z3)

    # Cross-entropy cost averaged over the m examples
    logprobs = np.multiply(-np.log(A3), Y) + np.multiply(-np.log(1 - A3), 1 - Y)
    cost = 1. / m * np.sum(logprobs)

    cache = (Z1, A1, W1, b1, Z2, A2, W2, b2, Z3, A3, W3, b3)
    return cost, cache

def backward_propagation_n(X, Y, cache):
    m = X.shape[1]  # number of examples
    (Z1, A1, W1, b1, Z2, A2, W2, b2, Z3, A3, W3, b3) = cache

    # Output layer (sigmoid + cross-entropy)
    dZ3 = A3 - Y
    dW3 = 1. / m * np.dot(dZ3, A2.T)
    db3 = 1. / m * np.sum(dZ3, axis=1, keepdims=True)

    # Second hidden layer (ReLU)
    dA2 = np.dot(W3.T, dZ3)
    dZ2 = np.multiply(dA2, np.int64(A2 > 0))
    dW2 = 1. / m * np.dot(dZ2, A1.T) * 2  # deliberate error 1: extra factor of 2
    db2 = 1. / m * np.sum(dZ2, axis=1, keepdims=True)

    # First hidden layer (ReLU)
    dA1 = np.dot(W2.T, dZ2)
    dZ1 = np.multiply(dA1, np.int64(A1 > 0))
    dW1 = 1. / m * np.dot(dZ1, X.T)
    db1 = 4. / m * np.sum(dZ1, axis=1, keepdims=True)  # deliberate error 2: 4. instead of 1.

    gradients = {"dZ3": dZ3, "dW3": dW3, "db3": db3,
                 "dA2": dA2, "dZ2": dZ2, "dW2": dW2, "db2": db2,
                 "dA1": dA1, "dZ1": dZ1, "dW1": dW1, "db1": db1}
    return gradients
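The forward pass above calls relu and sigmoid, which are not defined in this post. The following is a minimal sketch of what such helpers could look like, assuming the standard element-wise definitions; the versions in the full code may differ.

```python
import numpy as np

def relu(z):
    # Element-wise max(0, z).
    return np.maximum(0, z)

def sigmoid(z):
    # Element-wise logistic function 1 / (1 + e^(-z)).
    return 1 / (1 + np.exp(-z))
```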

We store the gradients approximated via forward propagation in a column vector $gradapprox$, where each element is the gradient of one parameter. We then compare it with $grad$, the column vector of gradients from backpropagation, to judge whether the error is too large.

The comparison formula is

$$difference = \frac{\| grad - gradapprox \|_2}{\| grad \|_2 + \| gradapprox \|_2}$$

The norms are computed with NumPy's norm function (np.linalg.norm).
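As a small illustration of this formula, the sketch below evaluates it on hand-made vectors. The helper name relative_difference and the sample values are assumptions for this example only.

```python
import numpy as np

def relative_difference(grad, gradapprox):
    # ||grad - gradapprox||_2 / (||grad||_2 + ||gradapprox||_2)
    numerator = np.linalg.norm(grad - gradapprox)
    denominator = np.linalg.norm(grad) + np.linalg.norm(gradapprox)
    return numerator / denominator

grad = np.array([[1.0], [2.0], [3.0]])
nearly_equal = grad + 1e-9   # tiny perturbation, as numerical error would cause
doubled = grad * 2           # a systematic error, such as a stray factor of 2

print(relative_difference(grad, nearly_equal))  # far below 1e-7
print(relative_difference(grad, doubled))       # about 1/3
```

Dividing by the sum of the norms makes the measure independent of the overall scale of the gradients, so a single threshold can be used for networks whose gradients differ in magnitude.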

def gradient_check_n(parameters, gradients, X, Y, epsilon=1e-7):
    # dictionary_to_vector, gradients_to_vector and vector_to_dictionary
    # are helper functions that are not shown in this post
    parameters_values, _ = dictionary_to_vector(parameters)
    grad = gradients_to_vector(gradients)
    num_parameters = parameters_values.shape[0]
    J_plus = np.zeros((num_parameters, 1))
    J_minus = np.zeros((num_parameters, 1))
    gradapprox = np.zeros((num_parameters, 1))

    # Compute gradapprox, one parameter at a time
    for i in range(num_parameters):
        # Cost with parameter i increased by epsilon
        thetaplus = np.copy(parameters_values)
        thetaplus[i][0] = thetaplus[i][0] + epsilon
        J_plus[i], _ = forward_propagation_n(X, Y, vector_to_dictionary(thetaplus))

        # Cost with parameter i decreased by epsilon
        thetaminus = np.copy(parameters_values)
        thetaminus[i][0] = thetaminus[i][0] - epsilon
        J_minus[i], _ = forward_propagation_n(X, Y, vector_to_dictionary(thetaminus))

        # Centered difference
        gradapprox[i] = (J_plus[i] - J_minus[i]) / (2 * epsilon)

    # Relative difference between the two gradient vectors
    numerator = np.linalg.norm(grad - gradapprox)
    denominator = np.linalg.norm(grad) + np.linalg.norm(gradapprox)
    difference = numerator / denominator

    # A large difference means backpropagation disagrees with the numerical gradient
    if difference > 2e-7:
        print("backward propagation is wrong! difference = " + str(difference))
    else:
        print("backward propagation is right! difference = " + str(difference))

    return difference

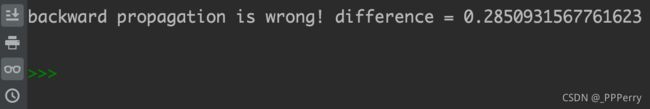

Run the check as follows:

X, Y, parameters = gradient_check_n_test_case()
cost, cache = forward_propagation_n(X, Y, parameters)
gradients = backward_propagation_n(X, Y, cache)
difference = gradient_check_n(parameters, gradients, X, Y)
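gradient_check_n relies on three helpers that this post does not show: dictionary_to_vector, vector_to_dictionary and gradients_to_vector. The sketch below is one possible way to write them, inferred only from how they are called above. The PARAM_SHAPES constant is a name introduced for this sketch, with the layer shapes taken from the test case, and the helpers in the full code may be implemented differently.

```python
import numpy as np

# Layer shapes of the test network in this post, in a fixed order.
# PARAM_SHAPES is a hypothetical name used only in this sketch.
PARAM_SHAPES = [("W1", (5, 4)), ("b1", (5, 1)),
                ("W2", (3, 5)), ("b2", (3, 1)),
                ("W3", (1, 3)), ("b3", (1, 1))]

def dictionary_to_vector(parameters):
    """Flatten all parameters into one (n, 1) column vector."""
    pieces, keys = [], []
    for name, _ in PARAM_SHAPES:
        flat = parameters[name].reshape(-1, 1)
        pieces.append(flat)
        keys += [name] * flat.shape[0]  # remember which parameter each row came from
    return np.concatenate(pieces, axis=0), keys

def vector_to_dictionary(theta):
    """Inverse of dictionary_to_vector for the shapes listed above."""
    parameters, start = {}, 0
    for name, shape in PARAM_SHAPES:
        size = shape[0] * shape[1]
        parameters[name] = theta[start:start + size].reshape(shape)
        start += size
    return parameters

def gradients_to_vector(gradients):
    """Flatten dW1, db1, ... in the same order as the parameters."""
    pieces = [gradients["d" + name].reshape(-1, 1) for name, _ in PARAM_SHAPES]
    return np.concatenate(pieces, axis=0)
```

The important design point is that parameters and gradients are flattened in the same order, so that element i of grad and element i of gradapprox refer to the same parameter.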

The complete code for this experiment is available at:

https://github.com/PPPerry/AI_projects/tree/main/6.gradient_check

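Tips

- Gradient checking is slow. Approximating the gradient numerically takes two forward passes per parameter, which is computationally expensive. Enable it only when you need to verify that your code is correct, and turn it off once the code is confirmed to work.

- Gradient checking cannot be combined with dropout. Dropout randomly drops units on every forward pass, so the cost is no longer a fixed function of the parameters; turn dropout off while checking gradients.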
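The dropout tip can be illustrated with a toy cost. In the sketch below, the cost function, its keep_prob parameter and the random inputs are all assumptions made for this example; they are not taken from the experiment's code.

```python
import numpy as np

def cost(theta, x, keep_prob=1.0, rng=None):
    # Toy cost J = 0.5 * sum(a^2), where a = theta * x with optional inverted dropout.
    a = theta * x
    if keep_prob < 1.0:
        mask = rng.random(a.shape) < keep_prob  # a fresh random mask on every call
        a = a * mask / keep_prob
    return 0.5 * np.sum(a ** 2)

rng = np.random.default_rng(0)
theta = rng.standard_normal(1000)
x = rng.standard_normal(1000)

# Without dropout, evaluating the cost twice gives the same value.
print(cost(theta, x) == cost(theta, x))  # True

# With dropout, the same theta gives a different cost on each call, so
# J(theta + eps) - J(theta - eps) mostly measures mask noise, not the gradient.
print(cost(theta, x, 0.5, rng), cost(theta, x, 0.5, rng))
```

In practice this suggests running the check with dropout disabled (keep_prob set to 1.0 in this sketch) and re-enabling dropout afterwards.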

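Previous experiments in this AI series:

AI Experiment Series (1): A Single-Layer Neural Network for Binary Cat Classification

AI Experiment Series (2): A Shallow Neural Network for Separating Regions of Different Colors

AI Experiment Series (3): A Deep Neural Network for Binary Cat Classification

AI Experiment Series (4): Comparing Neural Network Parameter Initialization Methods (Xavier Initialization and He Initialization)

AI Experiment Series (5): Regularization Methods: Implementing L2 Regularization and Dropout in Python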