Developing Flink Stream/Batch Processing Programs in Java


Contents

- I. Add the `pom` dependencies and configure the packaging plugins and entry class
- II. Write the `Flink` program
- III. Complete Java code
- IV. Testing

I. Add the pom dependencies and configure the packaging plugins and entry class

```xml
<project xmlns="http://maven.apache.org/POM/4.0.0"
         xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"
         xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">
    <modelVersion>4.0.0</modelVersion>

    <groupId>com.lemon</groupId>
    <artifactId>FlinkTutorial</artifactId>
    <version>1.0-SNAPSHOT</version>

    <properties>
        <maven.compiler.source>8</maven.compiler.source>
        <maven.compiler.target>8</maven.compiler.target>
        <flink.version>1.14.4</flink.version>
        <slf4j.version>1.7.36</slf4j.version>
        <!-- Scala binary version, used as the suffix of the Flink artifact IDs below -->
        <scala.version>2.12</scala.version>
    </properties>

    <dependencies>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-java</artifactId>
            <version>${flink.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-streaming-java_${scala.version}</artifactId>
            <version>${flink.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-clients_${scala.version}</artifactId>
            <version>${flink.version}</version>
        </dependency>
        <dependency>
            <groupId>org.slf4j</groupId>
            <artifactId>slf4j-api</artifactId>
            <version>${slf4j.version}</version>
        </dependency>
        <dependency>
            <groupId>org.slf4j</groupId>
            <artifactId>slf4j-log4j12</artifactId>
            <version>${slf4j.version}</version>
        </dependency>
        <dependency>
            <groupId>org.apache.logging.log4j</groupId>
            <artifactId>log4j-to-slf4j</artifactId>
            <version>2.17.2</version>
        </dependency>
        <!-- Redis connector. Its _2.11 suffix means it targets Scala 2.11, while the Flink
             artifacts above use Scala 2.12; mixing Scala versions may cause classpath conflicts -->
        <dependency>
            <groupId>org.apache.flink</groupId>
            <artifactId>flink-connector-redis_2.11</artifactId>
            <version>1.1.5</version>
        </dependency>
    </dependencies>

    <build>
        <plugins>
            <!-- Builds *-jar-with-dependencies.jar with StreamWordCount as the entry class -->
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-assembly-plugin</artifactId>
                <version>3.3.0</version>
                <configuration>
                    <descriptorRefs>
                        <descriptorRef>jar-with-dependencies</descriptorRef>
                    </descriptorRefs>
                    <archive>
                        <manifest>
                            <mainClass>com.lemon.flink.StreamWordCount</mainClass>
                        </manifest>
                    </archive>
                </configuration>
                <executions>
                    <execution>
                        <id>make-assembly</id>
                        <phase>package</phase>
                        <goals>
                            <goal>single</goal>
                        </goals>
                    </execution>
                </executions>
            </plugin>
            <!-- Builds a shaded jar with BatchWordCount as the entry class -->
            <plugin>
                <groupId>org.apache.maven.plugins</groupId>
                <artifactId>maven-shade-plugin</artifactId>
                <executions>
                    <execution>
                        <phase>package</phase>
                        <goals>
                            <goal>shade</goal>
                        </goals>
                        <configuration>
                            <filters>
                                <filter>
                                    <!-- Drop signature files so the merged jar is not rejected as tampered -->
                                    <artifact>*:*</artifact>
                                    <excludes>
                                        <exclude>META-INF/*.SF</exclude>
                                        <exclude>META-INF/*.DSA</exclude>
                                        <exclude>META-INF/*.RSA</exclude>
                                    </excludes>
                                </filter>
                            </filters>
                            <transformers>
                                <transformer implementation="org.apache.maven.plugins.shade.resource.ManifestResourceTransformer">
                                    <mainClass>com.lemon.flink.BatchWordCount</mainClass>
                                </transformer>
                            </transformers>
                        </configuration>
                    </execution>
                </executions>
            </plugin>
        </plugins>
    </build>
</project>
```

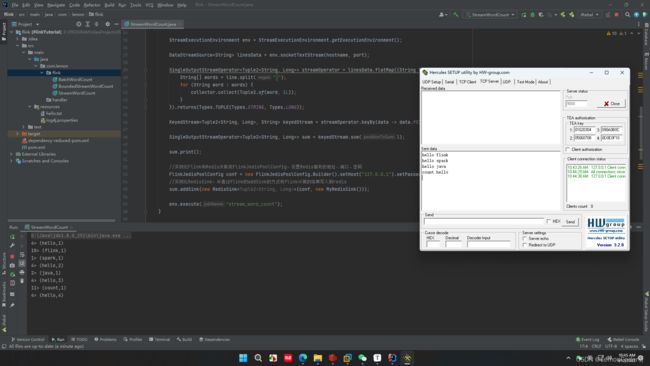

II. Write the Flink program

- Create the execution environment

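```java
import org.apache.flink.api.common.RuntimeExecutionMode;
import org.apache.flink.api.java.ExecutionEnvironment;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;

/**
 * There are two ways to obtain a Flink execution environment: ExecutionEnvironment and StreamExecutionEnvironment.
 * StreamExecutionEnvironment runs in streaming mode by default, but another runtime mode can be forced.
 * In Flink, both bounded and unbounded data can be forced to run in streaming mode. However, if a source is
 * known to be unbounded (streaming), the mode must be AUTOMATIC or STREAMING. Setting it to BATCH makes the
 * program fail.
 */

// Option 1: obtain Flink's batch execution environment (DataSet API)
ExecutionEnvironment env = ExecutionEnvironment.getExecutionEnvironment();

// Option 2: obtain Flink's stream execution environment (DataStream API); use one option or the other
StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

// Set the runtime mode on the stream environment: AUTOMATIC, BATCH or STREAMING (one call is enough)
env.setRuntimeMode(RuntimeExecutionMode.AUTOMATIC);
// env.setRuntimeMode(RuntimeExecutionMode.BATCH);
// env.setRuntimeMode(RuntimeExecutionMode.STREAMING);
```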
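The runtime-mode rule in the comment above can be modeled in plain Java. This is a minimal sketch that assumes Flink's documented behavior: STREAMING accepts any source, BATCH requires every source to be bounded, and AUTOMATIC picks BATCH only when all sources are bounded. The `Mode` enum and the `resolve` method are hypothetical stand-ins, not Flink API.

```java
import java.util.Arrays;
import java.util.List;

class RuntimeModeSketch {

    // Local stand-in mirroring the three values of Flink's RuntimeExecutionMode (hypothetical, not the Flink enum)
    enum Mode { AUTOMATIC, BATCH, STREAMING }

    /**
     * Returns the mode a job would effectively run in, or throws for an invalid combination.
     *
     * @param requested      the mode passed to setRuntimeMode
     * @param sourcesBounded one flag per source: true if bounded (e.g. a file), false if unbounded (e.g. a socket)
     */
    static Mode resolve(Mode requested, List<Boolean> sourcesBounded) {
        boolean allBounded = sourcesBounded.stream().allMatch(b -> b);
        switch (requested) {
            case STREAMING:
                // Streaming execution works for bounded and unbounded sources alike
                return Mode.STREAMING;
            case BATCH:
                if (!allBounded) {
                    throw new IllegalStateException("BATCH mode requires all sources to be bounded");
                }
                return Mode.BATCH;
            default:
                // AUTOMATIC: decide from the boundedness of the sources
                return allBounded ? Mode.BATCH : Mode.STREAMING;
        }
    }

    public static void main(String[] args) {
        System.out.println(resolve(Mode.AUTOMATIC, Arrays.asList(false)));      // STREAMING (socket source)
        System.out.println(resolve(Mode.AUTOMATIC, Arrays.asList(true, true))); // BATCH (file sources only)
    }
}
```

With the socket source used later in this article, only AUTOMATIC or STREAMING resolve successfully. BATCH hits the exception branch, which corresponds to the failure the comment warns about.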

- Read the data

```java
// Batch: read a text file line by line
DataSet<String> linesData = env.readTextFile("src/main/resources/hello.txt");

// Stream: read the socket host (-h) and port (-p) from the command-line arguments with ParameterTool
ParameterTool params = ParameterTool.fromArgs(args);
String hostname = params.has("h") ? params.get("h") : "localhost";
int port = params.has("p") ? params.getInt("p") : 9000;
DataStreamSource<String> linesData = env.socketTextStream(hostname, port);
```
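The host/port defaulting in the streaming snippet above means the job connects to `localhost:9000` unless `-h` and/or `-p` are passed. Below is a minimal plain-Java sketch of that lookup. `ArgsSketch.parse` is a hypothetical, simplified stand-in for `ParameterTool.fromArgs`: it handles only `-key value` and `--key value` pairs and is not the Flink implementation.

```java
import java.util.HashMap;
import java.util.Map;

class ArgsSketch {

    // Hypothetical simplified stand-in for ParameterTool.fromArgs: collects "-key value" / "--key value" pairs
    static Map<String, String> parse(String[] args) {
        Map<String, String> params = new HashMap<>();
        for (int i = 0; i < args.length; i++) {
            String arg = args[i];
            if (arg.startsWith("-") && i + 1 < args.length) {
                String key = arg.startsWith("--") ? arg.substring(2) : arg.substring(1);
                params.put(key, args[++i]); // consume the value that follows the key
            }
        }
        return params;
    }

    // Same defaulting rule as the article's snippet: "localhost" unless -h is given
    static String hostname(Map<String, String> params) {
        return params.getOrDefault("h", "localhost");
    }

    // Same defaulting rule as the article's snippet: 9000 unless -p is given
    static int port(Map<String, String> params) {
        return Integer.parseInt(params.getOrDefault("p", "9000"));
    }

    public static void main(String[] args) {
        Map<String, String> params = parse(new String[] {"-h", "192.168.1.10", "-p", "9999"});
        System.out.println(hostname(params) + ":" + port(params)); // 192.168.1.10:9999
    }
}
```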

- flatMap operator

```java
// Batch: split each line into words and emit (word, 1L) for every word
FlatMapOperator<String, Tuple2<String, Long>> streamOperator = linesData.flatMap((String line, Collector<Tuple2<String, Long>> collector) -> {
    String[] words = line.split(" ");
    for (String word : words) {
        collector.collect(Tuple2.of(word, 1L));
    }
}).returns(Types.TUPLE(Types.STRING, Types.LONG)); // the lambda's Tuple2 type is erased, so declare it explicitly

// Stream: same logic on a DataStream
SingleOutputStreamOperator<Tuple2<String, Long>> streamOperator = linesData.flatMap((String line, Collector<Tuple2<String, Long>> collector) -> {
    String[] words = line.split(" ");
    for (String word : words) {
        collector.collect(Tuple2.of(word, 1L));
    }
}).returns(Types.TUPLE(Types.STRING, Types.LONG));
```
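The flatMap above tokenizes with `line.split(" ")`. With that tokenizer, consecutive spaces yield an empty-string "word", which would then be counted like any other word. The plain-Java sketch below shows what one line turns into under that tokenizer and under a whitespace-regex variant. `Map.Entry` stands in for Flink's `Tuple2`, and the variant is an illustrative alternative, not what the article's code does.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.List;
import java.util.Map;

class TokenizeSketch {

    // What the article's flatMap emits for one line: one (word, 1L) pair per token of line.split(" ")
    static List<Map.Entry<String, Long>> emitBySingleSpace(String line) {
        List<Map.Entry<String, Long>> out = new ArrayList<>();
        for (String word : line.split(" ")) {
            out.add(new SimpleEntry<>(word, 1L));
        }
        return out;
    }

    // Alternative: trim, split on runs of whitespace, and skip empty tokens
    static List<Map.Entry<String, Long>> emitByWhitespace(String line) {
        List<Map.Entry<String, Long>> out = new ArrayList<>();
        for (String word : line.trim().split("\\s+")) {
            if (!word.isEmpty()) {
                out.add(new SimpleEntry<>(word, 1L));
            }
        }
        return out;
    }

    public static void main(String[] args) {
        String line = "hello  flink"; // two spaces between the words
        System.out.println(emitBySingleSpace(line)); // [hello=1, =1, flink=1]
        System.out.println(emitByWhitespace(line));  // [hello=1, flink=1]
    }
}
```

Inside the Flink lambda, the same change would amount to replacing `line.split(" ")` with `line.trim().split("\\s+")` and skipping empty words.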

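- Group the data

```java
// Batch: group by the first tuple field (the word)
UnsortedGrouping<Tuple2<String, Long>> group = streamOperator.groupBy(0);

// Stream: partition the stream by key (the word)
KeyedStream<Tuple2<String, Long>, String> keyedStream = streamOperator.keyBy(data -> data.f0);
```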

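- Aggregate

```java
// Batch: sum the second tuple field (the count) within each group
AggregateOperator<Tuple2<String, Long>> sum = group.sum(1);

// Stream: keep a running sum of the second tuple field for each key
SingleOutputStreamOperator<Tuple2<String, Long>> sum = keyedStream.sum(1);
```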
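The two `sum(1)` calls look alike but produce different output. The batch version emits one final total per word after the input is consumed. The streaming version emits an updated total every time a word arrives. The plain-Java sketch below models both behaviors with maps. It is an illustration of the semantics, not Flink code: real Flink does not guarantee the output order of the batch result, and with parallelism above 1 the streaming output of different keys is interleaved.

```java
import java.util.AbstractMap.SimpleEntry;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

class SumSemanticsSketch {

    // Models keyBy(...).sum(1): each record updates the key's state and emits the new running total
    static List<Map.Entry<String, Long>> streamingSum(List<String> words) {
        Map<String, Long> state = new LinkedHashMap<>();
        List<Map.Entry<String, Long>> emitted = new ArrayList<>();
        for (String word : words) {
            long total = state.merge(word, 1L, Long::sum);
            emitted.add(new SimpleEntry<>(word, total));
        }
        return emitted;
    }

    // Models groupBy(0).sum(1): the whole input is consumed first, then one total per key remains
    static Map<String, Long> batchSum(List<String> words) {
        Map<String, Long> totals = new LinkedHashMap<>();
        for (String word : words) {
            totals.merge(word, 1L, Long::sum);
        }
        return totals;
    }

    public static void main(String[] args) {
        List<String> words = Arrays.asList("hello", "flink", "hello");
        System.out.println(streamingSum(words)); // [hello=1, flink=1, hello=2]
        System.out.println(batchSum(words));     // {hello=2, flink=1}
    }
}
```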

- Create your own RedisSink class that implements the RedisMapper interface

```java
public static final class MyRedisSink implements RedisMapper<Tuple2<String, Long>> {

    // Write every record with the Redis SET command (no additional key is needed for SET)
    @Override
    public RedisCommandDescription getCommandDescription() {
        return new RedisCommandDescription(RedisCommand.SET, null);
    }

    // Redis key: the word
    @Override
    public String getKeyFromData(Tuple2<String, Long> data) {
        return data.f0;
    }

    // Redis value: the count, as a string
    @Override
    public String getValueFromData(Tuple2<String, Long> data) {
        return data.f1.toString();
    }
}
```

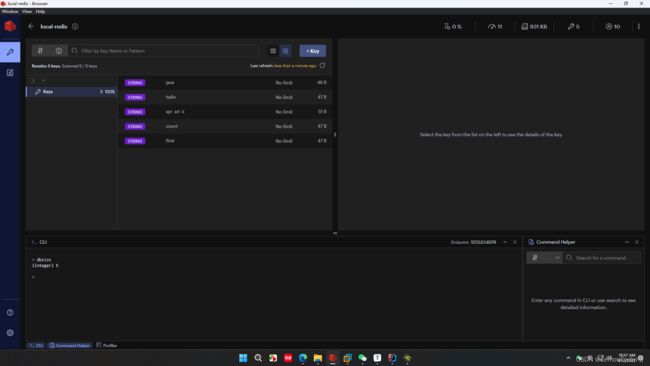

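- Write to Redis (streaming only: `addSink` belongs to the DataStream API)

```java
// Create FlinkJedisPoolConfig, which connects Flink to Redis, and set the Redis host, password and port
FlinkJedisPoolConfig conf = new FlinkJedisPoolConfig.Builder().setHost("127.0.0.1").setPassword("root").setPort(6379).build();
// Create a RedisSink and attach it with addSink so that Flink's results are written to Redis
sum.addSink(new RedisSink<Tuple2<String, Long>>(conf, new MyRedisSink()));
```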
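Because the streaming `sum` emits an updated total for every incoming word, and `MyRedisSink` maps each record to `SET word count`, later writes overwrite earlier ones. Redis therefore holds the latest running count per word. The plain-Java sketch below replays that key/value extraction against an in-memory map standing in for Redis. `WordCount` is a hypothetical stand-in for `Tuple2<String, Long>`, and no Redis client is involved.

```java
import java.util.Arrays;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

class RedisSetSketch {

    // Hypothetical stand-in for Tuple2<String, Long>: (word, running count)
    static final class WordCount {
        final String word;
        final long count;

        WordCount(String word, long count) {
            this.word = word;
            this.count = count;
        }
    }

    // Same key/value extraction as MyRedisSink, applied to a map that mimics Redis SET semantics
    static Map<String, String> applySet(List<WordCount> records) {
        Map<String, String> redis = new HashMap<>();
        for (WordCount r : records) {
            String key = r.word;                   // getKeyFromData
            String value = Long.toString(r.count); // getValueFromData
            redis.put(key, value);                 // SET overwrites any previous value for the key
        }
        return redis;
    }

    public static void main(String[] args) {
        List<WordCount> emitted = Arrays.asList(
                new WordCount("hello", 1), new WordCount("flink", 1), new WordCount("hello", 2));
        System.out.println(applySet(emitted).get("hello")); // 2
    }
}
```

In a real run, the equivalent check would be a `GET hello` in `redis-cli` after sending some lines to the socket.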

- Print

```java
// Works for both streaming and batch
sum.print();
```

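- Execute

```java
// Streaming only: "stream_word_count" sets the name of the current job
env.execute("stream_word_count");
// Batch (DataSet API): print() already triggers execution, so no separate execute() call is needed
```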

III. Complete Java code

```java
package com.lemon.flink;

import org.apache.flink.api.common.typeinfo.Types;
import org.apache.flink.api.java.tuple.Tuple2;
import org.apache.flink.api.java.utils.ParameterTool;
import org.apache.flink.streaming.api.datastream.DataStreamSource;
import org.apache.flink.streaming.api.datastream.KeyedStream;
import org.apache.flink.streaming.api.datastream.SingleOutputStreamOperator;
import org.apache.flink.streaming.api.environment.StreamExecutionEnvironment;
import org.apache.flink.streaming.connectors.redis.RedisSink;
import org.apache.flink.streaming.connectors.redis.common.config.FlinkJedisPoolConfig;
import org.apache.flink.streaming.connectors.redis.common.mapper.RedisCommand;
import org.apache.flink.streaming.connectors.redis.common.mapper.RedisCommandDescription;
import org.apache.flink.streaming.connectors.redis.common.mapper.RedisMapper;
import org.apache.flink.util.Collector;

/**
 * @description: Runs a Flink streaming computation and writes the results to Redis
 * @author: LemonCoder
 * @date: 4/12/2022
 */
public class StreamWordCount {

    /**
     * @description: main method
     * @author: LemonCoder
     * @date: 4/12/2022
     */
    public static void main(String[] args) throws Exception {
        // Socket host (-h) and port (-p) from the arguments, defaulting to localhost:9000
        ParameterTool params = ParameterTool.fromArgs(args);
        String hostname = params.has("h") ? params.get("h") : "localhost";
        int port = params.has("p") ? params.getInt("p") : 9000;

        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();

        // Unbounded source: one String per line received on the socket
        DataStreamSource<String> linesData = env.socketTextStream(hostname, port);

        // Split each line into words and emit (word, 1L)
        SingleOutputStreamOperator<Tuple2<String, Long>> streamOperator = linesData.flatMap((String line, Collector<Tuple2<String, Long>> collector) -> {
            String[] words = line.split(" ");
            for (String word : words) {
                collector.collect(Tuple2.of(word, 1L));
            }
        }).returns(Types.TUPLE(Types.STRING, Types.LONG));

        // Key by word and keep a running count per word
        KeyedStream<Tuple2<String, Long>, String> keyedStream = streamOperator.keyBy(data -> data.f0);
        SingleOutputStreamOperator<Tuple2<String, Long>> sum = keyedStream.sum(1);

        sum.print();

        // Create FlinkJedisPoolConfig, which connects Flink to Redis, and set the Redis host, port and password
        FlinkJedisPoolConfig conf = new FlinkJedisPoolConfig.Builder().setHost("127.0.0.1").setPassword("root").setPort(6379).build();

        // Create a RedisSink and attach it with addSink so that Flink's results are written to Redis
        sum.addSink(new RedisSink<Tuple2<String, Long>>(conf, new MyRedisSink()));

        env.execute("stream_word_count");
    }

    /**
     * @description: Custom RedisSink class that implements the RedisMapper interface
     * @author: LemonCoder
     * @date: 4/12/2022
     */
    public static final class MyRedisSink implements RedisMapper<Tuple2<String, Long>> {

        // Write every record with the Redis SET command
        @Override
        public RedisCommandDescription getCommandDescription() {
            return new RedisCommandDescription(RedisCommand.SET, null);
        }

        // Redis key: the word
        @Override
        public String getKeyFromData(Tuple2<String, Long> data) {
            return data.f0;
        }

        // Redis value: the count, as a string
        @Override
        public String getValueFromData(Tuple2<String, Long> data) {
            return data.f1.toString();
        }
    }
}
```