Without further ado, let's get hands-on and deploy a high-performance Hadoop cluster:

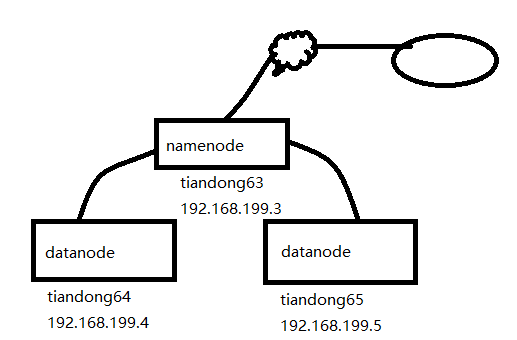

Topology diagram:

I. Preparing the lab environment

1. Configure the hosts file on all three hosts (copy it to the other two hosts):

[root@tiandong63 ~]# more /etc/hosts

192.168.199.3 tiandong63

192.168.199.4 tiandong64

192.168.199.5 tiandong65
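
Because the same three entries have to exist on every host, it can help to add them idempotently instead of editing by hand. The following is a minimal sketch, assuming a hypothetical helper named add_host_entry (not part of the original steps); the demo writes to a scratch file rather than the real /etc/hosts.

```shell
# add_host_entry is a hypothetical helper: append "IP hostname" to a hosts file
# only when that hostname is not already present.
add_host_entry() {
    hosts_file="$1"; ip="$2"; name="$3"
    # -w matches the hostname as a whole word, so a shorter name cannot match by accident
    if ! grep -qw -- "$name" "$hosts_file" 2>/dev/null; then
        printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
    fi
}

# Demonstrate against a scratch file instead of /etc/hosts.
demo_hosts="$(mktemp)"
add_host_entry "$demo_hosts" 192.168.199.3 tiandong63
add_host_entry "$demo_hosts" 192.168.199.4 tiandong64
add_host_entry "$demo_hosts" 192.168.199.5 tiandong65
add_host_entry "$demo_hosts" 192.168.199.3 tiandong63   # repeated call adds nothing
cat "$demo_hosts"
```

To apply it for real, the same calls could be run as root with /etc/hosts as the first argument.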

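2. Create the hadoop account (do the same on the other two hosts):

[root@tiandong63 ~]# useradd -u 8000 hadoop

[root@tiandong63 ~]# echo '123456' | passwd --stdin hadoop

3. Grant the hadoop user sudo privileges by adding the following line (configure this on the other two hosts as well):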

[root@tiandong63 ~]# vim /etc/sudoers

hadoop ALL=(ALL) ALL

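4. Set up SSH trust between the hosts (on tiandong63)

With this in place, the hadoop user can SSH to tiandong63, tiandong64, and tiandong65 without a password, which makes copying files and starting services easier later on. It is needed because, when the NameNode is started, the start scripts connect to each DataNode host over SSH to start the corresponding services there.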

[root@tiandong63 ~]# su - hadoop

[hadoop@tiandong63 ~]$ ssh-keygen

[hadoop@tiandong63 ~]$ ssh-copy-id hadoop@tiandong64

[hadoop@tiandong63 ~]$ ssh-copy-id hadoop@tiandong65
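
Before moving on, it may be worth confirming that key-based login really works for each worker. The sketch below is one possible check, not part of the original steps: check_trust and the DRY_RUN variable are hypothetical names, and by default the function only prints the ssh commands it would run.

```shell
# check_trust is a hypothetical helper. With DRY_RUN=1 (the default here) it only
# prints the ssh command for each host; with DRY_RUN=0 it actually runs it.
# BatchMode=yes makes ssh fail instead of prompting when key login is not set up.
check_trust() {
    user="$1"; shift
    for host in "$@"; do
        if [ "${DRY_RUN:-1}" = "1" ]; then
            echo "ssh -o BatchMode=yes -o ConnectTimeout=5 ${user}@${host} true"
        elif ssh -o BatchMode=yes -o ConnectTimeout=5 "${user}@${host}" true; then
            echo "${host}: key login OK"
        else
            echo "${host}: key login FAILED"
        fi
    done
}

check_trust hadoop tiandong64 tiandong65
```

Running it with DRY_RUN=0 as the hadoop user on tiandong63 would perform the real check.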

II. Configuring the Hadoop environment

1. Set up the JDK (on all three hosts):

[root@tiandong63 ~]# ll jdk-8u191-linux-x64.tar.gz

-rw-r--r-- 1 root root 191753373 Dec 30 00:58 jdk-8u191-linux-x64.tar.gz

[root@tiandong63 ~]# tar zxvf jdk-8u191-linux-x64.tar.gz -C /usr/local/src/

[root@tiandong63 ~]# vim /etc/profile

export JAVA_HOME=/usr/local/src/jdk1.8.0_191

export JAVA_BIN=/usr/local/src/jdk1.8.0_191/bin

export PATH=${JAVA_HOME}/bin:$PATH

export CLASSPATH=.:${JAVA_HOME}/lib/dt.jar:${JAVA_HOME}/lib/tools.jar

export hadoop_root_logger=DEBUG,console

[root@tiandong63 ~]# source /etc/profile

2. Stop the firewall (on all three hosts):

[root@tiandong63 ~]# /etc/init.d/iptables stop

3. Install Hadoop on tiandong63 and configure it as the NameNode (master node):

[root@tiandong63 ~]# cd /home/hadoop/

[root@tiandong63 hadoop]# ll hadoop-2.7.7.tar.gz

-rw-r--r-- 1 hadoop hadoop 218720521 Dec 29 21:11 hadoop-2.7.7.tar.gz

[root@tiandong63 ~]# su - hadoop

[hadoop@tiandong63 ~]$ tar -zxvf hadoop-2.7.7.tar.gz

Create the working directories Hadoop needs:

[hadoop@tiandong63 ~]$ mkdir -p /home/hadoop/dfs/name/ /home/hadoop/dfs/data/ /home/hadoop/tmp/

4. Configure Hadoop (edit seven configuration files)

Files: hadoop-env.sh, yarn-env.sh, slaves, core-site.xml, hdfs-site.xml, mapred-site.xml, yarn-site.xml

[hadoop@tiandong63 ~]$ cd /home/hadoop/hadoop-2.7.7/etc/hadoop/

[hadoop@tiandong63 hadoop]$ vim hadoop-env.sh    # set the Java runtime used by Hadoop

export JAVA_HOME=/usr/local/src/jdk1.8.0_191

[hadoop@tiandong63 hadoop]$ vim yarn-env.sh    # set the Java runtime used by the YARN framework

JAVA_HOME=/usr/local/src/jdk1.8.0_191

[hadoop@tiandong63 hadoop]$ vim slaves    # list the DataNode hosts that store the data

tiandong64

tiandong65

[hadoop@tiandong63 hadoop]$ vim core-site.xml    # specify the address at which Hadoop is accessed

[core-site.xml listing (lines 19-35 of the file): contents not preserved]

[hadoop@tiandong63 hadoop]$ mkdir -p /home/hadoop/tmp
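
As a rough illustration of what core-site.xml typically holds for a cluster laid out like this one, the sketch below writes a minimal file into a scratch directory for review. fs.defaultFS and hadoop.tmp.dir are standard Hadoop property names, but the values are assumptions and not necessarily the configuration used in this walkthrough; port 9000 in particular is only a commonly chosen value.

```shell
# Write an illustrative core-site.xml to a scratch directory (not to etc/hadoop).
core_dir="$(mktemp -d)"
cat > "$core_dir/core-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <!-- Default file system URI: the NameNode host; port 9000 is an assumed value -->
  <property>
    <name>fs.defaultFS</name>
    <value>hdfs://tiandong63:9000</value>
  </property>
  <!-- Base directory for Hadoop temporary files (the tmp directory created earlier) -->
  <property>
    <name>hadoop.tmp.dir</name>
    <value>/home/hadoop/tmp</value>
  </property>
</configuration>
EOF
echo "wrote $core_dir/core-site.xml"
```

After review, the property elements could be merged into /home/hadoop/hadoop-2.7.7/etc/hadoop/core-site.xml.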

[hadoop@tiandong63 hadoop]$ vim hdfs-site.xml

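This is the HDFS configuration file. dfs.http.address sets the address of the HDFS HTTP (web) interface, and dfs.replication sets the number of replicas kept for each file block, which should generally not exceed the number of worker (DataNode) hosts.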

[hdfs-site.xml listing (lines 19-43 of the file): contents not preserved]
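
To make the two settings described for hdfs-site.xml concrete, here is a hedged sketch that writes an illustrative file into a scratch directory. The directory values reuse the dfs/name and dfs/data paths created earlier, and the replication factor of 2 matches the two DataNodes; the HTTP port 50070 and the use of dfs.namenode.http-address (the newer name for dfs.http.address in Hadoop 2.x) are assumptions, not the original configuration.

```shell
# Write an illustrative hdfs-site.xml to a scratch directory (not to etc/hadoop).
hdfs_dir="$(mktemp -d)"
cat > "$hdfs_dir/hdfs-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <!-- Where the NameNode keeps its metadata -->
  <property>
    <name>dfs.namenode.name.dir</name>
    <value>file:/home/hadoop/dfs/name</value>
  </property>
  <!-- Where each DataNode keeps its blocks -->
  <property>
    <name>dfs.datanode.data.dir</name>
    <value>file:/home/hadoop/dfs/data</value>
  </property>
  <!-- Two replicas per block, one for each of the two DataNodes -->
  <property>
    <name>dfs.replication</name>
    <value>2</value>
  </property>
  <!-- NameNode web interface; 50070 is the usual Hadoop 2.x port (assumed here) -->
  <property>
    <name>dfs.namenode.http-address</name>
    <value>tiandong63:50070</value>
  </property>
</configuration>
EOF
echo "wrote $hdfs_dir/hdfs-site.xml"
```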

[hadoop@tiandong63 hadoop]$ vim mapred-site.xml

[mapred-site.xml listing (lines 19-34 of the file): contents not preserved]
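
For mapred-site.xml, the setting that matters most in a YARN-based cluster is usually mapreduce.framework.name. The sketch below writes an illustrative file into a scratch directory; it is an assumption about a typical minimal setup, not the original file. Note that Hadoop 2.x releases usually ship only mapred-site.xml.template, so the real file is commonly created by copying that template first.

```shell
# Write an illustrative mapred-site.xml to a scratch directory (not to etc/hadoop).
mapred_dir="$(mktemp -d)"
cat > "$mapred_dir/mapred-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <!-- Run MapReduce jobs on YARN instead of the local job runner -->
  <property>
    <name>mapreduce.framework.name</name>
    <value>yarn</value>
  </property>
</configuration>
EOF
echo "wrote $mapred_dir/mapred-site.xml"
```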

[hadoop@tiandong63 hadoop]$ vim yarn-site.xml    # YARN framework configuration, mainly the addresses where its services start

[yarn-site.xml listing (lines 15-52 of the file): contents not preserved]
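
A minimal yarn-site.xml for this layout would typically name the ResourceManager host and enable the MapReduce shuffle service on the NodeManagers. The sketch below writes such a file into a scratch directory. Both property names are standard YARN settings, but this two-property file is an assumption and is much shorter than the original, which spanned far more lines.

```shell
# Write an illustrative yarn-site.xml to a scratch directory (not to etc/hadoop).
yarn_dir="$(mktemp -d)"
cat > "$yarn_dir/yarn-site.xml" <<'EOF'
<?xml version="1.0" encoding="UTF-8"?>
<configuration>
  <!-- Host that runs the ResourceManager; the other RM addresses derive from it -->
  <property>
    <name>yarn.resourcemanager.hostname</name>
    <value>tiandong63</value>
  </property>
  <!-- Auxiliary service the NodeManagers need for the MapReduce shuffle phase -->
  <property>
    <name>yarn.nodemanager.aux-services</name>
    <value>mapreduce_shuffle</value>
  </property>
</configuration>
EOF
echo "wrote $yarn_dir/yarn-site.xml"
```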

Copy the installation to the other DataNode hosts (192.168.199.4 and 192.168.199.5):

[hadoop@tiandong63 ~]$ scp -r /home/hadoop/hadoop-2.7.7 hadoop@tiandong64:/home/hadoop/

[hadoop@tiandong63 ~]$ scp -r /home/hadoop/hadoop-2.7.7 hadoop@tiandong65:/home/hadoop/
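
With more workers, the copy step could be driven from the slaves file instead of being typed once per host. This is only a sketch: sync_hadoop and DRY_RUN are hypothetical names, and by default the function prints the scp commands rather than running them. The demo reads a scratch copy of the slaves list.

```shell
# sync_hadoop is a hypothetical helper: read worker hostnames from a slaves-style
# file and copy the Hadoop directory to each of them. With DRY_RUN=1 (the default
# here) it only prints the commands.
sync_hadoop() {
    slaves_file="$1"
    src="/home/hadoop/hadoop-2.7.7"
    while IFS= read -r host; do
        # skip blank lines and comment lines
        case "$host" in ''|\#*) continue ;; esac
        if [ "${DRY_RUN:-1}" = "1" ]; then
            echo "scp -r $src hadoop@${host}:/home/hadoop/"
        else
            # redirect stdin so scp cannot swallow the rest of the host list
            scp -r "$src" "hadoop@${host}:/home/hadoop/" < /dev/null
        fi
    done < "$slaves_file"
}

# Demonstrate with a scratch slaves file holding the two workers.
demo_slaves="$(mktemp)"
printf '%s\n' tiandong64 tiandong65 > "$demo_slaves"
sync_hadoop "$demo_slaves"
```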

III. Starting Hadoop

Start it on tiandong63 (as the hadoop user).

1. Format the NameNode:

[hadoop@tiandong63 ~]$ cd /home/hadoop/hadoop-2.7.7/bin/

[hadoop@tiandong63 bin]$ ./hdfs namenode -format

2. Start HDFS:

[hadoop@tiandong63 ~]$ /home/hadoop/hadoop-2.7.7/sbin/start-dfs.sh

Check the processes (on tiandong63, look for the NameNode):

[hadoop@tiandong63 ~]$ ps -axu | grep namenode --color

Warning: bad syntax, perhaps a bogus '-'? See /usr/share/doc/procps-3.2.8/FAQ

hadoop 42851 0.4 17.6 2762196 177412 ? Sl 18:13 1:26 /usr/local/src/jdk1.8.0_191/bin/java -Dproc_namenode -Xmx1000m -Djava.net.preferIPv4Stack=true -Dhadoop.log.dir=/home/hadoop/hadoop-2.7.7/logs -Dhadoop.log.file=hadoop.log -Dhadoop.home.dir=/home/hadoop/hadoop-2.7.7 -Dhadoop.id.str=hadoop -Dhadoop.root.logger=INFO,console -Djava.library.path=/home/hadoop/hadoop-2.7.7/lib/native -Dhadoop.policy.file=hadoop-policy.xml -Djava.net.preferIPv4Stack=true -Djava.net.preferIPv4Stack=true -Djava.net.preferIPv4Stack=true -Dhadoop.log.dir=/home/hadoop/hadoop-2.7.7/logs -Dhadoop.log.file=hadoop-hadoop-namenode-tiandong63.log -Dhadoop.home.dir=/home/hadoop/hadoop-2.7.7 -Dhadoop.id.str=hadoop -Dhadoop.root.logger=INFO,RFA -Djava.library.path=/home/hadoop/hadoop-2.7.7/lib/native -Dhadoop.policy.file=hadoop-policy.xml -Djava.net.preferIPv4Stack=true -Dhadoop.security.logger=INFO,RFAS -Dhdfs.audit.logger=INFO,NullAppender -Dhadoop.security.logger=INFO,RFAS -Dhdfs.audit.logger=INFO,NullAppender -Dhadoop.security.logger=INFO,RFAS -Dhdfs.audit.logger=INFO,NullAppender -Dhadoop.security.logger=INFO,RFAS org.apache.hadoop.hdfs.server.namenode.NameNode

hadoop 43046 0.2 13.3 2733336 134344 ? Sl 18:13 0:35 /usr/local/src/jdk1.8.0_191/bin/java -Dproc_secondarynamenode -Xmx1000m -Djava.net.preferIPv4Stack=true -Dhadoop.log.dir=/home/hadoop/hadoop-2.7.7/logs -Dhadoop.log.file=hadoop.log -Dhadoop.home.dir=/home/hadoop/hadoop-2.7.7 -Dhadoop.id.str=hadoop -Dhadoop.root.logger=INFO,console -Djava.library.path=/home/hadoop/hadoop-2.7.7/lib/native -Dhadoop.policy.file=hadoop-policy.xml -Djava.net.preferIPv4Stack=true -Djava.net.preferIPv4Stack=true -Djava.net.preferIPv4Stack=true -Dhadoop.log.dir=/home/hadoop/hadoop-2.7.7/logs -Dhadoop.log.file=hadoop-hadoop-secondarynamenode-tiandong63.log -Dhadoop.home.dir=/home/hadoop/hadoop-2.7.7 -Dhadoop.id.str=hadoop -Dhadoop.root.logger=INFO,RFA -Djava.library.path=/home/hadoop/hadoop-2.7.7/lib/native -Dhadoop.policy.file=hadoop-policy.xml -Djava.net.preferIPv4Stack=true -Dhadoop.security.logger=INFO,RFAS -Dhdfs.audit.logger=INFO,NullAppender -Dhadoop.security.logger=INFO,RFAS -Dhdfs.audit.logger=INFO,NullAppender -Dhadoop.security.logger=INFO,RFAS -Dhdfs.audit.logger=INFO,NullAppender -Dhadoop.security.logger=INFO,RFAS org.apache.hadoop.hdfs.server.namenode.SecondaryNameNode

Check the processes on tiandong64 and tiandong65 (DataNode):

[root@tiandong64 ~]# ps -aux|grep datanode

Warning: bad syntax, perhaps a bogus '-'? See /usr/share/doc/procps-3.2.8/FAQ

hadoop 3938 0.3 12.2 2757576 122592 ? Sl 18:13 1:04 /usr/local/src/jdk1.8.0_191/bin/java -Dproc_datanode -Xmx1000m -Djava.net.preferIPv4Stack=true -Dhadoop.log.dir=/home/hadoop/hadoop-2.7.7/logs -Dhadoop.log.file=hadoop.log -Dhadoop.home.dir=/home/hadoop/hadoop-2.7.7 -Dhadoop.id.str=hadoop -Dhadoop.root.logger=INFO,console -Djava.library.path=/home/hadoop/hadoop-2.7.7/lib/native -Dhadoop.policy.file=hadoop-policy.xml -Djava.net.preferIPv4Stack=true -Djava.net.preferIPv4Stack=true -Djava.net.preferIPv4Stack=true -Dhadoop.log.dir=/home/hadoop/hadoop-2.7.7/logs -Dhadoop.log.file=hadoop-hadoop-datanode-tiandong64.log -Dhadoop.home.dir=/home/hadoop/hadoop-2.7.7 -Dhadoop.id.str=hadoop -Dhadoop.root.logger=INFO,RFA -Djava.library.path=/home/hadoop/hadoop-2.7.7/lib/native -Dhadoop.policy.file=hadoop-policy.xml -Djava.net.preferIPv4Stack=true -server -Dhadoop.security.logger=ERROR,RFAS -Dhadoop.security.logger=ERROR,RFAS -Dhadoop.security.logger=ERROR,RFAS -Dhadoop.security.logger=INFO,RFAS org.apache.hadoop.hdfs.server.datanode.DataNode

3. Start YARN (on tiandong63; this starts the distributed computing layer):

[hadoop@tiandong63 ~]$ /home/hadoop/hadoop-2.7.7/sbin/start-yarn.sh

Check the process on tiandong63 (ResourceManager):

[hadoop@tiandong63 ~]$ ps -ef|grep resourcemanager --color

hadoop 43196 1 0 18:14 pts/1 00:02:55 /usr/local/src/jdk1.8.0_191/bin/java -Dproc_resourcemanager -Xmx1000m -Dhadoop.log.dir=/home/hadoop/hadoop-2.7.7/logs -Dyarn.log.dir=/home/hadoop/hadoop-2.7.7/logs -Dhadoop.log.file=yarn-hadoop-resourcemanager-tiandong63.log -Dyarn.log.file=yarn-hadoop-resourcemanager-tiandong63.log -Dyarn.home.dir= -Dyarn.id.str=hadoop -Dhadoop.root.logger=INFO,RFA -Dyarn.root.logger=INFO,RFA -Djava.library.path=/home/hadoop/hadoop-2.7.7/lib/native -Dyarn.policy.file=hadoop-policy.xml -Dhadoop.log.dir=/home/hadoop/hadoop-2.7.7/logs -Dyarn.log.dir=/home/hadoop/hadoop-2.7.7/logs -Dhadoop.log.file=yarn-hadoop-resourcemanager-tiandong63.log -Dyarn.log.file=yarn-hadoop-resourcemanager-tiandong63.log -Dyarn.home.dir=/home/hadoop/hadoop-2.7.7 -Dhadoop.home.dir=/home/hadoop/hadoop-2.7.7 -Dhadoop.root.logger=INFO,RFA -Dyarn.root.logger=INFO,RFA -Djava.library.path=/home/hadoop/hadoop-2.7.7/lib/native -classpath /home/hadoop/hadoop-2.7.7/etc/hadoop:/home/hadoop/hadoop-2.7.7/etc/hadoop:/home/hadoop/hadoop-2.7.7/etc/hadoop:/home/hadoop/hadoop-2.7.7/share/hadoop/common/lib/*:/home/hadoop/hadoop-2.7.7/share/hadoop/common/*:/home/hadoop/hadoop-2.7.7/share/hadoop/hdfs:/home/hadoop/hadoop-2.7.7/share/hadoop/hdfs/lib/*:/home/hadoop/hadoop-2.7.7/share/hadoop/hdfs/*:/home/hadoop/hadoop-2.7.7/share/hadoop/yarn/lib/*:/home/hadoop/hadoop-2.7.7/share/hadoop/yarn/*:/home/hadoop/hadoop-2.7.7/share/hadoop/mapreduce/lib/*:/home/hadoop/hadoop-2.7.7/share/hadoop/mapreduce/*:/contrib/capacity-scheduler/*.jar:/contrib/capacity-scheduler/*.jar:/contrib/capacity-scheduler/*.jar:/contrib/capacity-scheduler/*.jar:/home/hadoop/hadoop-2.7.7/share/hadoop/yarn/*:/home/hadoop/hadoop-2.7.7/share/hadoop/yarn/lib/*:/home/hadoop/hadoop-2.7.7/etc/hadoop/rm-config/log4j.properties org.apache.hadoop.yarn.server.resourcemanager.ResourceManager

Check the processes on tiandong64 and tiandong65 (NodeManager):

[root@tiandong64 ~]# ps -aux|grep nodemanager --color

Warning: bad syntax, perhaps a bogus '-'? See /usr/share/doc/procps-3.2.8/FAQ

hadoop 4048 0.5 15.7 2802552 158380 ? Sl 18:14 1:42 /usr/local/src/jdk1.8.0_191/bin/java -Dproc_nodemanager -Xmx1000m -Dhadoop.log.dir=/home/hadoop/hadoop-2.7.7/logs -Dyarn.log.dir=/home/hadoop/hadoop-2.7.7/logs -Dhadoop.log.file=yarn-hadoop-nodemanager-tiandong64.log -Dyarn.log.file=yarn-hadoop-nodemanager-tiandong64.log -Dyarn.home.dir= -Dyarn.id.str=hadoop -Dhadoop.root.logger=INFO,RFA -Dyarn.root.logger=INFO,RFA -Djava.library.path=/home/hadoop/hadoop-2.7.7/lib/native -Dyarn.policy.file=hadoop-policy.xml -server -Dhadoop.log.dir=/home/hadoop/hadoop-2.7.7/logs -Dyarn.log.dir=/home/hadoop/hadoop-2.7.7/logs -Dhadoop.log.file=yarn-hadoop-nodemanager-tiandong64.log -Dyarn.log.file=yarn-hadoop-nodemanager-tiandong64.log -Dyarn.home.dir=/home/hadoop/hadoop-2.7.7 -Dhadoop.home.dir=/home/hadoop/hadoop-2.7.7 -Dhadoop.root.logger=INFO,RFA -Dyarn.root.logger=INFO,RFA -Djava.library.path=/home/hadoop/hadoop-2.7.7/lib/native -classpath /home/hadoop/hadoop-2.7.7/etc/hadoop:/home/hadoop/hadoop-2.7.7/etc/hadoop:/home/hadoop/hadoop-2.7.7/etc/hadoop:/home/hadoop/hadoop-2.7.7/share/hadoop/common/lib/*:/home/hadoop/hadoop-2.7.7/share/hadoop/common/*:/home/hadoop/hadoop-2.7.7/share/hadoop/hdfs:/home/hadoop/hadoop-2.7.7/share/hadoop/hdfs/lib/*:/home/hadoop/hadoop-2.7.7/share/hadoop/hdfs/*:/home/hadoop/hadoop-2.7.7/share/hadoop/yarn/lib/*:/home/hadoop/hadoop-2.7.7/share/hadoop/yarn/*:/home/hadoop/hadoop-2.7.7/share/hadoop/mapreduce/lib/*:/home/hadoop/hadoop-2.7.7/share/hadoop/mapreduce/*:/contrib/capacity-scheduler/*.jar:/contrib/capacity-scheduler/*.jar:/home/hadoop/hadoop-2.7.7/share/hadoop/yarn/*:/home/hadoop/hadoop-2.7.7/share/hadoop/yarn/lib/*:/home/hadoop/hadoop-2.7.7/etc/hadoop/nm-config/log4j.properties org.apache.hadoop.yarn.server.nodemanager.NodeManager

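Note: the two scripts start-dfs.sh and start-yarn.sh can be replaced by start-all.sh, although Hadoop 2.x marks start-all.sh as deprecated.

4. Check the status of the HDFS distributed file system: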

[hadoop@tiandong63 ~]$ /home/hadoop/hadoop-2.7.7/bin/hdfs dfsadmin -report

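IV. Basic Hadoop usage

Run a Hadoop compute job: the word count example.

Create a directory on HDFS (-p also creates the parent /test if it does not exist yet):

[hadoop@tiandong63 ~]$ /home/hadoop/hadoop-2.7.7/bin/hadoop fs -mkdir -p /test/input

List the directory: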

[hadoop@tiandong63 ~]$ /home/hadoop/hadoop-2.7.7/bin/hadoop fs -ls /test/

drwxr-xr-x - hadoop supergroup 0 2018-12-30 21:40 /test/input

Upload a file:

First create a file to run the computation on:

[hadoop@tiandong63 ~]$ more file1.txt

welcome to beijing

my name is thunder

what is your name

Upload it to the /test/input directory on HDFS:

[hadoop@tiandong63 ~]$ /home/hadoop/hadoop-2.7.7/bin/hadoop fs -put /home/hadoop/file1.txt /test/input

Check that the upload succeeded:

[hadoop@tiandong63 ~]$ /home/hadoop/hadoop-2.7.7/bin/hadoop fs -ls /test/input

Found 1 items

-rw-r--r-- 2 hadoop supergroup 56 2018-12-30 21:40 /test/input/file1.txt

Run the job:

[hadoop@tiandong63 ~]$ /home/hadoop/hadoop-2.7.7/bin/hadoop jar /home/hadoop/hadoop-2.7.7/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.7.7.jar wordcount /test/input /test/output

View the result:

[hadoop@tiandong63 ~]$ /home/hadoop/hadoop-2.7.7/bin/hadoop fs -cat /test/output/part-r-00000

beijing 1

is 2

my 1

name 2

thunder 1

to 1

welcome 1

what 1

your 1
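
As a sanity check, the same counts can be reproduced locally without Hadoop. The sketch below recreates the three-line sample file in a scratch location and counts whitespace-separated words with awk; local_wordcount is a hypothetical helper name. For this input the output should match the word counts listed above.

```shell
# Recreate the sample input in a scratch file.
sample="$(mktemp)"
printf '%s\n' 'welcome to beijing' 'my name is thunder' 'what is your name' > "$sample"

# local_wordcount is a hypothetical helper: count each whitespace-separated word
# and print "word<TAB>count", sorted by word in byte order.
local_wordcount() {
    awk '{ for (i = 1; i <= NF; i++) count[$i]++ }
         END { for (w in count) printf "%s\t%d\n", w, count[w] }' "$1" | LC_ALL=C sort
}

local_wordcount "$sample"
```

This mirrors what the wordcount job does conceptually: the map phase emits each word, and the reduce phase sums the occurrences per word.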