ELK Cluster Setup (Basic Tutorial)

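Contents

- Machine preparation
- Install Elasticsearch on every machine in the cluster
- Collecting Nginx JSON logs with ELK
- Collecting Nginx access and error logs with ELK
- Collecting Tomcat logs with ELK
- Collecting docker logs with ELK
- Collecting logs with modules
- Using redis as a buffer for log collection
- Using Kafka as a buffer for log collection
- Install zookeeper (required on all three machines)
- Install and deploy Kafka (note: configure every node; the IP differs per node)
- Edit the filebeat configuration file
- Edit the logstash configuration file
- Error handling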

Machine preparation

| IP address | Hostname |
|---|---|
| 10.20.1.114 | node01 |
| 10.20.1.115 | node02 |
| 10.20.1.116 | node03 |

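Install Elasticsearch on every machine in the cluster

1. Download the Elasticsearch installation package

Official download page: https://www.elastic.co/cn/downloads/past-releases#elasticsearch

2. Install Elasticsearch (run on every machine)

# Upload the installation package to /data/soft

# Install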

rpm -ivh elasticsearch-6.4.2.rpm

# List the Elasticsearch configuration files

rpm -qc elasticsearch

# Edit elasticsearch.yml

vi /etc/elasticsearch/elasticsearch.yml

# Show the active (uncommented) settings

grep "^[a-zA-Z]" /etc/elasticsearch/elasticsearch.yml

node.name: node03 # node name; must be unique within the cluster

path.data: /data/elasticsearch # ES data directory; create it beforehand

path.logs: /var/log/elasticsearch # log directory; the log file is named after the cluster, e.g. es-app.log

bootstrap.memory_lock: true # lock the process memory

network.host: 10.20.xx.xx # address to bind and listen on

http.port: 9200 # default HTTP port

# Create the ES data directory

mkdir /data/elasticsearch -p

# Give ownership of the data directory to the elasticsearch user

chown -R elasticsearch:elasticsearch /data/elasticsearch/

### Fix for the memory-lock failure (memory locking requested for elasticsearch process but memory is not locked):

See the official documentation:

https://www.elastic.co/guide/en/elasticsearch/reference/6.6/setup-configuration-memory.html

https://www.elastic.co/guide/en/elasticsearch/reference/6.6/setting-system-settings.html#sysconfig

# Edit the systemd override file for the service

systemctl edit elasticsearch

# Add the following to the override file and save it

[Service]

LimitMEMLOCK=infinity

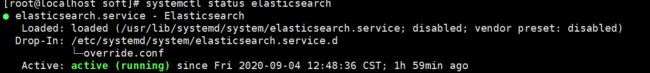

# Start the elasticsearch service and check that the ES port is listening

systemctl start elasticsearch.service

systemctl status elasticsearch.service

netstat -natp | grep 9200

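# In elasticsearch.yml, uncomment and set the following cluster parameters

cluster.name: es-app # cluster name; identical on every node of the same cluster

discovery.zen.ping.unicast.hosts: ["10.20.1.114", "10.20.1.115", "10.20.1.116"] # hosts used for cluster discovery

discovery.zen.minimum_master_nodes: 2 # master election quorum; formula: (number of master-eligible nodes / 2) + 1, using integer division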
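The quorum formula for discovery.zen.minimum_master_nodes can be checked with a little shell arithmetic. This is a minimal sketch: `min_master_nodes` is a hypothetical helper name (not part of Elasticsearch), and it assumes every node in the cluster is master-eligible.

```shell
#!/usr/bin/env bash
# Hypothetical helper: compute discovery.zen.minimum_master_nodes
# from the number of master-eligible nodes (integer division).
min_master_nodes() {
  local nodes="$1"
  echo $(( nodes / 2 + 1 ))
}

# The three-node cluster used in this tutorial prints 2.
min_master_nodes 3
```

With three nodes the result matches the value 2 used in the configuration; a five-node cluster would need 3.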

Create some indices and data in the cluster

Create an index

[root@master elasticsearch]# curl -XPUT '10.20.1.114:9200/vipinfo?pretty'

{

"acknowledged" : true,

"shards_acknowledged" : true,

"index" : "vipinfo"

}

Insert document data

curl -XPUT '10.20.1.114:9200/vipinfo/user/1?pretty' -H 'Content-Type: application/json' -d'

{

"first_name" : "John",

"last_name": "Smith",

"age" : 25,

"about" : "I love to go rock climbing", "interests": [ "sports", "music" ]

}

'

curl -XPUT '10.20.1.114:9200/vipinfo/user/2?pretty' -H 'Content-Type: application/json' -d' {

"first_name": "Jane",

"last_name" : "Smith",

"age" : 32,

"about" : "I like to collect rock albums", "interests": [ "music" ]

}'

# Check the cluster status (lists the nodes that have joined)

curl -XGET 'http://10.20.1.114:9200/_cat/nodes?human&pretty'
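For a scripted version of the node check, one option is to count the lines that `_cat/nodes` returns, since it prints one node per line. The sketch below is only an illustration: `expect_nodes` is a hypothetical helper, and the usage line assumes node01 is reachable at the address used in this tutorial.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed only if the _cat/nodes listing on stdin
# contains the expected number of nodes (one non-empty line per node).
expect_nodes() {
  local expected="$1" actual
  # grep -c exits non-zero when it counts 0 lines, so ignore its status
  actual=$(grep -c '[^ ]' || true)
  [ "$actual" -eq "$expected" ]
}

# Usage against the live cluster (assumption: node01 answers on port 9200):
#   curl -s 'http://10.20.1.114:9200/_cat/nodes' | expect_nodes 3 && echo "all nodes joined"
```

The helper only counts lines; it does not verify which node was elected master.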

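4. Install the Elasticsearch-head plugin (visualization plugin)

Open the Chrome Web Store: https://chrome.google.com/webstore/category/extensions

Search for: elasticsearch-head

Add it to the browser.

# Install the kibana-6.6.0-x86_64.rpm package (Kibana should normally run the same version as Elasticsearch)

rpm -ivh kibana-6.6.0-x86_64.rpm

# Edit the Kibana configuration file; the active settings are:

[root@master ~]# grep "^[a-zA-Z]" /etc/kibana/kibana.yml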

server.port: 5601

server.host: "10.20.1.114"

server.name: "node01"

elasticsearch.hosts: ["http://10.20.1.114:9200"]

kibana.index: ".kibana"

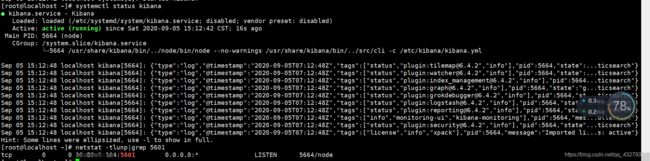

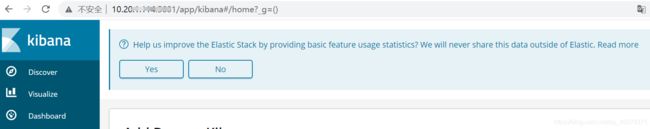

# Start Kibana, check the service status, and open the UI through the server IP

systemctl start kibana.service

systemctl status kibana.service

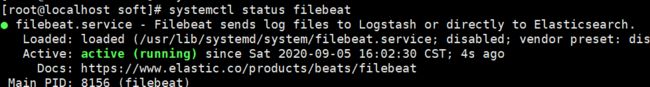

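# Install the filebeat package

rpm -ivh filebeat-6.4.2-x86_64.rpm

# List the filebeat configuration files

rpm -qc filebeat

# Start the filebeat service and check its status

systemctl start filebeat.service

systemctl status filebeat.service

# If filebeat fails to start without logging an error, run it in the foreground to see the error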

/usr/share/filebeat/bin/filebeat -c /etc/filebeat/filebeat.yml

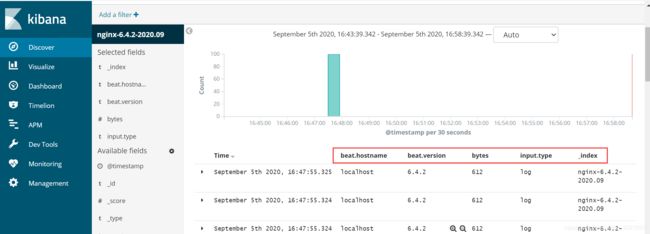

ELK收集Nginx的json日志

思路:1、将nginx中的日志以json格式记录

2、filebeat采的时候说明是json格式

3、传入es的日志为json,那么显示在kibana的格式也是json,便于日志管理

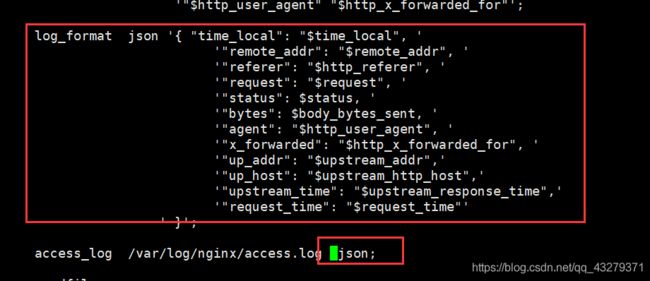

1、配置nginx的日志以json格式记录

#修改/etc/nginx/nginx.conf配置文件,加入以下内容,yml文件注意缩进

log_format json '{ "time_local": "$time_local", '

'"remote_addr": "$remote_addr", '

'"referer": "$http_referer", '

'"request": "$request", '

'"status": $status, '

'"bytes": $body_bytes_sent, '

'"agent": "$http_user_agent", '

'"x_forwarded": "$http_x_forwarded_for", '

'"up_addr": "$upstream_addr",'

'"up_host": "$upstream_http_host",'

'"upstream_time": "$upstream_response_time",'

'"request_time": "$request_time"'

' }';

access_log /var/log/nginx/access.log json;

# Restart nginx

systemctl restart nginx.service

# Run the load test again and check that the nginx log now records JSON key-value pairs

ab -n 100 -c 100 http://10.20.1.114/

tail -f /var/log/nginx/access.log
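Instead of eyeballing the tail output, a quick structural check can flag lines that are still in the old format. This is a rough sketch, not a JSON validator: `count_non_json_lines` is a hypothetical helper that only tests whether each line starts with `{` and ends with `}`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: count lines on stdin that do not look like a
# single JSON object (a structural check only, not a full JSON parse).
count_non_json_lines() {
  awk '!/^[ \t]*[{].*[}][ \t\r]*$/ { bad++ } END { print bad + 0 }'
}

# Usage: a result of 0 suggests the recent entries use the json log_format.
#   tail -n 100 /var/log/nginx/access.log | count_non_json_lines
```

Entries written before the restart will still be counted, so check only lines produced by the new load test.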

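2. Tell filebeat that the input is JSON

# Back up the filebeat configuration file to /root

[root@master soft]# cp /etc/filebeat/filebeat.yml /root/

# Write the new configuration file (YAML is indentation-sensitive)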

cat > /etc/filebeat/filebeat.yml <<EOF
filebeat.inputs:                      # inputs
- type: log                           # input type
  enabled: true
  paths:                              # paths that filebeat collects
    - /var/log/nginx/access.log
  # declare that the input log is JSON
  json.keys_under_root: true
  json.overwrite_keys: true

setup.kibana:
  host: "10.20.1.114:5601"

output.elasticsearch:                 # output
  hosts: ["10.20.1.114:9200"]
  index: "nginx-%{[beat.version]}-%{+yyyy.MM}"

setup.template.name: "nginx"
setup.template.pattern: "nginx-*"
setup.template.enabled: false
setup.template.overwrite: true
EOF
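To see what the index pattern `nginx-%{[beat.version]}-%{+yyyy.MM}` expands to, the sketch below rebuilds the name by hand. It rests on assumptions: `nginx_index_name` is a hypothetical helper, it relies on GNU `date` (as shipped on CentOS), and it assumes the date part is taken from the event timestamp in UTC.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the index name that the pattern
#   "nginx-%{[beat.version]}-%{+yyyy.MM}"
# would resolve to for a filebeat version and an event time
# given in epoch seconds (interpreted as UTC). Requires GNU date.
nginx_index_name() {
  local version="$1" epoch="$2"
  printf 'nginx-%s-%s\n' "$version" "$(date -u -d "@${epoch}" +%Y.%m)"
}

# An event from 2020-09-06 09:46:56 UTC shipped by filebeat 6.4.2
nginx_index_name 6.4.2 1599385616
```

Because the pattern only goes down to the month, all events from the same month and filebeat version end up in one index.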

Collecting Nginx access and error logs with ELK

# Edit the filebeat configuration file /etc/filebeat/filebeat.yml and add the following

# filebeat input configuration
filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/nginx/access.log
  # the input is JSON
  json.keys_under_root: true
  json.overwrite_keys: true
  # tag access-log events with "access"
  tags: ["access"]
- type: log
  enabled: true
  paths:
    - /var/log/nginx/error.log
  # tag error-log events with "error"
  tags: ["error"]

setup.kibana:
  host: "10.20.1.114:5601"

# filebeat output configuration
output.elasticsearch:
  hosts: ["10.20.1.114:9200"]
  #index: "nginx-%{[beat.version]}-%{+yyyy.MM}"
  indices:
    # events tagged "access" go to this index
    - index: "nginx-access-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        tags: "access"
    # events tagged "error" go to this index
    - index: "nginx-error-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        tags: "error"

setup.template.name: "nginx"
setup.template.pattern: "nginx-*"
setup.template.enabled: false
setup.template.overwrite: true

# Save and exit, restart filebeat, then rerun the load test (request a page that does not exist) to see both indices

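Collecting Tomcat logs with ELK

# Install tomcat, start it, and test access (1. check that the default port 8080 is listening; 2. open IP:8080 in a browser and check that the default Tomcat page appears)

yum install tomcat tomcat-webapps tomcat-admin-webapps tomcat-docs-webapp tomcat-javadoc -y

systemctl start tomcat

# Change the tomcat access log to JSON format

vim /etc/tomcat/server.xml

## Delete line 139

139 pattern="%h %l %u %t &quot;%r&quot; %s %b" />

## Put the following on line 139 instead

pattern="{&quot;clientip&quot;:&quot;%h&quot;,&quot;ClientUser&quot;:&quot;%l&quot;,&quot;authenticated&quot;:&quot;%u&quot;,&quot;AccessTime&quot;:&quot;%t&quot;,&quot;method&quot;:&quot;%r&quot;,&quot;status&quot;:&quot;%s&quot;,&quot;SendBytes&quot;:&quot;%b&quot;,&quot;Query?string&quot;:&quot;%q&quot;,&quot;partner&quot;:&quot;%{Referer}i&quot;,&quot;AgentVersion&quot;:&quot;%{User-Agent}i&quot;}"/>

## Save and exit, restart the service, and check the log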

systemctl restart tomcat

tail -f /var/log/tomcat/localhost_access_log.2020-09-05.txt

# Edit filebeat.yml

vim /etc/filebeat/filebeat.yml

# Add the following under filebeat.inputs:

##############tomcat##############
- type: log
  enabled: true
  paths:
    - /var/log/tomcat/localhost_access_log.*.txt
  json.keys_under_root: true
  json.overwrite_keys: true
  tags: ["tomcat"]

# Add the following under indices: in output.elasticsearch

    - index: "tomcat-access-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        tags: "tomcat"

# Restart the service to see the new index

systemctl restart filebeat.service

Collecting docker logs with ELK

Install docker

curl -o /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

wget -O /etc/yum.repos.d/docker-ce.repo https://mirrors.ustc.edu.cn/docker-ce/linux/centos/docker-ce.repo

sed -i 's#download.docker.com#mirrors.tuna.tsinghua.edu.cn/docker-ce#g' /etc/yum.repos.d/docker-ce.repo

yum install docker-ce -y

systemctl start docker

# Add an Aliyun registry mirror for faster pulls; you can get your own registry-mirrors address from the Aliyun console

tee /etc/docker/daemon.json <<-'EOF'

{

"registry-mirrors": ["https://76rdsfoe.mirror.aliyuncs.com"]

}

EOF

systemctl daemon-reload

systemctl restart docker

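# Log in to docker (Aliyun -> Container Registry -> Access Credentials; set a fixed password there and use it for docker login)

sudo docker login --username=jeffii registry.cn-hangzhou.aliyuncs.com

# Then enter the password you just set. If pulling the image below reports "connection refused", log in to docker again

# Pull the nginx image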

docker pull nginx

docker run --name nginx -p 80:80 -d nginx

docker ps

docker logs -f nginx

Configure filebeat to collect the logs of a single docker container

# Get the container ID (each directory name is a full container ID)

ls /var/lib/docker/containers/

# Edit filebeat.yml

filebeat.inputs:
- type: docker
  containers.ids:
    - 'e5e90adc1871e0689f5346fac071db90cb462941c8ff4444ceecaec5772bbafa'
  tags: ["docker-nginx"]

output.elasticsearch:
  hosts: ["10.20.1.114:9200"]
  index: "docker-nginx-%{[beat.version]}-%{+yyyy.MM}"

setup.template.name: "docker"
setup.template.pattern: "docker-*"
setup.template.enabled: false
setup.template.overwrite: true

Collecting logs with modules

Official reference:

https://www.elastic.co/guide/en/beats/filebeat/6.4/configuration-filebeat-modules.html

demo01: collect nginx logs

#1. Enable the module from the command line (note: filebeat.yml must contain the modules configuration)

filebeat modules enable nginx

#2. Edit the nginx module configuration of filebeat; the active settings should look like this

cat /etc/filebeat/modules.d/nginx.yml |egrep -v "#|^$"

- module: nginx
  access:
    enabled: true
    var.paths: ["/var/log/nginx/access.log"]
  error:
    enabled: true
    var.paths: ["/var/log/nginx/error.log"]

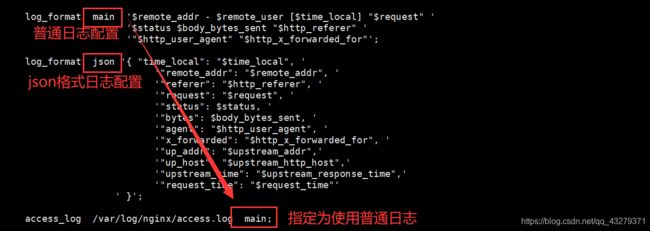

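#3. Switch the nginx log back to the default (non-JSON) format

# Truncate the existing access log

> /var/log/nginx/access.log

vi /etc/nginx/nginx.conf # set the access log back to the default format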

nginx -t

systemctl restart nginx

#4. Install two ES plugins

sudo bin/elasticsearch-plugin install ingest-user-agent

sudo bin/elasticsearch-plugin install ingest-geoip

Install with the full path (required on every machine in the cluster)

/usr/share/elasticsearch/bin/elasticsearch-plugin install ingest-user-agent

/usr/share/elasticsearch/bin/elasticsearch-plugin install ingest-geoip

# Edit the filebeat configuration file (listed below)

#5. Restart ES

systemctl restart elasticsearch

#6. Restart filebeat

systemctl restart filebeat

filebeat configuration file

filebeat.config.modules:
  path: ${path.config}/modules.d/*.yml
  reload.enabled: true
  reload.period: 10s

setup.kibana:
  host: "10.20.1.114:5601"

output.elasticsearch:
  hosts: ["10.20.1.114:9200"]
  indices:
    - index: "nginx-access-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        fileset.name: "access"
    - index: "nginx-error-%{[beat.version]}-%{+yyyy.MM}"
      when.contains:
        fileset.name: "error"

setup.template.name: "nginx"
setup.template.pattern: "nginx-*"
setup.template.enabled: false
setup.template.overwrite: true

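Using redis as a buffer for log collection

1. Install redis

# Configure the EPEL repository

wget -O /etc/yum.repos.d/epel.repo http://mirrors.aliyun.com/repo/epel-7.repo

# Install redis

yum install redis -y

# Edit the redis configuration file and set the bind address

vim /etc/redis.conf

bind 10.20.1.114

# Start redis

systemctl start redis

2. Edit the filebeat configuration file

# Switch the nginx log format back to JSON first

# Edit the main filebeat configuration file

vim /etc/filebeat/filebeat.yml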

filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/nginx/access.log
  json.keys_under_root: true
  json.overwrite_keys: true
  tags: ["access"]
- type: log
  enabled: true
  paths:
    - /var/log/nginx/error.log
  tags: ["error"]

output.redis:
  hosts: ["10.20.1.114"]
  keys:
    - key: "nginx_access"
      when.contains:
        tags: "access"
    - key: "nginx_error"
      when.contains:
        tags: "error"

setup.template.name: "nginx"
setup.template.pattern: "nginx_*"
setup.template.enabled: false
setup.template.overwrite: true

# Restart filebeat

systemctl restart filebeat

3. Check that redis receives the logs

# Connect to redis

redis-cli -h 10.20.1.114

# Inspect the keys and the queued entries

10.20.1.114:6379> keys *

1) "nginx_access"

10.20.1.114:6379> LLEN nginx_access

(integer) 18

10.20.1.114:6379> type nginx_access

list

10.20.1.114:6379> LRANGE nginx_access 1 18

1) "{\"@timestamp\":\"2020-09-06T09:46:56.057Z\",\"@metadata\":{\"beat\":\"filebeat\",\"type\":\"doc\",\"version\":\"6.4.2\"},\"source\":\"/var/log/nginx/access.log\",\"offset\":21682,\"tags\":[\"access\"],\"prospector\":{\"type\":\"log\"},\"beat\":{\"name\":\"localhost\",\"hostname\":\"localhost\",\"version\":\"6.4.2\"},\"json\":{},\"message\":\"192.168.88.102 - - [06/Sep/2020:17:46:50 +0800] \\\"GET / HTTP/1.1\\\" 304 0 \\\"-\\\" \\\"Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/84.0.4147.135 Safari/537.36\\\" \\\"-\\\"\",\"input\":{\"type\":\"log\"},\"host\":{\"name\":\"localhost\"}}"

4. Install logstash

# Enter the package directory

cd /data/soft

# Upload the logstash package and install it

rpm -ivh logstash-6.4.2.rpm

# Add a pipeline configuration file

cd /etc/logstash/conf.d

vim nginx_log.conf

input {
  # read access-log events from the nginx_access list
  redis {
    host => "10.20.1.114"
    port => "6379"
    db => "0"
    key => "nginx_access"
    data_type => "list"
  }
  # read error-log events from the nginx_error list
  redis {
    host => "10.20.1.114"
    port => "6379"
    db => "0"
    key => "nginx_error"
    data_type => "list"
  }
}

filter {
  # store the timing fields as numbers so Kibana can aggregate them
  mutate {
    convert => {
      "upstream_time" => "float"
      "request_time" => "float"
    }
  }
}

output {
  stdout {}
  if "access" in [tags] {
    elasticsearch {
      hosts => "http://10.20.1.114:9200"
      manage_template => false
      index => "nginx_access-%{+yyyy.MM}"
    }
  }
  if "error" in [tags] {
    elasticsearch {
      hosts => "http://10.20.1.114:9200"
      manage_template => false
      index => "nginx_error-%{+yyyy.MM}"
    }
  }
}

# Start logstash in the foreground

/usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/nginx_log.conf

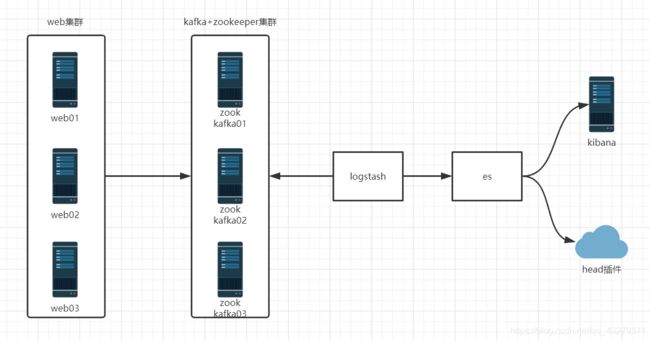

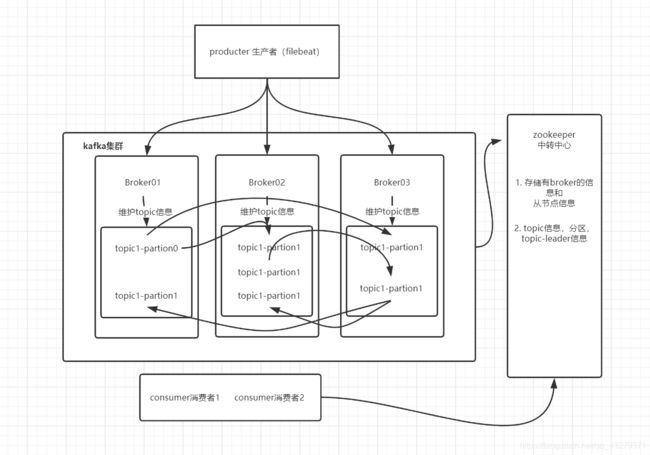

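Using Kafka as a buffer for log collection

Kafka is a distributed publish-subscribe messaging system. Its main components are:

zookeeper: stores metadata about consumers and brokers. It keeps the topic configuration, such as the topic list, the number of partitions of each topic, the replica locations, and the mapping between partitions and brokers. Topic access-control information is also maintained by zookeeper, and it records the relationship between message partitions and consumers.

broker: the buffering agent. The one or more servers of a Kafka cluster are called brokers, and they store the topics. One broker is elected controller, and zookeeper is responsible for that election. The controller manages all partitions and replicas of the cluster; for example, when the leader of a partition fails, the controller selects a new leader.

producer: connects directly to the brokers.

partition: a topic can be split into multiple partitions, and each partition is an ordered queue.

topic: a category of the message feeds that Kafka handles.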

Install zookeeper (required on all three machines)

# Upload the required packages

cd /data/soft

# Extract the archive and create a symlink

tar zxf zookeeper-3.4.11.tar.gz -C /opt/

ln -s /opt/zookeeper-3.4.11/ /opt/zookeeper

# Create a data directory

mkdir -p /data/zookeeper

# Copy zoo_sample.cfg to zoo.cfg

cp /opt/zookeeper/conf/zoo_sample.cfg /opt/zookeeper/conf/zoo.cfg

# Edit zoo.cfg

vim /opt/zookeeper/conf/zoo.cfg

# Heartbeat interval between servers, and between clients and servers
# tickTime is in milliseconds
tickTime=2000

# Number of ticks a follower (F) may take for its initial connection to the leader (L)
initLimit=10

# Maximum number of ticks allowed between a request and its reply between follower and leader
syncLimit=5

dataDir=/data/zookeeper

# Client connection port
clientPort=2181

# The three nodes, in the form server.<id>=<address>:<follower-leader port>:<election port>
server.1=10.20.1.114:2888:3888
server.2=10.20.1.115:2888:3888
server.3=10.20.1.116:2888:3888

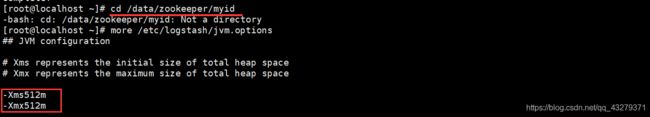

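Configure the host ID (note: every host needs its own myid, and it must match that host's server.N entry in zoo.cfg; the example below is for 10.20.1.114)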

echo "1" > /data/zookeeper/myid

cat /data/zookeeper/myid
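Since the myid value has to agree with the `server.N` lines in zoo.cfg, it can be derived from that file rather than typed by hand on each host. The following is a minimal sketch; `myid_for_ip` is a hypothetical helper, and it assumes the `server.N=address:port:port` layout shown in the zoo.cfg above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the myid (the N of "server.N") for the
# given address by looking it up in a zoo.cfg-style file.
myid_for_ip() {
  local cfg="$1" ip="$2"
  # Split "server.2=10.20.1.115:2888:3888" on "=" and ":" so that
  # $1 is "server.2" and $2 is the address.
  awk -F'[=:]' -v ip="$ip" '
    $1 ~ /^server\.[0-9]+$/ && $2 == ip {
      sub(/^server\./, "", $1)
      print $1
      exit
    }' "$cfg"
}

# Usage on node02 (assumption: this host is 10.20.1.115):
#   myid_for_ip /opt/zookeeper/conf/zoo.cfg 10.20.1.115 > /data/zookeeper/myid
```

The helper prints nothing when the address is not listed, so check the resulting file before starting zookeeper.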

Start zookeeper on all nodes

/opt/zookeeper/bin/zkServer.sh start

# Expected output

ZooKeeper JMX enabled by default

Using config: /opt/zookeeper/bin/../conf/zoo.cfg

Starting zookeeper ... STARTED

Check the node status

/opt/zookeeper/bin/zkServer.sh status

# Status on es01
ZooKeeper JMX enabled by default
Using config: /opt/zookeeper/bin/../conf/zoo.cfg
Mode: follower

# Status on es02
ZooKeeper JMX enabled by default
Using config: /opt/zookeeper/bin/../conf/zoo.cfg
Mode: leader

# Status on es03
ZooKeeper JMX enabled by default
Using config: /opt/zookeeper/bin/../conf/zoo.cfg
Mode: follower

# On one node, create a test znode

/opt/zookeeper/bin/zkCli.sh -server 10.20.1.114:2181

create /test "hello"

# On the other nodes, check that the znode is visible

/opt/zookeeper/bin/zkCli.sh -server 10.20.1.114:2181

get /test

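Install and deploy Kafka (note: configure every node; the IP differs per node)

# Steps on es01

# Upload the package

cd /data/soft/

# Extract the archive and create a symlink

tar zxf kafka_2.11-1.0.0.tgz -C /opt/

ln -s /opt/kafka_2.11-1.0.0/ /opt/kafka

# Create a log directory

mkdir /opt/kafka/logs

# Edit the configuration file

vim /opt/kafka/config/server.properties

# Broker id: an integer that must be unique within the cluster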

broker.id=1

listeners=PLAINTEXT://10.20.1.114:9092

log.dirs=/opt/kafka/logs

log.retention.hours=24

zookeeper.connect=10.20.1.114:2181,10.20.1.115:2181,10.20.1.116:2181
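Only `broker.id` and `listeners` change from node to node; the other settings stay the same. The sketch below prints the two node-specific lines so they can be compared against each host's server.properties. `broker_overrides` is a hypothetical helper, and pairing id 2 with 10.20.1.115 is an assumption of this example (the only requirement stated above is that ids are unique).

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the server.properties lines that differ
# per Kafka node, given a unique broker id and the node's address.
broker_overrides() {
  local id="$1" ip="$2"
  printf 'broker.id=%s\n' "$id"
  printf 'listeners=PLAINTEXT://%s:9092\n' "$ip"
}

# Example for the second node (assumed id 2):
broker_overrides 2 10.20.1.115
```

Running it for each node gives a quick reference when editing the three configuration files by hand.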

Test in the foreground that Kafka starts properly

/opt/kafka/bin/kafka-server-start.sh /opt/kafka/config/server.properties

Test creating a topic (note: zookeeper records a topic named kafkatest with 3 replicas and 3 partitions)

/opt/kafka/bin/kafka-topics.sh --create --zookeeper 10.20.1.114:2181,10.20.1.115:2181,10.20.1.116:2181 --partitions 3 --replication-factor 3 --topic kafkatest

# Output

Created topic "kafkatest".

Test describing the topic

/opt/kafka/bin/kafka-topics.sh --describe --zookeeper 10.20.1.114:2181,10.20.1.115:2181,10.20.1.116:2181 --topic kafkatest

# Output

Topic:kafkatest PartitionCount:3 ReplicationFactor:3 Configs:

Topic: kafkatest Partition: 0 Leader: 3 Replicas: 3,2,1 Isr: 3,2,1

Topic: kafkatest Partition: 1 Leader: 1 Replicas: 1,3,2 Isr: 1,3,2

Topic: kafkatest Partition: 2 Leader: 2 Replicas: 2,1,3 Isr: 2,1,3
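In the describe output, a partition is healthy when its Isr list is as long as its Replicas list. The sketch below automates that comparison; `under_replicated` is a hypothetical helper that assumes the whitespace-separated layout shown in the output above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kafka-topics.sh --describe` output on stdin
# and print the number of every partition whose in-sync replica list
# (Isr) is shorter than its assigned replica list (Replicas).
under_replicated() {
  awk '
    {
      part = ""; replicas = ""; isr = ""
      # each label is followed by its value in the next field
      for (i = 1; i < NF; i++) {
        if ($i == "Partition:") part = $(i + 1)
        if ($i == "Replicas:")  replicas = $(i + 1)
        if ($i == "Isr:")       isr = $(i + 1)
      }
      if (part != "" && split(isr, a, ",") < split(replicas, b, ","))
        print part
    }'
}

# Usage (assumes the zookeeper address used in this tutorial):
#   /opt/kafka/bin/kafka-topics.sh --describe --zookeeper 10.20.1.114:2181 --topic kafkatest | under_replicated
```

For the output shown above the helper prints nothing, because every partition lists three in-sync replicas.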

Test sending messages with the Kafka command-line tools

# Create a topic

/opt/kafka/bin/kafka-topics.sh --create --zookeeper 10.20.1.114:2181,10.20.1.115:2181,10.20.1.116:2181 --partitions 3 --replication-factor 3 --topic messagetest

# Test sending messages

/opt/kafka/bin/kafka-console-producer.sh --broker-list 10.20.1.114:9092,10.20.1.115:9092,10.20.1.116:9092 --topic messagetest

# Test receiving them on another node

/opt/kafka/bin/kafka-console-consumer.sh --zookeeper 10.20.1.114:2181,10.20.1.115:2181,10.20.1.116:2181 --topic messagetest --from-beginning

# List all topics

/opt/kafka/bin/kafka-topics.sh --list --zookeeper 10.20.1.114:2181,10.20.1.115:2181,10.20.1.116:2181

Once the tests pass, run Kafka in the background

/opt/kafka/bin/kafka-server-start.sh -daemon /opt/kafka/config/server.properties

Edit the filebeat configuration file

vim /etc/filebeat/filebeat.yml

filebeat.inputs:
- type: log
  enabled: true
  paths:
    - /var/log/nginx/access.log
  json.keys_under_root: true
  json.overwrite_keys: true
  tags: ["access"]
- type: log
  enabled: true
  paths:
    - /var/log/nginx/error.log
  tags: ["error"]

setup.kibana:
  host: "10.20.1.114:5601"

output.kafka:
  hosts: ["10.20.1.114:9092","10.20.1.115:9092","10.20.1.116:9092"]
  topic: elklog

# Restart filebeat

systemctl restart filebeat

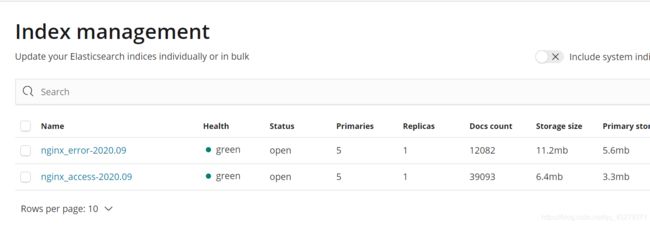

Edit the logstash configuration file

vim /etc/logstash/conf.d/kafka.conf

input {
  kafka {
    bootstrap_servers => "10.20.1.114:9092"
    topics => ["elklog"]
    group_id => "logstash"
    codec => "json"
  }
}

filter {
  # store the timing fields as numbers so Kibana can aggregate them
  mutate {
    convert => {
      "upstream_time" => "float"
      "request_time" => "float"
    }
  }
}

output {
  if "access" in [tags] {
    elasticsearch {
      hosts => "http://10.20.1.114:9200"
      manage_template => false
      index => "nginx_access-%{+yyyy.MM}"
    }
  }
  if "error" in [tags] {
    elasticsearch {
      hosts => "http://10.20.1.114:9200"
      manage_template => false
      index => "nginx_error-%{+yyyy.MM}"
    }
  }
}

# Restart logstash: find the running process, stop it, then start it with the new pipeline

ps -ef |grep logstash

kill -9 <pid>   # replace <pid> with the logstash process ID from the output above

/usr/share/logstash/bin/logstash -f /etc/logstash/conf.d/kafka.conf