Linux内核文件写入流程

文本代码基于Linux 5.15 。

当用户层调用write 去做写入, linux 内核里面是如何处理的? 本文以exfat 为例, 讨论这个流程

入口函数

write 系统调用的定义如下:

fs/read_write.c

ssize_t ksys_write(unsigned int fd, const char __user *buf, size_t count)

{

struct fd f = fdget_pos(fd);

ssize_t ret = -EBADF;

if (f.file) {

loff_t pos, *ppos = file_ppos(f.file);

if (ppos) {

pos = *ppos;

ppos = &pos;

}

ret = vfs_write(f.file, buf, count, ppos);

if (ret >= 0 && ppos)

f.file->f_pos = pos;

fdput_pos(f);

}

return ret;

}

SYSCALL_DEFINE3(write, unsigned int, fd, const char __user *, buf,

size_t, count)

{

return ksys_write(fd, buf, count);

}主要是调用了ksys_write , 然后再调用vfs_write

fs/read_write.c

ksys_write->vfs_write

ssize_t vfs_write(struct file *file, const char __user *buf, size_t count, loff_t *pos)

{

ssize_t ret;

if (!(file->f_mode & FMODE_WRITE))

return -EBADF;

if (!(file->f_mode & FMODE_CAN_WRITE))

return -EINVAL;

if (unlikely(!access_ok(buf, count)))

return -EFAULT;

ret = rw_verify_area(WRITE, file, pos, count);

if (ret)

return ret;

if (count > MAX_RW_COUNT)

count = MAX_RW_COUNT;

file_start_write(file);

if (file->f_op->write)

ret = file->f_op->write(file, buf, count, pos);

else if (file->f_op->write_iter)

ret = new_sync_write(file, buf, count, pos);

else

ret = -EINVAL;

if (ret > 0) {

fsnotify_modify(file);

add_wchar(current, ret);

}

inc_syscw(current);

file_end_write(file);

return ret;

}

ksys_write->vfs_write->new_sync_write

static ssize_t new_sync_write(struct file *filp, const char __user *buf, size_t len, loff_t *ppos)

{

struct iovec iov = { .iov_base = (void __user *)buf, .iov_len = len };

struct kiocb kiocb;

struct iov_iter iter;

ssize_t ret;

init_sync_kiocb(&kiocb, filp);

kiocb.ki_pos = (ppos ? *ppos : 0);

iov_iter_init(&iter, WRITE, &iov, 1, len);

ret = call_write_iter(filp, &kiocb, &iter);

BUG_ON(ret == -EIOCBQUEUED);

if (ret > 0 && ppos)

*ppos = kiocb.ki_pos;

return ret;

}

include/linux/fs.h

ksys_write->vfs_write->new_sync_write->call_write_iter->write_iter

static inline ssize_t call_write_iter(struct file *file, struct kiocb *kio,

struct iov_iter *iter)

{

return file->f_op->write_iter(kio, iter);

}可以看到, 最终会调用到f_op->write_iter这个回调。

在exfat文件系统中, 这个回调被实现为generic_file_write_iter

fs/exfat/file.c

const struct file_operations exfat_file_operations = {

.llseek = generic_file_llseek,

.read_iter = generic_file_read_iter,

.write_iter = generic_file_write_iter,

.mmap = generic_file_mmap,

.fsync = exfat_file_fsync,

.splice_read = generic_file_splice_read,

.splice_write = iter_file_splice_write,

};generic_file_write_iter 最终会调用到generic_perform_write 执行真正的写入操作:

mm/filemap.c

ksys_write->vfs_write->new_sync_write->call_write_iter->`generic_file_write_iter->__generic_file_write_iter->generic_perform_write

ssize_t generic_perform_write(struct file *file,

struct iov_iter *i, loff_t pos)

{

struct address_space *mapping = file->f_mapping;

const struct address_space_operations *a_ops = mapping->a_ops;

long status = 0;

ssize_t written = 0;

unsigned int flags = 0;

do { /* 1 */

struct page *page;

unsigned long offset; /* Offset into pagecache page */

unsigned long bytes; /* Bytes to write to page */

size_t copied; /* Bytes copied from user */

void *fsdata;

offset = (pos & (PAGE_SIZE - 1));

bytes = min_t(unsigned long, PAGE_SIZE - offset,

iov_iter_count(i));

again:

/*

* Bring in the user page that we will copy from _first_.

* Otherwise there's a nasty deadlock on copying from the

* same page as we're writing to, without it being marked

* up-to-date.

*

* Not only is this an optimisation, but it is also required

* to check that the address is actually valid, when atomic

* usercopies are used, below.

*/

if (unlikely(iov_iter_fault_in_readable(i, bytes))) {

status = -EFAULT;

break;

}

if (fatal_signal_pending(current)) {

status = -EINTR;

break;

}

status = a_ops->write_begin(file, mapping, pos, bytes, flags,

&page, &fsdata); /* 2 */

if (unlikely(status < 0))

break;

if (mapping_writably_mapped(mapping))

flush_dcache_page(page);

copied = iov_iter_copy_from_user_atomic(page, i, offset, bytes); /* 3 */

flush_dcache_page(page);

status = a_ops->write_end(file, mapping, pos, bytes, copied,

page, fsdata); /* 4 */

if (unlikely(status < 0))

break;

copied = status;

cond_resched();

iov_iter_advance(i, copied);

if (unlikely(copied == 0)) {

/*

* If we were unable to copy any data at all, we must

* fall back to a single segment length write.

*

* If we didn't fallback here, we could livelock

* because not all segments in the iov can be copied at

* once without a pagefault.

*/

bytes = min_t(unsigned long, PAGE_SIZE - offset,

iov_iter_single_seg_count(i));

goto again;

}

pos += copied;

written += copied;

balance_dirty_pages_ratelimited(mapping);

} while (iov_iter_count(i));

return written ? written : status;

}

EXPORT_SYMBOL(generic_perform_write);(1) 执行循环, 直到所有的内容写入完成

(2) 调用a_ops->write_begin 回调, 被具体文件系统用来执行相关的准备

(3) 把需要写的内容写到page cache

(4) 写入完成, 调用a_ops->write_end处理后续的善后工作

可以看到, 主要是write_begin和write_end 这两个调用, 下面分别来说明这两个函数

通知为写请求做准备

在exfat文件系统中, write_begin 的实现如下:

fs/exfat/inode.c

ksys_write->vfs_write->new_sync_write->call_write_iter->`generic_file_write_iter->__generic_file_write_iter->generic_perform_write->exfat_write_begin

static int exfat_write_begin(struct file *file, struct address_space *mapping,

loff_t pos, unsigned int len, unsigned int flags,

struct page **pagep, void **fsdata)

{

int ret;

*pagep = NULL;

ret = cont_write_begin(file, mapping, pos, len, flags, pagep, fsdata,

exfat_get_block,

&EXFAT_I(mapping->host)->i_size_ondisk);

if (ret < 0)

exfat_write_failed(mapping, pos+len);

return ret;

}主要是对cont_write_begin的封装

fs/buffer.c

ksys_write->vfs_write->new_sync_write->call_write_iter->`generic_file_write_iter->__generic_file_write_iter->generic_perform_write->exfat_write_begin->cont_write_begin

int cont_write_begin(struct file *file, struct address_space *mapping,

loff_t pos, unsigned len, unsigned flags,

struct page **pagep, void **fsdata,

get_block_t *get_block, loff_t *bytes)

{

struct inode *inode = mapping->host;

unsigned int blocksize = i_blocksize(inode);

unsigned int zerofrom;

int err;

err = cont_expand_zero(file, mapping, pos, bytes); /* 1 */

if (err)

return err;

zerofrom = *bytes & ~PAGE_MASK;

if (pos+len > *bytes && zerofrom & (blocksize-1)) {

*bytes |= (blocksize-1);

(*bytes)++;

}

return block_write_begin(mapping, pos, len, flags, pagep, get_block); /* 2 */

}(1) 如果存在文件空洞, 这需要把空洞的地方填0, 这一点后面详细解释

(2) 这里面主要是建立buffer_head的隐射, 并且做必要的预读。

cont_expand_zero

考虑这种情况, 一个文件的大小是1M , 但用户写的时候, 先seek 到2M的offset, 再进行写入。 那么中间的1M部分即成为了所谓的“文件空洞”, 对应这种空洞, exFAT 会用0去填充对应的位置, 这样如果读取到对应的位置, 读到的就是0。

需要说明的是, 如果文件大小是1M , 用户如果从1M的地方开始写入, 这时候cont_expand_zero 会直接返回, 直接调用到block_write_begin 这个函数。

fs/buffer.c

ksys_write->vfs_write->new_sync_write->call_write_iter->generic_file_write_iter->__generic_file_write_iter->generic_perform_write->exfat_write_begin->cont_write_begin->cont_expand_zero

static int cont_expand_zero(struct file *file, struct address_space *mapping,

loff_t pos, loff_t *bytes)

{

struct inode *inode = mapping->host;

unsigned int blocksize = i_blocksize(inode);

struct page *page;

void *fsdata;

pgoff_t index, curidx;

loff_t curpos;

unsigned zerofrom, offset, len;

int err = 0;

index = pos >> PAGE_SHIFT;

offset = pos & ~PAGE_MASK;

while (index > (curidx = (curpos = *bytes)>>PAGE_SHIFT)) { /* 1 */

zerofrom = curpos & ~PAGE_MASK;

if (zerofrom & (blocksize-1)) {

*bytes |= (blocksize-1);

(*bytes)++;

}

len = PAGE_SIZE - zerofrom;

err = pagecache_write_begin(file, mapping, curpos, len, 0,

&page, &fsdata); /* 2 */

if (err)

goto out;

zero_user(page, zerofrom, len); /* 3 */

err = pagecache_write_end(file, mapping, curpos, len, len,

page, fsdata); /* 4 */

if (err < 0)

goto out;

BUG_ON(err != len);

err = 0;

balance_dirty_pages_ratelimited(mapping);

if (fatal_signal_pending(current)) {

err = -EINTR;

goto out;

}

}

/* page covers the boundary, find the boundary offset */

if (index == curidx) { /* 5 */

zerofrom = curpos & ~PAGE_MASK;

/* if we will expand the thing last block will be filled */

if (offset <= zerofrom) {

goto out;

}

if (zerofrom & (blocksize-1)) {

*bytes |= (blocksize-1);

(*bytes)++;

}

len = offset - zerofrom;

err = pagecache_write_begin(file, mapping, curpos, len, 0,

&page, &fsdata);

if (err)

goto out;

zero_user(page, zerofrom, len);

err = pagecache_write_end(file, mapping, curpos, len, len,

page, fsdata);

if (err < 0)

goto out;

BUG_ON(err != len);

err = 0;

}

out:

return err;

}(1) 对于超出部分的页面, 全部填充0。 按照上面的例子, size=1m, offset=2m, 那么中间1m的部分要全部填充0.

(2) pagecache_write_begin实际上是调用aops->write_begin 。 这里又会调用到cont_write_begin , 只不过现在调用到这个函数的时候, size=1m, offset=1m, cont_expand_zero 直接返回, 会直接到block_write_begin, 分配对应的空间, 并读取对应的内容到page中。

(3) 将读取到的page 清0。这样下次read的时候,如果读到了这个位置, 那么就会返回0.

(4) pagecache_write_end 实际上调用了aops->write_end

(5) 上面是处理不在一个page 中的情况,但一个page 大小是4k。 还有一种情况是空洞发生在page 内, 比如size=512, offset =1024, 这样中间512Byte就需要清零。 zerofrom代表页内偏移, 需要把zerofrom到offset这部分内容清零。

block_write_begin

这个函数做的事情就是把建立page与磁盘内容的映射关系, 如果文件长度不够, 还会进行块分配; 另外,在必要时, 还是进行预读操作。(以下内容主要参考《存储技术原理分析》)

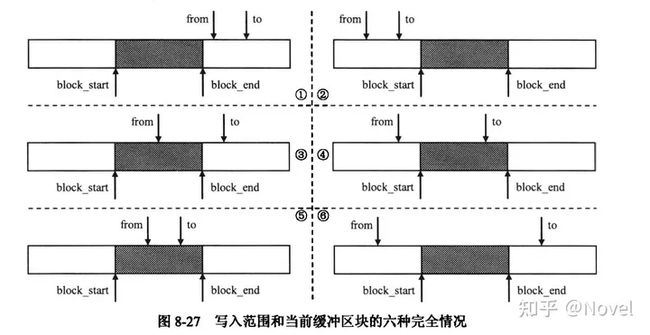

我们知道, 对于一个page而言, 它是通过buffer_head 去管理具体的内容。一般来说, 一个page(4k) 会有8个buffer_head(512B)。这样某个buffer_head (block_start->block_end) 与要写入的地址(from->to)存在如上六种情况。

前两种情况, 这个buffer_head 落在要写的数据之外; 接下来三种情况, 属于非覆盖写的情况, 这几种情况都需要进行预读, 将对应buffer_head的内容先读出来; 最后一种情况, 是覆盖写的情况, 不需要进行预读, 因为要写的内容完全覆盖了buffer_head的区域, 这时候直接写入就好了, 没必要先读出来。

理解了上面几种情况, 我们再来看看这个函数的具体实现。

fs/buffer.c

ksys_write->vfs_write->new_sync_write->call_write_iter->generic_file_write_iter->__generic_file_write_iter->generic_perform_write->exfat_write_begin->cont_write_begin->block_write_begin

int block_write_begin(struct address_space *mapping, loff_t pos, unsigned len,

unsigned flags, struct page **pagep, get_block_t *get_block)

{

pgoff_t index = pos >> PAGE_SHIFT;

struct page *page;

int status;

page = grab_cache_page_write_begin(mapping, index, flags); /* 1 */

if (!page)

return -ENOMEM;

status = __block_write_begin(page, pos, len, get_block); /* 2 */

if (unlikely(status)) {

unlock_page(page);

put_page(page);

page = NULL;

}

*pagep = page;

return status;

}(1) 获取对应文件位置的page。

(2) 调用__block_write_begin 执行真正的操作

fs/buffer.c

ksys_write->vfs_write->new_sync_write->call_write_iter->generic_file_write_iter->__generic_file_write_iter->generic_perform_write->exfat_write_begin->cont_write_begin->block_write_begin->__block_write_begin->__block_write_begin_int

int __block_write_begin(struct page *page, loff_t pos, unsigned len,

get_block_t *get_block)

{

return __block_write_begin_int(page, pos, len, get_block, NULL);

}

int __block_write_begin_int(struct page *page, loff_t pos, unsigned len,

get_block_t *get_block, struct iomap *iomap)

{

unsigned from = pos & (PAGE_SIZE - 1);

unsigned to = from + len;

struct inode *inode = page->mapping->host;

unsigned block_start, block_end;

sector_t block;

int err = 0;

unsigned blocksize, bbits;

struct buffer_head *bh, *head, *wait[2], **wait_bh=wait;

BUG_ON(!PageLocked(page));

BUG_ON(from > PAGE_SIZE);

BUG_ON(to > PAGE_SIZE);

BUG_ON(from > to);

head = create_page_buffers(page, inode, 0);

blocksize = head->b_size;

bbits = block_size_bits(blocksize);

block = (sector_t)page->index << (PAGE_SHIFT - bbits);

for(bh = head, block_start = 0; bh != head || !block_start;

block++, block_start=block_end, bh = bh->b_this_page) { /* 1 */

block_end = block_start + blocksize;

if (block_end <= from || block_start >= to) { /* 2 */

if (PageUptodate(page)) {

if (!buffer_uptodate(bh))

set_buffer_uptodate(bh);

}

continue;

}

if (buffer_new(bh))

clear_buffer_new(bh);

if (!buffer_mapped(bh)) { /* 3 */

WARN_ON(bh->b_size != blocksize);

if (get_block) {

err = get_block(inode, block, bh, 1); /* 4 */

if (err)

break;

} else {

iomap_to_bh(inode, block, bh, iomap);

}

if (buffer_new(bh)) {

clean_bdev_bh_alias(bh);

if (PageUptodate(page)) {

clear_buffer_new(bh);

set_buffer_uptodate(bh);

mark_buffer_dirty(bh);

continue;

}

if (block_end > to || block_start < from) /* 5 */

zero_user_segments(page,

to, block_end,

block_start, from);

continue;

}

}

if (PageUptodate(page)) {

if (!buffer_uptodate(bh))

set_buffer_uptodate(bh);

continue;

}

if (!buffer_uptodate(bh) && !buffer_delay(bh) &&

!buffer_unwritten(bh) &&

(block_start < from || block_end > to)) { /* 6 */

ll_rw_block(REQ_OP_READ, 0, 1, &bh);

*wait_bh++=bh;

}

}

/*

* If we issued read requests - let them complete.

*/

while(wait_bh > wait) { /* 7 */

wait_on_buffer(*--wait_bh);

if (!buffer_uptodate(*wait_bh))

err = -EIO;

}

if (unlikely(err))

page_zero_new_buffers(page, from, to);

return err;

}(1) 循环处理每个buffer_head, 这个循环推出的条件是, bh == head, 即所有buffer_head遍历完。 这里有一个小疑问,为什么不再block_start > to 的时候就推出循环?

(2) 这里对应上述前两种情况, 这时候直接跳过

(3) (4) 如果buffer_head 没有建立映射关系, 调用get_block映射到具体的文件系统的sector

(5) 对于③④⑤中情况, 调用zero_user_segments 先将非覆盖写的部分清零

(6) 对于③④⑤中情况, 需要进行预读, 调用ll_rw_block, 读取对应的部分

(7) 等待(6)的读取完成

通知数据已写入完成

a_ops->write_end 在数据从用户buf复制到page cache 之后, 被具体文件系统用来做相关的善后。

对应exfat, 这个函数的被实例化为

fs/exfat/inode.c

static int exfat_write_end(struct file *file, struct address_space *mapping,

loff_t pos, unsigned int len, unsigned int copied,

struct page *pagep, void *fsdata)

{

struct inode *inode = mapping->host;

struct exfat_inode_info *ei = EXFAT_I(inode);

int err;

err = generic_write_end(file, mapping, pos, len, copied, pagep, fsdata);

if (EXFAT_I(inode)->i_size_aligned < i_size_read(inode)) {

exfat_fs_error(inode->i_sb,

"invalid size(size(%llu) > aligned(%llu)\n",

i_size_read(inode), EXFAT_I(inode)->i_size_aligned);

return -EIO;

}

if (err < len)

exfat_write_failed(mapping, pos+len);

if (!(err < 0) && !(ei->attr & ATTR_ARCHIVE)) {

inode->i_mtime = inode->i_ctime = current_time(inode);

ei->attr |= ATTR_ARCHIVE;

mark_inode_dirty(inode);

}

return err;

}主要调用了generic_write_end 处理具体的工作:

fs/buffer.c

ksys_write->vfs_write->new_sync_write->call_write_iter->generic_file_write_iter->__generic_file_write_iter->generic_perform_write->exfat_write_end->generic_write_end

int generic_write_end(struct file *file, struct address_space *mapping,

loff_t pos, unsigned len, unsigned copied,

struct page *page, void *fsdata)

{

struct inode *inode = mapping->host;

loff_t old_size = inode->i_size;

bool i_size_changed = false;

copied = block_write_end(file, mapping, pos, len, copied, page, fsdata);

/*

* No need to use i_size_read() here, the i_size cannot change under us

* because we hold i_rwsem.

*

* But it's important to update i_size while still holding page lock:

* page writeout could otherwise come in and zero beyond i_size.

*/

if (pos + copied > inode->i_size) {

i_size_write(inode, pos + copied);

i_size_changed = true;

}

unlock_page(page);

put_page(page);

if (old_size < pos)

pagecache_isize_extended(inode, old_size, pos);

/*

* Don't mark the inode dirty under page lock. First, it unnecessarily

* makes the holding time of page lock longer. Second, it forces lock

* ordering of page lock and transaction start for journaling

* filesystems.

*/

if (i_size_changed)

mark_inode_dirty(inode);

return copied;

}

EXPORT_SYMBOL(generic_write_end);这里主要介绍一下block_write_end 这个函数:

fs/buffer.c

int block_write_end(struct file *file, struct address_space *mapping,

loff_t pos, unsigned len, unsigned copied,

struct page *page, void *fsdata)

{

struct inode *inode = mapping->host;

unsigned start;

start = pos & (PAGE_SIZE - 1);

if (unlikely(copied < len)) { /* 1 */

/*

* The buffers that were written will now be uptodate, so we

* don't have to worry about a readpage reading them and

* overwriting a partial write. However if we have encountered

* a short write and only partially written into a buffer, it

* will not be marked uptodate, so a readpage might come in and

* destroy our partial write.

*

* Do the simplest thing, and just treat any short write to a

* non uptodate page as a zero-length write, and force the

* caller to redo the whole thing.

*/

if (!PageUptodate(page))

copied = 0;

page_zero_new_buffers(page, start+copied, start+len);

}

flush_dcache_page(page);

/* This could be a short (even 0-length) commit */

__block_commit_write(inode, page, start, start+copied); /* 2 */

return copied;

}这里有一种特殊的情况需要处理, 即实际写入长度copied < len, 为此专门定义了一个术语: 短写(short write)。

(以下内容主要参考《存储技术原理分析》)

对于完全覆盖写的情况,是没有进行预读, 这样如果没有进行完全覆盖写, 就会出现这个buffer_head中一部分内容是不确定, 既没有从磁盘中读出, 又没有从用户空间更新, 如果将这部分数据贸然更新到磁盘, 势必覆盖原来的有效数据。

因此最简单的处理, 是认为这种场景下没有写入(copied = 0), 然后让调用者重新做整个事情。

下面介绍一下__block_commit_write 这个函数,

static int __block_commit_write(struct inode *inode, struct page *page,

unsigned from, unsigned to)

{

unsigned block_start, block_end;

int partial = 0;

unsigned blocksize;

struct buffer_head *bh, *head;

bh = head = page_buffers(page);

blocksize = bh->b_size;

block_start = 0;

do { /* 1 */

block_end = block_start + blocksize;

if (block_end <= from || block_start >= to) { /* 2 */

if (!buffer_uptodate(bh))

partial = 1;

} else {

set_buffer_uptodate(bh);

mark_buffer_dirty(bh);

}

clear_buffer_new(bh);

block_start = block_end;

bh = bh->b_this_page;

} while (bh != head);

/*

* If this is a partial write which happened to make all buffers

* uptodate then we can optimize away a bogus readpage() for

* the next read(). Here we 'discover' whether the page went

* uptodate as a result of this (potentially partial) write.

*/

if (!partial) /* 3 */

SetPageUptodate(page);

return 0;

}(1) 遍历page中所有的buffer_head

(2) 这里和block_write_begin中一样,对应①②种情况, 对于不在范围内的buffer_head,设置partial = 1

(3) 如果!partitial, 即page中所有buffer_head都被处理, 设置SetPageUptodate。 这个是这个函数的关键操作。