Barcode scanning in uniapp with flashlight (torch) support, using native H5 APIs and no SDK.

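Main idea

The component uses the QuaggaJS library. The camera is opened with the H5 API navigator.mediaDevices.getUserMedia. A video element and a canvas element show the camera stream on the page, and each captured frame is handed to Quagga for decoding.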

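- Get camera permission so that the camera can be opened later.

- Create a video element to show the camera picture, and a canvas element that QuaggaJS uses for image processing and decoding.

- Initialize QuaggaJS and set the decoding parameters and callbacks.

- Open the camera and start the scan loop, tick.

- In tick, capture the current video frame and draw it onto the canvas.

- Pass the canvas image data to QuaggaJS for decoding.

- When decoding succeeds, handle the result in the callback.

- Close the camera and QuaggaJS to release resources.

- Configure scanning through component props, such as high-definition mode and which readers to enable.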

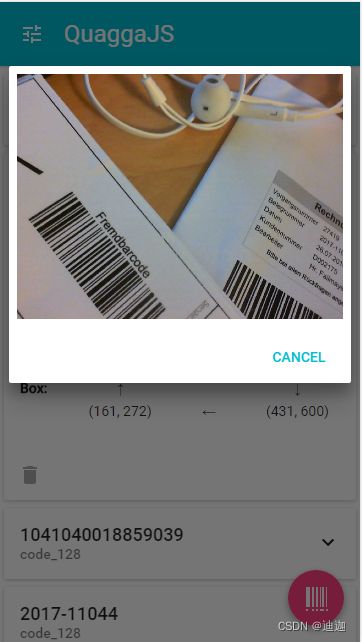

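Feature preview

Compatibility notes

The component only works over HTTPS. For local testing, open manifest.json in source view, find the h5 node, and add "devServer" : { "https" : true }. The flashlight can only be turned on in browsers built on the Chromium engine.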
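Both requirements above can be checked at runtime before the scanner is shown. The following is a minimal sketch, not part of the component: canUseCamera and supportsTorch are hypothetical helper names, and the window-like object is passed in as a parameter only so the checks can be exercised outside a browser.

```javascript
// Returns true when the page is in a secure context and exposes getUserMedia.
// `win` is a window-like object (hypothetical parameter, normally `window`).
function canUseCamera(win) {
  const secure = win.isSecureContext === true
  const mediaDevices = win.navigator && win.navigator.mediaDevices
  return secure && !!mediaDevices && typeof mediaDevices.getUserMedia === 'function'
}

// Returns true when the video track reports torch support.
// getCapabilities is not implemented in every browser, so a missing method
// is treated as "no torch" instead of throwing.
function supportsTorch(track) {
  if (!track || typeof track.getCapabilities !== 'function') return false
  return !!track.getCapabilities().torch
}
```

In the component, supportsTorch(this.track) could replace the direct getCapabilities() call so that browsers without that method simply hide the flashlight button.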

1. Install QuaggaJS

Official site: https://serratus.github.io/quaggaJS/#installing

Install with NPM

npm install quagga

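2. Get camera permission

H5 has no separate permission API, and uni.request() only sends HTTP requests, so it cannot grant camera access. The browser shows its permission prompt when navigator.mediaDevices.getUserMedia is called, so the mounted hook requests the stream and starts scanning once access is granted:

mounted() {
  // Calling getUserMedia triggers the browser's camera permission prompt
  navigator.mediaDevices
    .getUserMedia({ audio: false, video: { facingMode: 'environment' } })
    .then(stream => {
      // this.video is the video element created in the next step
      this.video.srcObject = stream
      this.openScan()
    })
    .catch(err => {
      console.log('Camera unavailable or permission denied', err)
    })
}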
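When camera access fails, the promise returned by navigator.mediaDevices.getUserMedia rejects with a named error, and telling the cases apart makes the failure easier to explain to the user. The sketch below is only an illustration: describeCameraError is a hypothetical helper and the message texts are placeholders.

```javascript
// Maps the error name from a rejected getUserMedia call to a readable message.
// describeCameraError is a hypothetical helper, not part of the component.
function describeCameraError(err) {
  const name = err && err.name
  switch (name) {
    case 'NotAllowedError':
      return 'Camera permission was denied.'
    case 'NotFoundError':
      return 'No camera was found on this device.'
    case 'NotReadableError':
      return 'The camera is already in use or cannot be read.'
    case 'OverconstrainedError':
      // Typical when facingMode uses `exact` and the device lacks that camera
      return 'No camera satisfies the requested constraints.'
    default:
      return 'The camera could not be opened.'
  }
}
```

It would be called from the catch branch, for example .catch(err => uni.showToast({ title: describeCameraError(err), icon: 'none' })).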

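3. Create the video and canvas elements

// Video element that shows the camera stream
const video = document.createElement('video')
video.id = 'video'
video.width = 300
video.height = 200

// Hidden canvas: frames are drawn here and handed to Quagga
const canvas = document.createElement('canvas')
canvas.id = 'canvas'
canvas.width = 300
canvas.height = 200
canvas.style = 'display:none'

// Second canvas, used to draw the detected scan line
const canvas2 = document.createElement('canvas')
canvas2.id = 'canvas2'
canvas2.width = 300
canvas2.height = 200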

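4. Initialize Quagga and start scanning

The openScan method initializes Quagga and then starts the scan loop, tick:

openScan() {
  this.isScanning = true
  // definition is a Boolean, so turn it into a scale factor before multiplying
  const scale = this.definition ? 2.6 : 1.6
  this.width = 300 * scale
  this.height = 200 * scale
  // this.config is assumed to hold the Quagga configuration object
  // (decoder readers, locator settings, and so on)
  Quagga.init(this.config, (err) => {
    if (err) throw err
    Quagga.start()
    this.tick()
  })
}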
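Opening the camera also needs a constraints object for getUserMedia. The sketch below shows how the exact and definition props could be turned into one, using the same 1.6 and 2.6 scale factors as the full component further down. buildVideoConstraints is a hypothetical helper, and the rounding is my own addition; the component passes the unrounded values. Width and height are swapped in the same way the component does it, presumably because the page is portrait while the camera delivers landscape frames.

```javascript
// Builds a getUserMedia constraints object from the component's props.
// buildVideoConstraints is a hypothetical helper; the component builds the
// same structure inline.
function buildVideoConstraints({ exact = 'environment', definition = false, windowWidth, windowHeight }) {
  // High-definition mode asks for a larger capture size
  const scale = definition ? 2.6 : 1.6
  return {
    audio: false,
    video: {
      // `exact` makes the request fail instead of silently using another camera
      facingMode: { exact },
      // Swapped on purpose: requested width comes from the window height
      width: Math.round(windowHeight * scale),
      height: Math.round(windowWidth * scale)
    }
  }
}
```

The result is passed straight to navigator.mediaDevices.getUserMedia(buildVideoConstraints({ ... })).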

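5. Image processing and decoding

The tick method repeatedly captures a frame from the video, draws it onto the canvas, and then lets Quagga decode the canvas image:

tick() {
  if (!this.isScanning) return
  if (this.video.readyState === this.video.HAVE_ENOUGH_DATA) {
    // Draw the current video frame onto the hidden canvas
    this.canvas2d.drawImage(this.video, 0, 0, this.canvas.width, this.canvas.height)
    // decodeSingle takes a config object whose src is the image to decode
    Quagga.decodeSingle({
      src: this.canvas.toDataURL('image/jpeg'),
      decoder: { readers: this.readers },
      locate: true
    }, result => {
      // Handle a successful decode here, then schedule the next frame
      requestAnimationFrame(this.tick)
    })
  } else {
    requestAnimationFrame(this.tick)
  }
}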
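The full component does not decode the whole frame. It copies only the area under the on-screen scan box, using the nine-argument form of drawImage. The sketch below shows one way to compute a centered source rectangle. centerCrop and its parameter names are hypothetical; the component derives its own offsets from maskWidth and maskHeight.

```javascript
// Computes a source rectangle of boxWidth x boxHeight centered in the video
// frame, clamped so it never exceeds the frame.
// centerCrop is a hypothetical helper used only for illustration.
function centerCrop(videoWidth, videoHeight, boxWidth, boxHeight) {
  const sw = Math.min(boxWidth, videoWidth)
  const sh = Math.min(boxHeight, videoHeight)
  return {
    sx: Math.floor((videoWidth - sw) / 2),
    sy: Math.floor((videoHeight - sh) / 2),
    sw,
    sh
  }
}

// Usage inside tick (browser only):
//   const r = centerCrop(video.videoWidth, video.videoHeight, 480, 320)
//   ctx.drawImage(video, r.sx, r.sy, r.sw, r.sh, 0, 0, canvas.width, canvas.height)
```

Decoding a smaller region means less data per frame, and barcodes outside the scan box are ignored.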

6. Close the camera and Quagga

The beforeDestroy hook closes the camera and stops Quagga:

beforeDestroy() {

this.isScanning = false

Quagga.stop()

if (this.video.srcObject) {

this.video.srcObject.getTracks().forEach(track => {

track.stop()

})

}

}
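Stopping every track is what releases the camera, so it can help to move that step into a small function that is easy to test in isolation. This is a sketch under that assumption: stopStream is a hypothetical helper, and the return value (the number of stopped tracks) is added for illustration only.

```javascript
// Stops all tracks of a MediaStream and returns how many were stopped.
// Accepts null or undefined so it is safe to call before the camera was opened.
function stopStream(stream) {
  if (!stream) return 0
  const tracks = stream.getTracks()
  tracks.forEach(track => track.stop())
  return tracks.length
}
```

In the hook this would read stopStream(this.video.srcObject), optionally followed by this.video.srcObject = null so that the video element no longer references the stopped stream.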

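Parameter reference

readers sets the types of 1D barcode to decode. code_128_reader is the usual choice: Code 128 can carry letters, and the barcodes on some electronic products use this encoding. ean_reader is common for retail goods. Fill in the value that matches your actual needs. The array accepts several readers, but that is not recommended, because a barcode of one type may be decoded by the reader for another type and produce a wrong result. The rules for each type are shown in the figure below: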

props: {
  continue: {
    type: Boolean,
    default: false // false: scan once; true: keep scanning
  },
  exact: {
    type: String,
    default: 'environment' // environment: rear camera; user: front camera
  },
  definition: {
    type: Boolean,
    default: false // false: normal; true: high definition
  },
  readers: {
    // 1D barcode types
    type: Array,
    default: () => ['code_128_reader']
  }
}
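Since a misspelled reader name is an easy mistake, the readers prop can be checked against the reader names listed in the QuaggaJS documentation. The sketch below assumes that list is current for the Quagga build in use; checkReaders is a hypothetical helper.

```javascript
// Reader names as listed in the QuaggaJS documentation
const KNOWN_READERS = [
  'code_128_reader',
  'ean_reader',
  'ean_8_reader',
  'code_39_reader',
  'code_39_vin_reader',
  'codabar_reader',
  'upc_reader',
  'upc_e_reader',
  'i2of5_reader',
  '2of5_reader',
  'code_93_reader'
]

// Reports which entries of `readers` are not known reader names.
// An empty array is treated as invalid because nothing could be decoded.
function checkReaders(readers) {
  const unknown = readers.filter(name => !KNOWN_READERS.includes(name))
  return { valid: readers.length > 0 && unknown.length === 0, unknown }
}
```

It could be attached to the prop as validator: value => checkReaders(value).valid, so that Vue warns about a bad value during development.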

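Based on the above, I have ready-made component code that you can copy and use as is. If you need the QuaggaJS file, message me and I will send it to you.

Full component code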

<template>

<view class="canvasBox">

<view class="box">

<view class="line"></view>

<view class="angle"></view>

</view>

<view class="box2" v-if="isUseTorch">

<view class="track" @click="openTrack">

<svg t="1653920715959" class="icon" viewBox="0 0 1024 1024" version="1.1"

xmlns="http://www.w3.org/2000/svg" p-id="1351" width="32" height="32">

<path

d="M651.353043 550.479503H378.752795L240.862609 364.315031c-3.688944-4.897391-5.660621-10.876025-5.660621-17.045466v-60.040745c0-15.773416 12.847702-28.621118 28.621118-28.621118h502.459627c15.773416 0 28.621118 12.847702 28.621118 28.621118v59.977143c0 6.105839-1.971677 12.084472-5.660621 17.045466l-137.890187 186.228074zM378.752795 598.308571v398.024348c0 15.328199 12.402484 27.667081 27.667081 27.667081h217.266087c15.328199 0 27.667081-12.402484 27.66708-27.667081V598.308571H378.752795z m136.300124 176.942112c-14.564969 0-26.331429-11.76646-26.331428-26.331428v-81.283975c0-14.564969 11.76646-26.331429 26.331428-26.331429 14.564969 0 26.331429 11.76646 26.331429 26.331429v81.283975c0 14.564969-11.76646 26.331429-26.331429 26.331428zM512 222.608696c-17.554286 0-31.801242-14.246957-31.801242-31.801243V31.801242c0-17.554286 14.246957-31.801242 31.801242-31.801242s31.801242 14.246957 31.801242 31.801242v159.006211c0 17.554286-14.246957 31.801242-31.801242 31.801243zM280.932174 205.881242c-9.47677 0-18.889938-4.197764-25.122981-12.275279L158.242981 67.991056a31.864845 31.864845 0 0 1 5.597019-44.648944 31.864845 31.864845 0 0 1 44.648944 5.597018l97.502609 125.551305a31.864845 31.864845 0 0 1-5.597019 44.648944c-5.787826 4.579379-12.656894 6.741863-19.46236 6.741863zM723.987081 205.881242c-6.805466 0-13.674534-2.162484-19.462361-6.678261a31.794882 31.794882 0 0 1-5.597018-44.648944l97.566211-125.551304a31.794882 31.794882 0 0 1 44.648944-5.597019 31.794882 31.794882 0 0 1 5.597019 44.648944l-97.566211 125.551305c-6.360248 8.077516-15.709814 12.27528-25.186584 12.275279z"

fill="#ffffff" p-id="1352"></path>

</svg>

{{ trackStatus ? 'Turn off flashlight' : 'Turn on flashlight' }}

</view>

</view>

<view class="mask1 mask" :style="'height:' + maskHeight + 'px;'"></view>

<view class="mask2 mask" :style="'width:' + maskWidth + 'px;top:' + maskHeight + 'px'"></view>

<view class="mask3 mask" :style="'height:' + maskHeight + 'px;'"></view>

<view class="mask4 mask" :style="'width:' + maskWidth + 'px;top:' + maskHeight + 'px'"></view>

</view>

</template>

<script>

import Quagga from './quagga.min.js'

export default {

props: {

continue: {

type: Boolean,

default: false // false: scan once; true: keep scanning

},

exact: {

type: String,

default: 'environment' // environment: rear camera; user: front camera

},

definition: {

type: Boolean,

default: false // false: normal; true: high definition

},

readers: {

// 1D barcode types

type: Array,

default: () => ['code_128_reader']

}

},

data() {

return {

windowWidth: 0,

windowHeight: 0,

video: null,

canvas2d: null,

canvasDom: null,

canvasDom2: null, // used to draw the frame

canvas2d2: null,

canvasWidth: 300,

canvasHeight: 200,

maskWidth: 0,

maskHeight: 0,

cpu: 1,

inter: 0,

track: null,

isUseTorch: false,

trackStatus: false,

isParse: false,

data1: '',

data2: ''

}

},

mounted() {

if (origin.indexOf('https') === -1) throw 'Please use the camera component in an https environment.'

this.windowWidth = document.documentElement.clientWidth || document.body.clientWidth

this.windowHeight = document.documentElement.clientHeight || document.body.clientHeight

this.isParse = true

this.$nextTick(() => {

this.video = document.createElement('video')

this.video.width = this.windowWidth

this.video.height = this.windowHeight

const canvas = document.createElement('canvas')

this.canvas = canvas

canvas.id = 'canvas'

canvas.width = this.canvasWidth

canvas.height = this.canvasHeight

canvas.style = 'display:none;'

// Set the current width and height to fill the screen

const canvasBox = document.querySelector('.canvasBox')

canvasBox.append(this.video)

canvasBox.append(canvas)

canvasBox.style = `width:${this.windowWidth}px;height:${this.windowHeight}px;`

this.canvas2d = canvas.getContext('2d')

// Create the second canvas

const canvas2 = document.createElement('canvas')

this.canvas2 = canvas2

canvas2.id = 'canvas2'

canvas2.width = this.canvasWidth

canvas2.height = this.canvasHeight

canvas2.style =

'position: absolute;top: 50%;left: 50%;z-index: 20;transform: translate(-50%, -50%);'

this.canvas2d2 = canvas2.getContext('2d')

canvasBox.append(canvas2)

this.cpu = 1 // navigator.hardwareConcurrency || 1

// console.log(navigator.hardwareConcurrency )

this.createMsk()

setTimeout(() => {

this.openScan()

}, 500)

})

},

methods: {

openScan() {

let width = this.transtion(this.windowHeight)

let height = this.transtion(this.windowWidth)

const videoParam = {

audio: false,

video: {

facingMode: {

exact: this.exact

},

width,

height

}

}

navigator.mediaDevices

.getUserMedia(videoParam)

.then(stream => {

this.video.srcObject = stream

this.video.setAttribute('playsinline', true)

this.video.play()

this.tick()

this.track = stream.getVideoTracks()[0]

setTimeout(() => {

this.isUseTorch = this.track.getCapabilities().torch || null

}, 500)

})

.catch(err => {

console.log('Device not supported', err)

})

},

tick() {

if (!this.isParse) return

try {

if (this.video.readyState === this.video.HAVE_ENOUGH_DATA) {

this.canvas2d.drawImage(

this.video,

this.transtion(this.maskWidth),

this.transtion(this.maskHeight),

this.transtion(300),

this.transtion(200),

0,

0,

this.canvasWidth,

this.canvasHeight

)

const img = this.canvas.toDataURL('image/jpeg')

Quagga.decodeSingle({

inputStream: {

size: 300 * 2

},

locator: {

patchSize: 'large',

halfSample: true

},

numOfWorkers: this.cpu,

decoder: {

readers: this.readers

},

locate: true,

src: img

},

result => {

this.canvas2d2.clearRect(0, 0, this.canvasWidth, this.canvasHeight)

requestAnimationFrame(this.tick)

if (!result || !result.codeResult) return

if (!this.data1) return (this.data1 = result.codeResult.code)

this.data2 = result.codeResult.code

console.log(this.data1, '-', this.data2)

if (this.data2 !== this.data1) return (this.data1 = '')

this.drawLine(result.box, result.line)

if (!this.continue) {

this.closeCamera()

}

this.$emit('success', result.codeResult.code)

}

)

} else {

requestAnimationFrame(this.tick)

}

} catch (e) {

console.log('Something went wrong', e);

requestAnimationFrame(this.tick)

}

},

drawLine(box, line) {

if (!line[0] || !line[0]['x']) return

this.canvas2d2.beginPath()

this.canvas2d2.moveTo(line[0]['x'] / 2, line[0]['y'] / 2)

this.canvas2d2.lineTo(line[1]['x'] / 2, line[1]['y'] / 2)

this.canvas2d2.lineWidth = 2

this.canvas2d2.strokeStyle = '#FF3B58'

this.canvas2d2.stroke()

},

createMsk() {

this.maskWidth = this.windowWidth / 2 - 150

this.maskHeight = this.windowHeight / 2 - 100

},

closeCamera() {

this.isParse = false

if (this.video.srcObject) {

this.video.srcObject.getTracks().forEach(track => {

track.stop()

})

}

},

transtion(number) {

return this.definition ? number * 2.6 : number * 1.6

},

openTrack() {

this.trackStatus = !this.trackStatus

this.track.applyConstraints({

advanced: [{

torch: this.trackStatus

}]

})

}

},

beforeDestroy() {

this.closeCamera()

}

}

</script>

<style scoped>

page {

background-color: #333333;

}

.canvasBox {

width: 100vw;

position: relative;

background-image: linear-gradient(0deg,

transparent 24%,

rgba(32, 255, 77, 0.1) 25%,

rgba(32, 255, 77, 0.1) 26%,

transparent 27%,

transparent 74%,

rgba(32, 255, 77, 0.1) 75%,

rgba(32, 255, 77, 0.1) 76%,

transparent 77%,

transparent),

linear-gradient(90deg,

transparent 24%,

rgba(32, 255, 77, 0.1) 25%,

rgba(32, 255, 77, 0.1) 26%,

transparent 27%,

transparent 74%,

rgba(32, 255, 77, 0.1) 75%,

rgba(32, 255, 77, 0.1) 76%,

transparent 77%,

transparent);

background-size: 3rem 3rem;

background-position: -1rem -1rem;

z-index: 10;

background-color: #1110;

}

.box {

width: 300px;

height: 200px;

position: absolute;

left: 50%;

top: 50%;

transform: translate(-50%, -50%);

overflow: hidden;

border: 0.1rem solid rgba(0, 255, 51, 0.2);

z-index: 11;

}

.line {

height: calc(100% - 2px);

width: 100%;

background: linear-gradient(180deg, rgba(0, 255, 51, 0) 43%, #00ff33 211%);

border-bottom: 3px solid #00ff33;

transform: translateY(-100%);

animation: radar-beam 2s infinite alternate;

animation-timing-function: cubic-bezier(0.53, 0, 0.43, 0.99);

animation-delay: 1.4s;

}

.box2 {

width: 300px;

height: 200px;

position: absolute;

left: 50%;

top: 50%;

transform: translate(-50%, -50%);

z-index: 20;

}

.track {

position: absolute;

bottom: -100px;

left: 50%;

transform: translateX(-50%);

z-index: 20;

color: #fff;

display: flex;

flex-direction: column;

align-items: center;

}

.box:after,

.box:before,

.angle:after,

.angle:before {

content: '';

display: block;

position: absolute;

width: 3vw;

height: 3vw;

z-index: 12;

border: 0.2rem solid transparent;

}

.box:after,

.box:before {

top: 0;

border-top-color: #00ff33;

}

.angle:after,

.angle:before {

bottom: 0;

border-bottom-color: #00ff33;

}

.box:before,

.angle:before {

left: 0;

border-left-color: #00ff33;

}

.box:after,

.angle:after {

right: 0;

border-right-color: #00ff33;

}

@keyframes radar-beam {

0% {

transform: translateY(-100%);

}

100% {

transform: translateY(0);

}

}

.msg {

text-align: center;

padding: 20rpx 0;

}

.mask {

position: absolute;

z-index: 10;

background-color: rgba(0, 0, 0, 0.55);

}

.mask1 {

top: 0;

left: 0;

right: 0;

}

.mask2 {

right: 0;

height: 200px;

}

.mask3 {

right: 0;

left: 0;

bottom: 0;

}

.mask4 {

left: 0;

height: 200px;

}

</style>
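One detail of the component is easy to miss: a code is only emitted after two consecutive decodes return the same value (the data1 and data2 fields), which filters out occasional misreads. The logic can be sketched on its own as below. createConfirmer is a hypothetical name, and the closure replaces the component's data fields.

```javascript
// Returns a function that accepts decoded codes one by one and returns a code
// only when it matches the previously remembered read; otherwise returns null.
// Mirrors the data1/data2 comparison in the component's decode callback.
function createConfirmer() {
  let previous = ''
  return function confirm(code) {
    if (!previous) {
      // First read: remember it and wait for a second one
      previous = code
      return null
    }
    if (code !== previous) {
      // Mismatch: discard both reads and start over
      previous = ''
      return null
    }
    return code
  }
}
```

Each scanner instance would create its own confirmer and emit success only when confirm(result.codeResult.code) returns a non-null value.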

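Summary

That covers the main logic and steps of the uniapp barcode scanning component. If anything is unclear about how it works or how to use it, feel free to ask and I will explain in more detail. Discussion is welcome.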