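Atguigu Big Data Flink 1.17 Hands-on Tutorial - Notes 02 [Deployment]

- Atguigu Big Data Technology - Tutorials - Learning Roadmap - Notes Index [Course Materials Download]

- Video: Atguigu Big Data Flink 1.17 Hands-on Tutorial, from Beginner to Expert (bilibili)

- Atguigu Big Data Flink 1.17 Hands-on Tutorial - Notes 01 [Flink Overview, Flink Quick Start]

- Atguigu Big Data Flink 1.17 Hands-on Tutorial - Notes 02 [Flink Deployment]

- Atguigu Big Data Flink 1.17 Hands-on Tutorial - Notes 03 []

- Atguigu Big Data Flink 1.17 Hands-on Tutorial - Notes 04 []

- Atguigu Big Data Flink 1.17 Hands-on Tutorial - Notes 05 []

- Atguigu Big Data Flink 1.17 Hands-on Tutorial - Notes 06 []

- Atguigu Big Data Flink 1.17 Hands-on Tutorial - Notes 07 []

- Atguigu Big Data Flink 1.17 Hands-on Tutorial - Notes 08 []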


Fundamentals

Chapter 03 Flink Deployment

P011 [011_Flink Deployment_Cluster Roles] 03:07

3.1 Cluster Roles

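P012 [012_Flink Deployment_Cluster Setup_Starting the Cluster] 14:22

Table 3-1 Cluster role assignment

| Node server | hadoop102 | hadoop103 | hadoop104 |
| --- | --- | --- | --- |
| Role | JobManager, TaskManager | TaskManager | TaskManager |

In the terminal sessions below, the three nodes are named node001, node002, and node003; node001 plays the hadoop102 role and runs both the JobManager and a TaskManager.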
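The role layout in Table 3-1 is normally expressed through Flink's conf/masters, conf/workers, and a few address keys in conf/flink-conf.yaml. A minimal sketch that writes them into a conf directory, assuming the default Web UI port 8081 and a fresh directory passed as the first argument (the function name write_cluster_roles is hypothetical; on a real install, edit the existing keys in flink-conf.yaml in place rather than appending duplicates):

```shell
#!/usr/bin/env bash
# Sketch: write the Table 3-1 role layout into a Flink conf directory ($1).
# Assumptions: default Web UI port 8081, fresh conf dir (keys are appended, not edited).
set -euo pipefail

write_cluster_roles() {
  local conf_dir="$1"
  local master="hadoop102"
  local workers=(hadoop102 hadoop103 hadoop104)

  mkdir -p "$conf_dir"
  # masters: JobManager host and its Web UI port
  printf '%s:8081\n' "$master" > "$conf_dir/masters"
  # workers: one TaskManager host per line
  printf '%s\n' "${workers[@]}" > "$conf_dir/workers"
  # Addresses the processes advertise and bind to.
  # taskmanager.host must name the local node: change it to hadoop103/hadoop104 on those hosts.
  cat >> "$conf_dir/flink-conf.yaml" <<EOF
jobmanager.rpc.address: $master
jobmanager.bind-host: 0.0.0.0
rest.address: $master
rest.bind-address: 0.0.0.0
taskmanager.bind-host: 0.0.0.0
taskmanager.host: $master
EOF
}
```

After this, the conf directory would be distributed to hadoop103 and hadoop104, with taskmanager.host changed to each node's own hostname.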

[atguigu@node001 module]$ cd flink

[atguigu@node001 flink]$ cd flink-1.17.0/

[atguigu@node001 flink-1.17.0]$ bin/start-cluster.sh

Starting cluster.

Starting standalonesession daemon on host node001.

Starting taskexecutor daemon on host node001.

Starting taskexecutor daemon on host node002.

Starting taskexecutor daemon on host node003.

[atguigu@node001 flink-1.17.0]$ jpsall

================ node001 ================

3408 Jps

2938 StandaloneSessionClusterEntrypoint

3276 TaskManagerRunner

================ node002 ================

2852 TaskManagerRunner

2932 Jps

================ node003 ================

2864 TaskManagerRunner

2944 Jps
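jpsall is not a Flink script (it does not appear in Flink's bin directory listed later); it is a course helper that runs jps on each node. A hypothetical equivalent, assuming passwordless SSH, jps on the remote PATH, and the three hostnames above; HOSTS and REMOTE can be overridden, for example to try it locally:

```shell
#!/usr/bin/env bash
# Hypothetical jpsall-style helper: print a banner per host, then that host's JVM processes.
# Assumptions: passwordless SSH between nodes and jps on the remote PATH.
set -euo pipefail

HOSTS="${HOSTS:-node001 node002 node003}"
REMOTE="${REMOTE:-ssh}"   # command used to reach a host

jpsall() {
  local host
  for host in $HOSTS; do   # intentional word splitting over the host list
    echo "================ $host ================"
    "$REMOTE" "$host" jps
  done
}
```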

P013 [013_Flink Deployment_Cluster Setup_Submitting Jobs via the Web UI] 13:58

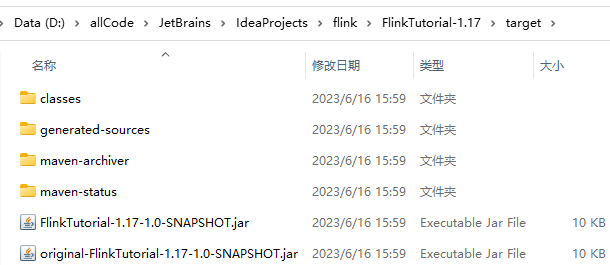

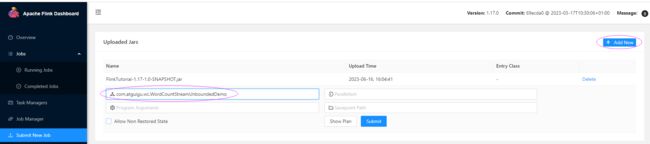

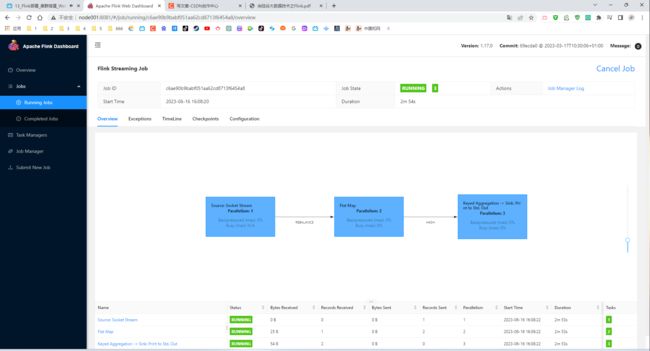

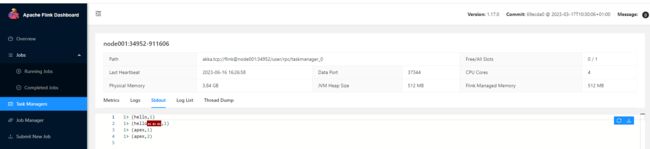

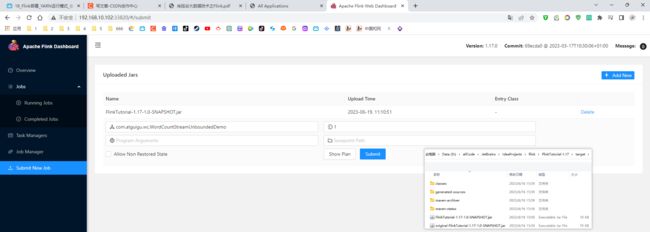

3.2.2 Submitting Jobs to the Cluster

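Package the job as a fat jar by adding the maven-shade-plugin to pom.xml. The settings used here are:

- Plugin: org.apache.maven.plugins:maven-shade-plugin, version 3.2.4

- Execution: bound to the `package` phase, goal `shade`

- Artifacts excluded from the fat jar: com.google.code.findbugs:jsr305, org.slf4j:*, log4j:*

- Filter for all artifacts (`*:*`): exclude the signature files META-INF/*.SF, META-INF/*.DSA, META-INF/*.RSA

- Main class: com.atguigu.wc.WordCountStreamUnboundedDemo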
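Once `mvn clean package` has produced the fat jar, one way to confirm the signature filter worked is to list the jar's entries and look for leftover META-INF/*.SF, *.DSA, or *.RSA files; signature files copied from a signed dependency can make the JVM reject the merged jar with a SecurityException. A minimal sketch that reads a listing such as the output of `jar tf` on stdin (the function name no_signature_files is hypothetical):

```shell
#!/usr/bin/env bash
# Sketch: succeed only if a jar listing (one entry per line on stdin)
# contains no top-level META-INF signature files.
set -euo pipefail

no_signature_files() {
  ! grep -Eq '^META-INF/[^/]+\.(SF|DSA|RSA)$'
}
```

For example: `jar tf target/FlinkTutorial-1.17-1.0-SNAPSHOT.jar | no_signature_files && echo "no signature files"`, assuming the jar keeps the name used later in these notes.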

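P014 [014_Flink Deployment_Cluster Setup_Submitting Jobs from the Command Line] 03:46

3.2.2 Submitting Jobs to the Cluster

4) Submitting a job from the command line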
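The submission in the transcript below runs attached, so the CLI keeps waiting on the unbounded word-count job. To script it instead, a sketch under these assumptions: the commands run from the Flink home directory, the JobManager is reachable at node001:8081, and the CLI prints a line of the form `Job has been submitted with JobID <id>`, as it does at the end of the transcript. extract_job_id and submit_detached are hypothetical helper names:

```shell
#!/usr/bin/env bash
# Sketch: submit the word count in detached mode and capture its JobID.
# Assumptions: run from the Flink home dir; JobManager REST endpoint node001:8081.
set -euo pipefail

# Print the 32-hex-digit JobID from the "Job has been submitted with JobID" line on stdin.
extract_job_id() {
  sed -n 's/.*Job has been submitted with JobID \([0-9a-f]\{32\}\).*/\1/p'
}

submit_detached() {
  # -d returns right after submission instead of waiting for the (never-ending) job
  bin/flink run -m node001:8081 -d \
    -c com.atguigu.wc.WordCountStreamUnboundedDemo \
    ../jar/FlinkTutorial-1.17-1.0-SNAPSHOT.jar | extract_job_id
}
```

For example, `job_id=$(submit_detached)`, then later `bin/flink cancel -m node001:8081 "$job_id"`; `bin/flink list -m node001:8081` shows the running jobs and their IDs.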


Last login: Fri Jun 16 14:44:01 2023 from 192.168.10.1

[atguigu@node001 ~]$ cd /opt/module/flink/flink-1.17.0/

[atguigu@node001 flink-1.17.0]$ cd bin

[atguigu@node001 bin]$ ./start-cluster.sh

Starting cluster.

Starting standalonesession daemon on host node001.

Starting taskexecutor daemon on host node001.

Starting taskexecutor daemon on host node002.

Starting taskexecutor daemon on host node003.

[atguigu@node001 bin]$ jpsall

================ node001 ================

2723 TaskManagerRunner

2855 Jps

2380 StandaloneSessionClusterEntrypoint

================ node002 ================

2294 TaskManagerRunner

2367 Jps

================ node003 ================

2292 TaskManagerRunner

2330 Jps

[atguigu@node001 bin]$ cd ..


[atguigu@node001 flink-1.17.0]$ bin/flink run -m node001:8081 -c com.atguigu.wc.WordCountStreamUnboundedDemo ../jar/FlinkTutorial-1.17-1.0-SNAPSHOT.jar

Job has been submitted with JobID 59ae9d6532523b0c48cdb8b6c9105356

P015 [015_Flink Deployment_Deployment Modes Overview] 10:17

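3.3 Deployment Modes

Some scenarios have specific requirements for how cluster resources are allocated and occupied. Flink offers different deployment modes for different scenarios, mainly these three: Session Mode, Per-Job Mode, and Application Mode.

They differ mainly in the cluster lifecycle and how resources are allocated, and in where the application's main method runs: on the client or on the JobManager.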
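To make the differences concrete on YARN, here is a small dry-run helper that only prints the submission command for each mode. The per-job and application commands match the ones used later in these notes; the session command assumes a session already started with yarn-session.sh. The function name flink_submit_cmd is hypothetical, and the comments record where main() runs and how long the cluster lives:

```shell
#!/usr/bin/env bash
# Sketch: print (not run) the YARN submission command for a deployment mode.
set -euo pipefail

flink_submit_cmd() {
  local mode="$1" main_class="$2" jar="$3"
  case "$mode" in
    session)
      # Long-running shared cluster from yarn-session.sh; main() runs on the client.
      # The CLI finds the session via /tmp/.yarn-properties-<user>;
      # -t yarn-session -Dyarn.application.id=<id> would target one explicitly.
      echo "bin/flink run -d -c $main_class $jar" ;;
    per-job)
      # One YARN cluster per job, torn down when the job ends; main() runs on the client.
      echo "bin/flink run -d -t yarn-per-job -c $main_class $jar" ;;
    application)
      # One cluster per application; main() runs on the JobManager.
      echo "bin/flink run-application -t yarn-application -c $main_class $jar" ;;
    *)
      echo "unknown mode: $mode" >&2
      return 1 ;;
  esac
}
```

The Flink documentation marks per-job mode as deprecated (since 1.15) in favor of application mode.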

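P016 [016_Flink Deployment_Standalone Mode] 08:16

3.4 Standalone Mode (for reference)

Standalone mode runs on its own and does not depend on any external resource management platform. That independence has a cost: if resources run short or a failure occurs, nothing automatically scales or reallocates resources, so you must handle it manually. Standalone mode is therefore generally used only for development and testing, or when there are very few jobs.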

[atguigu@node001 ~]$ cd /opt/module/flink/flink-1.17.0/bin

[atguigu@node001 bin]$ ./stop-cluster.sh

Stopping taskexecutor daemon (pid: 2723) on host node001.

Stopping taskexecutor daemon (pid: 2294) on host node002.

Stopping taskexecutor daemon (pid: 2292) on host node003.

Stopping standalonesession daemon (pid: 2380) on host node001.

[atguigu@node001 bin]$ jpsall

================ node001 ================

5120 Jps

================ node002 ================

3212 Jps

================ node003 ================

3159 Jps

[atguigu@node001 bin]$ ls

bash-java-utils.jar flink historyserver.sh kubernetes-session.sh sql-client.sh start-cluster.sh stop-zookeeper-quorum.sh zookeeper.sh

config.sh flink-console.sh jobmanager.sh kubernetes-taskmanager.sh sql-gateway.sh start-zookeeper-quorum.sh taskmanager.sh

find-flink-home.sh flink-daemon.sh kubernetes-jobmanager.sh pyflink-shell.sh standalone-job.sh stop-cluster.sh yarn-session.sh

[atguigu@node001 bin]$ cd ../lib/

[atguigu@node001 lib]$ ls

flink-cep-1.17.0.jar flink-dist-1.17.0.jar flink-table-api-java-uber-1.17.0.jar FlinkTutorial-1.17-1.0-SNAPSHOT.jar log4j-core-2.17.1.jar

flink-connector-files-1.17.0.jar flink-json-1.17.0.jar flink-table-planner-loader-1.17.0.jar log4j-1.2-api-2.17.1.jar log4j-slf4j-impl-2.17.1.jar

flink-csv-1.17.0.jar flink-scala_2.12-1.17.0.jar flink-table-runtime-1.17.0.jar log4j-api-2.17.1.jar

[atguigu@node001 lib]$ cd ../

[atguigu@node001 flink-1.17.0]$ bin/standalone-job.sh start --job-classname com.atguigu.wc.WordCountStreamUnboundedDemo

Starting standalonejob daemon on host node001.

[atguigu@node001 flink-1.17.0]$ jpsall

================ node001 ================

5491 StandaloneApplicationClusterEntryPoint

5583 Jps

================ node002 ================

3326 Jps

================ node003 ================

3307 Jps

[atguigu@node001 flink-1.17.0]$ bin/taskmanager.sh

Usage: taskmanager.sh (start|start-foreground|stop|stop-all)

[atguigu@node001 flink-1.17.0]$ bin/taskmanager.sh start

Starting taskexecutor daemon on host node001.

[atguigu@node001 flink-1.17.0]$ jpsall

================ node001 ================

5491 StandaloneApplicationClusterEntryPoint

5995 Jps

5903 TaskManagerRunner

================ node002 ================

3363 Jps

================ node003 ================

3350 Jps

[atguigu@node001 flink-1.17.0]$ bin/taskmanager.sh stop

Stopping taskexecutor daemon (pid: 5903) on host node001.

[atguigu@node001 flink-1.17.0]$ bin/standalone-job.sh stop

No standalonejob daemon (pid: 5491) is running anymore on node001.

[atguigu@node001 flink-1.17.0]$ xcall jps

=============== node001 ===============

6682 Jps

=============== node002 ===============

3429 Jps

=============== node003 ===============

3419 Jps
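The standalone application-mode steps in the transcript above can be collected into a small script: the job jar sits in lib/, standalone-job.sh starts the JobManager, taskmanager.sh starts a TaskManager, and both are stopped afterwards. A sketch, assuming FLINK_HOME defaults to the install path used in these notes and the jar has already been copied into lib/; set DRY_RUN=1 to print the commands instead of executing them:

```shell
#!/usr/bin/env bash
# Sketch of standalone application mode; DRY_RUN=1 prints commands instead of running them.
# Assumption: the job jar is already in $FLINK_HOME/lib so the job cluster can load the main class.
set -euo pipefail

FLINK_HOME="${FLINK_HOME:-/opt/module/flink/flink-1.17.0}"
run() { if [ -n "${DRY_RUN:-}" ]; then echo "$*"; else "$@"; fi; }

start_app_cluster() {   # $1 = fully qualified main class
  run "$FLINK_HOME/bin/standalone-job.sh" start --job-classname "$1"
  # standalone-job.sh starts only the JobManager; the job needs a TaskManager for slots
  run "$FLINK_HOME/bin/taskmanager.sh" start
}

stop_app_cluster() {
  # Same order as in the transcript: TaskManager first, then the job cluster
  run "$FLINK_HOME/bin/taskmanager.sh" stop
  run "$FLINK_HOME/bin/standalone-job.sh" stop
}
```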

P017 [017_Flink Deployment_YARN Mode_Environment Setup] 07:41

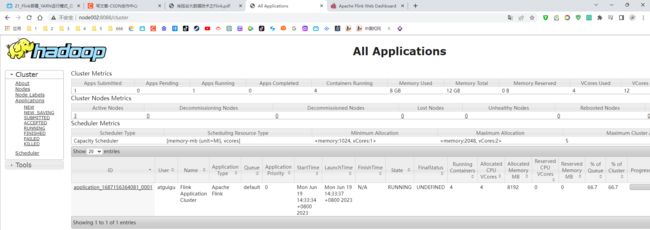

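3.5 YARN Mode (Key Topic)

Deploying on YARN works as follows: the client submits the Flink application to YARN's ResourceManager, and the ResourceManager allocates containers on YARN's NodeManagers. Flink deploys JobManager and TaskManager instances in these containers, which starts the cluster. Flink allocates TaskManager resources dynamically, based on the number of slots required by the jobs running on the JobManager.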
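The Flink YARN client has to find Hadoop: Flink does not bundle the Hadoop jars, and the Flink docs ask for HADOOP_CLASSPATH to be set, typically from `hadoop classpath`. The contents of the course's /etc/profile.d/my_env.sh are not shown in these notes; a sketch of the Hadoop-related part, assuming the Hadoop install path that appears in the application-mode log later in these notes:

```shell
# /etc/profile.d/my_env.sh (sketch): Hadoop settings for the Flink YARN client.
# Assumption: Hadoop installed at /opt/module/hadoop/hadoop-3.1.3.
export HADOOP_HOME=/opt/module/hadoop/hadoop-3.1.3
export PATH="$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin"
# Where Flink looks for the YARN/HDFS client configuration
export HADOOP_CONF_DIR="$HADOOP_HOME/etc/hadoop"
# Put the Hadoop jars on Flink's classpath (only if the hadoop command is available)
if command -v hadoop >/dev/null 2>&1; then
  export HADOOP_CLASSPATH="$(hadoop classpath)"
fi
```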

[atguigu@node001 flink-1.17.0]$ source /etc/profile.d/my_env.sh

[atguigu@node001 flink-1.17.0]$ myhadoop.sh s

Input Args Error...

[atguigu@node001 flink-1.17.0]$ myhadoop.sh start

================ Starting hadoop cluster ================

---------------- Starting hdfs ----------------

Starting namenodes on [node001]

Starting datanodes

Starting secondary namenodes [node003]

--------------- Starting yarn ---------------

Starting resourcemanager

Starting nodemanagers

--------------- Starting historyserver ---------------

[atguigu@node001 flink-1.17.0]$ jpsall

================ node001 ================

9200 JobHistoryServer

8416 NameNode

8580 DataNode

9284 Jps

8983 NodeManager

================ node002 ================

3892 ResourceManager

3690 DataNode

4365 Jps

4015 NodeManager

================ node003 ================

3680 DataNode

3778 SecondaryNameNode

3911 NodeManager

4044 Jps

P018 [018_Flink Deployment_YARN Mode_Session Mode] 18:11

[atguigu@node001 bin]$ ./yarn-session.sh --help

[atguigu@node001 bin]$ ./yarn-session.sh

[atguigu@node001 bin]$ ./yarn-session.sh -d -nm test

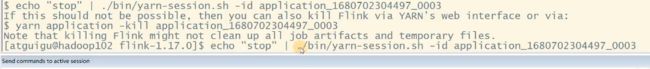
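A session started with -d (detached) keeps running after the client exits, and -nm test sets its YARN application name. To stop such a session, the approach in the Flink docs is to pipe `stop` into yarn-session.sh attached to the session by its YARN application ID; killing it through YARN also works. A minimal sketch, assuming FLINK_HOME points at the Flink install and the application ID has been read from the YARN UI or the session's output (stop_yarn_session is a hypothetical name):

```shell
#!/usr/bin/env bash
# Sketch: stop a detached Flink session on YARN by its application ID.
set -euo pipefail

stop_yarn_session() {   # $1 = YARN application ID of the session
  local app_id="$1"
  # YARN application IDs look like application_<clusterTimestamp>_<sequence>
  if ! [[ "$app_id" =~ ^application_[0-9]+_[0-9]+$ ]]; then
    echo "not a YARN application id: $app_id" >&2
    return 1
  fi
  # Attach to the running session and ask it to shut down
  echo "stop" | "${FLINK_HOME:?FLINK_HOME must be set}/bin/yarn-session.sh" -id "$app_id"
  # Alternative: yarn application -kill "$app_id"
}
```

For example: `FLINK_HOME=/opt/module/flink/flink-1.17.0 stop_yarn_session application_XXXX_YY`, with the real application ID in place of the placeholder.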

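P019 [019_Flink Deployment_YARN Mode_Stopping a Session] 04:10

3.5.3 Per-Job Mode Deployment

Because YARN provides external resource scheduling, we can also submit a single job directly to YARN, which starts a Flink cluster dedicated to that job.

P020 [020_Flink Deployment_YARN Mode_Per-Job Mode] 09:49

3.5.3 Per-Job Mode Deployment

(1) Run the command to submit the job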
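The notes do not record the command for this step; the same per-job submission appears later in the history-server section. A sketch that wraps it together with listing and cancelling a job on the per-job cluster, using the -t yarn-per-job and -Dyarn.application.id options from Flink's YARN documentation. It assumes the commands run from the Flink home directory; the application ID and JobID are placeholders you would read from the submission output or the YARN UI, and DRY_RUN=1 prints instead of running:

```shell
#!/usr/bin/env bash
# Sketch of the YARN per-job workflow; DRY_RUN=1 prints the commands instead of running them.
# Assumptions: run from the Flink home dir; main class and jar path as used in these notes.
set -euo pipefail

MAIN_CLASS=com.atguigu.wc.WordCountStreamUnboundedDemo
JAR=../jar/FlinkTutorial-1.17-1.0-SNAPSHOT.jar

run() { if [ -n "${DRY_RUN:-}" ]; then echo "$*"; else "$@"; fi; }

# Start a dedicated YARN cluster for this one job; -d returns once the job is submitted
submit_per_job() {
  run bin/flink run -d -t yarn-per-job -c "$MAIN_CLASS" "$JAR"
}

# List the jobs of the per-job cluster behind YARN application $1
list_jobs() {
  run bin/flink list -t yarn-per-job -Dyarn.application.id="$1"
}

# Cancel Flink job $2 in YARN application $1; the per-job cluster then shuts down
cancel_job() {
  run bin/flink cancel -t yarn-per-job -Dyarn.application.id="$1" "$2"
}
```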

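P021 [021_Flink Deployment_YARN Mode_Application Mode] 12:51

3.5.4 Application Mode Deployment

Application mode is just as simple: as with per-job mode, you run the flink run-application command directly.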

[atguigu@node001 flink-1.17.0]$ bin/flink run-application -t yarn-application -c com.atguigu.wc.WordCountStreamUnboundedDemo ./FlinkTutorial-1.17-1.0-SNAPSHOT.jar

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/module/flink/flink-1.17.0/lib/log4j-slf4j-impl-2.17.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/opt/module/hadoop/hadoop-3.1.3/share/hadoop/common/lib/slf4j-log4j12-1.7.25.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.apache.logging.slf4j.Log4jLoggerFactory]

2023-06-19 14:31:05,693 INFO org.apache.flink.yarn.cli.FlinkYarnSessionCli [] - Found Yarn properties file under /tmp/.yarn-properties-atguigu.

2023-06-19 14:31:05,693 INFO org.apache.flink.yarn.cli.FlinkYarnSessionCli [] - Found Yarn properties file under /tmp/.yarn-properties-atguigu.

2023-06-19 14:31:06,142 WARN org.apache.flink.yarn.configuration.YarnLogConfigUtil [] - The configuration directory ('/opt/module/flink/flink-1.17.0/conf') already contains a LOG4J config file.If you want to use logback, then please delete or rename the log configuration file.

2023-06-19 14:31:06,632 INFO org.apache.hadoop.yarn.client.RMProxy [] - Connecting to ResourceManager at node002/192.168.10.102:8032

2023-06-19 14:31:07,195 INFO org.apache.flink.yarn.YarnClusterDescriptor [] - No path for the flink jar passed. Using the location of class org.apache.flink.yarn.YarnClusterDescriptor to locate the jar

[atguigu@node001 flink-1.17.0]$ bin/flink run-application -t yarn-application -c com.atguigu.wc.WordCountStreamUnboundedDemo ./FlinkTutorial-1.17-1.0-SNAPSHOT.jar

SLF4J: Class path contains multiple SLF4J bindings.

[atguigu@node001 flink-1.17.0]$ bin/flink run-application -t yarn-application -Dyarn.provided.lib.dirs="hdfs://node001:8020/flink-dist" -c com.atguigu.wc.WordCountStreamUnboundedDemo hdfs://node001:8020/flink-jars/FlinkTutorial-1.17-1.0-SNAPSHOT.jar
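The last command relies on Flink's own jars and the job jar already being on HDFS: yarn.provided.lib.dirs points at pre-uploaded Flink libraries so they do not have to be shipped from the client on every submission, and the job jar is passed as an hdfs:// path. A sketch of the upload step, assuming the /flink-dist and /flink-jars paths from that command and a default filesystem of hdfs://node001:8020; FLINK_HOME, JOB_JAR, and DRY_RUN can be overridden:

```shell
#!/usr/bin/env bash
# Sketch: pre-upload Flink's lib/ and plugins/ plus the job jar to HDFS.
# Assumptions: fs.defaultFS is hdfs://node001:8020; DRY_RUN=1 prints instead of running.
set -euo pipefail

FLINK_HOME="${FLINK_HOME:-/opt/module/flink/flink-1.17.0}"
JOB_JAR="${JOB_JAR:-../jar/FlinkTutorial-1.17-1.0-SNAPSHOT.jar}"
run() { if [ -n "${DRY_RUN:-}" ]; then echo "$*"; else "$@"; fi; }

upload_flink_to_hdfs() {
  run hadoop fs -mkdir -p /flink-dist /flink-jars
  # Flink's own jars, referenced later via -Dyarn.provided.lib.dirs
  run hadoop fs -put "$FLINK_HOME/lib" /flink-dist
  run hadoop fs -put "$FLINK_HOME/plugins" /flink-dist
  # The application jar, passed to run-application as an hdfs:// path
  run hadoop fs -put "$JOB_JAR" /flink-jars
}
```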

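P022 [022_Flink Deployment_History Server] 08:11

3.6 K8S Mode (for reference)

Containerized deployment is a popular technique in the industry today: running from Docker images makes applications easier to manage and operate. The most popular container management tool is Kubernetes (k8s), and recent Flink versions support a k8s deployment mode as well. The basic principle is similar to YARN; see the official documentation for the specific configuration, which we won't cover in detail here.

3.7 History Server

Once a cluster running a Flink job stops, you can only look at the logs on YARN or on local disk; the job's Web UI from before it failed is no longer available, which makes it hard to tell what exactly happened at the moment the job died. Without metrics monitoring, logs are the only way to analyze and locate the problem. If the earlier Web UI could be restored, we could use it to spot and locate some problems.

Flink provides a History Server for querying the statistics of completed jobs after the corresponding Flink cluster has shut down. Normally, the Web UI statistics are visible only while a job is running; the History Server makes them available for completed jobs, whether they exited normally or abnormally.

In addition, it exposes a REST API that accepts HTTP requests and responds with JSON. After a Flink job stops, the JobManager archives the statistics of the completed job, and the History Server process can then serve queries on them, for example the last checkpoint and the configuration the job ran with.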
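For the JobManager to archive finished jobs and for the History Server to find them, both must point at the same archive directory in flink-conf.yaml. A sketch that appends the relevant keys to a given file. It assumes HDFS at hdfs://node001:8020 as elsewhere in these notes, a hypothetical archive directory /logs/flink-job, and the History Server on node001 at its default port 8082 (add_history_server_conf is a hypothetical name):

```shell
#!/usr/bin/env bash
# Sketch: append History Server settings to a flink-conf.yaml given as $1.
# Assumptions: HDFS at node001:8020, hypothetical archive dir /logs/flink-job.
set -euo pipefail

ARCHIVE_DIR="hdfs://node001:8020/logs/flink-job"

add_history_server_conf() {
  cat >> "$1" <<EOF
# JobManager uploads an archive of each finished job here
jobmanager.archive.fs.dir: $ARCHIVE_DIR
# History Server web endpoint
historyserver.web.address: node001
historyserver.web.port: 8082
# Directory the History Server scans for archives, and how often (ms)
historyserver.archive.fs.dir: $ARCHIVE_DIR
historyserver.archive.fs.refresh-interval: 5000
EOF
}
```

With the keys in place, create the directory (`hadoop fs -mkdir -p /logs/flink-job`), start the server with `bin/historyserver.sh start` as below, and open http://node001:8082; the REST endpoint /jobs/overview returns the archived jobs as JSON. A job shows up only after it has finished or been cancelled and the refresh interval has passed.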

[atguigu@node001 flink-1.17.0]$ bin/historyserver.sh start

Starting historyserver daemon on host node001.

[atguigu@node001 flink-1.17.0]$ bin/flink run -t yarn-per-job -d -c com.atguigu.wc.WordCountStreamUnboundedDemo ../jar/FlinkTutorial-1.17-1.0-SNAPSHOT.jar

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/opt/module/flink/flink-1.17.0/lib/log4j-slf4j-impl-2.17.1.jar!/org/slf4j/impl/StaticLoggerBinder.class]