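Web Scraping, Part 3 (bs4 search, basic Selenium usage, headless browser, Selenium auto-login to Baidu, fetching the 12306 login verification code automatically, switching tabs, browser back and forward, logging in to Cnblogs to get cookies, automatic upvoting)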

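Contents

- 1. Searching the document tree with bs4
- 2. CSS selectors
- 3. Basic Selenium usage
- 4. Headless browser
- 5. More Selenium usage
  - 1) Example: logging in to Baidu automatically
  - 2) Getting position, attributes, size, and text
  - 3) Example: fetching the 12306 login verification code automatically
  - 4) Waiting for elements to load
  - 5) Element operations
  - 6) Executing JavaScript
  - 7) Switching tabs
  - 8) Browser back and forward
  - 9) Exception handling
- 6. Logging in to Cnblogs with Selenium to get cookies
- 7. Semi-automatic upvoting on Chouti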

1. Searching the document tree with bs4

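from bs4 import BeautifulSoup

# Sample markup: the "three sisters" document from the Beautiful Soup docs.
# The tags and attributes are assumed to match the lookups used below.
html_doc = """
<html><head><title>The Dormouse's story</title></head>
<body>
<p class="title">asdfasdf<b>The Dormouse's story</b></p>

<p class="story">Once upon a time there were three little sisters; and their names were
<a href="http://example.com/elsie" class="sister" id="link1">Elsie</a>,
<a href="http://example.com/lacie" class="sister" id="link2">Lacie</a> and
<a href="http://example.com/tillie" class="sister" id="link3">Tillie</a>;
and they lived at the bottom of a well.</p>

<p class="story">...</p>
"""

soup = BeautifulSoup(html_doc, 'lxml')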

Strings

res = soup.find(name='a', id='link2')

res1 = soup.find(href='http://example.com/tillie')

res2 = soup.find(class_='story')

res3 = soup.body.find('p')

res4 = soup.body.find(string='Elsie')

res5 = soup.find(attrs={'class': 'sister'})

print(res, res1, res2, res3, res4, res5)

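Regular expressions

import re

res = soup.find_all(name=re.compile('^b'))  # tags whose name starts with "b"
res1 = soup.find_all(href=re.compile('^http'))  # tags whose href starts with "http"
for item in res1:
    url = item.attrs.get('href')
    print(url)
res2 = soup.find(attrs={'href': re.compile('^a')})  # first tag whose href starts with "a", or None if there is none
print(res2)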

Lists

res = soup.find_all(class_=['story', 'sister'])

res1 = soup.find_all(name=['a', 'p'])

print(res)

Booleans

res = soup.find_all(name=True)

print(res)

Functions

def has_class_but_no_id(tag):
    # keep tags that have a class attribute but no id attribute
    return tag.has_attr('class') and not tag.has_attr('id')

print(soup.find_all(has_class_but_no_id))

2. CSS selectors

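from bs4 import BeautifulSoup

# Sample markup: the "three sisters" document from the Beautiful Soup docs.
# The tags and attributes are assumed to match the selectors used below.
html_doc = """
<html><head><title>The Dormouse's story</title></head>
<body>
<p class="title">asdfasdf<b>The Dormouse's story</b></p>

<p class="story">Once upon a time there were three little sisters; and their names were
<a href="http://example.com/elsie" class="sister" id="link1">Elsie</a>,
<a href="http://example.com/lacie" class="sister" id="link2">Lacie</a> and
<a href="http://example.com/tillie" class="sister" id="link3">Tillie</a>;
and they lived at the bottom of a well.</p>

<p class="story">...</p>
"""

soup = BeautifulSoup(html_doc, 'lxml')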

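res = soup.select('a')  # every <a> tag
res1 = soup.select('#link1')  # the element with id="link1"
res2 = soup.select('.sister')  # every element with class "sister"
res3 = soup.select('body>p>a')  # <a> tags directly inside a <p> that is directly inside <body>
res4 = soup.select('body>p>a:nth-child(2)')  # same, but only an <a> that is the 2nd child of its parent
res5 = soup.select('body>p>a:nth-last-child(1)')  # same, but only an <a> that is the last child of its parent
res6 = soup.select('a[href="http://example.com/tillie"]')  # <a> tags with exactly this href
print(res6)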

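3. Basic Selenium usage

Selenium started out as a browser automation tool for testing. Scrapers use it mainly because Requests cannot execute JavaScript, so pages that build their content with scripts come back incomplete.

Selenium drives a real browser and reproduces what a user does in it, such as navigating, typing, clicking, and scrolling, so you get the page as it looks after rendering. It supports multiple browsers.

Install Selenium

pip3 install selenium

Download the browser driver that matches the version of your browser

https://registry.npmmirror.com/binary.html?path=chromedriver/

Basic usage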

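from selenium import webdriver
from selenium.webdriver.chrome.service import Service
import time

# executable_path is deprecated in Selenium 4 and rejected by recent releases; pass the driver path through Service
bro = webdriver.Chrome(service=Service('./chromedriver'))  # start Chrome through the downloaded driver
bro.get('http://www.baidu.com')  # target URL
time.sleep(3)  # pause for three seconds
bro.close()  # close the current tab
bro.quit()  # shut down the browser and the driver process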

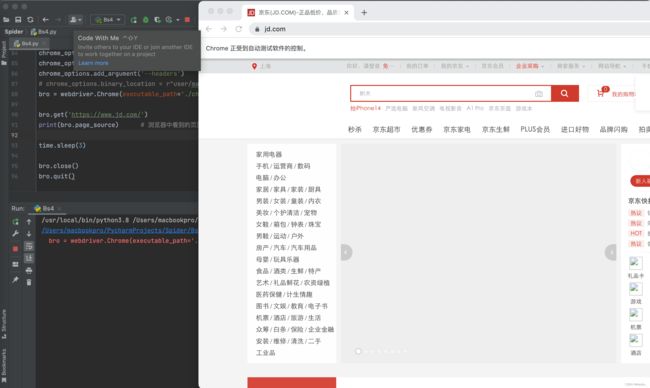

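4. Headless browser

When scraping, you usually do not want a browser window to pop up. Chrome supports a headless mode that runs in the background without a graphical (GUI) window.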

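import time
from selenium import webdriver
from selenium.webdriver.chrome.options import Options
from selenium.webdriver.chrome.service import Service

chrome_options = Options()  # container for Chrome launch options
chrome_options.add_argument('--window-size=1920,3000')  # window size as width,height
chrome_options.add_argument('--disable-gpu')  # Google's documentation suggests this flag to work around a bug
chrome_options.add_argument('--blink-settings=imagesEnabled=false')  # skip images to load pages faster
chrome_options.add_argument('--headless')  # no visible window; on Linux without a display, Chrome fails to start without this
# chrome_options.binary_location = r"user/macbookpro/......"  # set the browser binary location manually
bro = webdriver.Chrome(service=Service('./chromedriver'), options=chrome_options)
bro.get('https://www.jd.com/')  # target URL
print(bro.page_source)  # the page content as rendered by the browser
time.sleep(3)
bro.close()  # close the tab
bro.quit()  # close the browser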

5. More Selenium usage

1) Example: logging in to Baidu automatically

import time
from selenium import webdriver
from selenium.webdriver.chrome.service import Service
from selenium.webdriver.common.by import By

bro = webdriver.Chrome(service=Service('./chromedriver'))
bro.get('http://www.baidu.com')
bro.implicitly_wait(10)  # element lookups keep retrying for up to 10 seconds
bro.maximize_window()  # maximize the window
a = bro.find_element(by=By.LINK_TEXT, value='登录')  # find the login link by its text
a.click()
input_name = bro.find_element(by=By.ID, value='TANGRAM__PSP_11__userName')
input_name.send_keys('xxxxxxx')  # account
time.sleep(1)
input_password = bro.find_element(by=By.ID, value='TANGRAM__PSP_11__password')
input_password.send_keys('xxxxxx')  # password
time.sleep(1)
input_submit = bro.find_element(by=By.ID, value='TANGRAM__PSP_11__submit')
input_submit.click()  # click the login button
time.sleep(3)
bro.close()

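2) Getting position, attributes, size, and text

bro.find_element(by=By.ID, value='element id')
bro.find_element(by=By.LINK_TEXT, value='exact text of an <a> tag')
bro.find_element(by=By.PARTIAL_LINK_TEXT, value='part of the text of an <a> tag')
bro.find_element(by=By.CLASS_NAME, value='class name')
bro.find_element(by=By.TAG_NAME, value='tag name')
bro.find_element(by=By.NAME, value='value of the name attribute')
# ----- these two work the same in every language -----
bro.find_element(by=By.CSS_SELECTOR, value='CSS selector')
bro.find_element(by=By.XPATH, value='XPath expression')

# position and size of an element (code is an element returned by find_element)
print(code.location)
print(code.size)
# -------
print(code.tag_name)
print(code.id)  # Selenium's internal element reference, not the HTML id attribute
print(code.text)  # visible text of the element
print(code.get_attribute('src'))  # value of an attribute, here src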

3) Example: fetching the 12306 login verification code automatically

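import base64
import time
from selenium import webdriver
from selenium.webdriver.chrome.service import Service
from selenium.webdriver.common.by import By

bro = webdriver.Chrome(service=Service('./chromedriver'))
bro.get('https://kyfw.12306.cn/otn/resources/login.html')
a = bro.find_element(by=By.LINK_TEXT, value='扫码登录')  # the "scan to log in" tab
a.click()
code = bro.find_element(by=By.CSS_SELECTOR, value='#J-qrImg')  # the QR code image shown for login
s = code.get_attribute('src')  # expected to be a data URI: the base64 payload follows the comma
with open('code.png', 'wb') as f:
    res = base64.b64decode(s.split(',')[-1])  # decode only the part after the last comma
    f.write(res)
time.sleep(3)
bro.close()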
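The decoding step above can be wrapped in a small helper that checks the input before decoding. This is a minimal sketch using only the standard library; the name decode_data_uri is hypothetical, and the sample string is hand-made rather than taken from the 12306 page.

```python
import base64


def decode_data_uri(src):
    """Return the raw bytes carried by a base64 data URI such as 'data:image/png;base64,....'."""
    header, sep, payload = src.partition(',')
    # A base64 data URI looks like "data:<mime type>;base64,<payload>"
    if not sep or not header.startswith('data:') or not header.endswith(';base64'):
        raise ValueError('not a base64 data URI')
    return base64.b64decode(payload)


# Hand-made sample instead of a real QR image
sample = 'data:text/plain;base64,' + base64.b64encode(b'hello').decode('ascii')
print(decode_data_uri(sample))  # b'hello'
```

With this in place, an src that turns out to be an ordinary URL raises ValueError instead of silently writing a broken code.png.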

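4) Waiting for elements to load

# The script runs faster than the page loads, so looking up a tag that has not appeared yet fails.
# An implicit wait makes every lookup keep retrying until the element appears or the time runs out.
bro.implicitly_wait(10)  # wait up to 10 seconds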
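The idea behind waiting, retrying a check until it succeeds or a deadline passes, can be sketched in plain Python. This is an illustration of the polling pattern only, not Selenium's implementation; wait_until and its parameters are hypothetical names. For real scripts, Selenium ships its own explicit wait, WebDriverWait in selenium.webdriver.support.ui.

```python
import time


def wait_until(condition, timeout=10.0, poll_interval=0.5):
    """Call condition() repeatedly until it returns a truthy value or timeout seconds pass."""
    deadline = time.monotonic() + timeout
    while True:
        result = condition()
        if result:
            return result  # hand back whatever the condition produced
        if time.monotonic() >= deadline:
            raise TimeoutError(f'condition not met within {timeout} seconds')
        time.sleep(poll_interval)  # pause before the next attempt
```

With a driver at hand, a call could look like wait_until(lambda: bro.find_elements(By.ID, 'some-id')), since find_elements returns an empty list while nothing matches; 'some-id' is a placeholder.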

5) Element operations

Click

element.click()

Type text into an input

element.send_keys('text')

Clear the text in an input

element.clear()

Simulate keyboard input

from selenium.webdriver.common.keys import Keys

input_search.send_keys(Keys.ENTER)

6) Executing JavaScript

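import time
from selenium import webdriver
from selenium.webdriver.chrome.service import Service

bro = webdriver.Chrome(service=Service('./chromedriver'))
bro.get('https://www.jd.com')
bro.execute_script('alert(document.cookie)')  # show the page's cookies in an alert box
time.sleep(2)
bro.switch_to.alert.accept()  # dismiss the alert so the following commands are not blocked
bro.execute_script('scrollTo(0, 600)')  # scroll to 600px from the top
for i in range(10):  # scroll down in 400px steps
    y = 400 * (i + 1)
    bro.execute_script('scrollTo(0, %s)' % y)
    time.sleep(1)
bro.execute_script('scrollTo(0, document.body.scrollHeight)')  # jump to the bottom of the page
time.sleep(3)
bro.close()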
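The loop above always scrolls ten times, whatever the page length. As a small sketch, the offsets can instead be computed from the page height so that the last step lands exactly at the bottom. The helper name scroll_offsets is hypothetical and the code is plain Python with no browser involved.

```python
def scroll_offsets(page_height, step=400):
    """Yield the vertical offsets to scroll to, ending exactly at page_height."""
    if step <= 0:
        raise ValueError('step must be positive')
    y = step
    while y < page_height:
        yield y
        y += step
    yield page_height  # finish at the bottom of the page


print(list(scroll_offsets(1000, 400)))  # [400, 800, 1000]
```

In a Selenium script the height could come from bro.execute_script('return document.body.scrollHeight'), and each offset could be passed as bro.execute_script('scrollTo(0, arguments[0])', y).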

7) Switching tabs

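import time
from selenium import webdriver
from selenium.webdriver.chrome.service import Service

bro = webdriver.Chrome(service=Service('./chromedriver'))
bro.get('https://www.jd.com')  # first tab: JD
bro.execute_script('window.open()')  # open a new, empty tab
bro.switch_to.window(bro.window_handles[1])  # switch to the new tab, which will load Taobao
bro.get('http://www.taobao.com')
time.sleep(2)
bro.switch_to.window(bro.window_handles[0])  # switch back to the JD tab
time.sleep(3)
bro.close()
bro.quit()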

8) Browser back and forward

import time
from selenium import webdriver
from selenium.webdriver.chrome.service import Service

bro = webdriver.Chrome(service=Service('./chromedriver'))
bro.get('https://www.jd.com/')
time.sleep(2)
bro.get('https://www.taobao.com/')
time.sleep(2)
bro.get('https://www.baidu.com/')
bro.back()  # go back one page
time.sleep(1)
bro.forward()  # go forward one page
time.sleep(3)
bro.close()

9) Exception handling

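import time
from selenium import webdriver
from selenium.webdriver.chrome.service import Service

bro = webdriver.Chrome(service=Service('./chromedriver'))
bro.get('https://www.jd.com/')
try:
    time.sleep(2)
    bro.get('https://www.taobao.com/')
    time.sleep(2)
    bro.get('https://www.baidu.com/')
    bro.back()  # go back one page
    time.sleep(1)
    bro.forward()  # go forward one page
    time.sleep(3)
except Exception as e:
    print(e)
finally:
    bro.close()  # runs whether or not an exception was raised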
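One way to avoid repeating the cleanup in every script is to wrap it in a context manager. The following is a sketch under stated assumptions: managed_browser is a hypothetical helper, and factory is any zero-argument callable that returns an object with a quit() method, such as a Selenium driver.

```python
from contextlib import contextmanager


@contextmanager
def managed_browser(factory):
    """Create a browser with factory() and always call quit() on it afterwards."""
    bro = factory()
    try:
        yield bro
    finally:
        bro.quit()  # runs whether the with-body finished normally or raised
```

Usage could look like `with managed_browser(lambda: webdriver.Chrome(service=Service('./chromedriver'))) as bro:` followed by the usual bro.get(...) calls. An exception inside the block still propagates, but the browser is shut down first.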

6. Logging in to Cnblogs with Selenium to get cookies

import json
import time
from selenium import webdriver
from selenium.webdriver.chrome.service import Service
from selenium.webdriver.common.by import By

bro = webdriver.Chrome(service=Service('./chromedriver'))
bro.get('https://www.cnblogs.com/')
bro.implicitly_wait(10)
try:
    submit_btn = bro.find_element(By.LINK_TEXT, value='登录')  # the login link on the home page
    submit_btn.click()
    time.sleep(1)
    username = bro.find_element(By.ID, value='mat-input-0')  # username input
    password = bro.find_element(By.ID, value='mat-input-1')  # password input
    username.send_keys('xxxxxxx')  # account (placeholder)
    password.send_keys('1231')  # password
    # Login button. The selector comes from right-clicking the element in the browser's developer tools and choosing "Copy selector".
    submit = bro.find_element(By.CSS_SELECTOR, value='body > app-root > app-sign-in-layout > div > div > app-sign-in > app-content-container > div > div > div > form > div > button')
    time.sleep(20)
    submit.click()  # click the login button
    input()  # once login has finished in the browser, press Enter in the console to continue
    cookie = bro.get_cookies()
    print(cookie)
    with open('cnblogs.json', 'w', encoding='utf-8') as f:  # save the cookies locally
        json.dump(cookie, f)
    time.sleep(5)
except Exception as e:
    print(e)
finally:
    bro.close()

7. Semi-automatic upvoting on Chouti

import json
import time
import requests
from selenium import webdriver
from selenium.webdriver.chrome.service import Service
from selenium.webdriver.common.by import By

bro = webdriver.Chrome(service=Service('./chromedriver.exe'))
bro.get('https://dig.chouti.com/')
bro.implicitly_wait(10)
l = []  # article ids; defined before try so it exists even if login fails
try:
    submit = bro.find_element(by=By.ID, value='login_btn')
    bro.execute_script("arguments[0].click()", submit)
    # submit.click()  # on some pages the button is found but a normal click raises an error; clicking it through JS works
    time.sleep(2)
    username = bro.find_element(by=By.NAME, value='phone')
    username.send_keys('xxxxxxx')  # account (placeholder)
    password = bro.find_element(by=By.NAME, value='password')
    password.send_keys('xxxxxx')
    time.sleep(3)
    submit_button = bro.find_element(
        By.CSS_SELECTOR,
        'body > div.login-dialog.dialog.animated2.scaleIn > div > div.login-footer > div:nth-child(4) > button')
    submit_button.click()
    # Captcha: solve it by hand in the browser, then press Enter in the console
    input()
    cookie = bro.get_cookies()
    print(cookie)
    with open('chouti.json', 'w', encoding='utf-8') as f:
        json.dump(cookie, f)
    # Collect the id of every article on the page
    div_list = bro.find_elements(By.CLASS_NAME, 'link-item')
    for div in div_list:
        article_id = div.get_attribute('data-id')
        l.append(article_id)
except Exception as e:
    print(e)
finally:
    bro.close()

# Selenium has done its job (log in, get the cookies); from here on, requests sends the upvotes
print(l)
with open('chouti.json', 'r', encoding='utf-8') as f:
    cookie = json.load(f)
# Detail: requests cannot use Selenium's cookie list directly; convert it to a name -> value dict first
request_cookies = {}
for item in cookie:
    request_cookies[item['name']] = item['value']
print(request_cookies)
header = {
    'User-Agent': 'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/106.0.0.0 Safari/537.36'
}
for i in l:
    data = {
        'linkId': i
    }
    res = requests.post('https://dig.chouti.com/link/vote', data=data, headers=header, cookies=request_cookies)
    print(res.text)
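The cookie conversion used above can be pulled out into reusable helpers. This is a minimal sketch: the function names are hypothetical, the sample cookies are made up, and only the name and value keys are assumed to be present in each cookie dict.

```python
import json


def to_requests_cookies(selenium_cookies):
    """Turn the list of cookie dicts from get_cookies() into a {name: value} dict."""
    return {item['name']: item['value'] for item in selenium_cookies}


def load_requests_cookies(path):
    """Read a cookie list saved with json.dump and convert it for use with requests."""
    with open(path, 'r', encoding='utf-8') as f:
        return to_requests_cookies(json.load(f))


# Hand-written sample in the shape that get_cookies() returns (values are made up)
sample = [
    {'name': 'token', 'value': 'abc123', 'domain': '.example.com', 'path': '/'},
    {'name': 'lang', 'value': 'zh', 'domain': '.example.com', 'path': '/'},
]
print(to_requests_cookies(sample))  # {'token': 'abc123', 'lang': 'zh'}
```

The resulting dict can be passed as the cookies argument of requests.post, as in the loop above. Fields such as domain, path, and expiry are dropped, which is fine when every request goes to the site the cookies came from.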