Building a Kubernetes Cluster from Binaries


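Kubernetes architecture

The master node is the control node of the cluster: it schedules and manages the cluster and accepts operation requests from users outside the cluster.

The main components on the master node are:

1. kube-apiserver: the entry point for cluster control. It exposes an HTTP REST API, persists cluster state in etcd, and provides authentication, authorization, admission control, and API registration and discovery.

2. kube-controller-manager: the automated control center for all resource objects in the cluster. It runs the cluster's routine background tasks, with one controller per resource type.

3. kube-scheduler: schedules Pods by selecting the node on which each workload is deployed.

4. etcd database (can also be installed on separate servers): the storage system that holds the cluster's data.

The main components on a worker node are:

1. kubelet: the master's agent on each node, which manages the containers on that machine.

- It is an agent that runs on every node in the cluster and makes sure containers are running in Pods.

- It maintains the container lifecycle and also manages volumes (CSI) and networking (CNI).

2. kube-proxy: provides network proxying and load balancing.

3. Container runtime, for example Docker

- The container runtime is the software responsible for running containers.

- Kubernetes supports several container runtimes: Docker, containerd, cri-o, rktlet, and any other software that implements the Kubernetes CRI (Container Runtime Interface).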

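1. Preparation

Before starting, the machines for the Kubernetes cluster must meet the following requirements:

- One or more machines running CentOS 7.x

- Hardware: 2 GB of RAM, 2 CPUs, 30 GB of disk

- Full network connectivity between all machines in the cluster

- Internet access for pulling images; if a server has no Internet access, download the images in advance and import them onto the nodes

- Swap disabled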

2. Preparing the virtual machines

We prepared three virtual machines for this test installation:

| Hostname | IP | Components |
|---|---|---|
| m1 | 192.168.11.187 | kube-apiserver, kube-controller-manager, kube-scheduler, etcd |
| n1 | 192.168.11.188 | kubelet, kube-proxy, docker, flannel, etcd |
| n2 | 192.168.11.189 | kubelet, kube-proxy, docker, flannel, etcd |


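3. Operating system initialization

Next, perform the following initialization steps.

# Disable the firewall
systemctl stop firewalld
systemctl disable firewalld

# Disable SELinux
# Permanently
sed -i 's/enforcing/disabled/' /etc/selinux/config
# For the current session
setenforce 0

# Disable swap
# For the current session
swapoff -a
# Permanently
sed -ri 's/.*swap.*/#&/' /etc/fstab

# Set the hostname according to the plan (run on the master node)
hostnamectl set-hostname m1
# Set the hostname according to the plan (run on node 1)
hostnamectl set-hostname n1
# Set the hostname according to the plan (run on node 2)
hostnamectl set-hostname n2

# Add hosts entries on the master
cat >> /etc/hosts << EOF
192.168.11.187 m1
192.168.11.188 n1
192.168.11.189 n2
EOF

# Pass bridged IPv4 traffic to the iptables chains
# (the net.bridge.* keys only exist once the br_netfilter module is loaded)
modprobe br_netfilter
cat > /etc/sysctl.d/k8s.conf << EOF
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
# Apply the settings
sysctl --system

# Synchronize the clock
yum install ntpdate -y
ntpdate time.windows.com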
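
To make the permanent swap step less opaque, here is a minimal sketch of what the `sed -ri 's/.*swap.*/#&/'` expression does, run against a throwaway file instead of the real /etc/fstab. The function name `disable_swap_entries` and the sample fstab lines are assumptions made for illustration only.

```shell
# Comment out every line that mentions "swap" in an fstab-style file.
# disable_swap_entries is a hypothetical helper; the guide edits /etc/fstab directly.
disable_swap_entries() {
    local fstab_file="$1"
    # -r: extended regex, -i: edit in place; "&" re-inserts the whole matched line.
    sed -ri 's/.*swap.*/#&/' "$fstab_file"
}

# Demonstration on a temporary file with two made-up entries.
demo_fstab="$(mktemp)"
printf '%s\n' \
    '/dev/mapper/centos-root /    xfs  defaults 0 0' \
    '/dev/mapper/centos-swap swap swap defaults 0 0' > "$demo_fstab"
disable_swap_entries "$demo_fstab"
cat "$demo_fstab"
```

Only the swap line gains a leading `#`; the root filesystem entry is left untouched. Running the command a second time would simply add another `#` to the same line.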

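4. Deploying the etcd cluster

etcd is a distributed key-value store that Kubernetes uses for its data, so we prepare an etcd database first. To avoid a single point of failure, etcd should be deployed as a cluster. This guide uses 3 machines, which tolerates the failure of 1 machine; a 5-machine cluster tolerates the failure of 2.

| Node name | IP |
|---|---|
| etcd-1 | 192.168.11.187 |
| etcd-2 | 192.168.11.188 |
| etcd-3 | 192.168.11.189 |

Note: to save machines, etcd shares hosts with the K8s nodes here. It can also be deployed outside the k8s cluster, as long as the apiserver can connect to it.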
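
The fault-tolerance figures above follow from majority quorum: a cluster of N members needs floor(N/2)+1 members to agree, so it survives floor((N-1)/2) failures. A small sketch of that arithmetic (the function names are made up for this example):

```shell
# Majority quorum and tolerated failures for an etcd cluster of N members.
# Both helper names are hypothetical, chosen for this illustration.
etcd_quorum() { echo $(( $1 / 2 + 1 )); }
etcd_fault_tolerance() { echo $(( ($1 - 1) / 2 )); }

for members in 1 3 5; do
    echo "members=$members quorum=$(etcd_quorum "$members") tolerated_failures=$(etcd_fault_tolerance "$members")"
done
```

An even member count adds no tolerance over the next lower odd count, which is why 3 and 5 are the usual choices.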

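4.1 Prepare the cfssl certificate tool

cfssl is an open-source certificate management tool that generates certificates from JSON files and is more convenient to use than openssl. Any server will do for this step; here we use the master node.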

[root@m1 ~]# wget https://pkg.cfssl.org/R1.2/cfssl_linux-amd64

[root@m1 ~]# wget https://pkg.cfssl.org/R1.2/cfssljson_linux-amd64

[root@m1 ~]# wget https://pkg.cfssl.org/R1.2/cfssl-certinfo_linux-amd64

[root@m1 ~]# chmod +x cfssl_linux-amd64 cfssljson_linux-amd64 cfssl-certinfo_linux-amd64

[root@m1 ~]# mv cfssl_linux-amd64 /usr/local/bin/cfssl

[root@m1 ~]# mv cfssljson_linux-amd64 /usr/local/bin/cfssljson

[root@m1 ~]# mv cfssl-certinfo_linux-amd64 /usr/local/bin/cfssl-certinfo

4.2 Generate the etcd certificates

(1) Self-signed certificate authority (CA)

[root@m1 ~]# mkdir -p ~/TLS/{etcd,k8s}
[root@m1 ~]# cd ~/TLS/etcd

Self-signed CA:

[root@m1 etcd]# cat > ca-config.json << EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"www": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

[root@m1 etcd]# cat > ca-csr.json<< EOF

{

"CN": "etcd CA",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing"

}

]

}

EOF

Generate the certificate:

[root@m1 etcd]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

2021/01/29 12:56:38 [INFO] generating a new CA key and certificate from CSR

2021/01/29 12:56:38 [INFO] generate received request

2021/01/29 12:56:38 [INFO] received CSR

2021/01/29 12:56:38 [INFO] generating key: rsa-2048

2021/01/29 12:56:38 [INFO] encoded CSR

2021/01/29 12:56:38 [INFO] signed certificate with serial number 626998253804116666178693896935885801741945418830

[root@m1 etcd]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem

[root@m1 etcd]# ls *pem

ca-key.pem ca.pem

(2) Issue the etcd HTTPS certificate with the self-signed CA

Create the certificate request file:

[root@m1 etcd]# cat > server-csr.json << EOF

{

"CN": "etcd",

"hosts": [

"192.168.11.187",

"192.168.11.188",

"192.168.11.189"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing"

}

]

}

EOF

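Note: the hosts field in the file above must contain the cluster-internal IP of every etcd node, without exception. To make later expansion easier, you can add a few spare IPs in advance.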
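
Because a missing IP in the hosts field only shows up later as a TLS failure, it can help to generate the request file from one list of addresses. The following is a sketch, not part of cfssl: `render_etcd_csr` is a hypothetical helper that prints the same JSON as above with the hosts array built from its arguments.

```shell
# Print a cfssl certificate request whose "hosts" array lists every IP given as an argument.
# render_etcd_csr is a hypothetical helper name, not a cfssl command.
render_etcd_csr() {
    local hosts="" ip
    for ip in "$@"; do
        # Append each IP as a quoted JSON string, comma-separated.
        hosts="${hosts:+$hosts, }\"$ip\""
    done
    cat << EOF
{
  "CN": "etcd",
  "hosts": [$hosts],
  "key": { "algo": "rsa", "size": 2048 },
  "names": [ { "C": "CN", "L": "BeiJing", "ST": "BeiJing" } ]
}
EOF
}

# The three etcd nodes of this guide; add spare IPs here if you plan to expand.
render_etcd_csr 192.168.11.187 192.168.11.188 192.168.11.189
```

Redirecting the output to server-csr.json yields a file equivalent to the one created by hand above.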

Generate the certificate:

[root@m1 etcd]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=www server-csr.json | cfssljson -bare server

2021/01/29 13:01:14 [INFO] generate received request

2021/01/29 13:01:14 [INFO] received CSR

2021/01/29 13:01:14 [INFO] generating key: rsa-2048

2021/01/29 13:01:14 [INFO] encoded CSR

2021/01/29 13:01:14 [INFO] signed certificate with serial number 352987269900645375778410919481069285400609343984

2021/01/29 13:01:14 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@m1 etcd]#

[root@m1 etcd]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem server.csr server-csr.json server-key.pem server.pem

[root@m1 etcd]#

[root@m1 etcd]# ls server*pem

server-key.pem server.pem

[root@m1 etcd]#

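4.3 Deploy the etcd cluster

The following steps are performed on node 1. To keep things simple, all files generated on node 1 are copied to node 2 and node 3 afterwards.

(1) Create the working directory and download the binary package

Download URL: https://github.com/etcd-io/etcd/releases/download/v3.4.9/etcd-v3.4.9-linux-amd64.tar.gz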

[root@m1 etcd]# cd ~

[root@m1 ~]# wget https://github.com/etcd-io/etcd/releases/download/v3.4.9/etcd-v3.4.9-linux-amd64.tar.gz

[root@m1 ~]# mkdir -p /opt/etcd/{bin,cfg,ssl} # bin holds the executables, cfg the configuration files, ssl the certificates

[root@m1 ~]# tar zxvf etcd-v3.4.9-linux-amd64.tar.gz

[root@m1 ~]# mv etcd-v3.4.9-linux-amd64/{etcd,etcdctl} /opt/etcd/bin/

(2) Create the etcd configuration file

[root@m1 ~]# cat > /opt/etcd/cfg/etcd.conf << EOF

#[Member]

ETCD_NAME="etcd-1"

ETCD_DATA_DIR="/var/lib/etcd/default.etcd"

ETCD_LISTEN_PEER_URLS="https://192.168.11.187:2380"

ETCD_LISTEN_CLIENT_URLS="https://192.168.11.187:2379"

#[Clustering]

ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.11.187:2380"

ETCD_ADVERTISE_CLIENT_URLS="https://192.168.11.187:2379"

ETCD_INITIAL_CLUSTER="etcd-1=https://192.168.11.187:2380,etcd-2=https://192.168.11.188:2380,etcd-3=https://192.168.11.189:2380"

ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"

ETCD_INITIAL_CLUSTER_STATE="new"

EOF

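ETCD_NAME: node name, unique within the cluster

ETCD_DATA_DIR: data directory

ETCD_LISTEN_PEER_URLS: listen address for peer (cluster) traffic

ETCD_LISTEN_CLIENT_URLS: listen address for client traffic

ETCD_INITIAL_ADVERTISE_PEER_URLS: peer address advertised to the rest of the cluster

ETCD_ADVERTISE_CLIENT_URLS: client address advertised to clients

ETCD_INITIAL_CLUSTER: addresses of all cluster members

ETCD_INITIAL_CLUSTER_TOKEN: cluster token

ETCD_INITIAL_CLUSTER_STATE: state when joining the cluster; new means a new cluster, existing means joining an existing cluster

(3) Manage etcd with systemd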

[root@m1 ~]# cat > /usr/lib/systemd/system/etcd.service << EOF

[Unit]

Description=Etcd Server

After=network.target

After=network-online.target

Wants=network-online.target

[Service]

Type=notify

EnvironmentFile=/opt/etcd/cfg/etcd.conf

ExecStart=/opt/etcd/bin/etcd \

--cert-file=/opt/etcd/ssl/server.pem \

--key-file=/opt/etcd/ssl/server-key.pem \

--peer-cert-file=/opt/etcd/ssl/server.pem \

--peer-key-file=/opt/etcd/ssl/server-key.pem \

--trusted-ca-file=/opt/etcd/ssl/ca.pem \

--peer-trusted-ca-file=/opt/etcd/ssl/ca.pem \

--logger=zap

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

(4) Copy the certificates generated earlier

Copy the certificates generated earlier to the path used in the configuration:

[root@m1 ~]# cp ~/TLS/etcd/ca*pem ~/TLS/etcd/server*pem /opt/etcd/ssl/

(5) Copy all files generated on node 1 to node 2 and node 3

# Copy to node 2
scp -r /opt/etcd/ root@192.168.11.188:/opt/
scp /usr/lib/systemd/system/etcd.service root@192.168.11.188:/usr/lib/systemd/system/

# Copy to node 3
scp -r /opt/etcd/ root@192.168.11.189:/opt/
scp /usr/lib/systemd/system/etcd.service root@192.168.11.189:/usr/lib/systemd/system/

Then, on node 2 and node 3, edit etcd.conf and change the node name and the current server's IP. The values below are for node 2; the trailing comments are annotations for this guide and need not be kept in the file.

vim /opt/etcd/cfg/etcd.conf

#[Member]
ETCD_NAME="etcd-2" # change this: etcd-2 on node 2, etcd-3 on node 3
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://192.168.11.188:2380" # change to the current server's IP
ETCD_LISTEN_CLIENT_URLS="https://192.168.11.188:2379" # change to the current server's IP
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://192.168.11.188:2380" # change to the current server's IP
ETCD_ADVERTISE_CLIENT_URLS="https://192.168.11.188:2379" # change to the current server's IP
ETCD_INITIAL_CLUSTER="etcd-1=https://192.168.11.187:2380,etcd-2=https://192.168.11.188:2380,etcd-3=https://192.168.11.189:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
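
Editing the file by hand on each node is easy to get wrong, since only the name and the four node-specific URLs change while the member list stays the same. As a sketch under that assumption, the hypothetical helper `render_etcd_conf` below prints a complete etcd.conf for a given member name and IP:

```shell
# Member list from this guide; it is identical on every node.
ETCD_CLUSTER="etcd-1=https://192.168.11.187:2380,etcd-2=https://192.168.11.188:2380,etcd-3=https://192.168.11.189:2380"

# Print an etcd.conf for one member. render_etcd_conf is a hypothetical helper.
# $1: member name (for example etcd-2), $2: that server's IP.
render_etcd_conf() {
    local name="$1" ip="$2"
    cat << EOF
#[Member]
ETCD_NAME="$name"
ETCD_DATA_DIR="/var/lib/etcd/default.etcd"
ETCD_LISTEN_PEER_URLS="https://$ip:2380"
ETCD_LISTEN_CLIENT_URLS="https://$ip:2379"
#[Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://$ip:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://$ip:2379"
ETCD_INITIAL_CLUSTER="$ETCD_CLUSTER"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"
EOF
}

# Configuration for node 2; redirect to /opt/etcd/cfg/etcd.conf on that node to use it.
render_etcd_conf etcd-2 192.168.11.188
```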

(6) Start etcd and enable it at boot (on all 3 nodes)

systemctl daemon-reload

systemctl start etcd

systemctl enable etcd

systemctl status etcd

(7) Check the cluster status

ETCDCTL_API=3 /opt/etcd/bin/etcdctl --cacert=/opt/etcd/ssl/ca.pem --cert=/opt/etcd/ssl/server.pem --key=/opt/etcd/ssl/server-key.pem --endpoints="https://192.168.11.187:2379,https://192.168.11.188:2379,https://192.168.11.189:2379" endpoint health

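If every endpoint is reported as healthy, the cluster has been deployed successfully.

If something goes wrong, check the logs first: /var/log/messages or journalctl -u etcd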

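5. Installing Docker

The following steps are performed on all nodes. A binary installation is used here; installing with yum works just as well.

(1) Download the binary package

Download URL: https://download.docker.com/linux/static/stable/x86_64/docker-19.03.9.tgz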

wget https://download.docker.com/linux/static/stable/x86_64/docker-19.03.9.tgz

tar zxvf docker-19.03.9.tgz

mv docker/* /usr/bin

(2) Manage docker with systemd

cat > /usr/lib/systemd/system/docker.service << EOF

[Unit]

Description=Docker Application Container Engine

Documentation=https://docs.docker.com

After=network-online.target firewalld.service

Wants=network-online.target

[Service]

Type=notify

ExecStart=/usr/bin/dockerd

ExecReload=/bin/kill -s HUP $MAINPID

LimitNOFILE=infinity

LimitNPROC=infinity

LimitCORE=infinity

TimeoutStartSec=0

Delegate=yes

KillMode=process

Restart=on-failure

StartLimitBurst=3

StartLimitInterval=60s

[Install]

WantedBy=multi-user.target

EOF

(3) Create the configuration file

mkdir /etc/docker

cat > /etc/docker/daemon.json << EOF

{

"registry-mirrors": ["https://b9pmyelo.mirror.aliyuncs.com"]

}

EOF

registry-mirrors: the Alibaba Cloud registry mirror (image accelerator)

(4) Start docker and enable it at boot

systemctl daemon-reload

systemctl start docker

systemctl enable docker

systemctl status docker

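6. Generating certificates (master node only)

6.1 Generate the kube-apiserver certificate (used to deploy the master node)

(1) Self-signed certificate authority (CA)

# Change directory
cd ~/TLS/k8s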

[root@m1 k8s]# cat > ca-config.json << EOF

{

"signing": {

"default": {

"expiry": "87600h"

},

"profiles": {

"kubernetes": {

"expiry": "87600h",

"usages": [

"signing",

"key encipherment",

"server auth",

"client auth"

]

}

}

}

}

EOF

[root@m1 k8s]# cat > ca-csr.json<< EOF

{

"CN": "kubernetes",

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "Beijing",

"ST": "Beijing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

(2) Generate the certificate:

[root@m1 k8s]# cfssl gencert -initca ca-csr.json | cfssljson -bare ca -

2021/01/29 15:17:54 [INFO] generating a new CA key and certificate from CSR

2021/01/29 15:17:54 [INFO] generate received request

2021/01/29 15:17:54 [INFO] received CSR

2021/01/29 15:17:54 [INFO] generating key: rsa-2048

2021/01/29 15:17:54 [INFO] encoded CSR

2021/01/29 15:17:54 [INFO] signed certificate with serial number 502058599335845103424504775644770447174262097753

[root@m1 k8s]#

[root@m1 k8s]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem

[root@m1 k8s]#

[root@m1 k8s]# ls *pem

ca-key.pem ca.pem

[root@m1 k8s]#

(3) Issue the kube-apiserver HTTPS certificate with the self-signed CA

Create the certificate request file:

cd ~/TLS/k8s

cat > server-csr.json << EOF

{

"CN": "kubernetes",

"hosts": [

"10.0.0.1",

"127.0.0.1",

"192.168.11.187",

"192.168.11.188",

"192.168.11.189",

"kubernetes",

"kubernetes.default",

"kubernetes.default.svc",

"kubernetes.default.svc.cluster",

"kubernetes.default.svc.cluster.local"

],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

Generate the certificate:

[root@m1 k8s]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes server-csr.json | cfssljson -bare server

2021/01/29 15:21:36 [INFO] generate received request

2021/01/29 15:21:36 [INFO] received CSR

2021/01/29 15:21:36 [INFO] generating key: rsa-2048

2021/01/29 15:21:36 [INFO] encoded CSR

2021/01/29 15:21:36 [INFO] signed certificate with serial number 258272723242965946822580152857434852064172528676

2021/01/29 15:21:36 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@m1 k8s]#

[root@m1 k8s]# ls

ca-config.json ca.csr ca-csr.json ca-key.pem ca.pem server.csr server-csr.json server-key.pem server.pem

[root@m1 k8s]#

[root@m1 k8s]# ls server*pem

server-key.pem server.pem

[root@m1 k8s]#

6.2 Generate the kube-proxy certificate (used to deploy the worker nodes)

cd ~/TLS/k8s

# Create the certificate request file

cat > kube-proxy-csr.json<< EOF

{

"CN": "system:kube-proxy",

"hosts": [],

"key": {

"algo": "rsa",

"size": 2048

},

"names": [

{

"C": "CN",

"L": "BeiJing",

"ST": "BeiJing",

"O": "k8s",

"OU": "System"

}

]

}

EOF

# Generate the certificate

[root@m1 k8s]# cfssl gencert -ca=ca.pem -ca-key=ca-key.pem -config=ca-config.json -profile=kubernetes kube-proxy-csr.json | cfssljson -bare kube-proxy

2021/01/29 15:51:31 [INFO] generate received request

2021/01/29 15:51:31 [INFO] received CSR

2021/01/29 15:51:31 [INFO] generating key: rsa-2048

2021/01/29 15:51:31 [INFO] encoded CSR

2021/01/29 15:51:31 [INFO] signed certificate with serial number 356910887774769387939132069692205208873278532262

2021/01/29 15:51:31 [WARNING] This certificate lacks a "hosts" field. This makes it unsuitable for

websites. For more information see the Baseline Requirements for the Issuance and Management

of Publicly-Trusted Certificates, v.1.1.6, from the CA/Browser Forum (https://cabforum.org);

specifically, section 10.2.3 ("Information Requirements").

[root@m1 k8s]#

[root@m1 k8s]# ls kube-proxy*pem

kube-proxy-key.pem kube-proxy.pem

7. Deploying the Master Node

7.1 Download the binaries from GitHub

Download URL:

https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG/CHANGELOG-1.18.md#v1183

7.2 Extract the binary package

tar zxvf kubernetes-server-linux-amd64.tar.gz

mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}

cd kubernetes/server/bin

cp kube-apiserver kube-scheduler kube-controller-manager /opt/kubernetes/bin

cp kubectl /usr/bin/

7.3 Deploy kube-apiserver

(1) Create the configuration file

cat > /opt/kubernetes/cfg/kube-apiserver.conf << EOF

KUBE_APISERVER_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs \

--etcd-servers=https://192.168.11.187:2379,https://192.168.11.188:2379,https://192.168.11.189:2379 \

--bind-address=192.168.11.187 \

--secure-port=6443 \

--advertise-address=192.168.11.187 \

--allow-privileged=true \

--service-cluster-ip-range=10.0.0.0/24 \

--enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \

--authorization-mode=RBAC,Node \

--enable-bootstrap-token-auth=true \

--token-auth-file=/opt/kubernetes/cfg/token.csv \

--service-node-port-range=30000-32767 \

--kubelet-client-certificate=/opt/kubernetes/ssl/server.pem \

--kubelet-client-key=/opt/kubernetes/ssl/server-key.pem \

--tls-cert-file=/opt/kubernetes/ssl/server.pem \

--tls-private-key-file=/opt/kubernetes/ssl/server-key.pem \

--client-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-key-file=/opt/kubernetes/ssl/ca-key.pem \

--etcd-cafile=/opt/etcd/ssl/ca.pem \

--etcd-certfile=/opt/etcd/ssl/server.pem \

--etcd-keyfile=/opt/etcd/ssl/server-key.pem \

--audit-log-maxage=30 \

--audit-log-maxbackup=3 \

--audit-log-maxsize=100 \

--audit-log-path=/opt/kubernetes/logs/k8s-audit.log"

EOF

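--logtostderr: whether to log to stderr; false here, so logs are written to files under --log-dir

--v: log verbosity level

--log-dir: log directory

--etcd-servers: etcd cluster addresses

--bind-address: listen address

--secure-port: HTTPS secure port

--advertise-address: address advertised to the cluster

--allow-privileged: allow privileged containers

--service-cluster-ip-range: virtual IP range for Services

--enable-admission-plugins: admission control plugins

--authorization-mode: authorization modes; enables RBAC and Node authorization

--enable-bootstrap-token-auth: enable the TLS bootstrap mechanism

--token-auth-file: bootstrap token file

--service-node-port-range: default port range allocated to NodePort Services

--kubelet-client-xxx: client certificate the apiserver uses to access the kubelet

--tls-xxx-file: apiserver HTTPS certificate

--etcd-xxxfile: certificates for connecting to the etcd cluster

--audit-log-xxx: audit log settings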
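
One relationship between these flags and section 6.1 is worth spelling out: the apiserver assigns the first address of --service-cluster-ip-range to the built-in kubernetes Service, which is why 10.0.0.1 appears in the hosts list of server-csr.json. The sketch below derives that address from the CIDR; `first_service_ip` is a hypothetical helper and assumes the CIDR is written with its network address, as 10.0.0.0/24 is here.

```shell
# Return the first usable address of an IPv4 CIDR by adding 1 to the last octet.
# Sketch only: assumes the CIDR is given as its network address (e.g. 10.0.0.0/24)
# and that the last octet is below 255. first_service_ip is a hypothetical name.
first_service_ip() {
    local network="${1%/*}"        # strip the "/24" prefix length
    local prefix="${network%.*}"   # first three octets
    local last="${network##*.}"    # last octet
    echo "$prefix.$((last + 1))"
}

first_service_ip 10.0.0.0/24
```

If you change --service-cluster-ip-range, the corresponding first address has to be in the apiserver certificate's hosts list before the certificate is generated.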

(2) Copy the certificates generated earlier

Copy the certificates generated earlier to the paths used in the configuration file:

cp ~/TLS/k8s/ca*pem ~/TLS/k8s/server*pem /opt/kubernetes/ssl/

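(3) Enable the TLS bootstrapping mechanism

TLS bootstrapping: once the master apiserver has TLS authentication enabled, the kubelet and kube-proxy on each node must use valid certificates signed by the CA to communicate with kube-apiserver. When there are many nodes, issuing these client certificates by hand is a lot of work and makes scaling the cluster more complicated. To simplify the process, Kubernetes introduced the TLS bootstrapping mechanism to issue client certificates automatically: the kubelet requests a certificate from the apiserver as a low-privilege user, and the kubelet certificate is signed dynamically on the cluster side. This approach is strongly recommended on nodes. It is currently used mainly for the kubelet; kube-proxy still uses a single certificate that we issue ourselves.

Create the token file referenced in the configuration above (columns: token, user name, UID, group):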

cat > /opt/kubernetes/cfg/token.csv << EOF

c47ffb939f5ca36231d9e3121a252940,kubelet-bootstrap,10001,"system:node-bootstrapper"

EOF
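
The token above is just a 32-character hex string, so you may prefer to generate your own instead of reusing the one printed in this guide. A minimal sketch, assuming /dev/urandom and the standard `od` and `tr` utilities are available; `generate_bootstrap_token` is a made-up name:

```shell
# Generate 16 random bytes and print them as 32 lowercase hex characters.
# generate_bootstrap_token is a hypothetical helper name.
generate_bootstrap_token() {
    head -c 16 /dev/urandom | od -An -t x1 | tr -d ' \n'
}

token="$(generate_bootstrap_token)"
# Same column layout as token.csv: token, user name, UID, group.
echo "${token},kubelet-bootstrap,10001,\"system:node-bootstrapper\""
```

Whatever token you use must be the same in token.csv and in the TOKEN variable used later when creating bootstrap.kubeconfig.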

(4) Manage apiserver with systemd

cat > /usr/lib/systemd/system/kube-apiserver.service << EOF

[Unit]

Description=Kubernetes API Server

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-apiserver.conf

ExecStart=/opt/kubernetes/bin/kube-apiserver \$KUBE_APISERVER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

(5) Start the service and enable it at boot

systemctl daemon-reload

systemctl start kube-apiserver

systemctl enable kube-apiserver

systemctl status kube-apiserver

(6) Authorize the kubelet-bootstrap user to request certificates

kubectl create clusterrolebinding kubelet-bootstrap \

--clusterrole=system:node-bootstrapper \

--user=kubelet-bootstrap

7.4 Deploy kube-controller-manager

(1) Create the configuration file

cat > /opt/kubernetes/cfg/kube-controller-manager.conf << EOF

KUBE_CONTROLLER_MANAGER_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs \

--leader-elect=true \

--master=127.0.0.1:8080 \

--bind-address=127.0.0.1 \

--allocate-node-cidrs=true \

--cluster-cidr=10.244.0.0/16 \

--service-cluster-ip-range=10.0.0.0/24 \

--cluster-name=kubernetes \

--cluster-signing-cert-file=/opt/kubernetes/ssl/ca.pem \

--cluster-signing-key-file=/opt/kubernetes/ssl/ca-key.pem \

--root-ca-file=/opt/kubernetes/ssl/ca.pem \

--service-account-private-key-file=/opt/kubernetes/ssl/ca-key.pem \

--experimental-cluster-signing-duration=87600h0m0s"

EOF

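--master: connect to the apiserver through the local insecure port 8080.

--leader-elect: when several instances of this component are started, elect a leader automatically (HA)

--cluster-signing-cert-file / --cluster-signing-key-file: the CA that automatically issues kubelet certificates; it must be the same CA the apiserver uses

(2) Manage controller-manager with systemd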

cat > /usr/lib/systemd/system/kube-controller-manager.service << EOF

[Unit]

Description=Kubernetes Controller Manager

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-controller-manager.conf

ExecStart=/opt/kubernetes/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

(3) Start the service and enable it at boot

systemctl daemon-reload

systemctl start kube-controller-manager

systemctl enable kube-controller-manager

systemctl status kube-controller-manager

7.5 Deploy kube-scheduler

(1) Create the configuration file

cat > /opt/kubernetes/cfg/kube-scheduler.conf << EOF

KUBE_SCHEDULER_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs \

--leader-elect \

--master=127.0.0.1:8080 \

--bind-address=127.0.0.1"

EOF

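--master: connect to the apiserver through the local insecure port 8080.

--leader-elect: when several instances of this component are started, elect a leader automatically (HA)

(2) Manage scheduler with systemd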

cat > /usr/lib/systemd/system/kube-scheduler.service << EOF

[Unit]

Description=Kubernetes Scheduler

Documentation=https://github.com/kubernetes/kubernetes

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-scheduler.conf

ExecStart=/opt/kubernetes/bin/kube-scheduler \$KUBE_SCHEDULER_OPTS

Restart=on-failure

[Install]

WantedBy=multi-user.target

EOF

(3) Start the service and enable it at boot

systemctl daemon-reload

systemctl start kube-scheduler

systemctl enable kube-scheduler

systemctl status kube-scheduler

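(4) Check the cluster status

All components have now been started. Use kubectl to check the current status of the cluster components:

kubectl get cs

If every component is reported as Healthy, the master node components are running normally.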

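8. Deploying the Worker Node

The following steps are still performed on the master node, which therefore also acts as a worker node.

8.1 Create the working directories and copy the binaries

Create the working directories on every worker node:

mkdir -p /opt/kubernetes/{bin,cfg,ssl,logs}

Copy from the master node:

# Copy kubelet and kube-proxy from the binary package downloaded earlier into /opt/kubernetes/bin.
# (on the master node)
cd kubernetes/server/bin
cp kubelet kube-proxy /opt/kubernetes/bin
#scp kubelet kube-proxy root@192.168.11.188:/opt/kubernetes/bin
#scp kubelet kube-proxy root@192.168.11.189:/opt/kubernetes/bin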

8.2 Create the bootstrap kubeconfig file and the kube-proxy kubeconfig file

(1) Create the bootstrap kubeconfig file

Run the following commands in the directory where the kubernetes certificates were generated:

[root@m1 ~]# cd ~/TLS/k8s

# Address of the apiserver (or of its internal load balancer)
[root@m1 k8s]# KUBE_APISERVER="https://192.168.11.187:6443"
[root@m1 k8s]# TOKEN=c47ffb939f5ca36231d9e3121a252940 # must match the token in token.csv created above

# Generate the kubelet bootstrap kubeconfig file
# Set the cluster parameters
[root@m1 k8s]# kubectl config set-cluster kubernetes \
--certificate-authority=/opt/kubernetes/ssl/ca.pem \
--embed-certs=true \
--server=${KUBE_APISERVER} \
--kubeconfig=bootstrap.kubeconfig

# Set the client authentication parameters
[root@m1 k8s]# kubectl config set-credentials "kubelet-bootstrap" \
--token=${TOKEN} \
--kubeconfig=bootstrap.kubeconfig

# Set the context parameters
[root@m1 k8s]# kubectl config set-context default \
--cluster=kubernetes \
--user=kubelet-bootstrap \
--kubeconfig=bootstrap.kubeconfig

# Set the default context
[root@m1 k8s]# kubectl config use-context default --kubeconfig=bootstrap.kubeconfig

(2) Create the kube-proxy kubeconfig file

# Create the kube-proxy kubeconfig file

[root@m1 k8s]# kubectl config set-cluster kubernetes \

--certificate-authority=/opt/kubernetes/ssl/ca.pem \

--embed-certs=true \

--server=${KUBE_APISERVER} \

--kubeconfig=kube-proxy.kubeconfig

[root@m1 k8s]# kubectl config set-credentials kube-proxy \

--client-certificate=./kube-proxy.pem \

--client-key=./kube-proxy-key.pem \

--embed-certs=true \

--kubeconfig=kube-proxy.kubeconfig

[root@m1 k8s]# kubectl config set-context default \

--cluster=kubernetes \

--user=kube-proxy \

--kubeconfig=kube-proxy.kubeconfig

[root@m1 k8s]# kubectl config use-context default --kubeconfig=kube-proxy.kubeconfig

[root@m1 k8s]# ls # two new .kubeconfig files appear
bootstrap.kubeconfig kube-proxy.kubeconfig

Copy these two files into /opt/kubernetes/cfg on the worker nodes. Do not skip this step.

[root@m1 k8s]# cp bootstrap.kubeconfig kube-proxy.kubeconfig /opt/kubernetes/cfg
#[root@m1 k8s]# scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.11.188:/opt/kubernetes/cfg
#[root@m1 k8s]# scp bootstrap.kubeconfig kube-proxy.kubeconfig root@192.168.11.189:/opt/kubernetes/cfg

8.3 Deploy kubelet

(1) Create the configuration file

cat > /opt/kubernetes/cfg/kubelet.conf << EOF

KUBELET_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs \

--hostname-override=k8s-m1 \

--network-plugin=cni \

--kubeconfig=/opt/kubernetes/cfg/kubelet.kubeconfig \

--bootstrap-kubeconfig=/opt/kubernetes/cfg/bootstrap.kubeconfig \

--config=/opt/kubernetes/cfg/kubelet-config.yml \

--cert-dir=/opt/kubernetes/ssl \

--pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google-containers/pause-amd64:3.0"

EOF

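--hostname-override: display name of the node, unique within the cluster

--network-plugin: enable CNI

--kubeconfig: this path does not exist yet; the file is generated automatically and later used to connect to the apiserver

--bootstrap-kubeconfig: used on first start to request a certificate from the apiserver

--config: configuration parameter file

--cert-dir: directory where the kubelet certificates are generated

--pod-infra-container-image: image of the container that manages the Pod network

(2) Configuration parameter file

# m1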

cat > /opt/kubernetes/cfg/kubelet-config.yml << EOF

kind: KubeletConfiguration

apiVersion: kubelet.config.k8s.io/v1beta1

address: 0.0.0.0

port: 10250

readOnlyPort: 10255

cgroupDriver: cgroupfs

clusterDNS: ["10.0.0.2"]

clusterDomain: cluster.local.

failSwapOn: false

authentication:

anonymous:

enabled: false

webhook:

cacheTTL: 2m0s

enabled: true

x509:

clientCAFile: /opt/kubernetes/ssl/ca.pem

authorization:

mode: Webhook

webhook:

cacheAuthorizedTTL: 5m0s

cacheUnauthorizedTTL: 30s

evictionHard:

imagefs.available: 15%

memory.available: 100Mi

nodefs.available: 10%

nodefs.inodesFree: 5%

maxOpenFiles: 1000000

maxPods: 110

EOF

(3) Manage kubelet with systemd

cat > /usr/lib/systemd/system/kubelet.service << EOF

[Unit]

Description=Kubernetes Kubelet

After=docker.service

Requires=docker.service

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kubelet.conf

ExecStart=/opt/kubernetes/bin/kubelet \$KUBELET_OPTS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

(4) Start the service and enable it at boot

systemctl daemon-reload

systemctl start kubelet

systemctl enable kubelet

systemctl status kubelet

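8.4 Approve the kubelet certificate request and join the cluster

On the master node, approve the node's request to join the cluster.

After the kubelet starts, the node has not yet joined the cluster; it has to be approved manually.

On the master node, list the nodes that have requested a signature: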

[root@m1 ~]# kubectl get csr

NAME AGE SIGNERNAME REQUESTOR CONDITION

node-csr-jsQakenT4ueGHcKXCwgxePQsQHPoGE4Cb4uoij4GhBA 33s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending

[root@m1 ~]#

[root@m1 ~]# kubectl certificate approve node-csr-jsQakenT4ueGHcKXCwgxePQsQHPoGE4Cb4uoij4GhBA

certificatesigningrequest.certificates.k8s.io/node-csr-jsQakenT4ueGHcKXCwgxePQsQHPoGE4Cb4uoij4GhBA approved

[root@m1 ~]#

[root@m1 ~]# kubectl get node

No resources found in default namespace.

[root@m1 ~]#

[root@m1 ~]# kubectl get node

No resources found in default namespace.

[root@m1 ~]# kubectl get node

NAME STATUS ROLES AGE VERSION

k8s-m1 NotReady <none> 5s v1.18.3

[root@m1 ~]#

Note: because the network plugin has not been deployed yet, the node is reported as NotReady.

8.5 Deploy kube-proxy

(1) Create the configuration file

cat > /opt/kubernetes/cfg/kube-proxy.conf << EOF

KUBE_PROXY_OPTS="--logtostderr=false \

--v=2 \

--log-dir=/opt/kubernetes/logs \

--config=/opt/kubernetes/cfg/kube-proxy-config.yml"

EOF

(2) Configuration parameter file

# m1

cat > /opt/kubernetes/cfg/kube-proxy-config.yml << EOF

kind: KubeProxyConfiguration

apiVersion: kubeproxy.config.k8s.io/v1alpha1

bindAddress: 0.0.0.0

metricsBindAddress: 0.0.0.0:10249

clientConnection:

kubeconfig: /opt/kubernetes/cfg/kube-proxy.kubeconfig

hostnameOverride: k8s-m1

clusterCIDR: 10.244.0.0/16 # Pod network CIDR; same value as --cluster-cidr of kube-controller-manager

EOF

(3) Manage kube-proxy with systemd

cat > /usr/lib/systemd/system/kube-proxy.service << EOF

[Unit]

Description=Kubernetes Proxy

After=network.target

[Service]

EnvironmentFile=/opt/kubernetes/cfg/kube-proxy.conf

ExecStart=/opt/kubernetes/bin/kube-proxy \$KUBE_PROXY_OPTS

Restart=on-failure

LimitNOFILE=65536

[Install]

WantedBy=multi-user.target

EOF

(4) Start the service and enable it at boot

systemctl daemon-reload

systemctl start kube-proxy

systemctl enable kube-proxy

systemctl status kube-proxy

8.6 Deploy the CNI network

First prepare the CNI binaries:

Download URL: https://github.com/containernetworking/plugins/releases/download/v0.8.6/cni-plugins-linux-amd64-v0.8.6.tgz

wget https://github.com/containernetworking/plugins/releases/download/v0.8.6/cni-plugins-linux-amd64-v0.8.6.tgz

Extract the binary package into the default working directory:

mkdir /opt/cni/bin -p

tar zxvf cni-plugins-linux-amd64-v0.8.6.tgz -C /opt/cni/bin

Deploy the CNI network by installing the flannel plugin:

wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

The default image address is not reachable, so change it to an image on Docker Hub:

sed -i -r "s#quay.io/coreos/flannel:.*-amd64#lizhenliang/flannel:v0.12.0-amd64#g" kube-flannel.yml

kubectl apply -f kube-flannel.yml

kubectl get pods -n kube-system

kubectl get node

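Once the network plugin is deployed, the node becomes Ready.

[Note] Allow some extra time, because the image has to be pulled. If the node still does not become Ready, inspect the Pod: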

[root@m1 ~]# kubectl get pods -n kube-system -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

kube-flannel-ds-4tb92 0/1 Init:0/1 0 114s 192.168.11.187 k8s-m1 <none> <none>

[root@m1 ~]# kubectl describe pod kube-flannel-ds-4tb92 -n kube-system

[Note] If the network deployment runs into problems, delete the resources created from kube-flannel.yml and deploy again.

# Delete flannel

kubectl delete -f kube-flannel.yml

8.7 Add new Worker Nodes

(1) Copy the files of the deployed node to the new nodes

On the master node, copy the files used by the worker node to the new nodes 192.168.11.188 and 192.168.11.189:

# n1
[root@m1 ~]# scp -r /opt/kubernetes root@192.168.11.188:/opt/
[root@m1 ~]# scp -r /usr/lib/systemd/system/{kubelet,kube-proxy}.service root@192.168.11.188:/usr/lib/systemd/system
[root@m1 ~]# scp -r /opt/cni/ root@192.168.11.188:/opt/
[root@m1 ~]# scp /opt/kubernetes/ssl/ca.pem root@192.168.11.188:/opt/kubernetes/ssl

# n2
[root@m1 ~]# scp -r /opt/kubernetes root@192.168.11.189:/opt/
[root@m1 ~]# scp -r /usr/lib/systemd/system/{kubelet,kube-proxy}.service root@192.168.11.189:/usr/lib/systemd/system
[root@m1 ~]# scp -r /opt/cni/ root@192.168.11.189:/opt/
[root@m1 ~]# scp /opt/kubernetes/ssl/ca.pem root@192.168.11.189:/opt/kubernetes/ssl

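(2) Delete the kubelet certificates and kubeconfig file (on the newly added worker node)

rm /opt/kubernetes/cfg/kubelet.kubeconfig
rm -f /opt/kubernetes/ssl/kubelet*

Note: these files were generated automatically when the certificate request was approved and are different for every node, so they must be deleted and regenerated.

(3) Change the host name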

vim /opt/kubernetes/cfg/kubelet.conf

--hostname-override=k8s-node1

vim /opt/kubernetes/cfg/kube-proxy-config.yml

hostnameOverride: k8s-node1
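
The same two edits have to be repeated on every new node, so they can also be scripted. The following is a sketch rather than part of the original procedure: `set_node_name` is a hypothetical helper that rewrites both settings with sed, demonstrated here on temporary copies instead of the real files under /opt/kubernetes/cfg.

```shell
# Rewrite the node name in a kubelet.conf and a kube-proxy-config.yml.
# set_node_name is a hypothetical helper; $1 is the new name, $2 and $3 the two files.
set_node_name() {
    local node_name="$1" kubelet_conf="$2" proxy_conf="$3"
    # Replace only the value after --hostname-override=, keeping the trailing " \".
    sed -i "s#--hostname-override=[^ ]*#--hostname-override=${node_name}#" "$kubelet_conf"
    sed -i "s#^hostnameOverride:.*#hostnameOverride: ${node_name}#" "$proxy_conf"
}

# Demonstration on throwaway files containing only the relevant lines.
workdir="$(mktemp -d)"
printf '%s\n' '--hostname-override=k8s-m1 \' > "$workdir/kubelet.conf"
printf '%s\n' 'hostnameOverride: k8s-m1' > "$workdir/kube-proxy-config.yml"
set_node_name k8s-node1 "$workdir/kubelet.conf" "$workdir/kube-proxy-config.yml"
cat "$workdir/kubelet.conf" "$workdir/kube-proxy-config.yml"
```

On a real node the call would take /opt/kubernetes/cfg/kubelet.conf and /opt/kubernetes/cfg/kube-proxy-config.yml as the two file arguments.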

(4) Start the services and enable them at boot

systemctl daemon-reload

systemctl start kubelet

systemctl enable kubelet

systemctl start kube-proxy

systemctl enable kube-proxy

(5) Approve the new node's kubelet certificate request on the master

kubectl get csr

kubectl certificate approve NAME # NAME is the request name printed by the previous command
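
When several nodes join at once, picking the pending request names out of the listing by eye becomes tedious. As a sketch, the hypothetical filter `pending_csr_names` below reads `kubectl get csr` output on standard input and prints only the names whose condition is Pending; it is demonstrated on sample text modeled on the output shown in section 8.4, so it runs without a cluster.

```shell
# Print the NAME column of every request whose CONDITION (last column) is Pending.
# pending_csr_names is a hypothetical helper that reads "kubectl get csr" output on stdin.
pending_csr_names() {
    awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Sample listing; the second request name is made up for this example.
sample_output='NAME AGE SIGNERNAME REQUESTOR CONDITION
node-csr-jsQakenT4ueGHcKXCwgxePQsQHPoGE4Cb4uoij4GhBA 33s kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Pending
node-csr-example-already-approved 12m kubernetes.io/kube-apiserver-client-kubelet kubelet-bootstrap Approved,Issued'

printf '%s\n' "$sample_output" | pending_csr_names
```

On the master, piping `kubectl get csr` into this filter gives the names to pass to `kubectl certificate approve`.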

(6)查看 Node 状态

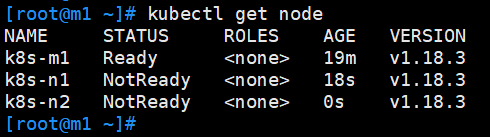

kubectl get node

最终,所有节点均处于ready状态!

【注意】之后,n1和n2一直都是notready,自己以为出了什么问题,查看了半天

再次到master中查看时,发现n2已经ready了,并且n2拉取到了镜像flannel;但是n1还是notready,而且n1还没有拉取到镜像。所以,我们还是要多等一会,因为拉取镜像需要时间。

又过了一会,n1和n2全部ready,而且全部拉取到了镜像。

Errors

conflicting environment variable "ETCD_NAME" is shadowed by corresponding command-line flag

- etcd 3.4 reads its parameters from environment variables automatically, so a parameter that is already set in the EnvironmentFile must not be repeated as an ExecStart flag; choose one or the other. Setting both triggers an error like: "etcd: conflicting environment variable "ETCD_NAME" is shadowed by corresponding command-line flag (either unset environment variable or disable flag)"

vim /usr/lib/systemd/system/etcd.service

Here we chose to remove the EnvironmentFile.
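
To find out which settings collide before etcd refuses to start, the two files can be compared mechanically. etcd derives each environment variable name from its flag by upper-casing it, replacing dashes with underscores, and prefixing ETCD_, so the mapping can be reversed. The sketch below does that; `find_shadowed_etcd_vars` is a hypothetical helper and the two sample files are made up for the demonstration.

```shell
# List ETCD_* variables in an environment file that are also passed as flags in a unit file.
# find_shadowed_etcd_vars is a hypothetical helper; $1 is the env file, $2 the unit file.
find_shadowed_etcd_vars() {
    local env_file="$1" unit_file="$2" key flag
    grep -oE '^ETCD_[A-Z_]+' "$env_file" | while read -r key; do
        # ETCD_DATA_DIR -> --data-dir
        flag="--$(printf '%s' "${key#ETCD_}" | tr 'A-Z_' 'a-z-')"
        # Match the flag only when followed by "=", a space, or end of line.
        if grep -qE -- "${flag}([= ]|\$)" "$unit_file"; then
            echo "$key conflicts with $flag"
        fi
    done
}

# Demonstration with two small sample files.
demo_dir="$(mktemp -d)"
printf '%s\n' '#[Member]' 'ETCD_NAME="etcd-1"' 'ETCD_DATA_DIR="/var/lib/etcd/default.etcd"' > "$demo_dir/etcd.conf"
printf '%s\n' 'ExecStart=/opt/etcd/bin/etcd --name=etcd-1 --cert-file=/opt/etcd/ssl/server.pem' > "$demo_dir/etcd.service"
find_shadowed_etcd_vars "$demo_dir/etcd.conf" "$demo_dir/etcd.service"
```

Each reported pair can be resolved either by deleting the flag from ExecStart or by removing the variable from the environment file.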
