Kubernetes Basics 4

1. Introduction to the HPA controller

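1 Method 1: adjust the pod count manually with kubectl scale

- This is a temporary change. It is not written back to the manifest file, so re-applying the original YAML resets the replica count.

- Check the pod count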

root@master1:~# kubectl get pod -A -o wide

ns-uat uat-tomcat-app1-deployment-677fcd9d44-jxmfm 1/1 Running 4 5d10h 10.20.2.57 172.16.62.207

1.1 Check the deployment replica count

root@master1:~# kubectl get deployment -n ns-uat

NAME READY UP-TO-DATE AVAILABLE AGE

uat-nginx-deployment 1/1 1 1 5d8h

uat-tomcat-app1-deployment 1/1 1 1 5d10h

1.2 Scale the deployment up to 3 replicas

root@master1:~# kubectl scale deployment/uat-tomcat-app1-deployment --replicas=3 -n ns-uat

deployment.apps/uat-tomcat-app1-deployment scaled

1.3 Verify the deployment: it now has 3 replicas

root@master1:~# kubectl get deployment -n ns-uat

NAME READY UP-TO-DATE AVAILABLE AGE

uat-nginx-deployment 1/1 1 1 5d8h

uat-tomcat-app1-deployment 3/3 3 3 5d10h

root@master1:~#

1.4 Verify the pods: there are now 3

root@master1:~# kubectl get pod -A -o wide

ns-uat uat-tomcat-app1-deployment-677fcd9d44-f5rdp 1/1 Running 0 2m28s 10.20.3.53 172.16.62.208

ns-uat uat-tomcat-app1-deployment-677fcd9d44-jxmfm 1/1 Running 4 5d10h 10.20.2.57 172.16.62.207

ns-uat uat-tomcat-app1-deployment-677fcd9d44-xmzls 1/1 Running 0 2m28s 10.20.5.43 172.16.62.209

1.5 Scale the deployment down to 2 replicas

root@master1:~# kubectl scale deployment/uat-tomcat-app1-deployment --replicas=2 -n ns-uat

deployment.apps/uat-tomcat-app1-deployment scaled

1.6 Verify the deployment: it now has 2 replicas

root@master1:~# kubectl get deployment -n ns-uat

NAME READY UP-TO-DATE AVAILABLE AGE

uat-nginx-deployment 1/1 1 1 5d8h

uat-tomcat-app1-deployment 2/2 2 2 5d10h

1.7 Verify the pods: there are now 2

root@master1:~# kubectl get pod -A -o wide

ns-uat uat-tomcat-app1-deployment-677fcd9d44-jxmfm 1/1 Running 4 5d10h 10.20.2.57 172.16.62.207

ns-uat uat-tomcat-app1-deployment-677fcd9d44-xmzls 1/1 Running 0 9m34s 10.20.5.43 172.16.62.209

1.2. Method 2: adjust the pod count manually with kubectl edit

- Changes saved from kubectl edit take effect immediately

root@master1:~# kubectl edit deployment/uat-tomcat-app1-deployment -n ns-uat

# Please edit the object below. Lines beginning with a '#' will be ignored,

# and an empty file will abort the edit. If an error occurs while saving this file will be

# reopened with the relevant failures.

#

apiVersion: apps/v1

kind: Deployment

metadata:

annotations:

deployment.kubernetes.io/revision: "1"

kubectl.kubernetes.io/last-applied-configuration: |

creationTimestamp: "2020-08-09T14:13:40Z"

generation: 4

labels:

app: uat-tomcat-app1-deployment-label

name: uat-tomcat-app1-deployment

namespace: ns-uat

resourceVersion: "3591300"

selfLink: /apis/apps/v1/namespaces/ns-uat/deployments/uat-tomcat-app1-deployment

uid: 8d21855f-8c84-4fa9-9a01-7b6ebe69b67c

spec:

progressDeadlineSeconds: 600

replicas: 3 # changed to 3

revisionHistoryLimit: 10

selector:

matchLabels:

app: uat-tomcat-app1-selector

strategy:

rollingUpdate:

maxSurge: 25%

maxUnavailable: 25%

type: RollingUpdate

template:

metadata:

creationTimestamp: null

labels:

app: uat-tomcat-app1-selector

spec:

containers:

- env:

- name: password

value: "123456"

- name: age

value: "18"

image: harbor.haostack.com/k8s/jack_k8s_tomcat-app1:v1

imagePullPolicy: Always

name: uat-tomcat-app1-container

ports:

- containerPort: 8080

name: http

protocol: TCP

resources:

limits:

cpu: "1"

memory: 512Mi

requests:

cpu: 500m

memory: 512Mi

terminationMessagePath: /dev/termination-log

terminationMessagePolicy: File

dnsPolicy: ClusterFirst

restartPolicy: Always

schedulerName: default-scheduler

securityContext: {}

terminationGracePeriodSeconds: 30

status:

availableReplicas: 5

conditions:

- lastTransitionTime: "2020-08-09T14:13:40Z"

lastUpdateTime: "2020-08-09T14:14:59Z"

message: ReplicaSet "uat-tomcat-app1-deployment-677fcd9d44" has successfully progressed.

reason: NewReplicaSetAvailable

status: "True"

type: Progressing

- lastTransitionTime: "2020-08-15T00:58:00Z"

lastUpdateTime: "2020-08-15T00:58:00Z"

message: Deployment has minimum availability.

reason: MinimumReplicasAvailable

status: "True"

type: Available

observedGeneration: 4

readyReplicas: 5

replicas: 5

updatedReplicas: 5

1.2.1 Verify the deployment: it now has 3 replicas

root@master1:~# kubectl get deployment -n ns-uat

NAME READY UP-TO-DATE AVAILABLE AGE

uat-nginx-deployment 1/1 1 1 5d8h

uat-tomcat-app1-deployment 3/3 3 3 5d10h

1.2.2 Verify the pods: 3 remain running (the other two are terminating)

root@master1:~# kubectl get pod -A -o wide

ns-uat uat-tomcat-app1-deployment-677fcd9d44-2zxs6 1/1 Terminating 0 9m57s 10.20.2.58 172.16.62.207

ns-uat uat-tomcat-app1-deployment-677fcd9d44-82s6q 1/1 Terminating 0 9m57s 10.20.5.44 172.16.62.209

ns-uat uat-tomcat-app1-deployment-677fcd9d44-jxmfm 1/1 Running 4 5d10h 10.20.2.57 172.16.62.207

ns-uat uat-tomcat-app1-deployment-677fcd9d44-ssdr2 1/1 Running 0 9m57s 10.20.3.54 172.16.62.208

ns-uat uat-tomcat-app1-deployment-677fcd9d44-xmzls 1/1 Running 0 25m 10.20.5.43 172.16.62.209

1.3. Method 3: scale the pod count from the dashboard

- This is also a temporary change that is not persisted

- Set the pod count to 5

1.3.1 Verify the deployment: it now has 5 replicas

root@master1:~# kubectl get deployment -n ns-uat

NAME READY UP-TO-DATE AVAILABLE AGE

uat-nginx-deployment 1/1 1 1 5d8h

uat-tomcat-app1-deployment 5/5 5 5 5d10h

1.3.2 Verify the pods: there are now 5

root@master1:~# kubectl get pod -A -o wide

ns-uat uat-tomcat-app1-deployment-677fcd9d44-2zxs6 1/1 Running 0 24s 10.20.2.58 172.16.62.207

ns-uat uat-tomcat-app1-deployment-677fcd9d44-82s6q 1/1 Running 0 24s 10.20.5.44 172.16.62.209

ns-uat uat-tomcat-app1-deployment-677fcd9d44-jxmfm 1/1 Running 4 5d10h 10.20.2.57 172.16.62.207

ns-uat uat-tomcat-app1-deployment-677fcd9d44-ssdr2 1/1 Running 0 24s 10.20.3.54 172.16.62.208

ns-uat uat-tomcat-app1-deployment-677fcd9d44-xmzls 1/1 Running 0 16m 10.20.5.43 172.16.62.209

1.4 HPA: scale the pod count automatically

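- kubectl autoscale automatically controls the number of pods running in the cluster (horizontal autoscaling). The allowed pod range and the trigger condition must be configured in advance.

- Kubernetes added a controller named HPA (Horizontal Pod Autoscaler) in version 1.1. It scales pods out and in automatically based on the resource (CPU/memory) utilization of the pods. Early versions could only use CPU utilization collected by the Heapster component as the trigger. Starting with Kubernetes 1.11, Metrics Server collects the data and exposes it through the aggregated API metrics.k8s.io (custom.metrics.k8s.io and external.metrics.k8s.io are aggregated APIs of the same kind, served by metrics adapters). The HPA controller queries these APIs and scales the pods according to the utilization of the chosen resource.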

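- By default the controller manager queries resource usage from the metrics API every 15s (configurable with --horizontal-pod-autoscaler-sync-period).

Three types of metrics are supported:

-> Predefined metrics (for example, pod CPU), evaluated as a utilization percentage

-> Custom pod metrics, evaluated as raw values

-> Custom object metrics

Two ways of querying metrics are supported:

-> Heapster

-> A custom REST API

Multiple metrics are supported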
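The scaling decision described above can be sketched in a few lines. This is only an illustration of the replica formula documented for the HPA (ceil of current replicas times the ratio of current to target metric, then clamped to the min/max range); the function name, its parameters, and the 0.1 tolerance default are assumptions chosen for this sketch, not values taken from this cluster.

```python
import math


def desired_replicas(current_replicas, current_utilization, target_utilization,
                     min_replicas, max_replicas, tolerance=0.1):
    """Return the replica count an HPA-style controller would aim for.

    Hypothetical helper for illustration; utilizations are percentages.
    """
    ratio = current_utilization / target_utilization
    # Inside the tolerance band the controller leaves the count unchanged.
    if abs(ratio - 1.0) <= tolerance:
        desired = current_replicas
    else:
        desired = math.ceil(current_replicas * ratio)
    # The result is always clamped to the configured range.
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # 3 pods at 90% CPU against a 60% target
    print(desired_replicas(3, 90, 60, min_replicas=3, max_replicas=20))
    # 3 idle pods: the raw formula gives 0, the minimum keeps it at 3
    print(desired_replicas(3, 0, 60, min_replicas=3, max_replicas=20))
```

The clamp at the end is why a deployment never drops below minReplicas even when every pod is idle.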

1.5 Prepare metrics-server

- Use metrics-server as the data source for HPA

- https://github.com/kubernetes-incubator/metrics-server

1.5.1 Clone the code

root@master1:/data# git clone https://github.com/kubernetes-incubator/metrics-server.git

Cloning into 'metrics-server'...

remote: Enumerating objects: 45, done.

remote: Counting objects: 100% (45/45), done.

remote: Compressing objects: 100% (39/39), done.

Receiving objects: 24% (2967/12244), 5.38 MiB | 44.00 KiB/s

# Download complete

root@master1:/data/metrics-server# ll

total 156

drwxr-xr-x 10 root root 4096 Apr 7 11:31 ./

drwxr-xr-x 6 root root 4096 Aug 15 09:46 ../

-rw-r--r-- 1 root root 311 Apr 7 11:31 cloudbuild.yaml

drwxr-xr-x 3 root root 4096 Apr 7 11:31 cmd/

-rw-r--r-- 1 root root 148 Apr 7 11:31 code-of-conduct.md

-rw-r--r-- 1 root root 1908 Apr 7 11:31 CONTRIBUTING.md

drwxr-xr-x 4 root root 4096 Apr 7 11:31 deploy/

-rw-r--r-- 1 root root 5620 Apr 7 11:31 FAQ.md

drwxr-xr-x 8 root root 4096 Apr 7 11:31 .git/

drwxr-xr-x 3 root root 4096 Apr 7 11:31 .github/

-rw-r--r-- 1 root root 124 Apr 7 11:31 .gitignore

-rw-r--r-- 1 root root 237 Apr 7 11:31 .golangci.yml

-rw-r--r-- 1 root root 760 Apr 7 11:31 go.mod

-rw-r--r-- 1 root root 44450 Apr 7 11:31 go.sum

drwxr-xr-x 2 root root 4096 Apr 7 11:31 hack/

-rw-r--r-- 1 root root 11357 Apr 7 11:31 LICENSE

-rw-r--r-- 1 root root 3611 Apr 7 11:31 Makefile

-rw-r--r-- 1 root root 201 Apr 7 11:31 OWNERS

-rw-r--r-- 1 root root 177 Apr 7 11:31 OWNERS_ALIASES

drwxr-xr-x 8 root root 4096 Apr 7 11:31 pkg/

-rw-r--r-- 1 root root 3952 Apr 7 11:31 README.md

-rw-r--r-- 1 root root 606 Apr 7 11:31 RELEASE.md

-rw-r--r-- 1 root root 541 Apr 7 11:31 SECURITY_CONTACTS

drwxr-xr-x 2 root root 4096 Apr 7 11:31 test/

-rw-r--r-- 1 root root 341 Apr 7 11:31 .travis.yml

drwxr-xr-x 3 root root 4096 Apr 7 11:31 vendor/

root@master1:/data/metrics-server#

1.5.2 Prepare the image

- Point the image at either the Aliyun registry or a local Harbor; both work

registry.cn-hangzhou.aliyuncs.com/google_containers/metrics-server-amd64 v0.3.6 9dd718864ce6 10 months ago 39.9MB

1.5.3 Edit the YAML file

root@master1:/data/metrics-server/deploy/kubernetes# more metrics-server-deployment.yaml

---

apiVersion: v1

kind: ServiceAccount

metadata:

name: metrics-server

namespace: kube-system

---

apiVersion: apps/v1

kind: Deployment

metadata:

name: metrics-server

namespace: kube-system

labels:

k8s-app: metrics-server

spec:

selector:

matchLabels:

k8s-app: metrics-server

template:

metadata:

name: metrics-server

labels:

k8s-app: metrics-server

spec:

serviceAccountName: metrics-server

volumes:

# mount in tmp so we can safely use from-scratch images and/or read-only containers

- name: tmp-dir

emptyDir: {}

containers:

- name: metrics-server

image: registry.cn-hangzhou.aliyuncs.com/google_containers/metrics-server-amd64:v0.3.6

imagePullPolicy: IfNotPresent

args:

- --cert-dir=/tmp

- --secure-port=4443

ports:

- name: main-port

containerPort: 4443

protocol: TCP

securityContext:

readOnlyRootFilesystem: true

runAsNonRoot: true

runAsUser: 1000

volumeMounts:

- name: tmp-dir

mountPath: /tmp

nodeSelector:

kubernetes.io/os: linux

kubernetes.io/arch: "amd64"

root@master1:/data/metrics-server/deploy/kubernetes#

1.5.4 Create the metrics-server service

root@master1:/data/metrics-server/deploy/kubernetes# kubectl apply -f .

clusterrole.rbac.authorization.k8s.io/system:aggregated-metrics-reader created

clusterrolebinding.rbac.authorization.k8s.io/metrics-server:system:auth-delegator created

rolebinding.rbac.authorization.k8s.io/metrics-server-auth-reader created

apiservice.apiregistration.k8s.io/v1beta1.metrics.k8s.io created

serviceaccount/metrics-server created

deployment.apps/metrics-server created

service/metrics-server created

clusterrole.rbac.authorization.k8s.io/system:metrics-server created

clusterrolebinding.rbac.authorization.k8s.io/system:metrics-server created

# Check the Service

root@master1:/data/metrics-server/deploy/kubernetes# kubectl get svc -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kube-dns ClusterIP 172.28.0.2 53/UDP,53/TCP,9153/TCP 12d

metrics-server ClusterIP 172.28.172.191 443/TCP 7m6s

root@master1:/data/metrics-server/deploy/kubernetes#

1.5.5 Verify that metrics-server is collecting node data

root@master1:~# kubectl top nodes

NAME CPU(cores) CPU% MEMORY(bytes) MEMORY%

172.16.62.201 154m 8% 1052Mi 32%

172.16.62.202 93m 5% 885Mi 27%

172.16.62.203 111m 6% 782Mi 24%

172.16.62.207 100m 5% 891Mi 27%

172.16.62.208 109m 6% 1192Mi 36%

172.16.62.209 119m 6% 1041Mi 32%

1.5.6 Verify that metrics-server is collecting pod data

root@master1:~# kubectl top pods -n ns-uat

NAME CPU(cores) MEMORY(bytes)

uat-tomcat-app1-deployment-677fcd9d44-c5nnk 3m 92Mi

uat-tomcat-app1-deployment-677fcd9d44-jxmfm 0m 3Mi

root@master1:~#

1.5.7 Look up the HPA targetCPUUtilizationPercentage field

root@master1:/data/metrics-server/deploy/kubernetes# kubectl explain HorizontalPodAutoscaler.spec.targetCPUUtilizationPercentage

1.5.8 Create the HPA

- hpa.yaml

root@master1:/data/web/tomcat-app1/hpa# more hpa.yaml

#apiVersion: autoscaling/v2beta1
apiVersion: autoscaling/v1
kind: HorizontalPodAutoscaler
metadata:
  namespace: ns-uat # namespace
  name: uat-tomcat-app1-podautoscaler # name of this HPA object
  labels:
    app: uat-tomcat-app1
    version: v2beta1
spec:
  scaleTargetRef:
    apiVersion: apps/v1
    #apiVersion: extensions/v1beta1
    kind: Deployment
    name: uat-tomcat-app1-deployment # the deployment to scale
  minReplicas: 3
  maxReplicas: 20
  targetCPUUtilizationPercentage: 60
  #metrics:
  #- type: Resource
  #  resource:
  #    name: cpu
  #    targetAverageUtilization: 60
  #- type: Resource
  #  resource:
  #    name: memory

root@master1:/data/web/tomcat-app1/hpa#

- Verify that uat-tomcat-app1-deployment has been scaled up to 3 replicas

root@master1:~# kubectl get deployment -A

kube-system coredns 1/1 1 1 12d

kube-system metrics-server 1/1 1 1 30m

kubernetes-dashboard dashboard-metrics-scraper 1/1 1 1 13d

kubernetes-dashboard kubernetes-dashboard 1/1 1 1 13d

ns-uat uat-nginx-deployment 1/1 1 1 5d10h

ns-uat uat-tomcat-app1-deployment 3/3 3 3 5d12h

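1.5.9 Test

- hpa.yaml currently sets the minimum to 3

- For the test, set tomcat to 1 replica; the HPA then scales it back up to 3 automatically

- If the HPA minimum is higher than replicas in the application YAML, the HPA minimum wins

- If the HPA minimum is lower than replicas in the application YAML, the HPA still decides: under low load it scales the deployment down toward its minimum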

# Change replicas to 1 in the YAML, then apply

root@master1:/data/web/tomcat-app1# kubectl apply -f tomcat-app1.yaml

# Check the deployment

root@master1:~# kubectl get deployment uat-tomcat-app1-deployment -n ns-uat

NAME READY UP-TO-DATE AVAILABLE AGE

uat-tomcat-app1-deployment 3/3 3 3 5d12h

root@master1:~#

# Check the pods

root@master1:~# kubectl get pod -A -o wide

ns-uat uat-tomcat-app1-deployment-677fcd9d44-c5nnk 1/1 Running 0 43s 10.20.5.45 172.16.62.209

ns-uat uat-tomcat-app1-deployment-677fcd9d44-jxmfm 1/1 Running 4 5d12h 10.20.2.57 172.16.62.207

ns-uat uat-tomcat-app1-deployment-677fcd9d44-kffsz 1/1 Running 0 43s 10.20.3.57 172.16.62.208

# View the HPA details

root@master1:/data/web/tomcat-app1# kubectl describe hpa uat-tomcat-app1-podautoscaler -n ns-uat

Name: uat-tomcat-app1-podautoscaler

Namespace: ns-uat

Labels: app=uat-tomcat-app1

version=v2beta1

Annotations: kubectl.kubernetes.io/last-applied-configuration:

{"apiVersion":"autoscaling/v1","kind":"HorizontalPodAutoscaler","metadata":{"annotations":{},"labels":{"app":"uat-tomcat-app1","version":"...

CreationTimestamp: Sat, 15 Aug 2020 10:21:47 +0800

Reference: Deployment/uat-tomcat-app1-deployment

Metrics: ( current / target )

resource cpu on pods (as a percentage of request): 0% (1m) / 60%

Min replicas: 3

Max replicas: 20

Deployment pods: 3 current / 3 desired

Conditions:

Type Status Reason Message

---- ------ ------ -------

AbleToScale True ScaleDownStabilized recent recommendations were higher than current one, applying the highest recent recommendation

ScalingActive True ValidMetricFound the HPA was able to successfully calculate a replica count from cpu resource utilization (percentage of request)

ScalingLimited False DesiredWithinRange the desired count is within the acceptable range

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal SuccessfulRescale 9m23s horizontal-pod-autoscaler New size: 3; reason: All metrics below target

Normal SuccessfulRescale 2m3s (x2 over 37m) horizontal-pod-autoscaler New size: 3; reason: Current number of replicas below Spec.MinReplicas # scaled back up to 3 pods

root@master1:/data/web/tomcat-app1#
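The Metrics line in the describe output above, 0% (1m) / 60%, reads as "average usage as a percentage of the CPU request". A minimal sketch of that arithmetic follows, assuming each pod requests 500m CPU as in the deployment shown earlier; the function name is hypothetical, and the whole-number truncation is an assumption made to match the displayed 0%.

```python
def cpu_utilization_percent(usage_millicores, request_millicores):
    """Average CPU usage as a whole-number percentage of the CPU request.

    Both arguments are per-pod lists in millicores (hypothetical helper).
    """
    total_usage = sum(usage_millicores)
    total_request = sum(request_millicores)
    # Integer division: fractions of a percent are dropped.
    return total_usage * 100 // total_request


if __name__ == "__main__":
    # Three idle pods using about 1m each, each requesting 500m
    print(cpu_utilization_percent([1, 1, 1], [500, 500, 500]))
    # The same pods at 300m each sit exactly on the 60% target
    print(cpu_utilization_percent([300, 300, 300], [500, 500, 500]))
```

Because the percentage is relative to requests, not limits, a pod with no CPU request cannot be scaled on CPU utilization.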

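2. Kubernetes hands-on: image version upgrade and rollback with set image and rollout

- Running kubectl set image against a deployment with a new image:tag updates the code that the deployment runs.

Build several versions of the application image (v1 to v4 of tomcat-app1 below). Deploy v1 first, then upgrade step by step to the later versions to test image upgrade and rollback.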

2.1 Prepare the images

v1 k8s lab tomcat app1 v1...........

v2 k8s lab tomcat app1 v2...........

v3 k8s lab tomcat app1 v3...........

v4 k8s lab tomcat app1 v4...........

2.2 Upgrade by editing the YAML file

- Upgrade: kubectl apply -f tomcat-app1.yaml --record=true

root@master1:/data/web/tomcat-app1# kubectl apply -f tomcat-app1.yaml --record=true

deployment.apps/uat-tomcat-app1-deployment configured

service/uat-tomcat-app1-service unchanged

root@master1:/data/web/tomcat-app1#

- View the upgrade history

root@master1:/tmp/core# kubectl rollout history deployment uat-tomcat-app1-deployment -n ns-uat

deployment.apps/uat-tomcat-app1-deployment

REVISION CHANGE-CAUSE

1

2 kubectl apply --filename=tomcat-app1.yaml --record=true

3 kubectl apply --filename=tomcat-app1.yaml --record=true

4 kubectl apply --filename=tomcat-app1.yaml --record=true

root@master1:/tmp/core#

- Test the page

root@ha1:/etc/haproxy# curl http://172.16.62.191/myapp/index.html

k8s lab tomcat app1 v2...........

root@ha1:/etc/haproxy# curl http://172.16.62.191/myapp/index.html

k8s lab tomcat app1 v2...........

root@ha1:/etc/haproxy# curl http://172.16.62.191/myapp/index.html

k8s lab tomcat app1 v3...........

root@ha1:/etc/haproxy# curl http://172.16.62.191/myapp/index.html

k8s lab tomcat app1 v3...........

root@ha1:/etc/haproxy# curl http://172.16.62.191/myapp/index.html

k8s lab tomcat app1 v4...........

root@ha1:/etc/haproxy#

2.3 Roll back to the previous version

# Before the rollback

root@master1:/tmp/core# kubectl rollout history deployment uat-tomcat-app1-deployment -n ns-uat

deployment.apps/uat-tomcat-app1-deployment

REVISION CHANGE-CAUSE

1

2 kubectl apply --filename=tomcat-app1.yaml --record=true

3 kubectl apply --filename=tomcat-app1.yaml --record=true

4 kubectl apply --filename=tomcat-app1.yaml --record=true

# Roll back to the previous version

root@master1:/tmp/core# kubectl rollout undo deployment uat-tomcat-app1-deployment -n ns-uat

deployment.apps/uat-tomcat-app1-deployment rolled back

# View the history: revision 3 is gone and revision 5 has appeared; revision 5 is the rollback to what revision 3 contained

root@master1:/tmp/core# kubectl rollout history deployment uat-tomcat-app1-deployment -n ns-uat

deployment.apps/uat-tomcat-app1-deployment

REVISION CHANGE-CAUSE

1

2 kubectl apply --filename=tomcat-app1.yaml --record=true

4 kubectl apply --filename=tomcat-app1.yaml --record=true

5 kubectl apply --filename=tomcat-app1.yaml --record=true

root@master1:/tmp/core#

# Test: revision 3 corresponded to v3

root@ha1:/etc/haproxy# curl http://172.16.62.191/myapp/index.html

k8s lab tomcat app1 v3...........

root@ha1:/etc/haproxy#

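# Roll back again: this returns to the revision that was active just before, not to the one before that; repeated undo only toggles between the two most recent versions

# Before the rollback

root@master1:/tmp/core# kubectl rollout history deployment uat-tomcat-app1-deployment -n ns-uat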

deployment.apps/uat-tomcat-app1-deployment

REVISION CHANGE-CAUSE

1

2 kubectl apply --filename=tomcat-app1.yaml --record=true

4 kubectl apply --filename=tomcat-app1.yaml --record=true

5 kubectl apply --filename=tomcat-app1.yaml --record=true

# Run the rollback

root@master1:/tmp/core# kubectl rollout undo deployment uat-tomcat-app1-deployment -n ns-uat

deployment.apps/uat-tomcat-app1-deployment rolled back

# View the history: undo without --to-revision only switches between the two most recent revisions and cannot reach an older one

root@master1:/tmp/core# kubectl rollout history deployment uat-tomcat-app1-deployment -n ns-uat

deployment.apps/uat-tomcat-app1-deployment

REVISION CHANGE-CAUSE

1

2 kubectl apply --filename=tomcat-app1.yaml --record=true

5 kubectl apply --filename=tomcat-app1.yaml --record=true

6 kubectl apply --filename=tomcat-app1.yaml --record=true

root@master1:/tmp/core#

- REVISION 6 corresponds to the earlier REVISION 4, and REVISION 4 served the page k8s lab tomcat app1 v4...........

# Test: the page is v4

root@ha1:/etc/haproxy# curl http://172.16.62.191/myapp/index.html

k8s lab tomcat app1 v4...........

root@ha1:/etc/haproxy#

2.4 Roll back to a specific revision

# Before the rollback

root@master1:/tmp/core# kubectl rollout history deployment uat-tomcat-app1-deployment -n ns-uat

deployment.apps/uat-tomcat-app1-deployment

REVISION CHANGE-CAUSE

1

2 kubectl apply --filename=tomcat-app1.yaml --record=true

5 kubectl apply --filename=tomcat-app1.yaml --record=true

6 kubectl apply --filename=tomcat-app1.yaml --record=true

# Run the rollback with --to-revision=2

root@master1:/tmp/core# kubectl rollout undo deployment uat-tomcat-app1-deployment --to-revision=2 -n ns-uat

deployment.apps/uat-tomcat-app1-deployment rolled back

# View the history: REVISION 2 is gone and REVISION 7 has been created

root@master1:/tmp/core# kubectl rollout history deployment uat-tomcat-app1-deployment -n ns-uat

deployment.apps/uat-tomcat-app1-deployment

REVISION CHANGE-CAUSE

1

5 kubectl apply --filename=tomcat-app1.yaml --record=true

6 kubectl apply --filename=tomcat-app1.yaml --record=true

7 kubectl apply --filename=tomcat-app1.yaml --record=true

root@master1:/tmp/core#

# Test

root@ha1:/etc/haproxy# curl http://172.16.62.191/myapp/index.html

k8s lab tomcat app1 v2...........

root@ha1:/etc/haproxy#
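The renumbering seen in sections 2.3 and 2.4 can be modeled with a small simulation. This is a sketch of the observed behaviour only, not the Deployment controller's code: the function name is hypothetical, and mapping revision 1 to v1 is an assumption, since the transcript never shows which page revision 1 served.

```python
def rollout_undo(history, to_revision=None):
    """Simulate how `kubectl rollout undo` renumbers revisions.

    history maps revision number -> a label for the pod template (here an
    image tag). Returns a new dict; the input is left untouched.
    """
    revisions = sorted(history)
    current = revisions[-1]
    if to_revision is None:
        if len(revisions) < 2:
            raise ValueError("no previous revision to roll back to")
        to_revision = revisions[-2]  # the revision just before the current one
    if to_revision not in history:
        raise KeyError(f"revision {to_revision} not found")
    updated = dict(history)
    if to_revision == current:
        return updated  # already on that revision, nothing to do
    template = updated.pop(to_revision)  # the old number disappears...
    updated[current + 1] = template      # ...and comes back as the newest
    return updated


if __name__ == "__main__":
    history = {1: "v1", 2: "v2", 3: "v3", 4: "v4"}  # revision 1 = v1 is assumed
    history = rollout_undo(history)                 # first undo
    history = rollout_undo(history)                 # second undo
    history = rollout_undo(history, to_revision=2)  # rollback to revision 2
    print(history)
```

The model makes the "toggle" effect visible: after an undo, the previous current revision becomes the second newest, so the next plain undo goes straight back to it.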

2.5 Upgrade with set image

- uat-tomcat-app1-container is the container name; it can be found in the YAML

# Start the upgrade to v3

root@master1:/data/web/tomcat-app1# kubectl get pod -A -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

root@master1:/tmp/core# kubectl set image deployment/uat-tomcat-app1-deployment uat-tomcat-app1-container=harbor.haostack.com/k8s/jack_k8s_tomcat-app1:v3 -n ns-uat

deployment.apps/uat-tomcat-app1-deployment image updated

root@master1:/tmp/core#

# Upgrade in progress

root@master1:/data/web/tomcat-app1# kubectl get pod -A -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

ns-uat uat-tomcat-app1-deployment-5f87c98d7f-gr4sd 1/1 Terminating 0 20m 10.20.3.90 172.16.62.208

ns-uat uat-tomcat-app1-deployment-5f87c98d7f-mrkc7 1/1 Running 0 20m 10.20.2.72 172.16.62.207

ns-uat uat-tomcat-app1-deployment-5f87c98d7f-z82tn 1/1 Running 0 19m 10.20.5.58 172.16.62.209

ns-uat uat-tomcat-app1-deployment-6d5876d55d-dpcck 1/1 Running 0 28s 10.20.3.92 172.16.62.208

ns-uat uat-tomcat-app1-deployment-6d5876d55d-hwm8n 0/1 ImagePullBackOff 0 25s 10.20.2.74 172.16.62.207

# Application check: now on v3

root@ha1:/etc/haproxy# curl http://172.16.62.191/myapp/index.html

k8s lab tomcat app1 v3...........

root@ha1:/etc/haproxy#

# Upgrade to v4

root@master1:/tmp/core# kubectl set image deployment/uat-tomcat-app1-deployment uat-tomcat-app1-container=harbor.haostack.com/k8s/jack_k8s_tomcat-app1:v4 -n ns-uat

deployment.apps/uat-tomcat-app1-deployment image updated

root@master1:/tmp/core#

# Upgrade in progress

root@master1:/data/web/tomcat-app1# kubectl get pod -A -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

ns-uat uat-tomcat-app1-deployment-6d5876d55d-bc6vx 1/1 Terminating 0 4m45s 10.20.5.60 172.16.62.209

ns-uat uat-tomcat-app1-deployment-6d5876d55d-dpcck 1/1 Terminating 0 5m36s 10.20.3.92 172.16.62.208

ns-uat uat-tomcat-app1-deployment-6d5876d55d-hwm8n 1/1 Terminating 0 5m33s 10.20.2.74 172.16.62.207

ns-uat uat-tomcat-app1-deployment-6d5876d55d-qr927 1/1 Terminating 0 4m21s 10.20.3.93 172.16.62.208

ns-uat uat-tomcat-app1-deployment-75c57fcffc-2p2kg 0/1 ContainerCreating 0 3s 172.16.62.209

ns-uat uat-tomcat-app1-deployment-75c57fcffc-78frv 1/1 Running 0 3s 10.20.3.95 172.16.62.208

ns-uat uat-tomcat-app1-deployment-75c57fcffc-8m2wl 1/1 Running 0 9s 10.20.2.76 172.16.62.207

ns-uat uat-tomcat-app1-deployment-75c57fcffc-bwrb9 0/1 ContainerCreating 0 3s 172.16.62.208

ns-uat uat-tomcat-app1-deployment-75c57fcffc-bx6dv 1/1 Running 0 12s 10.20.2.75 172.16.62.207

ns-uat uat-tomcat-app1-deployment-75c57fcffc-fq7ck 1/1 Running 0 12s 10.20.5.61 172.16.62.209

ns-uat uat-tomcat-app1-deployment-75c57fcffc-td692 1/1 Running 0 9s 10.20.3.94 172.16.62.208

root@master1:/data/web/tomcat-app1#

# View the upgrade history

root@master1:/tmp/core# kubectl rollout history deployment uat-tomcat-app1-deployment -n ns-uat

deployment.apps/uat-tomcat-app1-deployment

REVISION CHANGE-CAUSE

1

6 kubectl apply --filename=tomcat-app1.yaml --record=true

7 kubectl apply --filename=tomcat-app1.yaml --record=true

8 kubectl apply --filename=tomcat-app1.yaml --record=true

root@master1:/tmp/core# kubectl set image deployment/uat-tomcat-app1-deployment uat-tomcat-app1-container=harbor.haostack.com/k8s/jack_k8s_tomcat-app1:v4 -n ns-uat

deployment.apps/uat-tomcat-app1-deployment image updated

root@master1:/tmp/core# kubectl rollout history deployment uat-tomcat-app1-deployment -n ns-uat

deployment.apps/uat-tomcat-app1-deployment

REVISION CHANGE-CAUSE

1

7 kubectl apply --filename=tomcat-app1.yaml --record=true

8 kubectl apply --filename=tomcat-app1.yaml --record=true

9 kubectl apply --filename=tomcat-app1.yaml --record=true

root@master1:/tmp/core#

# Application check: now on v4

root@ha1:/etc/haproxy# curl http://172.16.62.191/myapp/index.html

k8s lab tomcat app1 v4...........

root@ha1:/etc/haproxy#
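During the upgrades above, old and new pods coexist for a while. How many may coexist is bounded by the RollingUpdate strategy shown in the Deployment earlier (maxSurge: 25%, maxUnavailable: 25%). The sketch below works out those bounds, using the documented rounding (maxSurge rounds up, maxUnavailable rounds down); the function name and return keys are made up for this illustration.

```python
def rolling_update_bounds(replicas, max_surge_percent=25, max_unavailable_percent=25):
    """Pod-count limits during a rolling update with percentage settings."""
    # maxSurge rounds up: ceil(a / b) written with integer arithmetic.
    surge = -(-replicas * max_surge_percent // 100)
    # maxUnavailable rounds down.
    unavailable = replicas * max_unavailable_percent // 100
    return {
        "max_total_pods": replicas + surge,
        "min_available_pods": replicas - unavailable,
    }


if __name__ == "__main__":
    # With 3 replicas: one extra pod may be created, none may be unavailable
    print(rolling_update_bounds(3))
    print(rolling_update_bounds(8))
```

With small replica counts the rounding matters: at 3 replicas the update proceeds one pod at a time and never dips below 3 available pods.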

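2.6 Cordon a node so that it takes no part in scheduling

- kubectl --help | grep cordon # cordon: to fence off

- cordon Mark node as unschedulable # fence the node off, i.e. exclude it from pod scheduling

- uncordon Mark node as schedulable # remove the fence, i.e. allow pod scheduling again

2.6.1 Exclude 172.16.62.209 from scheduling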

root@master1:/data/web/tomcat-app1# kubectl cordon 172.16.62.209

node/172.16.62.209 cordoned

root@master1:/data/web/tomcat-app1# kubectl get nodes

NAME STATUS ROLES AGE VERSION

172.16.62.201 Ready,SchedulingDisabled node 14d v1.17.4

172.16.62.202 Ready,SchedulingDisabled node 14d v1.17.4

172.16.62.203 Ready,SchedulingDisabled node 14d v1.17.4

172.16.62.207 Ready node 14d v1.17.4

172.16.62.208 Ready node 14d v1.17.4

172.16.62.209 Ready,SchedulingDisabled node 14d v1.17.4

root@master1:/data/web/tomcat-app1#

2.6.2 Allow scheduling on 172.16.62.209 again

root@master1:/data/web/tomcat-app1# kubectl uncordon 172.16.62.209

node/172.16.62.209 uncordoned

root@master1:/data/web/tomcat-app1# kubectl get nodes

NAME STATUS ROLES AGE VERSION

172.16.62.201 Ready,SchedulingDisabled node 14d v1.17.4

172.16.62.202 Ready,SchedulingDisabled node 14d v1.17.4

172.16.62.203 Ready,SchedulingDisabled node 14d v1.17.4

172.16.62.207 Ready node 14d v1.17.4

172.16.62.208 Ready node 14d v1.17.4

172.16.62.209 Ready node 14d v1.17.4

root@master1:/data/web/tomcat-app1#
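The effect of cordon on scheduling can be pictured as a simple filter over the node list. The sketch below takes the STATUS column from the kubectl get nodes output in 2.6.1 and keeps only nodes without SchedulingDisabled; it is a toy model with a hypothetical function name, not the scheduler's real logic. Note that cordon only affects new pods: pods already running on a cordoned node stay where they are.

```python
def schedulable_nodes(nodes):
    """Return the names of nodes that can still receive new pods.

    nodes maps node name -> STATUS string as printed by `kubectl get nodes`.
    """
    return [name for name, status in nodes.items()
            if "SchedulingDisabled" not in status.split(",")]


if __name__ == "__main__":
    # Statuses right after `kubectl cordon 172.16.62.209`
    nodes = {
        "172.16.62.201": "Ready,SchedulingDisabled",
        "172.16.62.202": "Ready,SchedulingDisabled",
        "172.16.62.203": "Ready,SchedulingDisabled",
        "172.16.62.207": "Ready",
        "172.16.62.208": "Ready",
        "172.16.62.209": "Ready,SchedulingDisabled",
    }
    print(schedulable_nodes(nodes))
```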

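3 Deploying ZooKeeper and Dubbo services

- PV and PVC overview

- By default, files on a container's disk are not persistent, which causes two problems for applications running in containers. First, when a container crashes and the kubelet restarts it, its files are lost. Second, containers running together in one Pod often need to share files. The Kubernetes Volume abstraction solves both problems.

- A PersistentVolume (PV) is a piece of network storage in the cluster that an administrator has provisioned. It is a cluster resource, just as a node is a cluster resource. PVs are volume plugins like Volumes, but their lifecycle is independent of any individual Pod that uses them. The API object captures the implementation details of the storage, whether NFS, iSCSI, or a cloud-provider-specific storage system. A PV is a description of storage added by the administrator. It is a global resource that does not belong to any namespace, and it records the storage type, size, access modes, and so on. For example, destroying the Pod that uses a PV has no effect on the PV.

- A PersistentVolumeClaim (PVC) is a user's request for storage. It is analogous to a Pod: Pods consume node resources and PVCs consume storage resources. Just as a Pod can request specific amounts of CPU and memory, a PVC can request a specific size and access mode. A PVC is a namespaced resource.

- Kubernetes has supported PersistentVolume and PersistentVolumeClaim since version 1.0.

A PV abstracts the underlying network storage: the storage is defined as a resource, and one large storage system can be split into pieces for different workloads.

A PVC is a request to use PV resources, just as a Pod consumes node resources. A Pod writes data to the PV through the PVC, and the PV in turn stores it on the backing storage.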

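- PersistentVolume parameters

# kubectl explain PersistentVolume

capacity: # size of the PV, see kubectl explain PersistentVolume.spec.capacity

accessModes: # access modes, see kubectl explain PersistentVolume.spec.accessModes

ReadWriteOnce: the PV can be mounted read-write by a single node (RWO)

ReadOnlyMany: the PV can be mounted by many nodes, read-only (ROX)

ReadWriteMany: the PV can be mounted read-write by many nodes (RWX)

persistentVolumeReclaimPolicy # what happens to an already-created volume once it is released:

# kubectl explain PersistentVolume.spec.persistentVolumeReclaimPolicy

Retain: keep the volume and its data as they are; an administrator must eventually delete them manually

Recycle: reclaim the space by deleting all data on the volume (including directories and hidden files); currently supported only for NFS and hostPath

Delete: delete the volume automatically

volumeMode # volume type, see kubectl explain PersistentVolume.spec.volumeMode

Whether the volume is used as a raw block device or as a filesystem; the default is filesystem

mountOptions # list of additional mount options, for finer-grained control

ro # and so on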

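- PersistentVolumeClaim parameters:

# kubectl explain PersistentVolumeClaim

accessModes: # PVC access modes, see kubectl explain PersistentVolumeClaim.spec.accessModes

ReadWriteOnce: the PVC can be mounted read-write by a single node (RWO)

ReadOnlyMany: the PVC can be mounted by many nodes, read-only (ROX)

ReadWriteMany: the PVC can be mounted read-write by many nodes (RWX)

resources: # the amount of storage the PVC requests

selector: # label selector that chooses the PV to bind

matchLabels # match on exact label key/value pairs

matchExpressions # match on set-based expressions (operators such as In, NotIn, Exists, DoesNotExist), not regular expressions

volumeName # name of the PV to bind

volumeMode # volume type

Whether the PVC uses the volume as a raw block device or as a filesystem; the default is filesystem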
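To make the relationship between these PV and PVC fields concrete, here is a simplified sketch of the checks that decide whether a claim can bind to a volume: an optional volumeName must match, the PV must offer every requested access mode, and the PV must be at least as large as the request. It deliberately ignores storageClassName, selector, and volumeMode; the function and the dictionary keys are hypothetical names for this illustration, not API fields.

```python
def can_bind(pv, pvc):
    """Simplified check of whether a PVC could bind to a PV."""
    # A PVC that names a volume can only bind to exactly that volume.
    if pvc.get("volumeName") and pvc["volumeName"] != pv["name"]:
        return False
    # Every access mode the claim asks for must be offered by the volume.
    if not set(pvc["accessModes"]) <= set(pv["accessModes"]):
        return False
    # The volume may be larger than the request, never smaller.
    return pv["capacityGi"] >= pvc["requestGi"]


if __name__ == "__main__":
    pv = {"name": "zookeeper-datadir-pv-1", "capacityGi": 20,
          "accessModes": ["ReadWriteOnce"]}
    pvc = {"name": "zookeeper-datadir-pvc-1", "requestGi": 10,
           "accessModes": ["ReadWriteOnce"],
           "volumeName": "zookeeper-datadir-pv-1"}
    print(can_bind(pv, pvc))
```

A claim that binds to a larger volume gets the whole volume, which is why a 10Gi request bound to a 20Gi PV is reported with a capacity of 20Gi.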

3.1 Deploy the ZK cluster

- Build a ZooKeeper cluster that uses PVs and PVCs as its backend storage

- Pull the JDK image

root@master1:/data/web/tomcat-app1# docker pull elevy/slim_java:8

root@master1:/data/web/tomcat-app1# docker tag elevy/slim_java:8 harbor.haostack.com/k8s/elevy/slim_java:1.8.0.144

root@master1:/data/web/tomcat-app1# docker push harbor.haostack.com/k8s/elevy/slim_java:1.8.0.144

3.1.1 Build the ZK image

# Files

root@master1:/data/web/zookeeper# ll

total 32

drwxr-xr-x 4 root root 4096 Aug 15 18:43 ./

drwxr-xr-x 10 root root 4096 Aug 15 18:29 ../

drwxr-xr-x 2 root root 4096 Aug 15 18:29 bin/

-rw-r--r-- 1 root root 140 Aug 15 18:36 build-command.sh

drwxr-xr-x 2 root root 4096 Aug 15 18:29 conf/

-rw-r--r-- 1 root root 2000 Aug 15 18:35 Dockerfile

-rw-r--r-- 1 root root 278 Aug 15 18:29 entrypoint.sh

-rw-r--r-- 1 root root 2270 Aug 15 18:29 zookeeper-3.12-Dockerfile.tar.gz

# ZK Dockerfile

root@master1:/data/web/zookeeper# cat Dockerfile

#FROM harbor-linux38.local.com/linux38/slim_java:8

FROM harbor.haostack.com/k8s/elevy/slim_java:1.8.0.144

ENV ZK_VERSION 3.4.14

RUN apk add --no-cache --virtual .build-deps \

ca-certificates \

gnupg \

tar \

wget && \

#

# Install dependencies

apk add --no-cache \

bash && \

#

# Download Zookeeper

wget -nv -O /tmp/zk.tgz "https://www.apache.org/dyn/closer.cgi?action=download&filename=zookeeper/zookeeper-${ZK_VERSION}/zookeeper-${ZK_VERSION}.tar.gz" && \

wget -nv -O /tmp/zk.tgz.asc "https://www.apache.org/dist/zookeeper/zookeeper-${ZK_VERSION}/zookeeper-${ZK_VERSION}.tar.gz.asc" && \

wget -nv -O /tmp/KEYS https://dist.apache.org/repos/dist/release/zookeeper/KEYS && \

#

# Verify the signature

export GNUPGHOME="$(mktemp -d)" && \

gpg -q --batch --import /tmp/KEYS && \

gpg -q --batch --no-auto-key-retrieve --verify /tmp/zk.tgz.asc /tmp/zk.tgz && \

#

# Set up directories

#

mkdir -p /zookeeper/data /zookeeper/wal /zookeeper/log && \

#

# Install

tar -x -C /zookeeper --strip-components=1 --no-same-owner -f /tmp/zk.tgz && \

#

# Slim down

cd /zookeeper && \

cp dist-maven/zookeeper-${ZK_VERSION}.jar . && \

rm -rf \

*.txt \

*.xml \

bin/README.txt \

bin/*.cmd \

conf/* \

contrib \

dist-maven \

docs \

lib/*.txt \

lib/cobertura \

lib/jdiff \

recipes \

src \

zookeeper-*.asc \

zookeeper-*.md5 \

zookeeper-*.sha1 && \

#

# Clean up

apk del .build-deps && \

rm -rf /tmp/* "$GNUPGHOME"

COPY conf /zookeeper/conf/

COPY bin/zkReady.sh /zookeeper/bin/

COPY entrypoint.sh /

ENV PATH=/zookeeper/bin:${PATH} \

ZOO_LOG_DIR=/zookeeper/log \

ZOO_LOG4J_PROP="INFO, CONSOLE, ROLLINGFILE" \

JMXPORT=9010

ENTRYPOINT [ "/entrypoint.sh" ]

CMD [ "zkServer.sh", "start-foreground" ]

EXPOSE 2181 2888 3888 9010

root@master1:/data/web/zookeeper#

# Build the ZK image

3.2 Create the PVs

- PV YAML

root@master1:/data/web/zookeeper/pv# cat zookeeper-persistentvolume.yaml

---

apiVersion: v1

kind: PersistentVolume

metadata:

name: zookeeper-datadir-pv-1

namespace: ns-uat

spec:

capacity:

storage: 20Gi

accessModes:

- ReadWriteOnce

nfs:

server: 172.16.62.24

path: /nfsdata/k8sdata/zk/zookeeper-datadir-1

---

apiVersion: v1

kind: PersistentVolume

metadata:

name: zookeeper-datadir-pv-2

namespace: ns-uat

spec:

capacity:

storage: 20Gi

accessModes:

- ReadWriteOnce

nfs:

server: 172.16.62.24

path: /nfsdata/k8sdata/zk/zookeeper-datadir-2

---

apiVersion: v1

kind: PersistentVolume

metadata:

name: zookeeper-datadir-pv-3

namespace: ns-uat

spec:

capacity:

storage: 20Gi

accessModes:

- ReadWriteOnce

nfs:

server: 172.16.62.24

path: /nfsdata/k8sdata/zk/zookeeper-datadir-3

root@master1:/data/web/zookeeper/pv#

# Create the PVs

root@master1:/data/web/zookeeper/pv# kubectl apply -f zookeeper-persistentvolume.yaml

persistentvolume/zookeeper-datadir-pv-1 created

persistentvolume/zookeeper-datadir-pv-2 created

persistentvolume/zookeeper-datadir-pv-3 created

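# Check the PV status

The reclaim policy is Retain: once a volume is released, it and its data are kept as they are, and an administrator has to delete them manually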

root@master1:/data/web/zookeeper/pv# kubectl get pv

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE

zookeeper-datadir-pv-1 20Gi RWO Retain Available 4s

zookeeper-datadir-pv-2 20Gi RWO Retain Available 4s

zookeeper-datadir-pv-3 20Gi RWO Retain Available 4s

root@master1:/data/web/zookeeper/pv#

3.3 Create the PVCs

root@master1:/data/web/zookeeper/pv# cat zookeeper-persistentvolumeclaim.yaml

---

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: zookeeper-datadir-pvc-1

namespace: ns-uat

spec:

accessModes:

- ReadWriteOnce

volumeName: zookeeper-datadir-pv-1

resources:

requests:

storage: 10Gi

---

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: zookeeper-datadir-pvc-2

namespace: ns-uat

spec:

accessModes:

- ReadWriteOnce

volumeName: zookeeper-datadir-pv-2

resources:

requests:

storage: 10Gi

---

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: zookeeper-datadir-pvc-3

namespace: ns-uat

spec:

accessModes:

- ReadWriteOnce

volumeName: zookeeper-datadir-pv-3

resources:

requests:

storage: 10Gi

root@master1:/data/web/zookeeper/pv#

root@master1:/data/web/zookeeper/pv# kubectl apply -f zookeeper-persistentvolumeclaim.yaml

persistentvolumeclaim/zookeeper-datadir-pvc-1 created

persistentvolumeclaim/zookeeper-datadir-pvc-2 created

persistentvolumeclaim/zookeeper-datadir-pvc-3 created

- Check the PVs: they are now bound

root@master1:/data/web/zookeeper/pv# kubectl get pv

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE

zookeeper-datadir-pv-1 20Gi RWO Retain Bound ns-uat/zookeeper-datadir-pvc-1 8m28s

zookeeper-datadir-pv-2 20Gi RWO Retain Bound ns-uat/zookeeper-datadir-pvc-2 8m28s

zookeeper-datadir-pv-3 20Gi RWO Retain Bound ns-uat/zookeeper-datadir-pvc-3 8m28s

- Check the PVCs: they are now bound

root@master1:/data/web/zookeeper/pv# kubectl get pvc -n ns-uat

NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

zookeeper-datadir-pvc-1 Bound zookeeper-datadir-pv-1 20Gi RWO 18s

zookeeper-datadir-pvc-2 Bound zookeeper-datadir-pv-2 20Gi RWO 18s

zookeeper-datadir-pvc-3 Bound zookeeper-datadir-pv-3 20Gi RWO 18s

root@master1:/data/web/zookeeper/pv#
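Reading the STATUS column by eye works for three claims; for scripted checks, the same table can be parsed. This is a small sketch, assuming the column layout of `kubectl get pvc -n <namespace>` shown above (STATUS is the second column); the function name `all_pvcs_bound` is hypothetical.

```shell
# Read `kubectl get pvc -n <ns>` table output on stdin.
# Succeeds only if there is at least one PVC row and every row is Bound.
all_pvcs_bound() {
  awk 'NR > 1 && NF > 0 { total++; if ($2 != "Bound") bad++ }
       END { if (total > 0 && bad == 0) exit 0; exit 1 }'
}
```

A possible use is `kubectl get pvc -n ns-uat | all_pvcs_bound && echo "all PVCs bound"`, run before deploying the pods that mount the claims.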

3.4 Deploy the ZK cluster

- ZK yaml

root@master1:/data/web/zookeeper# cat zookeeper.yaml

apiVersion: v1

kind: Service

metadata:

name: zookeeper

namespace: ns-uat

spec:

ports:

- name: client

port: 2181

selector:

app: zookeeper

---

apiVersion: v1

kind: Service

metadata:

name: zookeeper1

namespace: ns-uat

spec:

type: NodePort

ports:

- name: client

port: 2181

nodePort: 32181

- name: followers

port: 2888

- name: election

port: 3888

selector:

app: zookeeper

server-id: "1"

---

apiVersion: v1

kind: Service

metadata:

name: zookeeper2

namespace: ns-uat

spec:

type: NodePort

ports:

- name: client

port: 2181

nodePort: 32182

- name: followers

port: 2888

- name: election

port: 3888

selector:

app: zookeeper

server-id: "2"

---

apiVersion: v1

kind: Service

metadata:

name: zookeeper3

namespace: ns-uat

spec:

type: NodePort

ports:

- name: client

port: 2181

nodePort: 32183

- name: followers

port: 2888

- name: election

port: 3888

selector:

app: zookeeper

server-id: "3"

---

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

name: zookeeper1

namespace: ns-uat

spec:

replicas: 1

selector:

matchLabels:

app: zookeeper

template:

metadata:

labels:

app: zookeeper

server-id: "1"

spec:

volumes:

- name: data

emptyDir: {}

- name: wal

emptyDir:

medium: Memory

containers:

- name: server

image: harbor.haostack.com/k8s/zookeeper:v3.4.14

imagePullPolicy: Always

env:

- name: MYID

value: "1"

- name: SERVERS

value: "zookeeper1,zookeeper2,zookeeper3"

- name: JVMFLAGS

value: "-Xmx2G"

ports:

- containerPort: 2181

- containerPort: 2888

- containerPort: 3888

volumeMounts:

- mountPath: "/zookeeper/data"

name: zookeeper-datadir-pvc-1

volumes:

- name: zookeeper-datadir-pvc-1

persistentVolumeClaim:

claimName: zookeeper-datadir-pvc-1

---

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

name: zookeeper2

namespace: ns-uat

spec:

replicas: 1

selector:

matchLabels:

app: zookeeper

template:

metadata:

labels:

app: zookeeper

server-id: "2"

spec:

volumes:

- name: data

emptyDir: {}

- name: wal

emptyDir:

medium: Memory

containers:

- name: server

image: harbor.haostack.com/k8s/zookeeper:v3.4.14

imagePullPolicy: Always

env:

- name: MYID

value: "2"

- name: SERVERS

value: "zookeeper1,zookeeper2,zookeeper3"

- name: JVMFLAGS

value: "-Xmx2G"

ports:

- containerPort: 2181

- containerPort: 2888

- containerPort: 3888

volumeMounts:

- mountPath: "/zookeeper/data"

name: zookeeper-datadir-pvc-2

volumes:

- name: zookeeper-datadir-pvc-2

persistentVolumeClaim:

claimName: zookeeper-datadir-pvc-2

---

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

name: zookeeper3

namespace: ns-uat

spec:

replicas: 1

selector:

matchLabels:

app: zookeeper

template:

metadata:

labels:

app: zookeeper

server-id: "3"

spec:

volumes:

- name: data

emptyDir: {}

- name: wal

emptyDir:

medium: Memory

containers:

- name: server

image: harbor.haostack.com/k8s/zookeeper:v3.4.14

imagePullPolicy: Always

env:

- name: MYID

value: "3"

- name: SERVERS

value: "zookeeper1,zookeeper2,zookeeper3"

- name: JVMFLAGS

value: "-Xmx2G"

ports:

- containerPort: 2181

- containerPort: 2888

- containerPort: 3888

volumeMounts:

- mountPath: "/zookeeper/data"

name: zookeeper-datadir-pvc-3

volumes:

- name: zookeeper-datadir-pvc-3

persistentVolumeClaim:

claimName: zookeeper-datadir-pvc-3

root@master1:/data/web/zookeeper#

- Create the ZK pods

root@master1:/data/web/zookeeper# kubectl apply -f zookeeper.yaml

service/zookeeper unchanged

service/zookeeper1 created

service/zookeeper2 created

service/zookeeper3 created

deployment.apps/zookeeper1 unchanged

deployment.apps/zookeeper2 unchanged

deployment.apps/zookeeper3 unchanged

root@master1:/data/web/zookeeper#

- Verify the pods

root@master1:/data/web/zookeeper/pv# kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

ns-uat zookeeper1-7495b46dd9-p8jtn 1/1 Running 0 67s

ns-uat zookeeper2-fb6896f66-tgl7l 1/1 Running 0 67s

ns-uat zookeeper3-5d7f556575-g4qhd 1/1 Running 0 67s

- Verify the data on the NFS server

drwxr-xr-x 3 root root 35 Aug 15 19:36 zookeeper-datadir-1

drwxr-xr-x 3 root root 35 Aug 15 19:36 zookeeper-datadir-2

drwxr-xr-x 3 root root 35 Aug 15 19:36 zookeeper-datadir-3

[root@node24 zk]# cd zookeeper-datadir-1

[root@node24 zookeeper-datadir-1]# ll

total 4

-rw-r--r-- 1 root root 2 Aug 15 19:36 myid

drwxr-xr-x 2 root root 47 Aug 15 19:36 version-2

[root@node24 zookeeper-datadir-1]# cd ..

[root@node24 zk]# ls

zookeeper-datadir-1 zookeeper-datadir-2 zookeeper-datadir-3

[root@node24 zk]# cd zookeeper-datadir-3

[root@node24 zookeeper-datadir-3]# ls

myid version-2

[root@node24 zookeeper-datadir-3]# cat myid

3

[root@node24 zookeeper-datadir-3]#

- Verify the cluster status

root@master1:/data/web/zookeeper/pv# kubectl exec -it zookeeper1-7495b46dd9-p8jtn sh -n ns-uat

/ # /zookeeper/bin/zk

zkCleanup.sh zkCli.sh zkEnv.sh zkReady.sh zkServer.sh zkTxnLogToolkit.sh

/ # /zookeeper/bin/zkServer.sh status

ZooKeeper JMX enabled by default

ZooKeeper remote JMX Port set to 9010

ZooKeeper remote JMX authenticate set to false

ZooKeeper remote JMX ssl set to false

ZooKeeper remote JMX log4j set to true

Using config: /zookeeper/bin/../conf/zoo.cfg

Mode: follower # healthy, follower

/ #

root@master1:/data/web/zookeeper/pv# kubectl exec -it zookeeper2-fb6896f66-tgl7l sh -n ns-uat

/ # /zookeeper/bin/zkServer.sh status

ZooKeeper JMX enabled by default

ZooKeeper remote JMX Port set to 9010

ZooKeeper remote JMX authenticate set to false

ZooKeeper remote JMX ssl set to false

ZooKeeper remote JMX log4j set to true

Using config: /zookeeper/bin/../conf/zoo.cfg

Mode: follower # healthy, follower

/ # exit

root@master1:/data/web/zookeeper/pv# kubectl exec -it zookeeper3-5d7f556575-g4qhd sh -n ns-uat

/ # /zookeeper/bin/zkServer.sh status

ZooKeeper JMX enabled by default

ZooKeeper remote JMX Port set to 9010

ZooKeeper remote JMX authenticate set to false

ZooKeeper remote JMX ssl set to false

ZooKeeper remote JMX log4j set to true

Using config: /zookeeper/bin/../conf/zoo.cfg

Mode: leader # healthy, leader

/ #
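The per-pod check above can be collected into one pass. This is a sketch rather than the procedure used here: the function names `zk_mode` and `zk_cluster_modes` are hypothetical, the `app=zookeeper` label and the `/zookeeper/bin/zkServer.sh` path are taken from the manifests and transcripts above, and the pods are assumed to be reachable with `kubectl exec`.

```shell
# Extract the role (leader, follower, standalone) from
# `zkServer.sh status` output read on stdin.
zk_mode() {
  sed -n 's/^Mode: *\([a-z]*\).*$/\1/p'
}

# Print "<pod> <mode>" for every ZooKeeper pod in the namespace.
zk_cluster_modes() {
  local ns="${1:-ns-uat}" pod
  for pod in $(kubectl get pod -n "$ns" -l app=zookeeper -o name); do
    printf '%s %s\n' "$pod" \
      "$(kubectl exec -n "$ns" "$pod" -- /zookeeper/bin/zkServer.sh status 2>/dev/null | zk_mode)"
  done
}
```

A healthy three-node ensemble should report exactly one leader and two followers.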

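3.5 Test deleting the ZK leader

- Delete the leader pod, verify that the remaining two pods elect a new leader automatically, then verify that the deleted pod, once recreated, rejoins the ZooKeeper cluster as a follower.

- zookeeper3-5d7f556575-g4qhd is the leader; delete it and observe the result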
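This test relies on ZooKeeper's majority quorum: an ensemble keeps working as long as more than half of its servers are up, which is why two surviving pods out of three can still elect a leader. The arithmetic can be sketched as follows; the function names are hypothetical.

```shell
# Smallest number of servers that forms a majority in an ensemble of N.
quorum_size() {
  echo $(( $1 / 2 + 1 ))
}

# Number of servers that can fail while a majority is still available.
tolerated_failures() {
  echo $(( $1 - ($1 / 2 + 1) ))
}
```

With three servers the quorum is two, so exactly one failure is tolerated; a fourth server raises the quorum to three without tolerating any additional failure.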

root@master1:/data/web/zookeeper/pv# kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

ns-uat zookeeper1-7495b46dd9-p8jtn 1/1 Running 0 45m

ns-uat zookeeper2-fb6896f66-tgl7l 1/1 Running 0 45m

ns-uat zookeeper3-5d7f556575-g4qhd 1/1 Running 0 45m

3.5.1 Delete the leader pod

root@master1:/data/web/zookeeper/pv# kubectl delete pod zookeeper3-5d7f556575-g4qhd -n ns-uat

pod "zookeeper3-5d7f556575-g4qhd" deleted

3.5.2 The pod is recreated

root@master1:/data/web/zookeeper/pv# kubectl get pod -A

NAMESPACE NAME READY STATUS RESTARTS AGE

ns-uat zookeeper1-7495b46dd9-p8jtn 1/1 Running 0 47m

ns-uat zookeeper2-fb6896f66-tgl7l 1/1 Running 0 47m

ns-uat zookeeper3-5d7f556575-2wslc 0/1 ContainerCreating 0 13s

3.5.3 Verify the pods

# zookeeper3-5d7f556575-2wslc rejoins as a follower

root@master1:/data/web/zookeeper/pv# kubectl exec -it zookeeper3-5d7f556575-2wslc sh -n ns-uat

/ # /zookeeper/bin/zkServer.sh status

ZooKeeper JMX enabled by default

ZooKeeper remote JMX Port set to 9010

ZooKeeper remote JMX authenticate set to false

ZooKeeper remote JMX ssl set to false

ZooKeeper remote JMX log4j set to true

Using config: /zookeeper/bin/../conf/zoo.cfg

Mode: follower # now a follower

/ # exit

# zookeeper1-7495b46dd9-p8jtn becomes the leader

root@master1:/data/web/zookeeper/pv# kubectl exec -it zookeeper1-7495b46dd9-p8jtn sh -n ns-uat

/ # /zookeeper/bin/zkServer.sh status

ZooKeeper JMX enabled by default

ZooKeeper remote JMX Port set to 9010

ZooKeeper remote JMX authenticate set to false

ZooKeeper remote JMX ssl set to false

ZooKeeper remote JMX log4j set to true

Using config: /zookeeper/bin/../conf/zoo.cfg

Mode: leader # now the leader

/ #

3.5.4 Verify access to ZooKeeper from outside the cluster

3.6 Deploy the dubbo services

3.6.1 consumer Dockerfile

root@master1:/data/web/dubbo/Dockerfile/consumer# cat Dockerfile

#Dubbo consumer

FROM harbor.haostack.com/k8s/jack_k8s_base-jdk:v8.212

MAINTAINER jack liu "[email protected]"

RUN yum install file -y

RUN mkdir -p /apps/dubbo/consumer && useradd tomcat

ADD dubbo-demo-consumer-2.1.5 /apps/dubbo/consumer

ADD run_java.sh /apps/dubbo/consumer/bin

RUN chown tomcat.tomcat /apps -R

RUN chmod a+x /apps/dubbo/consumer/bin/*.sh

CMD ["/apps/dubbo/consumer/bin/run_java.sh"]

root@master1:/data/web/dubbo/Dockerfile/consumer#

- dubbo.properties configuration: the registry address must use the ZooKeeper server names

root@master1:/data/web/dubbo/Dockerfile/consumer/dubbo-demo-consumer-2.1.5/conf# vim /data/web/dubbo/Dockerfile/consumer/dubbo-demo-consumer-2.1.5/conf/dubbo.properties

##

# Copyright 1999-2011 Alibaba Group.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

##

dubbo.container=log4j,spring

dubbo.application.name=demo-consumer

dubbo.application.owner=

#dubbo.registry.address=multicast://224.5.6.7:1234

dubbo.registry.address=zookeeper://zookeeper1.ns-uat.svc.haostack.com:2181 | zookeeper://zookeeper2.ns-uat.svc.haostack.com:2181 | zookeeper://zookeeper3.ns-uat.svc.haostack.com:2181

#dubbo.registry.address=redis://127.0.0.1:6379

#dubbo.registry.address=dubbo://127.0.0.1:9090

dubbo.monitor.protocol=registry

dubbo.log4j.file=logs/dubbo-demo-consumer.log

dubbo.log4j.level=WARN

~

"/data/web/dubbo/Dockerfile/consumer/dubbo-demo-consumer-2.1.5/conf/dubbo.properties" [dos] 25L, 1141C

3.6.2 dubboadmin Dockerfile

root@master1:/data/web/dubbo/Dockerfile/dubboadmin# cat Dockerfile

#Dubbo dubboadmin

FROM harbor.haostack.com/k8s/jack_k8s_base-tomcat:v8.5.43

MAINTAINER jack liu "[email protected]"

RUN yum install unzip -y

ADD server.xml /apps/tomcat/conf/server.xml

ADD logging.properties /apps/tomcat/conf/logging.properties

ADD catalina.sh /apps/tomcat/bin/catalina.sh

ADD run_tomcat.sh /apps/tomcat/bin/run_tomcat.sh

ADD dubboadmin.war /data/tomcat/webapps/dubboadmin.war

RUN cd /data/tomcat/webapps && unzip dubboadmin.war && rm -rf dubboadmin.war && chown -R tomcat.tomcat /data /apps

EXPOSE 8080 8443

CMD ["/apps/tomcat/bin/run_tomcat.sh"]

root@master1:/data/web/dubbo/Dockerfile/dubboadmin#

- dubbo.properties configuration: the registry address must use the ZooKeeper server name

root@master1:/data/web/dubbo/Dockerfile/dubboadmin/dubboadmin/WEB-INF# cat dubbo.properties

dubbo.registry.address=zookeeper://zookeeper1.ns-uat.svc.haostack.com:2181

dubbo.admin.root.password=root

dubbo.admin.guest.password=guest

root@master1:/data/web/dubbo/Dockerfile/dubboadmin/dubboadmin/WEB-INF#

3.6.3 provider Dockerfile

root@master1:/data/web/dubbo/Dockerfile/provider# cat Dockerfile

#Dubbo provider

FROM harbor.haostack.com/k8s/jack_k8s_base-jdk:v8.212

MAINTAINER jack liu "[email protected]"

RUN yum install file nc -y

RUN mkdir -p /apps/dubbo/provider && useradd tomcat

ADD dubbo-demo-provider-2.1.5/ /apps/dubbo/provider

ADD run_java.sh /apps/dubbo/provider/bin

RUN chown tomcat.tomcat /apps -R

RUN chmod a+x /apps/dubbo/provider/bin/*.sh

CMD ["/apps/dubbo/provider/bin/run_java.sh"]

root@master1:/data/web/dubbo/Dockerfile/provider#

- dubbo.properties configuration: the registry address must use the ZooKeeper server names

- The dubbo service port is 20880

root@master1:/data/web/dubbo/Dockerfile/provider/dubbo-demo-provider-2.1.5/conf# cat dubbo.properties

##

# Copyright 1999-2011 Alibaba Group.

#

# Licensed under the Apache License, Version 2.0 (the "License");

# you may not use this file except in compliance with the License.

# You may obtain a copy of the License at

#

# http://www.apache.org/licenses/LICENSE-2.0

#

# Unless required by applicable law or agreed to in writing, software

# distributed under the License is distributed on an "AS IS" BASIS,

# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

# See the License for the specific language governing permissions and

# limitations under the License.

##

dubbo.container=log4j,spring

dubbo.application.name=demo-provider

dubbo.application.owner=

#dubbo.registry.address=multicast://224.5.6.7:1234

dubbo.registry.address=zookeeper://zookeeper1.ns-uat.svc.haostack.com:2181 | zookeeper://zookeeper2.ns-uat.svc.haostack.com:2181 | zookeeper://zookeeper3.ns-uat.svc.haostack.com:2181

#dubbo.registry.address="zookeeper://zookeeper1.linux36.svc.linux36.local:2181,"

#dubbo.registry.address=zookeeper://zookeeper1.linux36.svc.linux36.local:2181 | zookeeper2.linux36.svc.linux36.local:2181 | zookeeper3.linux36.svc.linux36.local:2181

#dubbo.registry.address=redis://127.0.0.1:6379

#dubbo.registry.address=dubbo://127.0.0.1:9090

dubbo.monitor.protocol=registry

dubbo.protocol.name=dubbo

# service port

dubbo.protocol.port=20880

dubbo.log4j.file=logs/dubbo-demo-provider.log

dubbo.log4j.level=WARN

root@master1:/data/web/dubbo/Dockerfile/provider/dubbo-demo-provider-2.1.5/conf#
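The registry entries above use in-cluster Service DNS names of the form `<service>.<namespace>.svc.<cluster-domain>`; in this cluster the domain appears to be `haostack.com` rather than the common default `cluster.local`. As an illustration of how the value is assembled, here is a small sketch; the helper name `dubbo_registry_address` is hypothetical and port 2181 is the client port from the ZooKeeper Services.

```shell
# Build a dubbo.registry.address value from ZooKeeper Service names.
# Usage: dubbo_registry_address <namespace> <cluster-domain> <service>...
dubbo_registry_address() {
  local ns="$1" domain="$2"
  shift 2
  local out="" svc
  for svc in "$@"; do
    # Entries are joined with " | ", matching the format used in this file.
    out="${out:+$out | }zookeeper://${svc}.${ns}.svc.${domain}:2181"
  done
  printf '%s\n' "$out"
}
```

Calling `dubbo_registry_address ns-uat haostack.com zookeeper1 zookeeper2 zookeeper3` reproduces the address configured for the provider and the consumer.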

3.6.4 Build the images and push them to the local Harbor

root@master1:/data/web/dubbo/Dockerfile/provider# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

harbor.haostack.com/k8s/dubbo-demo-provider v1 13a8ef510c89 47 seconds ago 1.06GB

harbor.haostack.com/k8s/dubbo-demo-consumer v1 3cf30ab56d99 3 minutes ago 1.06GB

harbor.haostack.com/k8s/dubboadmin v1 ce9f620f7158 6 minutes ago 1.13GB
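The build and push commands themselves are not shown for these three images. One way they could be run is sketched below, using only `docker build -t` and `docker push`; the helper name `build_and_push` and the `DRY_RUN` switch are assumptions added for illustration, and the image tags are the ones listed above.

```shell
# Build an image from a Dockerfile directory and push it to the registry.
# With DRY_RUN=1 the docker commands are printed instead of executed.
build_and_push() {
  local context="$1" image="$2"
  local run=""
  [ "${DRY_RUN:-0}" = "1" ] && run="echo"
  $run docker build -t "$image" "$context" && $run docker push "$image"
}
```

For example, `build_and_push /data/web/dubbo/Dockerfile/provider harbor.haostack.com/k8s/dubbo-demo-provider:v1`, repeated for the consumer and dubboadmin directories.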

3.6.5 yaml configuration

- consumer

root@master1:/data/web/dubbo/yaml/consumer# cat consumer.yaml

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

labels:

app: uat-consumer

name: uat-consumer-deployment

namespace: ns-uat

spec:

replicas: 1

selector:

matchLabels:

app: uat-consumer

template:

metadata:

labels:

app: uat-consumer

spec:

containers:

- name: uat-consumer-container

image: harbor.haostack.com/k8s/dubbo-demo-consumer:v1

#command: ["/apps/tomcat/bin/run_tomcat.sh"]

#imagePullPolicy: IfNotPresent

imagePullPolicy: Always

ports:

- containerPort: 20880

protocol: TCP

name: http

---

kind: Service

apiVersion: v1

metadata:

labels:

app: uat-consumer

name: uat-consumer-server

namespace: ns-uat

spec:

type: NodePort

ports:

- name: http

port: 20880

protocol: TCP

targetPort: 20880

#nodePort: 30001

selector:

app: uat-consumer

root@master1:/data/web/dubbo/yaml/consumer#

- dubboadmin

root@master1:/data/web/dubbo/yaml/dubboadmin# cat dubboadmin.yaml

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

labels:

app: uat-dubboadmin

name: uat-dubboadmin-deployment

namespace: ns-uat

spec:

replicas: 1

selector:

matchLabels:

app: uat-dubboadmin

template:

metadata:

labels:

app: uat-dubboadmin

spec:

containers:

- name: uat-dubboadmin-container

image: harbor.haostack.com/k8s/dubboadmin:v1

#command: ["/apps/tomcat/bin/run_tomcat.sh"]

#imagePullPolicy: IfNotPresent

imagePullPolicy: Always

ports:

- containerPort: 8080

protocol: TCP

name: http

---

kind: Service

apiVersion: v1

metadata:

labels:

app: uat-dubboadmin

name: uat-dubboadmin-service

namespace: ns-uat

spec:

type: NodePort

ports:

- name: http

port: 80

protocol: TCP

targetPort: 8080

nodePort: 20080

selector:

app: uat-dubboadmin

root@master1:/data/web/dubbo/yaml/dubboadmin#

- provider

root@master1:/data/web/dubbo/yaml/provider# cat provider.yaml

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

labels:

app: uat-provider

name: uat-provider-deployment

namespace: ns-uat

spec:

replicas: 1

selector:

matchLabels:

app: uat-provider

template:

metadata:

labels:

app: uat-provider

spec:

containers:

- name: uat-provider-container

image: harbor.haostack.com/k8s/dubbo-demo-provider:v1

#command: ["/apps/tomcat/bin/run_tomcat.sh"]

#imagePullPolicy: IfNotPresent

imagePullPolicy: Always

ports:

- containerPort: 20880

protocol: TCP

name: http

---

kind: Service

apiVersion: v1

metadata:

labels:

app: uat-provider

name: uat-provider-spec

namespace: ns-uat

spec:

type: NodePort

ports:

- name: http

port: 20880

protocol: TCP

targetPort: 20880

#nodePort: 30001

selector:

app: uat-provider

root@master1:/data/web/dubbo/yaml/provider#

3.7 Create the pods

- kubectl apply -f consumer.yaml

- kubectl apply -f dubboadmin.yaml

- kubectl apply -f provider.yaml

3.8 Check the pods: all are running normally

root@master1:/data/web/nginx-web1# kubectl get pod -A -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE

ns-uat uat-consumer-deployment-8475fd7c4f-jf4s6 1/1 Running 0 4m9s 10.20.5.66 172.16.62.209

ns-uat uat-dubboadmin-deployment-58f7878c59-4jsbb 1/1 Running 0 88m 10.20.3.107 172.16.62.208

ns-uat uat-nginx-deployment-689c84c954-x8qbc 1/1 Running 3 17h 10.20.3.77 172.16.62.208

ns-uat uat-provider-deployment-9996494cb-msds9 1/1 Running 0 11h 10.20.3.103 172.16.62.208

3.9 Check the Services: all are normal

root@master1:/data/web/nginx-web1# kubectl get svc -n ns-uat

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

uat-consumer-server NodePort 172.28.159.19 20880:32470/TCP 11h

uat-dubboadmin-service NodePort 172.28.50.24 80:20080/TCP 89m

uat-nginx-service NodePort 172.28.26.76 80:30016/TCP,443:30443/TCP 17h

uat-provider-spec NodePort 172.28.120.219 20880:37977/TCP 11h

uat-tomcat-app1-service NodePort 172.28.82.181 80:30017/TCP 17h

zookeeper ClusterIP 172.28.9.143 2181/TCP 14h

zookeeper1 NodePort 172.28.131.135 2181:32181/TCP,2888:25530/TCP,3888:34631/TCP 14h

zookeeper2 NodePort 172.28.225.240 2181:32182/TCP,2888:31239/TCP,3888:20156/TCP 14h

zookeeper3 NodePort 172.28.168.172 2181:32183/TCP,2888:26213/TCP,3888:34619/TCP 14h

root@master1:/data/web/nginx-web1#

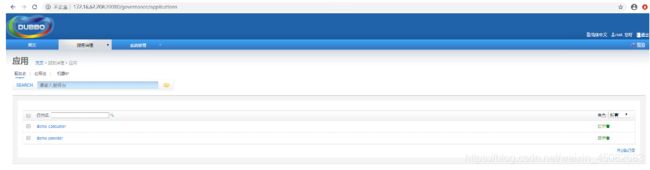

3.10 Verification

- Check the dubboadmin service

- 20080 is the NodePort, defined in the yaml

- The account credentials are defined in dubbo.properties

root@master1:/data/web/dubbo/dockerfile/dubboadmin/dubboadmin/WEB-INF# cat dubbo.properties

dubbo.registry.address=zookeeper://zookeeper1.ns-uat.svc.haostack.com:2181

dubbo.admin.root.password=root

dubbo.admin.guest.password=guest

root@master1:/data/web/dubbo/dockerfile/dubboadmin/dubboadmin/WEB-INF# pwd

/data/web/dubbo/dockerfile/dubboadmin/dubboadmin/WEB-INF

root@master1:/data/web/dubbo/dockerfile/dubboadmin/dubboadmin/WEB-INF#

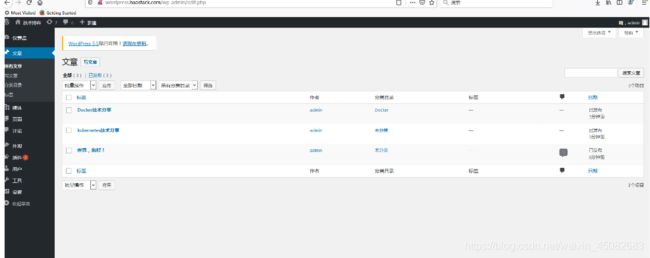

- Log in to dubboadmin

- View the services

- Verify the consumer

- Enter the consumer pod and check the log

- kubectl exec -it uat-consumer-deployment-8475fd7c4f-jf4s6 sh -n ns-uat

sh-4.2# tail -f stdout.log

[09:50:40] Hello world457, response form provider: 10.20.3.103:20880

[09:50:42] Hello world458, response form provider: 10.20.3.103:20880

[09:50:44] Hello world459, response form provider: 10.20.3.103:20880

[09:50:46] Hello world460, response form provider: 10.20.3.103:20880

[09:50:48] Hello world461, response form provider: 10.20.3.103:20880

[09:50:50] Hello world462, response form provider: 10.20.3.103:20880

[09:50:52] Hello world463, response form provider: 10.20.3.103:20880

[09:50:54] Hello world464, response form provider: 10.20.3.103:20880

[09:50:56] Hello world465, response form provider: 10.20.3.103:20880

[09:50:58] Hello world466, response form provider: 10.20.3.103:20880

[09:51:00] Hello world467, response form provider: 10.20.3.103:20880

^C

sh-4.2# ip a

1: lo: mtu 65536 qdisc noqueue state UNKNOWN group default qlen 1000

link/loopback 00:00:00:00:00:00 brd 00:00:00:00:00:00

inet 127.0.0.1/8 scope host lo

valid_lft forever preferred_lft forever

3: eth0@if44: mtu 1450 qdisc noqueue state UP group default

link/ether 0a:51:5a:a2:64:d2 brd ff:ff:ff:ff:ff:ff link-netnsid 0

inet 10.20.5.66/24 scope global eth0

valid_lft forever preferred_lft forever

sh-4.2#

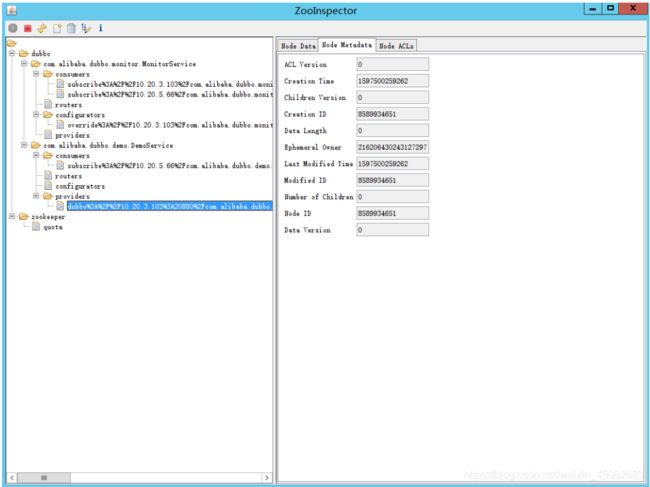

- Test by connecting with the ZooInspector client

- The consumer and provider information is visible

4 Deploy the jenkins service

4.1 Prepare the files

root@master1:/data/web/jenkins/dockerfile# tree

.

├── build-command.sh

├── Dockerfile

├── jenkins-2.164.3.war

├── jenkins-2.190.1.war

├── jenkins-2.222.1.war

└── run_jenkins.sh

0 directories, 6 files

root@master1:/data/web/jenkins/dockerfile#

4.2 Create the directories on the NFS server

- Create two directories on the NFS server to back the PVs

/nfsdata/k8sdata/jenkins/jenkins-data

/nfsdata/k8sdata/jenkins/jenkins-root-data

4.3 jenkins dockerfile

root@master1:/data/web/jenkins/dockerfile# cat Dockerfile

#Jenkins Version 2.222.1

FROM harbor.haostack.com/k8s/jack_k8s_base-jdk:v8.212

MAINTAINER jack liu

ADD jenkins-2.222.1.war /apps/jenkins/

ADD run_jenkins.sh /usr/bin/

EXPOSE 8080

CMD ["/usr/bin/run_jenkins.sh"]

root@master1:/data/web/jenkins/dockerfile#

4.3.1 Build the jenkins image

docker build -t harbor.haostack.com/k8s/jenkins:v2.222.1 .

docker push harbor.haostack.com/k8s/jenkins:v2.222.1

# Check the image

root@master1:/data/web/jenkins/dockerfile# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

harbor.haostack.com/k8s/jenkins v2.222.1 992eeacb9bcc 46 minutes ago 1.01GB

4.4 Create the PVs and PVCs

- A PVC can only be expanded; shrinking it is not advisable

- Create the PVs

kubectl apply -f jenkins-persistentvolume.yaml

root@master1:/# kubectl get pv -A

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE

jenkins-datadir-pv 100Gi RWO Retain Available 2m59s

jenkins-root-datadir-pv 100Gi RWO Retain Available 2m59s

- Create the PVCs

kubectl apply -f jenkins-persistentvolumeclaim.yaml

root@master1:/# kubectl get pvc -A

NAMESPACE NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

ns-uat jenkins-datadir-pvc Bound jenkins-datadir-pv 100Gi RWO 14s

ns-uat jenkins-root-data-pvc Bound jenkins-root-datadir-pv 100Gi RWO 14s

4.4.1 jenkins yaml

root@master1:/data/web/jenkins/yaml# cat jenkins.yaml

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

labels:

app: uat-jenkins

name: uat-jenkins-deployment

namespace: ns-uat

spec:

replicas: 1

selector:

matchLabels:

app: uat-jenkins

template:

metadata:

labels:

app: uat-jenkins

spec:

containers:

- name: uat-jenkins-container

image: harbor.haostack.com/k8s/jenkins:v2.222.1

#imagePullPolicy: IfNotPresent

imagePullPolicy: Always

ports:

- containerPort: 8080

protocol: TCP

name: http

volumeMounts:

- mountPath: "/apps/jenkins/jenkins-data/"

name: jenkins-datadir-uat

- mountPath: "/root/.jenkins"

name: jenkins-root-datadir

volumes:

- name: jenkins-datadir-uat

persistentVolumeClaim:

claimName: jenkins-datadir-pvc

- name: jenkins-root-datadir

persistentVolumeClaim:

claimName: jenkins-root-data-pvc

---

kind: Service

apiVersion: v1

metadata:

labels:

app: uat-jenkins

name: uat-jenkins-service

namespace: ns-uat

spec:

type: NodePort

ports:

- name: http

port: 80

protocol: TCP

targetPort: 8080

nodePort: 38080

selector:

app: uat-jenkins

root@master1:/data/web/jenkins/yaml#

4.5 Create jenkins

kubectl apply -f jenkins.yaml

4.5.1 Check the pod

root@master1:/# kubectl get pod -A -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE

ns-uat uat-jenkins-deployment-645bb4db59-xnh4g 1/1 Running 0 106s 10.20.3.108 172.16.62.208

4.5.2 Test

Install plugins

5. Deploy the Redis service

5.1 Prepare the files

root@master1:/data/web/redis/redis# tree

.

├── build-command.sh

├── Dockerfile

├── redis-4.0.14.tar.gz

├── redis.conf

└── run_redis.sh

0 directories, 5 files

root@master1:/data/web/redis/redis#

5.2 Build the Redis image

Successfully built b919315d6308

Successfully tagged harbor.haostack.com/k8s/redis:v1

The push refers to repository [harbor.haostack.com/k8s/redis]

root@master1:/data/web/redis/redis# docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

harbor.haostack.com/k8s/redis v1 b919315d6308 5 minutes ago 614MB

5.2.1 Create the PV and PVC

- Create the directory /nfsdata/k8sdata/redis/redis-datadir-1 on the NFS server

- Create the PV

# yaml file

root@master1:/data/web/redis/yaml/pv# cat redis-persistentvolume.yaml

---

apiVersion: v1

kind: PersistentVolume

metadata:

name: redis-datadir-pv-1

namespace: ns-uat

spec:

capacity:

storage: 10Gi

accessModes:

- ReadWriteOnce

nfs:

path: /nfsdata/k8sdata/redis/redis-datadir-1

server: 172.16.62.24

root@master1:/data/web/redis/yaml/pv#

# Create the PV

kubectl apply -f redis-persistentvolume.yaml

# Check the redis PV

root@master1:/data/web/redis/yaml/pv# kubectl get pv -A

NAME CAPACITY ACCESS MODES RECLAIM POLICY STATUS CLAIM STORAGECLASS REASON AGE

jenkins-datadir-pv 100Gi RWO Retain Bound ns-uat/jenkins-datadir-pvc 53m

jenkins-root-datadir-pv 100Gi RWO Retain Bound ns-uat/jenkins-root-data-pvc 53m

redis-datadir-pv-1 10Gi RWO Retain Bound ns-uat/redis-datadir-pvc-1 12s

zookeeper-datadir-pv-1 20Gi RWO Retain Bound ns-uat/zookeeper-datadir-pvc-1 16h

zookeeper-datadir-pv-2 20Gi RWO Retain Bound ns-uat/zookeeper-datadir-pvc-2 16h

zookeeper-datadir-pv-3 20Gi RWO Retain Bound ns-uat/zookeeper-datadir-pvc-3 16h

- Create the PVC

# PVC yaml

root@master1:/data/web/redis/yaml/pv# cat redis-persistentvolumeclaim.yaml

---

apiVersion: v1

kind: PersistentVolumeClaim

metadata:

name: redis-datadir-pvc-1

namespace: ns-uat

spec:

volumeName: redis-datadir-pv-1

accessModes:

- ReadWriteOnce

resources:

requests:

storage: 10Gi

root@master1:/data/web/redis/yaml/pv#

# Create the PVC

kubectl apply -f redis-persistentvolumeclaim.yaml

# Check the redis PVC

root@master1:/data/web/redis/yaml/pv# kubectl get pvc -A

NAMESPACE NAME STATUS VOLUME CAPACITY ACCESS MODES STORAGECLASS AGE

ns-uat jenkins-datadir-pvc Bound jenkins-datadir-pv 100Gi RWO 50m

ns-uat jenkins-root-data-pvc Bound jenkins-root-datadir-pv 100Gi RWO 50m

ns-uat redis-datadir-pvc-1 Bound redis-datadir-pv-1 10Gi RWO 11s

ns-uat zookeeper-datadir-pvc-1 Bound zookeeper-datadir-pv-1 20Gi RWO 16h

ns-uat zookeeper-datadir-pvc-2 Bound zookeeper-datadir-pv-2 20Gi RWO 16h

ns-uat zookeeper-datadir-pvc-3 Bound zookeeper-datadir-pv-3 20Gi RWO 16h

5.2.2 Redis yaml

root@master1:/data/web/redis/yaml# cat redis.yaml

kind: Deployment

#apiVersion: extensions/v1beta1

apiVersion: apps/v1

metadata:

labels:

app: devops-redis

name: deploy-devops-redis

namespace: ns-uat

spec:

replicas: 1

selector:

matchLabels:

app: devops-redis

template:

metadata:

labels:

app: devops-redis

spec:

containers:

- name: redis-container

image: harbor.haostack.com/k8s/redis:v1

imagePullPolicy: Always

volumeMounts:

- mountPath: "/data/redis-data/"

name: redis-datadir

volumes:

- name: redis-datadir

persistentVolumeClaim:

claimName: redis-datadir-pvc-1

---

kind: Service

apiVersion: v1

metadata:

labels:

app: devops-redis

name: srv-devops-redis

namespace: ns-uat

spec:

type: NodePort

ports:

- name: http

port: 6379

targetPort: 6379

nodePort: 36379

selector:

app: devops-redis

sessionAffinity: ClientIP

sessionAffinityConfig:

clientIP:

timeoutSeconds: 10800

root@master1:/data/web/redis/yaml#

# Create the pod

kubectl apply -f redis.yaml

# Check the pod

root@master1:/# kubectl get pod -A -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE

ns-uat deploy-devops-redis-7d4679cb9-6jlkd 1/1 Running 0 52s 10.20.5.67 172.16.62.209

# Check the redis Service

root@master1:/data/web/redis/yaml# kubectl get svc -n ns-uat

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

srv-devops-redis NodePort 172.28.47.223 6379:36379/TCP 19m

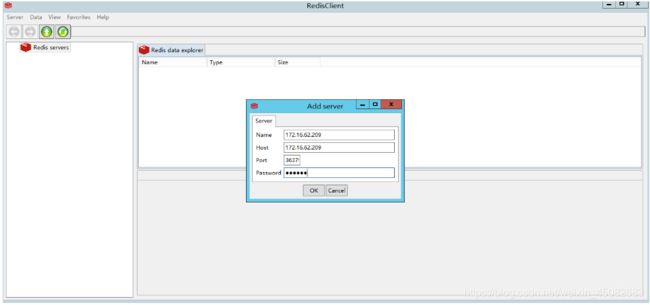

5.3 Test

- Redis client tool

172.16.62.209:36379> get key1

"value1"

172.16.62.209:36379>

- Log in

root@ha1:/etc/haproxy# redis-cli -h 172.16.62.209 -p 36379

172.16.62.209:36379>

172.16.62.209:36379>

172.16.62.209:36379>

172.16.62.209:36379> info

NOAUTH Authentication required.

172.16.62.209:36379> info

NOAUTH Authentication required.

172.16.62.209:36379> AUTH 123456

OK

172.16.62.209:36379> info

# Server

redis_version:4.0.14

redis_git_sha1:00000000

redis_git_dirty:0

redis_build_id:d24f04da9a60c426

redis_mode:standalone

os:Linux 4.15.0-112-generic x86_64

arch_bits:64

multiplexing_api:epoll

atomicvar_api:atomic-builtin

gcc_version:4.8.5

process_id:7

run_id:58a23adc4162e769a69c320f9d9aae5bf764ac43

tcp_port:6379

uptime_in_seconds:243

uptime_in_days:0

hz:10

lru_clock:3714380

executable:/usr/sbin/redis-server

config_file:/usr/local/redis/redis.conf

# Clients

connected_clients:1

client_longest_output_list:0

client_biggest_input_buf:0

blocked_clients:0

# Memory

used_memory:849472

used_memory_human:829.56K

used_memory_rss:4161536

used_memory_rss_human:3.97M

used_memory_peak:849472

used_memory_peak_human:829.56K

used_memory_peak_perc:100.12%

used_memory_overhead:836246

used_memory_startup:786608

used_memory_dataset:13226

used_memory_dataset_perc:21.04%

total_system_memory:4136685568

total_system_memory_human:3.85G

used_memory_lua:37888

used_memory_lua_human:37.00K

maxmemory:0

maxmemory_human:0B

maxmemory_policy:noeviction

mem_fragmentation_ratio:4.90

mem_allocator:jemalloc-4.0.3

active_defrag_running:0

lazyfree_pending_objects:0

# Persistence

loading:0

rdb_changes_since_last_save:0

rdb_bgsave_in_progress:0

rdb_last_save_time:1597549657

rdb_last_bgsave_status:ok

rdb_last_bgsave_time_sec:-1

rdb_current_bgsave_time_sec:-1

rdb_last_cow_size:0

aof_enabled:0

aof_rewrite_in_progress:0

aof_rewrite_scheduled:0

aof_last_rewrite_time_sec:-1

aof_current_rewrite_time_sec:-1

aof_last_bgrewrite_status:ok

aof_last_write_status:ok

aof_last_cow_size:0

# Stats

total_connections_received:1

total_commands_processed:1

instantaneous_ops_per_sec:0

total_net_input_bytes:85

total_net_output_bytes:107

instantaneous_input_kbps:0.00

instantaneous_output_kbps:0.00

rejected_connections:0

sync_full:0

sync_partial_ok:0

sync_partial_err:0

expired_keys:0

expired_stale_perc:0.00

expired_time_cap_reached_count:0

evicted_keys:0

keyspace_hits:0

keyspace_misses:0

pubsub_channels:0

pubsub_patterns:0

latest_fork_usec:0

migrate_cached_sockets:0

slave_expires_tracked_keys:0

active_defrag_hits:0

active_defrag_misses:0

active_defrag_key_hits:0

active_defrag_key_misses:0

# Replication

role:master

connected_slaves:0

master_replid:a8b6fec7fa70667c954b62f9bf0f4fc092aba1a3

master_replid2:0000000000000000000000000000000000000000

master_repl_offset:0

second_repl_offset:-1

repl_backlog_active:0

repl_backlog_size:1048576

repl_backlog_first_byte_offset:0

repl_backlog_histlen:0

# CPU

used_cpu_sys:0.24

used_cpu_user:0.16

used_cpu_sys_children:0.00

used_cpu_user_children:0.00

# Cluster

cluster_enabled:0

# Keyspace

172.16.62.209:36379>

- Delete the redis pod and check whether the data survives

root@master1:/# kubectl delete pod deploy-devops-redis-7d4679cb9-6jlkd -n ns-uat

pod "deploy-devops-redis-7d4679cb9-6jlkd" deleted

# A new pod is created automatically

root@master1:/# kubectl get pod -A -o wide

NAMESPACE NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

ns-uat deploy-devops-redis-7d4679cb9-srrzf 1/1 Running 0 2m46s 10.20.5.68 172.16.62.209

# The data is still there

root@ha1:/etc/haproxy# redis-cli -h 172.16.62.209 -p 36379

172.16.62.209:36379> AUTH 123456

OK

172.16.62.209:36379> get key1

"value1"

172.16.62.209:36379>

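6. Deploy MySQL with one master and multiple slaves

- Implemented with a StatefulSet:

When Pods are scheduled and run, an application that needs no stable identifiers and no ordered deployment, deletion, or scaling should be deployed with a controller that manages a set of stateless replicas. Deployment or ReplicaSet is the better fit for stateless services, while StatefulSet is meant for stateful services such as MySQL or MongoDB clusters.

- A StatefulSet is essentially a variant of Deployment and became GA in v1.9. It exists to solve the problems of stateful services: the Pods it manages have fixed Pod names and a defined start and stop order. In a StatefulSet the Pod name serves as the network identity (hostname), and shared storage is also required.

A Deployment is paired with a regular Service, whereas a StatefulSet is paired with a headless Service. A headless Service differs from a regular Service in that it has no Cluster IP; resolving its name returns the endpoint list of all Pods behind that headless Service.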

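StatefulSet features:

-> Gives each pod a fixed, unique network identifier

-> Gives each pod fixed, persistent external storage

-> Deploys and scales pods in order

-> Deletes and terminates pods in order

-> Performs automated rolling updates of pods in order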
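To make the ordering guarantees concrete, here is a small sketch that prints Pod names in the order a StatefulSet would create them (ascending ordinal) and terminate them (descending ordinal). It assumes the default `OrderedReady` pod management policy, and the helper name `sts_pod_order` is hypothetical.

```shell
# Print Pod names in creation order (0..N-1) or termination order (N-1..0).
# Assumption: the StatefulSet uses the default OrderedReady pod management policy.
sts_pod_order() {
  local action="$1" name="$2" replicas="$3" i
  case "$action" in
    create)
      i=0
      while [ "$i" -lt "$replicas" ]; do
        echo "${name}-${i}"
        i=$((i + 1))
      done
      ;;
    delete)
      i=$((replicas - 1))
      while [ "$i" -ge 0 ]; do
        echo "${name}-${i}"
        i=$((i - 1))
      done
      ;;
    *)
      echo "usage: sts_pod_order create|delete NAME REPLICAS" >&2
      return 1
      ;;
  esac
}

sts_pod_order create mysql 3
sts_pod_order delete mysql 3
```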

6.1 NFS directory mount

total 0

drwxr-xr-x 2 root root 6 Aug 16 13:56 mysql-datadir-1

drwxr-xr-x 2 root root 6 Aug 16 13:56 mysql-datadir-2

drwxr-xr-x 2 root root 6 Aug 16 13:56 mysql-datadir-3

drwxr-xr-x 2 root root 6 Aug 16 13:56 mysql-datadir-4

drwxr-xr-x 2 root root 6 Aug 16 13:56 mysql-datadir-5

drwxr-xr-x 2 root root 6 Aug 16 13:56 mysql-datadir-6

[root@node24 mysql]#
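The listing above shows six data directories, one per PV. A minimal sketch of how such directories could be created is given below; the helper name `make_mysql_datadirs` and the `NFS_BASE` variable are hypothetical, and the real export path on the NFS server is not shown in these notes, so the sketch falls back to a scratch directory.

```shell
# Create one subdirectory per PV under an NFS data directory.
make_mysql_datadirs() {
  local base="$1" count="$2" i=1
  while [ "$i" -le "$count" ]; do
    mkdir -p "${base}/mysql-datadir-${i}"
    i=$((i + 1))
  done
}

# NFS_BASE is an assumption: set it to the real export path on the NFS server.
# It defaults to a temporary directory so the sketch is safe to run anywhere.
NFS_BASE="${NFS_BASE:-$(mktemp -d)}"
make_mysql_datadirs "$NFS_BASE" 6
ls "$NFS_BASE"
```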

6.1.1 Prepare the xtrabackup image for the StatefulSet

docker pull registry.cn-hangzhou.aliyuncs.com/hxpdocker/xtrabackup:1.0

docker tag registry.cn-hangzhou.aliyuncs.com/hxpdocker/xtrabackup:1.0 harbor.haostack.com/k8s/hxpdocker/xtrabackup:v1.0

docker push harbor.haostack.com/k8s/hxpdocker/xtrabackup:v1.0

6.1.2 Prepare the MySQL image

docker pull mysql:5.7.29

docker tag mysql:5.7.29 harbor.haostack.com/official/mysql:v5.7.29

docker push harbor.haostack.com/official/mysql:v5.7.29
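Both images follow the same pull, tag, push sequence, so it can be wrapped in a small helper. The sketch below only prints the commands (a dry run) rather than calling Docker; the function name `mirror_image_cmds` is hypothetical.

```shell
# Print the commands needed to mirror a public image into a private registry.
# Nothing is executed here; pipe the output to `sh` to actually run the commands.
mirror_image_cmds() {
  local src="$1" dst="$2"
  printf 'docker pull %s\n' "$src"
  printf 'docker tag %s %s\n' "$src" "$dst"
  printf 'docker push %s\n' "$dst"
}

mirror_image_cmds mysql:5.7.29 harbor.haostack.com/official/mysql:v5.7.29
```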

6.2 Create the PVs and PVCs

- Create the PVs

root@master1:/data/web/mysql/yaml/pv# kubectl apply -f mysql-persistentvolume.yaml

persistentvolume/mysql-datadir-pv-1 created

persistentvolume/mysql-datadir-pv-2 created

persistentvolume/mysql-datadir-pv-3 created

persistentvolume/mysql-datadir-pv-4 created

persistentvolume/mysql-datadir-pv-5 created

persistentvolume/mysql-datadir-pv-6 created

root@master1:/data/web/mysql/yaml/pv#
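The contents of mysql-persistentvolume.yaml are not shown in these notes. As a hedged sketch of what six NFS-backed PV definitions with these names might look like, the helper below renders one manifest per index. The NFS server name `nfs.example.internal`, the base path `/data/mysql`, the `10Gi` capacity, and the `ReadWriteOnce` access mode are all placeholders chosen for illustration, not values taken from the original file.

```shell
# Render a PersistentVolume manifest for one NFS data directory.
# All argument values used in the loop below are placeholders.
render_mysql_pv() {
  local index="$1" server="$2" base="$3" size="${4:-10Gi}"
  cat <<EOF
---
apiVersion: v1
kind: PersistentVolume
metadata:
  name: mysql-datadir-pv-${index}
spec:
  capacity:
    storage: ${size}
  accessModes:
    - ReadWriteOnce
  nfs:
    server: ${server}
    path: ${base}/mysql-datadir-${index}
EOF
}

# Print manifests for PVs 1 to 6; redirect the output to a file to apply it.
for i in 1 2 3 4 5 6; do
  render_mysql_pv "$i" nfs.example.internal /data/mysql
done
```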

- Create the PVCs

6.3 MySQL YAML files

root@master1:/data/web/mysql/yaml# tree

.

├── mysql-configmap.yaml

├── mysql-services.yaml

├── mysql-statefulset.yaml

└── pv

    └── mysql-persistentvolume.yaml

1 directory, 4 files

root@master1:/data/web/mysql/yaml#

#mysql-configmap.yaml

root@master1:/data/web/mysql/yaml# cat mysql-configmap.yaml

apiVersion: v1
kind: ConfigMap
metadata:
  name: mysql
  labels:
    app: mysql
data:
  master.cnf: |
    # Apply this config only on the master.
    [mysqld]
    log-bin
    log_bin_trust_function_creators=1
    lower_case_table_names=1
  slave.cnf: |
    # Apply this config only on slaves.
    [mysqld]
    super-read-only
    log_bin_trust_function_creators=1

root@master1:/data/web/mysql/yaml#
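The two fragments in this ConfigMap are selected per Pod by the init-mysql container in mysql-statefulset.yaml: ordinal 0 gets master.cnf, every other ordinal gets slave.cnf, and the server-id is 100 plus the ordinal. The sketch below restates that rule as a standalone function so it can be tried outside a Pod. The function name `mysql_role_for_host` and the one-line output format are hypothetical, and it uses POSIX parameter expansion instead of the bash regex in the original script.

```shell
# Given a StatefulSet Pod hostname such as "mysql-2", print the server-id and
# the config fragment that the init container would pick for it.
mysql_role_for_host() {
  local host="$1" ordinal
  # The ordinal is the part after the last "-"; fail if there is no "-".
  case "$host" in
    *-*) ordinal="${host##*-}" ;;
    *)   return 1 ;;
  esac
  # Fail if the suffix is empty or not purely numeric.
  case "$ordinal" in
    ''|*[!0-9]*) return 1 ;;
  esac
  # The offset of 100 avoids the reserved value server-id=0.
  if [ "$ordinal" -eq 0 ]; then
    echo "server-id=$((100 + ordinal)) config=master.cnf"
  else
    echo "server-id=$((100 + ordinal)) config=slave.cnf"
  fi
}

mysql_role_for_host mysql-0
mysql_role_for_host mysql-2
```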

#mysql-services.yaml

root@master1:/data/web/mysql/yaml# cat mysql-services.yaml

# Headless service for stable DNS entries of StatefulSet members.
apiVersion: v1
kind: Service
metadata:
  name: mysql
  labels:
    app: mysql
spec:
  ports:
  - name: mysql
    port: 3306
  clusterIP: None
  selector:
    app: mysql
---
# Client service for connecting to any MySQL instance for reads.
# For writes, you must instead connect to the master: mysql-0.mysql.
apiVersion: v1
kind: Service
metadata:
  name: mysql-read
  labels:
    app: mysql
spec:
  ports:
  - name: mysql
    port: 3306
  selector:
    app: mysql

root@master1:/data/web/mysql/yaml#
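The comments in the service file imply a simple routing rule for clients: reads may go to the `mysql-read` Service, while writes must go to the master at `mysql-0.mysql`. A minimal sketch of that rule follows; the helper name `mysql_endpoint` is hypothetical, and the short hostnames assume the client runs in the same namespace as the services.

```shell
# Return the host:port a client should use for a given kind of traffic.
mysql_endpoint() {
  case "$1" in
    read)  echo "mysql-read:3306" ;;      # load-balanced across all MySQL Pods
    write) echo "mysql-0.mysql:3306" ;;   # the master, via the headless Service
    *)
      echo "usage: mysql_endpoint read|write" >&2
      return 1
      ;;
  esac
}

mysql_endpoint read
mysql_endpoint write
```

Because `mysql-read` selects every Pod labelled `app: mysql`, read traffic can also land on the master.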

#mysql-statefulset.yaml

root@master1:/data/web/mysql/yaml# cat mysql-statefulset.yaml

apiVersion: apps/v1
kind: StatefulSet
metadata:
  name: mysql
spec:
  selector:
    matchLabels:
      app: mysql
  serviceName: mysql
  replicas: 3
  template:
    metadata:
      labels:
        app: mysql
    spec:
      initContainers:
      - name: init-mysql
        image: harbor.haostack.com/official/mysql:v5.7.29
        command:
        - bash
        - "-c"
        - |
          set -ex
          # Generate mysql server-id from pod ordinal index.
          [[ `hostname` =~ -([0-9]+)$ ]] || exit 1
          ordinal=${BASH_REMATCH[1]}
          echo [mysqld] > /mnt/conf.d/server-id.cnf
          # Add an offset to avoid reserved server-id=0 value.
          echo server-id=$((100 + $ordinal)) >> /mnt/conf.d/server-id.cnf
          # Copy appropriate conf.d files from config-map to emptyDir.
          if [[ $ordinal -eq 0 ]]; then
            cp /mnt/config-map/master.cnf /mnt/conf.d/
          else
            cp /mnt/config-map/slave.cnf /mnt/conf.d/
          fi
        volumeMounts:
        - name: conf
          mountPath: /mnt/conf.d
        - name: config-map
          mountPath: /mnt/config-map
      - name: clone-mysql
        image: harbor.haostack.com/k8s/hxpdocker/xtrabackup:v1.0
        command:
        - bash
        - "-c"
        - |
          set -ex
          # Skip the clone if data already exists.
          [[ -d /var/lib/mysql/mysql ]] && exit 0