Ceph Object Storage and Installing the Dashboard

I. Ceph RadosGW Object Storage

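Data is not placed in a directory hierarchy; all objects live at the same level in a flat address space.

Applications identify each individual data object by its unique address.

Each object can carry metadata that helps with retrieval.

The Ceph object gateway is accessed at the application level through a RESTful API: applications talk to the gateway directly over HTTP/HTTPS and can programmatically create, read, update, and delete objects and manage buckets and permissions. It is a web-based interface to the Ceph object store, and each request is authenticated with the access key and secret key of an RGW user.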

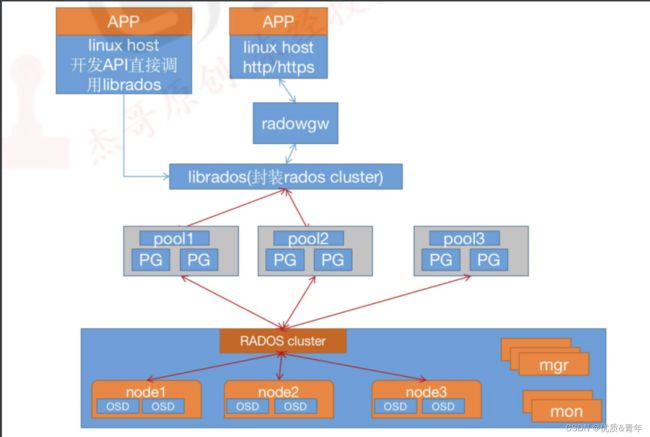

1. Introduction to RadosGW object storage

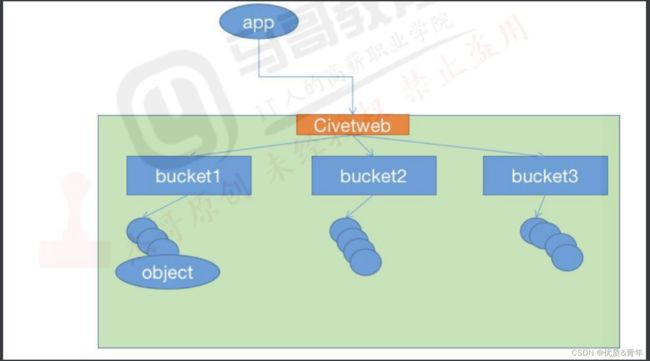

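RadosGW is one implementation of object storage (OSS, Object Storage Service). The RADOS gateway, also called the Ceph Object Gateway, RadosGW, or RGW, is a service that lets clients access a Ceph cluster through standard object storage APIs; it supports the AWS S3 and Swift APIs. Since Ceph 0.80 it has used the embedded Civetweb web server (https://github.com/civetweb/civetweb) to answer API requests. Clients talk to RGW over HTTP/HTTPS through the RESTful API, while RGW talks to the Ceph cluster through librados. An RGW client authenticates as an RGW user through the S3 or Swift API, and the gateway then authenticates to the Ceph cluster with cephx on the user's behalf.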

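2. Characteristics of object storage

- Object storage stores data as objects; each object holds the data itself plus the data's own metadata.

- Objects are retrieved by object ID. They cannot be accessed directly by file path and file name as on an ordinary filesystem, only through the API or through third-party client tools (which are themselves wrappers around the API).

- Objects are not organized into a directory tree but stored in a flat namespace. Amazon S3 calls this flat namespace a bucket, while Swift calls it a container.

- A bucket must be authorized before it can be accessed. One account can be granted access to multiple buckets, with different permissions on each.

- Object storage scales out easily and retrieves data quickly.

- It cannot be mounted by clients, and the client must specify the object name on every access.

- It is a poor fit for workloads that modify or delete files very frequently.

Ceph uses buckets as the storage space, providing object data storage and multi-user isolation. Data is stored in buckets and user permissions are granted per bucket, so a user can be given different permissions on different buckets to implement access control.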

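2.1 Bucket properties

(1) A bucket is the container that stores objects; every object must belong to a bucket. Bucket attributes can be set and modified to control region, access permissions, lifecycle, and so on. These settings apply to all objects in the bucket, so different management needs can be met by creating separate buckets.

(2) The inside of a bucket is flat: there are no filesystem concepts such as directories, and every object belongs directly to its bucket.

(3) Each user can own multiple buckets.

(4) A bucket name must be globally unique within the OSS service and cannot be changed after creation.

(5) There is no limit on the number of objects in a bucket.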

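2.2 Bucket naming rules

1. The name may contain only lowercase letters, digits, and hyphens (-).

2. It must start and end with a lowercase letter or a digit.

3. Its length must be between 3 and 63 bytes.

4. It must not be formatted as an IP address.

5. It must be globally unique.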
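The syntactic naming rules above can be checked on the client before a request is sent to the gateway. The following is a minimal sketch; the function name `is_valid_bucket_name` is hypothetical and not part of any Ceph tool, and global uniqueness (rule 5) can only be decided by the gateway itself.

```shell
# Hypothetical helper: returns 0 if the name satisfies rules 1-4, 1 otherwise.
is_valid_bucket_name() {
    local name="$1"
    # Rule 3: length between 3 and 63.
    [ "${#name}" -ge 3 ] && [ "${#name}" -le 63 ] || return 1
    case $name in
        # Rule 1: reject any character that is not a lowercase letter, digit or hyphen.
        # Letters are listed explicitly so the match does not depend on the locale.
        # This also covers rule 4, because an IP address contains dots.
        *[!abcdefghijklmnopqrstuvwxyz0123456789-]*) return 1 ;;
        # Rule 2: a hyphen is not allowed as the first or last character.
        -*|*-) return 1 ;;
    esac
    return 0
}
```

For example, `is_valid_bucket_name test-bucket && s3cmd mb s3://test-bucket` would only attempt the creation when the name passes the local check.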

2.3 RadosGW architecture diagram

2.4 RadosGW logical diagram

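3. Comparison of object storage access models

Amazon S3: provides user, bucket, and object. A bucket belongs to a user, access permissions to the namespace of each bucket can be set per user, and different users may access the same bucket.

OpenStack Swift: provides user, container, and object, corresponding to user, bucket, and object. It also gives user a parent component, account, which represents a project or tenant (an OpenStack user). An account can therefore contain one or more users, which share the same set of containers, and the account provides the namespace for those containers.

RadosGW: provides user, subuser, bucket, and object. A user corresponds to an S3 user and a subuser to a Swift user. Neither user nor subuser provides a namespace for buckets, so buckets of different users cannot share a name. Since the Jewel release, however, RadosGW has offered the optional tenant component, which provides a namespace for users and buckets. RadosGW uses ACLs to give different users different permissions, for example:

Read: read permission

Write: write permission

Readwrite: read and write permission

full-control: full control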

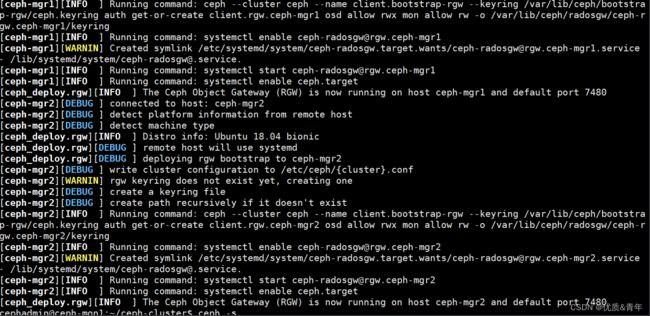

4. Deploying the RadosGW service

4.1 Install and initialize the RadosGW service

root@ceph-mgr1:~# apt -y install radosgw

root@ceph-mgr2:~# apt -y install radosgw

cephadmin@ceph-mon1:~/ceph-cluster$ ceph-deploy rgw create ceph-mgr1

cephadmin@ceph-mon1:~/ceph-cluster$ ceph-deploy rgw create ceph-mgr2

#Verify the RadosGW service status

root@ceph-mon1:~# su - cephadmin

cephadmin@ceph-mon1:~$ cd ceph-cluster/

cephadmin@ceph-mon1:~/ceph-cluster$ ceph -s

cluster:

id: 3bc181dd-a0ef-4d72-a58d-ee4776e9870f

health: HEALTH_OK

services:

mon: 3 daemons, quorum ceph-mon1,ceph-mon2,ceph-mon3 (age 9m)

mgr: ceph-mgr1(active, since 2h), standbys: ceph-mgr2

mds: 2/2 daemons up, 2 standby

osd: 9 osds: 9 up (since 2h), 9 in (since 4d)

rgw: 2 daemons active (2 hosts, 1 zones)

data:

volumes: 1/1 healthy

pools: 9 pools, 289 pgs

objects: 358 objects, 200 MiB

usage: 781 MiB used, 1.8 TiB / 1.8 TiB avail

pgs: 289 active+clean

4.2 Verify the RadosGW service process

root@ceph-mgr1:~# ps -ef|grep rados

ceph 4716 1 0 01:48 ? 00:00:41 /usr/bin/radosgw -f --cluster ceph --name client.rgw.ceph-mgr1 --setuser ceph --setgroup ceph

root 5606 5583 0 03:57 pts/0 00:00:00 grep --color=auto rados

4.3 RadosGW storage pool types

root@ceph-mgr1:~# ceph osd pool ls

cephfs-metadata

cephfs-data

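.rgw.root #holds realm information, such as the zone and zonegroup

default.rgw.log #log pool; records the various kinds of log information

default.rgw.control #system control pool; notifies other RGW instances to refresh their caches when data is updated

default.rgw.meta #metadata pool; stores different RADOS objects in separate namespaces: users.uid (user UIDs and their bucket mappings), users.keys (user keys), users.email (user email addresses), users.swift (subusers), root (buckets), and so on

device_health_metrics

default.rgw.buckets.index #stores the bucket-to-object index

default.rgw.buckets.data #stores object data

#Verify the RGW zone information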

root@ceph-mgr1:~# radosgw-admin zone get --rgw-zone=default

{

"id": "055243e4-d13b-4858-b7cd-90aca81befe2",

"name": "default",

"domain_root": "default.rgw.meta:root",

"control_pool": "default.rgw.control",

"gc_pool": "default.rgw.log:gc",

"lc_pool": "default.rgw.log:lc",

"log_pool": "default.rgw.log",

"intent_log_pool": "default.rgw.log:intent",

"usage_log_pool": "default.rgw.log:usage",

"roles_pool": "default.rgw.meta:roles",

"reshard_pool": "default.rgw.log:reshard",

"user_keys_pool": "default.rgw.meta:users.keys",

"user_email_pool": "default.rgw.meta:users.email",

"user_swift_pool": "default.rgw.meta:users.swift",

"user_uid_pool": "default.rgw.meta:users.uid",

"otp_pool": "default.rgw.otp",

"system_key": {

"access_key": "",

"secret_key": ""

},

"placement_pools": [

{

"key": "default-placement",

"val": {

"index_pool": "default.rgw.buckets.index",

"storage_classes": {

"STANDARD": {

"data_pool": "default.rgw.buckets.data"

}

},

"data_extra_pool": "default.rgw.buckets.non-ec",

"index_type": 0

}

}

],

"realm_id": "",

"notif_pool": "default.rgw.log:notif"

}
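Many values in the zone output have the form `pool:namespace`, which is how several kinds of metadata share one pool such as default.rgw.meta. As a small illustration, the sketch below splits such a value into its two parts; the helper name `split_pool_ns` is hypothetical.

```shell
# Hypothetical helper: split a "pool:namespace" value from `radosgw-admin zone get`.
# Values without a colon (for example "default.rgw.control") have an empty namespace.
split_pool_ns() {
    local value="$1" pool ns
    pool=${value%%:*}
    case $value in
        *:*) ns=${value#*:} ;;
        *)   ns= ;;
    esac
    printf 'pool=%s namespace=%s\n' "$pool" "$ns"
}

split_pool_ns "default.rgw.meta:users.keys"
split_pool_ns "default.rgw.control"
```

With the pool and namespace separated, the objects of one namespace can usually be listed with the `rados` tool, for instance `rados -p default.rgw.meta -N users.uid ls`.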

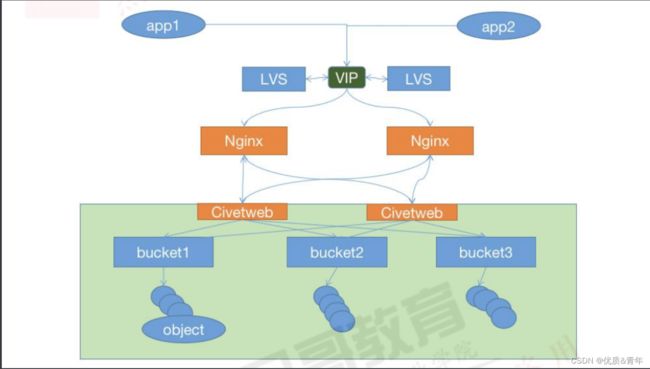

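5. RadosGW high-availability configuration

5.1 radosgw http

5.1.1 Custom HTTP port

The configuration file can be edited on the ceph-deploy node and pushed to all nodes, or edited on each radosgw server individually so that all of them carry the same settings; restart the RGW service afterwards.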

root@ceph-mgr1:~# cat /etc/ceph/ceph.conf

[global]

fsid = 3bc181dd-a0ef-4d72-a58d-ee4776e9870f

public_network = 172.17.0.0/16

cluster_network = 192.168.10.0/24

mon_initial_members = ceph-mon1

mon_host = 172.17.10.61

auth_cluster_required = cephx

auth_service_required = cephx

auth_client_required = cephx

mon clock drift allowed = 2

mon clock drift warn backoff = 30

mon_allow_pool_delete = true

osd pool default ec profile = /var/log/ceph_pool_healthy

[client.rgw.ceph-mgr1]

rgw_host = ceph-mgr1

rgw_frontends = "civetweb port=9900 request_timeout_ms=30000 num_threads=200"

rgw_dns_name = rgw.qiange.com

5.2 radosgw https

Generate a self-signed certificate on the RGW node and configure radosgw to enable SSL.

5.2.1 Self-signed certificate

root@ceph-mgr1:/etc/ceph# mkdir certs

root@ceph-mgr1:/etc/ceph# cd certs

root@ceph-mgr1:/etc/ceph/certs# openssl genrsa -out web.key 2048

root@ceph-mgr1:/etc/ceph/certs# openssl req -new -x509 -key /etc/ceph/certs/web.key -out web.crt -subj "/CN=rgw.qiange.com"

root@ceph-mgr1:/etc/ceph/certs# cat web.crt web.key > web.pem

root@ceph-mgr1:/etc/ceph/certs# tree

.

├── web.crt

├── web.key

└── web.pem

0 directories, 3 files
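The combined web.pem must contain both the certificate and the private key, since the `ssl_certificate` option points at a single file. A quick sanity check could look like the sketch below; the function name `pem_has_cert_and_key` is hypothetical, and it only looks for the PEM header lines rather than validating the contents.

```shell
# Hypothetical helper: succeed only if the file holds a certificate block and a
# private key block. Depending on the OpenSSL version, genrsa writes either
# "BEGIN RSA PRIVATE KEY" or "BEGIN PRIVATE KEY", so both are accepted.
pem_has_cert_and_key() {
    grep -q -e '-----BEGIN CERTIFICATE-----' "$1" &&
        grep -Eq -e '-----BEGIN (RSA )?PRIVATE KEY-----' "$1"
}
```

Usage: `pem_has_cert_and_key /etc/ceph/certs/web.pem && echo ok`. The subject and expiry date can be inspected with `openssl x509 -in web.crt -noout -subject -enddate`; because the `openssl req` command above does not pass `-days`, the certificate is valid for only 30 days by default.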

5.2.2 SSL configuration

[root@ceph-mgr2 certs]# vim /etc/ceph/ceph.conf

[client.rgw.ceph-mgr2]

rgw_host = ceph-mgr2

rgw_frontends = "civetweb port=9900+9443s ssl_certificate=/etc/ceph/certs/web.pem"

#Restart the service

[root@ceph-mgr2 certs]# systemctl restart ceph-radosgw@rgw.ceph-mgr2.service

#Verify that the service ports are listening

[root@ceph-mgr2 certs]# lsof -i:9900

5.3 Logging and other tuning options

#Create the log directory:

[root@ceph-mgr1 certs]# mkdir /var/log/radosgw

[root@ceph-mgr1 certs]# chown ceph:ceph /var/log/radosgw

#Current configuration

[root@ceph-mgr1 ceph]# vim ceph.conf

[client.rgw.ceph-mgr1]

rgw_host = ceph-mgr1

rgw_frontends = "civetweb port=9900+8443s ssl_certificate=/etc/ceph/certs/civetweb.pem error_log_file=/var/log/radosgw/civetweb.error.log access_log_file=/var/log/radosgw/civetweb.access.log request_timeout_ms=30000 num_threads=200"

#Restart the service

[root@ceph-mgr1 certs]# systemctl restart ceph-radosgw@rgw.ceph-mgr1.service

#Access test:

[root@ceph-mgr2 certs]# curl -k https://172.31.6.108:8443

6. Testing data reads and writes

6.1 RGW server configuration

In production, every RGW node uses the same configuration parameters.

root@ceph-mgr1:/etc/ceph# cat ceph.conf

[global]

fsid = 3bc181dd-a0ef-4d72-a58d-ee4776e9870f

public_network = 172.17.0.0/16

cluster_network = 192.168.10.0/24

mon_initial_members = ceph-mon1

mon_host = 172.17.10.61

auth_cluster_required = cephx

auth_service_required = cephx

auth_client_required = cephx

mon clock drift allowed = 2

mon clock drift warn backoff = 30

mon_allow_pool_delete = true

osd pool default ec profile = /var/log/ceph_pool_healthy

[client.rgw.ceph-mgr1]

rgw_host = ceph-mgr1

rgw_frontends = "civetweb port=9900 request_timeout_ms=30000 num_threads=200"

rgw_dns_name = rgw.qiange.com

[client.rgw.ceph-mgr2]

rgw_host = ceph-mgr2

rgw_frontends = "civetweb port=9900 request_timeout_ms=30000 num_threads=200"

rgw_dns_name = rgw.qiange.com
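Because the RGW stanzas differ only in the host name, they can be generated from one template instead of being typed by hand. The sketch below is one way to do that; the function name `rgw_stanza` is hypothetical, the option values are the ones used in this section, and all frontend options are written on a single quoted line.

```shell
# Hypothetical helper: print a [client.rgw.<host>] stanza for ceph.conf.
# Arguments: host name, HTTP port, DNS name of the gateway.
rgw_stanza() {
    local host="$1" port="$2" dns_name="$3"
    cat <<EOF
[client.rgw.${host}]
rgw_host = ${host}
rgw_frontends = "civetweb port=${port} request_timeout_ms=30000 num_threads=200"
rgw_dns_name = ${dns_name}

EOF
}

# Emit identical settings for both gateways used in this article.
for host in ceph-mgr1 ceph-mgr2; do
    rgw_stanza "$host" 9900 rgw.qiange.com
done
```

The output is meant to be reviewed and then appended to ceph.conf on the deploy node before it is pushed out. Note that the civetweb frontend applies to the Ceph release used here; newer releases use the beast frontend, whose option names differ.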

6.2 Create an RGW account

cephadmin@ceph-mon1:~/ceph-cluster$ radosgw-admin user create --uid="user1" --display-name="user2"

{

"user_id": "user1",

"display_name": "user2",

"email": "",

"suspended": 0,

"max_buckets": 1000,

"subusers": [],

"keys": [

{

"user": "user1",

"access_key": "NETEGA5FB1O2QK9OHOEA",

"secret_key": "7DivWbNrfEdc5usucGFqvCtJDPCMNVF0QcSjfTIy"

}

],

"swift_keys": [],

"caps": [],

"op_mask": "read, write, delete",

"default_placement": "",

"default_storage_class": "",

"placement_tags": [],

"bucket_quota": {

"enabled": false,

"check_on_raw": false,

"max_size": -1,

"max_size_kb": 0,

"max_objects": -1

},

"user_quota": {

"enabled": false,

"check_on_raw": false,

"max_size": -1,

"max_size_kb": 0,

"max_objects": -1

},

"temp_url_keys": [],

"type": "rgw",

"mfa_ids": []

}
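The access key and secret key printed above are needed again when configuring the client. As a rough sketch, they can be pulled out of the JSON with standard tools; the helper `json_first_value` is hypothetical and relies on the pretty-printed, one-key-per-line layout that radosgw-admin produces, so a real JSON parser such as jq is the more robust choice where it is installed.

```shell
# Hypothetical helper: read pretty-printed JSON on stdin and print the value of
# the first line whose key equals $1. Splitting on double quotes turns
#   "access_key": "ABC",
# into fields where $2 is the key and $4 is the value.
json_first_value() {
    awk -F'"' -v k="$1" '$2 == k { print $4; exit }'
}
```

Usage, assuming the user created above: `radosgw-admin user info --uid=user1 | json_first_value access_key`.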

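6.3 Install the s3cmd client

s3cmd is a command-line client for Ceph RGW that creates buckets and uploads, downloads, and manages data in object storage.

1. Download and install s3cmd

cephadmin@ceph-mon1:~/ceph-cluster$ sudo apt-cache madison s3cmd

cephadmin@ceph-mon1:~/ceph-cluster$ sudo apt install s3cmd

2. Configure the command environment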

cephadmin@ceph-mon1:~/ceph-cluster$ s3cmd --configure

Enter new values or accept defaults in brackets with Enter.

Refer to user manual for detailed description of all options.

Access key and Secret key are your identifiers for Amazon S3. Leave them empty for using the env variables.

Access Key: NETEGA5FB1O2QK9OHOEA #enter the user's access key

Secret Key: 7DivWbNrfEdc5usucGFqvCtJDPCMNVF0QcSjfTIy #enter the user's secret key

Default Region [US]: #default region; just press Enter

Use "s3.amazonaws.com" for S3 Endpoint and not modify it to the target Amazon S3.

S3 Endpoint [s3.amazonaws.com]: rgw.qiange.com:9900 #RGW domain name and port

Use "%(bucket)s.s3.amazonaws.com" to the target Amazon S3. "%(bucket)s" and "%(location)s" vars can be used

if the target S3 system supports dns based buckets.

DNS-style bucket+hostname:port template for accessing a bucket [%(bucket)s.s3.amazonaws.com]: rgw.qiange.com:9900/%(bucket) #bucket URL format

Encryption password is used to protect your files from reading

by unauthorized persons while in transfer to S3

Encryption password:

Path to GPG program [/usr/bin/gpg]: #just press Enter; path to the gpg program used for encryption

When using secure HTTPS protocol all communication with Amazon S3

servers is protected from 3rd party eavesdropping. This method is

slower than plain HTTP, and can only be proxied with Python 2.7 or newer

Use HTTPS protocol [Yes]: No #whether to use HTTPS

On some networks all internet access must go through a HTTP proxy.

Try setting it here if you can't connect to S3 directly

HTTP Proxy server name:


New settings: #final configuration

Access Key: NETEGA5FB1O2QK9OHOEA

Secret Key: 7DivWbNrfEdc5usucGFqvCtJDPCMNVF0QcSjfTIy

Default Region: region

S3 Endpoint: rgw.qiange.com:9900

DNS-style bucket+hostname:port template for accessing a bucket: rgw.qiange.com:9900/%(bucket)

Encryption password:

Path to GPG program: /usr/bin/gpg

Use HTTPS protocol: False

HTTP Proxy server name:

HTTP Proxy server port: 0

Test access with supplied credentials? [Y/n] y #whether to test access with these settings

Please wait, attempting to list all buckets...

Success. Your access key and secret key worked fine :-)

Now verifying that encryption works...

Not configured. Never mind.

Save settings? [y/N] y

Configuration saved to '/home/cephadmin/.s3cfg' #path where the configuration file is saved
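The interactive dialog only writes a handful of settings that matter for RGW, so the same file can be produced non-interactively, for example in a provisioning script. The sketch below writes a minimal configuration; the function name `write_s3cfg` is hypothetical, the keys it writes (`access_key`, `secret_key`, `host_base`, `host_bucket`, `use_https`) are standard s3cmd options, and the bucket template follows the format entered in the dialog above.

```shell
# Hypothetical helper: write a minimal s3cmd configuration file.
# Arguments: target path, access key, secret key, endpoint (host:port).
write_s3cfg() {
    local path="$1" access_key="$2" secret_key="$3" endpoint="$4"
    (
        # A newly created file is readable by its owner only, since it holds the secret key.
        umask 077
        cat > "$path" <<EOF
[default]
access_key = ${access_key}
secret_key = ${secret_key}
host_base = ${endpoint}
host_bucket = ${endpoint}/%(bucket)
use_https = False
EOF
    )
}
```

Usage: `write_s3cfg /tmp/rgw.s3cfg "$ACCESS_KEY" "$SECRET_KEY" rgw.qiange.com:9900`, followed by `s3cmd -c /tmp/rgw.s3cfg ls` to test the credentials. The variables `ACCESS_KEY` and `SECRET_KEY` are placeholders for the values returned when the user was created.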

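6.4 Verify data upload with the s3cmd command-line client

6.4.1 Create buckets to verify permissions

A bucket is the container that stores objects; you must create a bucket before uploading an object of any type.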

cephadmin@ceph-mon1:~/ceph-cluster$ s3cmd mb s3://test-bucket

Bucket 's3://test-bucket/' created

cephadmin@ceph-mon1:~/ceph-cluster$ s3cmd mb s3://test1-bucket

Bucket 's3://test1-bucket/' created

cephadmin@ceph-mon1:~/ceph-cluster$ s3cmd mb s3://test2-bucket

Bucket 's3://test2-bucket/' created

6.4.2 Upload and verify data

#Upload data

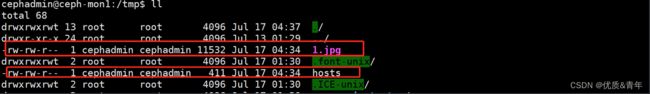

cephadmin@ceph-mon1:~$ s3cmd put 1.jpg s3://test-bucket

upload: '1.jpg' -> 's3://test-bucket/1.jpg' [1 of 1]

11532 of 11532 100% in 0s 204.64 kB/s done

cephadmin@ceph-mon1:~$ s3cmd put /etc/passwd s3://test1-bucket

upload: '/etc/passwd' -> 's3://test1-bucket/passwd' [1 of 1]

1778 of 1778 100% in 0s 46.85 kB/s done

cephadmin@ceph-mon1:~$ s3cmd put /etc/hosts s3://test2-bucket

upload: '/etc/hosts' -> 's3://test2-bucket/hosts' [1 of 1]

411 of 411 100% in 0s 7.22 kB/s done

#Verify data

cephadmin@ceph-mon1:~$ s3cmd ls s3://test-bucket

2023-07-17 04:34 11532 s3://test-bucket/1.jpg

cephadmin@ceph-mon1:~$ s3cmd ls s3://test1-bucket

2023-07-17 04:34 1778 s3://test1-bucket/passwd

cephadmin@ceph-mon1:~$ s3cmd ls s3://test2-bucket

2023-07-17 04:34 411 s3://test2-bucket/hosts

6.4.3 Verify data download

cephadmin@ceph-mon1:~$ s3cmd get s3://test2-bucket/hosts /tmp

download: 's3://test2-bucket/hosts' -> '/tmp/hosts' [1 of 1]

411 of 411 100% in 0s 7.49 kB/s done

cephadmin@ceph-mon1:~$ s3cmd get s3://test-bucket/1.jpg /tmp

download: 's3://test-bucket/1.jpg' -> '/tmp/1.jpg' [1 of 1]

11532 of 11532 100% in 0s 741.34 kB/s done

6.4.4 Delete a file

cephadmin@ceph-mon1:/tmp$ s3cmd rm s3://test2-bucket/hosts

delete: 's3://test2-bucket/hosts'

cephadmin@ceph-mon1:/tmp$ s3cmd ls s3://test2-bucket
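The upload and download steps above can be combined into one round-trip check that also confirms the downloaded bytes match the original. This is only a sketch: the function name `roundtrip_check` and the `S3CMD` override variable are hypothetical, and it assumes s3cmd has already been configured as shown earlier.

```shell
# Hypothetical helper: upload a file, download it again, and compare the copies.
# Arguments: local file, bucket name. Returns 0 only if both copies are identical.
# S3CMD may be set to another command (for example a stub used in testing).
roundtrip_check() {
    local file="$1" bucket="$2" tmp rc
    tmp=$(mktemp) || return 1
    "${S3CMD:-s3cmd}" put "$file" "s3://${bucket}/" >/dev/null &&
        "${S3CMD:-s3cmd}" get --force "s3://${bucket}/$(basename "$file")" "$tmp" >/dev/null &&
        cmp -s "$file" "$tmp"
    rc=$?
    rm -f "$tmp"
    return "$rc"
}
```

Usage: `roundtrip_check /etc/hosts test2-bucket && echo "round trip ok"`. The `--force` flag is needed because mktemp has already created the target file.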

7. Errors

Cause: the region was set manually while configuring the client's command environment.


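II. Ceph Dashboard

2.1 Enable the dashboard plugin

Ceph mgr is a modular, multi-plugin component whose modules can be enabled or disabled individually. Run the following on the ceph-deploy server:

Note: newer versions require the dashboard package (ceph-mgr-dashboard on Debian/Ubuntu) to be installed, and it must be installed on the mgr nodes; otherwise enabling the module fails.

[ceph@ceph-deploy ceph-cluster]$ ceph mgr module enable dashboard #enable the module

Note: the dashboard is not reachable right after the module is enabled; SSL must first be either disabled or configured, and a listen address must be set.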

2.2 Enable the dashboard module

The Ceph dashboard is configured on the mgr node, and SSL can be turned on or off, as follows:

[ceph@ceph-deploy ceph-cluster]$ ceph config set mgr mgr/dashboard/ssl false #disable SSL

[ceph@ceph-deploy ceph-cluster]$ ceph config set mgr mgr/dashboard/ceph-mgr1/server_addr 172.17.10.64 #set the dashboard listen address

[ceph@ceph-deploy ceph-cluster]$ ceph config set mgr mgr/dashboard/ceph-mgr1/server_port 9999 #set the dashboard listen port
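The address and port settings are stored per mgr instance, so the same three commands have to be repeated with a different instance name for each mgr node. A dry-run generator such as the sketch below prints the commands for review before anything is changed; the function name `dashboard_cmds` is hypothetical, and the key names are the ones used above.

```shell
# Hypothetical helper: print (not run) the settings for one mgr instance.
# Arguments: mgr instance name, listen address, listen port.
dashboard_cmds() {
    local mgr="$1" addr="$2" port="$3"
    printf 'ceph config set mgr mgr/dashboard/ssl false\n'
    printf 'ceph config set mgr mgr/dashboard/%s/server_addr %s\n' "$mgr" "$addr"
    printf 'ceph config set mgr mgr/dashboard/%s/server_port %s\n' "$mgr" "$port"
    # With SSL disabled the dashboard is served over plain HTTP.
    printf '# dashboard URL: http://%s:%s/\n' "$addr" "$port"
}

dashboard_cmds ceph-mgr1 172.17.10.64 9999
```

Once the printed commands look right, the output can be piped to `sh` on the deploy node. Afterwards `ceph mgr services` should report the URL the active mgr is actually serving.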

#Verify the Ceph cluster status

cephadmin@ceph-mon1:~/ceph-cluster$ ceph -s

cluster:

id: 3bc181dd-a0ef-4d72-a58d-ee4776e9870f

health: HEALTH_OK

services:

mon: 3 daemons, quorum ceph-mon1,ceph-mon2,ceph-mon3 (age 78m)

mgr: ceph-mgr1(active, since 10m), standbys: ceph-mgr2

mds: 2/2 daemons up, 2 standby

osd: 9 osds: 9 up (since 3h), 9 in (since 4d)

rgw: 2 daemons active (2 hosts, 1 zones)

data:

volumes: 1/1 healthy

pools: 9 pools, 289 pgs

objects: 401 objects, 200 MiB

usage: 860 MiB used, 1.8 TiB / 1.8 TiB avail

pgs: 289 active+clean

2.3 Set the dashboard account and password

ceph@ceph-deploy:/home/ceph/ceph-cluster$ echo "12345678" > pass.txt

#Set the account to admin with password 12345678

ceph@ceph-deploy:/home/ceph/ceph-cluster$ ceph dashboard set-login-credentials admin -i pass.txt
