yolov8 实例分割 onnx runtime C++部署

如果第一次部署分割,建议先看这篇博客:

YOLOv5 实例分割 用 OPenCV DNN C++ 部署_爱钓鱼的歪猴的博客-CSDN博客

目录

Pre

一、OpenCV DNN C++ 部署

二、ONNX RUNTIME C++ 部署

yolov8_seg_utils.h

yolov8_seg_utils.cpp

yolov8_seg_onnx.h

yolov8_seg_onnx.cpp

main.cpp

CMakelist.txt

Pre

一定要知道,yolov8的输出与Yolov5 7.0 实例分割的输出不一样,

output0: float32[1,116,8400]。 116是4个box坐标信息+80个类别概率+32个mask系数

output1: float32[1,32,160,160]。一张图片一组mask原型。这个mask原型是相对于整个图像的。

一、OpenCV DNN C++ 部署

所以output0需要装置一下,117->116,其他的与yolov5实例分割没啥区别:

net.setInput(blob);

std::vector net_output_img;

vector output_layer_names{ "output0","output1" };

net.forward(net_output_img, output_layer_names); //get outputs

std::vector class_ids;// res-class_id

std::vector confidences;// res-conf

std::vector boxes;// res-box

std::vector> picked_proposals; //output0[:,:, 4 + _className.size():net_width]===> for mask

int net_width = _className.size() + 4 + _segChannels;

//转置

Mat output0=Mat( Size(net_output_img[0].size[2], net_output_img[0].size[1]), CV_32F, (float*)net_output_img[0].data).t(); //[bs,116,8400]=>[bs,8400,116]

int rows = output0.rows;

float* pdata = (float*)output0.data;

for (int r = 0; r < rows; ++r) {

cv::Mat scores(1, _className.size(), CV_32FC1, pdata + 4);

Point classIdPoint;

double max_class_socre;

minMaxLoc(scores, 0, &max_class_socre, 0, &classIdPoint);

max_class_socre = (float)max_class_socre;

if (max_class_socre >= _classThreshold) {

vector temp_proto(pdata + 4 + _className.size(), pdata + net_width);

picked_proposals.push_back(temp_proto);

//rect [x,y,w,h]

float x = (pdata[0] - params[2]) / params[0];

float y = (pdata[1] - params[3]) / params[1];

float w = pdata[2] / params[0];

float h = pdata[3] / params[1];

int left = MAX(int(x - 0.5 * w + 0.5), 0);

int top = MAX(int(y - 0.5 * h + 0.5), 0);

class_ids.push_back(classIdPoint.x);

confidences.push_back(max_class_socre);

boxes.push_back(Rect(left, top, int(w + 0.5), int(h + 0.5)));

}

pdata += net_width;//next line

}

//NMS

vector nms_result;

NMSBoxes(boxes, confidences, _classThreshold, _nmsThreshold, nms_result);

std::vector> temp_mask_proposals;

Rect holeImgRect(0, 0, srcImg.cols, srcImg.rows);

for (int i = 0; i < nms_result.size(); ++i) {

int idx = nms_result[i];

OutputSeg result;

result.id = class_ids[idx];

result.confidence = confidences[idx];

result.box = boxes[idx] & holeImgRect;

temp_mask_proposals.push_back(picked_proposals[idx]);

output.push_back(result);

}

MaskParams mask_params;

mask_params.params = params;

mask_params.srcImgShape = srcImg.size();

for (int i = 0; i < temp_mask_proposals.size(); ++i) {

GetMask2(Mat(temp_mask_proposals[i]).t(), net_output_img[1], output[i], mask_params);

}

但是我read模型的时候报错:ERROR during processing node with 2 inputs and 1 outputs: [Reshape]:(/model.22/dfl/Reshape_output_0) from domain='ai.onnx'

应该是OpenCV版本需要升级到4.7。之前部署检测的时候也报这个错:yolov8 OpenCV DNN 部署 推理报错_爱钓鱼的歪猴的博客-CSDN博客

我不想升级OpenCV了,所以选择用ONNX RUNTIME 部署

二、ONNX RUNTIME C++ 部署

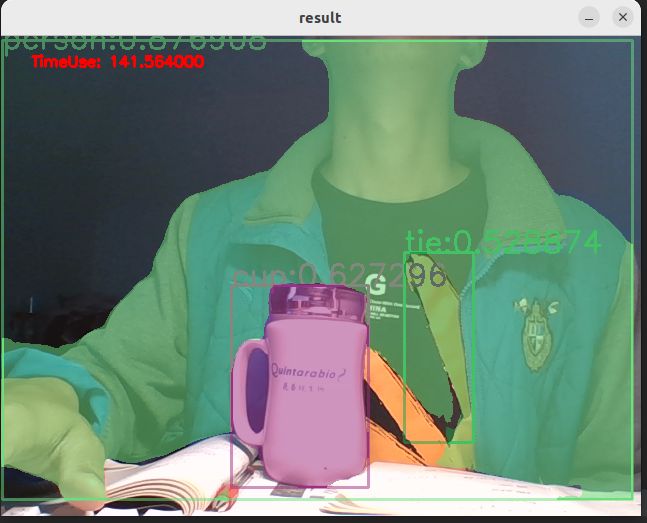

yolov8n-seg CPU 推理耗时150ms左右, 速度比yolov5n-seg慢一点(100ms),但是检测效果好一些,具体来说,同样是我穿上这件外套,yolov8n-seg给出的置信度更高!

yolov8_seg_utils.h

#pragma once

#include

#include

#include

#define YOLO_P6 false //是否使用P6模型

#define ORT_OLD_VISON 12 //ort1.12.0 之前的版本为旧版本API

struct OutputSeg {

int id; //结果类别id

float confidence; //结果置信度

cv::Rect box; //矩形框

cv::Mat boxMask; //矩形框内mask,节省内存空间和加快速度

};

struct MaskParams {

int segChannels = 32;

int segWidth = 160;

int segHeight = 160;

int netWidth = 640;

int netHeight = 640;

float maskThreshold = 0.5;

cv::Size srcImgShape;

cv::Vec4d params;

};

bool CheckParams(int netHeight, int netWidth, const int* netStride, int strideSize);

void DrawPred(cv::Mat& img, std::vector result, std::vector classNames, std::vector color);

void LetterBox(const cv::Mat& image, cv::Mat& outImage,

cv::Vec4d& params, //[ratio_x,ratio_y,dw,dh]

const cv::Size& newShape = cv::Size(640, 640),

bool autoShape = false,

bool scaleFill = false,

bool scaleUp = true,

int stride = 32,

const cv::Scalar& color = cv::Scalar(114, 114, 114));

void GetMask(const cv::Mat& maskProposals, const cv::Mat& maskProtos, std::vector& output, const MaskParams& maskParams);

void GetMask2(const cv::Mat& maskProposals, const cv::Mat& maskProtos, OutputSeg& output, const MaskParams& maskParams); yolov8_seg_utils.cpp

#pragma once

#include "yolov8_seg_utils.h"

using namespace cv;

using namespace std;

bool CheckParams(int netHeight, int netWidth, const int* netStride, int strideSize) {

if (netHeight % netStride[strideSize - 1] != 0 || netWidth % netStride[strideSize - 1] != 0)

{

cout << "Error:_netHeight and _netWidth must be multiple of max stride " << netStride[strideSize - 1] << "!" << endl;

return false;

}

return true;

}

void LetterBox(const cv::Mat& image, cv::Mat& outImage, cv::Vec4d& params, const cv::Size& newShape,

bool autoShape, bool scaleFill, bool scaleUp, int stride, const cv::Scalar& color)

{

if (false) {

int maxLen = MAX(image.rows, image.cols);

outImage = Mat::zeros(Size(maxLen, maxLen), CV_8UC3);

image.copyTo(outImage(Rect(0, 0, image.cols, image.rows)));

params[0] = 1;

params[1] = 1;

params[3] = 0;

params[2] = 0;

}

cv::Size shape = image.size();

float r = std::min((float)newShape.height / (float)shape.height,

(float)newShape.width / (float)shape.width);

if (!scaleUp)

r = std::min(r, 1.0f);

float ratio[2]{ r, r };

int new_un_pad[2] = { (int)std::round((float)shape.width * r),(int)std::round((float)shape.height * r) };

auto dw = (float)(newShape.width - new_un_pad[0]);

auto dh = (float)(newShape.height - new_un_pad[1]);

if (autoShape)

{

dw = (float)((int)dw % stride);

dh = (float)((int)dh % stride);

}

else if (scaleFill)

{

dw = 0.0f;

dh = 0.0f;

new_un_pad[0] = newShape.width;

new_un_pad[1] = newShape.height;

ratio[0] = (float)newShape.width / (float)shape.width;

ratio[1] = (float)newShape.height / (float)shape.height;

}

dw /= 2.0f;

dh /= 2.0f;

if (shape.width != new_un_pad[0] && shape.height != new_un_pad[1])

{

cv::resize(image, outImage, cv::Size(new_un_pad[0], new_un_pad[1]));

}

else {

outImage = image.clone();

}

int top = int(std::round(dh - 0.1f));

int bottom = int(std::round(dh + 0.1f));

int left = int(std::round(dw - 0.1f));

int right = int(std::round(dw + 0.1f));

params[0] = ratio[0];

params[1] = ratio[1];

params[2] = left;

params[3] = top;

cv::copyMakeBorder(outImage, outImage, top, bottom, left, right, cv::BORDER_CONSTANT, color);

}

void GetMask(const cv::Mat& maskProposals, const cv::Mat& maskProtos, std::vector& output, const MaskParams& maskParams) {

//cout << maskProtos.size << endl;

int seg_channels = maskParams.segChannels;

int net_width = maskParams.netWidth;

int seg_width = maskParams.segWidth;

int net_height = maskParams.netHeight;

int seg_height = maskParams.segHeight;

float mask_threshold = maskParams.maskThreshold;

Vec4f params = maskParams.params;

Size src_img_shape = maskParams.srcImgShape;

Mat protos = maskProtos.reshape(0, { seg_channels,seg_width * seg_height });

Mat matmul_res = (maskProposals * protos).t();

Mat masks = matmul_res.reshape(output.size(), { seg_width,seg_height });

vector maskChannels;

split(masks, maskChannels);

for (int i = 0; i < output.size(); ++i) {

Mat dest, mask;

//sigmoid

cv::exp(-maskChannels[i], dest);

dest = 1.0 / (1.0 + dest);

Rect roi(int(params[2] / net_width * seg_width), int(params[3] / net_height * seg_height), int(seg_width - params[2] / 2), int(seg_height - params[3] / 2));

dest = dest(roi);

resize(dest, mask, src_img_shape, INTER_NEAREST);

//crop

Rect temp_rect = output[i].box;

mask = mask(temp_rect) > mask_threshold;

output[i].boxMask = mask;

}

}

void GetMask2(const Mat& maskProposals, const Mat& mask_protos, OutputSeg& output, const MaskParams& maskParams) {

int seg_channels = maskParams.segChannels;

int net_width = maskParams.netWidth;

int seg_width = maskParams.segWidth;

int net_height = maskParams.netHeight;

int seg_height = maskParams.segHeight;

float mask_threshold = maskParams.maskThreshold;

Vec4f params = maskParams.params;

Size src_img_shape = maskParams.srcImgShape;

Rect temp_rect = output.box;

//crop from mask_protos

int rang_x = floor((temp_rect.x * params[0] + params[2]) / net_width * seg_width);

int rang_y = floor((temp_rect.y * params[1] + params[3]) / net_height * seg_height);

int rang_w = ceil(((temp_rect.x + temp_rect.width) * params[0] + params[2]) / net_width * seg_width) - rang_x;

int rang_h = ceil(((temp_rect.y + temp_rect.height) * params[1] + params[3]) / net_height * seg_height) - rang_y;

//如果下面的 mask_protos(roi_rangs).clone()位置报错,说明你的output.box数据不对,或者矩形框就1个像素的,开启下面的注释部分防止报错。

rang_w = MAX(rang_w, 1);

rang_h = MAX(rang_h, 1);

if (rang_x + rang_w > seg_width) {

if (seg_width - rang_x > 0)

rang_w = seg_width - rang_x;

else

rang_x -= 1;

}

if (rang_y + rang_h > seg_height) {

if (seg_height - rang_y > 0)

rang_h = seg_height - rang_y;

else

rang_y -= 1;

}

vector roi_rangs;

roi_rangs.push_back(Range(0, 1));

roi_rangs.push_back(Range::all());

roi_rangs.push_back(Range(rang_y, rang_h + rang_y));

roi_rangs.push_back(Range(rang_x, rang_w + rang_x));

//crop

Mat temp_mask_protos = mask_protos(roi_rangs).clone();

Mat protos = temp_mask_protos.reshape(0, { seg_channels,rang_w * rang_h });

Mat matmul_res = (maskProposals * protos).t();

Mat masks_feature = matmul_res.reshape(1, { rang_h,rang_w });

Mat dest, mask;

//sigmoid

cv::exp(-masks_feature, dest);

dest = 1.0 / (1.0 + dest);

int left = floor((net_width / seg_width * rang_x - params[2]) / params[0]);

int top = floor((net_height / seg_height * rang_y - params[3]) / params[1]);

int width = ceil(net_width / seg_width * rang_w / params[0]);

int height = ceil(net_height / seg_height * rang_h / params[1]);

resize(dest, mask, Size(width, height), INTER_NEAREST);

mask = mask(temp_rect - Point(left, top)) > mask_threshold;

output.boxMask = mask;

}

void DrawPred(Mat& img, vector result, std::vector classNames, vector color) {

Mat mask = img.clone();

for (int i = 0; i < result.size(); i++) {

int left, top;

left = result[i].box.x;

top = result[i].box.y;

int color_num = i;

rectangle(img, result[i].box, color[result[i].id], 2, 8);

if(result[i].boxMask.rows&& result[i].boxMask.cols>0)

mask(result[i].box).setTo(color[result[i].id], result[i].boxMask);

string label = classNames[result[i].id] + ":" + to_string(result[i].confidence);

int baseLine;

Size labelSize = getTextSize(label, FONT_HERSHEY_SIMPLEX, 0.5, 1, &baseLine);

top = max(top, labelSize.height);

//rectangle(frame, Point(left, top - int(1.5 * labelSize.height)), Point(left + int(1.5 * labelSize.width), top + baseLine), Scalar(0, 255, 0), FILLED);

putText(img, label, Point(left, top), FONT_HERSHEY_SIMPLEX, 1, color[result[i].id], 2);

}

addWeighted(img, 0.5, mask, 0.5, 0, img); //add mask to src

// imshow("1", img);

//imwrite("out.bmp", img);

// waitKey();

//destroyAllWindows();

}

yolov8_seg_onnx.h

#pragma once

#include

#include

#include

#include "yolov8_seg_utils.h"

#include

//#include //if use OrtTensorRTProviderOptionsV2

//#include

class Yolov8SegOnnx {

public:

Yolov8SegOnnx() :_OrtMemoryInfo(Ort::MemoryInfo::CreateCpu(OrtAllocatorType::OrtDeviceAllocator, OrtMemType::OrtMemTypeCPUOutput)) {};

~Yolov8SegOnnx() {};// delete _OrtMemoryInfo;

public:

/** \brief Read onnx-model

* \param[in] modelPath:onnx-model path

* \param[in] isCuda:if true,use Ort-GPU,else run it on cpu.

* \param[in] cudaID:if isCuda==true,run Ort-GPU on cudaID.

* \param[in] warmUp:if isCuda==true,warm up GPU-model.

*/

bool ReadModel(const std::string& modelPath, bool isCuda = false, int cudaID = 0, bool warmUp = true);

/** \brief detect.

* \param[in] srcImg:a 3-channels image.

* \param[out] output:detection results of input image.

*/

bool OnnxDetect(cv::Mat& srcImg, std::vector& output);

/** \brief detect,batch size= _batchSize

* \param[in] srcImg:A batch of images.

* \param[out] output:detection results of input images.

*/

bool OnnxBatchDetect(std::vector& srcImg, std::vector>& output);

private:

template

T VectorProduct(const std::vector& v)

{

return std::accumulate(v.begin(), v.end(), 1, std::multiplies());

};

int Preprocessing(const std::vector& SrcImgs, std::vector& OutSrcImgs, std::vector& params);

#if(defined YOLO_P6 && YOLO_P6==true)

//const float _netAnchors[4][6] = { { 19,27, 44,40, 38,94 },{ 96,68, 86,152, 180,137 },{ 140,301, 303,264, 238,542 },{ 436,615, 739,380, 925,792 } };

const int _netWidth = 1280; //ONNX图片输入宽度

const int _netHeight = 1280; //ONNX图片输入高度

const int _segWidth = 320; //_segWidth=_netWidth/mask_ratio

const int _segHeight = 320;

const int _segChannels = 32;

#else

//const float _netAnchors[3][6] = { { 10,13, 16,30, 33,23 },{ 30,61, 62,45, 59,119 },{ 116,90, 156,198, 373,326 } };

const int _netWidth = 640; //ONNX-net-input-width

const int _netHeight = 640; //ONNX-net-input-height

const int _segWidth = 160; //_segWidth=_netWidth/mask_ratio

const int _segHeight = 160;

const int _segChannels = 32;

#endif // YOLO_P6

int _batchSize = 1; //if multi-batch,set this

bool _isDynamicShape = false;//onnx support dynamic shape

float _classThreshold = 0.5;

float _nmsThreshold = 0.45;

float _maskThreshold = 0.5;

//ONNXRUNTIME

Ort::Env _OrtEnv = Ort::Env(OrtLoggingLevel::ORT_LOGGING_LEVEL_ERROR, "Yolov5-Seg");

Ort::SessionOptions _OrtSessionOptions = Ort::SessionOptions();

Ort::Session* _OrtSession = nullptr;

Ort::MemoryInfo _OrtMemoryInfo;

#if ORT_API_VERSION < ORT_OLD_VISON

char* _inputName, * _output_name0, * _output_name1;

#else

std::shared_ptr _inputName, _output_name0,_output_name1;

#endif

std::vector _inputNodeNames; //输入节点名

std::vector _outputNodeNames;//输出节点名

size_t _inputNodesNum = 0; //输入节点数

size_t _outputNodesNum = 0; //输出节点数

ONNXTensorElementDataType _inputNodeDataType; //数据类型

ONNXTensorElementDataType _outputNodeDataType;

std::vector _inputTensorShape; //输入张量shape

std::vector _outputTensorShape;

std::vector _outputMaskTensorShape;

public:

std::vector _className = {

"person", "bicycle", "car", "motorcycle", "airplane", "bus", "train", "truck", "boat", "traffic light",

"fire hydrant", "stop sign", "parking meter", "bench", "bird", "cat", "dog", "horse", "sheep", "cow",

"elephant", "bear", "zebra", "giraffe", "backpack", "umbrella", "handbag", "tie", "suitcase", "frisbee",

"skis", "snowboard", "sports ball", "kite", "baseball bat", "baseball glove", "skateboard", "surfboard",

"tennis racket", "bottle", "wine glass", "cup", "fork", "knife", "spoon", "bowl", "banana", "apple",

"sandwich", "orange", "broccoli", "carrot", "hot dog", "pizza", "donut", "cake", "chair", "couch",

"potted plant", "bed", "dining table", "toilet", "tv", "laptop", "mouse", "remote", "keyboard", "cell phone",

"microwave", "oven", "toaster", "sink", "refrigerator", "book", "clock", "vase", "scissors", "teddy bear",

"hair drier", "toothbrush"

};

}; yolov8_seg_onnx.cpp

#include "yolov8_seg_onnx.h"

using namespace std;

using namespace cv;

using namespace cv::dnn;

using namespace Ort;

bool Yolov8SegOnnx::ReadModel(const std::string& modelPath, bool isCuda, int cudaID, bool warmUp) {

if (_batchSize < 1) _batchSize = 1;

try

{

std::vector available_providers = GetAvailableProviders();

auto cuda_available = std::find(available_providers.begin(), available_providers.end(), "CUDAExecutionProvider");

if (isCuda && (cuda_available == available_providers.end()))

{

std::cout << "Your ORT build without GPU. Change to CPU." << std::endl;

std::cout << "************* Infer model on CPU! *************" << std::endl;

}

else if (isCuda && (cuda_available != available_providers.end()))

{

std::cout << "************* Infer model on GPU! *************" << std::endl;

//#if ORT_API_VERSION < ORT_OLD_VISON

// OrtCUDAProviderOptions cudaOption;

// cudaOption.device_id = cudaID;

// _OrtSessionOptions.AppendExecutionProvider_CUDA(cudaOption);

//#else

// OrtStatus* status = OrtSessionOptionsAppendExecutionProvider_CUDA(_OrtSessionOptions, cudaID);

//#endif

}

else

{

std::cout << "************* Infer model on CPU! *************" << std::endl;

}

//

_OrtSessionOptions.SetGraphOptimizationLevel(GraphOptimizationLevel::ORT_ENABLE_EXTENDED);

#ifdef _WIN32

std::wstring model_path(modelPath.begin(), modelPath.end());

_OrtSession = new Ort::Session(_OrtEnv, model_path.c_str(), _OrtSessionOptions);

#else

_OrtSession = new Ort::Session(_OrtEnv, modelPath.c_str(), _OrtSessionOptions);

#endif

Ort::AllocatorWithDefaultOptions allocator;

//init input

_inputNodesNum = _OrtSession->GetInputCount();

#if ORT_API_VERSION < ORT_OLD_VISON

_inputName = _OrtSession->GetInputName(0, allocator);

_inputNodeNames.push_back(_inputName);

#else

_inputName = std::move(_OrtSession->GetInputNameAllocated(0, allocator));

_inputNodeNames.push_back(_inputName.get());

#endif

Ort::TypeInfo inputTypeInfo = _OrtSession->GetInputTypeInfo(0);

auto input_tensor_info = inputTypeInfo.GetTensorTypeAndShapeInfo();

_inputNodeDataType = input_tensor_info.GetElementType();

_inputTensorShape = input_tensor_info.GetShape();

if (_inputTensorShape[0] == -1)

{

_isDynamicShape = true;

_inputTensorShape[0] = _batchSize;

}

if (_inputTensorShape[2] == -1 || _inputTensorShape[3] == -1) {

_isDynamicShape = true;

_inputTensorShape[2] = _netHeight;

_inputTensorShape[3] = _netWidth;

}

//init output

_outputNodesNum = _OrtSession->GetOutputCount();

if (_outputNodesNum != 2) {

cout << "This model has " << _outputNodesNum << "output, which is not a segmentation model.Please check your model name or path!" << endl;

return false;

}

#if ORT_API_VERSION < ORT_OLD_VISON

_output_name0 = _OrtSession->GetOutputName(0, allocator);

_output_name1 = _OrtSession->GetOutputName(1, allocator);

#else

_output_name0 = std::move(_OrtSession->GetOutputNameAllocated(0, allocator));

_output_name1 = std::move(_OrtSession->GetOutputNameAllocated(1, allocator));

#endif

Ort::TypeInfo type_info_output0(nullptr);

Ort::TypeInfo type_info_output1(nullptr);

bool flag = false;

#if ORT_API_VERSION < ORT_OLD_VISON

flag = strcmp(_output_name0, _output_name1) < 0;

#else

flag = strcmp(_output_name0.get(), _output_name1.get()) < 0;

#endif

if (flag) //make sure "output0" is in front of "output1"

{

type_info_output0 = _OrtSession->GetOutputTypeInfo(0); //output0

type_info_output1 = _OrtSession->GetOutputTypeInfo(1); //output1

#if ORT_API_VERSION < ORT_OLD_VISON

_outputNodeNames.push_back(_output_name0);

_outputNodeNames.push_back(_output_name1);

#else

_outputNodeNames.push_back(_output_name0.get());

_outputNodeNames.push_back(_output_name1.get());

#endif

}

else {

type_info_output0 = _OrtSession->GetOutputTypeInfo(1); //output0

type_info_output1 = _OrtSession->GetOutputTypeInfo(0); //output1

#if ORT_API_VERSION < ORT_OLD_VISON

_outputNodeNames.push_back(_output_name1);

_outputNodeNames.push_back(_output_name0);

#else

_outputNodeNames.push_back(_output_name1.get());

_outputNodeNames.push_back(_output_name0.get());

#endif

}

auto tensor_info_output0 = type_info_output0.GetTensorTypeAndShapeInfo();

_outputNodeDataType = tensor_info_output0.GetElementType();

_outputTensorShape = tensor_info_output0.GetShape();

auto tensor_info_output1 = type_info_output1.GetTensorTypeAndShapeInfo();

//_outputMaskNodeDataType = tensor_info_output1.GetElementType(); //the same as output0

//_outputMaskTensorShape = tensor_info_output1.GetShape();

//if (_outputTensorShape[0] == -1)

//{

// _outputTensorShape[0] = _batchSize;

// _outputMaskTensorShape[0] = _batchSize;

//}

//if (_outputMaskTensorShape[2] == -1) {

// //size_t ouput_rows = 0;

// //for (int i = 0; i < _strideSize; ++i) {

// // ouput_rows += 3 * (_netWidth / _netStride[i]) * _netHeight / _netStride[i];

// //}

// //_outputTensorShape[1] = ouput_rows;

// _outputMaskTensorShape[2] = _segHeight;

// _outputMaskTensorShape[3] = _segWidth;

//}

//warm up

if (isCuda && warmUp) {

//draw run

cout << "Start warming up" << endl;

size_t input_tensor_length = VectorProduct(_inputTensorShape);

float* temp = new float[input_tensor_length];

std::vector input_tensors;

std::vector output_tensors;

input_tensors.push_back(Ort::Value::CreateTensor(

_OrtMemoryInfo, temp, input_tensor_length, _inputTensorShape.data(),

_inputTensorShape.size()));

for (int i = 0; i < 3; ++i) {

output_tensors = _OrtSession->Run(Ort::RunOptions{ nullptr },

_inputNodeNames.data(),

input_tensors.data(),

_inputNodeNames.size(),

_outputNodeNames.data(),

_outputNodeNames.size());

}

delete[]temp;

}

}

catch (const std::exception&) {

return false;

}

}

int Yolov8SegOnnx::Preprocessing(const std::vector& srcImgs, std::vector& outSrcImgs, std::vector& params) {

outSrcImgs.clear();

Size input_size = Size(_netWidth, _netHeight);

for (int i = 0; i < srcImgs.size(); ++i) {

Mat temp_img = srcImgs[i];

Vec4d temp_param = { 1,1,0,0 };

if (temp_img.size() != input_size) {

Mat borderImg;

LetterBox(temp_img, borderImg, temp_param, input_size, false, false, true, 32);

//cout << borderImg.size() << endl;

outSrcImgs.push_back(borderImg);

params.push_back(temp_param);

}

else {

outSrcImgs.push_back(temp_img);

params.push_back(temp_param);

}

}

int lack_num = srcImgs.size() % _batchSize;

if (lack_num != 0) {

for (int i = 0; i < lack_num; ++i) {

Mat temp_img = Mat::zeros(input_size, CV_8UC3);

Vec4d temp_param = { 1,1,0,0 };

outSrcImgs.push_back(temp_img);

params.push_back(temp_param);

}

}

return 0;

}

bool Yolov8SegOnnx::OnnxDetect(cv::Mat& srcImg, std::vector& output) {

std::vector input_data = { srcImg };

std::vector> tenp_output;

if (OnnxBatchDetect(input_data, tenp_output)) {

output = tenp_output[0];

return true;

}

else return false;

}

bool Yolov8SegOnnx::OnnxBatchDetect(std::vector& srcImgs, std::vector>& output) {

vector params;

vector input_images;

cv::Size input_size(_netWidth, _netHeight);

//preprocessing

Preprocessing(srcImgs, input_images, params);

cv::Mat blob = cv::dnn::blobFromImages(input_images, 1 / 255.0, input_size, Scalar(0, 0, 0), true, false);

int64_t input_tensor_length = VectorProduct(_inputTensorShape);

std::vector input_tensors;

std::vector output_tensors;

input_tensors.push_back(Ort::Value::CreateTensor(_OrtMemoryInfo, (float*)blob.data, input_tensor_length, _inputTensorShape.data(), _inputTensorShape.size()));

output_tensors = _OrtSession->Run(Ort::RunOptions{ nullptr },

_inputNodeNames.data(),

input_tensors.data(),

_inputNodeNames.size(),

_outputNodeNames.data(),

_outputNodeNames.size()

);

//post-process

int net_width = _className.size() + 4 + _segChannels;

float* all_data = output_tensors[0].GetTensorMutableData();

_outputTensorShape = output_tensors[0].GetTensorTypeAndShapeInfo().GetShape();

_outputMaskTensorShape = output_tensors[1].GetTensorTypeAndShapeInfo().GetShape();

vector mask_protos_shape = { 1,(int)_outputMaskTensorShape[1],(int)_outputMaskTensorShape[2],(int)_outputMaskTensorShape[3] };

int mask_protos_length = VectorProduct(mask_protos_shape);

int64_t one_output_length = VectorProduct(_outputTensorShape) / _outputTensorShape[0];

for (int img_index = 0; img_index < srcImgs.size(); ++img_index) {

Mat output0 = Mat(Size((int)_outputTensorShape[2], (int)_outputTensorShape[1]), CV_32F, all_data).t(); //[bs,116,8400]=>[bs,8400,116]

all_data += one_output_length;

float* pdata = (float*)output0.data;

int rows = output0.rows;

std::vector class_ids;//\BD\E1\B9\FBid\CA\FD\D7\E9

std::vector confidences;//\BD\E1\B9\FBÿ\B8\F6id\B6\D4Ӧ\D6\C3\D0Ŷ\C8\CA\FD\D7\E9

std::vector boxes;//ÿ\B8\F6id\BE\D8\D0ο\F2

std::vector> picked_proposals; //output0[:,:, 5 + _className.size():net_width]===> for mask

for (int r = 0; r < rows; ++r) { //stride

cv::Mat scores(1, _className.size(), CV_32F, pdata +4);

Point classIdPoint;

double max_class_socre;

minMaxLoc(scores, 0, &max_class_socre, 0, &classIdPoint);

max_class_socre = (float)max_class_socre;

if (max_class_socre >= _classThreshold) {

vector temp_proto(pdata + 4 + _className.size(), pdata + net_width);

picked_proposals.push_back(temp_proto);

//rect [x,y,w,h]

float x = (pdata[0] - params[img_index][2]) / params[img_index][0]; //x

float y = (pdata[1] - params[img_index][3]) / params[img_index][1]; //y

float w = pdata[2] / params[img_index][0]; //w

float h = pdata[3] / params[img_index][1]; //h

int left = MAX(int(x - 0.5 * w + 0.5), 0);

int top = MAX(int(y - 0.5 * h + 0.5), 0);

class_ids.push_back(classIdPoint.x);

confidences.push_back(max_class_socre );

boxes.push_back(Rect(left, top, int(w + 0.5), int(h + 0.5)));

}

pdata += net_width;//\CF\C2һ\D0\D0

}

vector nms_result;

cv::dnn::NMSBoxes(boxes, confidences, _classThreshold, _nmsThreshold, nms_result);

std::vector> temp_mask_proposals;

cv::Rect holeImgRect(0, 0, srcImgs[img_index].cols, srcImgs[img_index].rows);

std::vector temp_output;

for (int i = 0; i < nms_result.size(); ++i) {

int idx = nms_result[i];

OutputSeg result;

result.id = class_ids[idx];

result.confidence = confidences[idx];

result.box = boxes[idx] & holeImgRect;

temp_mask_proposals.push_back(picked_proposals[idx]);

temp_output.push_back(result);

}

MaskParams mask_params;

mask_params.params = params[img_index];

mask_params.srcImgShape = srcImgs[img_index].size();

Mat mask_protos = Mat(mask_protos_shape, CV_32F, output_tensors[1].GetTensorMutableData() + img_index * mask_protos_length);

for (int i = 0; i < temp_mask_proposals.size(); ++i) {

GetMask2(Mat(temp_mask_proposals[i]).t(), mask_protos, temp_output[i], mask_params);

}

//******************** ****************

// \C0ϰ汾\B5ķ\BD\B0\B8\A3\AC\C8\E7\B9\FB\C9\CF\C3\E6\D4ڿ\AA\C6\F4\CE\D2ע\CA͵IJ\BF\B7\D6֮\BA\F3\BB\B9һֱ\B1\A8\B4\ED\A3\AC\BD\A8\D2\E9ʹ\D3\C3\D5\E2\B8\F6\A1\A3

// If the GetMask2() still reports errors , it is recommended to use GetMask().

// Mat mask_proposals;

//for (int i = 0; i < temp_mask_proposals.size(); ++i)

// mask_proposals.push_back(Mat(temp_mask_proposals[i]).t());

//GetMask(mask_proposals, mask_protos, output, mask_params);

//*****************************************************/

output.push_back(temp_output);

}

if (output.size())

return true;

else

return false;

}

main.cpp

#include

#include

#include "yolov8_seg_onnx.h"

#include "yolov8_seg_utils.h"

#include

using namespace std;

using namespace cv;

int main()

{

string model_path = "/home/jason/PycharmProjects/pytorch_learn/yolo/ultralytics-main-yolov8/yolov8n-seg.onnx";

Yolov8SegOnnx test;

if(test.ReadModel(model_path,false,0,false))

cout << "read net ok!\n";

else

cout << "read net err!\n";

vector color;

srand(time(0));

for (int i=0; i<80; i++){

int b = rand() % 256;

int g = rand() % 256;

int r = rand() % 256;

color.push_back(Scalar(b,g,r));

}

struct timeval t1, t2;

double timeuse;

VideoCapture capture(0);

Mat frame;

vector output;

while(1){

capture >> frame;

output.clear();

gettimeofday(&t1, NULL);

bool find = test.OnnxDetect(frame, output);

gettimeofday(&t2, NULL);

if (find)

DrawPred(frame, output, test._className, color);

else

cout << "not find!\n";

timeuse = (t2.tv_sec - t1.tv_sec) + (double)(t2.tv_usec - t1.tv_usec)/1000000; // s

timeuse *= 1000; // ms

string label = "TimeUse: " + to_string(timeuse);

putText(frame, label, Point(30,30), FONT_HERSHEY_SIMPLEX, 0.5, Scalar(0,0,255), 2, 8);

imshow("result", frame);

if(waitKey(1)=='q') break;

}

return 0;

}

CMakelist.txt

find_package(OpenCV 4 REQUIRED)

include_directories(/home/jason/下载/onnxruntime-linux-x64-1.14.1/include)

include_directories(./include )

aux_source_directory(./src SOURCES_LIST)

add_executable(${PROJECT_NAME} ${SOURCES_LIST})

target_link_libraries(${PROJECT_NAME} ${OpenCV_LIBS} "/home/jason/下载/onnxruntime-linux-x64-1.14.1/lib/libonnxruntime.so")