Docker: Container Service Updates and Discovery with Consul

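I. Introduction to Consul

1. What is service registration and discovery

- Service registration and discovery is an essential component of a microservice architecture. Early services ran on a single node. There was no high availability, load capacity was not considered, and services called each other directly through their interfaces. Distributed architectures with multiple nodes came later. The first solution was to put a load balancer in front of the services, which meant the front end had to know the network location of every back-end service and keep all of them in its configuration file.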

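2. Problems without service registration and discovery

- To call back-end services A to N, the caller must be configured with the network locations of all N services, which is tedious.

- Whenever a back-end service's network location changes, every caller's configuration has to be updated.

- Service registration and discovery solves these problems. Back-end services A to N register their current network location with a service discovery module, which records it as a key/value pair. The key is usually the service name and the value is IP:PORT. The discovery module runs periodic health checks, polling the back-end services to see whether they are still reachable. When the front end needs to call A to N, it first asks the discovery module for their network locations and then calls them. The front end no longer needs to record any back-end addresses, so front end and back end are fully decoupled.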
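The registry idea above can be sketched as a toy, in-memory bash registry. The key is the service name and the value is the list of IP:PORT instances. This only illustrates the data model and assumes bash 4+ for associative arrays. The function names are hypothetical. Consul itself keeps this data on its servers and adds health checking on top.

```bash
#!/usr/bin/env bash
# Toy in-memory service registry (bash 4+ for associative arrays).
# Key = service name, value = space-separated list of IP:PORT instances.
declare -A REGISTRY=()

# register <service> <ip:port>: a back end announces where it can be reached
register() {
  local svc="$1" addr="$2"
  REGISTRY["$svc"]="${REGISTRY[$svc]:+${REGISTRY[$svc]} }$addr"
}

# deregister <service> <ip:port>: remove an instance, e.g. after a failed check
deregister() {
  local svc="$1" addr="$2" a
  local -a kept=()
  for a in ${REGISTRY[$svc]:-}; do
    [[ "$a" != "$addr" ]] && kept+=("$a")
  done
  REGISTRY["$svc"]="${kept[*]}"
}

# lookup <service>: what a front end asks instead of hard-coding addresses
lookup() {
  local a
  for a in ${REGISTRY[$1]:-}; do
    printf '%s\n' "$a"
  done
}
```

A caller runs `lookup nginx` instead of hard-coding back-end addresses. When an instance is deregistered after a failed check, callers stop seeing it.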

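3. What is Consul

- Consul is an open-source service management tool developed by HashiCorp in Go. It supports multiple data centers and is distributed and highly available. It provides service discovery and configuration sharing, and it uses the Raft consensus algorithm to keep the servers' data consistent and available. It has the following built in: service registration and discovery, a distributed consensus protocol implementation, health checks, key/value storage, and multi-datacenter support. As a result, it does not depend on other tools such as ZooKeeper. Deployment is simple because it ships as a single executable binary. Every node runs an agent, which operates in one of two modes: server or client. The official recommendation is 3 or 5 server nodes per data center. This keeps data safe and ensures that server-leader elections can proceed correctly.

- In client mode, services registered with the local node are forwarded to the server nodes. The client does not persist this information itself.

- In server mode, the agent behaves like a client, except that it persists all information locally so that the information survives failures.

- The server-leader is the head of all server nodes. Unlike the other servers, it replicates registration data to the other server nodes. It also tracks the health of every node, while the checks themselves run on each node's agent.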
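The 3-or-5 recommendation follows from how Raft works: the cluster only makes progress while a majority (quorum) of voting servers is available. The sketch below computes quorum = floor(n/2) + 1 and the number of server failures tolerated for a given cluster size. The function names are illustrative.

```bash
#!/usr/bin/env bash
# Raft needs a majority (quorum) of voting servers to elect a leader and
# commit writes. For n servers: quorum = floor(n/2) + 1,
# tolerated failures = n - quorum.
raft_quorum() {
  local n="$1"
  echo $(( n / 2 + 1 ))
}

raft_fault_tolerance() {
  local n="$1"
  echo $(( n - (n / 2 + 1) ))
}

# print_quorum_table: servers / quorum / tolerated failures for 1..7 servers
print_quorum_table() {
  local n
  printf '%-8s %-7s %s\n' servers quorum tolerates
  for n in 1 2 3 4 5 6 7; do
    printf '%-8s %-7s %s\n' "$n" "$(raft_quorum "$n")" "$(raft_fault_tolerance "$n")"
  done
}
```

The table shows that 4 servers tolerate no more failures than 3, and 6 no more than 5. Odd cluster sizes are recommended for this reason.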

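4. Key features of Consul

- Service registration and discovery: Consul's DNS and HTTP interfaces make it easy to register and discover services. External services, such as those provided by SaaS vendors, can be registered the same way.

- Health checks: health checks let Consul quickly alert operators to problems in the cluster. Because health checks are integrated with service discovery, traffic is not routed to failed services.

- Key/Value store: a system for storing dynamic configuration. It has a simple HTTP interface that can be used from anywhere.

- Multiple data centers: any number of regions is supported without complex configuration.

- In this setup, Consul is used for service registration. Information about the containers is registered in Consul, and other programs query Consul for the registered services. This is service registration and discovery.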
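The HTTP and DNS interfaces mentioned above can be exercised with curl and dig. This is a minimal sketch that assumes the agent's HTTP API is on 127.0.0.1:8500 and its DNS interface is on port 8600, the defaults used later in this article. `CONSUL_ADDR` and the function names are hypothetical helpers. The `/v1/kv/` endpoint, the `?raw` parameter, and the `<service>.service.consul` DNS name are Consul's own.

```bash
#!/usr/bin/env bash
CONSUL_ADDR="${CONSUL_ADDR:-127.0.0.1:8500}"   # hypothetical variable name

# kv_url <key>: URL of a key under Consul's /v1/kv/ endpoint
kv_url() {
  local key="${1#/}"   # accept keys written with or without a leading slash
  printf 'http://%s/v1/kv/%s\n' "$CONSUL_ADDR" "$key"
}

# kv_put <key> <value>: store a value (Consul replies "true" on success)
kv_put() {
  curl -s -X PUT --data "$2" "$(kv_url "$1")"
}

# kv_get <key>: read a value; ?raw returns the bare value instead of JSON
kv_get() {
  curl -s "$(kv_url "$1")?raw"
}

# dns_lookup <service>: SRV records (priority weight port target) from the
# DNS interface on port 8600
dns_lookup() {
  dig @"${CONSUL_ADDR%%:*}" -p 8600 "$1.service.consul" SRV +short
}
```

For example, `kv_put app/config/port 8000` followed by `kv_get app/config/port` should print `8000`. After Registrator has run, `dns_lookup nginx` lists the registered nginx instances.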

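II. Deploying Consul

1. Preparation

Consul server 192.168.247.132: runs the Consul service, nginx, and the consul-template daemon

Registrator server 192.168.247.131: runs the registrator container and the nginx containers

2. Set up the Consul service (on the Consul server)

(1) Prepare the Consul service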

[root@node02 ~]# systemctl stop firewalld.service

[root@node02 ~]# setenforce 0

setenforce: SELinux is disabled

[root@node02 ~]# mkdir /opt/consul

[root@node02 ~]# cd /opt/consul

[root@node02 consul]# unzip consul_0.9.2_linux_amd64.zip

Archive: consul_0.9.2_linux_amd64.zip

inflating: consul

[root@node02 consul]# mv consul /usr/local/bin/

(2) Start the agent in server mode in the background

[root@node02 consul]# consul agent \

> -server \

> -bootstrap \

> -ui \

> -data-dir=/var/lib/consul-data \

> -bind=192.168.247.132 \

> -client=0.0.0.0 \

> -node=consul-server01 &> /var/log/consul.log &

[1] 1939

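| Option | Purpose |
|---|---|
| -server | Start as a server; the default is client mode |
| -bootstrap | Controls whether a server is in bootstrap mode. Only one server per data center may be in bootstrap mode; a server in bootstrap mode can elect itself server-leader |
| -bootstrap-expect=2 | Number of servers to wait for before the cluster bootstraps and elects a leader; cannot be combined with -bootstrap (not used in the command above) |
| -ui | Enables the built-in web UI, reachable at an address such as http://localhost:8500/ui |
| -data-dir | Directory where data is stored |
| -bind | Address used for communication inside the cluster; every node in the cluster must be able to reach it. Default: 0.0.0.0 |
| -client | Address Consul binds its client interfaces to; this address serves HTTP, DNS, RPC, and so on. Default: 127.0.0.1 |
| -node | Name of the node in the cluster; must be unique within the cluster. Default: the host name |
| -datacenter | Name of the data center. Default: dc1 |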
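The flags in the table can be assembled into an argument array before the agent starts. This keeps each flag a single word and makes the choice between -bootstrap and -bootstrap-expect explicit. In this sketch, the function name is hypothetical, and the data directory and client address mirror the command above.

```bash
#!/usr/bin/env bash
# consul_server_args <bind-ip> <node-name> [expected-servers]
# Prints one "consul" argument per line; -bootstrap for a single server,
# -bootstrap-expect=N otherwise (the two flags cannot be combined).
consul_server_args() {
  local bind_ip="$1" node_name="$2" expect="${3:-1}"
  local args=(agent -server -ui
              -data-dir=/var/lib/consul-data
              -bind="$bind_ip"
              -client=0.0.0.0
              -node="$node_name")
  if (( expect == 1 )); then
    args+=(-bootstrap)                   # single server elects itself leader
  else
    args+=(-bootstrap-expect="$expect")  # wait for N servers, then elect
  fi
  printf '%s\n' "${args[@]}"
}

# Usage (lab values):
#   mapfile -t args < <(consul_server_args 192.168.247.132 consul-server01)
#   consul "${args[@]}" &> /var/log/consul.log &
```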

(3) Check the listening ports

[root@node02 consul]# netstat -natp | grep consul

tcp 0 0 192.168.247.132:8300 0.0.0.0:* LISTEN 1939/consul

tcp 0 0 192.168.247.132:8301 0.0.0.0:* LISTEN 1939/consul

tcp 0 0 192.168.247.132:8302 0.0.0.0:* LISTEN 1939/consul

tcp 0 0 192.168.247.132:52015 192.168.247.132:8300 ESTABLISHED 1939/consul

tcp 0 0 192.168.247.132:8300 192.168.247.132:52015 ESTABLISHED 1939/consul

tcp6 0 0 :::8500 :::* LISTEN 1939/consul

tcp6 0 0 :::8600 :::* LISTEN 1939/consul

- Ports Consul listens on by default:

| Port | Purpose |
|---|---|
| 8300 | Server RPC: replication and leader forwarding |
| 8301 | LAN gossip |
| 8302 | WAN gossip |
| 8500 | HTTP API and web UI |
| 8600 | DNS interface for querying node and service information |
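These ports can also be checked from a shell without netstat: bash's /dev/tcp pseudo-device attempts a TCP connection to each one. This sketch assumes bash and coreutils `timeout` are available. Probe the -bind address (192.168.247.132 here), because ports 8300 to 8302 are not bound on 127.0.0.1. The function names are hypothetical.

```bash
#!/usr/bin/env bash
# Map Consul's default ports to their role and probe them over TCP using
# bash's /dev/tcp pseudo-device (no netstat, nc, or telnet needed).
# Only TCP is tested; 8301/8302 (gossip) and 8600 (DNS) also use UDP.

port_role() {
  case "$1" in
    8300) echo "server RPC (replication, leader forwarding)" ;;
    8301) echo "LAN gossip" ;;
    8302) echo "WAN gossip" ;;
    8500) echo "HTTP API and web UI" ;;
    8600) echo "DNS interface" ;;
    *)    echo "unknown" ;;
  esac
}

# tcp_open <host> <port>: succeeds if a TCP connection opens within 1 second
tcp_open() {
  timeout 1 bash -c "exec 3<>/dev/tcp/$1/$2" 2>/dev/null
}

# check_consul_ports <host>: one "port state role" line per default port
check_consul_ports() {
  local host="$1" p state
  for p in 8300 8301 8302 8500 8600; do
    if tcp_open "$host" "$p"; then state=open; else state=closed; fi
    printf '%-5s %-7s %s\n' "$p" "$state" "$(port_role "$p")"
  done
}

# Usage: check_consul_ports 192.168.247.132
```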

3. View cluster information

(1) Check member status

[root@node02 consul]# consul members

Node Address Status Type Build Protocol DC

consul-server01 192.168.247.132:8301 alive server 0.9.2 2 dc1

(2) Check the cluster (Raft peer) status

[root@node02 consul]# consul operator raft list-peers

Node ID Address State Voter RaftProtocol

consul-server01 192.168.247.132:8300 192.168.247.132:8300 leader true 2

[root@node02 consul]# consul info | grep leader

leader = true

leader_addr = 192.168.247.132:8300

4. Get cluster information through the HTTP API

curl 127.0.0.1:8500/v1/status/peers #server members of the cluster

curl 127.0.0.1:8500/v1/status/leader #cluster server-leader

curl 127.0.0.1:8500/v1/catalog/services #all registered services

curl 127.0.0.1:8500/v1/catalog/service/nginx #details of the nginx service

curl 127.0.0.1:8500/v1/catalog/nodes #details of the cluster nodes
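The status endpoints return either a bare JSON string (`/v1/status/leader`) or an array of strings (`/v1/status/peers`). This sketch uses grep and tr to turn those responses into plain lines, so they can be used in scripts without jq. It only suits these simple responses. `CONSUL_ADDR` and the function names are hypothetical.

```bash
#!/usr/bin/env bash
CONSUL_ADDR="${CONSUL_ADDR:-127.0.0.1:8500}"   # hypothetical variable name

# json_strings: print every double-quoted token from stdin on its own line,
# without the quotes (enough for the status endpoints, not a JSON parser)
json_strings() {
  grep -o '"[^"]*"' | tr -d '"'
}

consul_leader() { curl -s "http://$CONSUL_ADDR/v1/status/leader" | json_strings; }
consul_peers()  { curl -s "http://$CONSUL_ADDR/v1/status/peers"  | json_strings; }

# Usage:
#   consul_leader   # e.g. 192.168.247.132:8300
#   consul_peers    # one server address per line
```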

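III. Registrator server

1. Automatically add container services to the Nginx cluster

(1) Install Gliderlabs/Registrator

- Gliderlabs/Registrator watches container state. It automatically registers the services of Docker containers with a service registry and deregisters them when the containers stop. It currently supports Consul, Etcd, and SkyDNS2.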

[root@lion ~]# docker run -d \

> --name=registrator \

> --net=host \

> -v /var/run/docker.sock:/tmp/docker.sock \

> --restart=always \

> gliderlabs/registrator:latest \

> --ip=192.168.247.131 \

> consul://192.168.247.132:8500

560c5ce43950da1e8fb86c1059d355042b33c176b69a7a2631e2013d23910811

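| Option | Purpose |
|---|---|
| --net=host | Runs the container in host network mode |
| -v /var/run/docker.sock:/tmp/docker.sock | Mounts the Unix domain socket that the host's Docker daemon listens on by default into the container, so Registrator can watch container events |
| --restart=always | Always restarts the container when it exits |
| --ip | Because the container uses host networking, the IP is set explicitly to the host's own IP; this is the address registered for the services |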
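Registrator names services after the container image. The sketch below approximates its documented defaults and is not Registrator's own code. The service name is the image name without the registry path or tag, with `-<port>` appended when the container exposes several ports. The service ID is `<host>:<container-name>:<exposed-port>`. `SERVICE_NAME` and the other `SERVICE_*` environment variables override these defaults. The function names are hypothetical.

```bash
#!/usr/bin/env bash
# registrator_service_name <image> <exposed-port> [number-of-exposed-ports]
registrator_service_name() {
  local image="$1" port="$2" port_count="${3:-1}"
  local base="${image##*/}"     # drop registry/namespace path
  base="${base%%@*}"            # drop @sha256:... digest
  base="${base%%:*}"            # drop :tag
  if (( port_count > 1 )); then
    echo "$base-$port"          # several ports: one service per port
  else
    echo "$base"
  fi
}

# registrator_service_id <docker-host-hostname> <container-name> <exposed-port>
registrator_service_id() {
  local host="$1" container="$2" port="$3"
  echo "$host:$container:$port"
}
```

For test-01 in the next step (image nginx, -p 83:80), this gives the service name `nginx` and an ID ending in `:test-01:80`. The port stored in Consul is the published host port, 83.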

2. Test that service discovery works

[root@lion ~]# docker run -itd -p 83:80 --name test-01 -h test01 nginx

2295f309fff88f72b5c6b19b2e688739ae8e7ab60ad97fb8a332138699e17861

[root@lion ~]# docker run -itd -p 84:80 --name test-02 -h test02 nginx

55757a713bbdbe575ddf40b93f72e87a1b5bc4eea1d2909d8d980f669a4d323a

[root@lion ~]# docker run -itd -p 88:80 --name test-03 -h test03 httpd

Unable to find image 'httpd:latest' locally

latest: Pulling from library/httpd

a2abf6c4d29d: Already exists

dcc4698797c8: Pull complete

41c22baa66ec: Pull complete

67283bbdd4a0: Pull complete

d982c879c57e: Pull complete

Digest: sha256:0954cc1af252d824860b2c5dc0a10720af2b7a3d3435581ca788dff8480c7b32

Status: Downloaded newer image for httpd:latest

641ed2409f2e1168084894dc4dc5a1df8b0f6e37c986fb2abc24683a3d846a92

[root@lion ~]# docker run -itd -p 89:80 --name test-04 -h test04 httpd

675e7af3911689326e20b4d22e5cfd24802f509b8a9d9fab287e21c9a6aa27ce

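Note: -h sets the container's hostname.

If the following error appears, run systemctl restart docker. The error typically means that Docker's iptables chains were removed, for example by a firewall restart. Restarting the Docker daemon recreates them: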

docker: Error response from daemon: driver failed programming external connectivity on endpoint test-02 (e5164ff8e230c5f3e3c52457132e5945ce8e106b18b586538853adeed47c29ce): (iptables failed: iptables --wait -t nat -A DOCKER -p tcp -d 0/0 --dport 84 -j DNAT --to-destination 172.17.0.2:80 ! -i docker0: iptables: No chain/target/match by that name.

(exit status 1)).

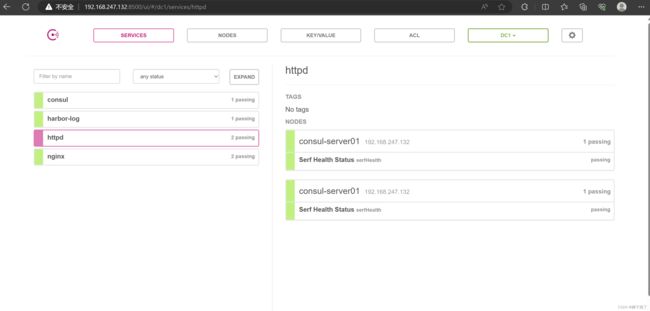
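The four `docker run` commands in this step follow one pattern, so a loop can generate them. In this sketch, `DRY_RUN` is a hypothetical switch that prints the commands instead of running them. The name/port/image triples are the ones used above.

```bash
#!/usr/bin/env bash
# start_test_containers: run the four test containers of this step.
# With DRY_RUN=1 the docker commands are only printed.
start_test_containers() {
  local specs=("test-01 83 nginx" "test-02 84 nginx"
               "test-03 88 httpd" "test-04 89 httpd")
  local spec name port image
  for spec in "${specs[@]}"; do
    read -r name port image <<< "$spec"
    # hostname = container name without the dash (test-01 -> test01)
    local cmd=(docker run -itd -p "$port:80" --name "$name" -h "${name//-/}" "$image")
    if [[ "${DRY_RUN:-0}" == 1 ]]; then
      echo "${cmd[*]}"
    else
      "${cmd[@]}"
    fi
  done
}

# Usage: DRY_RUN=1 start_test_containers   # review first, then run without DRY_RUN
```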

3. Verify that the httpd and nginx services are registered in Consul

- In a browser, open http://192.168.247.132:8500. On the web page, click NODES, then click consul-server01. Five services are listed.

4. Query the catalog with curl on the Consul server

[root@node02 consul]# curl 127.0.0.1:8500/v1/catalog/services

{"consul":[],"harbor-log":[],"httpd":[],"nginx":[]}
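`/v1/catalog/services` only lists service names. To see which instances Registrator registered, use the per-service endpoint `/v1/catalog/service/<name>`, which returns one JSON object per instance, including `ServicePort`. This grep-based sketch assumes that simple response shape; jq is the robust choice. `CONSUL_ADDR` and the function names are hypothetical.

```bash
#!/usr/bin/env bash
CONSUL_ADDR="${CONSUL_ADDR:-127.0.0.1:8500}"   # hypothetical variable name

# service_ports: read catalog JSON on stdin, print one ServicePort per line
service_ports() {
  grep -o '"ServicePort": *[0-9][0-9]*' | grep -o '[0-9][0-9]*$'
}

# catalog_ports <service>: registered ports of every instance of a service
catalog_ports() {
  curl -s "http://$CONSUL_ADDR/v1/catalog/service/$1" | service_ports
}

# Usage: catalog_ports nginx
```

After test-01 and test-02 are registered, `catalog_ports nginx` should list 83 and 84.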

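IV. consul-template

1. Introduction to consul-template

- Consul-Template automatically rewrites configuration files based on data in Consul. It runs as a daemon that queries the Consul cluster in real time and renders any number of templates on the file system into configuration files. After a file has been updated, it can optionally run a shell command, for example to reload Nginx.

- Consul-Template can query Consul's service catalog, keys, key/value pairs, and more. This abstraction, together with its template query language, makes Consul-Template particularly well suited to generating configuration files dynamically, for example for Apache/Nginx proxy balancers or HAProxy backends.

2. Prepare the nginx template file

(1) Define a simple nginx upstream template (on the Consul server)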

vim /opt/consul/nginx.ctmpl

upstream http_backend {

{{range service "nginx"}}

server {{.Address}}:{{.Port}};

{{end}}

}

(2) In the same file, define a server that listens on port 8000 and reverse-proxies to the upstream

server {

listen 8000;

server_name localhost 192.168.247.132;

access_log /var/log/nginx/group.com-access.log; #custom access log path

index index.html index.php;

location / {

proxy_set_header HOST $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header Client-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_pass http://http_backend;

}

}
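The output of the `{{range service "nginx"}}` loop can be mimicked in bash: one `server ADDRESS:PORT;` line per instance inside the upstream block. This only illustrates the rendering step, assuming the instance list is passed as arguments. consul-template itself gets the list from Consul and re-renders the file when the list changes. The function name is hypothetical.

```bash
#!/usr/bin/env bash
# render_upstream <name> <addr:port>...: print an nginx upstream block with
# one "server" line per instance, like the template above
render_upstream() {
  local name="$1"; shift
  printf 'upstream %s {\n' "$name"
  local addr
  for addr in "$@"; do
    printf '    server %s;\n' "$addr"
  done
  printf '}\n'
}

# Usage: render_upstream http_backend 192.168.247.131:83 192.168.247.131:84
```

If the instance list is empty, the block has no `server` lines. nginx rejects an upstream with no servers, so a reload fails once every nginx container is gone.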

3. Build and install nginx from source

yum -y install pcre-devel zlib-devel gcc gcc-c++ make

useradd -M -s /sbin/nologin nginx

tar zxvf nginx-1.12.0.tar.gz -C /opt/

cd /opt/nginx-1.12.0/

./configure --prefix=/usr/local/nginx --user=nginx --group=nginx && make && make install

ln -s /usr/local/nginx/sbin/nginx /usr/local/sbin/

4. Configure nginx

(1) Edit the configuration file

vim /usr/local/nginx/conf/nginx.conf

......

http {

include mime.types;

include vhost/*.conf; #include the virtual host directory

default_type application/octet-stream;

......

(2) Create the virtual host directory

[root@node02 nginx-1.24.0]# mkdir /usr/local/nginx/conf/vhost

(3) Create the log directory

[root@node02 nginx-1.24.0]# mkdir /var/log/nginx
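Steps (1) to (3) can be made idempotent, so that re-running them does not add a second include line. This sketch assumes GNU sed, which supports -i and \n in the replacement. The function name is hypothetical. The directories are parameters, so you can try the function on a scratch copy of nginx.conf first.

```bash
#!/usr/bin/env bash
# prepare_nginx_vhosts <conf-dir> <log-dir>
# Adds "include vhost/*.conf;" after "include mime.types;" only if missing,
# then creates the vhost and log directories.
prepare_nginx_vhosts() {
  local conf_dir="$1" log_dir="$2"
  local conf="$conf_dir/nginx.conf"
  if ! grep -q 'include[[:space:]]*vhost/\*\.conf;' "$conf"; then
    sed -i 's|include[[:space:]]*mime\.types;|&\n    include vhost/*.conf;|' "$conf"
  fi
  mkdir -p "$conf_dir/vhost" "$log_dir"
}

# Usage: prepare_nginx_vhosts /usr/local/nginx/conf /var/log/nginx
```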

(4) Start nginx

[root@node02 nginx-1.24.0]# nginx

[root@node02 nginx-1.24.0]# systemctl status nginx.service

● nginx.service - nginx

Loaded: loaded (/usr/lib/systemd/system/nginx.service; enabled; vendor preset: disabled)

Active: active (running) since Wed 2023-07-26 17:56:19 CST; 50min ago

Process: 1170 ExecStart=/usr/local/nginx/sbin/nginx (code=exited, status=0/SUCCESS)

Main PID: 1183 (nginx)

CGroup: /system.slice/nginx.service

├─1183 nginx: master process /usr/local/nginx/sbin/nginx

└─1189 nginx: worker process

Jul 26 17:56:19 node02 systemd[1]: Starting nginx...

Jul 26 17:56:19 node02 systemd[1]: Started nginx.

5. Install and start consul-template

(1) Install consul-template

[root@node02 opt]# unzip consul-template_0.19.3_linux_amd64.zip

Archive: consul-template_0.19.3_linux_amd64.zip

inflating: consul-template

[root@node02 opt]# cd /opt/

[root@node02 opt]# mv consul-template /usr/local/bin/

(2) Start consul-template in the foreground. Do not press Ctrl+C afterwards, because that stops the consul-template process.

[root@node02 opt]# consul-template --consul-addr 192.168.247.132:8500 \

> --template "/opt/consul/nginx.ctmpl:/usr/local/nginx/conf/vhost/group.conf:/usr/local/nginx/sbin/nginx -s reload" \

> --log-level=info

2023/07/26 11:26:49.018918 [INFO] consul-template v0.19.3 (ebf2d3d)
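The -template value is a `source:destination:command` triple. Before leaving the daemon running, you can preview the rendered output once. `-dry` prints the rendered template to stdout instead of writing group.conf, and `-once` exits after the first pass instead of running as a daemon. `CONSUL_ADDR`, `NGINX_SPEC`, and the function names in this sketch are hypothetical.

```bash
#!/usr/bin/env bash
CONSUL_ADDR="${CONSUL_ADDR:-192.168.247.132:8500}"   # hypothetical variable name

# template_spec <source> <destination> <command>: "source:destination:command"
template_spec() {
  printf '%s:%s:%s\n' "$1" "$2" "$3"
}

NGINX_SPEC="$(template_spec /opt/consul/nginx.ctmpl \
  /usr/local/nginx/conf/vhost/group.conf \
  "/usr/local/nginx/sbin/nginx -s reload")"

# preview_template: render once to stdout; group.conf is left untouched
preview_template() {
  consul-template -consul-addr "$CONSUL_ADDR" -template "$NGINX_SPEC" -dry -once
}

# Usage: preview_template
```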

(3) Open another terminal and check the generated configuration file

[root@node02 sbin]# cd /usr/local/nginx/conf/vhost

[root@node02 vhost]# ls

group.conf

[root@node02 vhost]# cat group.conf

upstream http_backend {

server 192.168.247.131:83;

server 192.168.247.131:84;

}

server {

listen 8000;

server_name localhost 192.168.247.132;

access_log /var/log/nginx/group.com-access.log;

index index.html index.php;

location / {

proxy_set_header HOST $host;

proxy_set_header X-Real-IP $remote_addr;

proxy_set_header Client-IP $remote_addr;

proxy_set_header X-Forwarded-For $proxy_add_x_forwarded_for;

proxy_pass http://http_backend;

}

}
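A quick way to check the rendered file is to count its upstream `server` entries and compare the number with the running nginx containers (two at this point). You can also validate the configuration before a manual reload. In this sketch, the grep pattern only targets this template's output and the function names are hypothetical. `nginx -t` and `nginx -s reload` are nginx's own options.

```bash
#!/usr/bin/env bash
# count_upstream_servers <file>: number of "server IP:PORT;" lines, i.e. the
# upstream entries rendered by consul-template ("server_name" and "server {"
# lines do not match the pattern)
count_upstream_servers() {
  grep -cE '^[[:space:]]*server[[:space:]]+[0-9.]+:[0-9]+;' "$1"
}

# check_and_reload [nginx-binary]: test the whole configuration first and
# reload only if the test passes, so a bad render is reported before any reload
check_and_reload() {
  local nginx_bin="${1:-/usr/local/nginx/sbin/nginx}"
  "$nginx_bin" -t && "$nginx_bin" -s reload
}

# Usage:
#   count_upstream_servers /usr/local/nginx/conf/vhost/group.conf
#   check_and_reload
```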

6. Access the nginx site generated from the template

docker ps -a

CONTAINER ID IMAGE COMMAND CREATED STATUS PORTS NAMES

9f0dc08956f4 httpd "httpd-foreground" 1 hours ago Up 1 hours 0.0.0.0:89->80/tcp test-04

a0bde07299da httpd "httpd-foreground" 1 hours ago Up 1 hours 0.0.0.0:88->80/tcp test-03

4f74d2c38844 nginx "/docker-entrypoint.…" 1 hours ago Up 1 hours 0.0.0.0:84->80/tcp test-02

b73106db285b nginx "/docker-entrypoint.…" 1 hours ago Up 1 hours 0.0.0.0:83->80/tcp test-01

409331c16824 gliderlabs/registrator:latest "/bin/registrator -i…" 1 hours ago Up 1 hours registrator

docker exec -it test-01 bash

echo "this is test1 web" > /usr/share/nginx/html/index.html

exit

docker exec -it test-02 bash

echo "this is test2 web" > /usr/share/nginx/html/index.html

exit

- Open http://192.168.247.132:8000/ in a browser and keep refreshing. The responses alternate between the two nginx containers.
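Instead of refreshing the browser, you can send a loop of curl requests to see how nginx distributes traffic. With nginx's default round-robin balancing, the two pages should appear about equally often. In this sketch, the default URL and request count are assumptions and the function names are hypothetical.

```bash
#!/usr/bin/env bash
# tally: count identical lines from stdin, most frequent first
tally() {
  sort | uniq -c | sort -rn
}

# probe_lb [url] [n]: send n requests and tally the response bodies
probe_lb() {
  local url="${1:-http://192.168.247.132:8000/}" n="${2:-10}" i
  for (( i = 0; i < n; i++ )); do
    curl -s "$url"
  done | tally
}

# Usage: probe_lb http://192.168.247.132:8000/ 10
```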

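7. Add an nginx container node

(1) Add another nginx container to test service discovery and configuration updates

docker run -itd -p 85:80 --name test-05 -h test05 nginx

- Watch the consul-template process. It regenerates /usr/local/nginx/conf/vhost/group.conf from the template and reloads nginx.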

(2) Check the contents of /usr/local/nginx/conf/vhost/group.conf

cat /usr/local/nginx/conf/vhost/group.conf

upstream http_backend {

server 192.168.247.131:83;

server 192.168.247.131:84;

server 192.168.247.131:85;

}

(3) Check the logs of the three nginx containers. Requests are distributed round-robin across the container nodes.

docker logs -f test-01

docker logs -f test-02

docker logs -f test-05

V. Multi-node Consul

1. Add a server that already has Docker installed, 192.168.80.12/24, to the existing cluster

consul agent \

-server \

-ui \

-data-dir=/var/lib/consul-data \

-bind=192.168.80.12 \

-client=0.0.0.0 \

-node=consul-server02 \

-enable-script-checks=true \

-datacenter=dc1 \

-join 192.168.247.132 &> /var/log/consul.log &

-enable-script-checks=true: enables health checks that run scripts

-datacenter: name of the data center

-join: join an existing cluster (here through 192.168.247.132)

consul members

Node Address Status Type Build Protocol DC

consul-server01 192.168.247.132:8301 alive server 0.9.2 2 dc1

consul-server02 192.168.80.12:8301 alive server 0.9.2 2 dc1

consul operator raft list-peers

Node ID Address State Voter RaftProtocol

consul-server01 192.168.247.132:8300 192.168.247.132:8300 leader true 2

consul-server02 192.168.80.12:8300 192.168.80.12:8300 follower true 2
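You can check the peer table above in scripts by counting voters and picking out the leader. This sketch uses awk to parse the text output of `consul operator raft list-peers` and assumes its column order (Node, ID, Address, State, Voter, RaftProtocol). The function names are hypothetical. Two voters still require a quorum of two, so this cluster tolerates no server failure until a third server joins.

```bash
#!/usr/bin/env bash
# raft_voters: number of peers whose Voter column is "true" (header skipped)
raft_voters() {
  awk 'NR > 1 && $5 == "true" { n++ } END { print n + 0 }'
}

# raft_leader: node name of the peer whose State column is "leader"
raft_leader() {
  awk 'NR > 1 && $4 == "leader" { print $1 }'
}

# Usage:
#   consul operator raft list-peers | raft_voters
#   consul operator raft list-peers | raft_leader
```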