Sqoop data import: understanding "--query" combined with "$CONDITIONS"

Contents

- Running tests

- Understanding the mechanism

Introduction

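When importing data, Sqoop can take a SQL statement through --query to specify the query condition. That SQL must also contain $CONDITIONS in its WHERE clause. Sqoop replaces this placeholder with a different range condition for each map task, which is what lets the MapReduce import run in parallel.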


Running tests

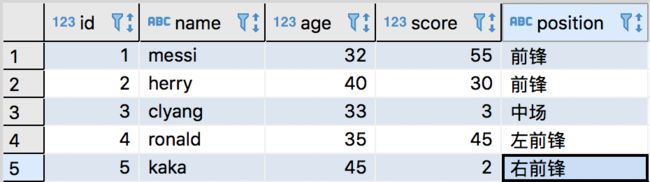

All tests are based on Sqoop 1 (1.4.6-cdh5.14.2, as the logs show). The MySQL data is prepared as follows.

(1) Whenever --query with a SQL statement is used, $CONDITIONS must be added, even if there is only one map task.


# only one map task

[hadoop@node01 /kkb/bin]$ sqoop import --connect jdbc:mysql://node01:3306/sqooptest --username root --password 123456 --target-dir /sqoop/conditiontest --delete-target-dir --query 'select * from Person where score>50' --m 1

Warning: /kkb/install/sqoop-1.4.6-cdh5.14.2/../hcatalog does not exist! HCatalog jobs will fail.

Please set $HCAT_HOME to the root of your HCatalog installation.

Warning: /kkb/install/sqoop-1.4.6-cdh5.14.2/../accumulo does not exist! Accumulo imports will fail.

Please set $ACCUMULO_HOME to the root of your Accumulo installation.

Warning: /kkb/install/sqoop-1.4.6-cdh5.14.2/../zookeeper does not exist! Accumulo imports will fail.

Please set $ZOOKEEPER_HOME to the root of your Zookeeper installation.

20/02/07 10:43:20 INFO sqoop.Sqoop: Running Sqoop version: 1.4.6-cdh5.14.2

20/02/07 10:43:20 WARN tool.BaseSqoopTool: Setting your password on the command-line is insecure. Consider using -P instead.

20/02/07 10:43:20 INFO manager.MySQLManager: Preparing to use a MySQL streaming resultset.

20/02/07 10:43:20 INFO tool.CodeGenTool: Beginning code generation

# the error says $CONDITIONS must be added

20/02/07 10:43:20 ERROR tool.ImportTool: Import failed: java.io.IOException: Query [select * from Person where score>50] must contain '$CONDITIONS' in WHERE clause.

at org.apache.sqoop.manager.ConnManager.getColumnTypes(ConnManager.java:332)

at org.apache.sqoop.orm.ClassWriter.getColumnTypes(ClassWriter.java:1858)

at org.apache.sqoop.orm.ClassWriter.generate(ClassWriter.java:1657)

at org.apache.sqoop.tool.CodeGenTool.generateORM(CodeGenTool.java:106)

at org.apache.sqoop.tool.ImportTool.importTable(ImportTool.java:494)

at org.apache.sqoop.tool.ImportTool.run(ImportTool.java:621)

at org.apache.sqoop.Sqoop.run(Sqoop.java:147)

at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:70)

at org.apache.sqoop.Sqoop.runSqoop(Sqoop.java:183)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:234)

at org.apache.sqoop.Sqoop.runTool(Sqoop.java:243)

at org.apache.sqoop.Sqoop.main(Sqoop.java:252)

You have new mail in /var/spool/mail/root
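The stack trace shows that this error is raised during code generation (CodeGenTool → ClassWriter → ConnManager.getColumnTypes), before any map task exists. To learn the column types, Sqoop runs the query once with a condition that matches no rows. That is the `(1 = 0)` visible in the logs of the next two tests. The Python sketch below only mimics this step. The function name `metadata_query` is hypothetical, and this is not Sqoop's actual code.

```python
# Hypothetical sketch of the column-metadata step for a free-form --query import.
# Not Sqoop's real code; it only mirrors the behaviour visible in the logs.

SUBSTITUTE_TOKEN = "$CONDITIONS"


def metadata_query(free_form_query: str) -> str:
    """Return the query used to read column types, or raise if the token is missing."""
    if SUBSTITUTE_TOKEN not in free_form_query:
        # Same wording as the ImportTool error in test (1).
        raise IOError(
            f"Query [{free_form_query}] must contain "
            f"'{SUBSTITUTE_TOKEN}' in WHERE clause."
        )
    # (1 = 0) is always false: the database reports the columns but returns no rows.
    return free_form_query.replace(SUBSTITUTE_TOKEN, "(1 = 0)")


if __name__ == "__main__":
    print(metadata_query("select * from Person where score>50 and $CONDITIONS"))
    # prints: select * from Person where score>50 and (1 = 0)
    try:
        metadata_query("select * from Person where score>50")
    except IOError as err:
        print(err)
        # prints: Query [select * from Person where score>50] must contain '$CONDITIONS' in WHERE clause.
```

The number of map tasks plays no part in this check, which is why -m 1 does not avoid the error.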


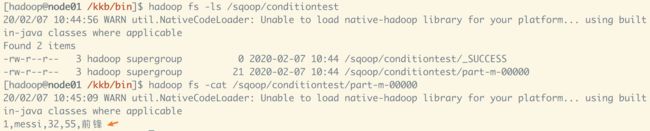

(2) With only one map task, --split-by can be omitted. That single task processes the whole result set, so nothing needs to be split.


[hadoop@node01 /kkb/bin]$ sqoop import --connect jdbc:mysql://node01:3306/sqooptest --username root --password 123456 --target-dir /sqoop/conditiontest --delete-target-dir --query 'select * from Person where score>50 and $CONDITIONS' --m 1

Warning: /kkb/install/sqoop-1.4.6-cdh5.14.2/../hcatalog does not exist! HCatalog jobs will fail.

Please set $HCAT_HOME to the root of your HCatalog installation.

Warning: /kkb/install/sqoop-1.4.6-cdh5.14.2/../accumulo does not exist! Accumulo imports will fail.

Please set $ACCUMULO_HOME to the root of your Accumulo installation.

Warning: /kkb/install/sqoop-1.4.6-cdh5.14.2/../zookeeper does not exist! Accumulo imports will fail.

Please set $ZOOKEEPER_HOME to the root of your Zookeeper installation.

20/02/07 10:44:08 INFO sqoop.Sqoop: Running Sqoop version: 1.4.6-cdh5.14.2

20/02/07 10:44:08 WARN tool.BaseSqoopTool: Setting your password on the command-line is insecure. Consider using -P instead.

20/02/07 10:44:08 INFO manager.MySQLManager: Preparing to use a MySQL streaming resultset.

20/02/07 10:44:08 INFO tool.CodeGenTool: Beginning code generation

Fri Feb 07 10:44:08 CST 2020 WARN: Establishing SSL connection without server's identity verification is not recommended. According to MySQL 5.5.45+, 5.6.26+ and 5.7.6+ requirements SSL connection must be established by default if explicit option isn't set. For compliance with existing applications not using SSL the verifyServerCertificate property is set to 'false'. You need either to explicitly disable SSL by setting useSSL=false, or set useSSL=true and provide truststore for server certificate verification.

20/02/07 10:44:09 INFO manager.SqlManager: Executing SQL statement: select * from Person where score>50 and (1 = 0)

20/02/07 10:44:09 INFO manager.SqlManager: Executing SQL statement: select * from Person where score>50 and (1 = 0)

20/02/07 10:44:09 INFO manager.SqlManager: Executing SQL statement: select * from Person where score>50 and (1 = 0)

20/02/07 10:44:09 INFO orm.CompilationManager: HADOOP_MAPRED_HOME is /kkb/install/hadoop-2.6.0-cdh5.14.2

Note: /tmp/sqoop-hadoop/compile/ed46f1d7a2aeb419d32799b64aabdc82/QueryResult.java uses or overrides a deprecated API.

Note: Recompile with -Xlint:deprecation for details.

20/02/07 10:44:13 INFO orm.CompilationManager: Writing jar file: /tmp/sqoop-hadoop/compile/ed46f1d7a2aeb419d32799b64aabdc82/QueryResult.jar

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/kkb/install/hadoop-2.6.0-cdh5.14.2/share/hadoop/common/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/kkb/install/hbase-1.2.0-cdh5.14.2/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

20/02/07 10:44:13 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

20/02/07 10:44:14 INFO tool.ImportTool: Destination directory /sqoop/conditiontest is not present, hence not deleting.

20/02/07 10:44:14 INFO mapreduce.ImportJobBase: Beginning query import.

20/02/07 10:44:14 INFO Configuration.deprecation: mapred.jar is deprecated. Instead, use mapreduce.job.jar

20/02/07 10:44:15 INFO Configuration.deprecation: mapred.map.tasks is deprecated. Instead, use mapreduce.job.maps

20/02/07 10:44:15 INFO client.RMProxy: Connecting to ResourceManager at node01/192.168.200.100:8032

Fri Feb 07 10:44:21 CST 2020 WARN: Establishing SSL connection without server's identity verification is not recommended. According to MySQL 5.5.45+, 5.6.26+ and 5.7.6+ requirements SSL connection must be established by default if explicit option isn't set. For compliance with existing applications not using SSL the verifyServerCertificate property is set to 'false'. You need either to explicitly disable SSL by setting useSSL=false, or set useSSL=true and provide truststore for server certificate verification.

20/02/07 10:44:21 INFO db.DBInputFormat: Using read commited transaction isolation

20/02/07 10:44:21 INFO mapreduce.JobSubmitter: number of splits:1

20/02/07 10:44:21 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1581042804134_0001

20/02/07 10:44:22 INFO impl.YarnClientImpl: Submitted application application_1581042804134_0001

20/02/07 10:44:22 INFO mapreduce.Job: The url to track the job: http://node01:8088/proxy/application_1581042804134_0001/

20/02/07 10:44:22 INFO mapreduce.Job: Running job: job_1581042804134_0001

20/02/07 10:44:33 INFO mapreduce.Job: Job job_1581042804134_0001 running in uber mode : true

20/02/07 10:44:33 INFO mapreduce.Job: map 0% reduce 0%

20/02/07 10:44:36 INFO mapreduce.Job: map 100% reduce 0%

20/02/07 10:44:36 INFO mapreduce.Job: Job job_1581042804134_0001 completed successfully

20/02/07 10:44:36 INFO mapreduce.Job: Counters: 32

File System Counters

FILE: Number of bytes read=0

FILE: Number of bytes written=0

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=100

HDFS: Number of bytes written=181519

HDFS: Number of read operations=128

HDFS: Number of large read operations=0

HDFS: Number of write operations=6

Job Counters

Launched map tasks=1

Other local map tasks=1

Total time spent by all maps in occupied slots (ms)=0

Total time spent by all reduces in occupied slots (ms)=0

TOTAL_LAUNCHED_UBERTASKS=1

NUM_UBER_SUBMAPS=1

Total time spent by all map tasks (ms)=1825

Total vcore-milliseconds taken by all map tasks=0

Total megabyte-milliseconds taken by all map tasks=0

Map-Reduce Framework

Map input records=1

Map output records=1

Input split bytes=87

Spilled Records=0

Failed Shuffles=0

Merged Map outputs=0

GC time elapsed (ms)=44

CPU time spent (ms)=950

Physical memory (bytes) snapshot=160641024

Virtual memory (bytes) snapshot=3020165120

Total committed heap usage (bytes)=24555520

File Input Format Counters

Bytes Read=0

File Output Format Counters

Bytes Written=21

20/02/07 10:44:36 INFO mapreduce.ImportJobBase: Transferred 177.2646 KB in 21.4714 seconds (8.2558 KB/sec)

20/02/07 10:44:36 INFO mapreduce.ImportJobBase: Retrieved 1 records.


The import finishes and the result is as expected.
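This single-mapper log has no BoundingValsQuery line, which fits the point above: there is nothing to split, so no MIN/MAX query is needed. As far as I can tell from the Sqoop 1.4.x source, a one-mapper job with no boundary query gets a single split whose lower and upper clauses are both `1=1`. In other words, $CONDITIONS becomes an always-true condition. The Python sketch below illustrates that assumption. The function names `task_query` and `single_mapper_queries` are hypothetical.

```python
# Hypothetical sketch: filling in $CONDITIONS when only one map task runs.
# Assumes (reading of the Sqoop 1.4.x source) one split with bounds "1=1" / "1=1".
from typing import List

SUBSTITUTE_TOKEN = "$CONDITIONS"


def task_query(free_form_query: str, lower: str, upper: str) -> str:
    """Build the SQL one map task would run for a split with the given bounds."""
    conditions = f"( {lower} ) AND ( {upper} )"
    return free_form_query.replace(SUBSTITUTE_TOKEN, conditions)


def single_mapper_queries(free_form_query: str) -> List[str]:
    # No --split-by column and no MIN/MAX query: one split that filters nothing.
    return [task_query(free_form_query, "1=1", "1=1")]


if __name__ == "__main__":
    for sql in single_mapper_queries("select * from Person where score>50 and $CONDITIONS"):
        print(sql)
    # prints: select * from Person where score>50 and ( 1=1 ) AND ( 1=1 )
```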

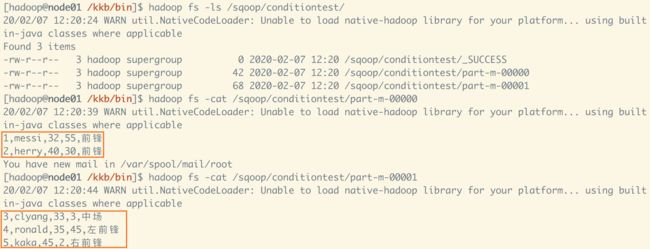

(3) With multiple map tasks, --split-by is needed to partition the data, and $CONDITIONS is replaced with each task's query range.
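Before it can split anything, Sqoop needs the range of the --split-by column. In the log of this test, the BoundingValsQuery line shows how it gets that range. $CONDITIONS is replaced with the always-true `(1 = 1)`, and the whole query is wrapped in a MIN/MAX query. The Python sketch below builds a query of the same shape. The function name `bounding_query` is hypothetical, and the exact whitespace Sqoop emits may differ.

```python
# Hypothetical sketch of the bounding query run before splitting.
# It mirrors the shape of the BoundingValsQuery log line; spacing may differ from Sqoop's.

SUBSTITUTE_TOKEN = "$CONDITIONS"


def bounding_query(free_form_query: str, split_by: str) -> str:
    """Wrap the user's query so the database returns MIN and MAX of the split column."""
    # (1 = 1) is always true, so the bounds cover every row the query can return.
    inner = free_form_query.replace(SUBSTITUTE_TOKEN, "(1 = 1)")
    return f"SELECT MIN({split_by}), MAX({split_by}) FROM ({inner} ) AS t1"


if __name__ == "__main__":
    print(bounding_query("select * from Person where score>1 and $CONDITIONS", "id"))
    # prints: SELECT MIN(id), MAX(id) FROM (select * from Person where score>1 and (1 = 1) ) AS t1
```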


# 2 map tasks specified

[hadoop@node01 /kkb/bin]$ sqoop import --connect jdbc:mysql://node01:3306/sqooptest --username root --password 123456 --target-dir /sqoop/conditiontest --delete-target-dir --query 'select * from Person where score>1 and $CONDITIONS' --m 2 --split-by id

Warning: /kkb/install/sqoop-1.4.6-cdh5.14.2/../hcatalog does not exist! HCatalog jobs will fail.

Please set $HCAT_HOME to the root of your HCatalog installation.

Warning: /kkb/install/sqoop-1.4.6-cdh5.14.2/../accumulo does not exist! Accumulo imports will fail.

Please set $ACCUMULO_HOME to the root of your Accumulo installation.

Warning: /kkb/install/sqoop-1.4.6-cdh5.14.2/../zookeeper does not exist! Accumulo imports will fail.

Please set $ZOOKEEPER_HOME to the root of your Zookeeper installation.

20/02/07 12:19:45 INFO sqoop.Sqoop: Running Sqoop version: 1.4.6-cdh5.14.2

20/02/07 12:19:45 WARN tool.BaseSqoopTool: Setting your password on the command-line is insecure. Consider using -P instead.

20/02/07 12:19:45 INFO manager.MySQLManager: Preparing to use a MySQL streaming resultset.

20/02/07 12:19:45 INFO tool.CodeGenTool: Beginning code generation

Fri Feb 07 12:19:45 CST 2020 WARN: Establishing SSL connection without server's identity verification is not recommended. According to MySQL 5.5.45+, 5.6.26+ and 5.7.6+ requirements SSL connection must be established by default if explicit option isn't set. For compliance with existing applications not using SSL the verifyServerCertificate property is set to 'false'. You need either to explicitly disable SSL by setting useSSL=false, or set useSSL=true and provide truststore for server certificate verification.

20/02/07 12:19:46 INFO manager.SqlManager: Executing SQL statement: select * from Person where score>1 and (1 = 0)

20/02/07 12:19:46 INFO manager.SqlManager: Executing SQL statement: select * from Person where score>1 and (1 = 0)

20/02/07 12:19:46 INFO manager.SqlManager: Executing SQL statement: select * from Person where score>1 and (1 = 0)

20/02/07 12:19:46 INFO orm.CompilationManager: HADOOP_MAPRED_HOME is /kkb/install/hadoop-2.6.0-cdh5.14.2

Note: /tmp/sqoop-hadoop/compile/c4d8789310abaa32f4e44e03103924e6/QueryResult.java uses or overrides a deprecated API.

Note: Recompile with -Xlint:deprecation for details.

20/02/07 12:19:49 INFO orm.CompilationManager: Writing jar file: /tmp/sqoop-hadoop/compile/c4d8789310abaa32f4e44e03103924e6/QueryResult.jar

SLF4J: Class path contains multiple SLF4J bindings.

SLF4J: Found binding in [jar:file:/kkb/install/hadoop-2.6.0-cdh5.14.2/share/hadoop/common/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: Found binding in [jar:file:/kkb/install/hbase-1.2.0-cdh5.14.2/lib/slf4j-log4j12-1.7.5.jar!/org/slf4j/impl/StaticLoggerBinder.class]

SLF4J: See http://www.slf4j.org/codes.html#multiple_bindings for an explanation.

SLF4J: Actual binding is of type [org.slf4j.impl.Log4jLoggerFactory]

20/02/07 12:19:49 WARN util.NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

20/02/07 12:19:50 INFO tool.ImportTool: Destination directory /sqoop/conditiontest deleted.

20/02/07 12:19:50 INFO mapreduce.ImportJobBase: Beginning query import.

20/02/07 12:19:50 INFO Configuration.deprecation: mapred.jar is deprecated. Instead, use mapreduce.job.jar

20/02/07 12:19:50 INFO Configuration.deprecation: mapred.map.tasks is deprecated. Instead, use mapreduce.job.maps

20/02/07 12:19:50 INFO client.RMProxy: Connecting to ResourceManager at node01/192.168.200.100:8032

Fri Feb 07 12:19:55 CST 2020 WARN: Establishing SSL connection without server's identity verification is not recommended. According to MySQL 5.5.45+, 5.6.26+ and 5.7.6+ requirements SSL connection must be established by default if explicit option isn't set. For compliance with existing applications not using SSL the verifyServerCertificate property is set to 'false'. You need either to explicitly disable SSL by setting useSSL=false, or set useSSL=true and provide truststore for server certificate verification.

20/02/07 12:19:55 INFO db.DBInputFormat: Using read commited transaction isolation

# query the id range: minimum and maximum id

20/02/07 12:19:55 INFO db.DataDrivenDBInputFormat: BoundingValsQuery: SELECT MIN(id), MAX(id) FROM (select * from Person where score>1 and (1 = 1) ) AS t1

# id ranges from 1 to 5, split into 2 parts

20/02/07 12:19:55 INFO db.IntegerSplitter: Split size: 2; Num splits: 2 from: 1 to: 5

20/02/07 12:19:55 INFO mapreduce.JobSubmitter: number of splits:2

20/02/07 12:19:55 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1581042804134_0002

20/02/07 12:19:56 INFO impl.YarnClientImpl: Submitted application application_1581042804134_0002

20/02/07 12:19:56 INFO mapreduce.Job: The url to track the job: http://node01:8088/proxy/application_1581042804134_0002/

20/02/07 12:19:56 INFO mapreduce.Job: Running job: job_1581042804134_0002

20/02/07 12:20:04 INFO mapreduce.Job: Job job_1581042804134_0002 running in uber mode : true

20/02/07 12:20:04 INFO mapreduce.Job: map 0% reduce 0%

20/02/07 12:20:06 INFO mapreduce.Job: map 50% reduce 0%

20/02/07 12:20:07 INFO mapreduce.Job: map 100% reduce 0%

20/02/07 12:20:08 INFO mapreduce.Job: Job job_1581042804134_0002 completed successfully

20/02/07 12:20:09 INFO mapreduce.Job: Counters: 32

File System Counters

FILE: Number of bytes read=0

FILE: Number of bytes written=0

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

HDFS: Number of bytes read=315

HDFS: Number of bytes written=370770

HDFS: Number of read operations=260

HDFS: Number of large read operations=0

HDFS: Number of write operations=14

Job Counters

Launched map tasks=2

Other local map tasks=2

Total time spent by all maps in occupied slots (ms)=0

Total time spent by all reduces in occupied slots (ms)=0

TOTAL_LAUNCHED_UBERTASKS=2

NUM_UBER_SUBMAPS=2

Total time spent by all map tasks (ms)=2861

Total vcore-milliseconds taken by all map tasks=0

Total megabyte-milliseconds taken by all map tasks=0

Map-Reduce Framework

Map input records=5

Map output records=5

Input split bytes=189

Spilled Records=0

Failed Shuffles=0

Merged Map outputs=0

GC time elapsed (ms)=69

CPU time spent (ms)=1210

Physical memory (bytes) snapshot=327987200

Virtual memory (bytes) snapshot=6043004928

Total committed heap usage (bytes)=47128576

File Input Format Counters

Bytes Read=0

File Output Format Counters

Bytes Written=110

20/02/07 12:20:09 INFO mapreduce.ImportJobBase: Transferred 362.0801 KB in 18.2879 seconds (19.7989 KB/sec)

20/02/07 12:20:09 INFO mapreduce.ImportJobBase: Retrieved 5 records.
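The IntegerSplitter line in the log (Split size: 2; Num splits: 2 from: 1 to: 5) can be reproduced by hand. (5 - 1) / 2 = 2, so the split points are 1, 3 and 5. The Python sketch below follows the integer-splitting approach as I understand it from Sqoop 1.4.x's IntegerSplitter: any remainder is spread over the first intervals, and every interval is half-open except the last, which includes the maximum. It is a simplified illustration, not Sqoop's code. The names `split_points`, `split_conditions` and `task_queries` are hypothetical.

```python
# Hypothetical sketch of integer range splitting and per-task $CONDITIONS substitution.
from typing import List, Tuple

SUBSTITUTE_TOKEN = "$CONDITIONS"


def split_points(num_splits: int, min_val: int, max_val: int) -> List[int]:
    """Divide [min_val, max_val] into num_splits roughly equal integer intervals."""
    split_size, remainder = divmod(max_val - min_val, num_splits)
    points = []
    cur = min_val
    for i in range(num_splits + 1):
        points.append(cur)
        if cur >= max_val:
            break
        # Spread the remainder over the first intervals, one extra value each.
        cur += split_size + (1 if i < remainder else 0)
    if len(points) == 1:
        # min == max: still produce one (degenerate) interval.
        points.append(max_val)
    return points


def split_conditions(column: str, points: List[int]) -> List[Tuple[str, str]]:
    """Turn split points into (lower, upper) clauses; only the last interval is closed."""
    bounds = []
    for i in range(1, len(points)):
        start, end = points[i - 1], points[i]
        upper = f"{column} <= {end}" if i == len(points) - 1 else f"{column} < {end}"
        bounds.append((f"{column} >= {start}", upper))
    return bounds


def task_queries(free_form_query: str, column: str, num_mappers: int,
                 min_val: int, max_val: int) -> List[str]:
    """One SQL statement per map task, each with $CONDITIONS replaced by its range."""
    points = split_points(num_mappers, min_val, max_val)
    return [
        free_form_query.replace(SUBSTITUTE_TOKEN, f"( {lo} ) AND ( {hi} )")
        for lo, hi in split_conditions(column, points)
    ]


if __name__ == "__main__":
    # Values from the log above: --split-by id, -m 2, MIN(id)=1, MAX(id)=5.
    for sql in task_queries("select * from Person where score>1 and $CONDITIONS",
                            "id", 2, 1, 5):
        print(sql)
    # prints:
    # select * from Person where score>1 and ( id >= 1 ) AND ( id < 3 )
    # select * from Person where score>1 and ( id >= 3 ) AND ( id <= 5 )
```

For integer ids, `id < 3` covers ids 1 and 2. The two tasks therefore read 1 <= id <= 2 and 3 <= id <= 5, and no row is read twice.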


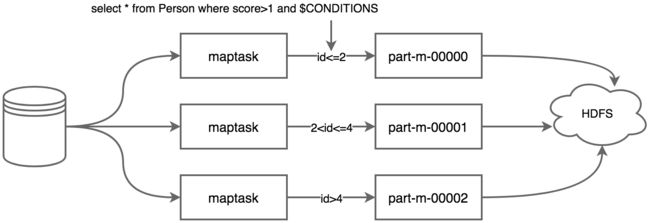

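Inspecting the imported data shows the result of the split. The first $CONDITIONS was effectively replaced with 1 <= id <= 2, and the second with 2 < id <= 5. In other words, the id range 1-5 returned by the bounding query was cut into two parts, one per map task.

Understanding the mechanism

When Sqoop runs a --query import with several map tasks in parallel, each map task imports only part of the data. The source data has to be partitioned with '--split-by <column>', and different parts are handed to different map tasks. Every map task runs its own copy of the SQL, which is why $CONDITIONS must appear in the WHERE clause.

$CONDITIONS is not a Linux system variable. It is a placeholder token that Sqoop itself replaces with a different condition for each task, based on the split boundaries it computes. It only has to be protected from the shell, which is why the queries above are wrapped in single quotes. Inside double quotes it must be written as \$CONDITIONS.

For example, with --split-by id, Sqoop works in three steps:

- It queries the minimum and maximum id to find the overall range.
- It divides that range according to the number of map tasks.
- Each map task imports the rows in one id sub-range, as shown in the schematic diagram.

The same token is also used outside the map tasks, and this explains test (1). During code generation, Sqoop replaces $CONDITIONS with (1 = 0) to read the column types without fetching any rows. In the bounding query it uses (1 = 1), so that MIN/MAX covers every row. Code generation runs before any map task is launched, so the query must contain $CONDITIONS even with a single map task.

The above is my understanding of $CONDITIONS, based on the blog post referenced below. I will refine and extend it later.

Reference blog posts:

(1) https://www.cnblogs.com/kouryoushine/p/7814312.html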