awk基础知识和案例

文章目录

- awk

-

- 1 awk用法入门

-

- 1.1 BEGIN和END语句块

- 1.2 awk语法

-

- 1.2.1 常用命令选项

- 1.2.2 awk变量

-

- 内置变量

- 自定义变量

- 1.3 printf命令

-

- 1.3.1 格式

- 1.3.2 演示

- 1.4 操作符

- 2 awk高阶用法

-

- 2.1 awk控制语句(if-else判断)

- 2.2 awk控制语句(while循环)

- 2.3 awk控制语句(do-while循环)

- 2.4 awk控制语句(for循环)

- 2.5 awk数组

-

- 关联数组:array[index-expression]

- 演示

- 数值/字符串处理

- 2.6 awk自定义函数

- 3.awk的经典实战案例

-

- (1) 插入几个新字段

- (2) 格式化空白

- (3) 筛选IPV4地址

- (4) 读取.ini配置文件中的某段

- (5) 根据某字段去重

- (6) 次数统计

- (7) 统计TCP连接状态数量

- (8) 统计日志中各IP访问非200状态码的次数

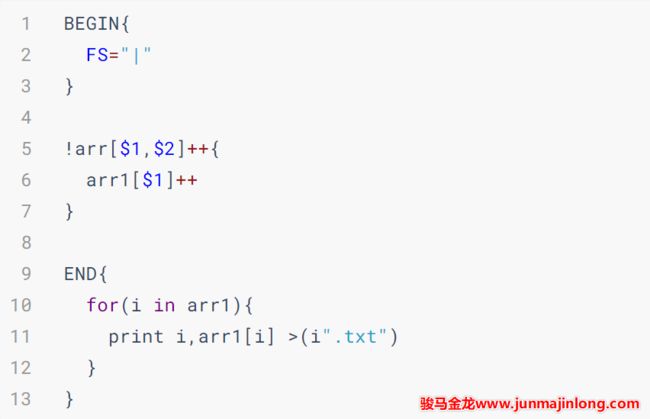

- (9) 统计独立IP

- (10) 处理字段缺失的数据

- (11) 处理字段中包含了字段分隔符的数据

- (12) 取字段中指定字符数量

- (13) 行列转换

- (14) 行列转换2

- (15) 筛选给定时间范围内的日志

-

- 方法一

- 方法二

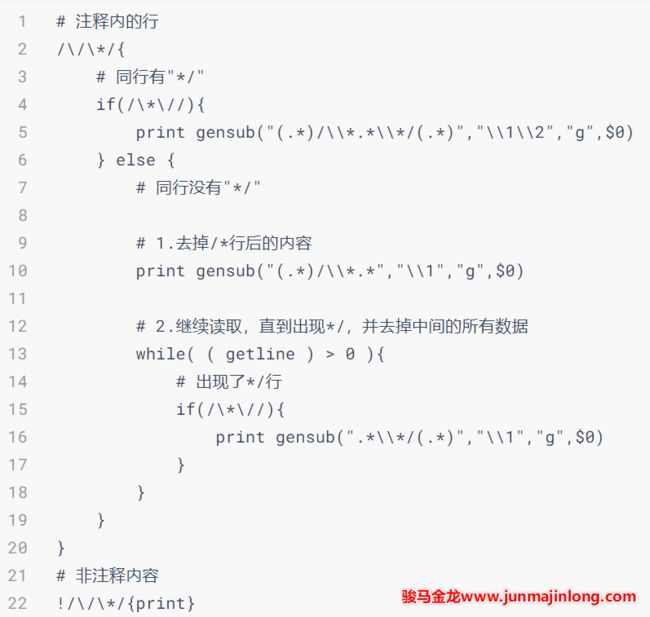

- (16) 去掉`/**/`中间的注释

- (17) 前后段落关系判断

awk

1 awk用法入门

awk 'awk_proge' file

awk_proge : awk执行的代码或代码文件

file : 内容文件

基本用法

# 输出a.txt中的每一行

awk '{print $0}' a.txt

# 多个代码块,代码块中多个语句

# 输出每行之后还输出两行:hello行和world行

awk '{print $0}{print "hello";print "world"}' a.txt

1.1 BEGIN和END语句块

[root@localhost work]# awk 'BEGIN{print "我在前面"}{print $0}' a.text

我在前面

ID name gender age email phone

1 Bob male 28 [email protected] 18023394012

2 Alice female 24 [email protected] 18084925203

3 Tony male 21 [email protected] 17048792503

4 Kevin male 21 [email protected] 17023929033

5 Alex male 18 [email protected] 18185904230

6 Andy female 22 [email protected] 18923902352

7 Jerry female 25 [email protected] 18785234906

8 Peter male 20 [email protected] 17729348758

9 Steven female 23 [email protected] 15947893212

10 Bruce female 27 [email protected] 13942943905

[root@localhost work]# awk 'END{print "我在后面"}{print $0}' a.text

ID name gender age email phone

1 Bob male 28 [email protected] 18023394012

2 Alice female 24 [email protected] 18084925203

3 Tony male 21 [email protected] 17048792503

4 Kevin male 21 [email protected] 17023929033

5 Alex male 18 [email protected] 18185904230

6 Andy female 22 [email protected] 18923902352

7 Jerry female 25 [email protected] 18785234906

8 Peter male 20 [email protected] 17729348758

9 Steven female 23 [email protected] 15947893212

10 Bruce female 27 [email protected] 13942943905

我在后面

BEGIN代码块

- 在读取文件之前执行,且执行一次

- 在 BEGIN 代码块中,无法使用 $0 或其它一些特殊变量

END代码块

- 在读取文件完成之后执行,且执行一次

- 有 END 代码块,必有要读取的数据 (可以是标准输入)

- END 代码块中可以使用 $0 等一些特殊变量,只不过这些特殊变量保存的是最后一轮 awk 循环的数据

1.2 awk语法

1.2.1 常用命令选项

- -F fs : fs指定输入分隔符 , fs可以是字符串或正则表达式 , 如-F :

- -v var=value : 赋值一个用户定义变量,将外部变量传递给awk

- -f scripfile : 从脚本文件中读取awk命令

1.2.2 awk变量

变量:内置和自定义变量,每个变量前加 -v 命令选项

内置变量

(1) 格式

- FS : 输入字段分隔符 , 默认为空白字符

- OFS : 输出字段分隔符 , 默认为空白字符

- RS : 输入记录分隔符 , 指定输入时的换行符,原换行符仍有效

- ORS : 输出记录分隔符 , 输出时用指定符号代替换行符

- NF : 字段数量,共有多少字段, N F 引用最后一列, NF引用最后一列, NF引用最后一列,(NF-1)引用倒数第2列

- NR : 行号,后可跟多个文件,第二个文件行号继续从第一个文件最后行号开始

- FNR :各文件分别计数, 行号,后跟一个文件和NR一样,跟多个文件,第二个文件行号从1开始

- **FILENAME **:当前文件名

- ARGC :命令行参数的个数

- ARGV :数组,保存的是命令行所给定的各参数,查看参数

(2)演示

[root@localhost work_awk]# cat awkdemo

hello:world

linux:redhat:lalala:hahaha

along:love:youou

[root@localhost work_awk]# awk -v FS=':' '{print $1,$2}' awkdemo

hello world

linux redhat

along love

[root@localhost work_awk]# awk -v FS=':' -v OFS='-+-' '{print $1,$2}' awkdemo

hello-+-world

linux-+-redhat

along-+-love

[root@localhost work_awk]# awk -v RS=':' '{print $1,$2}' awkdemo

hello

world linux

redhat

lalala

hahaha along

love

youou

[root@localhost work_awk]# awk -v ORS='-+-' '{print $1,$2}' awkdemo

hello:world -+-linux:redhat:lalala:hahaha -+-along:love:youou -+-

[root@localhost work_awk]# awk -F: '{print $(NF-1)}' awkdemo

hello

lalala

love

[root@localhost work_awk]# awk -F: '{print $NF}' awkdemo

world

hahaha

youou

[root@localhost work_awk]# awk '{print NR ")" $0}' awkdemo

1)hello:world

2)linux:redhat:lalala:hahaha

3)along:love:youou

[root@localhost work_awk]# awk '{print FNR ") " $0}' awkdemo awkdemo1

1) hello:world

2) linux:redhat:lalala:hahaha

3) along:love:youou

1) ni:hello

2) study:school

[root@localhost work_awk]# awk '{print "file_name=" FILENAME " text=" $0}' awkdemo1

file_name=awkdemo1 text=ni:hello

file_name=awkdemo1 text=study:school

[root@localhost work_awk]# awk '{print ARGC " text=" $0}' awkdemo1

2 text=ni:hello

2 text=study:school

自定义变量

自定义变量( 区分字符大小写)

(1)-v var=value

① 先定义变量,后执行动作print

[root@along ~]# awk -v name="along" -F: '{print name":"$0}' awkdemo

along:hello:world

along:linux:redhat:lalala:hahaha

along:along:love:you

② 在执行动作print后定义变量

[root@along ~]# awk -F: '{print name":"$0;name="along"}' awkdemo

:hello:world

along:linux:redhat:lalala:hahaha

along:along:love:you

(2)在program 中直接定义

可以把执行的动作放在脚本中,直接调用脚本 -f

[root@along ~]# cat awk.txt

{name="along";print name,$1}

[root@along ~]# awk -F: -f awk.txt awkdemo

along hello

along linux

along along

1.3 printf命令

比print更强大

1.3.1 格式

printf` `"FORMAT"``, item1,item2, ...

- 必须指定FORMAT

- 不会自动换行,需要显式给出换行控制符,\n

- FORMAT 中需要分别为后面每个item 指定格式符

(2)格式符:与item 一一对应

-

%c: 显示字符的ASCII码

-

%d, %i: 显示十进制整数

-

%e, %E: 显示科学计数法数值

-

%f :显示为浮点数,小数 %5.1f,带整数、小数点、整数共5位,小数1位,不够用空格补上

-

%g, %G :以科学计数法或浮点形式显示数值

-

%s :显示字符串;例:%5s最少5个字符,不够用空格补上,超过5个还继续显示

-

%u :无符号整数

-

%%: 显示% 自身

(3)修饰符:放在%c[/d/e/f…]之间

- #[.#]:第一个数字控制显示的宽度;第二个# 表示小数点后精度,%5.1f

- -:左对齐(默认右对齐) %-15s

- +:显示数值的正负符号 %+d

1.3.2 演示

[root@along ~]# awk -F: '{print $1,$3}' /etc/passwd

root 0

bin 1

---第一列显示小于20的字符串;第2列显示整数并换行

[root@along ~]# awk -F: '{printf "%20s---%u\n",$1,$3}' /etc/passwd

root---0

bin---1

---使用-进行左对齐;第2列显示浮点数

[root@along ~]# awk -F: '{printf "%-20s---%-10.3f\n",$1,$3}' /etc/passwd

root ---0.000

bin ---1.000

---使用printf做表格

[root@along ~]# awk -F: 'BEGIN{printf "username userid\n-----------------------------\n"}{printf "%-20s|%-10.3f|%-10s\n",$1,$3}' /etc/passwd

username userid

-----------------------------

root |0.000

bin |1.000

1.4 操作符

-

算术操作符:

- x+y, x-y, x*y, x/y, x^y, x%y

- -x: 转换为负数

- +x: 转换为数值

-

字符串操作符:没有符号的操作符,字符串连接

-

赋值操作符:

- =, +=, -=, *=, /=, %=, ^=

- ++a, --a

-

比较操作符:

- ==, !=, >, >=, <, <=

-

模式匹配符:~ :左边是否和右边匹配包含 !~ :是否不匹配

-

逻辑操作符:与&& ,或|| ,非!

-

函数调用: function_name(argu1, argu2, …)

-

条件表达式(三目表达式):

selector ? if-true-expression : if-false-expression

- 注释:先判断selector,如果符合执行 ? 后的操作;否则执行 : 后的操作

(1)模式匹配符

---查询以/dev开头的磁盘信息

[root@localhost work]# df -h | awk '$0 ~ /^\/dev/'

/dev/mapper/rhel-root 10G 7.8G 2.3G 78% /

/dev/mapper/rhel-home 3.0G 68M 3.0G 3% /home

/dev/nvme0n1p1 3.0G 272M 2.8G 9% /boot

[root@localhost work]# df -h | awk '/^\/dev/{print $0}'

/dev/mapper/rhel-root 10G 7.8G 2.3G 78% /

/dev/mapper/rhel-home 3.0G 68M 3.0G 3% /home

/dev/nvme0n1p1 3.0G 272M 2.8G 9% /boot

---只显示磁盘使用状况和磁盘名

[root@localhost work]# df -h | awk '/^\/dev/{print $0}'

/dev/mapper/rhel-root 10G 7.8G 2.3G 78% /

/dev/mapper/rhel-home 3.0G 68M 3.0G 3% /home

/dev/nvme0n1p1 3.0G 272M 2.8G 9% /boot

---查找磁盘大于40%的

[root@localhost work]# df -h | awk '/^\/dev/{print $(NF-1)"---"$1}' | awk -F% '$1 > 40'

78%---/dev/mapper/rhel-root

(2)逻辑操作符

[root@localhost work]# awk -F: '$3>=0 && $3<=1000 {print $1,$3}' /etc/passwd

root 0

bin 1

...

[root@localhost work]# awk -F: '$3==0 || $3>=1000 {print $1}' /etc/passwd

root

nobody

lin

[root@localhost work]# awk -F: '!($3==0) {print $1}' /etc/passwd

bin

daemon

adm

...

[root@localhost work]# awk -F: '!($0 ~ /bash$/) {print $1,$3}' /etc/passwd

bin 1

daemon 2

adm 3

...

(3)条件表达式(三目表达式)

[root@localhost work]# awk -F: '{$3 >= 1000?usertype="common user":usertype="sysadmin user";print usertype,$1,$3}' /etc/passwd

sysadmin user root 0

sysadmin user bin 1

sysadmin user daemon 2

2 awk高阶用法

2.1 awk控制语句(if-else判断)

(1) 语法

if(condition){statement;…}[else statement] 单/双分支

if(condition1){statement1}else if(condition2){statement2}else{statement3} 多分支

(2) 演示

[root@localhost work]# awk -F: '{if($3>10 && $3<30)print $1,$3}' /etc/passwd

operator 11

games 12

ftp 14

rpcuser 29

mysql 27

2.2 awk控制语句(while循环)

(1) 语法

while (condition){statement;…}

(2) 演示

[root@localhost work_awk]# cat awkdemo1

ni:hello

study:school

[root@localhost work_awk]# awk -F: '{i=1;while(i<=NF){print $i,length($i);i++}}' awkdemo1

ni 2

hello 5

study 5

school 6

2.3 awk控制语句(do-while循环)

(1) 语法

do{statement;…}while(condition)

(2) 演示

[root@localhost work_awk]# awk 'BEGIN{sum=0;i=1;do{sum+=i;i++}while(i<=100);print sum}'

5050

2.4 awk控制语句(for循环)

(1) 语法

for(expr1;expr2;expr3) {statement;…}

特殊用法:遍历数组中的元素

for(var in array){statement;...}

(2) 演示

[root@localhost work_awk]# awk -F: '{for(i=1;i<=NF;i++){print $i,length($i)}}' awkdemo1

ni 2

hello 5

study 5

school 6

2.5 awk数组

关联数组:array[index-expression]

(1)可使用任意字符串;字符串要使用双引号括起来

(2)如果某数组元素事先不存在,在引用时,awk 会自动创建此元素,并将其值初始化为“空串”

(3)若要判断数组中是否存在某元素,要使用“index in array”格式进行遍历

(4)若要遍历数组中的每个元素,要使用for 循环**:for(var in array)** {for-body}

演示

[root@along ~]# cat awkdemo2

aaa

bbbb

aaa

123

123

123

---去除重复的行

[root@along ~]# awk '!arr[$0]++' awkdemo2

aaa

bbbb

123

---打印文件内容,和该行重复第几次出现 ?

[root@along ~]# awk '{!arr[$0]++;print $0,arr[$0]}' awkdemo2

aaa 1

bbbb 1

aaa 2

123 1

123 2

123 3

数值/字符串处理

(1)数值处理

- rand():返回0和1之间一个随机数,需有个种子 srand(),没有种子,一直输出0.237788

[root@along ~]# awk 'BEGIN{print rand()}'

0.237788

[root@along ~]# awk 'BEGIN{srand(); print rand()}'

0.51692

[root@along ~]# awk 'BEGIN{srand(); print rand()}'

0.189917

---取0-50随机数

[root@along ~]# awk 'BEGIN{srand(); print int(rand()*100%50)+1}'

12

[root@along ~]# awk 'BEGIN{srand(); print int(rand()*100%50)+1}'

24

2)字符串处理:

- length([s]) :返回指定字符串的长度

- sub(r,s,[t]) :对t 字符串进行搜索r 表示的模式匹配的内容,并将第一个匹配的内容替换为s

- gsub(r,s,[t]) :对t 字符串进行搜索r 表示的模式匹配的内容,并全部替换为s 所表示的内容

- split(s,array,[r]) :以r 为分隔符,切割字符串s ,并将切割后的结果保存至array 所表示的数组中,第一个索引值为1, 第二个索引值为2,…

演示:

[root@along ~]# echo "2008:08:08 08:08:08" | awk 'sub(/:/,"-",$1)'

2008-08:08 08:08:08

[root@along ~]# echo "2008:08:08 08:08:08" | awk 'gsub(/:/,"-",$0)'

2008-08-08 08-08-08

[root@along ~]# echo "2008:08:08 08:08:08" | awk '{split($0,i,":")}END{for(n in i){print n,i[n]}}'

4 08

5 08

1 2008

2 08

3 08 08

2.6 awk自定义函数

(1)格式:和bash区别:定义函数()中需加参数,return返回值不是$?,是相当于echo输出

function name ( parameter, parameter, ... ) {

statements

return expression

}

(2)演示

[root@along ~]# cat fun.awk

function max(v1,v2) {

v1>v2?var=v1:var=v2

return var

}

BEGIN{a=3;b=2;print max(a,b)}

[root@along ~]# awk -f fun.awk

3

3.awk的经典实战案例

(1) 插入几个新字段

在"a b c d"的b后面插入3个字段e f g。

[root@localhost ~]# echo "a b c d" | awk '{$2=$2" e f g";print}'

a b e f g c d

(2) 格式化空白

[root@localhost work_awk]# awk '{$1;print}' 1.text

aaaa bbb ccc

bbb aaa ccc

ddd fff eee gg hh ii jj

[root@localhost work_awk]#

[root@localhost work_awk]#

[root@localhost work_awk]# awk '{$1=$1;print}' 1.text //用于去掉行尾的空格或者重新格式化输出。

aaaa bbb ccc

bbb aaa ccc

ddd fff eee gg hh ii jj

[root@localhost work_awk]# awk 'BEGIN{OFS="\t"}{$1=$1;print}' 1.text

aaaa bbb ccc

bbb aaa ccc

ddd fff eee gg hh ii jj

(3) 筛选IPV4地址

-

方法一

[root@localhost work_awk]# ifconfig | awk '/inet/ && !($2 ~ /^127/){print $2}' 192.168.182.136 fe80::20c:29ff:fe21:99f1 ::1 192.168.122.1 -

方法二

[root@localhost work_awk]# ifconfig | awk 'BEGIN{RS=""}!/lo/{print $6}' 192.168.182.136 192.168.122.1 -

方法三

[root@localhost work_awk]# ifconfig | awk 'BEGIN{RS="";FS="\n"}!/lo/{$0=$2;FS=" ";$0=$0;print $2}' 192.168.182.136

(4) 读取.ini配置文件中的某段

[base]

name=os_repo

baseurl=https://xxx/centos/$releasever/os/$basearch

gpgcheck=0

enable=1

[mysql]

name=mysql_repo

baseurl=https://xxx/mysql-repo/yum/mysql-5.7-community/el/$releasever/$basearch

gpgcheck=0

enable=1

[epel]

name=epel_repo

baseurl=https://xxx/epel/$releasever/$basearch

gpgcheck=0

enable=1

[percona]

name=percona_repo

baseurl = https://xxx/percona/release/$releasever/RPMS/$basearch

enabled = 1

gpgcheck = 0

[root@localhost work_awk]# awk 'BEGIN{RS=""}/\[mysql\]/{print;while((getline)>0){if(/\[.*\]/){exit}print}}' a.text

[mysql]

name=mysql_repo

baseurl=https://xxx/mysql-repo/yum/mysql-5.7-community/el/$releasever/$basearch

gpgcheck=0

enable=1

(5) 根据某字段去重

去掉uid=xxx重复的行。

2019-01-13_12:00_index?uid=123

2019-01-13_13:00_index?uid=123

2019-01-13_14:00_index?uid=333

2019-01-13_15:00_index?uid=9710

2019-01-14_12:00_index?uid=123

2019-01-14_13:00_index?uid=123

2019-01-15_14:00_index?uid=333

2019-01-16_15:00_index?uid=9710

首先利用uid去重,我们需要利用?进行划分,然后将uid=xxx保存在数组中,这是判断重复的依据

然后统计uid出现次数,第一次出现统计,第二次不统计

awk -F"?" '!arr[$2]++{print}' a.txt

awk -F"?" '{arr[$2]=arr[$2]+1;if(arr[$2]==1){print}}'

awk -F"?" '{arr[$2]++;if(arr[$2]==1){print}}'

结果:

2019-01-13_12:00_index?uid=123

2019-01-13_14:00_index?uid=333

2019-01-13_15:00_index?uid=9710

(6) 次数统计

portmapper

portmapper

portmapper

portmapper

portmapper

portmapper

status

status

mountd

mountd

mountd

mountd

mountd

mountd

nfs

nfs

nfs_acl

nfs

nfs

nfs_acl

nlockmgr

nlockmgr

nlockmgr

nlockmgr

nlockmgr

awk '{arr[$0]++}END{OFS="\t";for(idx in arr){printf arr[idx],idx}}' a.txt

awk '{arr[$0]++}END{for(i in arr){print arr[i], i}}' index.txt

(7) 统计TCP连接状态数量

$ netstat -tnap

Proto Recv-Q Send-Q Local Address Foreign Address State PID/Program name

tcp 0 0 0.0.0.0:22 0.0.0.0:* LISTEN 1139/sshd

tcp 0 0 127.0.0.1:25 0.0.0.0:* LISTEN 2285/master

tcp 0 96 192.168.2.17:22 192.168.2.1:2468 ESTABLISHED 87463/sshd: root@pt

tcp 0 0 192.168.2017:22 192.168.201:5821 ESTABLISHED 89359/sshd: root@no

tcp6 0 0 :::3306 :::* LISTEN 2289/mysqld

tcp6 0 0 :::22 :::* LISTEN 1139/sshd

tcp6 0 0 ::1:25 :::* LISTEN 2285/master

统计得到的结果:

5: LISTEN

2: ESTABLISHED

netstat -antp | awk '{arr[$6]++}END{for (i in arr){print arr[i], i}}'

netstat -antp | grep 'tcp' | awk '{print $6}' | sort | uniq -c

一行式:

netstat -tna | awk '/^tcp/{arr[$6]++}END{for(state in arr){print arr[state] ": " state}}'

netstat -tna | /usr/bin/grep 'tcp' | awk '{print $6}' | sort | uniq -c

(8) 统计日志中各IP访问非200状态码的次数

日志示例数据:

111.202.100.141 - - [2019-11-07T03:11:02+08:00] "GET /robots.txt HTTP/1.1" 301 169

统计非200状态码的IP,并取次数最多的前10个IP。

# 法一

awk '$9!=200{arr[$1]++}END{for(i in arr){print arr[i],i}}' access.log | sort -k1nr | head -n 10

# 法二:

awk中排序函数sort asort

设置排序顺序PROCINFO

PROCINFO["sorted_in"]=@val_num_desc

awk '

$9!=200{arr[$1]++}

END{

PROCINFO["sorted_in"]="@val_num_desc";

for(i in arr){

#设置计数器

if(cnt++==10){exit}

print arr[i],i

}

}' access.log

(9) 统计独立IP

? url 访问IP 访问时间 访问人

a.com.cn|202.109.134.23|2015-11-20 20:34:43|guest

b.com.cn|202.109.134.23|2015-11-20 20:34:48|guest

c.com.cn|202.109.134.24|2015-11-20 20:34:48|guest

a.com.cn|202.109.134.23|2015-11-20 20:34:43|guest

a.com.cn|202.109.134.24|2015-11-20 20:34:43|guest

b.com.cn|202.109.134.25|2015-11-20 20:34:48|guest

需求:统计每个URL的独立访问IP有多少个(去重),并且要为每个URL保存一个对应的文件,得到的结果类似:

a.com.cn 2

b.com.cn 2

c.com.cn 1

并且有三个对应的文件:

a.com.cn.txt

b.com.cn.txt

c.com.cn.txt

代码:

(10) 处理字段缺失的数据

ID name gender age email phone

1 Bob male 28 [email protected] 18023394012

2 Alice female 24 [email protected] 18084925203

3 Tony male 21 17048792503

4 Kevin male 21 [email protected] 17023929033

5 Alex male 18 [email protected] 18185904230

6 Andy female [email protected] 18923902352

7 Jerry female 25 [email protected] 18785234906

8 Peter male 20 [email protected] 17729348758

9 Steven 23 [email protected] 15947893212

10 Bruce female 27 [email protected] 13942943905

当字段缺失时,直接使用FS划分字段来处理会非常棘手。gawk为了解决这种特殊需求,提供了FIELDWIDTHS变量。

FIELDWIDTH可以按照字符数量划分字段。

将空白部分保留下来

awk '{print $0}' FIELDWIDTHS="2 2:6 2:6 2:3 2:13 2:11" a.txt

FIELDWIDTH第一个字段是字符宽度ID为2,指定2个字符宽度

第两个字段最大为6,但前面和ID之间还有两个空格,所以可以指定宽度为8,也可以跳过两个字符2:6

awk '{print $0}' FIELDWIDTHS="2 2:6 2:6 2:3 2:13 2:11" a.txt

awk 'NR==4{print $5}' FIELDWIDTHS="2 2:6 2:6 2:3 2:13 2:11" a.txt

(11) 处理字段中包含了字段分隔符的数据

下面是CSV文件中的一行,该CSV文件以逗号分隔各个字段。

Robbins,Arnold,"1234 A Pretty Street, NE",MyTown,MyState,12345-6789,USA

需求:取得第三个字段"1234 A Pretty Street, NE"。

当字段中包含了字段分隔符时,直接使用FS划分字段来处理会非常棘手。gawk为了解决这种特殊需求,提供了FPAT变量。

FPAT可以收集正则匹配的结果,并将它们保存在各个字段中。(就像grep匹配成功的部分会加颜色显示,而使用FPAT划分字段,则是将匹配成功的部分保存在字段$1 $2 $3...中)。

echo 'Robbins,Arnold,"1234 A Pretty Street, NE",MyTown,MyState,12345-6789,USA' |\

awk 'BEGIN{FPAT="[^,]+|\".*\""}{print $1,$3}'

(12) 取字段中指定字符数量

16 001agdcdafasd

16 002agdcxxxxxx

23 001adfadfahoh

23 001fsdadggggg

得到:

16 001

16 002

23 001

23 002

awk字符索引从1开始

awk '{print $1,substr($2,1,3)}'

利用FIELDWIDTH分割

awk 'BEGIN{FIELDWIDTHS="2 2:3"}{print $1,$2}' a.txt

(13) 行列转换

name age

alice 21

ryan 30

转换得到:

name alice ryan

第一行数据来源为第一列,而且跨行,我们需要利用数组保存起来

age 21 30

awk '

{

for(i=1;i<=NF;i++){

arr[i]=arr[i]" "$i

}

}

END{

for(i=1;i<=NF;i++){

print arr[i]

}

}

' a.txt

(14) 行列转换2

文件内容:

74683 1001

74683 1002

74683 1011

74684 1000

74684 1001

74684 1002

74685 1001

74685 1011

74686 1000

....

100085 1000

100085 1001

文件就两列,希望处理成

74683 1001 1002 1011

74684 1000 1001 1002

...

就是只要第一列数字相同, 就把他们的第二列放一行上,中间空格分开

{

if($1 in arr){

arr[$1] = arr[$1]" "$2

} else {

arr[$1] = $2

}

}

END{

for(i in arr){

printf "%s %s\n",i,arr[i]

}

}

(15) 筛选给定时间范围内的日志

awk提供了mktime()函数,它可以将时间转换成epoch时间值。

[root@localhost work]# awk 'BEGIN{print mktime("2019 11 10 03 42 40")}'

1573375360

借此,可以取得日志中的时间字符串部分,再将它们的年、月、日、时、分、秒都取出来,然后放入mktime()构建成对应的epoch值。因为epoch值是数值,所以可以比较大小,从而决定时间的大小。

方法一

2019-11-07T03:11:02+08:00的格式

实验数据

access.log文件

111.202.100.141 - - [2019-11-07T03:11:02+08:00] "GET /robots.txt HTTP/1.1" 301 169 "-" "Sogou web spider/4.0(+http://www.sogou.com/docs/help/webmasters.htm#07)" "-"

111.202.100.141 - - [2019-11-07T03:11:02+08:00] "GET /videos/index/ HTTP/1.1" 301 169 "-" "Sogou web spider/4.0(+http://www.sogou.com/docs/help/webmasters.htm#07)" "-"

50.7.235.2 - - [2019-11-07T03:11:32+08:00] "GET /robots.txt HTTP/1.1" 301 169 "-" "Mozilla/5.0 (Windows NT 6.1; rv:60.0) Gecko/20100101 Firefox/60.0" "-"

50.7.235.2 - - [2019-11-07T03:11:33+08:00] "GET / HTTP/1.1" 301 169 "-" "Mozilla/5.0 (Windows NT 6.1; rv:60.0) Gecko/20100101 Firefox/60.0" "-"

54.36.149.32 - - [2019-11-07T03:15:03+08:00] "GET /robots.txt HTTP/1.1" 301 169 "-" "Mozilla/5.0 (compatible; AhrefsBot/6.1; +http://ahrefs.com/robot/)" "-"

59.36.132.240 - - [2019-11-07T03:24:22+08:00] "GET http://junmajinlong.com/mysql/index HTTP/1.1" 301 169 "-" "Go-http-client/1.1" "-"

61.241.50.63 - - [2019-11-07T03:24:22+08:00] "GET http://junmajinlong.com/mysql/index HTTP/1.1" 301 169 "-" "Go-http-client/1.1" "-"

173.249.51.194 - - [2019-11-07T03:27:40+08:00] "GET / HTTP/1.0" 444 0 "-" "masscan/1.0 (https://github.com/robertdavidgraham/masscan)" "-"

220.195.3.152 - - [2019-11-07T03:37:11+08:00] "GET http://www.junmajinlong.com/ HTTP/1.1" 301 169 "-" "curl/7.47.0" "-"

50.7.235.2 - - [2019-11-07T03:41:07+08:00] "GET /robots.txt HTTP/1.1" 301 169 "-" "Mozilla/5.0 (Windows NT 6.1; rv:60.0) Gecko/20100101 Firefox/60.0" "-"

50.7.235.2 - - [2019-11-07T03:41:08+08:00] "GET / HTTP/1.1" 301 169 "-" "Mozilla/5.0 (Windows NT 6.1; rv:60.0) Gecko/20100101 Firefox/60.0" "-"

198.108.67.80 - - [2019-11-07T03:58:00+08:00] "\x16\x03\x01\x00y\x01\x00\x00u\x03\x03|\xD5\x03B#\xE6\xA9c\x85,[M\xAFu$\x11\xD4\xAB\x1D\xAF\xC8\xC2\x92qB\xE8zS:\x82\x00m\x00\x00\x1A\xC0/\xC0+\xC0\x11\xC0\x07\xC0\x13\xC0\x09\xC0\x14\xC0" 400 157 "-" "-" "-"

51.68.124.104 - - [2019-11-07T04:00:04+08:00] "GET / HTTP/1.0" 444 0 "-" "masscan/1.0 (https://github.com/robertdavidgraham/masscan)" "-"

59.36.132.240 - - [2019-11-07T04:03:34+08:00] "GET http://junmajinlong.com/categories/Coding HTTP/1.1" 301 169 "-" "Go-http-client/1.1" "-"

创建awk.text

BEGIN{

which_time = mktime("2019 11 10 03 42 40")

}

{

match($0,"^.*\\[(.*)\\].*",arr)

tmp_time = strptime1(arr[1])

if(tmp_time > which_time){print}

}

function strptime1(str ,arr,Y,M,D,H,m,S){

patsplit(str,arr,"[0-9]{1,4}")

Y=arr[1]

M=arr[2]

D=arr[3]

H=arr[4]

m=arr[5]

S=arr[6]

return mktime(sprintf("%s %s %s %s %s %s",Y,M,D,H,m,S))

}

match函数

用法 : match(字符串,正则表达式,[arr])

在字符串上匹配正则表达式,将匹配上的内容放入数据

patsplit函数

用法 : patsplit(string,array[,fieldpat])

根据字段分隔符fieldsep(支持正则表达式)将字符串string分割成各个字段并存入数组array中

执行

[root@localhost work]# awk -f awk.text access.log

方法二

以10/Nov/2019:23:53:44+08:00的格式

实验数据

access1.log文件

111.202.100.141 - - [07/Nov/2019:03:11:02+08:00] "GET /robots.txt HTTP/1.1" 301 169 "-" "Sogou web spider/4.0(+http://www.sogou.com/docs/help/webmasters.htm#07)" "-"

111.202.100.141 - - [07/Nov/2019:03:11:02+08:00] "GET /videos/index/ HTTP/1.1" 301 169 "-" "Sogou web spider/4.0(+http://www.sogou.com/docs/help/webmasters.htm#07)" "-"

50.7.235.2 - - [07/Nov/2019:03:11:32+08:00] "GET /robots.txt HTTP/1.1" 301 169 "-" "Mozilla/5.0 (Windows NT 6.1; rv:60.0) Gecko/20100101 Firefox/60.0" "-"

50.7.235.2 - - [07/Nov/2019:03:11:33+08:00] "GET / HTTP/1.1" 301 169 "-" "Mozilla/5.0 (Windows NT 6.1; rv:60.0) Gecko/20100101 Firefox/60.0" "-"

54.36.149.32 - - [07/Nov/2019:03:15:03+08:00] "GET /robots.txt HTTP/1.1" 301 169 "-" "Mozilla/5.0 (compatible; AhrefsBot/6.1; +http://ahrefs.com/robot/)" "-"

59.36.132.240 - - [07/Nov/2019:03:24:22+08:00] "GET http://junmajinlong.com/mysql/index HTTP/1.1" 301 169 "-" "Go-http-client/1.1" "-"

创建awk2.text

BEGIN{

which_time = mktime("2019 11 10 03 42 40")

}

{

match($0,"^.*\\[(.*)\\].*",arr)

tmp_time = strptime2(arr[1])

if(tmp_time > which_time){

print

}

}

function strptime2(str,dt_str,arr,Y,M,D,H,m,S) {

dt_str = gensub("[/:+]"," ","g",str)

split(dt_str,arr," ")

Y=arr[3]

M=mon_map(arr[2])

D=arr[1]

H=arr[4]

m=arr[5]

S=arr[6]

return mktime(sprintf("%s %s %s %s %s %s",Y,M,D,H,m,S))

}

function mon_map(str,mons){

mons["Jan"]=1

mons["Feb"]=2

mons["Mar"]=3

mons["Apr"]=4

mons["May"]=5

mons["Jun"]=6

mons["Jul"]=7

mons["Aug"]=8

mons["Sep"]=9

mons["Oct"]=10

mons["Nov"]=11

mons["Dec"]=12

return mons[str]

}

执行

[root@localhost work]# awk -f awk1.text access1.log

(16) 去掉/**/中间的注释

示例数据:

/*AAAAAAAAAA*/

1111

222

/*aaaaaaaaa*/

32323

12341234

12134 /*bbbbbbbbbb*/ 132412

14534122

/*

cccccccccc

*/

xxxxxx /*ddddddddddd

cccccccccc

eeeeeee

*/ yyyyyyyy

5642341

(17) 前后段落关系判断

从如下类型的文件中,找出false段的前一段为i-order的段,同时输出这两段。

2019-09-12 07:16:27 [-][

'data' => [

'http://192.168.100.20:2800/api/payment/i-order',

],

]

2019-09-12 07:16:27 [-][

'data' => [

false,

],

]

2019-09-21 07:16:27 [-][

'data' => [

'http://192.168.100.20:2800/api/payment/i-order',

],

]

2019-09-21 07:16:27 [-][

'data' => [

'http://192.168.100.20:2800/api/payment/i-user',

],

]

2019-09-17 18:34:37 [-][

'data' => [

false,

],

]

BEGIN{

RS="]\n"

ORS=RS

}

{

if(/false/ && prev ~ /i-order/){

print tmp

print

}

tmp=$0

}

“Feb”]=2

mons[“Mar”]=3

mons[“Apr”]=4

mons[“May”]=5

mons[“Jun”]=6

mons[“Jul”]=7

mons[“Aug”]=8

mons[“Sep”]=9

mons[“Oct”]=10

mons[“Nov”]=11

mons[“Dec”]=12

return mons[str]

}

执行

[root@localhost work]# awk -f awk1.text access1.log

### (16) 去掉`/**/`中间的注释

示例数据:

/AAAAAAAAAA/

1111

222

/aaaaaaaaa/

32323

12341234

12134 /bbbbbbbbbb/ 132412

14534122

/*

cccccccccc

*/

xxxxxx /*ddddddddddd

cccccccccc

eeeeeee

*/ yyyyyyyy

5642341

[外链图片转存中...(img-zrG4l3b6-1691411617667)]

### (17) 前后段落关系判断

从如下类型的文件中,找出false段的前一段为i-order的段,同时输出这两段。

2019-09-12 07:16:27 [-][

‘data’ => [

‘http://192.168.100.20:2800/api/payment/i-order’,

],

]

2019-09-12 07:16:27 [-][

‘data’ => [

false,

],

]

2019-09-21 07:16:27 [-][

‘data’ => [

‘http://192.168.100.20:2800/api/payment/i-order’,

],

]

2019-09-21 07:16:27 [-][

‘data’ => [

‘http://192.168.100.20:2800/api/payment/i-user’,

],

]

2019-09-17 18:34:37 [-][

‘data’ => [

false,

],

]

BEGIN{

RS=“]\n”

ORS=RS

}

{

if(/false/ && prev ~ /i-order/){

print tmp

print

}

tmp=$0

}